id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,449,283 | Testing Step Functions Locally | Have you built a Step Function with many steps, retries and end states - but you are left wondering,... | 0 | 2023-04-26T20:36:23 | https://instil.co/blog/testing-step-functions-locally/ | aws, serverless, stepfunctions | ---

canonical_url: https://instil.co/blog/testing-step-functions-locally/

---

Have you built a Step Function with many steps, retries and end states - but you are left wondering, how do I test this masterpiece to ensure it's as wonderful as I think it is? Then you've come to the right place! Have a look at how we test Step Functions locally to give you more confidence in your work.

---

As you may have seen in our previous posts, we love Step Functions. It's great being able to build your Step Function in the console, see the payloads passing through your states and everything going green for you to say “Wooh! You’ve stepped through a Step Function successfully.”. But what if it didn’t, and it’s actually not doing what you expect, it’s going red and throwing useless errors or worse, it’s green but not giving you the response you want. What do you need? Tests!

## What does AWS provide to help you test?

AWS itself provides some basic tools required for testing Step Functions - no they’re not the silver bullet in which you can just quickly write and run to test your Step Functions - but they certainly give you a jump start.

[Step Functions Local](https://docs.aws.amazon.com/step-functions/latest/dg/sfn-local.html) documentation states:

> AWS Step Functions Local is a downloadable version of Step Functions that lets you develop and test applications using a version of Step Functions running in your own development environment.

With Step Functions Local you can test locally or as part of a pipeline. You can test your flows, inputs, outputs, retries, back-offs and error states to ensure it performs as you expect.

*Note:*

Step Functions Local can sometimes be behind the Step Functions feature set. We have noticed when a new feature is implemented in Step Functions, the Step Functions Local container image may not be updated to include those features immediately. This is understandably not ideal - but you can keep an eye on the container [here](https://hub.docker.com/r/amazon/aws-stepfunctions-local/tags) for new versions in which AWS are actively updating.

## How to get it up and running

At Instil, we knew that we needed to run these tests as part of the pipeline but also run them locally when developing or investigating issues. AWS kindly provides some help with running the tests via the AWS CLI which is great, but we wanted to create these tests to last and have them run as part of our deployment pipeline. So we found this solution.

Here’s what you need:

1. AWS Step Functions Local ([Docker Image](https://docs.aws.amazon.com/step-functions/latest/dg/sfn-local-docker.html))

2. [Testcontainers](https://www.npmjs.com/package/testcontainers) package

3. [AWS SDK](https://www.npmjs.com/package/aws-sdk) package

4. [Wait For Expect](https://www.npmjs.com/package/wait-for-expect) package

### Step 1: Have a look at your Step Function

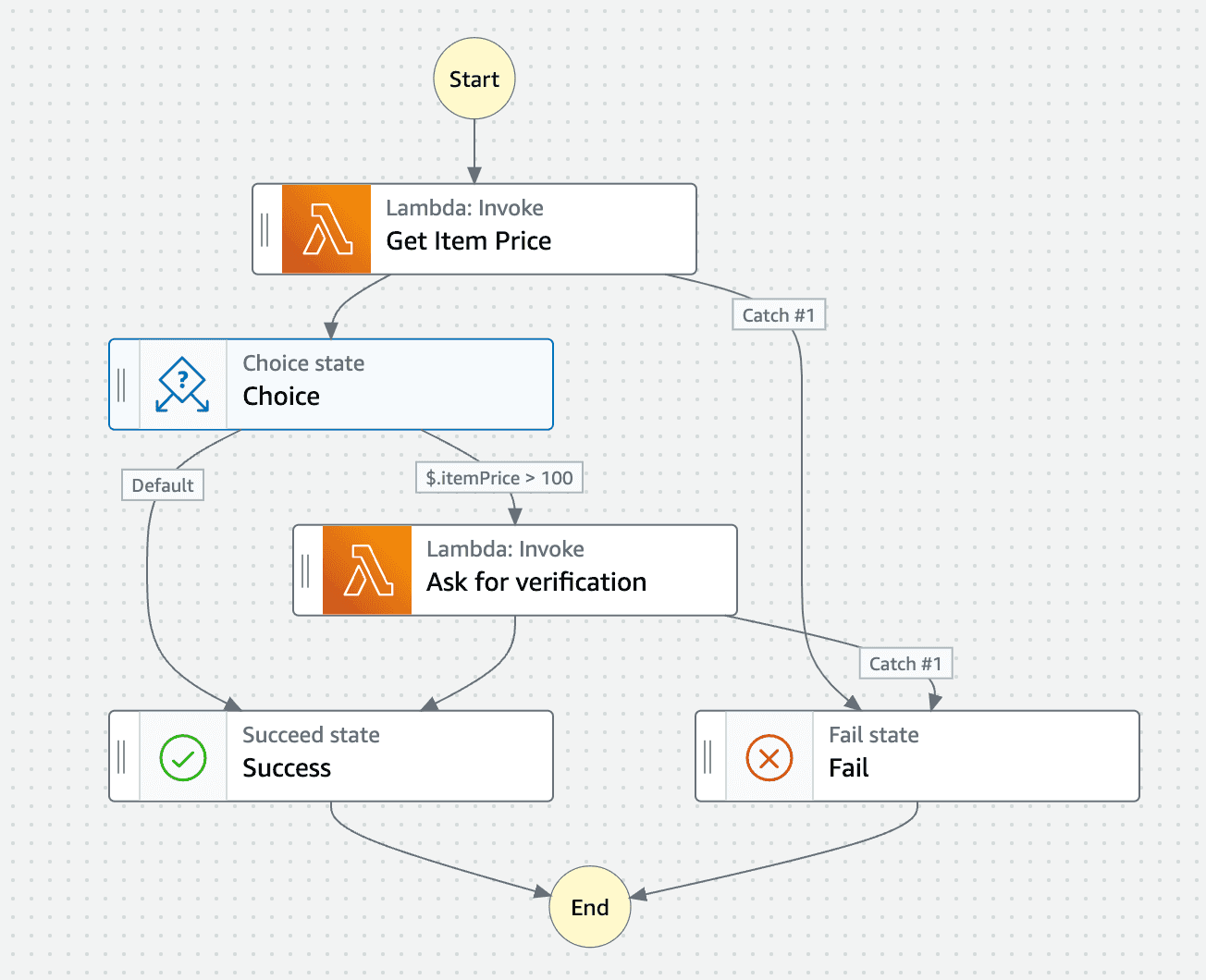

The Step Functions Workflow Studio is great for building out your Step Function in the console. It makes creating your Step Function user-friendly and makes visualising it super easy. Here we have an example Step Function.

<br/>

It has a couple of lambdas, a choice state for checking the response of the first lambda and some success and failure paths. It has 4 flows which we would want to test if I can count correctly:

1. Get Item Price → “Item Price <= 100” → Success

2. Get Item Price → “Item Price > 100” → Ask for verification → Success

3. Get Item Price → Fail

4. Get Item Price → “Item Price > 100” → Ask for verification → Fail

Now we have an idea of what we want to test from our Step Function, we can get to work.

### Step 2: Download your ASL file from the Step Function Workflow Studio

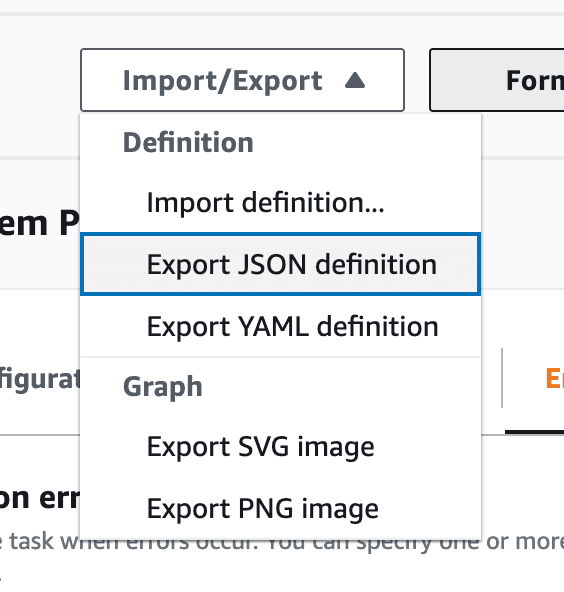

To use the Step Function Local container, we need our Step Function in [ASL](https://docs.aws.amazon.com/step-functions/latest/dg/concepts-amazon-states-language.html) (Amazon States Language) which is AWS’ own language for defining Step Functions and their states. You can do this from the Step Function console by exporting the JSON definition.

### Step 3: Get that Docker container spinning

You need the container up and running to be able to run the Step Function locally within it, we used `testcontainers` to spin up the short-lived container and have it ready for testing.

```

import {GenericContainer} from "testcontainers";

const awsStepFunctionsLocalContainer = await new GenericContainer("amazon/aws-stepfunctions-local")

.withExposedPorts(8083)

.withBindMount("your-path-to/MockConfigFile.json", "/home/MockConfigFile.json", "ro")

.withEnv("SFN_MOCK_CONFIG", "/home/MockConfigFile.json")

.start();

```

<br/>

*Note:*

- Test Containers picks a random free port on the host machine and uses 8083 above to map it, so you don’t need to worry about clashes.

- `MockConfigFile.json` is the file we use for mocking how the AWS services respond in your Step Function test executions, we will come to how to create those in the next step!

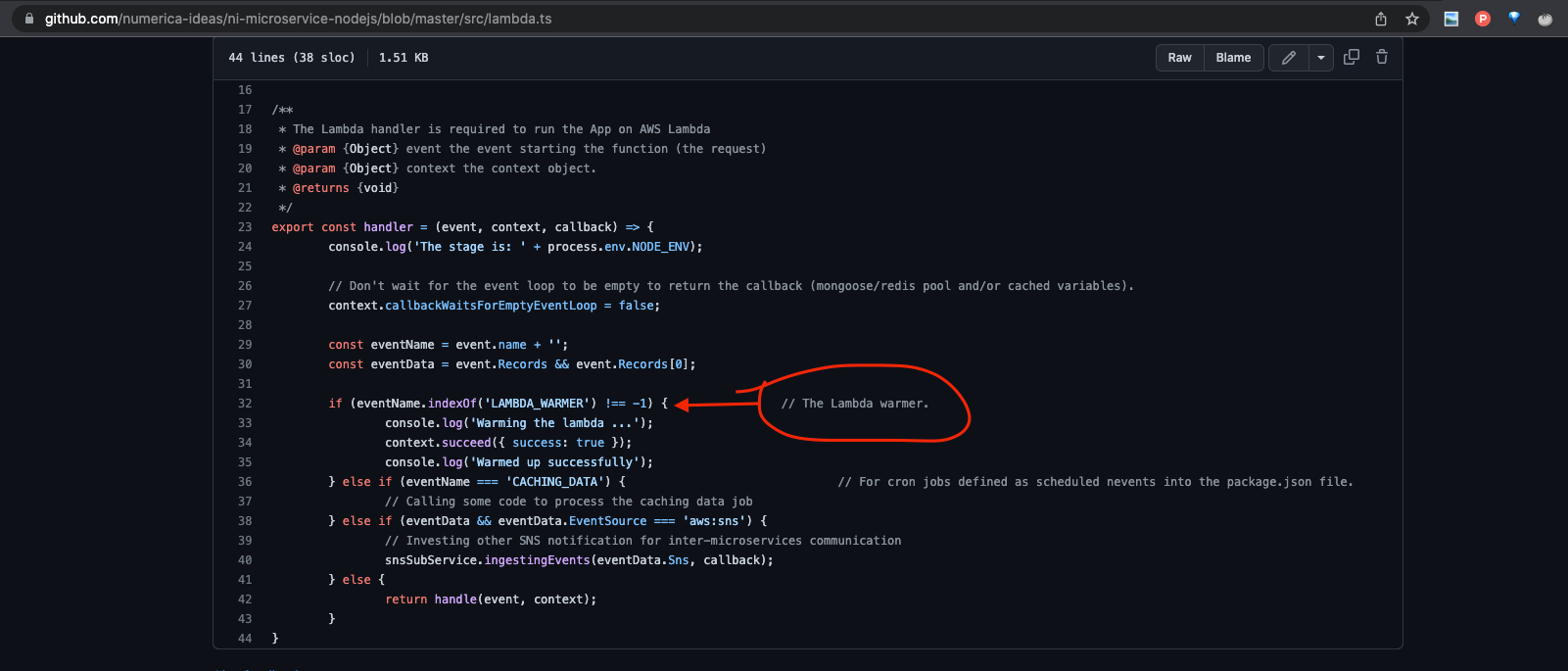

### Step 4: Create your MockConfigFile

The use of a mock config file is how we define the test cases, flows and responses of AWS service integrations within the Step Function. It makes up the meat of your Step Function testing journey and ultimately controls how detailed you want your tests to be.

The mock config is a JSON file which according to AWS’ own [documentation](https://docs.aws.amazon.com/step-functions/latest/dg/sfn-local-mock-cfg-file.html#mock-cfg-struct) includes:

- `StateMachines` - The fields of this object represent state machines configured to use mocked service integrations.

- `MockedResponse` - The fields of this object represent mocked responses for service integration calls.

Here’s what ours looks like as a finished product below. Make sure the names of the steps are identical to those named in the ASL file i.e “Get Item” in the test case is “Get Item” from the ASL file.

*Note:*

A great thing you can do in this file also detailed in the AWS documentation is to test the retry and backoff behaviour of some of your steps. For example, you could test that a lambda responds with an error on its first invocation, automatically retries and then returns successfully on its second invocation. Something like this is shown in the `MockedGetItemAbove100` mocked response below.

```

{

"StateMachines": {

"ItemPriceChecker": {

"TestCases": {

"shouldSuccessfullyGetItemWithPriceBelow100": {

"Get Item Price": "MockedGetItemBelow100"

},

"shouldSuccessfullyGetItemAndVerifyWithPriceEqualOrAbove100": {

"Get Item Price": "MockedGetItemAbove100",

"Ask for verification": "MockedAskForVerificationSuccess"

},

"shouldFailToGetItem": {

"Get Item Price": "MockedGenericLambdaFailure"

},

"shouldFailToVerifyItemWithPriceEqualOrAbove100": {

"Get Item Price": "MockedGetItemAbove100",

"Ask for verification": "MockedGenericLambdaFailure"

}

}

}

},

"MockedResponses": {

"MockedGetItemBelow100": {

"0": {

"Return": {

"StatusCode": 200,

"Payload": {

"StatusCode": 200,

"itemPrice": 80

}

}

}

},

"MockedGetItemAbove100": {

"0": {

"Throw": {

"Error": "Lambda.TimeoutException",

"Cause": "Lambda timed out."

}

},

"1": {

"Return": {

"StatusCode": 200,

"Payload": {

"StatusCode": 200,

"itemPrice": 100

}

}

}

},

"MockedAskForVerificationSuccess": {

"0": {

"Return": {"StatusCode": 200}

}

},

"MockedGenericLambdaFailure": {

"0": {

"Throw": {

"Error":"Lambda.GenericLambdaFailure",

"Cause":"The lambda failed generically."

}

}

}

}

}

```

### Step 5: Prepping the tests

So you have the Step Function and test cases ready, all you need now is to get them running. This first function will get the client for the Step Function Local container and allow you to run commands against it for testing the local version of your Step Function:

```

import {SFNClient} from "@aws-sdk/client-sfn";

const sfnLocalClient = new SFNClient({

endpoint: `http://${awsStepFunctionsLocalContainer?.getHost()}:${awsStepFunctionsLocalContainer?.getMappedPort(8083)}`,

region: "eu-west-2",

credentials: {

accessKeyId: "test",

secretAccessKey: "test",

sessionToken: "test"

}

});

```

<br/>

***Important:***

As you can see, we used “test” above for the credentials. This is to ensure the Step Function doesn’t interact with our actual deployed environment in AWS.

Step Functions Local allows you to run tests against actual deployed services (so feel free to do so for your case) but since we have mocked the services using `MockConfigFile.json` then we don’t want to do that. By using fake credentials then it just defaults to the mocked services from our file.

<br/>

Next, create your local Step Function instance in the docker container using the client just created.

```

import {CreateStateMachineCommand} from "@aws-sdk/client-sfn";

import {readFileSync} from "fs";

const localStepFunction = await sfnLocalClient.send(

new CreateStateMachineCommand({

definition: readFileSync("your-path-to/ItemPriceCheckerAsl.json", "utf8"),

name: "ItemPriceChecker",

roleArn: undefined

})

);

```

<br/>

You can then start a Step Function execution for one of the test cases. This will run the Step Function in the container and use the mocked AWS service integrations defined in the `MockConfigFile.json` to determine the path it takes. Here is the function you can use, we have it wrapped here so it can be ran for each specific test case.

The `stepFunctionInput` is a JSON string of what you would be passing in to the Step Function. In our case for the `ItemPriceChecker` there is no input to the Step Function as the item price is retrieved in the first step - so the input can be anything e.g `{}`. Make sure for your own Step Function to pass in any input required or use `{}` similar to the example if no input is required.

```

import {StartExecutionCommand, StartExecutionCommandOutput} from "@aws-sdk/client-sfn";

async function startStepFunctionExecution(testName: string, stepFunctionInput: string): Promise<StartExecutionCommandOutput> {

return await sfnLocalClient.send(

new StartExecutionCommand({

stateMachineArn: `${

localStepFunction.stateMachineArn as string

}#${testName}`,

input: stepFunctionInput

})

);

}

```

### Step 6: Finally some testing!

Now you have your running Step Function execution for a particular test case, we need to actually test it worked. This is where AWS isn’t super helpful, there is no provided API for interacting with the Step Function execution and determining how the Step Function handled your test data. So we had to make our own! Sort of.

Here’s an example using the Step Function execution from above:

```

import {GetExecutionHistoryCommand, GetExecutionHistoryCommandOutput, StartExecutionCommandOutput} from "@aws-sdk/client-sfn";

import waitFor from "wait-for-expect";

it("should successfully get item with price below 100", async () => {

const stepFunctionInput = {};

const expectedOutput = JSON.stringify({

StatusCode: 200,

itemPrice: 80

});

// This runs the Step Function and returns the execution details using the function created earlier in the post

const stepFunctionExecutionResult = await startStepFunctionExecution(

"shouldSuccessfullyGetItemWithPriceBelow100",

stepFunctionInput

);

// This checks the states to ensure the execution successfully completed with the correct output

await thenTheItemPriceIsReturned(stepFunctionExecutionResult, expectedOutput);

});

async function thenTheItemPriceIsReturned(

startLocalSFNExecutionResult: StartExecutionCommandOutput,

expectedOutput: string

): Promise<void> {

// Since the execution arn is provided, it could still be running so this waits for the execution to finish by checking for the result you need

await waitFor(async () => {

const getExecutionHistoryResult = await getExecutionHistory(startLocalSFNExecutionResult.executionArn);

const successStateExitedEvent = getExecutionHistoryResult.events?.find(event => event.type === "SucceedStateExited");

expect(successStateExitedEvent?.stateExitedEventDetails?.name).toEqual("Success");

expect(successStateExitedEvent?.stateExitedEventDetails?.output).toEqual(expectedOutput);

});

}

async function getExecutionHistory(executionArn: string | undefined): Promise<GetExecutionHistoryCommandOutput> {

return await sfnLocalClient.send(

new GetExecutionHistoryCommand({

executionArn

})

);

}

```

<br/>

There is a lot of information above but at its heart, it simply runs the Step Function in the container and returns the execution information to the test. It then grabs the execution history of the running local Step Function and checks for an event showing it succeeded; this allows the test to then also check the execution output and ensure it has succeeded correctly.

### Step 7: Make sure to tear it all down

One thing that can be easily forgotten is your container running as a part of your test. A good thing to do is make sure it is torn down correctly at the end of your test run. This can be done very easily as part of an `afterAll` if running multiple tests and is simple done by stopping the test containers instance.

`awsStepFunctionsLocalContainer.stop();`

### Step 8: Expand and add more tests

Now this is up to you! You can continue to test the rest of the flow cases for the Step Function, checking it has emitted “FailStateExited” in the execution history for the failed cases or expanding your testing flows.

The `HistoryEventType` from the `aws-sdk` gives you all the event types which can be logged in the Step Function Local execution history, this allows you to write tests however you like for checking the execution of the Step Function. Here are some examples of matcher functions we have written for different types of events:

```

import {HistoryEvent} from "@aws-sdk/client-sfn";

async findExecutionSucceededEventInHistory(executionArn: string | undefined): Promise<HistoryEvent | undefined> {

return await findEventFromExecutionHistory(executionArn, "ExecutionSucceeded");

}

async findFailStateEnteredEventInHistory(executionArn: string | undefined): Promise<HistoryEvent | undefined> {

return await findEventFromExecutionHistory(executionArn, "FailStateEntered");

}

async findSucceedStateExitedEventInHistory(executionArn: string | undefined): Promise<HistoryEvent | undefined> {

return await findEventFromExecutionHistory(executionArn, "SucceedStateExited");

}

async findEventFromExecutionHistory(executionArn: string | undefined, eventKey: HistoryEventType): Promise<HistoryEvent | undefined> {

const history = await getExecutionHistory(executionArn);

return history.events?.find(

event => event.type === eventKey

);

}

```

## You’re good to go!

What we have created above is hopefully something quite simple for testing Step Functions. We additionally improved this by creating a Step Function testing service class which holds all the re-usable functions and can be called easily within the required test file. With this we were able to run our Step Function tests as part of our deployment pipeline, providing greater confidence in our code and allowing us to integrate Step Functions more into our applications.

<br/>

***Important:***

Now it's also good to note here that this is not everything we do at Instil to test our Step Functions, it is simply a companion that enables us to test the difficult edge cases including complicated flows, retries and back-offs etc. We are advocates for testing in the cloud - and this local testing mixed with integration testing in the cloud (focusing more on Step Functions interacting with other parts of the cloud rather than edge cases) is a good starting place for testing your Step Functions.

Additionally, we do hope to see some improvements to the Step Functions Local client in future from AWS, possibly providing their own matchers for checking that states have been entered and exited correctly within the tested Step Function, but if not we will just have to do it ourselves!

| tombailey14 |

1,449,359 | Blockchain-Powered Social Media for Musicians’ Success | How Social Media on Blockchain can help Musicians in their publicity, ownership, and... | 0 | 2023-04-28T16:12:12 | https://cryptoloom.xyz/rocking-revenues-and-raving-fans-harnessing-blockchain-powered-social-media-for-musicians-success/ | general, blockchainbenefits, fanbasegrowth, musicianspublicity | ---

title: Blockchain-Powered Social Media for Musicians’ Success

published: true

date: 2023-04-26 17:45:20 UTC

tags: General,blockchainbenefits,fanbasegrowth,musicianspublicity

canonical_url: https://cryptoloom.xyz/rocking-revenues-and-raving-fans-harnessing-blockchain-powered-social-media-for-musicians-success/

---

#### How Social Media on Blockchain can help Musicians in their publicity, ownership, and increasing fan base

In recent times, we have experienced a surge in social media usage, and it has become the primary means of communication for many people, including musicians. Social media platforms give musicians the ability to connect with their fans, share their work, and grow their influence. However, despite the positives, traditional social media platforms do have their drawbacks, such as lack of ownership and control for the musician.

Enter blockchain technology. This cutting-edge innovation, best known for its application in cryptocurrencies like Bitcoin, offers tremendous potential for musicians seeking to enhance their publicity, ownership, and fan base. In this article, we will delve deeper into how social media on the blockchain can rescue musicians from their existing struggles and unlock new possibilities for them.

## What is Blockchain, and how does it help?

Blockchain is essentially a secure and decentralized digital ledger system that allows for transparent transactions while maintaining privacy. Each block contains a list of transactions that can be linked to other blocks to form a chain. By design, these blocks are tamper-proof, so the data stays secure and trustworthy.

Social media platforms can be built on top of a blockchain network, providing a robust and decentralized solution for musicians. Through these decentralized social media platforms, musicians can gain benefits such as improved control over their content, better ownership and monetization models, and increased opportunities for fan interactions.

Now, let’s dive into the specifics of how blockchain-based social media can help musicians in their publicity, ownership, and fan base growth.

### 1. Ownership and control of content

One of the most significant issues musicians face on traditional social media platforms is the control and ownership of their content. Despite creating the content, artists often face restrictions, unwanted ads, and potential censorship by the platform. Blockchain technology essentially eliminates these issues by giving musicians complete control over their work.

With blockchain-based social media platforms, each piece of content is posted as a transaction on the decentralized ledger. This means the content is verifiable, secure, and can’t be tampered with or stolen. Furthermore, musicians don’t have to worry about their content being used without their consent, as their work is secured by blockchain’s cryptographic features.

### 2. Monetization and revenue streams

Traditional revenue streams for musicians, such as record sales and live shows, have been significantly impacted by the rise of digital music and streaming services. As a result, musicians often struggle to make a living from their work, even with the help of social media. The solution? Blockchain-based social media platforms can offer various monetization models that empower musicians and provide them with fair compensation.

Tokenization is one innovative approach introduced by blockchain technology that creates new forms of value and rewards in the digital space. Musicians can tokenize their content on these platforms, allowing fans to purchase, trade, and support their work using cryptocurrency. By establishing a direct economic relationship with their fans, musicians can receive steady revenue without relying on intermediaries, ultimately leaving more value in the artists’ pockets.

### 3. Enhanced fan engagement and interaction

The relationship between musicians and their fans is integral to the success and growth of their careers. Traditional social media platforms offer limited options for musicians to engage with their fans and effectively increase their fan base. Blockchain-based platforms can solve these issues by providing musicians with the ability to create unique engagement opportunities, foster communities, and promote a sense of belonging among their fans.

Blockchain technology allows for the creation of unique "smart contracts," which can be used to design personalized interactions and experiences for fans. These smart contracts can be used to develop various fan-centric events such as ticket sales for live performances, merchandise sales, virtual shows, and even offering perks to loyal fans. By offering something unique and exciting to their fans, musicians can strengthen their relationship with their supporters and attract new followers.

### 4. Enabling collaborations and networking

Blockchain-based social media platforms also open up avenues for musicians to collaborate with fellow artists and industry professionals. These platforms can facilitate secure collaboration between musicians, producers, and record labels, ensuring that everyone involved maintains their rights and benefits from the project.

Additionally, blockchain technology can be utilized to create decentralized music marketplaces where musicians can connect with their peers and support one another by sharing their work, knowledge, and resources. Networking on these platforms can accelerate the growth of musicians and elevate their opportunities within the industry.

## Blockchain-based Social Media Platforms: A New Hope for Musicians

Several blockchain-based social media platforms, such as Audius, Choon, and Musicoin, have already made their presence known in the music industry. These platforms offer a taste of how blockchain technology can revolutionize the way musicians share their work, connect with their fans, and sustain their careers.

By embracing blockchain-based social media platforms, musicians can take control of their content, boost their fan base, and unlock new revenue streams. While it may still be early days for this technology, the potential impact on the music industry and the empowerment it can bring to musicians make it an exciting avenue to watch.

So, it’s time for musicians to step into this new realm of possibilities and explore how social media on the blockchain can help them elevate their careers and provide them with the control and ownership that they rightfully deserve.

* * *

**References:**

1. Tapscott, D., & Tapscott, A. (2017). _Blockchain Revolution: How the Technology Behind Bitcoin and Other Cryptocurrencies Is Changing the World_ (Reprint edition). Portfolio.

2. Passy, J. (2018, May 17). How the blockchain could break big tech’s hold on AI. _MarketWatch_. Retrieved from https://www.marketwatch.com/story/how-the-blockchain-could-break-big-techs-hold-on-ai-2018-05-17

3. Brown, A. (2020). _Using Blockchain Technology to Engage Fans in the Music Industry_ (Master’s thesis, Northeastern University – College of Professional Studies). Retrieved from https://repository.library.northeastern.edu/files/neu:cj82sz51r/fulltext.pdf

4. Di lorio, A. (2021, April 7). Social Media on the Blockchain: Pioneers in the New Digital Landscape. _Entrepreneur_. Retrieved from https://www.entrepreneur.com/article/367657

The post [Rocking Revenues and Raving Fans: Harnessing Blockchain-Powered Social Media for Musicians’ Success](https://cryptoloom.xyz/rocking-revenues-and-raving-fans-harnessing-blockchain-powered-social-media-for-musicians-success/) appeared first on [CryptoLoom](https://cryptoloom.xyz). | cryptoloom |

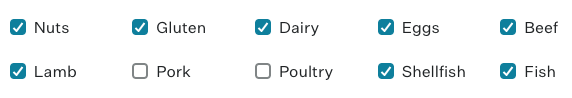

1,449,402 | Chakra checkbox and react-hook-form | My goal was to create a list of checkboxes with fixed values i.e. Nuts, Gluten, Dairy, etc. After... | 0 | 2023-04-26T22:54:39 | https://dev.to/fazuelinton/chakra-checkbox-and-react-hook-form-2mcg | My goal was to create a list of checkboxes with fixed values i.e. Nuts, Gluten, Dairy, etc.

After fetching the data and wiring it up with react-hook-form here's what I did:

## import the required packages from libs

```typescript

import { Controller } from 'react-hook-form';

import {

Checkbox,

CheckboxGroup,

CheckboxProps,

FormControl,

forwardRef

} from '@chakra-ui/react';

```

## create a new component `CheckboxCustom`

```typescript

...

type CheckboxCustomProps = CheckboxProps & { control: any; name: string; children: ReactNode };

const CheckboxCustom = forwardRef<CheckboxCustomProps, 'input'>(({ children, ...rest }, ref) => {

rest.control;

return (

<Controller

name={rest.name}

control={rest.control}

render={({ field: { value } }) => (

<Checkbox ref={ref} isChecked={value} {...rest}>

{children}

</Checkbox>

)}

/>

);

});

```

## use the custom component to render the checkboxes

```typescript

<FormControl>

<CheckboxGroup>

<SimpleGrid columns={5}>

<CheckboxCustom control={control} {...register('noNuts')}> Nuts

</CheckboxCustom>

<CheckboxCustom control={control} {...register('noGluten')}> Gluten

</CheckboxCustom>

...

</SimpleGrid>

</CheckboxGroup>

</FormControl>

``` | fazuelinton | |

1,449,403 | What's the Worst Question You've Ever Been Asked in an Interview? | Job interviews can be nerve-wracking, and sometimes interviewers ask questions that catch you off... | 22,092 | 2023-04-27T07:00:00 | https://dev.to/codenewbieteam/whats-the-worst-question-youve-ever-been-asked-in-an-interview-1891 | discuss, beginners, career, codenewbie | Job interviews can be nerve-wracking, and sometimes interviewers ask questions that catch you off guard, or that are just plain...weird and terrible.

What's the worst question you've ever been asked in an interview? And how did you respond? :eyes:

---

Follow the [CodeNewbie Org](https://dev.to/codenewbieteam) and [#codenewbie](https://dev.to/t/codenewbie) for more discussions and online camaraderie!

{% embed [https://dev.to/t/codenewbie](https://dev.to/t/codenewbie) %} | ben |

1,449,461 | Building a Message Component for My Project | Today, on day 86 of my #100DaysOfCode challenge, I worked on my project and made some progress. I... | 0 | 2023-04-27T01:20:28 | https://dev.to/arashjangali/building-a-message-component-for-my-project-fbc | webdev, javascript, programming, react | Today, on day 86 of my #100DaysOfCode challenge, I worked on my project and made some progress. I created a message component that I'm working on to render conditionally when the liked users are clicked on. This feature will allow users to send messages to each other through the app, making it easier to connect and communicate with potential clients or contractors.

Implementing this feature was challenging, but it was a lot of fun. I started by creating the message component, which will be responsible for rendering the message form and handling the message submission. Then, I had to figure out how to conditionally render the message component when the user clicks on a liked user.

Once I had the message component rendering correctly, I had to implement the functionality for sending messages. When the user selects a liked user, they will be able to send a message that will end up in their document in the database. This required me to write some backend code to handle the message submission and store it in the appropriate document.

Overall, it was a productive and satisfying day of coding. I'm excited to continue working on this feature and seeing how it improves the user experience for the app. | arashjangali |

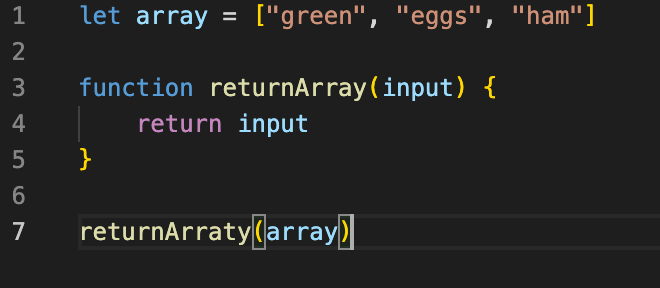

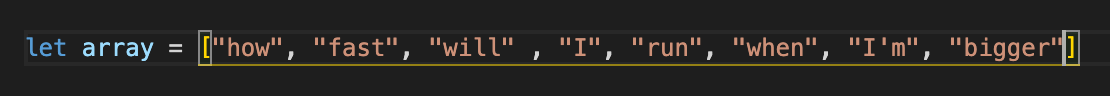

1,449,556 | Clarity Through Destructuring | For aspiring software developers, it's easy to overlook certain concepts that seem trivial or... | 0 | 2023-04-28T02:50:01 | https://dev.to/hillswor/clarity-through-destructuring-2eeg | react, javascript, beginners, nextjs | For aspiring software developers, it's easy to overlook certain concepts that seem trivial or confusing at first. One such concept that I initially dismissed was object and array destructuring in JavaScript. However, as I progressed in my learning journey and started working with frameworks like React, I quickly realized the power and convenience that destructuring can provide.

## What is Destructuring?

Destructuring is a way of extracting values from arrays and objects and assigning them to variables in a concise and readable manner. It allows you to "destructure" the data into smaller pieces, making it easier to work with and more expressive.

## Array Destructuring in JavaScript

Array destructuring is used to extract values from an array and assign them to variables. Here's an example:

```

const numbers = [1, 2, 3, 4, 5];

const [first, second, , fourth] = numbers;

console.log(first); // 1

console.log(second); // 2

console.log(fourth); // 4

```

In this example, we're destructuring the numbers array and assigning the first element to the first variable, the second element to the second variable, and the fourth element to the fourth variable. Notice how we're using commas to skip over the third element (which has a value of 3).

## Object Destructuring in JavaScript

Object destructuring is used to extract properties from an object and assign them to variables. Here's an example:

```

const person = {

firstName: "John",

lastName: "Doe",

age: 30,

address: {

street: "123 Main St",

city: "Anytown",

state: "CA",

zip: "12345"

}

};

const { firstName, lastName, address: { city } } = person;

console.log(firstName); // "John"

console.log(lastName); // "Doe"

console.log(city); // "Anytown"

```

In this example, we're destructuring the person object and assigning the firstName and lastName properties to variables with the same names. We're also destructuring the address property and assigning the city property to a variable with the same name.

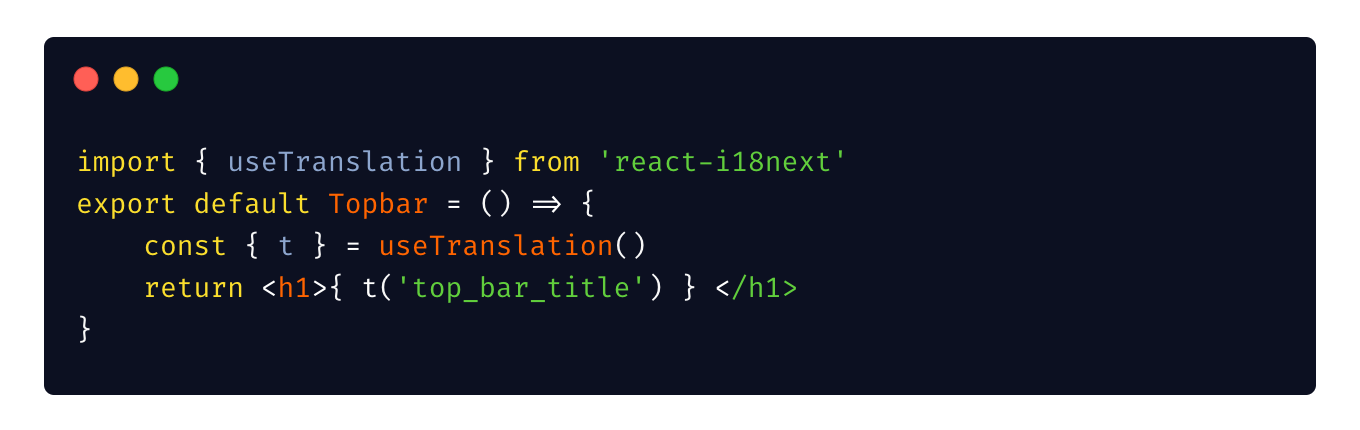

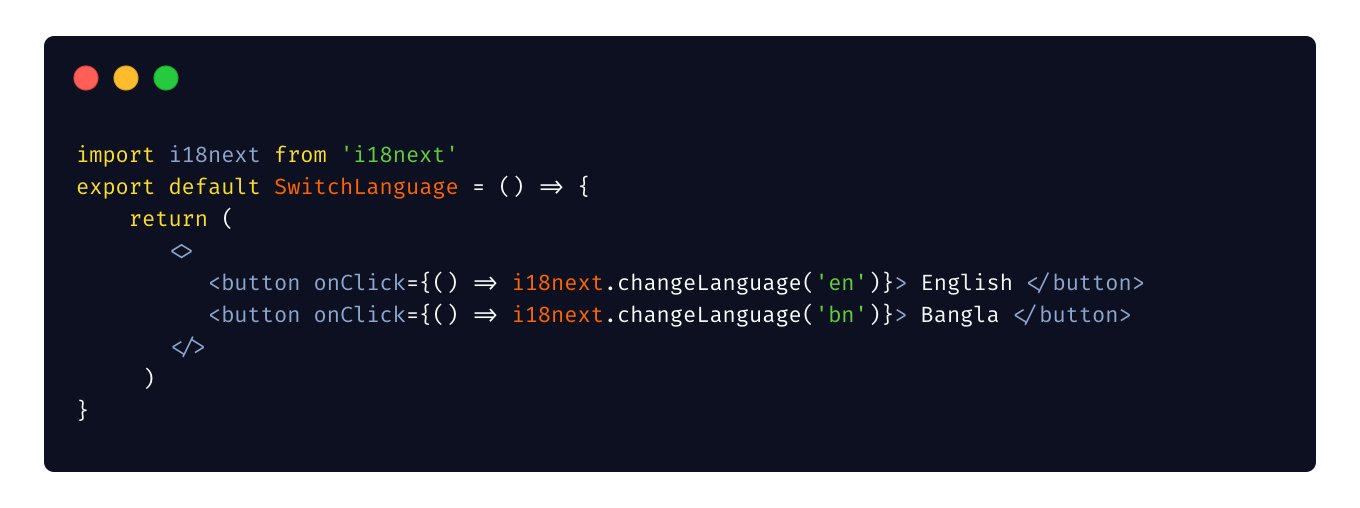

## Destructuring in React

Destructuring is especially useful when working with React. In React components, props are often passed down to child components. Destructuring allows you to extract only the props you need, making your code easier to read and understand. Here's the basic syntax for destructuring props:

```

function ChildComponent({ prop1, prop2 }) {

// Use prop1 and prop2 here

}

```

Here is a more detailed example:

```

import React from "react";

function Person(props) {

const { firstName, lastName, age } = props;

return (

<div>

<h1>{firstName} {lastName}</h1>

<p>{age}</p>

</div>

);

}

export default Person;

```

In this example, we're destructuring the props object and assigning the firstName, lastName, and age properties to variables with the same names. This makes it easier to access and use these values within the component's JSX.

## Conclusion

Destructuring can greatly simplify and improve your code by making it more readable and expressive. Whether you're working with arrays, objects, or React components, mastering destructuring can make your coding experience more enjoyable and productive. | hillswor |

1,449,801 | The Future of Web Development: Trends and Predictions for 2023 | The world of web development is constantly evolving, and staying up-to-date with the latest trends... | 0 | 2023-04-27T09:35:04 | https://dev.to/rr9853462/the-future-of-web-development-trends-and-predictions-for-2023-39i1 | webdev, webdesign, hirewebdevelopers, mobile | The world of web development is constantly evolving, and staying up-to-date with the latest trends and predictions is essential for any web development company or business that relies on **[web development services](https://www.webcluesinfotech.com/web-development-services)**. As we approach 2023, it's important to take a look at the emerging technologies and trends that will shape the future of web development.

**Voice-activated interfaces:**

With the increasing popularity of voice-activated personal assistants like Amazon's Alexa and Google Home, it's no surprise that voice-activated interfaces will become more prevalent in web development. This means that web developers will need to adapt their designs to accommodate voice commands and interactions.

**AI and machine learning:**

Artificial intelligence and machine learning will continue to be major players in web development. These technologies will allow web developers to create personalized experiences for users, optimize websites for search engines, and automate repetitive tasks.

**Progressive Web Apps (PWAs):**

PWAs are web applications that can be accessed through a browser but function like native mobile apps. They offer fast performance, offline capabilities, and push notifications, making them an attractive option for businesses looking to improve their mobile experience.

**Augmented Reality (AR) and Virtual Reality (VR):**

AR and VR are already being used in web development to create immersive experiences for users. As the technology becomes more accessible, we can expect to see more websites and applications incorporating AR and VR elements.

**Single-page applications (SPAs):**

SPAs are web applications that load a single HTML page and dynamically update the content as the user interacts with it. They offer a seamless user experience and can be faster than traditional multi-page applications.

To keep up with these trends, businesses will need to hire web developers who are skilled in these emerging technologies. Web development companies will need to invest in training and development to ensure their teams are up-to-date with the latest trends and techniques.

> In addition to these trends, there are several predictions for the future of web development:

**1.Increased use of chatbots:** Chatbots are already being used on many websites to provide customer service and support. As AI and machine learning continue to advance, we can expect chatbots to become even more sophisticated and integrated into the user experience.

**2.More emphasis on accessibility:** As the importance of accessibility continues to grow, we can expect web development to prioritize accessibility features such as screen readers, keyboard navigation, and color contrast.

**3.Continued focus on website security:** With the increasing threat of cyberattacks, web development will continue to prioritize website security. This includes measures such as SSL certificates, firewalls, and regular software updates.

**4.Greater emphasis on user privacy:** With the rise of data breaches and concerns over user privacy, web development will need to prioritize measures such as GDPR compliance, cookie notices, and data encryption.

**Conclusion**

In conclusion, the future of web development is exciting and full of possibilities. By staying up-to-date with the latest trends and predictions, businesses can ensure they are offering the best possible user experience. To achieve this, it's essential to **[hire web developers](https://www.webcluesinfotech.com/web-development-services)** who are skilled in emerging technologies and invest in ongoing training and development.

| rr9853462 |

1,449,563 | Kết quả bóng đá thethao247.vn | https://thethao247.vn/ket-qua-bong-da-truc-tuyen/ (LIVE) - Nhận ngay kết quả bóng đá(KQBĐ) mới nhất... | 0 | 2023-04-27T03:22:30 | https://dev.to/kqbdthethao247/ket-qua-bong-da-thethao247vn-2k69 | https://thethao247.vn/ket-qua-bong-da-truc-tuyen/ (LIVE) - Nhận ngay kết quả bóng đá(KQBĐ) mới nhất của tất cả các trận đấu✔️ giải đấu diễn ra vào đêm qua & hôm nay từ Thể Thao 247✔️ Tỷ số được cập nhật liên tục 24h✔️✔️✔️

https://velog.io/@kqbdthethao247

https://pbase.com/ketquabongdathethao247/ketquabongdathethao247

https://plaza.rakuten.co.jp/kqbdthethao247

https://app.bountysource.com/people/116350-kqbdthethao247

https://penzu.com/p/3c09dd12

https://peatix.com/user/17049592 | kqbdthethao247 | |

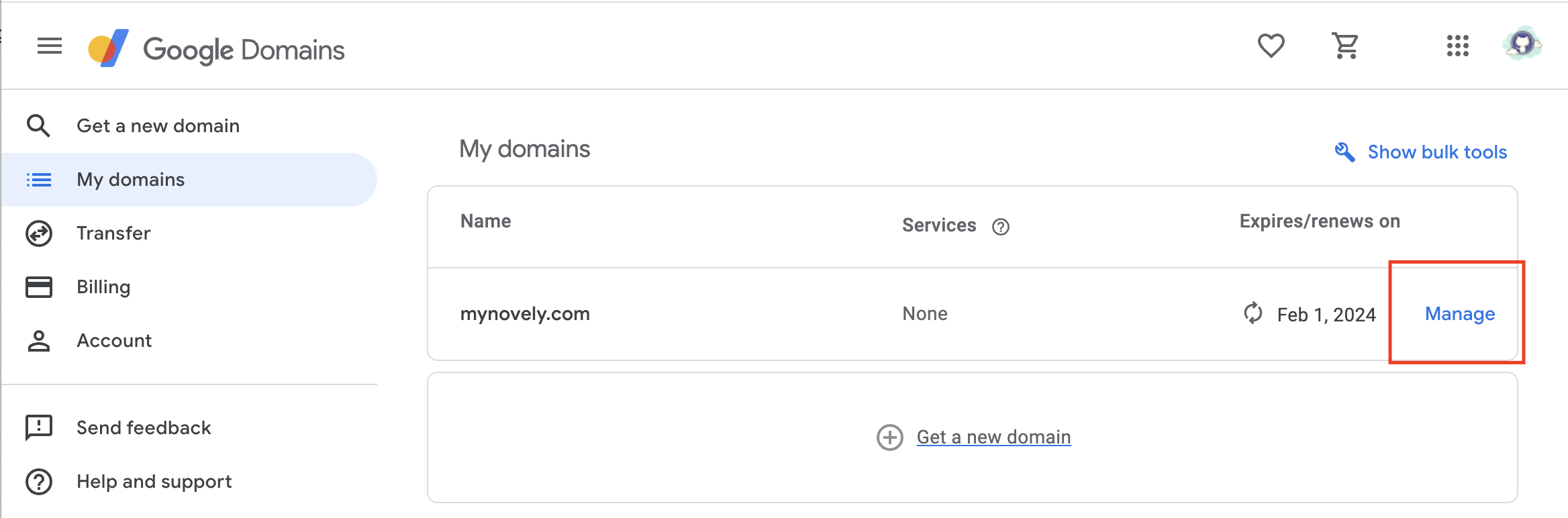

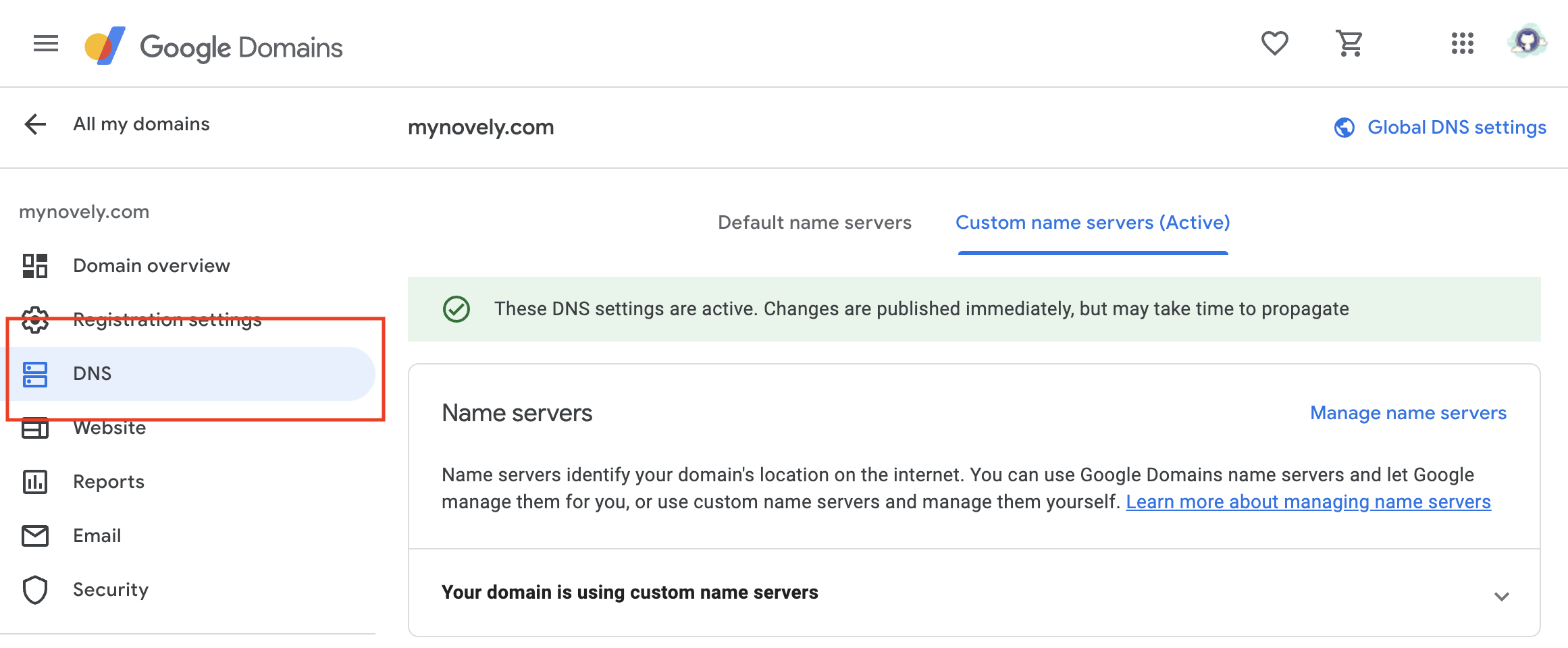

1,449,567 | How to Link Your Google Domain with Vercel: A Step-by-Step Guide (2023) | Log in to your Google Domains account and navigate to the My Domains page. If you have multiple... | 0 | 2023-04-27T03:35:05 | https://dev.to/azadshukor/how-to-link-your-google-domain-with-vercel-a-step-by-step-guide-2023-5ac9 | Log in to your Google Domains account and navigate to the My Domains page. If you have multiple domains, make sure you select the one you want to link with Vercel.

Click on the **"Manage"** button on the right side of the domain name. This will take you to the domain management page.

On the left sidebar, select **"DNS"**. This will bring up the DNS settings for your domain.

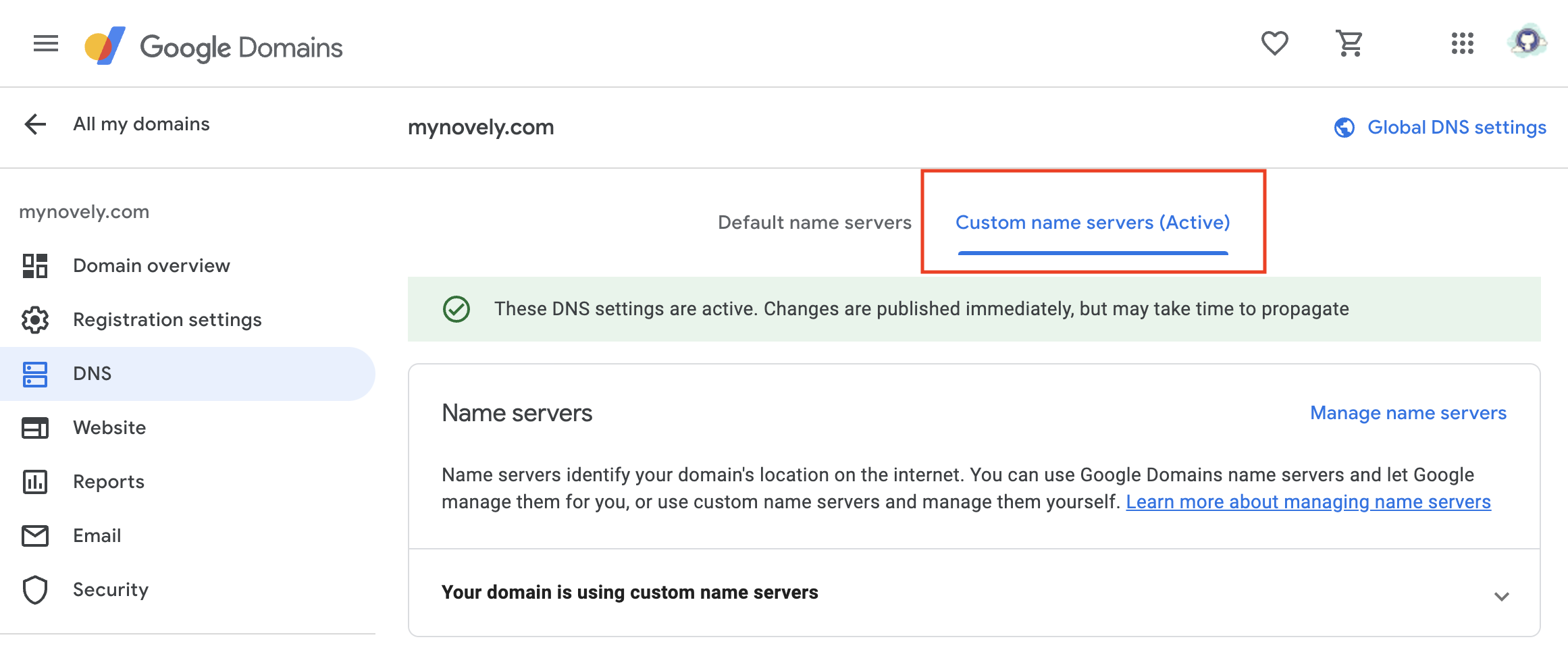

Click on **"Custom name servers"**. This will allow you to add custom name servers for your domain.

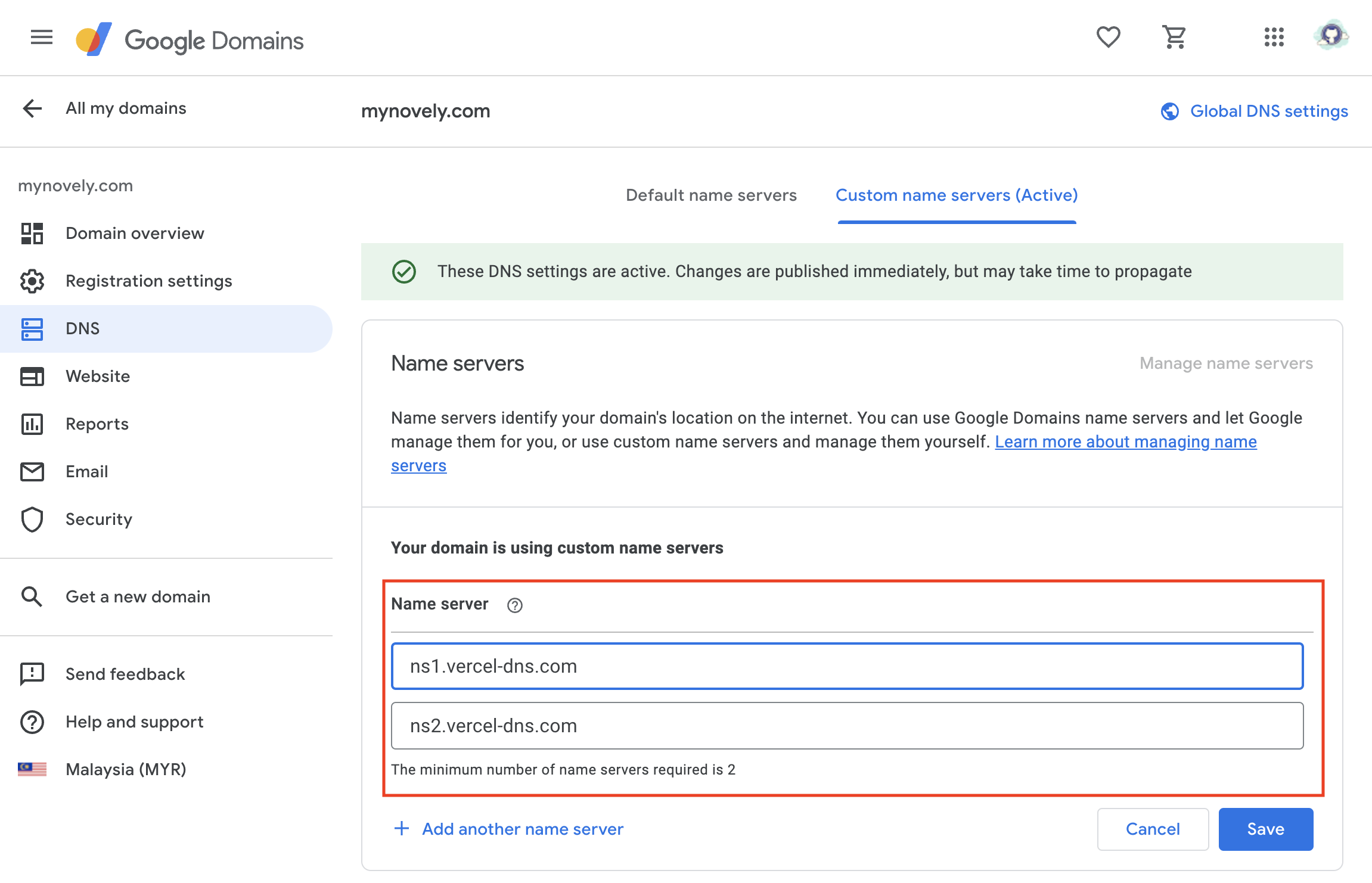

Under **"Name Server"**, add the following two name servers:

```

ns1.vercel-dns.com

ns2.vercel-dns.com

```

These are the name servers provided by Vercel, and they will handle the DNS requests for your domain.

Click on **"Save"** to save the changes. Once you've added the custom name servers, your Google domain will be linked with Vercel, and you can start using it to host your website or web application.

| azadshukor | |

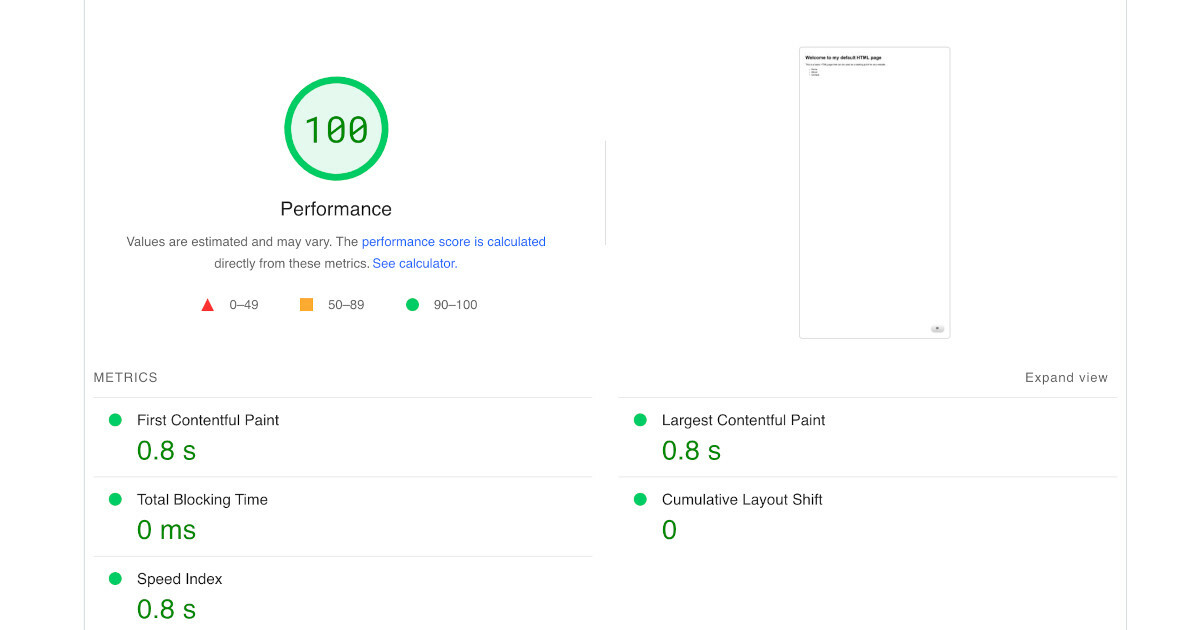

1,449,581 | How we built the Fastest ChatGPT Website Chatbot | A ChatGPT-based website chatbot can dramatically decrease your website's performance if it's not... | 0 | 2023-04-27T04:30:44 | https://ainiro.io/blog/the-fastest-chatgpt-website-chatbot | openai, chatgpt, webdev, tutorial | A ChatGPT-based website chatbot can _dramatically decrease your website's performance_ if it's not correctly implemented. Today we were able to further optimised [our ChatGPT chatbot](https://ainiro.io) and make it 20x faster than what we started out with as we cloned AISTA's technology. In totalt we have now reduced the initial page load time from more than 1,000kB to less than 48kB. This is a 20x improvement in load time, significantly impacting SEO and usability.

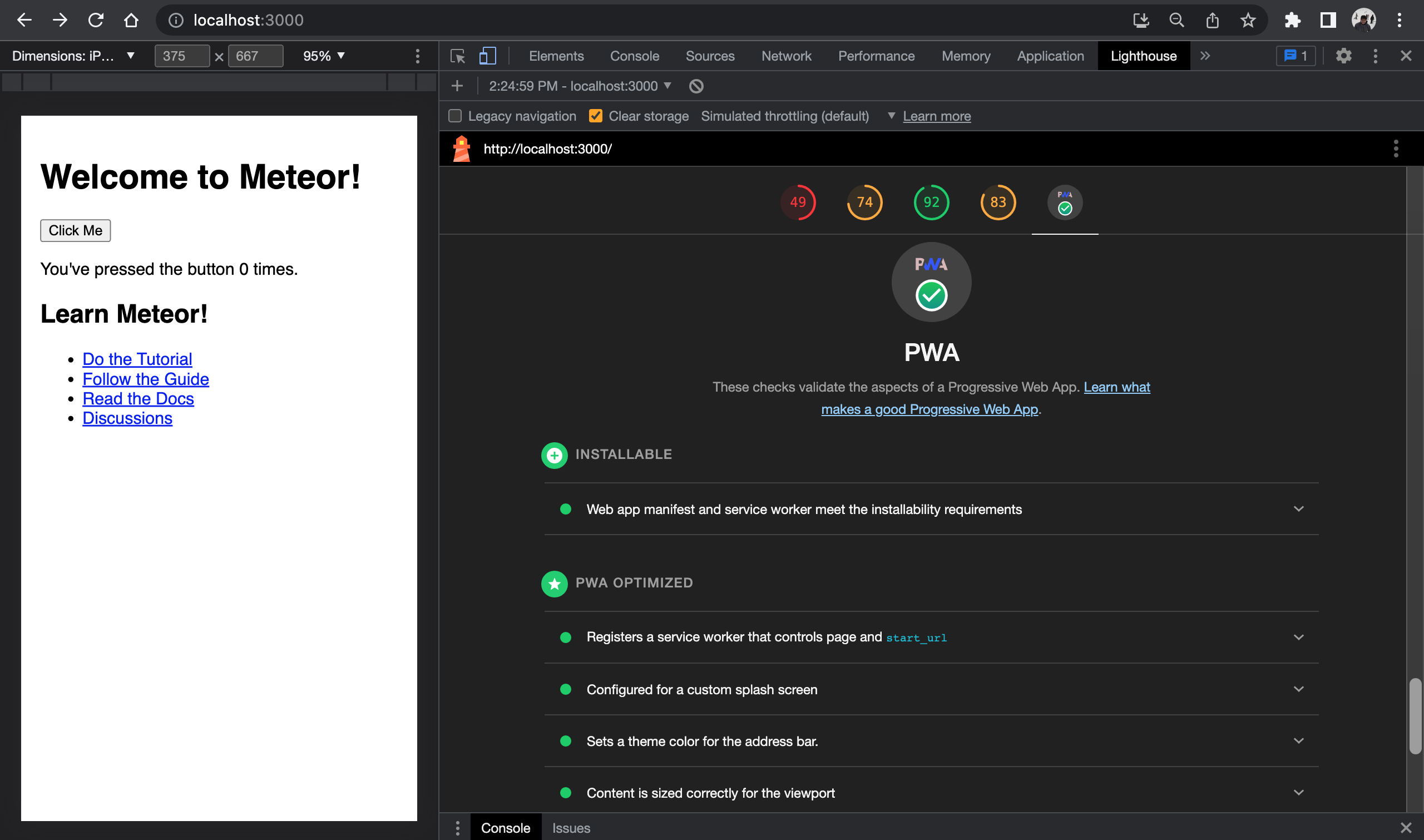

In this article I will tell you how, in addition to why this is important, and why you should care. The image below shows a default empty HTML page, with our chatbot embedded. Basically it scores 100% on every single parameter that exists.

## The importance of a CDN

A Content Deliver Network (CDN) allows you to serve static resources _a lot_ faster. To understand why, realise that the internet has a lot to do with geography. I live in Cyprus. From Cyprus to Ukraine I have a TCP hop time (latency) of probably 100+ milliseconds. This doesn't sound like much, but when your website is serving 50+ HTTP requests, this becomes an additional load time of 50 x 0.1 seconds, resulting in 5 seconds more time to load.

> If your webpage requires 5 seconds to load, you might as well send a postcard!

We're using a global CDN that caches every single static file locally, reducing latency to ~10 milliseconds. Hint; [CloudFlare](https://cloudflare.com) - USE IT!

## Lazy initialising reCAPTCHA

A chatbot such as ours needs reCAPTCHA support. Without it, any script kid can create a never ending while loop in Postman, and burn through thousands of dollars in your OpenAI API account in a couple of hours. However, reCAPTCHA is terribly implemented. It's a _"blocking library"_, implying the rendering of your webpage will halt while reCAPTCHA is downloaded and initialised. I wrote about [how to defer reCAPTCHA loading in this article](https://ainiro.io/blog/how-we-made-our-chatbot-10x-faster) if interested. However, the idea is that we don't load reCAPTCHA before the chat button is clicked. You can actually see this if you inspect the network tab on this page, for then to click the little blue robot in the bottom/right corner. The idea is to _never_ load anything before you need it!

## Trimming fonts

We're using [IcoFont](https://www.icofont.com/icons) for our chatbots. These are the small icons displayed by default on for instance the chess theme, and/or the [AI search](https://ainiro.io/blog/put-ai-search-on-your-website) button. IcoFont has a really amazing feature, which is that you can _"cherry pick"_ which icons to download. If you download some _"font icon library"_ you will typically get hundreds of icons. These icons will consume bandwidth, and you rarely need more than a handful. The default version of IcoFont for instance requires 0.5 megabytes of download. By cherry picking which icons we want in our set, we were able to reduce the size of our IcoFont library from ~500kB to ~5kB. 0.49 megabytes of bandwidth saved!

## GZIP'ing content

This one we get for free, it's an integrated part of CloudFlare's services, that reduces the size of static content such as JavaScript and CSS by roughly 90 to 95 percent. If you've got heavy JavaScript and CSS files, you want to make sure your content is GZip'ed as it's being served. However, we got this one _"for free"_ because of using CloudFlare's CDN features.

## Zero "Frameworks"

If you're creating a chatbot that's intended to be embedded on a website, then _do not start out by dragging in Bootstrap or jQuery_. The domain problem for a chatbot is easy, you don't need Bootstrap or jQuery. These libraries are hundreds of kilobytes in size, and this becomes a cost for the website you're embedding the chatbot on. Our chatbot exclusively contains _"good old fashioned JavaScript and CSS"_, and no frameworks. If your ChatGPT-based website chatbot downloads jQuery or Bootstrap, you might want to reconsider what chatbot service you're using ...

## Page load time matters

There are literally no parameter that's more important for user experience and SEO than page load time. Google will rank you higher than your competitors if your page is loading faster assuming the rest of the parameters are equal. This implies that if you're _"embedding the coolest ChatGPT-based website chatbot on Earth on your page"_, you might wake up one day and realise you've lost 50% of your organic clicks from Google, and that the average engagement time just dropped by 50%, because people are clicking the back button because they're tired of waiting for your webpage to load.

Some millennials will even watch YouTube videos on 1.5x speed, because they don't have time to watch these at normal speed. Do me a favour please; Copy the URL of this page, and open it in an incognito browser window. Did you notice it loaded in less than 1 second? That took _a lot_ of effort from us, but inevitably over time results in a better user experience, and more _"Google Luv"_ over time.

Before you chose a ChatGPT website chatbot, ask yourself _"what's the cost"_. Well, if you chose us, you now know, 47kB. Most others are in the 1,000+ range here ... 😉

* [Contact us for a quote on our ChatGPT website chatbot](https://ainiro.io/contact-us#demo)

* [Or create your own ChatGPT website chatbot here](https://ainiro.io/blog/how-to-get-chatgpt-on-your-website)

Psst, we're constantly working to further improve our chatbot tech, and I suspect we might be able to squeeze it down to less than 30kB in total over the next weeks - However, even at 48kB, I suspect we're probably the fastest ChatGPT-based Website Chatbot on Earth 😁

| polterguy |

1,449,658 | Breaking News: AI is Not Replacing These Jobs Anytime Soon (Sorry Robots) | Jobs that require heart: Jobs that require empathy and human connection, like therapists and... | 0 | 2023-04-27T06:42:35 | https://dev.to/vvk/breaking-news-ai-is-not-replacing-these-jobs-anytime-soon-sorry-robots-211c |

Jobs that require heart: Jobs that require empathy and human connection, like therapists and bartenders

Jobs that require humor: Jobs that require a sense of humor, like comedians and copywriters

Jobs that require creativity: Jobs that require creative thinking, like artists and musicians

Jobs that require critical thinking: Jobs that require critical thinking and problem solving, like detectives and scientists

Conclusion: A wrap-up that highlights the importance of human skills and why we should celebrate the jobs that AI can't touch | vvk | |

1,449,664 | 5 Best Frameworks: Cross-Platform Mobile App Development | Cross-platform mobile app development frameworks are the best thing in the development process.... | 0 | 2023-04-27T06:55:16 | https://dev.to/quokkalabs/5-best-frameworks-cross-platform-mobile-app-development-38kf | mobile, android, ios, development | [Cross-platform mobile app development](https://quokkalabs.com/mobile-app-development?utm_source=Dev.to&utm_medium=blog&utm_campaign=Service%20Page) frameworks are the best thing in the development process. Rather than only one platform, any developers can now create applications for various platforms. They don't need to repeatedly compose a similar source code - it's hugely time to be protected and energy efficient.

However, there's no question that individuals and organizations are eager to hire cross-platform app developers to deal with such frameworks since they're profoundly helpful but also very costly. Yet at the same time, they, in all actuality, do enjoy extraordinary benefits against the high advancement costs.

_There are various frameworks available in the market. The developers accepted and adopted a few of them as pros and cons. So let's check out probably the best cross-platform application framework for creating robust, versatile mobile applications that are accessible nowadays:_

## 1. React Native

- Programming language: JavaScript

- Top Applications: Airbnb, Instagram, Skype, Shopify

Created by Meta (previously Facebook) in 2015, [React Native](https://quokkalabs.com/react-native-app-development?utm_source=Dev.to&utm_medium=blog&utm_campaign=Service%20Page) has progressively developed its prevalence in the local dev community. This open-source UI improvement framework joins the best of JavaScript and React.JS while permitting engineers to compose modules in Java, Swift, or Objective-C dialects. Using local native modules and libraries in the platform, the development group can execute weighty activities like picture altering, video handling, and different errands not covered by the framework APIs.

### Other exceptional key features of React Native:

- Its quick refresh features include giving developers momentary input on alters made to their React parts. Upholds an assortment of outsider libraries, for example, Redux, MobX, Ionic, and so on, considering straightforward relocation.

- It has Part-based GUI creation that's mainly used for front-end applications creation. It provides support like dealing with user communications through touch occasions, simplifying it for developers to reuse existing UI parts.

- Besides, it has a sizable community. Various UI structures, instructional exercises, and libraries are accessible to work with the expectation of absorbing information.

- Concerning commitment to gathering articles, instruments, and assets about unambiguous innovations, React Native is vastly improved than Flutter, Xamarin, or Ionic.

- Its specialty is giving the engineers a choice to compose code only one opportunity to get utilized for both the iOS and Android stages. Because of some code benefits, the improvement time gets diminished, and consequently, engineers can focus on one more mechanical part of the application advancement.

- The requirement for composing the code just once in this manner takes care of picking either native or cross-platform app development. This framework by Facebook is ideal for growing startup businesses and entrepreneurs.

**Read More:** {% embed https://quokkalabs.com/blog/how-to-hire-react-developers-a-step-by-step-guide-for-recruiters-2/?utm_source=Dev.to&utm_medium=blog&utm_campaign=Web%20Blog %}

## 2. Flutter

- Programming language: Dart

- Top Applications: Alibaba, Google Ads, PostMuse

Released by Google in 2018, it is an open-source framework designed to create mobile, web, and desktop applications from one codebase. Flutter is another ideal choice for building cross-stage versatile applications. Nonetheless, which isolates Flutter from different systems is the programming language.

Flutter utilizes Dart, while React involves JavaScript as its customizing language. Disregarding being new and one of a kind to the universe of developers, Flutter is keeping different structures honest because of its superb graphical library and smooth execution. It gives similar local insight and gives the clients a one-of-a-kind and upgraded application-seeing experience.

### Other exceptional key features of Flutter:

- It offers widgets drawn from its superior rendering engine - fast, customizable, and alluring.

- Dart language offers more modern components to alter UI design for any screen, simplify debugging, streamline investigating, and work on the application's presentation through AOT gathering.

- Hot Reload's usefulness allows engineers to see prompt updates of new codes without application reload.

- Because of its rich widgets, Flutter applications will generally have an incredible look and feel. It likewise furnishes phenomenal documentation with substantial group help, making it simple to begin creating with the stage. At long last, its improvement time is much quicker than the native development.

**Read More:** {% embed https://quokkalabs.com/blog/what-is-new-in-flutter/?utm_source=Dev.to&utm_medium=blog&utm_campaign=Web%20Blog %}

## 3. Xamarin

- Programming language: C#

- Top Applications: UPS, Storyo, Alaska Airlines

The next competitor in the list of cross-platform [mobile app development](https://quokkalabs.com/mobile-app-development?utm_source=Dev.to&utm_medium=blog&utm_campaign=Service%20Page) frameworks is Xamarin. This Microsoft-claimed stage can be sent to construct Android, iOS, and Microsoft applications with .NET.

With Xamarin, engineers can share around 90% of their applications across stages. They just need to compose all of their business rationales in a solitary language or reuse existing application code yet accomplish the native look, feel, and execution at every stage.

### Other exceptional key features of Xamarin:

- Specifically ties in almost all essential stage SDKs in Android and iOS, thus offering intense compile time checking. This outcome resulted in fewer run-time mistakes and productive applications.

- Supporting an immediate joining of Objective-C, Java, and C++ libraries, Xamarin qualifies engineers to reuse existing iOS and Android libraries written in those dialects.

- It is based on C#, an advanced programming language that offers many benefits over Objective-C and Java, including dynamic language capacities and utilitarian builds such as lambdas, equal programming, generics, LINQ, etc.

- Utilizing the .NET BCL, Xamarin-based applications approach a significant assortment of classes with abundant smoothed-out usefulness like IO, XML, String, etc.

- Existing C# can likewise be ordered for use in an application, opening up an option of thousands of libraries that add highlights past the BCL.

**Read More:** {% embed https://quokkalabs.com/blog/a-step-by-step-guide-to-the-mobile-app-development-process/?utm_source=Dev.to&utm_medium=blog&utm_campaign=Web%20Blog %}

## 4. Ionic

- Programming language: JavaScript

- Top Applications: BBC, EA Games, MarketWatch

One more eminent cross-platform framework in this rundown is Ionic. The open-source UI tool compartment assists developers with making versatile mobile and desktop applications utilizing a blend of local and web innovations like HTML, CSS, and JavaScript, with integration for the Angular, Vue, and React framework systems.

### Other exceptional key features of Ionic:

- Because of a SaaS UI structure framework fabricated explicitly for the versatile operating system, Ionic offers various UI parts for building applications.

- With the help of Cordova and Capacitor modules plugins, the Ionic gives developers admittance to the gadget's implicit functionalities, containing cameras, sound recorders, GPS, etc.

- It offers its own IDE called Ionic Studio, made for fast application improvement and prototyping with negligible coding.

- With Ionic, developers can create a splendidly innovative UI and integrate easy-to-use components into the application. So, Ionic-based applications are brilliant and naive, making them the best system for PWA development.

## 5. Apache Cordova

- Programming language: HTML 5, CSS3, and JavaScript

- Top Applications: Untappd, Localeur, SparkChess

Apache Cordova, previously known as PhoneGap, is an open-source versatile development framework for making hybrid portable applications utilizing standard web innovations like _HTML, JavaScript, and CSS_. Consequently, developers are not required to become familiar with a particular improvement programming language to create an application. Applications work inside coverings designated to every stage and depend on norms agreeable to APIs to get to every gadget's information, sensors, and network.

### Other exceptional key features of Apache Cordova:

- It gives a cloud arrangement that allows developers to share their applications during the improvement interaction for input from colleagues.

- It permits admittance to local gadget APIs to be reached in a module manner because of its plugin-able architecture.

- The most concerning issue with Cordova and other cross-stage platforms is that their applications won't be as quick as local code composed on the gadget. A genuine illustration of this is gaming.

- Complex animation by the canva in Cordova isn't going to perform as well as local code and, in all probability, wouldn't be satisfactory to giving clients a decent encounter.

### That's it!!!

These are some of the ideal frameworks that expert developers mostly choose, but you'd need to pick the one that would go perfectly with your action plan. The one that could ideally address your business on each significant cross-platform mobile application. If you have any queries, questions, or suggestions, comment below or [contact us now](https://quokkalabs.com/contact-us?utm_source=Dev.to&utm_medium=blog&utm_campaign=Contact%20Us).

Thanks!!!

| labsquokka |

1,449,808 | STORY OF MY LIFE | I am Elyse NIYOMWUNGERE but call me Songa i am refugee in Mahama Camp in Rwanda coutry. I studied... | 0 | 2023-04-27T09:39:25 | https://dev.to/songa210/story-of-my-life-2npi | i, am | I am Elyse NIYOMWUNGERE but call me Songa i am refugee in Mahama Camp in Rwanda coutry.

I studied Computer Application And Multimedia In Kirehe Adventist TVET School(KATS).

I worked in Capital Record Empire LTD in terms of Multimedia in kigali, remera and also i worked in JAPTECT Company in terms of Hardware Maintenance and Networking in Kigali Rusozi.

so my every day life, i like or i worked photographer and Editing videos.

so this is my story of life thank you.

| songa210 |

1,450,000 | Interview experience of Junior Ruby and Rails developers | Dear Junior Ruby and Rails Developers, Can you shed some light on your experiences applying for jobs... | 0 | 2023-04-27T12:35:42 | https://dev.to/makisushi/interview-experience-of-junior-ruby-and-rails-developers-4968 | ruby, rails, beginners, discuss | Dear Junior Ruby and Rails Developers,

Can you shed some light on your experiences applying for jobs and the interview process?

- How did it go?

- Where did you get stuck?

- What was the result?

- What did you learn,

- What were you missing?

- If you are aware, what did you have that helped you get the job?

Also, anything else that you think is worth sharing. | makisushi |

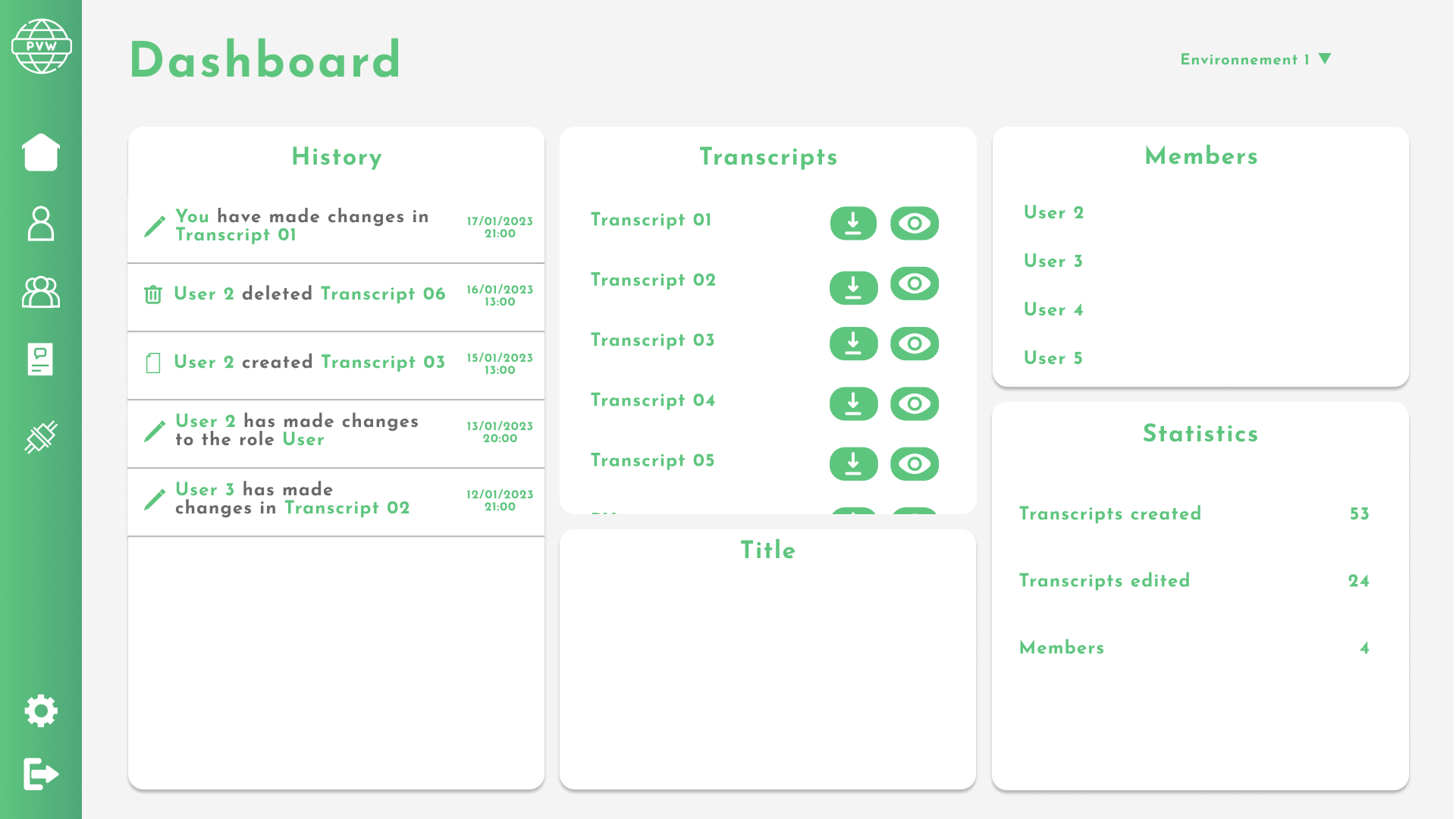

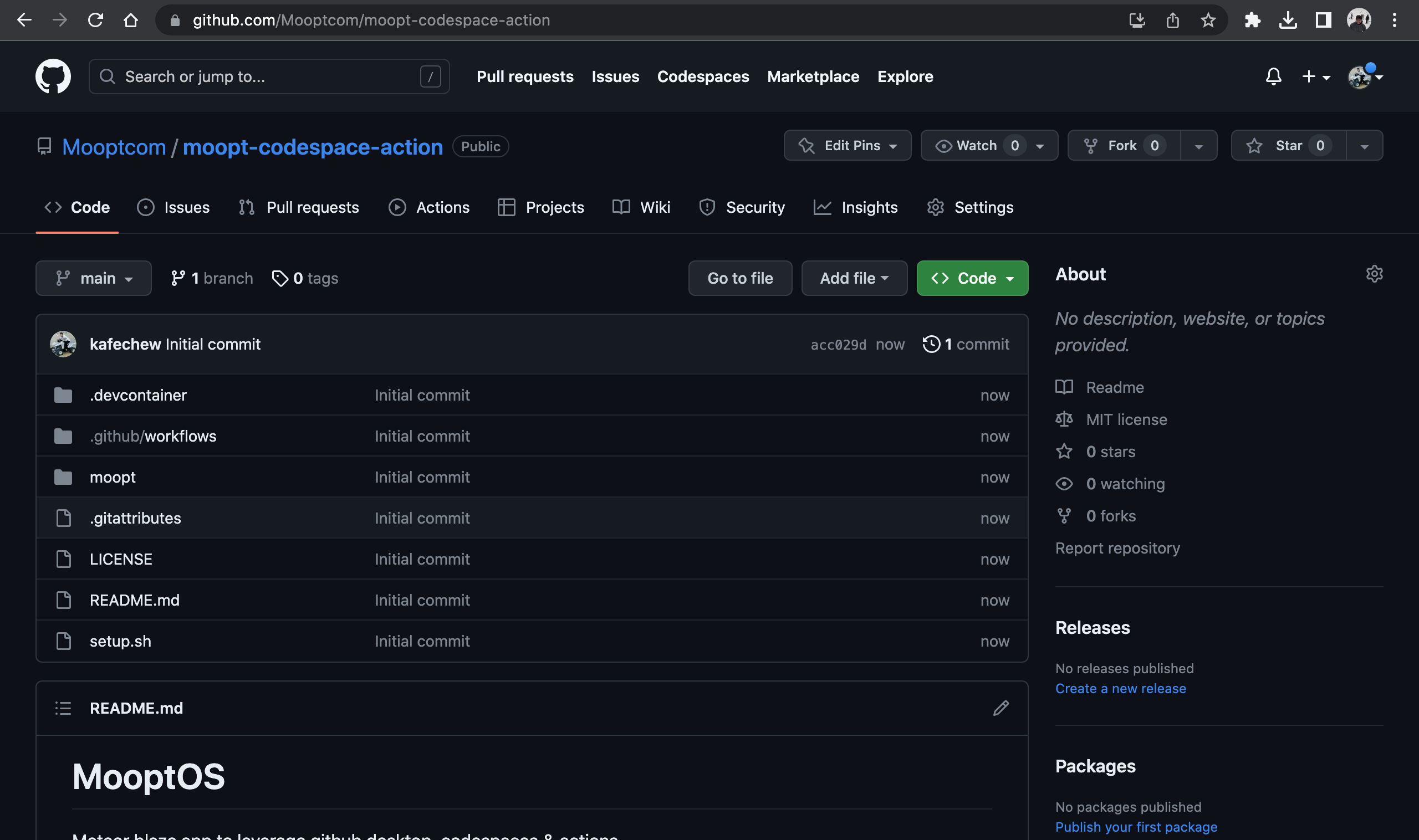

1,450,009 | Transcript Management App | What I built I built a transcript management web application that allows accountant... | 0 | 2023-04-27T12:57:55 | https://dev.to/tommyriquet/transcript-management-app-23n3 | githubhack23 | ## What I built

I built a transcript management web application that allows accountant offices to manage transcripts in a centralized environment. The frontend of the application is built using React, while the backend is built using NodeJS.

### Category Submission:

DIY Deployments

### Screenshots

### Description

This web application was made to simplifies the management of transcripts for employees in offices. They can easily manage and access all their transcripts and minutes from a centralized location. This ensures that they do not have to waste time and resources on searching for specific transcripts.

### Link to Source Code

PVonWeb's source code can be found [here](https://github.com/TommyRiquet/PVonWeb)

### Permissive License

PVonWeb is licensed under the MIT license.

## Background

PVonWeb was created to solve a specific problem faced by accountant offices. The management of transcripts and minutes is a critical task in the accounting industry, but it can be time-consuming and tedious. PVonWeb was designed to simplify this process and make it more efficient.

### How I built it

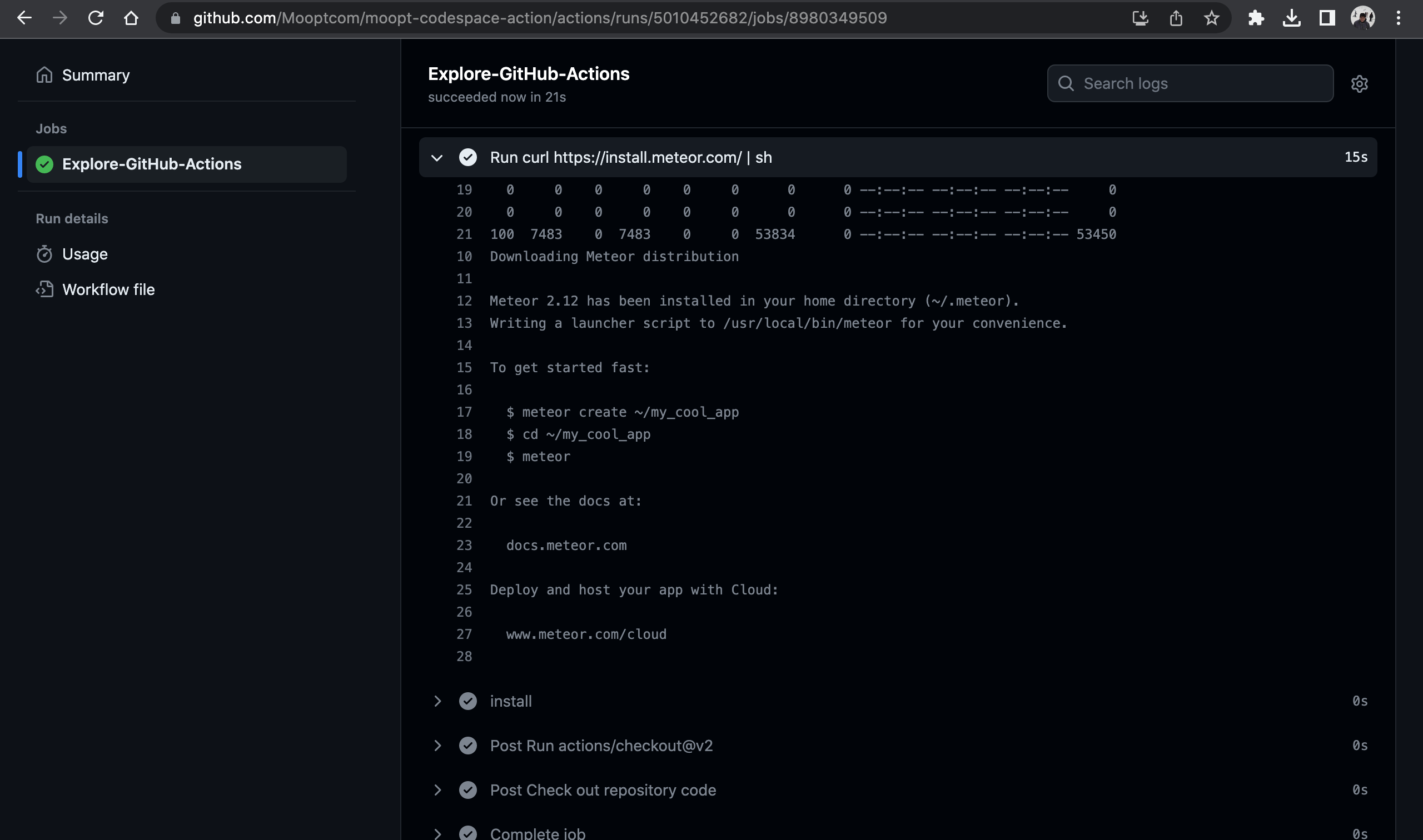

PVonWeb was built using React for the frontend and NodeJS for the backend. GitHub Actions were used to automate the whole development process, from the unit tests to the deployment process, which made it easy to update the application with new features and bug fixes.

#### Workflows built with Github Actions

- Code Linter checking

- Commit message checking

- Unit testing

- Integration testing

- Testing deployement script

- End-to-end testing

- Staging deployement script

- Production deployement script

#### Github Templates

We created a GitHub Issue template to make it easier and faster for developers to submit issues. This template provides a step-by-step guide for creating user stories, bug reports, or technical tasks, and ensures that all issues are consistent in format. By using this template, developers can submit their issues in a structured format, which makes the review process smoother.

#### Conclusion

During the development of this project, I gained valuable experience and knowledge in using Github Actions for automation. Prior to this, I had limited knowledge of Github Actions, but throughout the development process, I became more proficient and learned a great deal about its capabilities. The skills I acquired through this experience will be beneficial for future projects as well.

### Additional Resources/Info

+Made with love from Belgium

| tommyriquet |

1,450,026 | Where's your author? | Hi all, Continuing fixing some sites without an author, I have came across these projects: ApiTestEz... | 0 | 2023-04-27T13:28:49 | https://dev.to/fredadiv/wheres-your-author-a65 | osdc | Hi all,

Continuing fixing some sites without an author, I have came across these projects:

[ApiTestEz](https://github.com/bruce4520196/ApiTestEz#readme) which is missing the pyproject.toml file. I added it with the author's name, but it still missing the email address. [PR](https://github.com/bruce4520196/ApiTestEz/pull/1/commits/93e303d0d16f3f8889c771eb2e3a4486a7cf0695)

[lightgrad](https://github.com/marcosalvalaggio-bip/lightgrad) was also missing the author id, so I added the toml file. Unfortenetly, the Github user has no details so I just used the user name. [PR](https://github.com/marcosalvalaggio-bip/lightgrad/pull/1/commits/c956b8b5ccae7954a073c15e6e000abb85e098fa)

The author accepted the PR in merged it into the project :-)

[gbs](https://github.com/gopherball/gbs) has a toml file, but without the author section, so I added it. [PR](https://github.com/gopherball/gbs/pull/1/commits/875bff72b4791facd6a99567a9c8051d198162aa)

[autosrt](https://github.com/botbahlul/autosrt) is also missing the pyproject file so I added it. [PR](https://github.com/botbahlul/autosrt/pull/4/commits/83c8d773e39177a9c3250826a4d008fa93f69e17)

[pheno-utils](https://github.com/hrossman/pheno-utils) has no indication about the author so I added the toml file. [PR](https://github.com/hrossman/pheno-utils/pull/1/commits/ee82acd89dd131dc500a3cbc6769bc68b8d6e62f)

| fredadiv |

1,450,167 | All Articles Are Wrong, Some Are Useful | As software developers, we are always foraging the internet for articles, tutorials, StackOverflow... | 0 | 2023-04-27T15:35:03 | https://dev.to/nkrumahthis/all-articles-are-wrong-some-are-useful-3h2c | beginners, productivity | As software developers, we are always foraging the internet for articles, tutorials, StackOverflow answers and blog posts hoping that they have the latest and greatest solution for our coding problems. But how many times have we found ourselves frustrated with code that doesn't work, despite following the instructions from an article or tutorial to the letter? Or worse yet, how many times have we been misled by articles that are simply incorrect?

The truth is, all articles are wrong. Every single one. No matter how well-researched, well-written, or well-intentioned an article is, there is always the possibility that it is wrong in some way. Maybe the code won't work in a certain environment, or maybe it relies on assumptions that aren't true in every case. Even articles that are factually correct can be wrong in their applicability to your specific situation.

However, just because all articles are wrong doesn't mean that they are useless. In fact, some articles can be incredibly helpful in our daily work. But how do we distinguish the useful articles from the ones that will lead us astray?

One way is to approach articles with a healthy dose of skepticism. Don't blindly follow the instructions or advice given in an article without first testing it thoroughly in your own environment. Ask yourself if the article makes sense for your specific situation, or if there are any assumptions that the article is making that don't apply to your code. And don't be afraid to question the author or reach out to the community for clarification.

Another way to determine the usefulness of an article is to consider the source. Is the author an expert in the field, or are they simply regurgitating information they found elsewhere? Is the article backed up by data or research, or is it purely anecdotal? Taking the time to evaluate the credibility of the source can help you determine if the article is worth your time and attention.

Finally, it's important to remember that no single article or source can provide all the answers. As software developers, we are constantly learning and growing, and the best way to do so is by exposing ourselves to a wide variety of opinions, ideas, and techniques. So don't be afraid to read articles that challenge your assumptions or offer a different perspective, even if you ultimately decide that they aren't useful for your specific situation.

In conclusion, all articles are wrong, but some can be incredibly useful. As software developers, it's our job to approach articles with a critical eye, test them thoroughly, and evaluate the credibility of the source. By doing so, we can continue to learn and grow in our careers, even in the face of conflicting information and opinions. | nkrumahthis |

1,450,523 | Why Choose CoinEx? | Experience What Makes CoinEx So Remarkable. CoinEx is a robust name within the cryptocurrency sector... | 0 | 2023-04-27T21:45:30 | https://dev.to/agboobinnaya/why-choose-coinex-42jg | crypto, blockchain, web3, defi | _Experience What Makes CoinEx So Remarkable._

CoinEx is a robust name within the cryptocurrency sector and is often considered synonymous with privacy while offering a plethora of stablecoins—over 600 in fact. With access to perpetual and spot markets, even margin trading, and all of this coupled with low fees and amazing security, CoinEx has become an all-in-one store for the majority of cryptocurrency traders. For most, its biggest selling point would probably be its lack of mandatory KYC requirements. However, the exchange has a lot more to offer besides that.

**What Makes It So Special?**

CoinEx comes with every essential feature for beginner and veteran cryptocurrency traders alike. Veteran traders, in particular those who have high requirements pertaining to privacy, will find that CoinEx has everything they could possibly need. With that said, let's look at some of its notable features.

For one, as hinted at earlier, it has a large selection of cryptocurrencies, and not just that, but it is constantly integrating exciting new projects, which go through the several vetting processes it has. Then there are the fees to consider, which are competitively low. Furthermore, CoinEx's fees can be reduced even further if one holds their native token, i.e., CET, or chooses it when paying their fees.

On the other hand, its security is top-notch, which is made even more apparent since it has never experienced any kind of hack. This is due to the fact that it utilizes cold wallet storage and lets its users know if anything suspicious happens on their accounts. Such security is also backed by its proof-of-reserves (PoR) and its 100% reserve rate. Also, with PoR, investors can rest assured that CoinEx actually holds their assets and can cover their withdrawals at any given moment, allowing for more transparency.

As mentioned previously, CoinEx does not make KYC mandatory. All one needs to do is enter their email address, a reliable password, and 2FA, and they're all set to go and can begin trading. Still, for withdrawals exceeding $10,000, you need to get verified. Another thing worth mentioning is that it does not charge any fees for deposits, while withdrawals can incur some fees depending on the blockchain involved. Then there is its detailed Help Center as well, which consists of numerous detailed guides for practically any problem you might be facing, and if by some chance you are not able to find what you are looking for, you can directly contact their customer support.

**Final Thoughts**

CoinEx can be an excellent crypto exchange for users who are searching for more robust spot trading markets, as well as for margin trading and perpetual futures if they happen to be on the more experienced end of traders. The number of cryptocurrencies that CoinEx supports is enormous and is still gradually growing, which means you'll be able to find a decent collection of low-market-cap altcoins on offer. Then there is the fact that it does not force KYC on its users, which is particularly relevant if they prefer their privacy.

| agboobinnaya |

1,450,617 | Invariant Violation: requireNativeComponent: “RNSScreenStackHeaderSubview” was not found in the UIManager | Hello developers and enthusiasts of React Native and Expo! Today, let’s discuss an error that you... | 0 | 2023-04-27T22:52:27 | https://dev.to/okorelens/invariant-violation-requirenativecomponent-rnsscreenstackheadersubview-was-not-found-in-the-uimanager-3op6 | expo, reactnative, javascript |

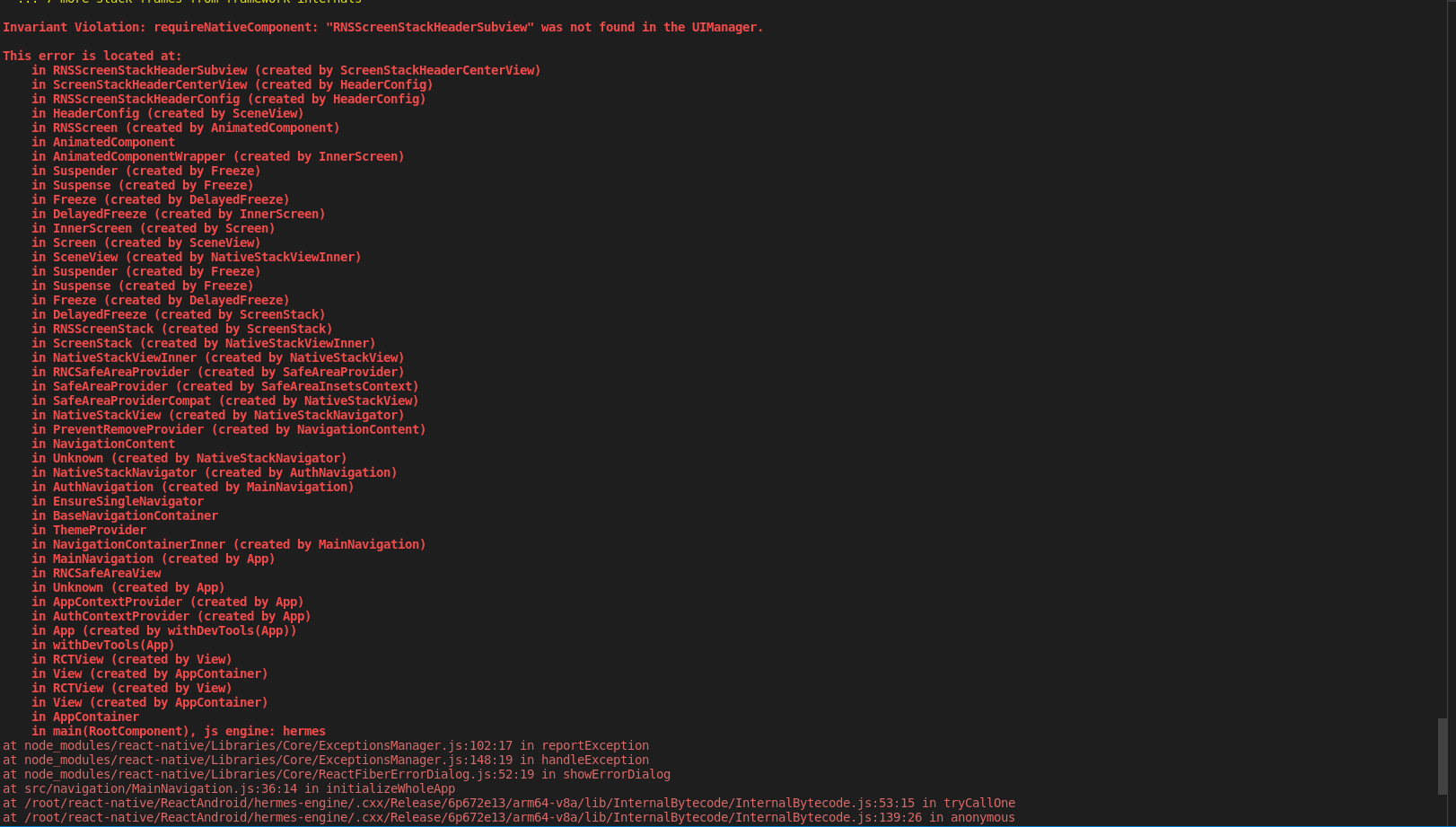

Hello developers and enthusiasts of React Native and Expo! Today, let’s discuss an error that you may have encountered or could encounter in the future: “Invariant Violation: requireNativeComponent: ‘RNSScreenStackHeaderSubview’ was not found in the UIManager”.

I recently faced this error while building a mobile application for a client. After running “eas build” and generating the app successfully, it kept crashing upon opening. Interestingly, the app worked fine whenever I served it on Expo Go. Eventually, I had to debug the application and that’s when I came across this error.

In my experience, tracing the parent of the component or the stack and installing the package that uses the component often solved such errors. However, this error was different and my first attempt to find a solution on Stack Overflow and online articles didn’t work. I even tried ChatGPT but to no avail.

If you’re facing this error, the first thing to note is that it’s caused by conflicting dependencies that make use of the react-navigation and react-native-screens packages, or there could be a missing package in your project. To resolve this error, make sure that these packages are installed and up-to-date.

_npm i react-navigation react-native-screens @react-native-community/masked-view react-native-gesture-handler react-native-reanimated react-native-safe-area-context_

We have another option, which involves deleting the node modules folder and package-lock.json files. Then, we can update all the packages by running the appropriate command.

_npm update_

I’d like to share another helpful tool called “expo doctor”. It checks that the dependencies installed in your project match the correct versions that your app is using. By appending “-fix-dependencies”, this tool can install the correct versions of the packages used by your app.

_expo doctor --fix-dependencies_

Once you have completed these steps, rebuild your app and you’ll be one step closer to releasing your application.

| okorelens |

1,450,702 | Using Apache ECharts with ReactJS and TypeScript: Server Side Rendering (SSR) | What is SSR? BairesDev explains this well: Server Side Rendering (SSR) is a paradigm under... | 0 | 2023-10-19T07:11:17 | https://dev.to/manufac/using-apache-echarts-with-reactjs-and-typescript-server-side-rendering-ssr-28m7 | ssr, react, echarts, typescript | ### What is SSR?

[BairesDev](https://www.bairesdev.com/blog/server-side-rendering-react/) explains this well: Server Side Rendering (SSR) is a paradigm under which we _render web pages on the server before sending them to the client_.

It has both [pros](https://www.debugbear.com/blog/server-side-rendering#what-are-the-advantages-of-server-side-rendering) and [cons](https://www.debugbear.com/blog/server-side-rendering#are-there-disadvantages-to-server-side-rendering), but some use cases may well justify its adoption. For instance, better search engine indexability could be a huge selling point for SSR for some people.

Broadly speaking, whatever aligns well with your end objectives (CSR, SSR, or a hybrid approach) is fine. However, in this article, we mainly explore **how to render charts using Apache ECharts via SSR**.

### How does Apache ECharts help us in building SSR apps?

As a charting library, it offers built-in support for both CSR and SSR modes.

The visualization instances produced by Apache ECharts can easily be converted to PNG data URLs on the server side. Further, it exposes an [isomorphic](https://en.wikipedia.org/wiki/Isomorphic_JavaScript) API to allow converting these instances to SVG strings as well.

Subsequently, these image strings can be rendered in the browser using the [`<img>`](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/img) element, allowing for server-side rendering (SSR) capability.

The following discussion focuses on building ReactJS SSR applications using **NextJS** but this approach should work as a blueprint for other frameworks too.

---

Before we dive into the SSR way of using ECharts, here's a quick refresher on the CSR approach.

### CSR Snippets

If you pick the Canvas Renderer, here's how a CSR Scatter Plot component may look like. _If the following syntax feels alien, you may want to go through two other articles in this series first: [this](https://dev.to/manufac/using-apache-echarts-with-react-and-typescript-353k) and [this](https://dev.to/manufac/using-apache-echarts-with-react-and-typescript-optimizing-bundle-size-29l8)._

```ts

// Import necessary modules and types from "echarts"

import { ... } from "echarts/...";

import { CanvasRenderer } from "echarts/renderers";

import { useRef, useEffect } from "react";

use([

...,

...,

CanvasRenderer,

]);

export interface Props {

theme?: "light" | "dark";

data: Record<string, number>[];

}

export function CanvasRendererScatterPlot({

theme,

data,

}: Props): JSX.Element {

const chartRef = useRef<HTMLDivElement>(null);

useEffect(() => {

const chart = init(chartRef.current, theme);

return () => {

chart?.dispose();

};

}, [theme]);

useEffect(() => {

if (chartRef.current !== null) {

const chart = getInstanceByDom(chartRef.current);

const option = {...};

chart?.setOption(option, true);

}

}, [data, theme]);

return (

<div

ref={chartRef}

style={...}

/>

);

}

```

But if you want to pick the SVG Renderer instead, the code is mostly the same except that instead of using `CanvasRenderer`, we will need to use `SVGRenderer`.

Now, moving onto the SSR approach:

### SSR Snippets

**A. Canvas**

```ts

import { ... } from "echarts/...";

import { CanvasRenderer } from "echarts/renderers";

import { Canvas, createCanvas } from "canvas";

use([

...,

...,

CanvasRenderer,

]);

export function getCanvasScatterPlotServerSideProps(points: number): Canvas {

const canvas = createCanvas(400, 500);

const chart = init(canvas as unknown as HTMLCanvasElement);

const option = {

...,

series: {

type: "scatter",

encode: {

x: "x",

y: "y",

},

progressiveThreshold: points + 1,

},

...

};

chart.setOption(option);

return canvas;

}

```

The `dataURL` can be generated from the `Canvas` instance via `canvas.toDataURL()`. This data URL can be sent to the client which can then render the chart image as follows:

```ts

import Image from "next/image";

export function SSRCanvasRendererScatterPlot({ url }: { url: string }): JSX.Element {

return (

<div style={...}>

<Image src={url} width={400} height={500} alt="SSR canvas scatter plot" />

</div>

);

}

```

**B. SVG**

```ts

import { ... } from "echarts/...";

import { SVGRenderer } from "echarts/renderers";

use([

...,

...,

SVGRenderer,

]);

export function getSVGScatterPlotServerSideProps(points: number): string {

const chart = init(null as unknown as HTMLElement, undefined, {

renderer: "svg",

ssr: true,

width: 400,

height: 500,

});

const option = {

...,

animation: false,

series: {

type: "scatter",

encode: {

x: "x",

y: "y",

},

progressiveThreshold: points + 1,

},

...

};

chart.setOption(option);

const chartData = chart.renderToSVGString();

chart.dispose();

return chartData;

}

```

Similar to Canvas, the SVG string can be sent to the client where it can be rendered as follows:

```ts

import Image from "next/image";

export function SSRSVGRendererScatterPlot({

svgDataString,

}: {

svgDataString: string;

}): JSX.Element {

return (

<div style={{ ... }}>

<Image

src={`data:image/svg+xml;utf8,${encodeURIComponent(svgDataString)}`}

width={400}

height={500}

alt="SSR SVG scatter plot"

/>

</div>

);

}

```

Now that we know how to generate charts on server-side, we simply need a mechanism to fetch that data on the client-side for final rendering. The [API Routes](https://nextjs.org/docs/pages/building-your-application/routing/api-routes) approach is showcased below. However, the [Server Components](https://nextjs.org/docs/app/building-your-application/rendering/server-components) approach should work fine as well.

```ts

// Next.js API route support: https://nextjs.org/docs/api-routes/introduction

import { getCanvasScatterPlotServerSideProps } from "@/components/SSRCanvasRendererScatterPlot";

import { getSVGScatterPlotServerSideProps } from "@/components/SSRSVGRendererScatterplot";

import { SSRScatterPlotData } from "@/components/utils";

import type { NextApiRequest, NextApiResponse } from "next";

export default function handler(req: NextApiRequest, res: NextApiResponse<SSRScatterPlotData>) {

const { points } = req.query;

let ssrData: SSRScatterPlotData = { svgDataString: "", canvasDataURL: "" };

...

...

const canvas = getCanvasScatterPlotServerSideProps(...);

const svgDataString = getSVGScatterPlotServerSideProps(...);

ssrData = { svgDataString, canvasDataURL: canvas.toDataURL() };

res.status(200).json(ssrData);

}

```