id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,475,257 | From Children's Musician to iOS Dev - Late in Life Career Change? | Hello Dev, I'm new here. This is my story... I teach college algebra. It’s a gig I took as a... | 0 | 2023-05-21T13:48:43 | https://dev.to/montyharper/from-childrens-musician-to-ios-dev-late-in-life-career-change-1dfa | beginners, career, mobile, ios | Hello Dev,

I'm new here. This is my story...

I teach college algebra. It’s a gig I took as a stop-gap measure to get myself past a rough patch in my music career. Spoiler alert — that didn’t work out. I’m still teaching college algebra, nearly seven years on.

For most of my adult life I’ve made a living as a full-time children’s songwriter, performer, and teaching artist. I still perform at libraries during the summer, and I have several CDs out. They’re pretty good. I’m on [Spotify](https://open.spotify.com/artist/66z8hGVbduE6jpBAHY7Eql) if you want to look me up.

I’ve also always wanted to be an author, and teaching has given me time to complete three middle-grade novels. I’ve been hoping this would become my creative salvation. I could get published, be a full-time writer, quit teaching, and make music on the side.

But authorship is a long and arduous journey. And teaching as an adjunct instructor has become my dead-end, low-pay, no-benefits, no-job-security albatross — it’s not a career. With neither music nor writing poised to take over any time soon, a neutron-star-sized clump of dread formed in my stomach when it came time to sign up for yet one more semester of college algebra this coming Fall. I felt stuck and depressed and desperate for change.

Desperate enough to start poking around, even though I couldn’t imagine a better option that wouldn’t require expensive education or years of prior experience. _You_ can, though, right?

What I discovered seemed way too good to be true! First of all, you need to know that all my non-music, non-writing projects over the years — anything I’ve done for fun or contemplated turning into some kind of business — from my high school years through to the pandemic — all those projects involved some kind of coding. I just never realized I was developing job-worthy skills that could lead to a fun, creative, high-earning career! I might even be able to work from home. With a bit of training I could potentially start job hunting within a few months!

_What?!? Is this for real? Pinch, pinch._

After a few days of giddy research, I jumped on board. I signed up for Udacity’s iOS Development course on April 22, 2023.

I’m writing this one month later from the eye of an information hurricane. I’ve been completely obsessed! All that I’ve encountered in the wonderland of tech, even including all the hurdles and frustrations (of which there are many), leaves me convinced I’ve dropped myself into the right place.

Simultaneously, I’m terrified I’ve made a huge mistake. Maybe I’m too old for this. Maybe I don’t have what it takes. Maybe it is all too good to be true. Maybe I’m chasing an unattainable dream.

Doesn’t matter.

If all I acquire is the ability to complete a few personal projects, it’ll be worth it. One thing on my to-make list is a calendar for my elderly mom. She has a brain disease that leaves her unable to comprehend time, which gives her constant anxiety. None of the dementia calendars out there have helped, and they all work on the same principal. My idea is different. Maybe it’ll work better for her. When I get discouraged, I picture my mom using an iPad, running my calendar app. For now, that project is my North Star. I have until mid August to get as far as I can before algebra instruction reclaims my time for another semester.

And I want that tech career, so I’m going all in. As I understand it, that means social media / blogging / learning in public. So here I am ready to give it my best!

What does it take for a fifty-something-year-old children’s musician to snag a new career in tech? Give me a follow. Let’s find out together!

| montyharper |

1,475,261 | Beyond Bugs: Exploring the Depths of Software Testing | Testing is a critical phase in the software development process. It ensures that the software meets... | 0 | 2023-05-21T14:20:22 | https://medium.com/@iamfaisalkhatri/beyond-bugs-exploring-the-depths-of-software-testing-6a3b7057060e | softwaretesting, softwareengineering, testing, learning | Testing is a critical phase in the software development process. It ensures that the software meets the requirements, functions as expected, and is reliable. However, there are many challenges that can erupt throughout the testing process.

In this blog, let me take you on a tour of the challenges faced while performing software testing, provide you some tips and tricks to overcome those challenges and also talk beyond catching bugs to efficiently test the software ensuring quality releases.

## Challenges faced in Software Testing

Testers or the QAs face various obstacles while performing Software Testing. These challenges must be overcome in order to perform testing without any blockers ensuring on time release of software to the market. Some of the commonly faced challenges are -

**Time Constraints**: Time Constraint is the most commonly faced challenge by QAs in mostly every software project. Testers have to work within tight schedules with limited time available for testing. With less time to test makes the testers rush test activities which eventually leads to overlooking some critical issues.

**Changing requirements**: With Agile in place, though we have the flexibility to adhere to changing requirements, it can be a significant challenge, as the QA team has to align all the tests as per the latest changes. With the release deadlines, it leads to Technical Debt for the testing team as they have to update all the automation stuff as well as the test scenarios/cases to align them with latest specifications.

**Test Data Management**: Generating and managing realistic test data is a challenge that is faced by almost every testing team especially while working on large and complex systems. Accurate and realistic test data is required for effective testing, in case that is not available chances of missing critical scenarios increases.

**Limitation of resources**: Infrastructure, tools, and shortage of skilled QAs are some of the commonly faced limitations by QA teams. In addition to these, Insufficient testing environments, lack of automation tools, and a shortage of experienced personnel can lead to the overall blocking of software testing thereby slowing the delivery time to the market.

**Communication Issues**: Communication and Collaboration between the Testers, BAs, Developers and the Stakeholders is most important for successful testing and improving the overall quality of the product. However, due to miscommunication, lack of clarity, experience and poor collaboration, overall quality of the product suffers.

To overcome these challenges, effective planning, coordination between teams and the use of the right tools and techniques are required.

## How to overcome the software testing challenges?

With the multiple challenges obstructing software testing, it becomes a kind of blocker for the testing team to progress with their daily activities. It is therefore required to resolve these challenges to work efficiently. Here are the following tips that can be considered -

**Test Early**: Testing early helps in early detecting and resolution of the issues. It is not necessary that we test the software only. The bug can be hidden in the specification as well as the feature request. Going thoroughly through the specification can help in detecting the hidden issues and correcting them before they are leaked into the product. This helps in overall cost and efforts.

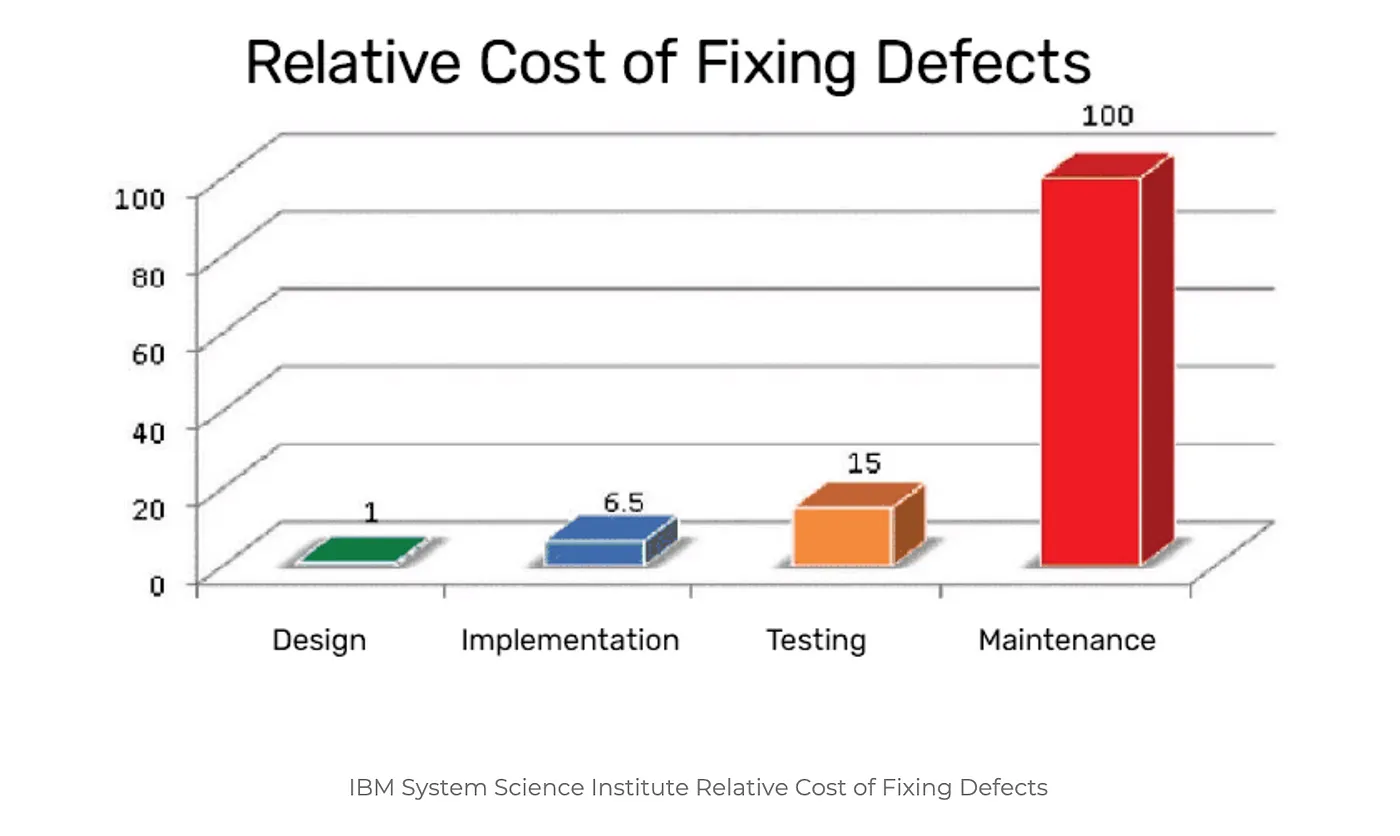

[IBM System Science Institute Relative Cost of Fixing Defects](https://www.researchgate.net/publication/255965523_Integrating_Software_Assurance_into_the_Software_Development_Life_Cycle_SDLC)

The cost of finding bugs in the later phase of software development is much higher as compared to that in the initial phase of development.

**Prioritize Test Cases**: Focus on the complex and high priority test scenarios, this will help you catch the critical bugs that might impact the overall functioning of the product. Prioritization can be done based on business requirements, its impact and probability of failure.

Business Analysts and Domain Experts’ help can be taken here to focus on major critical areas of the software and prioritize them for testing.

Review and update the Test Cases/Scenarios: As the requirements keep on changing, it is good to review and update the test cases/scenarios on a regular basis. This can help to avoid creation of Technical Debt and can save time by reusing these updated cases/scenarios for testing in subsequent cycles.

{% embed https://medium.com/@iamfaisalkhatri/best-examples-of-functional-test-cases-agilitest-blog-424260298b5 %}

**Automate Regression Tests**: Regression testing refers to retesting the existing functionality of the software after a defect is fixed, to check the stability of the software and verify that the fix doesn’t have any side effects. Performing regression testing manually is a very tedious and boring task and requires lots of manual effort. Hence, the test scenarios that need frequent testing should be automated.

Automating the regression tests helps in running all the tests quickly and get faster feedback on the builds.

For example, if you are working on a project that is developing an e-commerce application. Most of the regression tests would need registration, login, adding to product to cart, making payment, etc. All these test scenarios can be automated and regression testing can be a big time saver.

**Exploratory Testing of the product**: Exploratory testing refers to combining your experience, testing skills and domain knowledge to uncover the hidden issues that have not been discovered yet. It helps in adding more value to the product by exploring and testing the unexplored areas.

**Usability Testing**: It refers to testing the software for its ease of use. Most of the users stop using the software as they don’t consider it user friendly. This type of testing helps in checking the overall user experience and identify the design flaws and usability issues.

**Improve Communication and Collaboration **- Communication is the key in Software Engineering. Almost half of your issue gets resolved at the earliest if you communicate well. Having an open communication and collaborating with developers, designers, stakeholders can ensure shared understanding of requirements, defects, and testing goals.

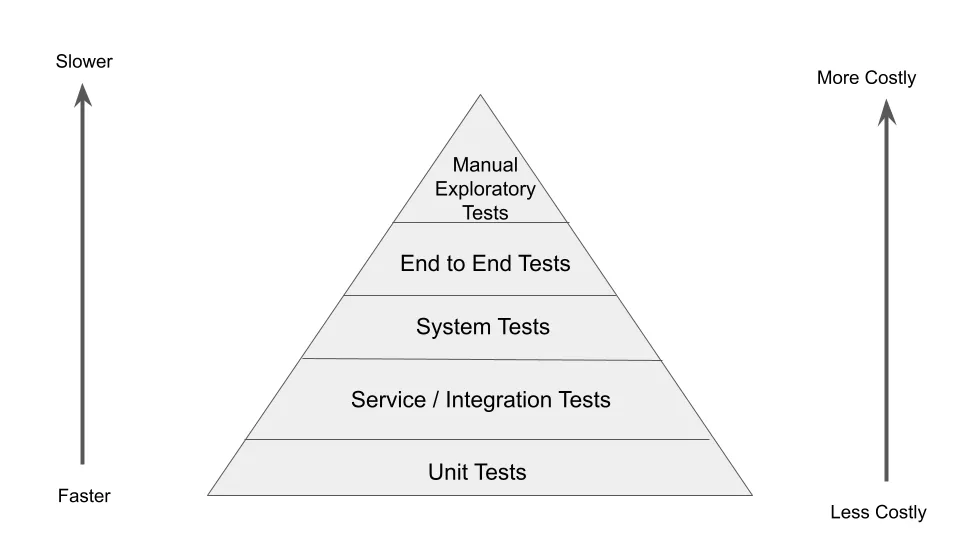

**Use Test Pyramid**: The concept of Test Pyramid was introduced by Mike Cohn in his book Succeeding with Agile. Test Pyramid helps in promoting a balanced approach to testing by organizing tests into different layers.

Unit Tests, Integration Tests, System Tests, End to End tests and Manual exploratory tests are layers of the Test Pyramid. As we have tests at every layer it helps in [Continuous Testing](https://medium.com/@iamfaisalkhatri/what-is-continuous-testing-lambdatest-bd2c464b414c) and provides with faster feedback by detecting issues early and fixing them.

## Software Testing Beyond bug detections

In this modern era of software development, there are teams working on agile methodologies like Scrum, Kanban, etc. We need to think about software testing beyond catching the bugs and writing the bug tickets.

How about taking actions to avoid the bug leakage in the code from the initial development stage itself? How about testing continuously without putting in manual efforts? How about baking the quality within the code itself?

There are lots of other areas to think about as well. It can all lead to delivering quality software and allow the team to maintain a balanced schedule between their work and life.

Let’s take a look at the other side of the term called “Software Testing”

**Confirming Functional Integrity**: Software Testing aims to ensure that the software is functioning as per the requirement specified. QAs check that the software is performing all the actions which it is intended to do. Also, more importantly, it is also important to verify that it is not performing the actions it is not intended to do. The latter point helps to find out the deviations from the actual working of the software and fix it before it makes its way to the production.

**Performing Usability and Accessibility Testing**: By performing the Usability testing, it can be ensured that the end user would be able to use the software smoothly. If the background color of the application is too dark or too itchy for the eyes, users may stop using it. Such suggestions can help improve the overall quality of the software.

Accessibility testing should be performed based on the targeted audience that would be using the software. By performing Accessibility Testing, we can check that software is easily accessible by differently abled people.

**Checking out for Security Vulnerabilities**: It can help in uncovering the potential security threats that can be faced by the system and make sure that the resources and data in the system are protected by attackers. The goal for doing this is-

- To identify the security risks.

- To measure potential threats.

- To identify vulnerabilities such as weak passwords, data breaches, unpatched software and misconfigured systems that could be exploited by the intruders.

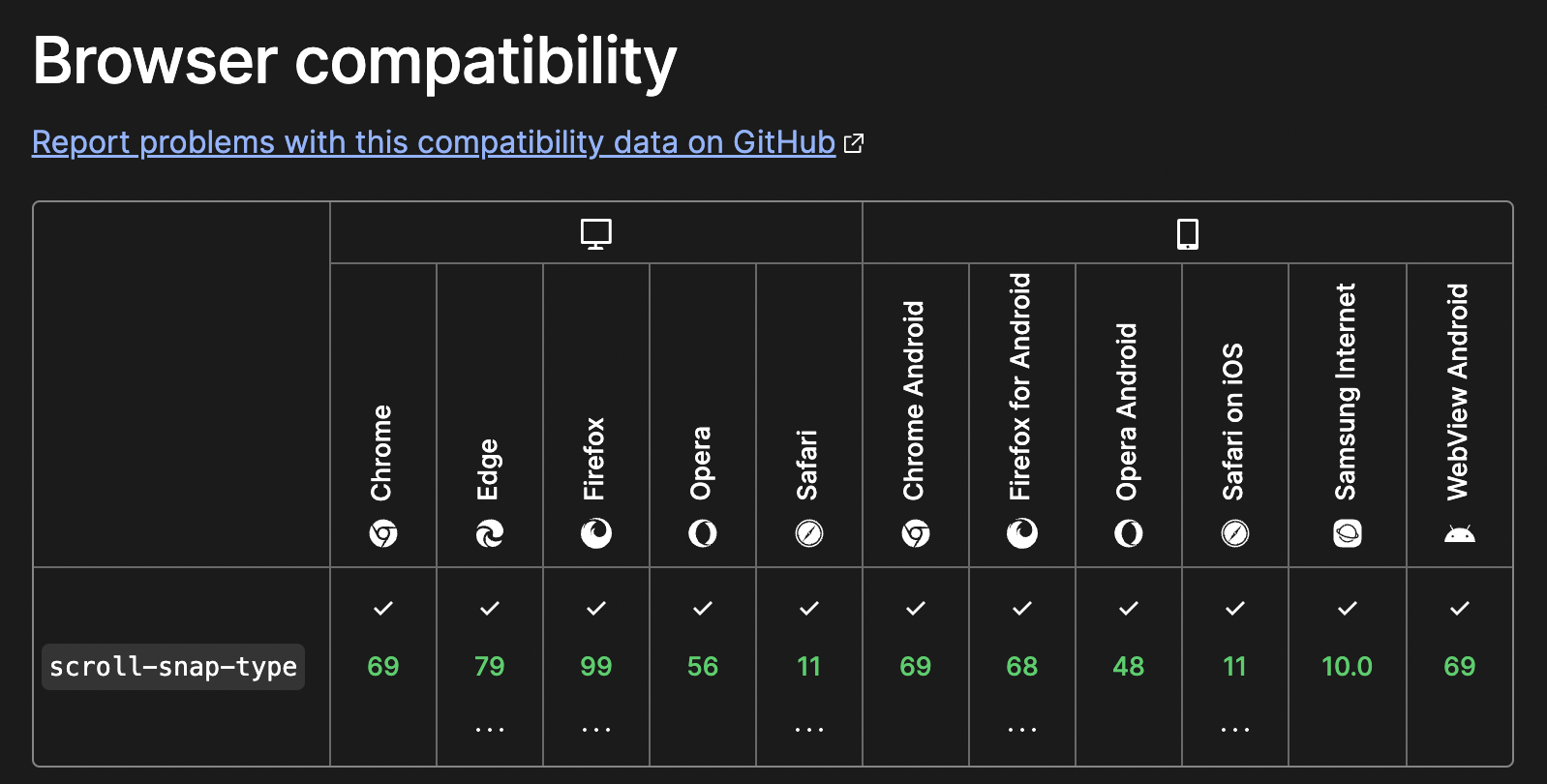

**Performing Cross Browser and Compatibility Testing**: With multiple Operating Systems such as Windows, MacOS, etc. and different browsers like Chrome, Firefox, Edge, Safari, etc. It is necessary to check the software is working as expected on different OS/Browser combinations. It can help uncover hidden issues which might go untested based on the assumption that if the software works on Chrome browser, it should work on Firefox browser as well.

Compatibility testing can help uncover issues related to installation, setup and configuration of software on different platforms like Windows, MacOS, Linux,etc.

{% embed https://medium.com/@iamfaisalkhatri/cross-browser-testing-in-selenium-webdriver-pcloudy-blog-46e9d70fa13a

%}

**Integrating automated tests in CICD**: Automated tests triggering automatically one after another once the developer pushes any code to the remote repository helps to get faster feedback without much manual effort. It can help bake the quality within the product itself.

{% embed https://medium.com/@iamfaisalkhatri/practical-guide-on-continuous-integration-for-automation-tests-vtest-blog-23e6d37f523f

%}

**Performance Optimization**: With multiple features getting added to the software brings in the responsibility to keep a check on the performance of the software.

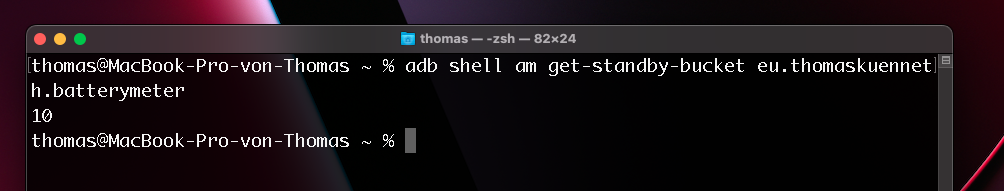

For example, in the case of a mobile project, areas like memory usage, battery usage, App load time, data sync time, page load time, App refreshing time, etc. are some of the areas which can be checked and accordingly if any lagging is found it can be improved. It is also important to check for fallback scenarios in case of network issues, App crash, etc.

{% embed https://mfaisalkhatri.github.io/2022/03/31/guidetomobiltesting/ %}

**Collaborating with DevOps**: QAs can also contribute and collaborate with the DevOps team. Checking logs in case of the pipeline failure, helping the developers in identifying the root cause of failures, setting up pipelines for different environments, running the test on cloud platforms, monitoring the automated pipelines, etc. are some of the areas where QAs can manage and help the DevOps team.

{% embed https://medium.com/@iamfaisalkhatri/devops-testing-vtest-blog-635d88de352f

%}

**Organize Bug Bash**: A bug bash is an activity where developers, testers, business analysts, designers, operations people and even stakeholders come together to perform all hands on, on the software. This helps in locating hidden bugs more quickly as every person uses the software differently. QAs should organize such bug bash a week before the release just in case if there are any critical issues discovered, it can be quickly fixed.

{% embed https://medium.com/@iamfaisalkhatri/what-is-a-bug-bash-c8ac3fd81661 %}

All this can happen once all the tedious tasks that need a lot of manual effort are automated so the testers can focus and work on overall improvement of the software.

{% embed https://youtu.be/Js0anAdYAA0 %}

## Conclusion

Software testing goes beyond simply finding and fixing bugs. It encompasses functional validation, performance optimization, verifying usability and accessibility, identifying security vulnerabilities , cross browser and compatibility testing, and regression testing. By exploring all these areas in detail, software testers can play a crucial role in ensuring the quality, reliability, and bring user satisfaction to software products.

It is important to tailor your testing techniques to the specific needs and requirements of the software project. By using comprehensive testing practices throughout the development lifecycle robust, efficient, and user-centric software can be delivered with quality.

> Freelance Work / Paid Trainings/Mentoring

> Contact me for Paid training/Mentoring related to Test Automation and Software Testing, ping me using any of the social media sites listed on [LinkTree](https://linktr.ee/faisalkhatri) or email me @mohammadfaisalkhatri@gmail.com.

| mfaisalkhatri |

1,475,580 | React Lifecycle | Introduction This would be a simple post about React lifecycle with hooks and react... | 0 | 2023-05-22T21:40:03 | https://dev.to/lausuarez02/react-lifecycle-22h1 | react, beginners, javascript, programming | ## Introduction

This would be a simple post about React lifecycle with hooks and react lifecycle with classes.

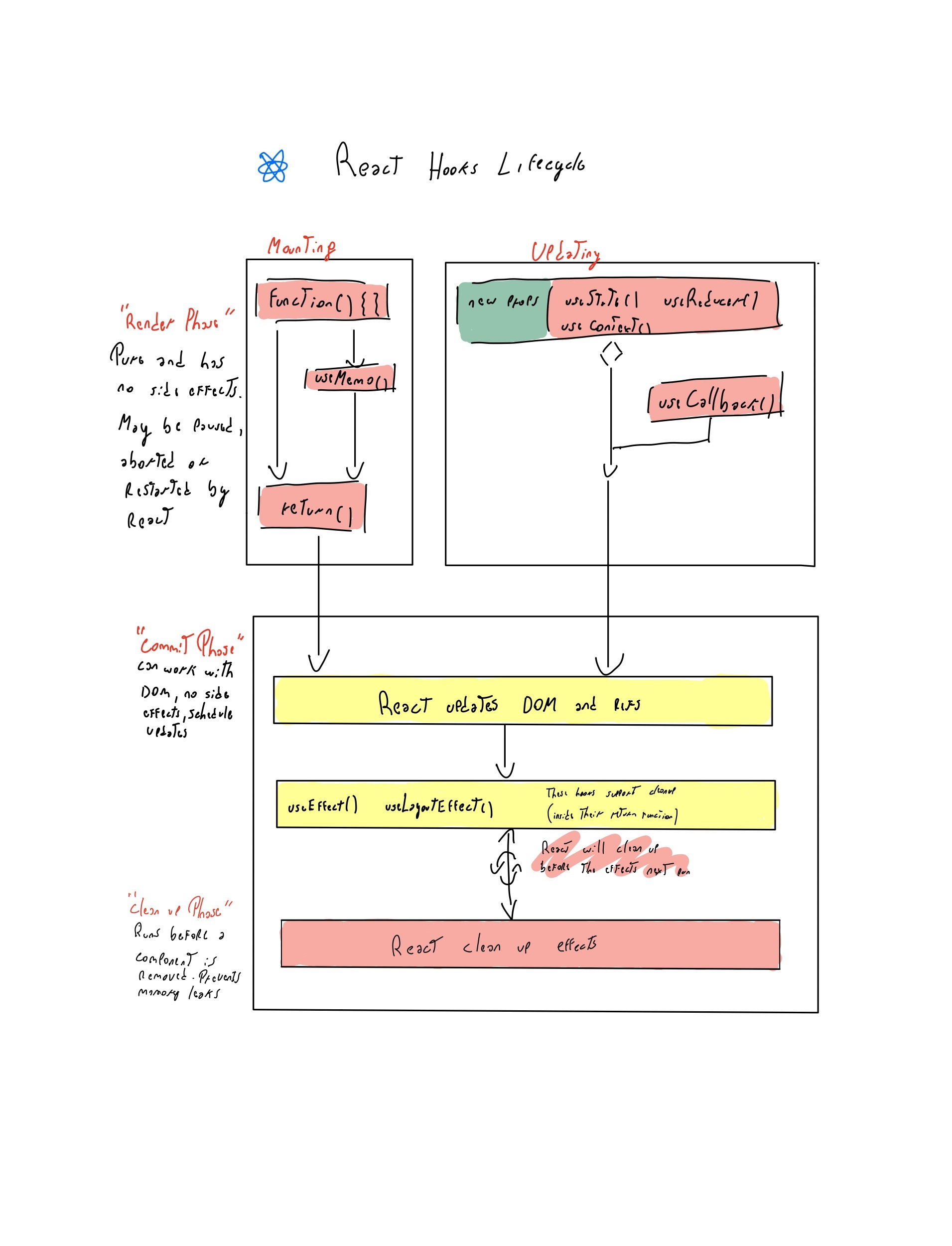

## React lifecycle with hooks.

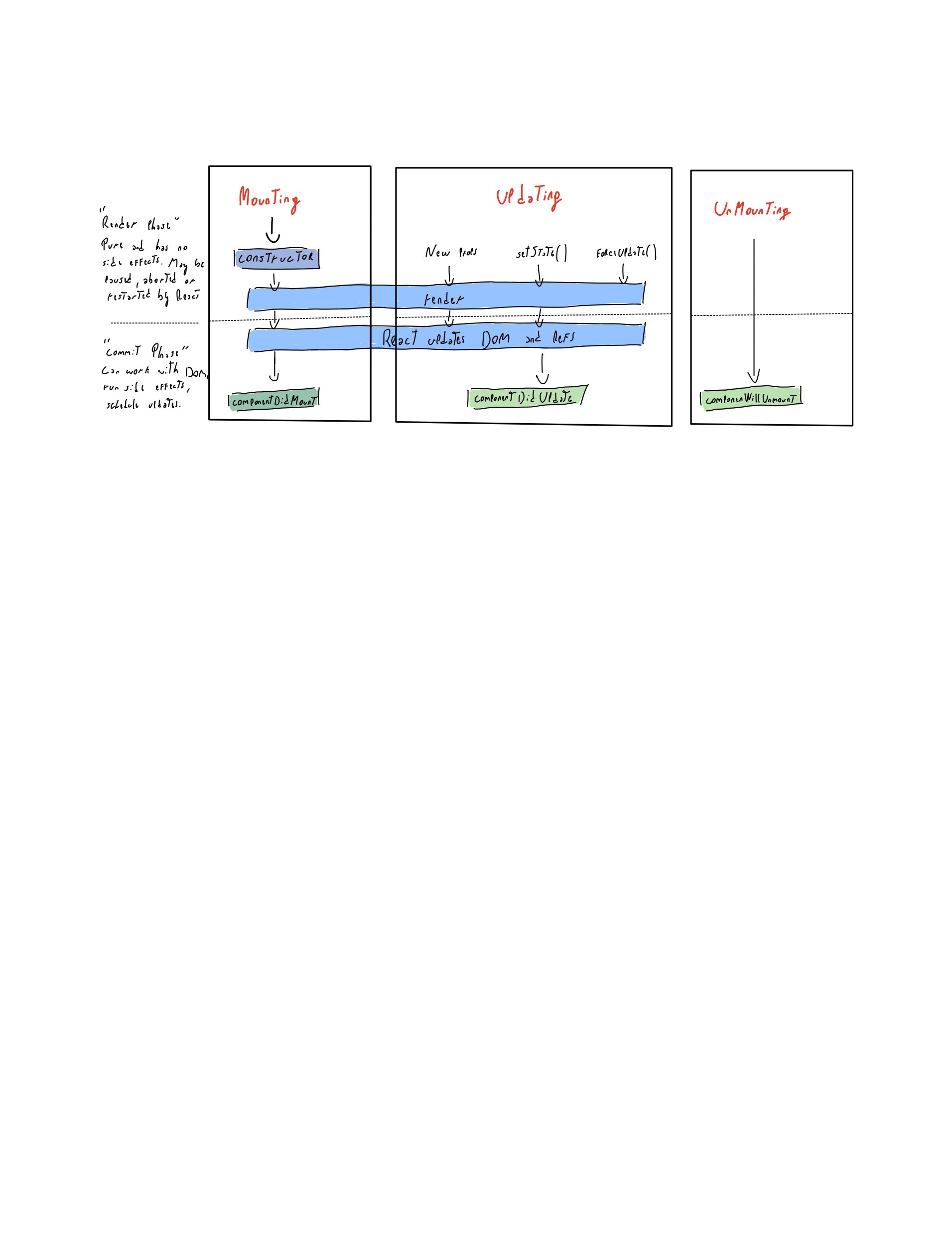

## React Lifecycle with classes

## Difference

I was wondering just like you about unmounting which in the hooks lifecycle but in this case the unmount will be when we do the clean up with for example useEffect, where inside the useEffect doing the return we are doing the unmounting which is called clean up.

## Conclusion

We can do basically the same and in a simpler way with functional components. We just have to know how to do it.

Hope someone found it useFul.

Lautaro

| lausuarez02 |

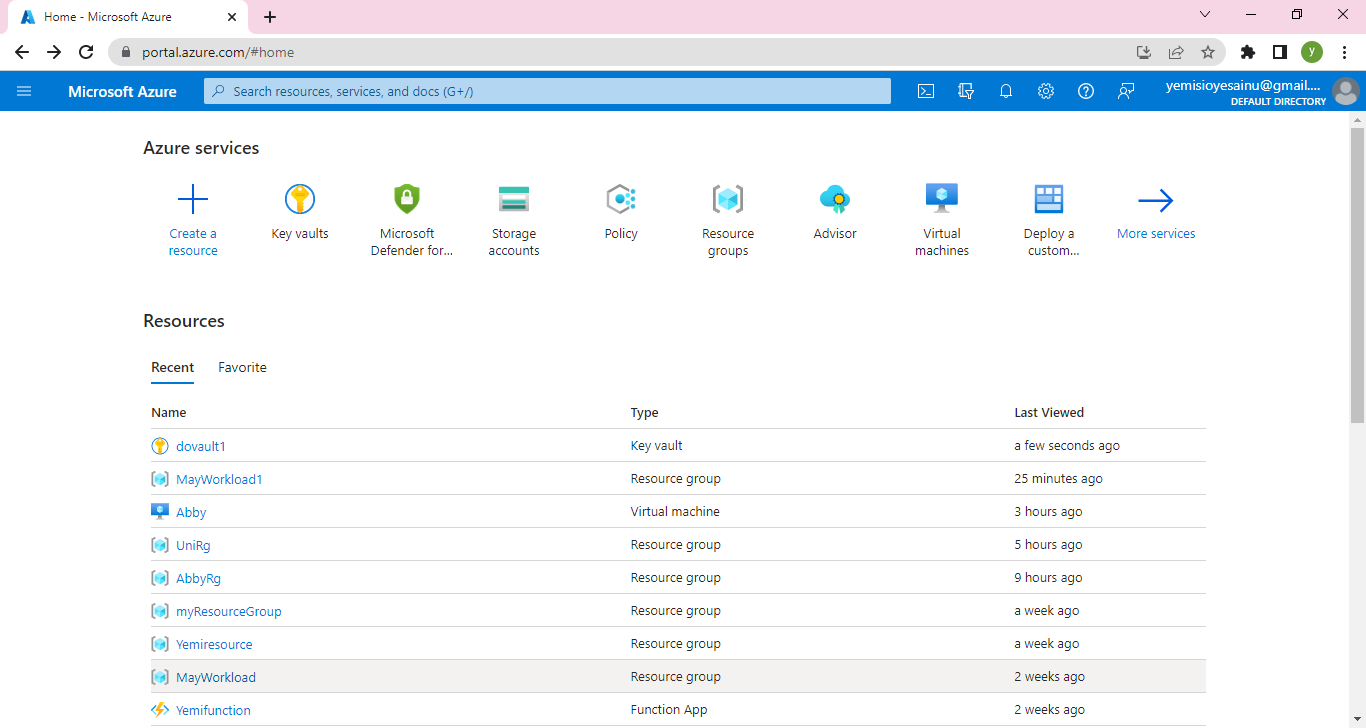

1,475,678 | A Step-by-Step Guide to Linking and Deploying a Node.js Web App to Azure App Services and Connecting it to an SQL Database | Introduction: In today's digital era, deploying web applications to the cloud has become increasingly... | 0 | 2023-05-21T22:31:49 | https://dev.to/chymee/a-step-by-step-guide-to-linking-and-deploying-a-nodejs-web-app-to-azure-app-services-and-connecting-it-to-an-sql-database-49j8 | Introduction:

In today's digital era, deploying web applications to the cloud has become increasingly popular due to its scalability and ease of management. Azure App Services, coupled with Node.js and an SQL database, offers a powerful combination for hosting and running your web applications. In this blog, I will walk you through the process of linking and deploying a Node.js web app to Azure App Services and connecting it to an SQL database.

Prerequisites:

Before we begin, make sure you have the following prerequisites in place:

An Azure account: Sign up for an Azure account at [click](https://azure.microsoft.com).

Node.js and npm: Install Node.js and npm on your local machine. You can download them from [click here](https://nodejs.org).

Azure CLI: Install Azure CLI on your machine. You can find installation instructions at https://docs.microsoft.com/cli/azure/install-azure-cli.

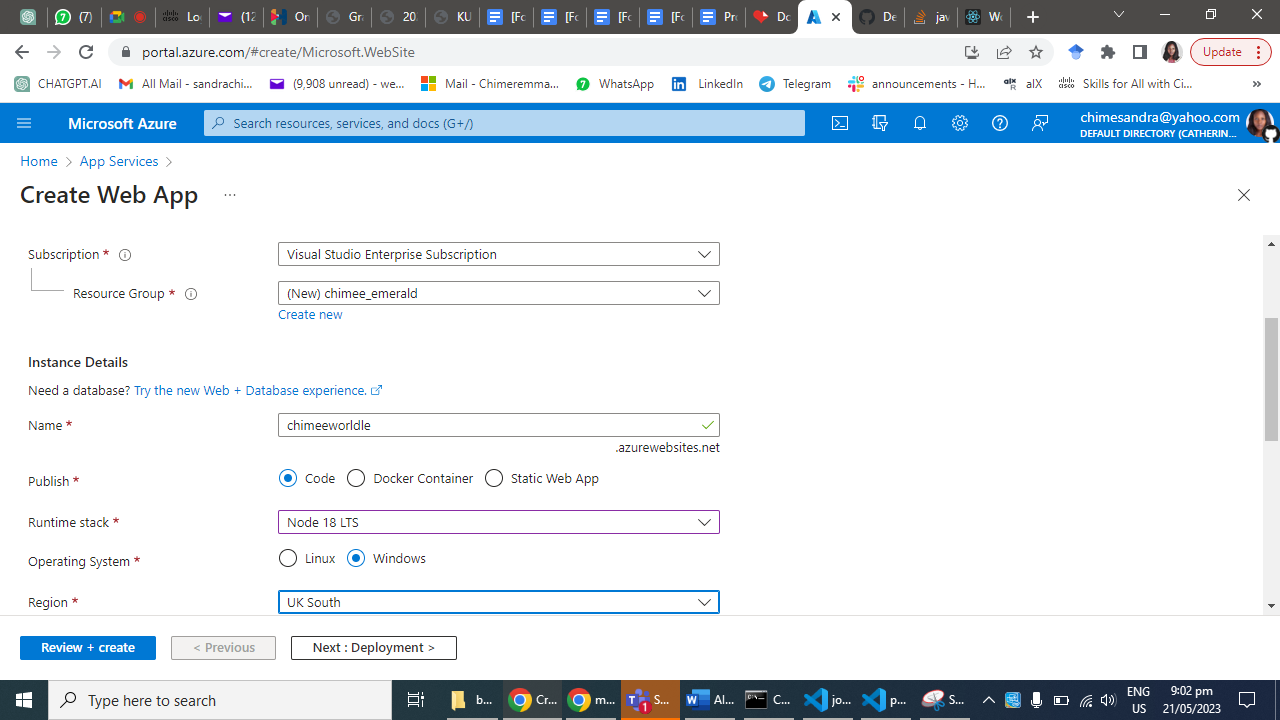

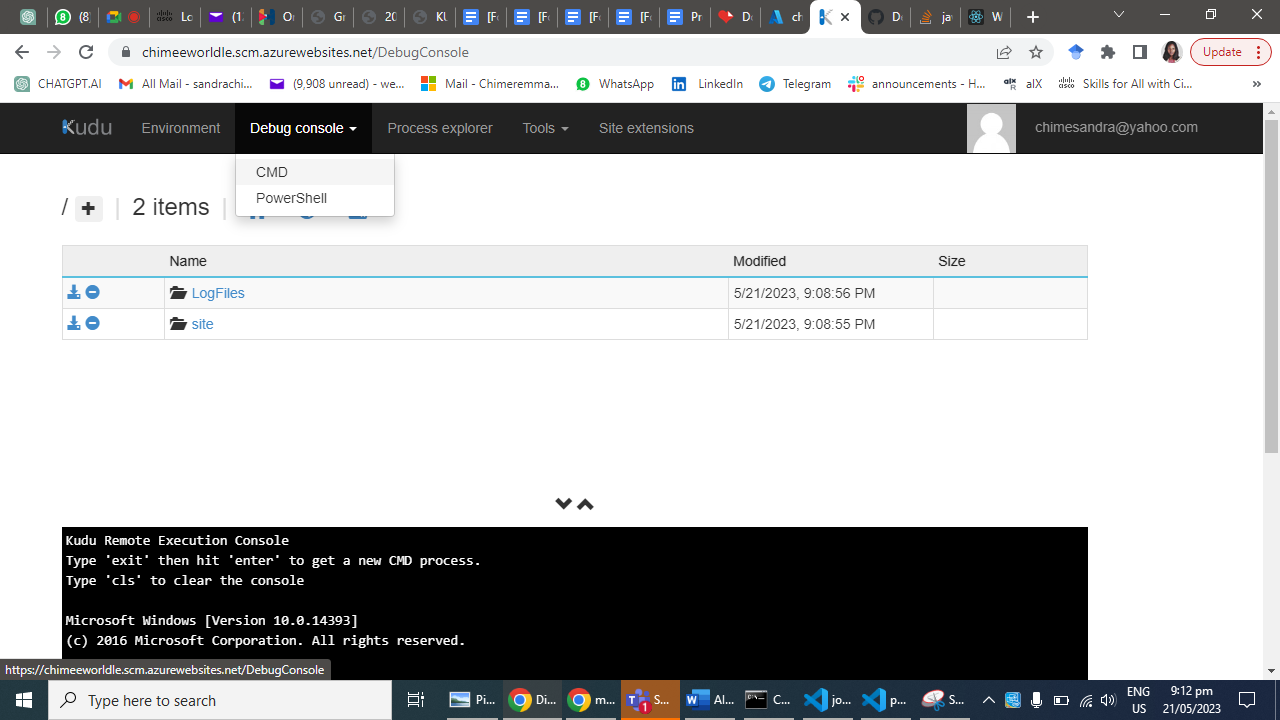

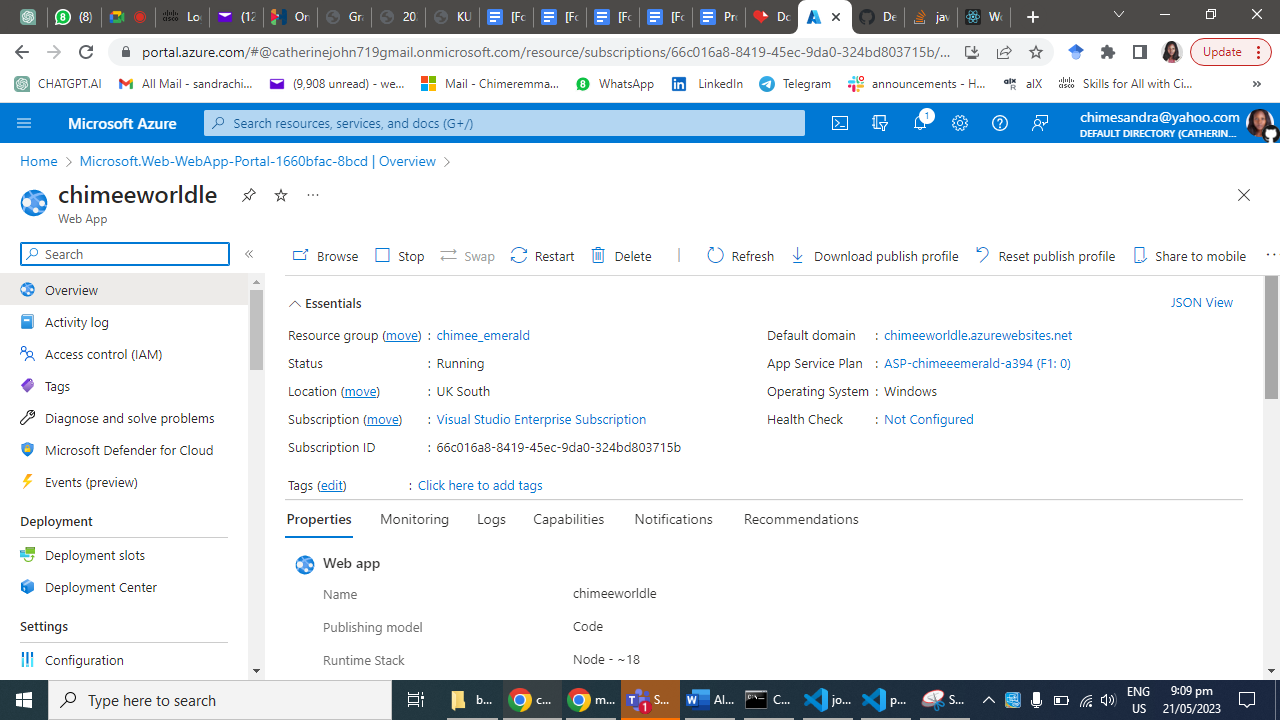

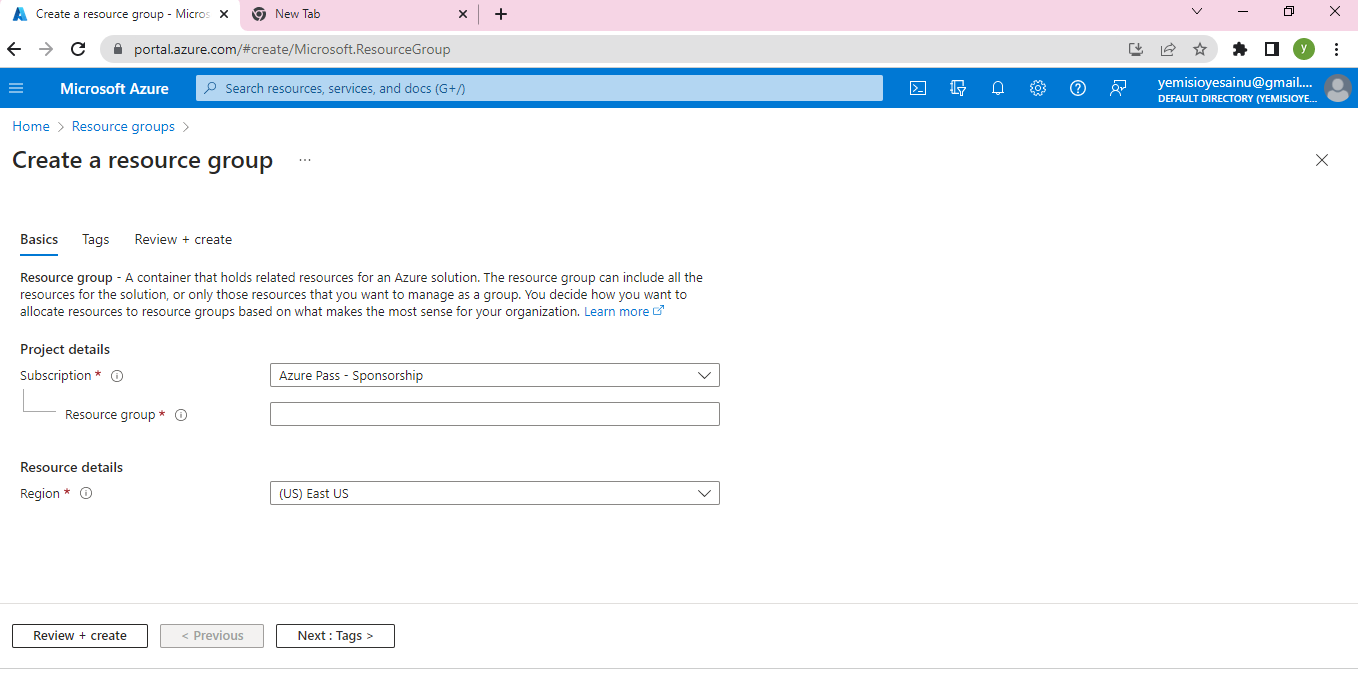

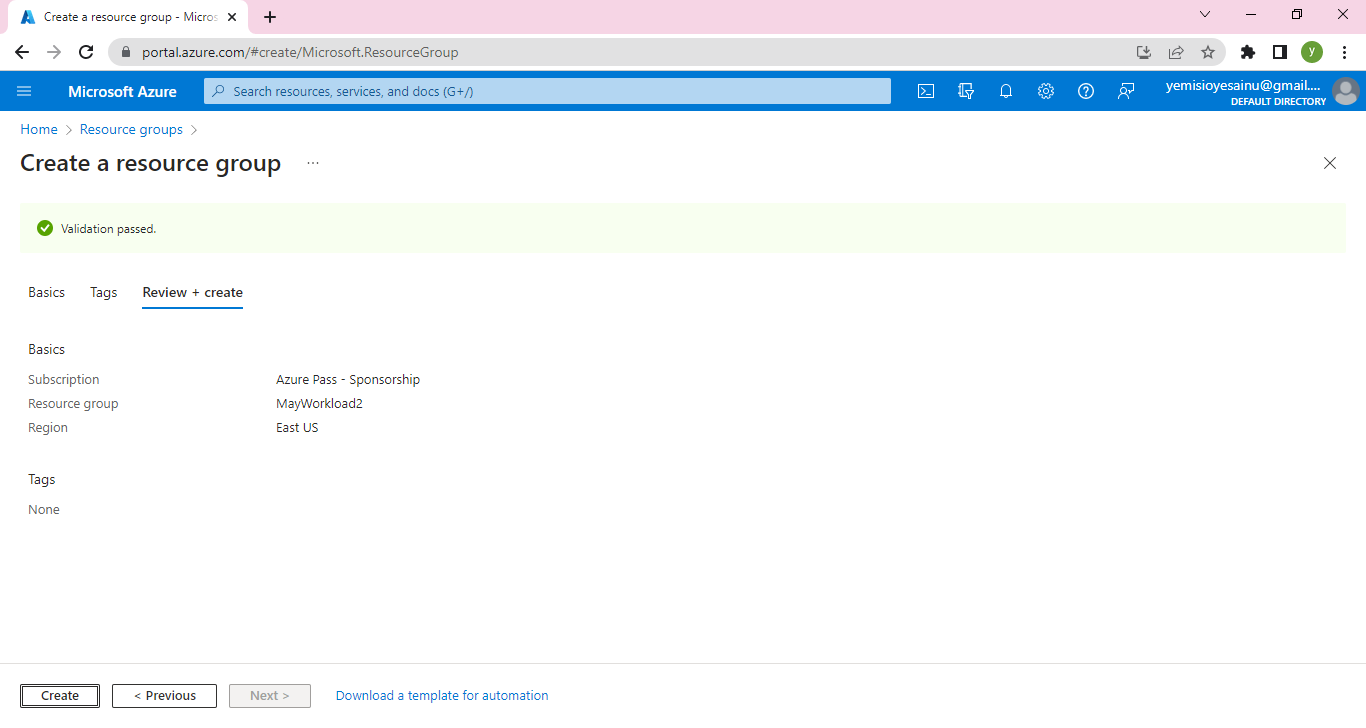

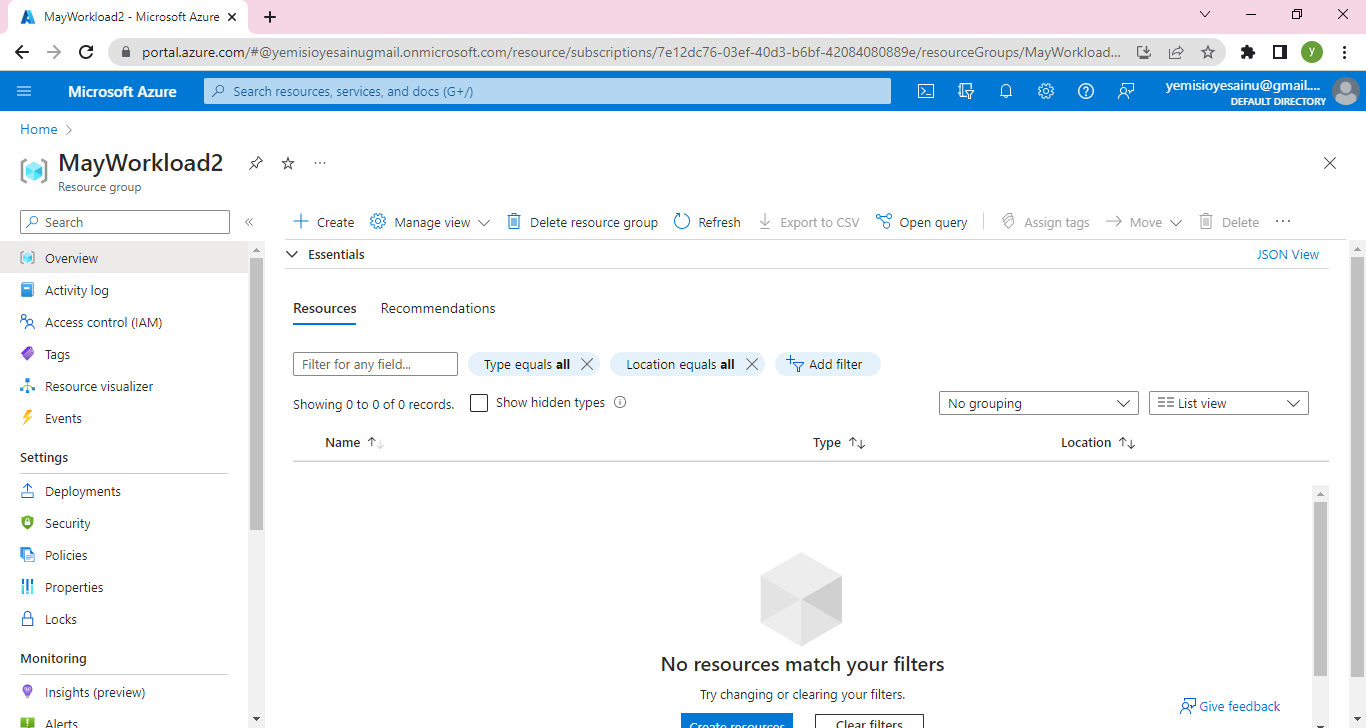

**Step 1: Create an Azure App Service**

Log in to the Azure portal [click here](https://portal.azure.com) using your Azure account credentials.

Click on "Create a resource" and search for "App Service."

Select "Web App" from the search results and click "Create."

Provide a unique name for your app, choose your preferred subscription, resource group, and operating system.

Configure the runtime stack to Node.js and choose the desired version.

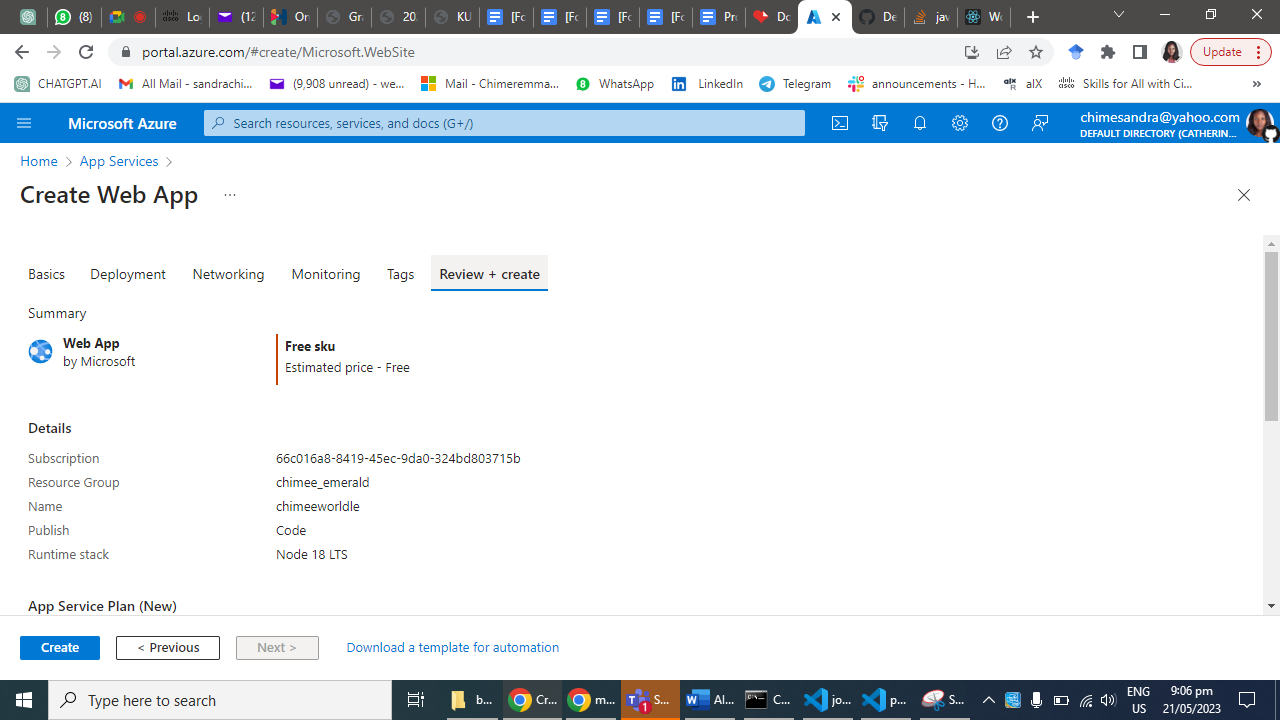

Click "Review + Create" and then "Create" to provision the App Service.

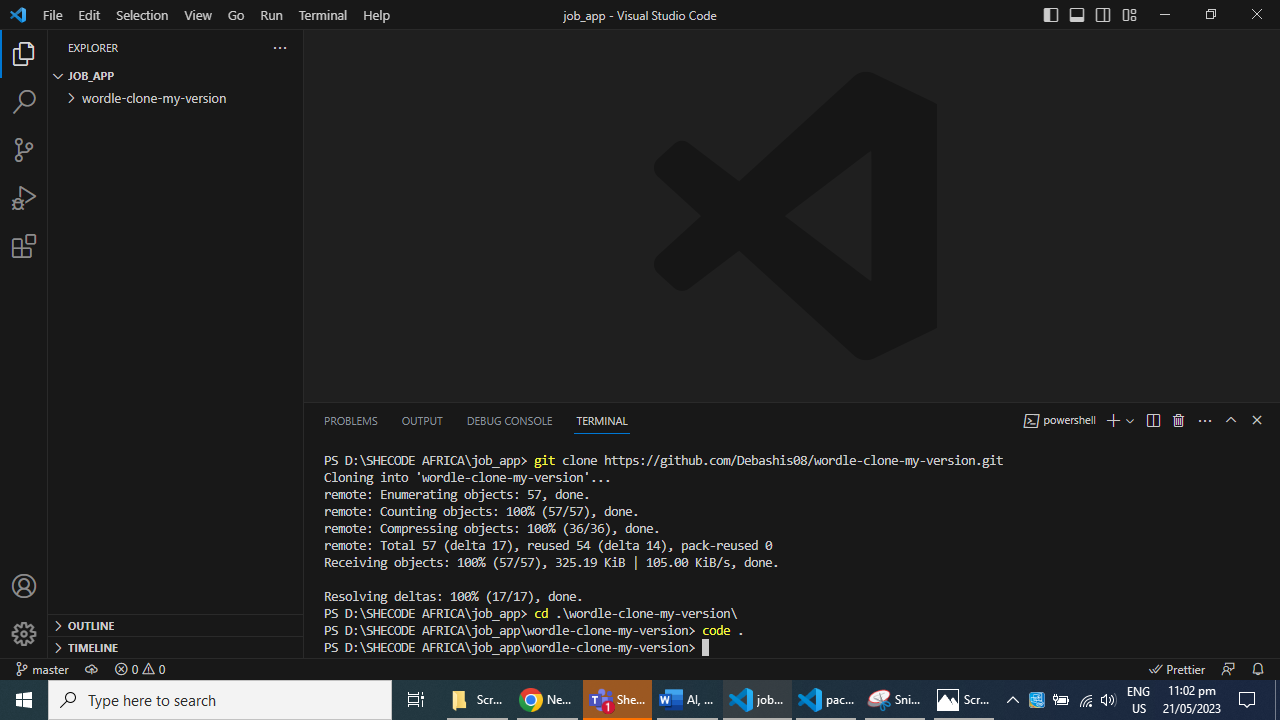

Step 2: Set up your Node.js Web App

Open a terminal or command prompt and navigate to your project's directory.

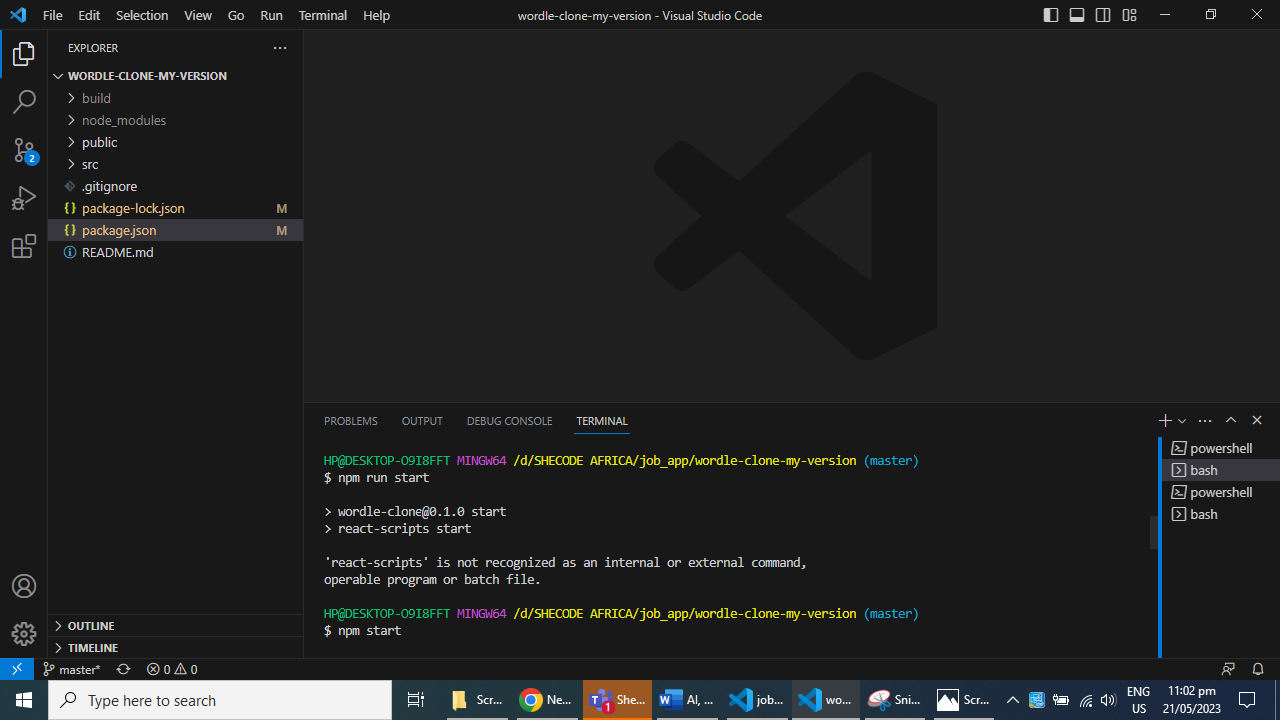

Initialize a new Node.js project by running the command: npm start.

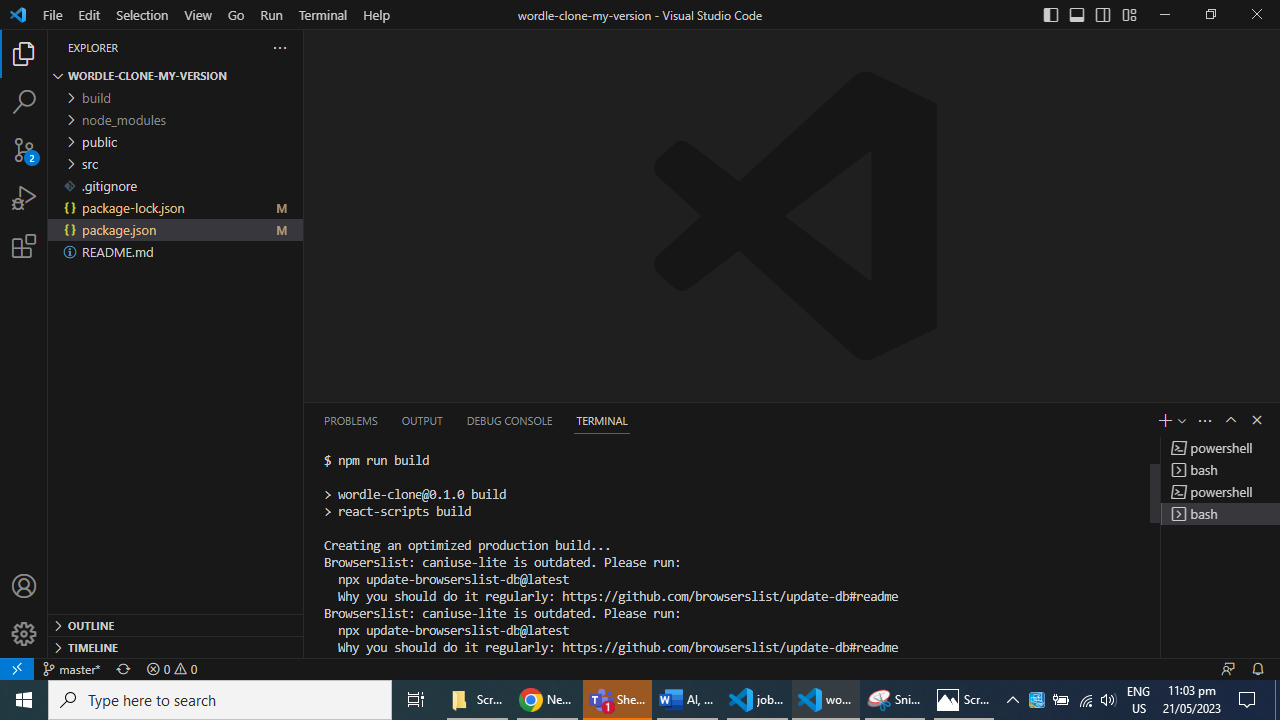

Install the necessary dependencies for your web app using npm run build.

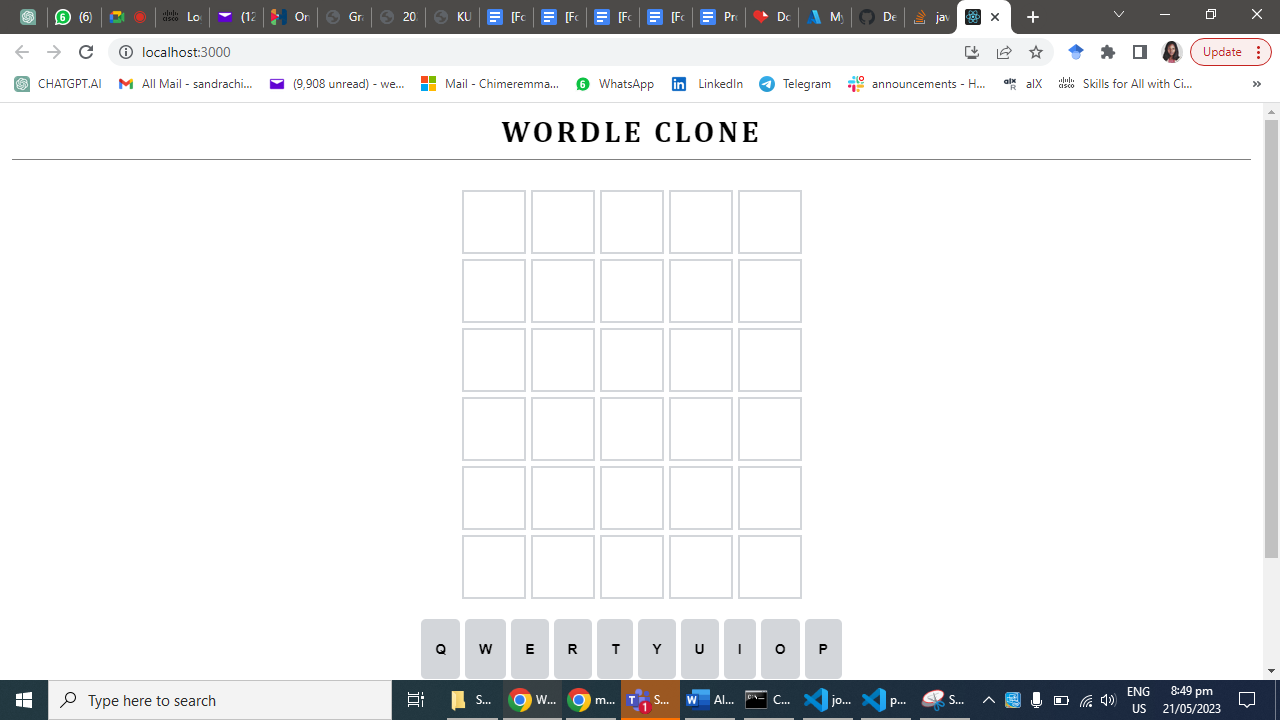

Test your application locally using the command: npm run start. I am using windows, so this will open on local host 3000.

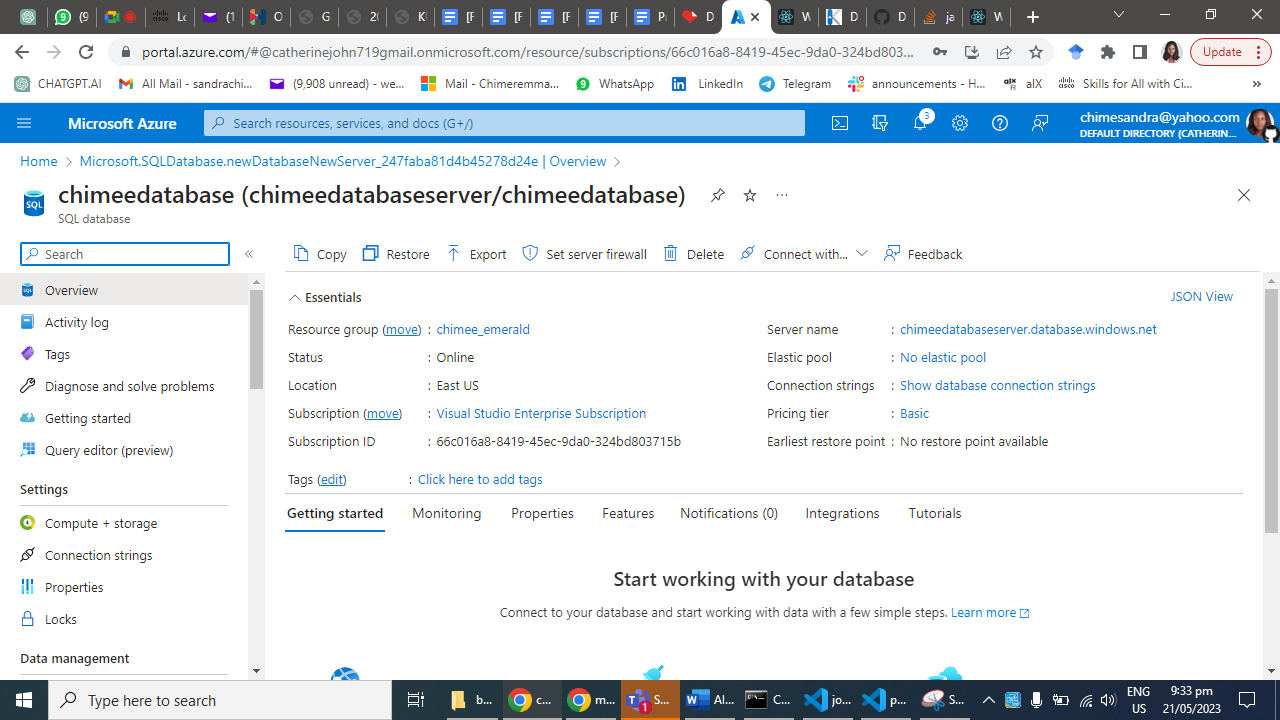

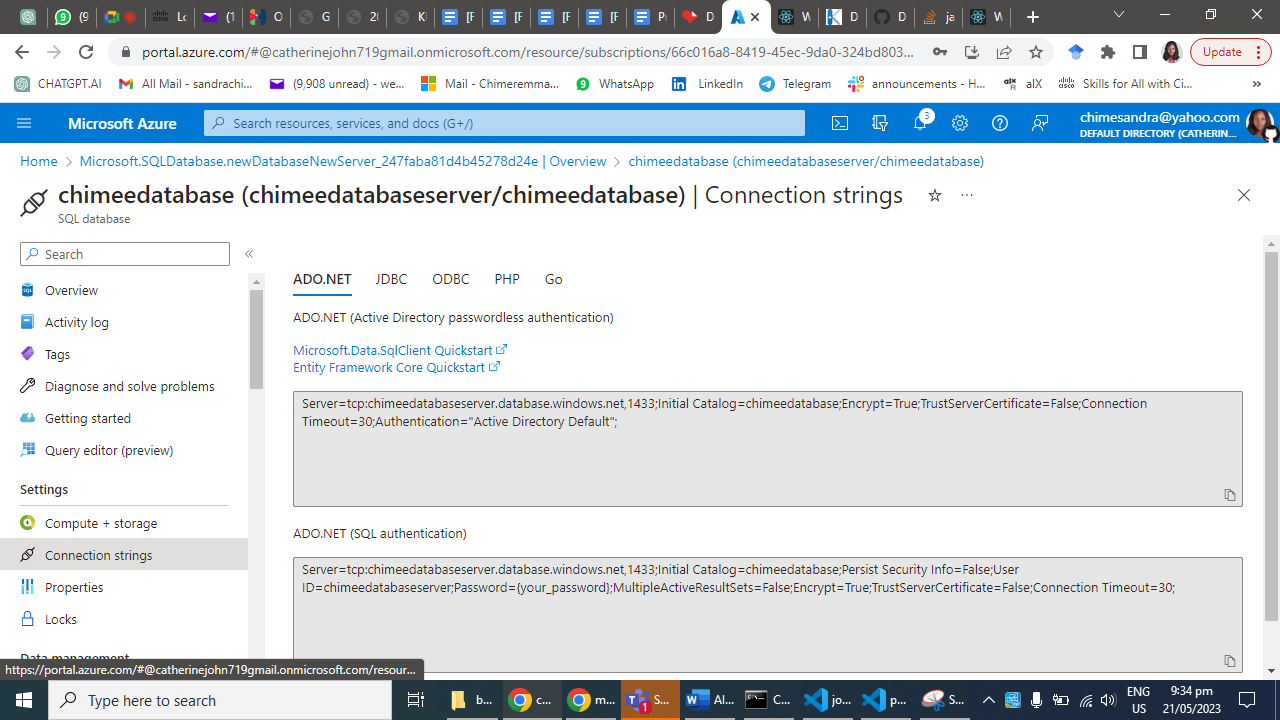

Step 3: Connect to an SQL Database

In the Azure portal, go to your App Service's "Overview" page.

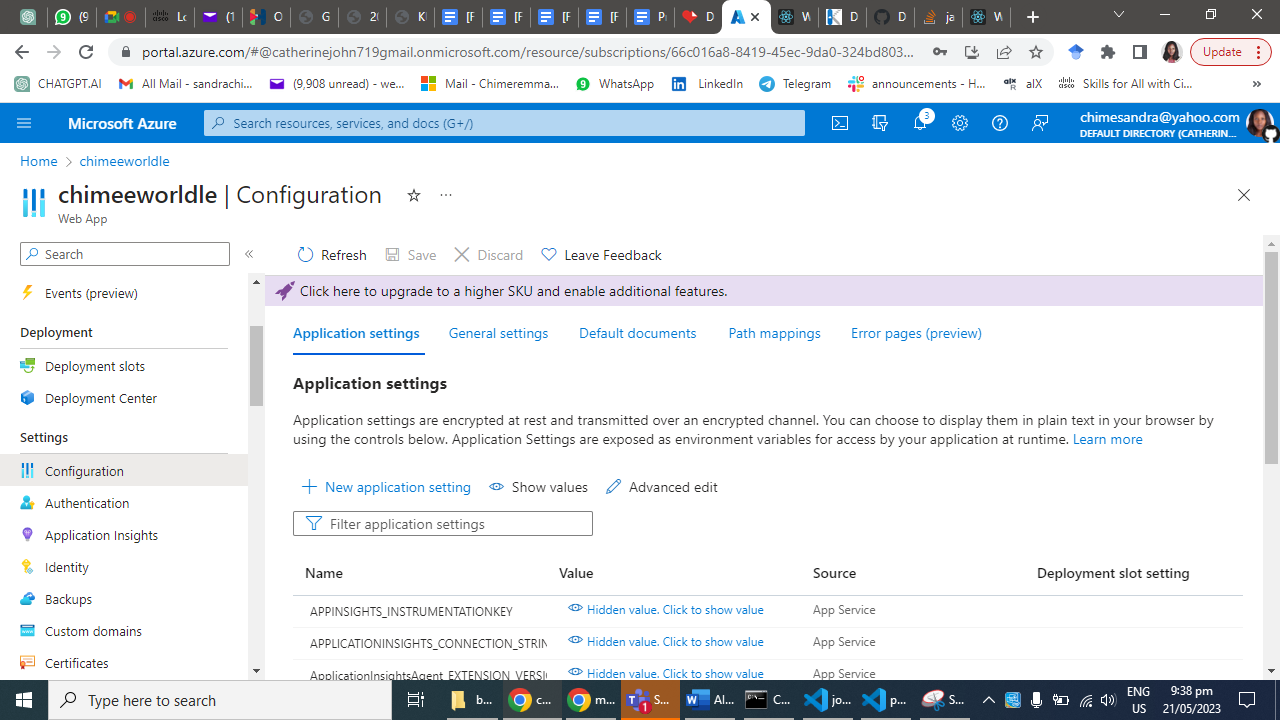

Under "Settings," click on "Configuration."

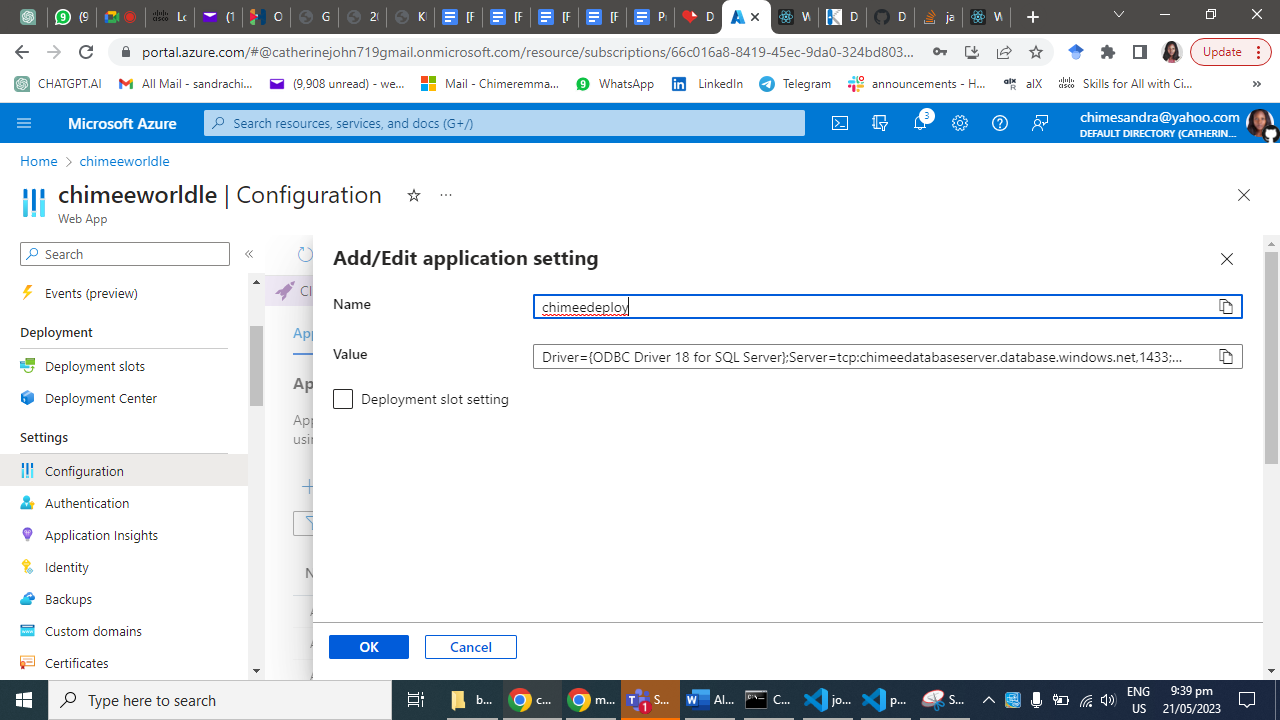

Add a new connection string by clicking on the "New connection string" button.

Enter a name for the connection string and select the SQL database provider.

Provide the necessary details, including the server name, database name, username, and password.

Click "OK" to save the connection string.

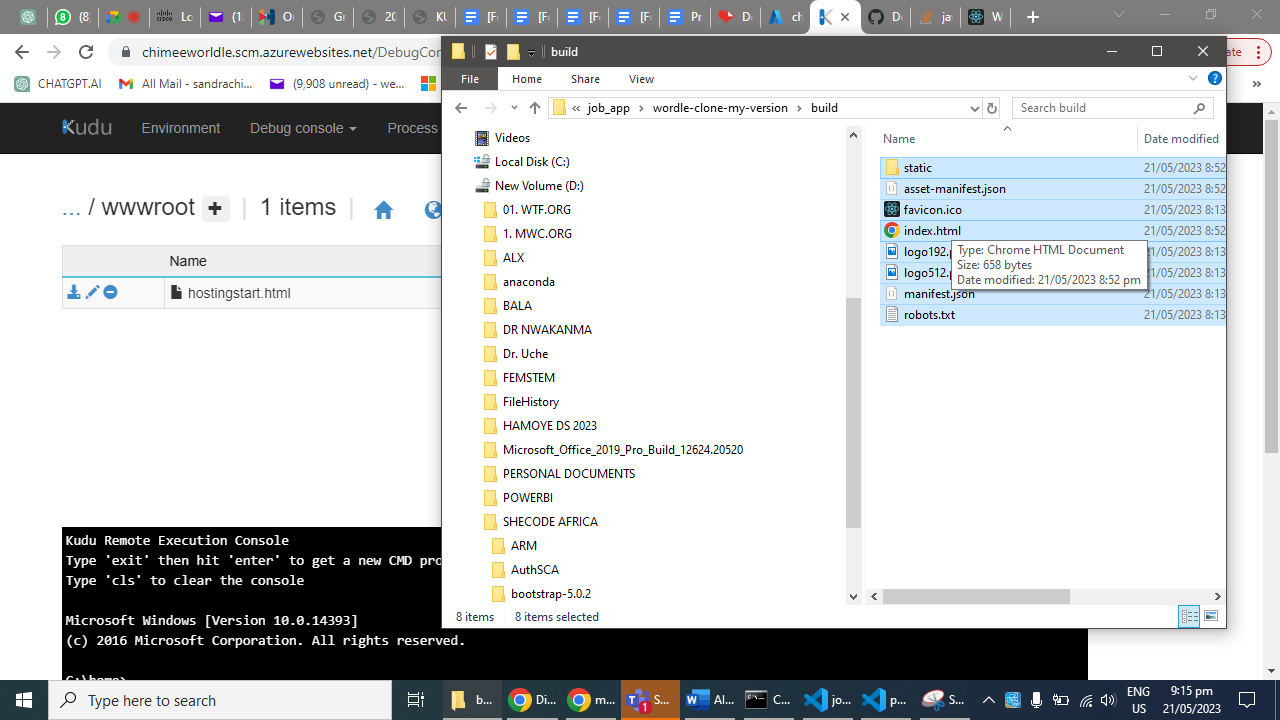

Step 4: Deploy the Node.js Web App to Azure

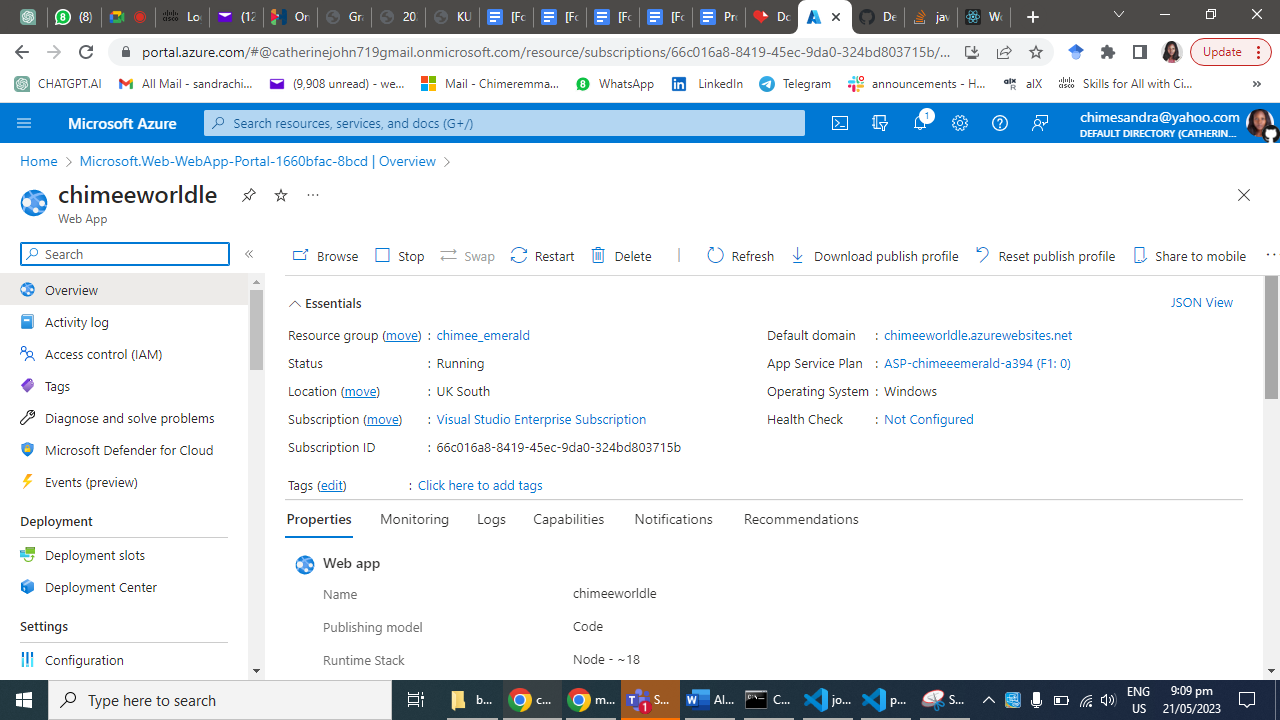

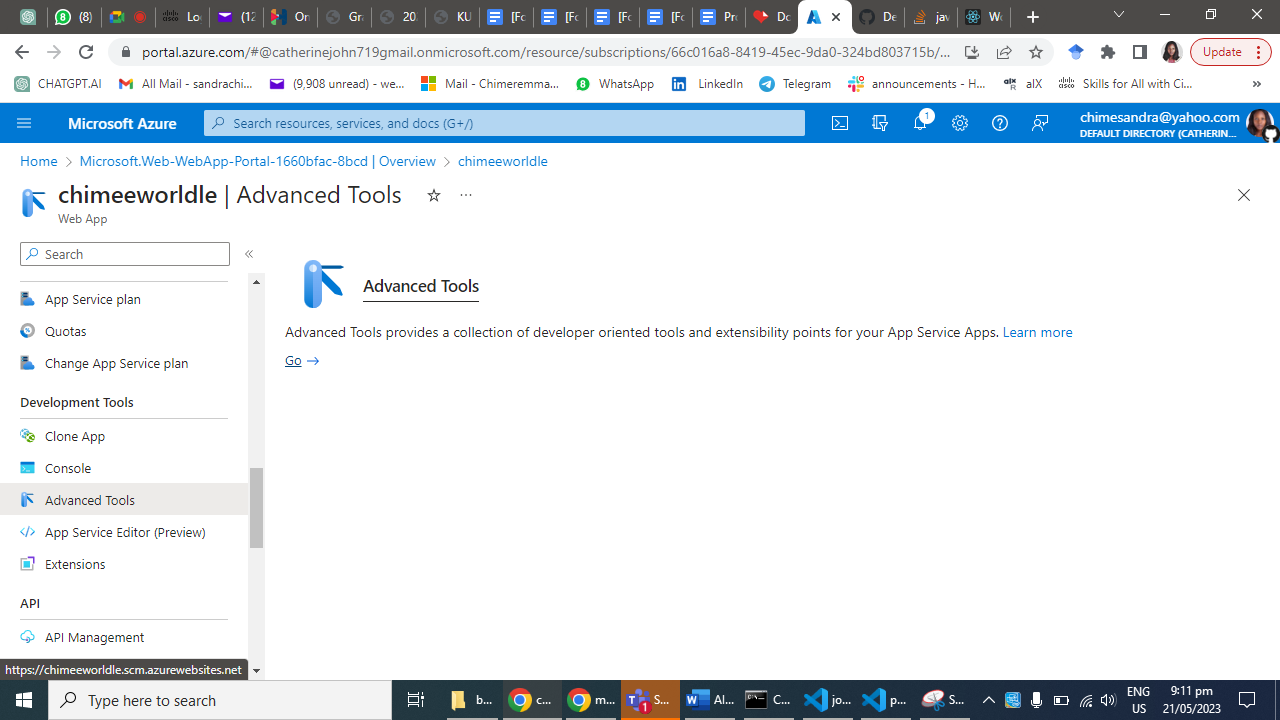

In the Azure portal, navigate to your App Service's "Overview" page.

Under "Deployment," select "Deployment Center."

Choose the deployment method that suits your project (e.g., Local Git, GitHub, Bitbucket, etc.).

Follow the instructions to link your repository and configure the deployment settings.

Once configured, trigger a deployment to deploy your Node.js web app to Azure.

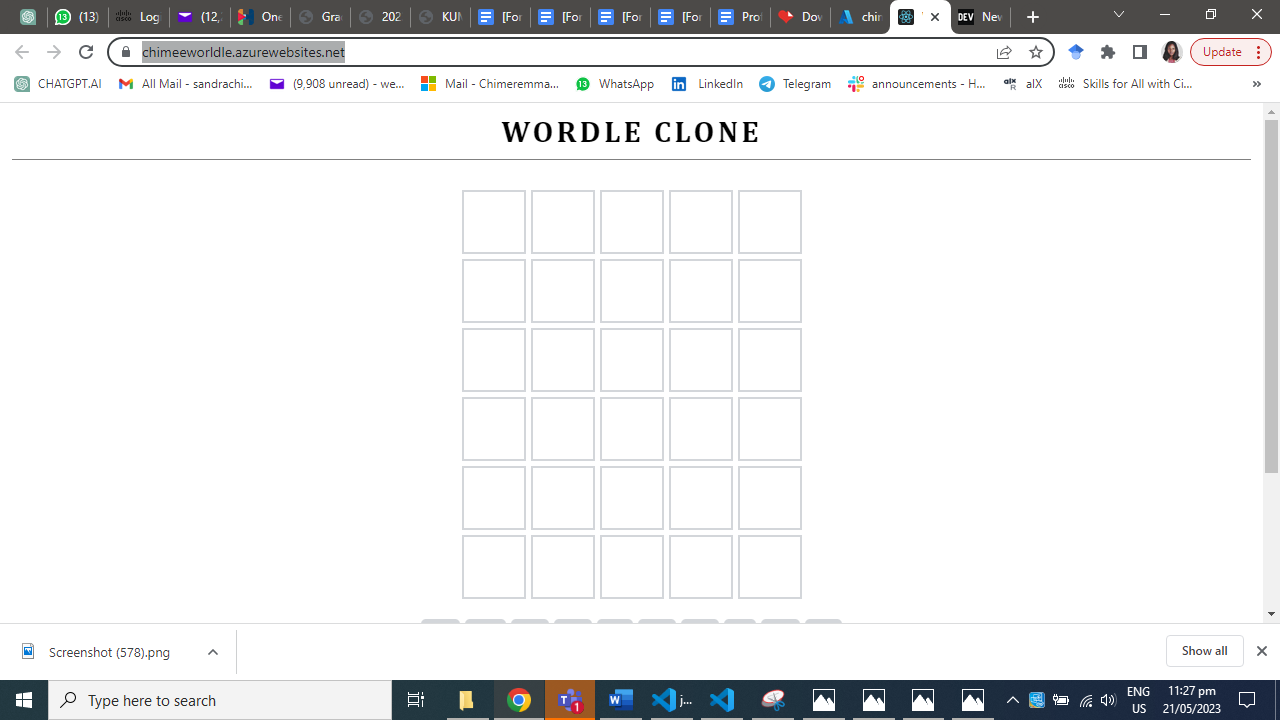

Step 5: Verify and Test

Once the deployment is complete, navigate to your Azure App Service's URL.

Test your web app's functionality and ensure that it connects to the SQL database successfully.

Monitor the application's logs and Azure portal's metrics to ensure everything is running smoothly.

Conclusion:

In Summary, we have explored the process of linking and deploying a Node.js web app to Azure App Services and connecting it to an SQL database. By following these steps, you can take advantage of Azure's robust infrastructure and scalability, allowing you to focus on building and maintaining your application without worrying about the underlying infrastructure. | chymee | |

1,475,853 | How to Install PrivateGPT - Local Document Question Answering with Privacy | How to Install PrivateGPT - Local Document Question Answering with Privacy There's something new in... | 0 | 2023-05-22T03:12:21 | https://dev.to/digital-nomad/how-to-install-privategpt-local-document-question-answering-with-privacy-5fka | ai, openai, chatgpt, tutorial | How to Install PrivateGPT - Local Document Question Answering with Privacy

There's something new in the AI space, in this post, we will walk you through the process of installing and setting up PrivateGPT.

What is it

A powerful tool that allows you to query documents locally without the need for an internet connection. Whether you're a researcher, dev, or just curious about exploring document querying tools, PrivateGPT provides an efficient and secure solution. This tutorial accompanies a Youtube video, where you can find a step-by-step demonstration of the installation process!

Prerequisites:

- Python 3.10 or later installed on your system or virtual env

- Basic knowledge of using the command line Interface (CLI/Terminal)

- Git installed

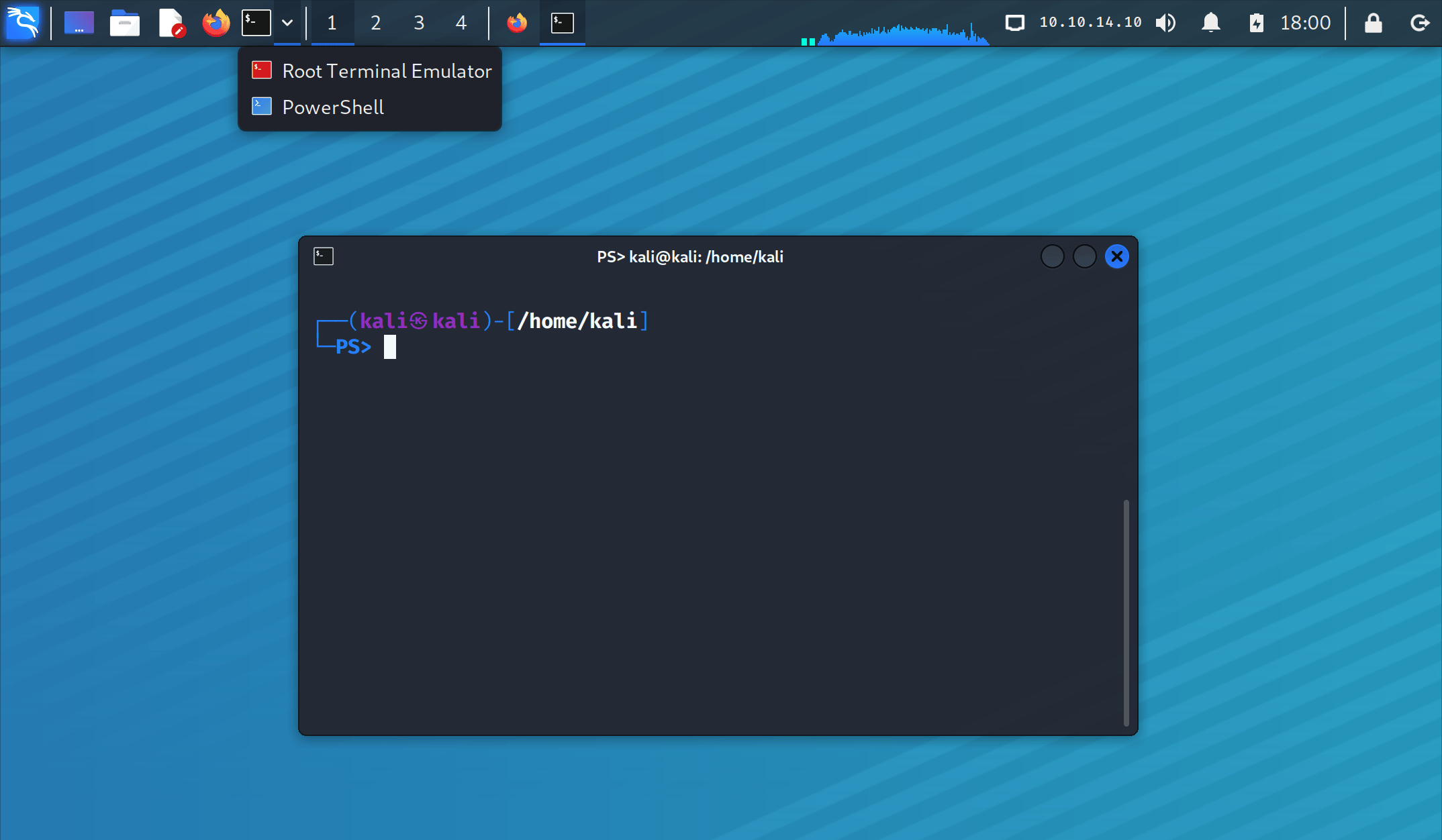

You can create a folder on your desktop. In the screenshot below you can see I created a folder called 'blog_projects'. Open the command line from that folder or navigate to that folder using the terminal/ Command Line. Follow the steps below to create a virtual environment.

**First, let's create a virtual environment.**

Create a virtual environment:

Open your terminal and navigate to the desired directory.

Run the following command to create a virtual environment (replace myenv with your preferred name):

python3 -m venv myenv

The name of your virtual environment will be 'myenv'

Activate the virtual environment:

On macOS and Linux, use the following command:

source myenv/bin/activate

On Windows, use the following command:

myenv\Scripts\activate

Run the git clone command to clone the repository:

git clone https://github.com/imartinez/privateGPT.git

By creating and activating the virtual environment before cloning the repository, we ensure that the project dependencies will be installed and managed within this environment. This helps maintain a clean and isolated development environment specific to this project.

After cloning the repository, you can proceed to install the project dependencies and start working on the project within the activated virtual environment.

Then copy the code repo from Github, and go into your directory or folder where you want your project to live. Open the terminal or navigate to your folder from the command line.

Once everything loads, you can run the install requirements command to install the needed dependencies.

Navigate to the directory where you want to install PrivateGPT.

CD <FOLDER NAME>

Run the following command to install the required dependencies:

pip install -r requirements.txt

Next, download the LLM model and place it in a directory of your choice. The default model is 'ggml-gpt4all-j-v1.3-groovy.bin,' but if you prefer a different GPT4All-J compatible model, you can download it and reference it in your .env file.

Rename the 'example.env' file to '.env' and edit the variables appropriately.

Set the 'MODEL_TYPE' variable to either 'LlamaCpp' or 'GPT4All,' depending on the model you're using.

Set the 'PERSIST_DIRECTORY' variable to the folder where you want your vector store to be stored.

Set the 'MODEL_PATH' variable to the path of your GPT4All or LlamaCpp supported LLM model.

Set the 'MODEL_N_CTX' variable to the maximum token limit for the LLM model.

Set the 'EMBEDDINGS_MODEL_NAME' variable to the SentenceTransformers embeddings model name (refer to https://www.sbert.net/docs/pretrained_models.html).

Make sure you create a models folder in your project to place the model you downloaded.

PrivateGPT comes with a sample dataset that uses a 'state of the union transcript' as an example. However, you can also ingest your own dataset. Let me show you how."

Put all your files into the 'source_documents' directory.

Make sure your files have one of the supported extensions: CSV, Word Document (docx, doc), EverNote (enex), Email (eml), EPub (epub), HTML File (html), Markdown (md), Outlook Message (msg), Open Document Text (odt), Portable Document Format (PDF), PowerPoint Document (pptx, ppt), Text file (txt).

Run the following command to ingest all the data:

python ingest.py

Perfect! The data ingestion process is complete. Now, let's move on to the next step!

------- ------ ------- ------- ------- --------------------if you have this error: cannot import name 'DEFAULT_CIPHERS' from 'urllib3.util.ssl_' use this command: python -m pip install requests "urllib3<2"

[ Key thing to mention, IF YOU ADD NEW DOCUMENTS TO YOUR SOURCE_DOCS you need to rerun ‘python ingest.py’

---------------------------------------------------------------

Asking Questions to Your Documents Host: Now comes the exciting part—asking questions to your documents using PrivateGPT. Let me show you how it's done.

Open your terminal or command prompt.

Navigate to the directory where you installed PrivateGPT.

[ project directory 'privateGPT' , if you type ls in your CLI you will see the READ.ME file, among a few files.]

Run the following command:

python privateGPT.py

Wait for the script to prompt you for input.

When prompted, enter your question!

Tricks and tips:

Use python privategpt.py -s [ to remove the sources from your output. So instead of displaying the answer and the source it will only display the source ]

On line 33, at the end of the command where you see’ verbose=false, ‘ enter ‘n threads=16’ which will use more power to generate text at a faster rate!

Pros & Cons

Great for anyone who wants to understand complex documents on their local computer.

Great for private data you don't want to leak out externally.

Particularly great for students, people new to an industry, anyone learning about taxes, or anyone learning anything complicated that they need help understanding.

The wait time can be 30-50 seconds or maybe even longer because you’re running it on your local computer.

END OF BLOG - how to install privateGPT & query documents locally and private

LETS CONNECT!!

Follow me on: Twitter, [Linkedin,](https://www.linkedin.com/in/olu-a/) Medium and AIapplicationsblog.com

Prepare for your next job application with — [Cover Letter Generator!](http://coverletterbuilder.up.railway.app/)

| digital-nomad |

1,475,911 | The risk of Open Source vs. proprietary 3rd party libraries | Using 3rd party libraries is a risky business. Both if they are proprietary and if they are open source. | 20,858 | 2023-05-22T04:45:57 | https://dev.to/szabgab/the-risk-of-open-source-vs-proprietary-3rd-party-libraries-2dfb | opensource, programming, discuss, business | ---

title: The risk of Open Source vs. proprietary 3rd party libraries

published: true

description: Using 3rd party libraries is a risky business. Both if they are proprietary and if they are open source.

tags: opensource, programming, discuss, business

series: opensource

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2023-05-21 07:38 +0000

---

Using 3rd party libraries always have risks. If you buy them from a company you might have the fuzzy feeling that you will be supported no matter what. However, at the end if the supplier company goes out of business, decides to discontinue the project, or just does not have the bandwidth to provide prompt service you are out of luck.

If this is a security issue and you don't get prompt fix for it, you are at a risk. If the problem is "just" a serious flaw in the software, you don't have any control over how fast you will get a fix.

You might have legal options, but do you as a CTO, a tech lead, or a developer want to wait till the legal options bear fruit? Probably not.

Using Open Source will not give you that fuzzy feeling, but it will give you controls. You can fix any problem yourself or you can hire someone to do it for you. Open Source has its own risks that I'll discuss in another post, but here are a few.

## Legal

* Does the package have an open source license compatible with the way you will want to use it?

* Does the package contain code that comes from other source that might not be open source or might have different licenses?

## Technical

* Do you really have access to the latest source code?

* Is it written in a language that you are familiar with?

* Does it have a good test coverage? Do you have access to it? Do you know how to run it?

* How many people maintain the project? How many people are familiar with the code-base?

* Does the project have an up-to-date public VCS?

* When was the last change recorded?

* How many open issues are there?

* How many open Pull-Request are there?

* How fast are PRs and Issues addressed?

* What is the complexity of the code?

## Do open source projects have more risk factors?

Seeing the above list one might think that Open Source have more risk factors, but in reality proprietary packages have similar risk-factors, but they are hidden from you and in most cases you cannot alleviate them.

## What do you think?

* What other risk factors do you see?

| szabgab |

1,476,518 | Fakta Menarik Honda PCX: Skutik Modern Terbaik di Indonesia | Apabila Anda mencari skutik modern yang andal dan elegan, maka Honda PCX bisa menjadi pilihan yang... | 0 | 2023-05-22T15:49:14 | https://dev.to/agnesolivo-leavime/fakta-menarik-honda-pcx-skutik-modern-terbaik-di-indonesia-25o2 | tutorial, python, javascript, devops |

Apabila Anda mencari skutik modern yang andal dan elegan, maka [Honda PCX](https://www.leavime.com) bisa menjadi pilihan yang tepat. Motor ini telah terbukti menjadi salah satu skutik terbaik di pasar Indonesia, dengan desain yang menawan dan teknologi canggih yang akan membuat pengalaman berkendara Anda lebih menyenangkan.

**Pengenalan Honda PCX

**

Honda PCX adalah skutik premium yang pertama kali diperkenalkan di Jepang pada tahun 2009. Kemudian, pada tahun 2010, Honda memperkenalkan motor ini di Indonesia dengan dua varian mesin, yaitu 125cc dan 150cc. Dalam beberapa tahun terakhir, Honda PCX semakin populer di Indonesia dan menjadi salah satu motor terlaris di pasar skutik premium.

Berikut ini adalah beberapa fitur utama Honda PCX yang membuatnya menjadi pilihan yang populer di kalangan konsumen Indonesia:

**Desain modern dan elegan**

- Mesin yang bertenaga dan ramah lingkungan

- Fitur keamanan dan kenyamanan yang canggih

- Performa yang andal dan mudah dikendalikan

**[Keunggulan Honda PCX](https://www.leavime.com)

**

Honda PCX menawarkan banyak keunggulan yang membuatnya menjadi salah satu skutik premium terbaik di Indonesia. Berikut ini adalah beberapa keunggulan yang dapat Anda nikmati jika membeli Honda PCX:

**Desain Elegan dan Modern**

Honda PCX menawarkan desain yang elegan dan modern, dengan balutan bodi yang ramping dan aerodinamis. Motor ini memiliki lampu depan LED yang tajam dan futuristik, serta lampu belakang yang dilengkapi dengan fitur stop lamp dan sein LED. Selain itu, Honda PCX juga dilengkapi dengan kunci kontak tanpa kunci yang sangat praktis dan mudah digunakan.

**Mesin Bertenaga dan Ramah Lingkungan**

Honda PCX memiliki mesin yang bertenaga dan efisien, dengan dua pilihan kapasitas mesin yaitu 125cc dan 150cc. Mesin ini dilengkapi dengan teknologi PGM-FI yang canggih, yang memastikan pembakaran bahan bakar yang lebih efisien dan ramah lingkungan. Selain itu, Honda PCX juga dilengkapi dengan transmisi otomatis yang sangat mudah digunakan.

**Fitur Keamanan dan Kenyamanan Canggih**

Honda PCX menawarkan banyak fitur keamanan dan kenyamanan yang canggih, seperti rem cakram dengan sistem ABS, suspensi depan teleskopik, dan suspensi belakang ganda yang membuat pengendara merasa nyaman saat berkendara. Selain itu, motor ini juga dilengkapi dengan fitur parkir otomatis dan kunci pengaman ganda untuk menjaga keamanan motor Anda.

**Performa Andal dan Mudah Dikendalikan**

Honda PCX memiliki performa yang andal dan mudah dikendalikan, dengan akselerasi yang responsif dan tenaga yang kuat. Motor ini juga dilengkapi dengan sistem pengereman yang responsif dan mudah dikendalikan, sehingga membuat pengendara merasa lebih aman dan percaya diri saat berkendara.

**Harga Terjangkau**

Meskipun Honda PCX memiliki banyak fitur dan teknologi canggih, motor ini tetap memiliki harga yang terjangkau untuk kelasnya. Dengan harga yang kompetitif, Honda PCX bisa menjadi alternatif yang menarik bagi konsumen yang mencari skutik premium dengan kualitas yang baik.

**FAQ Tentang Honda PCX**

Berikut ini adalah beberapa pertanyaan umum tentang Honda PCX beserta jawabannya:

- Apa perbedaan antara Honda PCX 125cc dan 150cc?

- Perbedaan utama antara Honda PCX 125cc dan 150cc adalah kapasitas mesinnya. Mesin Honda PCX 150cc lebih besar dan bertenaga daripada mesin 125cc.

- Apakah Honda PCX dilengkapi dengan teknologi PGM-FI?

- Ya, Honda PCX dilengkapi dengan teknologi PGM-FI yang canggih untuk pembakaran bahan bakar yang lebih efisien dan ramah lingkungan.

- Apakah Honda PCX memiliki sistem pengereman ABS?

- Ya, Honda PCX dilengkapi dengan sistem pengereman ABS yang canggih untuk meningkatkan keamanan pengendara.

- Apakah Honda PCX cocok untuk pengendara pemula?

- Ya, Honda PCX sangat cocok untuk pengendara pemula karena memiliki performa yang mudah dikendalikan dan fitur keamanan yang canggih.

- Berapa harga Honda PCX di Indonesia?

- Harga Honda PCX di Indonesia bervariasi tergantung pada varian dan spesifikasi motor. Namun, secara umum harga Honda PCX di Indonesia berkisar antara Rp 30 juta hingga Rp 40 juta.

- Apakah Honda PCX memiliki garansi?

- Ya, Honda PCX dilengkapi dengan garansi resmi dari pabrik untuk menjamin kualitas dan keandalan motor.

**Kesimpulan**

Honda PCX adalah skutik premium yang menawarkan banyak fitur dan teknologi canggih, serta desain yang elegan dan modern. Motor ini cocok untuk pengendara pemula maupun berpengalaman, dan memiliki performa yang andal dan mudah dikendalikan. Dengan harga yang terjangkau dan garansi resmi dari pabrik, Honda PCX bisa menjadi alternatif yang menarik bagi konsumen yang mencari skutik premium dengan kualitas yang baik.

kunjungi web berikut :

• [Leavime](https://www.leavime.com)

• [Money Info](https://moneyinfo.site)

• [Glass Styles](https://www.glassstyles.eu.org)

• [Pic Wallpapper](https://www.picwallpapper.eu.org)

• [News Goods Tee](https://newsgoodstee.com)

• [KUKOH](https://www.kukoh.com)

• [Bosz Desa](https://www.boszdesa.com)

• [Creative Maju](https://www.creativemaju.com)

• [Luincah](https://www.luincah.com)

• [Koplexs Studio](https://koplexsstudio.com)

• [Rita Movie](https://ritamovie.xyz)

• [Countena](https://www.countena.com)

Please support me. Thank You.

| agnesolivo-leavime |

1,475,963 | Surf the Web with Lightning Speed | Fast and reliable internet is no longer a luxury, but a necessity in today's digital age. With a... | 0 | 2023-05-22T06:59:46 | https://dev.to/oliver2232/surf-the-web-with-lightning-speed-d7h | Fast and reliable internet is no longer a luxury, but a necessity in today's digital age. With a diverse range of internet packages, you can choose the perfect plan tailored to your needs, ensuring a seamless online experience. Whether you're a casual web surfer, a streaming enthusiast, or a professional relying on a stable connection, [latest packages](https://latestpackages.com/) offer blazing-fast speeds that will keep you connected, productive, and entertained. Say goodbye to buffering and hello to uninterrupted browsing, streaming, and gaming with our top-notch internet packages | oliver2232 | |

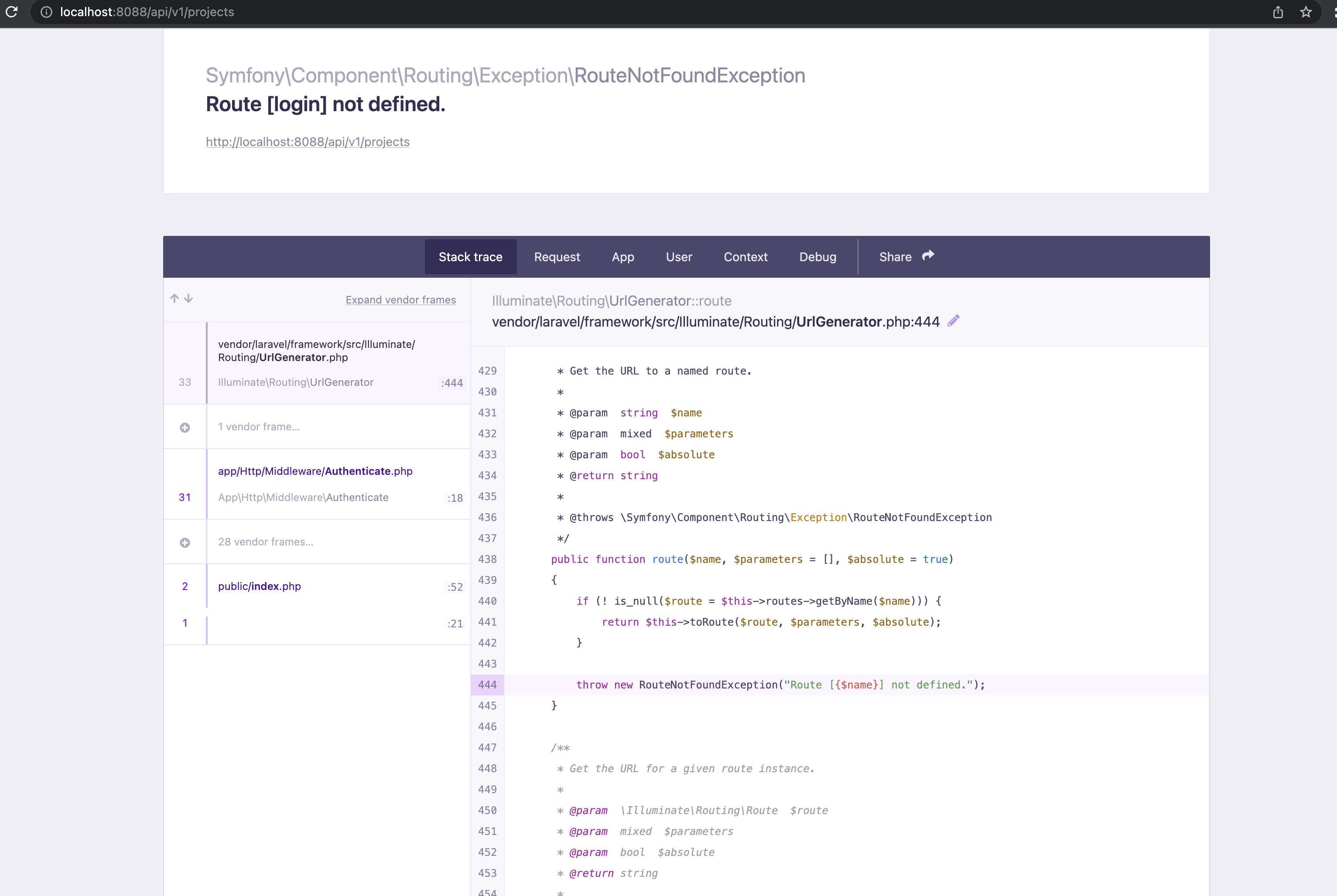

1,476,038 | How to return JSON response on API routes in Laravel | If you work with Laravel, you could have found yourself in a situation where you have written your... | 0 | 2023-05-22T08:19:45 | https://dev.to/onabright/how-to-return-json-response-on-api-routes-in-laravel-1cga | tutorial, laravel, api, webdev | If you work with Laravel, you could have found yourself in a situation where you have written your API Controllers, set up your API routes and setup authentication (for example, using Sanctum) to protect your routes from unauthorized access.

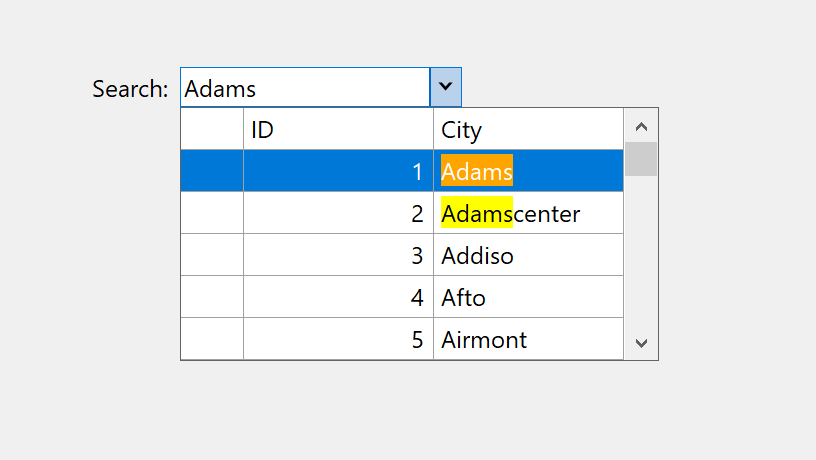

You then try to access a protected route through a browser which will return something like this:

First of all this is a good thing because it means your API route is protected and can only be accessed by authenticated users. However, it doesn't look pretty at all.

By default, Laravel returns header responses in HTML format.

If you access the API route/endpoint using an API testing tool like Postman, Fiddler, RESTer, etc., you could easily update the Header by adding an entry called `Accept` and setting it to `application/json`. This would display a 'graceful' json response instead of HTML.

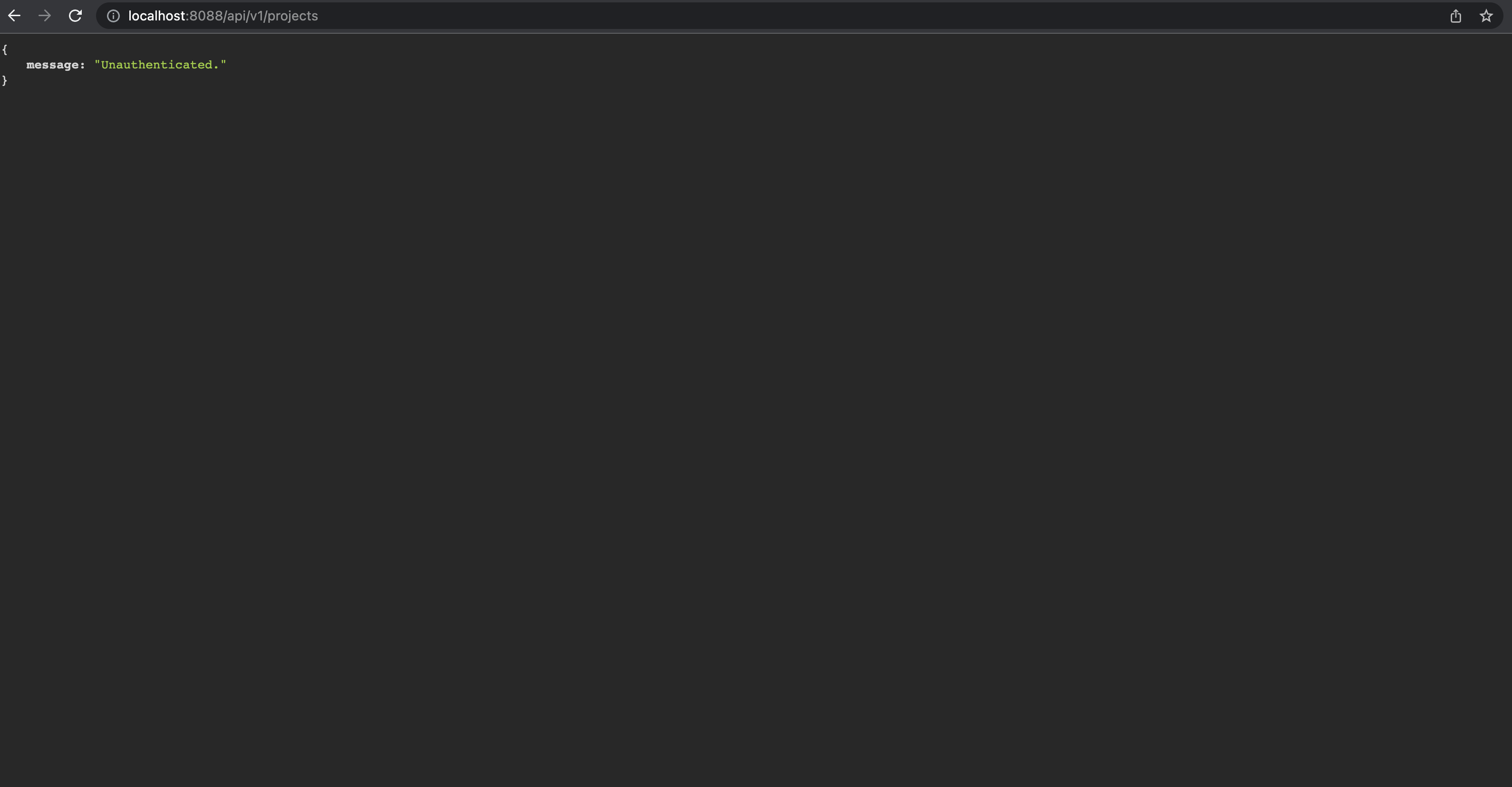

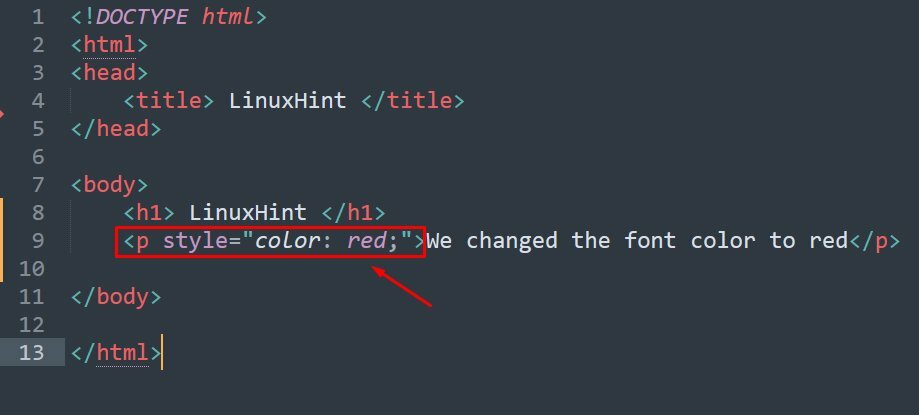

To change this default behaviour programmatically, we would want Laravel to return a json response telling the user that they are unauthenticated. How do we achieve this? It is actually pretty simple to do. Let's see how.

## 1. Create a Custom Middleware

Using the Artisan CLI, create the middleware like this:

```php

php artisan make:middleware ReturnJsonResponseMiddleware

```

Open the middleware file located in App\Http\Middleware.

Update the handle method to look like this:

```php

public function handle(Request $request, Closure $next)

{

$request->headers->set('Accept', 'application/json');

return $next($request);

}

```

What this does is that it sets the header to accept and return a json response.

## 2. Publish the Custom Middleware

To do this, we need to add our middleware to the Laravel Kernel under the application's global HTTP middleware stack.

To do this, open` Kernel.php` in App\Http and add the custom middleware class:

```php

protected $middleware = [

...

\App\Http\Middleware\ReturnJsonResponseMiddleware::class, //return graceful unauthenticated message

];

```

That's it! Now when a user tries to access a protect API route through the browser, they will get a json response:

```php

{

message: "Unauthenticated"

}

```

I hope this was helpful. | onabright |

1,476,044 | Healthcare Mobile App Development | Boost your business in the #healthcare industry with our top-notch #AppDevelopmentServices! Stay... | 0 | 2023-05-22T07:57:41 | https://dev.to/ryanhen36617931/healthcare-mobile-app-development-3bp4 | healthcaremobileappdevelopment, healthcareappdevelopment | Boost your business in the #healthcare industry with our top-notch #AppDevelopmentServices! Stay ahead of the competition and provide efficient healthcare

solutions to your customers. Contact us today to transform your ideas into reality!

Visit: https://www.uplogictech.com/healthcare-mobile-app-development-company

| ryanhen36617931 |

1,476,174 | 5 soft skills for developers | Soft skills are essential for developers, as they complement technical expertise and contribute to... | 0 | 2023-05-22T10:37:57 | https://dev.to/rafikadir/5-soft-skills-for-developers-41lm | webdev, coding, programming, frontend | Soft skills are essential for developers, as they complement technical expertise and contribute to effective collaboration, communication, and overall professional success. Here are some important soft skills for developers:

**Communication:** For developers, being able to communicate clearly is essential. Both technical and non-technical stakeholders require the capacity to communicate ideas coherently, actively listen to others, and understand technical knowledge.

**Collaboration:** Developers often work in teams, so strong collaboration skills are vital. This includes the ability to work well with others, contribute to discussions, share knowledge, and resolve conflicts constructively.

**Time management:** Developers often face multiple tasks and deadlines. Effective time management skills help them prioritize tasks, meet deadlines, and maintain productivity without compromising quality.

**Adaptability:** Since technology is a sector that is continuously changing, developers must be able to evolve with it. The ability to quickly adapt to altering goals, embrace new approaches, and be open to learning new technology are all desirable qualities.

**Continuous learning:** The technology landscape evolves rapidly, so developers must be committed to continuous learning. Staying up-to-date with new technologies, frameworks, and industry trends ensures professional growth and enhances problem-solving abilities.

----

👉 Get Frontend tips tricks on **Instagram**: [Rafi kadir](https://www.instagram.com/i_am_rafikadir/)

👉 Connect on **Linkedin** : [Rafi kadir](https://www.linkedin.com/in/rafikadir/) | rafikadir |

1,476,533 | 🌈 A One Person Startup Tech Stack for Ninja Developers - Next.js, Django, Kubernetes, and GCP 🚀 | Introduction In this article, I will share the tech stack I used to build my startup,... | 0 | 2023-05-22T16:17:30 | https://dev.to/chetanam/a-one-person-startup-tech-stack-nextjs-django-kubernetes-and-gcp-k62 | webdev, javascript, startup, python |

## Introduction

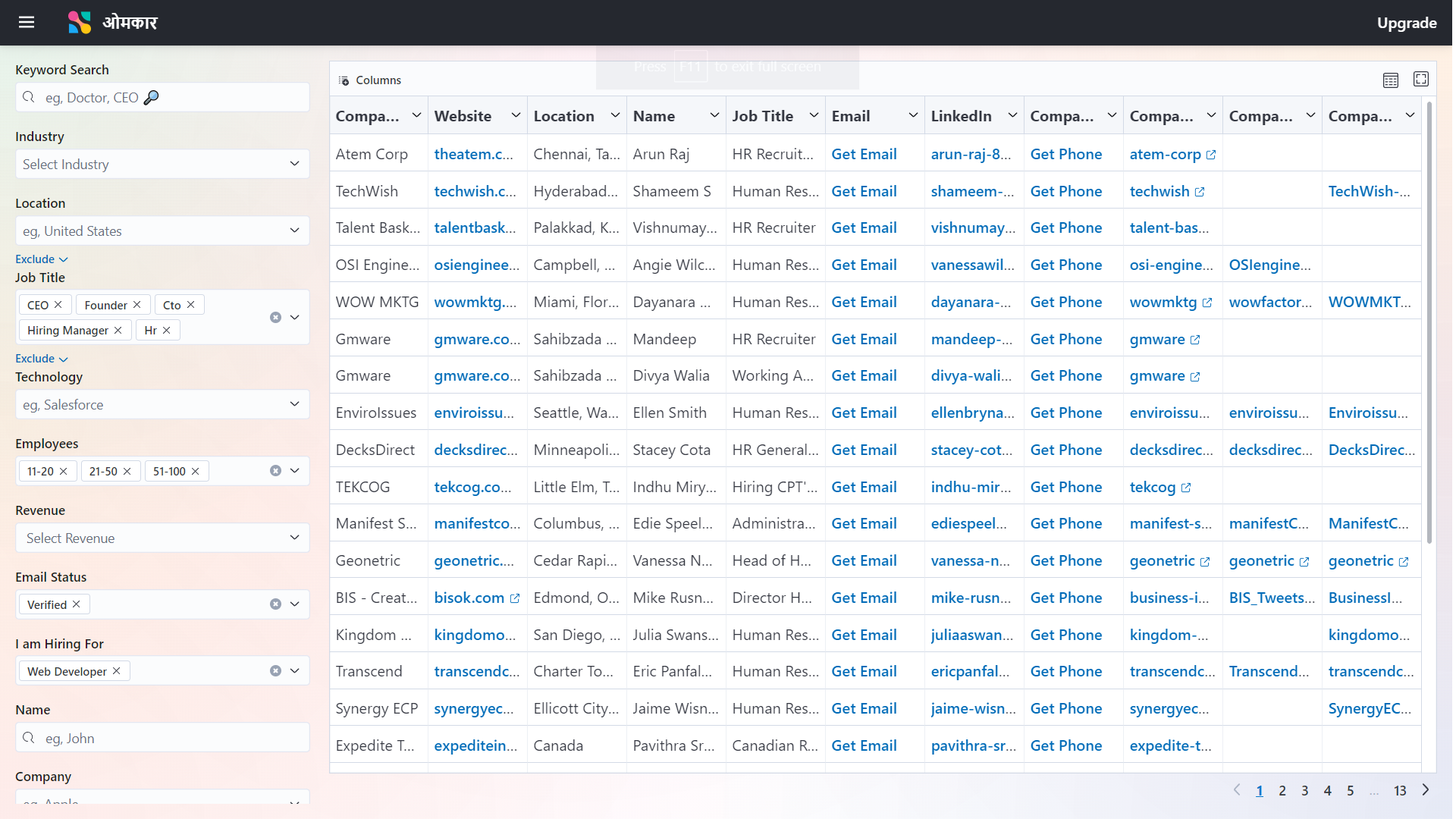

In this article, I will share the tech stack I used to build my startup, Omkar. My startup consists of several components, including Frontend, Backend, Blog.

By learning about my tech stack, you can gain insights that will assist you in selecting the tech stack for your startup.

## My Application Omkar Cloud

My Application Omkar Cloud is a full stack website with a Blog, developed using technologies like Django, Next.js, and Kubernetes

It is a People Search Engine that you can use, for example, to find people working in a company or CEOs of companies in an industry. Think of it as an advanced version of LinkedIn Search.

## Tech Stack of Omkar Cloud

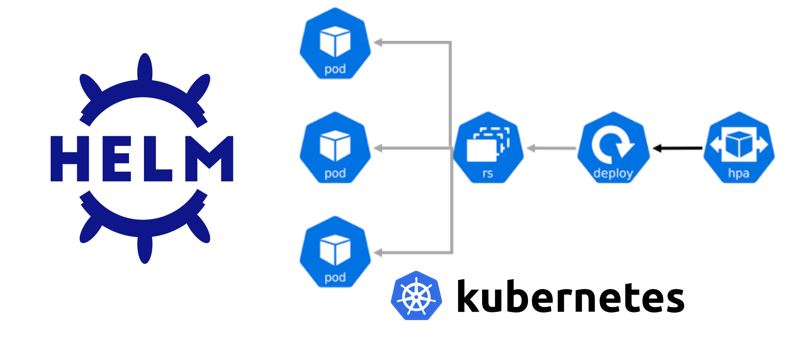

The Most Important Technologies in my Tech Stack are Kubernetes, GCP, Elastic UI, Next.js, GitHub Actions, and Django.

Here is a breakdown of my Tech Stack

### Frontend**

For the Frontend I have used Elastic UI, React and Next.js

**Elastic UI**

I have used many Component Libraries in the past, such as Bootstrap and Material UI. However, based on my experience, I have found the Component Library of Elastic UI to be particularly impressive. Elastic UI is really beautiful Component Library.

Therefore, I have chosen Elastic UI as my UI framework

**React**

Also, I had learnt two frameworks to create websites which are Svelte and React.

I wanted to use Svelte as it is much more concise than React. However, since the Elastic UI Library was not available for Svelte, I had no choice but to choose React as the only option available to me.

**Next.js**

Next.js is a framework built on top of React.

I think of Next.js as a framework for a framework 😂.

Next.js simplifies the usage of React and provides additional features. Therefore, instead of using the bare create-react-app setup, I opted to use Next.js as it makes working with React much easier

### Backend

For the Backend I used Django powered by SQLite Database

**Django**

I have learned various backend frameworks such as Express.js and Nest.js, but Django stood out as my favorite.

When working with Django, I found that I could accomplish tasks with significantly fewer lines of code compared to Nest.js or Express.js.

Django's conciseness is similar to Sanskrit Language, in Sanskrit you can convey your thoughts in very fewer words compared to English or Hindi Language.

Hence, I chose Django for its conciseness and simplicity.

**SQLite**

For the database, I had the option of using either SQLite or PostgreSQL. I opted for SQLite due to several reasons.

- Most Importantly for me as an Indian is that it is SQLite is cheaper than PostgreSQL since in SQLite there is no need to purchase a separate server.

- It allows developers to start developing faster since PostgreSQL requires spinning up a server, whereas SQLite is file-based

- It is easier to view table contents using SQLite Browser application in SQLite compared to PostgreSQL

Although, it can be argued that PostgreSQL is more Scalable but for a Start Up, SQLite does the Job Perfectly. Also, in future you can always migrate to PostgreSQL if necessary.

### Blog

To create the blog for my website, I utilized the tailwind-nextjs-starter-blog developed by Timothy Lin. It is a beautiful blogging platform built on Next.js.

### Deployment

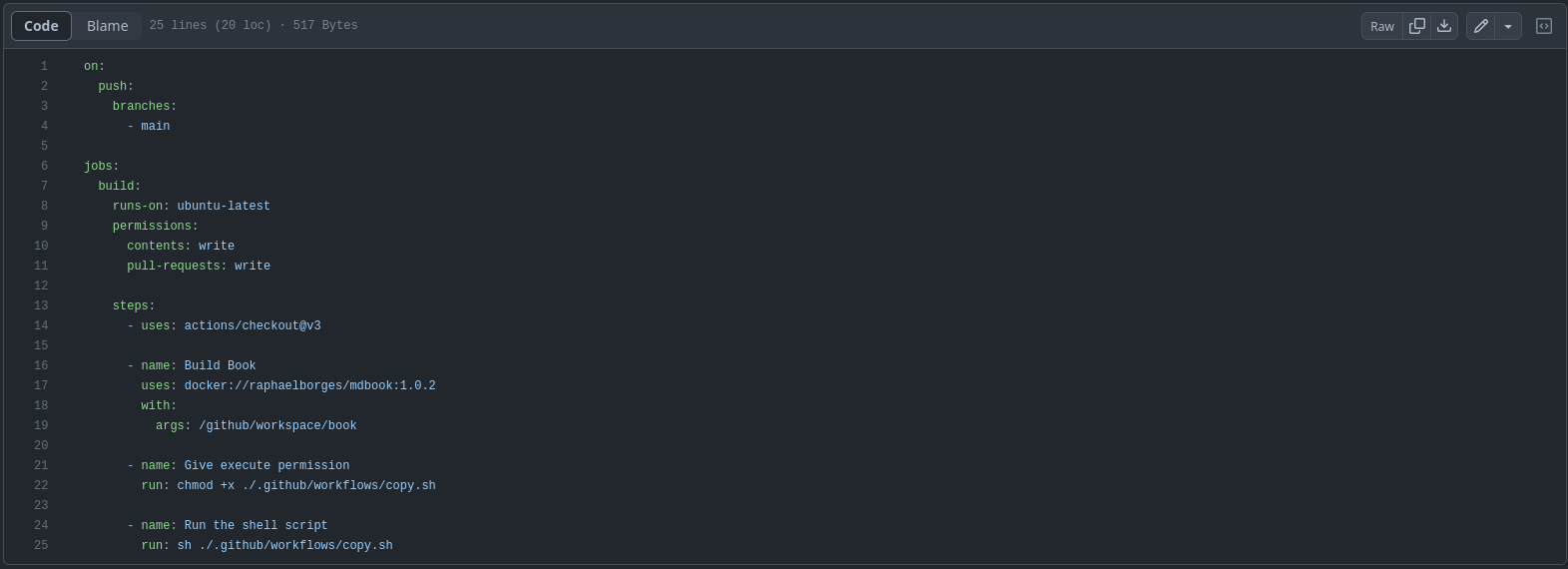

For deployment, I used Kubernetes, GitHub Actions, and Google Cloud Platform (GCP).

**Kubernetes**

I wanted to host the frontend, backend, and blog on a single domain, namely '**[www.omkar.cloud](http://www.omkar.cloud/)**' **at different paths**. Additionally, I needed to store the SQLite database file in storage.

Kubernetes provided a convenient method for orchestrating these needs. So, I used Kubernetes.

**GitHub Actions**

To automate the deployment process, I utilized GitHub Actions. With this setup, I could easily deploy a new version of my application by simply pushing the code to the master branch.

**GCP**

I chose GCP as the platform to run my entire Kubernetes stack. Google products have a reputation for quality, and GCP proved to be reliable and suitable for my needs. It costs me 2900 INR or $35 per month to run my full Stack.

### Other Tools

**Google Analytics.**

I used Google Analytics to track my website as It was the analytics software I was most familiar with.

**G Suite**

For creating a professional email address like **`info@omkar.cloud`**, I utilized G Suite. I opted for G Suite due to my familiarity with the Gmail interface and my resistance to change.

**Google Search Console**

I utilized Google Search Console to monitor the search ranking of my website, omkar.cloud, on Google.

**NameCheap**

I used **NameCheap** for buying domain name of omkar.cloud. I was satisfied with Name Cheap’s services.

## Final Thoughts

In short, I used the following technologies in my tech stack for **[omkar.cloud](http://www.omkar.cloud/)**:

Frontend:

- Elastic UI

- React

- Next.js

Backend:

- Django

- SQLite

Blog:

- tailwind-nextjs-starter-blog

Deployment:

- Kubernetes

- GitHub Actions

- Google Cloud Platform (GCP)

Other Tools:

- Google Analytics

- G Suite

- Google Search Console

- NameCheap

Overall, I am very satisfied with the tech stack of **[omkar.cloud](http://www.omkar.cloud/)**.

If you are creating a startup and considering the tech stack to use, based on my experience, I can confidently say that if you base your tech stack on mine, you will have a solid technological foundation for your startup.

I am curious to hear if you have any questions regarding the tech stack, so please feel free to ask in the comments.

Dhanyawad 🙏 | chetanam |

1,476,196 | Journey to Mastery: Completing the FreeCodeCamp JavaScript Algorithms and Data Structures Certification | FreeCodeCamp JavaScript Algorithms and Data Structures Certification. This comprehensive... | 0 | 2023-05-22T11:20:47 | https://dev.to/xmohammedawad/journey-to-mastery-completing-the-freecodecamp-javascript-algorithms-and-data-structures-certification-8jj | javascript, algorithms, webdev, coding | ### FreeCodeCamp JavaScript Algorithms and Data Structures Certification.

This comprehensive certification program provided me with a solid foundation in JavaScript programming, covering a wide range of concepts, algorithms, and data structures. In this article,

I will share my journey and the key learnings gained from each section of the certification.

#### Basic JavaScript:

The first step of the certification introduced me to the fundamental programming concepts in JavaScript. From numbers and strings to arrays, objects, loops, and conditional statements, I gained a deep understanding of how to work with basic data structures and control flow.

#### ES6:

As JavaScript constantly evolves, familiarity with the latest standards is crucial. The ES6 section delved into the new features introduced in ECMAScript 6, including arrow functions, destructuring, classes, promises, and modules. Learning these modern JavaScript techniques enabled me to write cleaner and more efficient code.

#### Regular Expressions:

Regular expressions, or regex, are powerful patterns used to match, search, and manipulate text. Through this section, I acquired the skills to construct complex regex patterns, leverage positive and negative lookaheads, and utilize capture groups. Regex opened up new possibilities for text processing and data manipulation.

#### Debugging:

A key skill for any developer is the ability to debug code effectively. The debugging section taught me how to utilize the JavaScript console to identify and resolve issues. I learned techniques to tackle syntax errors, runtime errors, and logical errors, ensuring my code operates as intended.

#### Basic Data Structures:

Understanding different data structures and knowing when to use them is crucial in programming. This section expanded my knowledge of arrays and objects, exploring their properties, methods, and manipulation techniques. I also learned about essential array methods like splice() and Object.keys() to efficiently work with data.

#### Basic Algorithm Scripting:

Algorithms form the backbone of programming, enabling efficient problem-solving. This section honed my algorithmic thinking skills by challenging me to solve various coding problems. From converting temperatures to handling complex 2D arrays, I gained confidence in breaking down problems into smaller parts and implementing effective solutions.

#### Object-Oriented Programming:

Object-Oriented Programming (OOP) is a popular approach to software development. Here, I delved into the principles of OOP in JavaScript, including objects, classes, prototypes, and inheritance. This knowledge empowered me to design and implement more organized and reusable code structures.

#### Functional Programming:

Functional Programming (FP) is another paradigm that promotes modular and reusable code. In this section, I learned about pure functions, avoiding mutations, and leveraging higher-order functions like map() and filter(). Functional programming concepts helped me write cleaner, more maintainable code.

#### The Projects:

To demonstrate my proficiency, I completed `21 intermediate algorithm` challenges and worked on `5 advanced projects`. These projects provided hands-on experience in solving real-world problems using JavaScript. By applying the concepts I learned, I gained confidence in tackling complex coding challenges and developing robust solutions.

#### Conclusion:

Completing the FreeCodeCamp JavaScript Algorithms and Data Structures Certification has been a transformative experience. I have not only deepened my understanding of JavaScript but also sharpened my problem-solving and critical-thinking skills. The certification journey has equipped me with a solid foundation to take on more significant coding projects and further advance my career as a JavaScript developer. I am excited to apply my newfound knowledge and continue exploring the vast world of JavaScript programming. | xmohammedawad |

1,476,337 | Deploy your React, NodeJS apps using Jenkins Pipeline | 🚀 As we are working on our Open Source Project named NoMise which is being built using ReactJs,... | 0 | 2023-05-24T14:43:52 | https://dev.to/lovepreetsingh/deploy-your-react-nodejs-apps-using-jenkins-pipeline-22pl | jenkins, development, programming, node | 🚀 As we are working on our Open Source Project named [NoMise](https://github.com/AlphaDecodeX/NoMise_Store) which is being built using ReactJs, Typescript and TailwindCss. When it comes to deployment we have several options:-

- Easy Deploy using providers like Vercel or Netlify where you have to drag and drop your code repository

- Deploy your code in Docker container and run that container in a cloud server

- Setup a CI/CD (Continuos Integration, Continuos delivery) Pipeline to deploy your App

Which one you'll choose?

😎 Obviously one should go with third because of its benefits. Once you have setuped your pipeline for the deployment, you don't need to build, deploy to container and host in cloud again and again. With one click, your code will build, containerized in a docker container and deployed to cloud (AWS EC2 instance in our case).

✨ If you haven't understood, Don't worry we will go step by step.

## 📍Introduction

First things first, what is jenkins, ci/cd pipeline and ec2 instance that we talked about above.

- Jenkins is a tool that make our life easier with deployments and running some automated tests like to deploy manually you first need to run ```npm run build``` then copy the build folder and containerize it and then serve it on a server. It can all be done by a set of actions that jenkins provide.

- ci/cd pipeline is continuos integration and continuos delivery which means code can be directly picked from a repository and after some tests run it will be deployed on the server

- EC2 is nothing but a computer at the end which is provided to you as a server to which we can SSH and run our scripts, code etc.

Note:- Those who are thinking that AWS is paid, yes it is but with free tier you can explore many things.

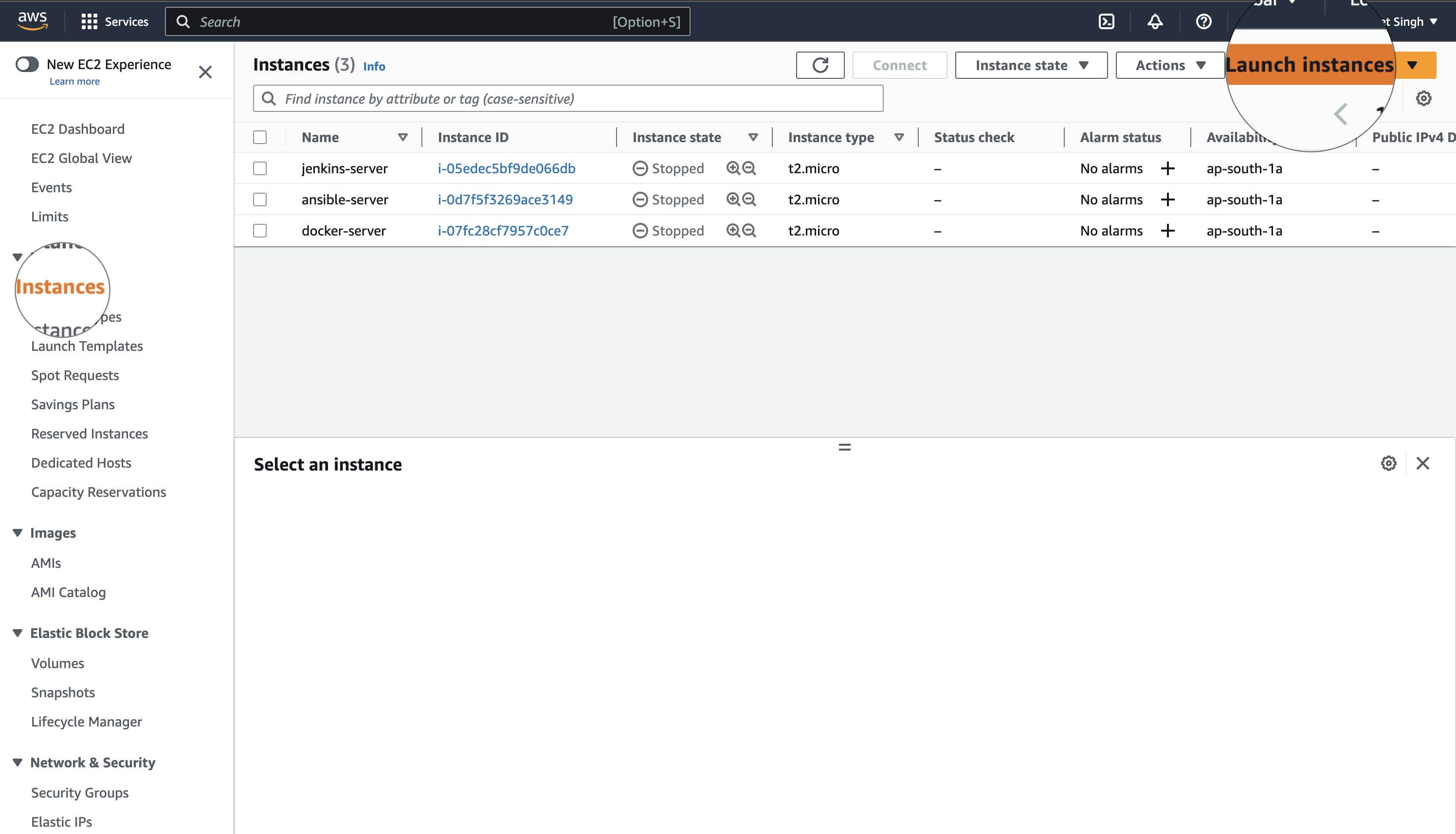

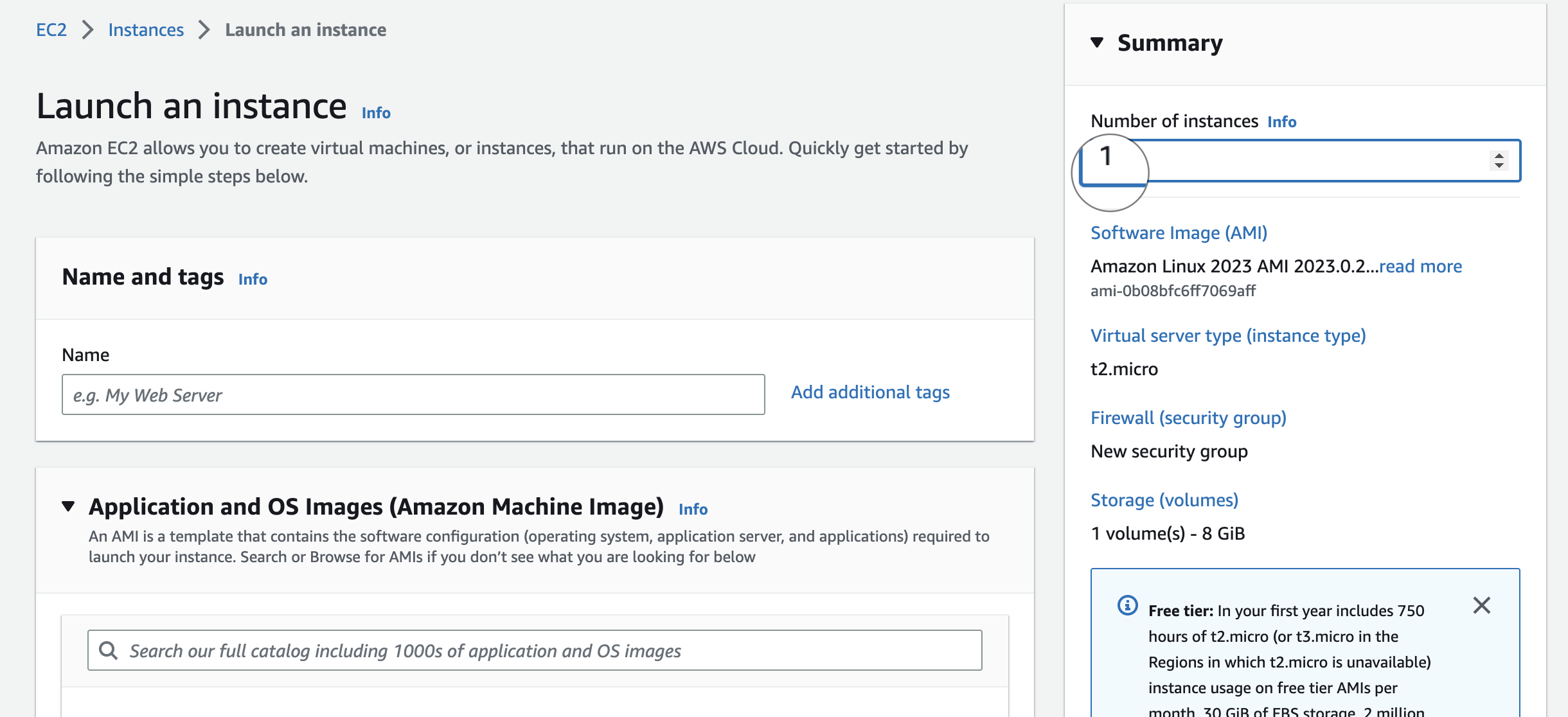

## 📌 Launching AWS EC2 instances

1. Go to AWS and signup for your new account and add a Debit/Credit card. For safety add with minimum balance if you don't want to spend over the free limit.

2. Search for EC2 and launch instances

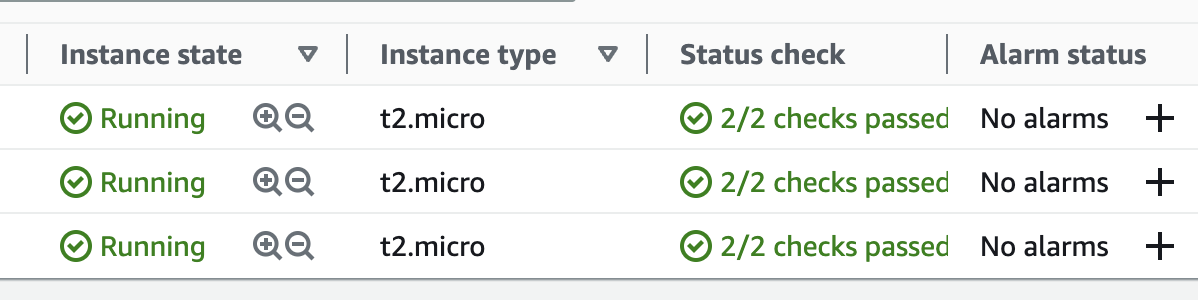

3. Select Default settings and launch 3 instances because

- one we'll use to install jenkins

- second we'll install ansible server which will dockerise the build code that we'll get from the jenkins over SSH

- third instance will run a docker container that will be served to the public to access

Note:- Write three to launch 3 instances

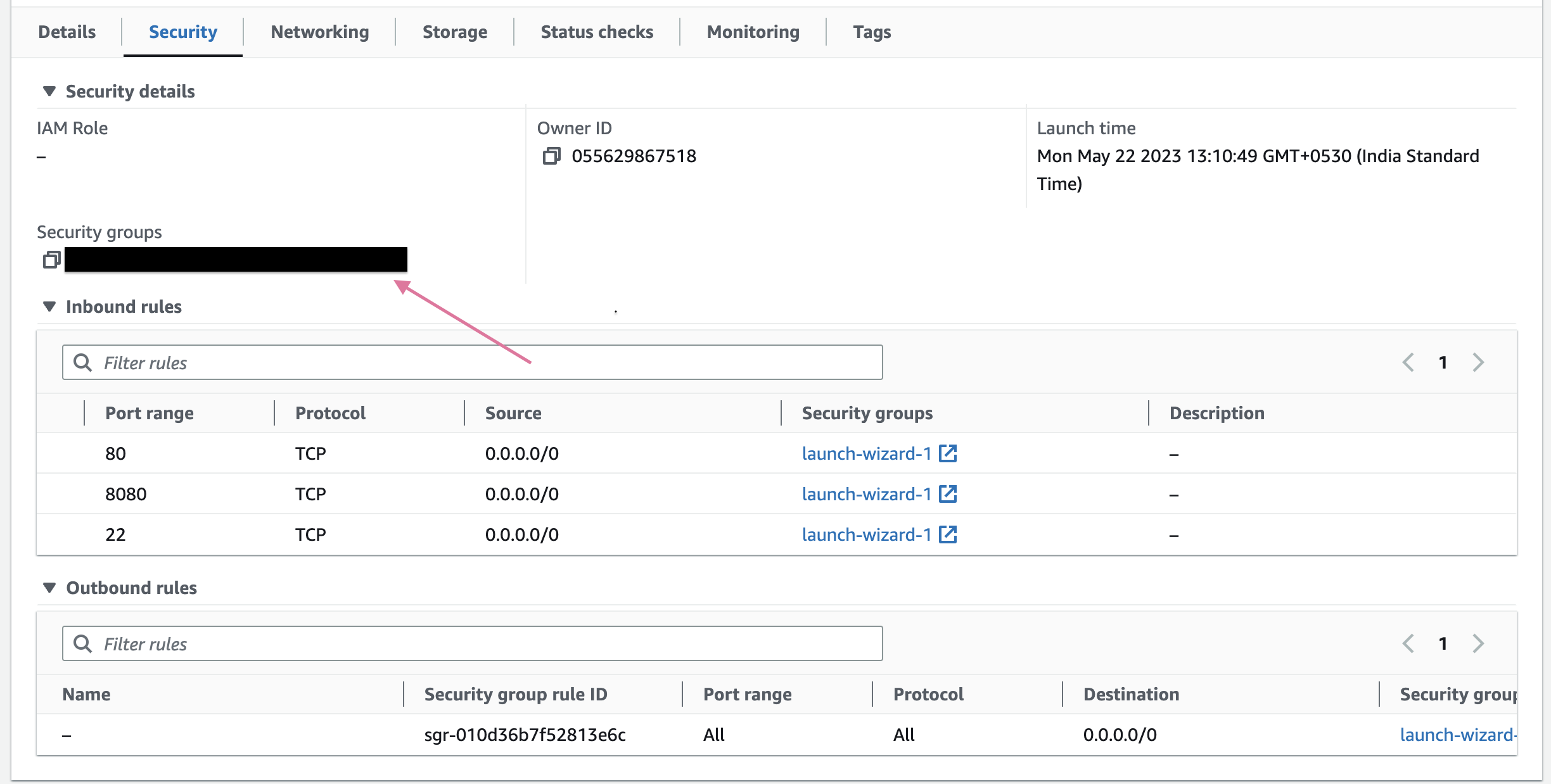

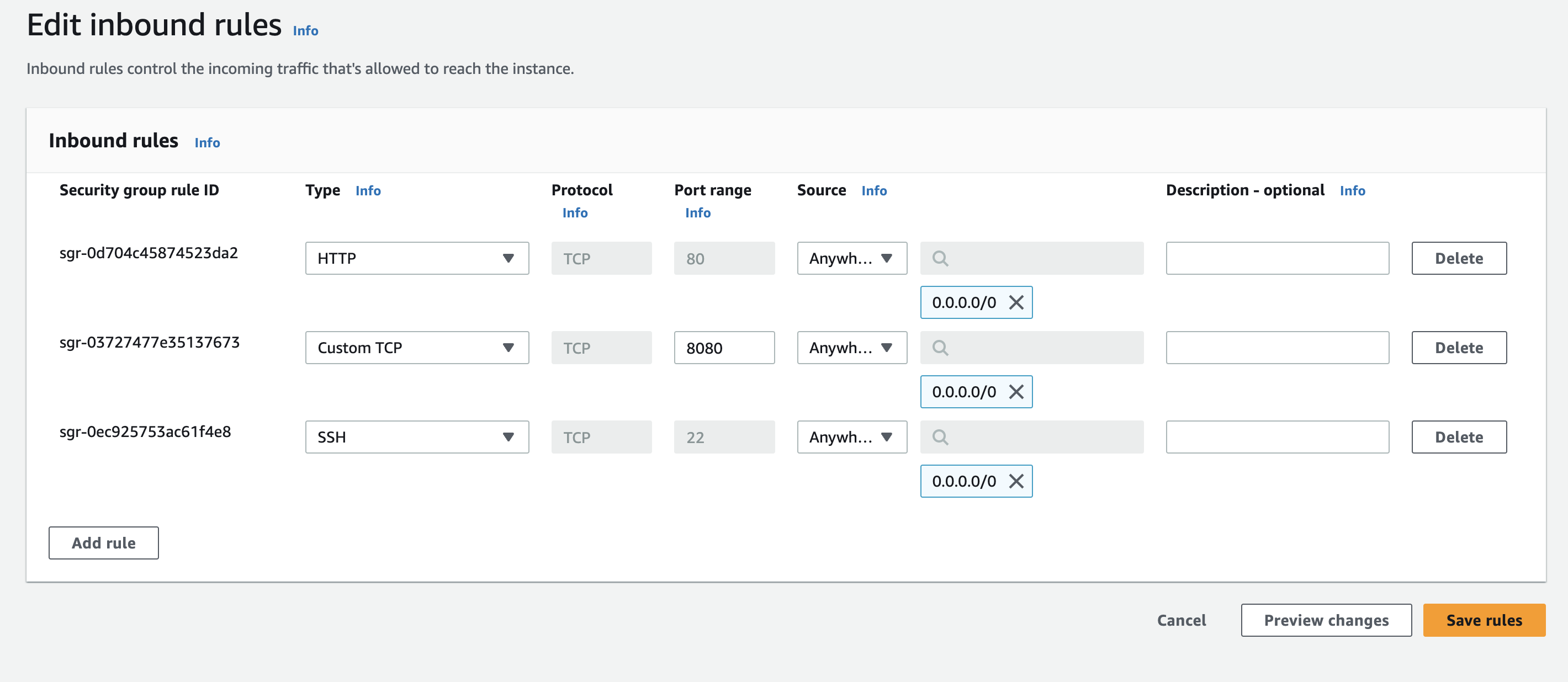

4. Now, we'll add some security rules to our instances because to access these instances/servers some request or ssh will be made. So, it will not allow every request who is coming to access them.

5. To Add security rules, click on security group and edit inbound rules:-

- Add these rules

Note:- It is not good to allow any traffic but for testing it is okay.

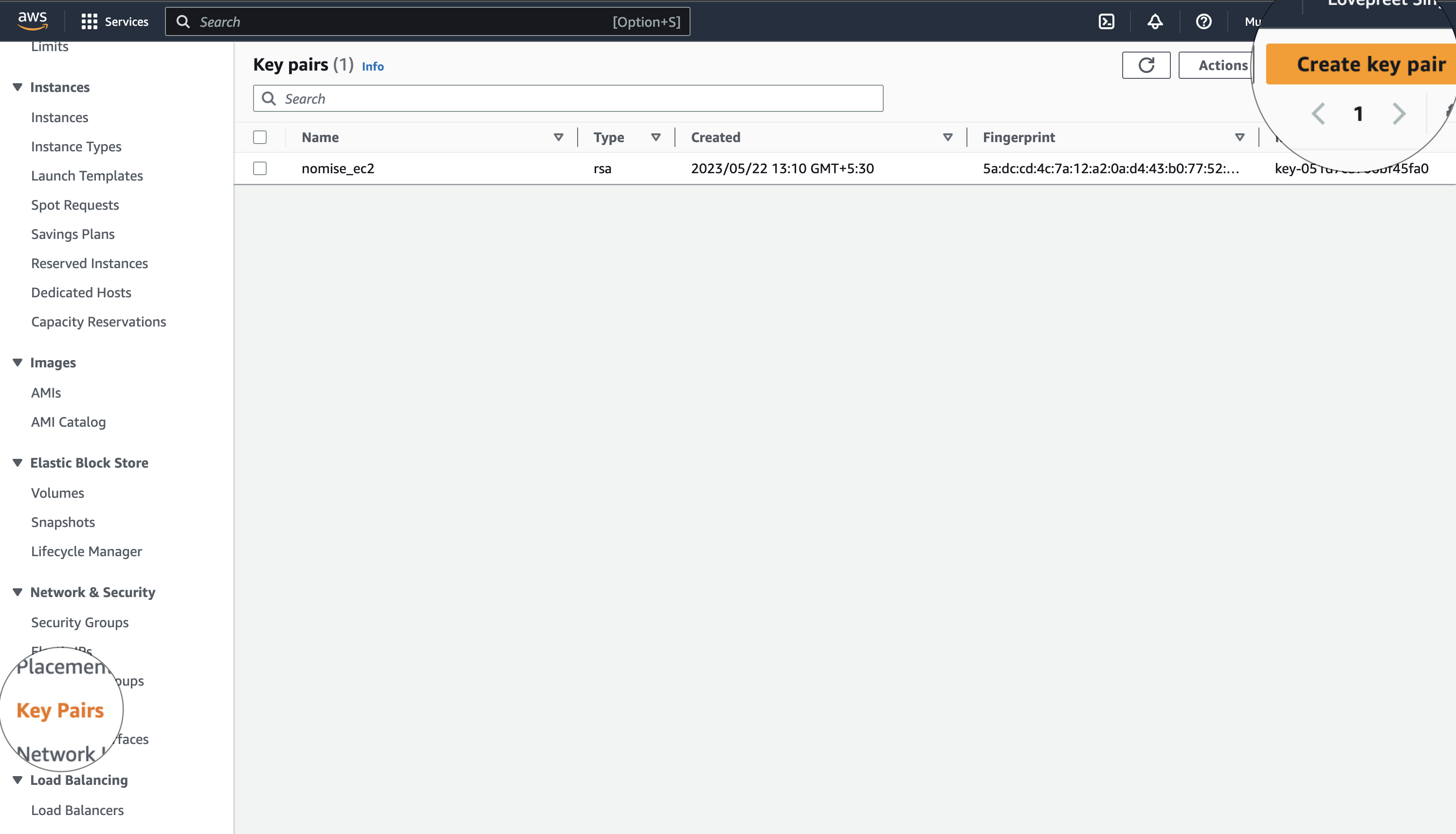

6. Now, It is time to ssh to your instances. But before going inside the EC2 instances, Make sure you have the key-pair (.pem file). To Download the pem file follow the below image:-

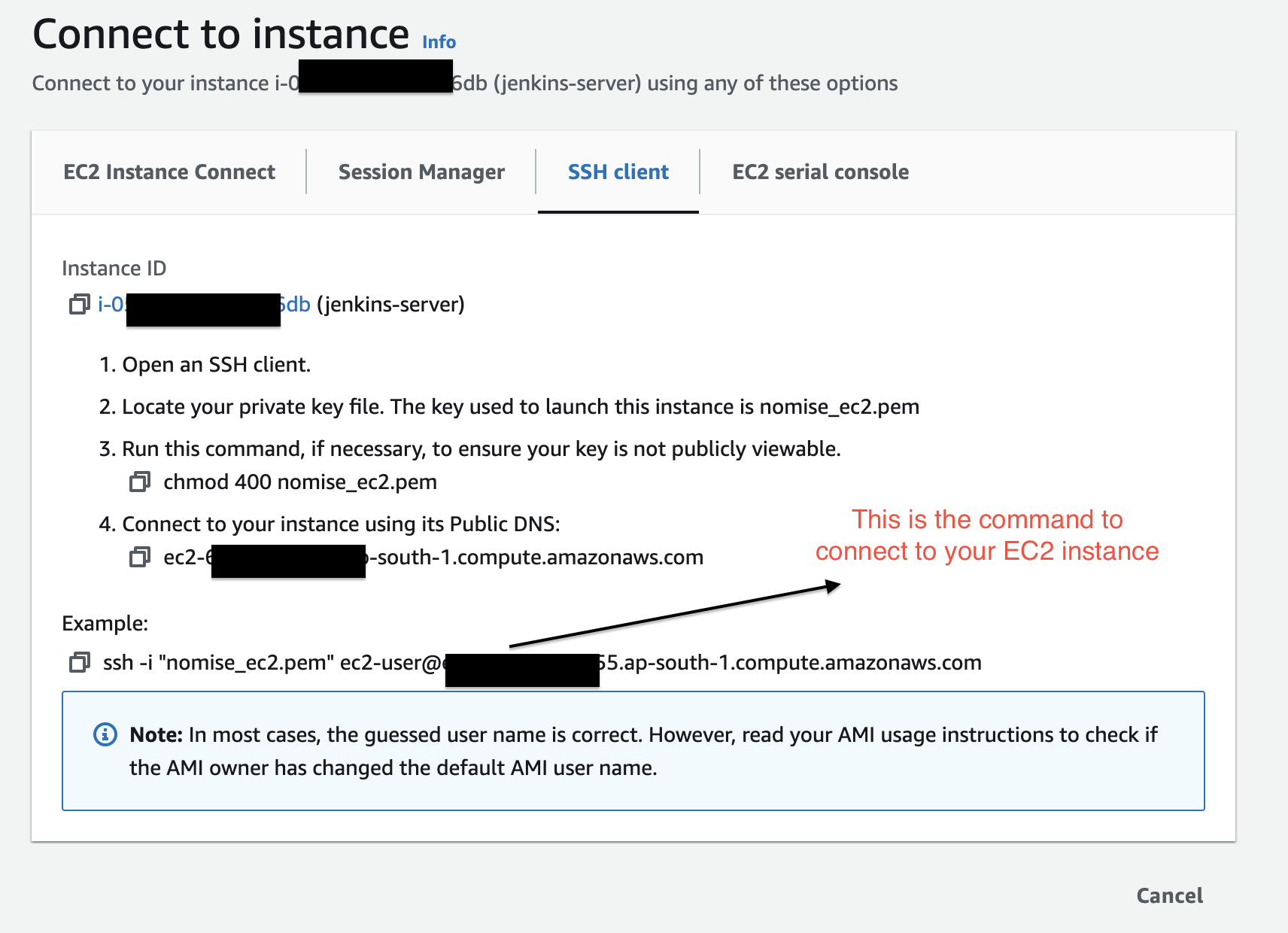

7. I have saved the pem file in my ~/.ssh folder. And now to SSH into a particular instance, click on Connect and you'll get this window

Note:- Make sure you give the correct path of .pem file saved in the previous step and also you changed its access rights using chmod as shown in the picture.

8. Now, similarly you can connect to all the instances using SSH.

## 📌 Setup Jenkins, Docker and Ansible Server

Now it is the time to setup Jenkins pipeline with Ansible server and docker server.

Note:- make sure you stop the instances when you are not doing anything (If you are using AWS Free tier)

1. Let's SSH into our first instance (Jenkins). For me the command to run is

```BASH

ssh -i ~/.ssh/nomise_ec2.pem ec2-user@ec2-65-3-155-155.ap-south-1.compute.amazonaws.com

```

After getting into the ec2 instance run the below commands one by one:-

```BASH

sudo yum update –y

sudo wget -O /etc/yum.repos.d/jenkins.repo https://pkg.jenkins.io/redhat-stable/jenkins.repo

sudo rpm --import https://pkg.jenkins.io/redhat-stable/jenkins.io-2023.key

sudo yum upgrade

sudo amazon-linux-extras install java-openjdk11 -y

sudo dnf install java-11-amazon-corretto -y

sudo yum install jenkins -y

sudo systemctl enable jenkins

sudo systemctl status jenkins

```

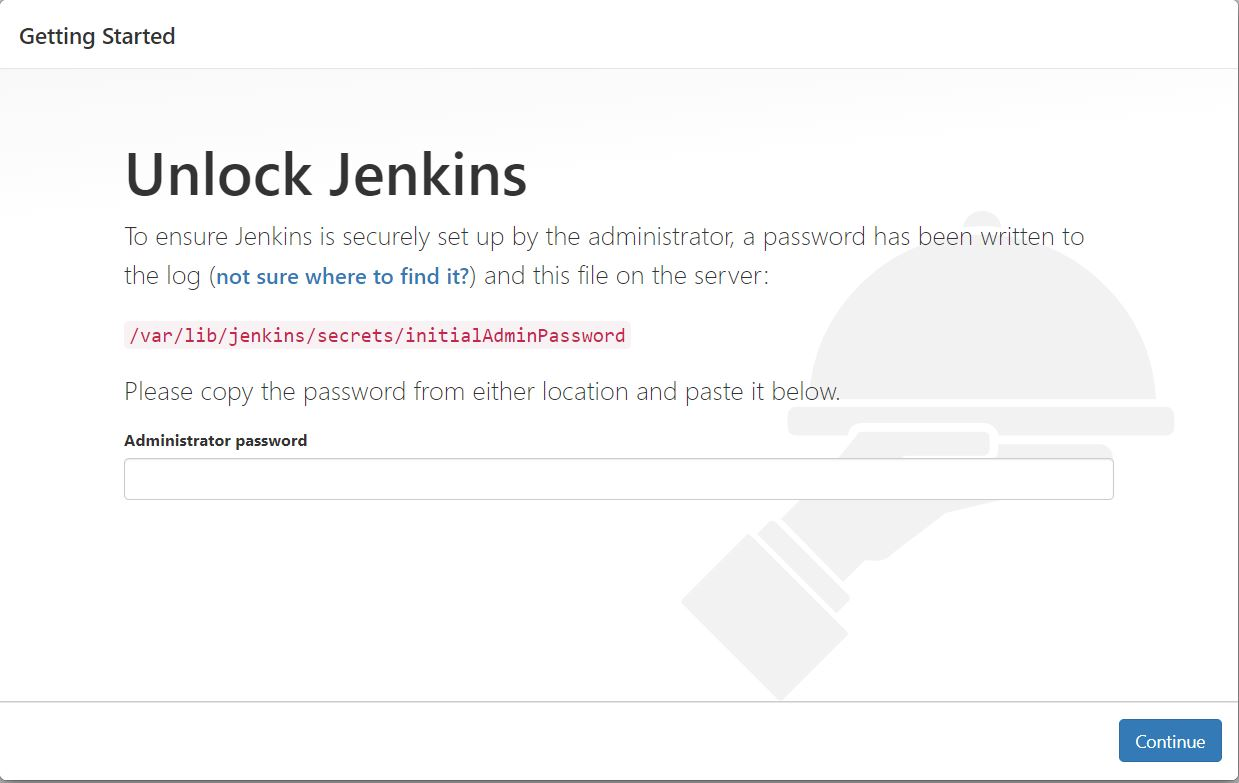

2. After that you'll get Jenkins running on your localhost. Go to your instance on AWS and find your public ipv4 address on the instance homepage and go to http://<your_server_public_address>:8080 and the below screen of jenkins would appear

- Enter this command in your terminal to get the password to enter in jenkins

```BASH

sudo cat /var/lib/jenkins/secrets/initialAdminPassword

```

🥳 And that's how Jenkins will be started.

----

That's It for today Guys, In next Part we'll see How to build Docker Container and deploy through Jenkins.

✨ For the next Blog, Follow Now

| lovepreetsingh |

1,476,392 | Effortless NFS file transfer in CI/CD pipelines without Privileged access | In the realm of continuous integration and deployment (CI/CD) pipelines, the seamless transfer of... | 0 | 2023-05-22T15:23:59 | https://dev.to/kha7iq/effortless-nfs-file-transfer-in-cicd-pipelines-without-privileged-access-p1e | In the realm of continuous integration and deployment (CI/CD) pipelines, the seamless transfer of artifacts to any remote servers holds immense significance.

Traditionally, in the case of NFS, achieving this demands mounting of the nfs share inside the runner, thereby restricting it to a specific node and granting privileged access.

However, NCP (NFS Copy), can be utilized to

allows direct artifact copying to remote NFS servers without the need for mounting, eliminating the constraints of privileged access.

In this article, we delve into the utilization of NCP in GitLab pipelines.

## Understanding the Pipeline

Let's examine a sample pipeline to better understand how NCP can be leveraged for artifact transfer:

```yaml

stages:

- build

- publish

build:

stage: build

script:

- echo "build test artifact" > output.txt

artifacts:

paths:

- output.txt

expire_in: 2 hours

publish:

stage: publish

needs: ["build"]

image: docker.io/khaliq/ncp:latest

script:

- ncp to --host 192.168.0.80 --nfspath data --input output.txt

```

The pipeline consists of two stages: `build` and `publish`. In the `build` stage, a test artifact is created by echoing the text "build test artifact" into a file called `output.txt`.

This stage is responsible for building the artifact that will later be transferred to the remote NFS server.

```yaml

publish:

stage: publish

needs: ["build"]

image: docker.io/khaliq/ncp:ncp

script:

- ncp to --host 192.168.0.80 --nfspath data --input output.txt

```

The publish stage is where the artifact is transferred using NCP. Here's a breakdown of the command used:

`ncp to`: Specifies the direction of the transfer, indicating that the artifact will be sent to the remote server.

`--host`: Specifies the IP address or hostname of the remote NFS server.

`--nfspath`: Defines the target path on the remote server where the artifact will be stored.

`--input`: Specifies the input artifact to be transferred, in this case, `output.txt`.

With this simple command, it handles the transfer of the artifact to the specified remote NFS server, without the need to mount nfs shares.

## Conclusion

NCP simplifies remote NFS server integration in CI/CD pipelines. By eliminating nfs share mounting and the need for privileged access, it streamlines artifact transfer, granting the freedom to run jobs anywhere.

Checkout the [GitHub repository](https://github.com/kha7iq/ncp) for more information. | kha7iq | |

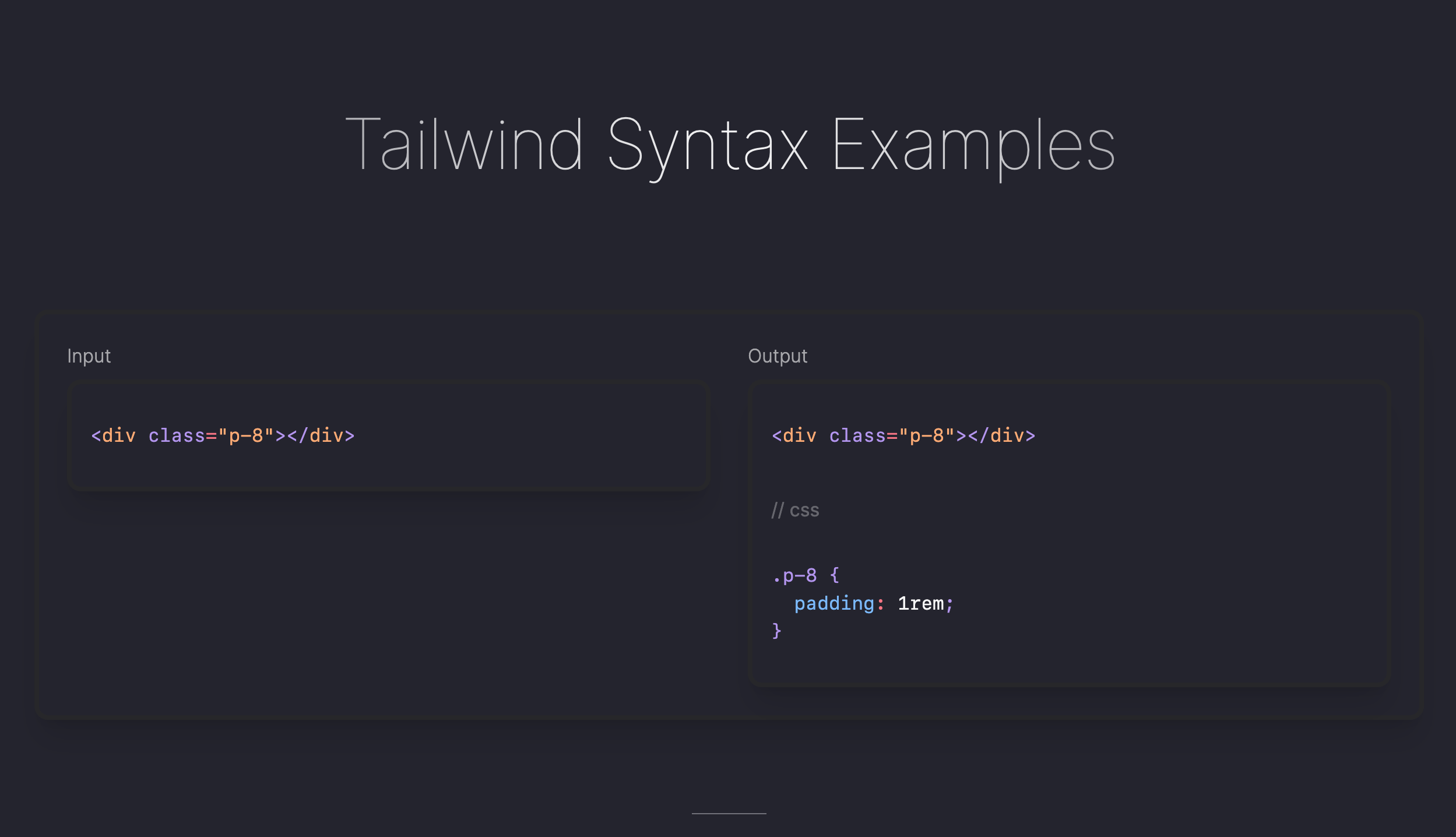

1,476,461 | Advanced Tailwind Syntax | It's common to use Tailwind with classes like m-4 bg-red-500 that use the theme tokens. Tailwind Docs... | 0 | 2023-05-22T14:31:06 | https://dev.to/tresorama/advanced-tailwind-syntax-11bm | frontend, learning, react, vue |

It's common to use Tailwind with classes like `m-4 bg-red-500` that use the `theme` tokens.

[Tailwind Docs](https://tailwindcss.com/docs/installation) is well done and covers everything.

---

But when you need to write CSS that doesn't use theme, you are using the tailwind syntax for **arbitrary rules**.

**This is when you can find this cheat sheet useful.**

{% embed https://tailwind-syntax-examples.vercel.app/ %}

---

Feel free to suggest something that is missing...

| tresorama |

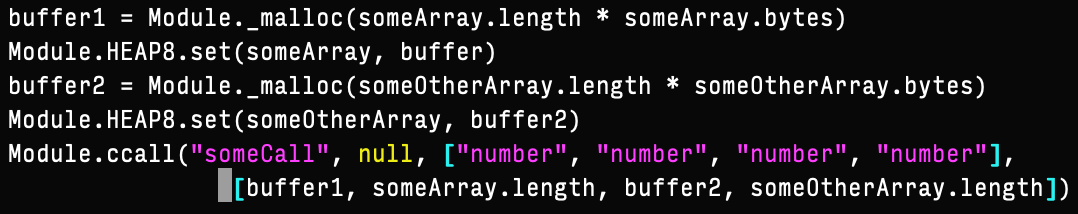

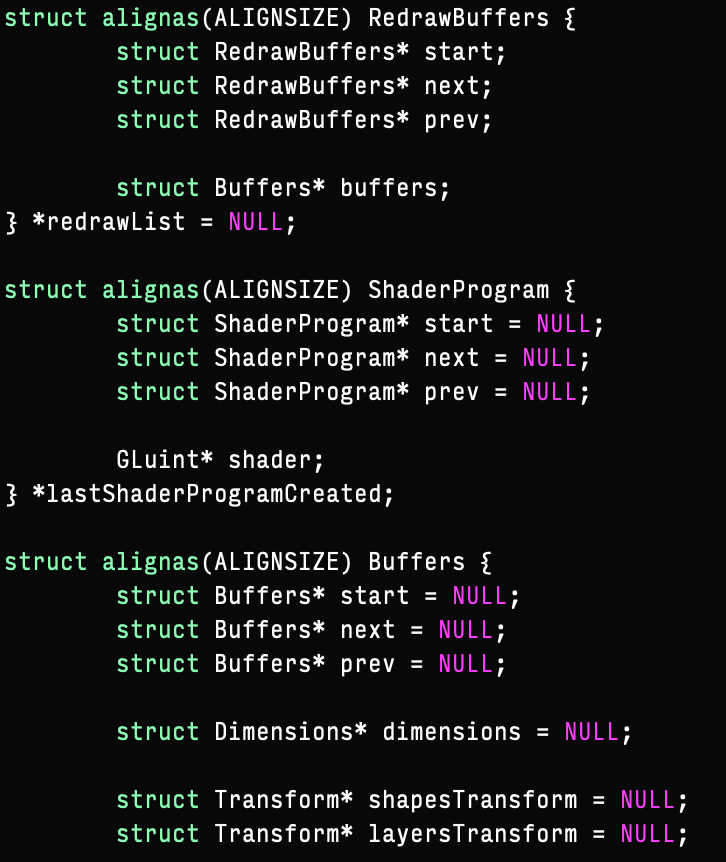

379,124 | WASM: Memory Management | So you have chosen to write your new web app in WASM - exciting! On top of that, you want to write it... | 0 | 2020-07-02T16:37:50 | https://dev.to/shaafiee/wasm-memory-management-33l6 | cpp, webassembly, javascript | So you have chosen to write your new web app in WASM - exciting! On top of that, you want to write it in C++ to have fine-grained control over data storage and manipulation.

Here's some great advice that will help you overcome serious headaches.

Firstly, because the memory available to your program is actually a JS object, it is available as one contiguous chunk that is limited to linear scaling. This means that you have to be very careful about deleting objects and freeing memory. In fact, stop deleting objects altogether. If you feel the need to get rid of temporary memory objects then create a separate temporary memory object within JS for that operation, like so:

The second big hint is, align your data structures. When you have lots of data structures that go in and out of the execution scope, you will run into lots of segmentation faults due to memory misalignments, particularly if your structures have many levels of invariably scaling sub-structures, such as in the case of Markov chains.

No alt text provided for this image

Explicit memory alignment will have penalties in terms of growth of memory as your Markov chains' complexities increase - this is where multiple memory objects come in handy. This drawback is worth the performance and stability bonuses, which you will learn as you dig into WASM.

Have fun in your WASM journey!

| shaafiee |

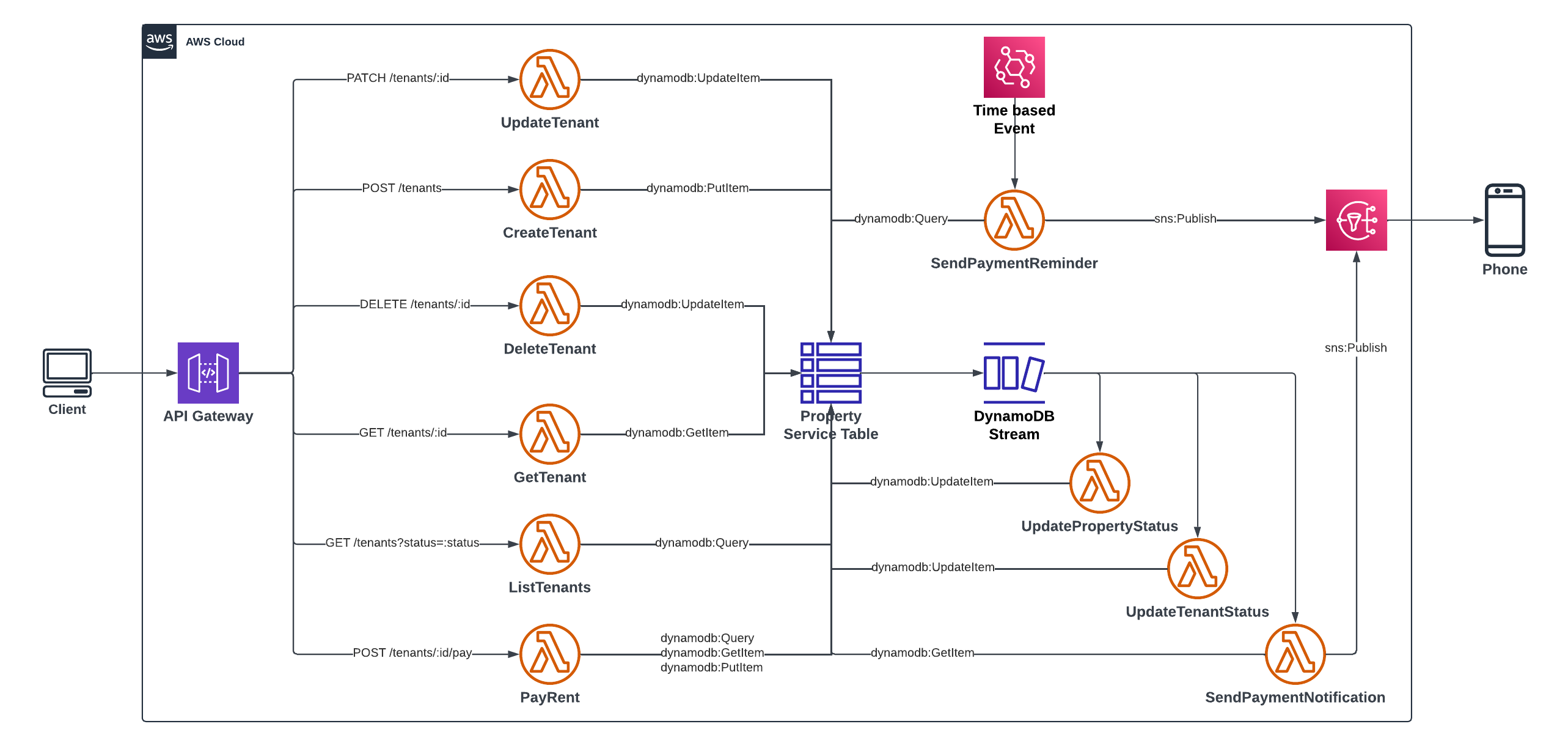

1,476,741 | Serverless API Development on AWS with TypeScript - Part 1 | Introduction This article is written to help anyone who needs a guide in building their... | 23,092 | 2023-05-23T08:57:01 | https://soprinye.com/serverless-api-development-on-aws-with-typescript-part-1 |

## Introduction

This article is written to help anyone who needs a guide in building their first serverless API on AWS using the Serverless Framework and TypeScript. If you have never built an API using the Serverless Framework, you would need to set up your development environment. Check out my post on [Setting up for Serverless Development on AWS](https://dev.to/aws-builders/setting-up-for-serverless-development-with-aws-28gf), follow the steps to download and install the components needed to complete this project.

> If you are familiar with a concept or service go ahead and skip it to save yourself some time.

## About this Project

This Tenant-service project represents a property-rental scenario where a tenant rents an available property and receives an SMS notification for payment. After that happens, the tenant becomes active and the property becomes unavailable to be rented by another tenant. Subsequently, an active tenant can renew the rent for their current property. Finally, an SMS notification is sent to tenants 1 month before the expiration of the rent. The operations include:

* Create a tenant

* Update tenant record

* List all tenants by status

* Delete a tenant

* Record a payment transaction

* Send an SMS after payment

* Send a reminder via SMS, one month before the expiration

## Integrated AWS Services

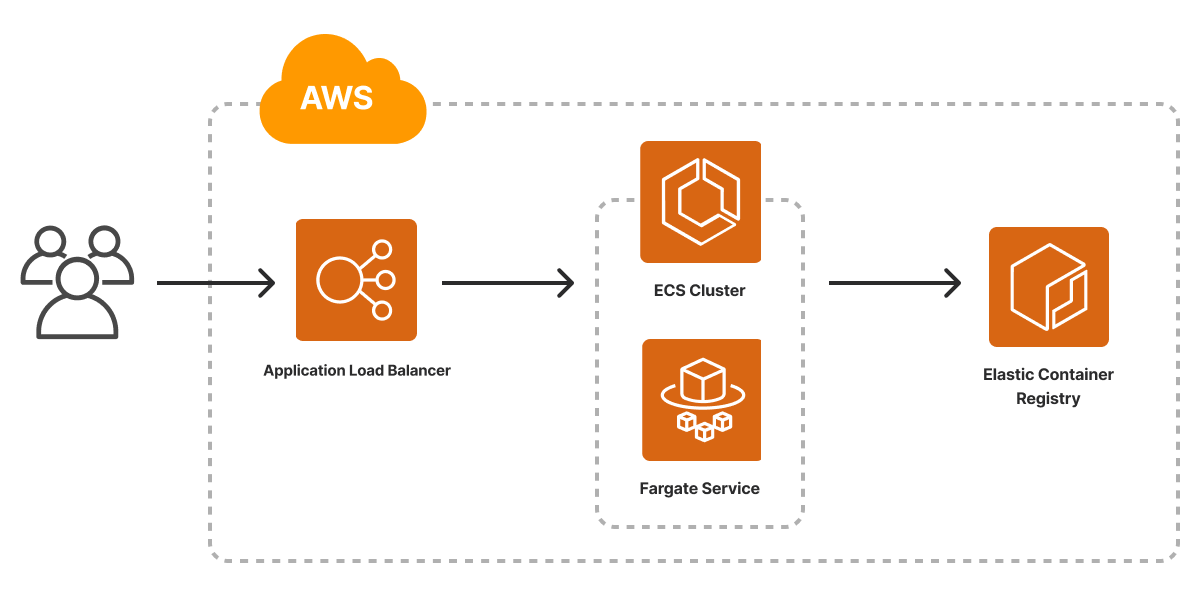

The Tenant service project is built on AWS as a serverless API. I have included a brief description of the AWS services used in this project and how they work. As we go on you will see how they are used in this project. These five (5) services are:

### Amazon API Gateway

Amazon API Gateway receives and directs traffic to the appropriate service or handler that is responsible for that API request. Of course, such interactions must have been linked. Users can access whatever backend services you provide via the API Gateway. After the request is handled, the response is forwarded back to the API Gateway and finally, to the user. Amazon API Gateway is completely managed hence you do not need to install any server or component to start using it. It supports REST APIs as well as WebSocket APIs and also integrates easily with other supported AWS services.

### AWS Lambda

AWS Lambda is one of the compute services for Serverless Computing on AWS. You can deploy a function - yes! just a function - even if the function only returns the traditional "Hello World" string, you can deploy that function to AWS Lambda and Lambda will invoke your function when it is triggered. AWS Lambda supports many programming languages but this project uses TypeScript.

### Amazon DynamoDB

Amazon DynamoDB is a NoSQL, Key-Valued based Serverless database by AWS. It is highly efficient and can power high-performance applications at scale. Given it is serverless, there's no need for provisioning - you just need to configure your table and get started using it. We will be using this database to create the table used in this project.

#### DynamoDB Stream

Dynamodb Streams is one of the cool features of the Dynamodb Store. A stream is created when an `INSERT`, `MODIFY` or `DELETE` action is carried out on a DynamoDB table. Streaming by DynamoDB has to be enabled - it is not automated enabled. We will be using the streams to initiate checks and perform more logic based on an event in the table.

### Amazon EventBridge

At the foundation of AWS Lambda is the concept of a trigger. A trigger is an event that causes your lambda function to be invoked or executed. An example of a trigger is a user request - when it hits Amazon API Gateway and the endpoint references a Lambda function, that function is invoked. Another way a Lambda function can be invoked is by a time-based event, similar to a cron job schedule. Amongst several uses of EventBridge, it also keeps track of schedules and triggers functions as necessary based on such schedules.

### Amazon SNS

SNS is short for Simple Notification Service. It is a pub/sub for application-to-application and application-to-person messaging systems. It is serverless hence no need for installation of any kind. You only need to configure how you want to use it. We will be using it to send SMS to the users.

## Bootstrapping the Project

Execute the following command in a console to clone the project and get started.

```bash

git clone https://github.com/charlallison/tenant-service.git

```

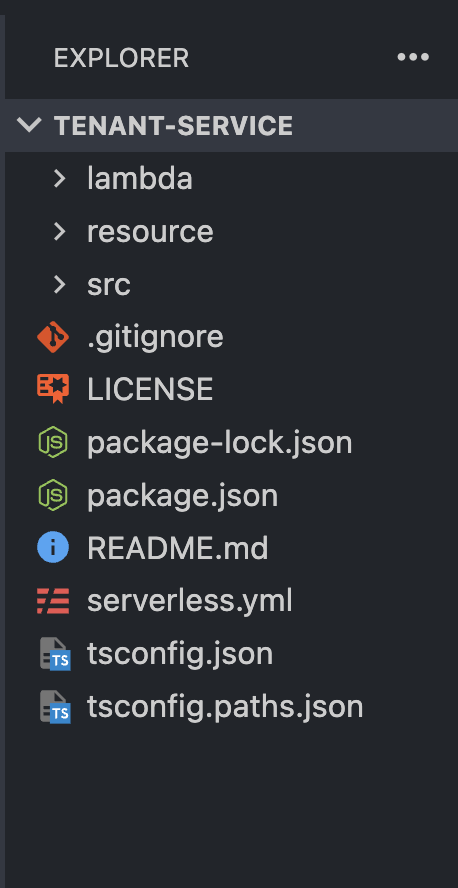

After the clone operation is completed, you should have a directory containing files as rendered in the image below:

### Files, Folder Structure and Project components

* `serverless.yml` - the single most important file in the project. This file contains the configuration that the Serverless Framework uses to interact with and deploy our project to our AWS platform via our account.

* `package.json`, `package-lock.json` - contains information about dependencies for our project. These include the necessary AWS dependencies, TypeScript or Node-project-specific packages used.

* `tsconfig.json`, `tsconfig.paths.json` - contains configurations for the TypeScript compiler - remember, the project is written using TypeScript.

* `src` - short for Source, refers to a folder containing `source code`.

* `lambda` - contains lambda functions and related configurations

* `resource` - contains code (in yml) used to set up our table in DynamoDB and other configurations like streams and indexes.

### The serverless.yml file

I stated that the `serverless.yml` file is the most important file in the project. If that is the case, it is worth reviewing to understand its content.

Some important keys to note:

* `service`: sets the name of the service - tenant-service here

* `package`: packages function individually with their dependencies. However, it excludes dev dependencies.

* `provider`

* `name`: specifies the name of the cloud provider - aws in this case

* `profile`: specifies the profile with which to interact with the AWS platform - the profile contains credentials for authentication and authorization.

* `tracing`: enables distributed tracing for all lambda functions in the project and the API Gateway

* `functions`: specifies lambda function config files. We will see more of this in the next sessions.

* `resources`: used to specify resources used in the project. We only have the DynamoDB table as our resource in this project. Its definition and configuration are located in the `database-table.yml` file with the `TenantServiceTable` key

* `plugins`: you can call these helper function for the serverless.yml file -

* `esbuild`: used to build the functions

* `serverelss-iam-roles-per-functions`: used to indicate that each function can have its permission or role instead of grouping all under one.

* `custom`: All the keys mentioned are defined by the serverless framework but there could be cases where you want a user-defined key. The custom key is where you can define a user-defined key that can be used in the other parts of the file.

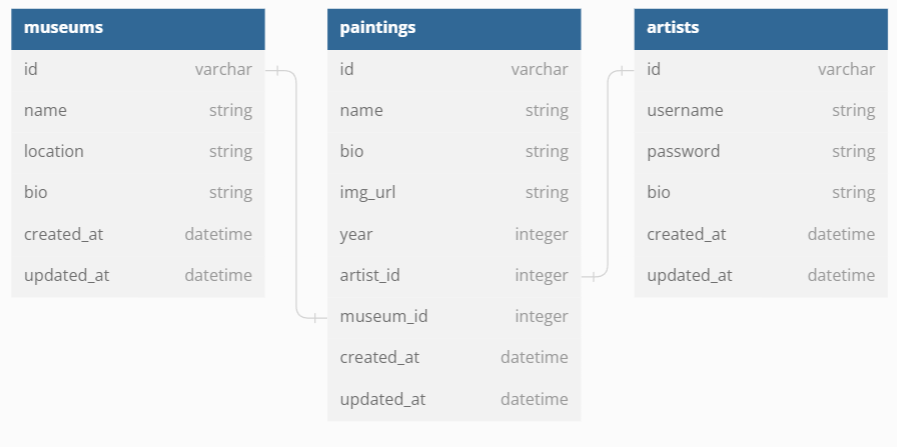

### Entities

Entities are a representation of real-world objects as used in source code relating to the context. Of course, there is a relationship with the database in which they are stored. For the tenant service, we have three entities that we will be working with. They contain fields that hold data and that can be used in operations.

When working with DynamoDB it is a good practice to include fields or attributes that will be used for indexing - more on this later. The following are entities in the tenant service project and their respective fields. It is worth mentioning that the indexing attributes are made up of the application attributes.

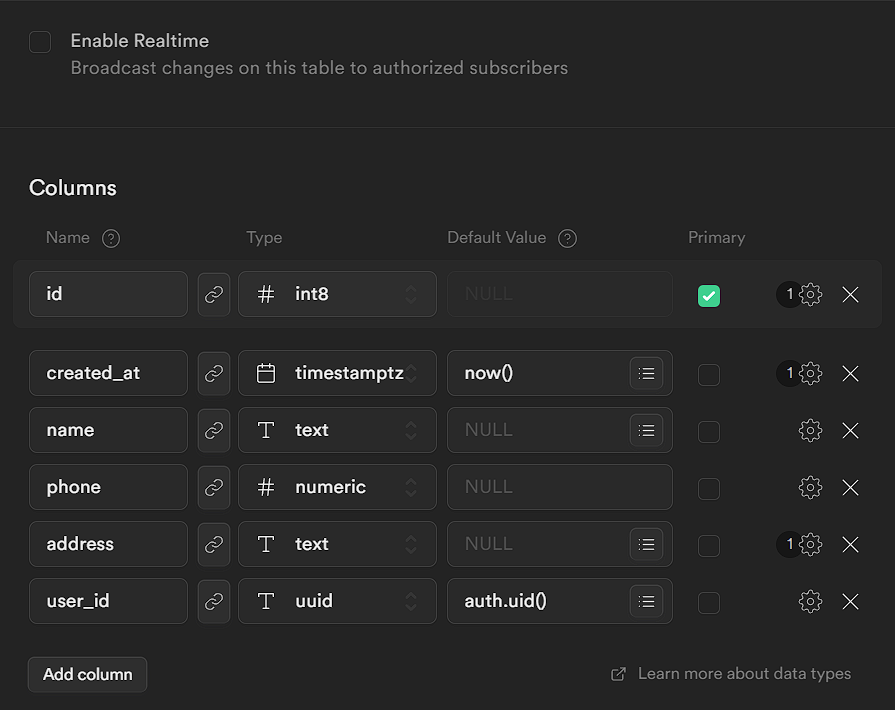

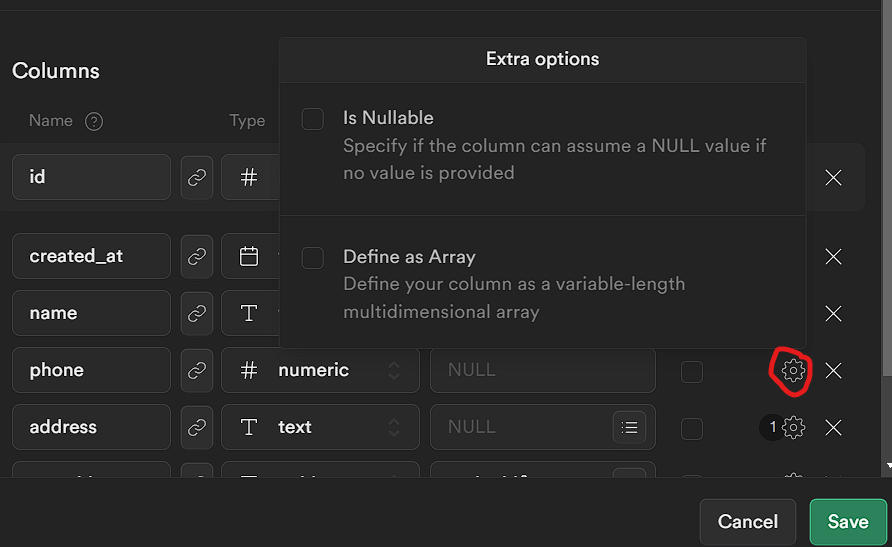

* **Tenant**

* application attributes: `id`, `name`, `phone`, `status`

* primary key: `PK`, `SK`

* index attributes: `GSI1PK`, `GSI2PK`

* **Property**

* application attributes: `id`, `city`, `state`, `address`, `cost`, `rooms`, `status`

* primary key: `PK`, `SK`

* index attributes: `GSI1PK`

* **Payment**

* application attributes: `propertyId`, `tenantId`, `amount`, `paidOn`, `expiresOn`

* primary key: `PK`, `SK`

* index attributes: `GSI1PK`

It is okay at this point to say we will be leveraging a strategy known as **Single table design**. This is a table design strategy where all of your data is saved to one table with no joins — more on this in subsequent parts of this article.

Three other files that contain very useful functions are the files in the `/src/libs` directory. These functions are used across the service and it makes sense to have a single reference point.

* `api-gateway.ts`: has two-fold usage:

* enables schema validation with middy

* formats the response message for API gateway service.

* `aws-client.ts`: contains initialization code for AWS clients

* `lambda.ts`: contains a middy function that chains body-parser, validator and error-handler middlewares.

## Conclusion

We have looked at the project structure, some important files and AWS services used in this project. We now have an idea of its setup. In the next article of this series, we will look at the lambda functions and the necessary configurations needed to them deploy on AWS. Comments are certainly appreciated. If there are questions, I will try my best to answer them. I hope this was informative and thank you for sticking right on till the end. | charlallison | |

1,476,860 | RowySync: A React App which syncs text, images and styles from Rowy Tables. | Hello Everyone! 👋 Recently I came across the GitHub + DEV 2023 Hackathon on the Dev Platform and... | 0 | 2023-05-22T21:00:31 | https://dev.to/jasmin/rowysync-a-react-app-which-syncs-text-images-and-styles-from-rowy-tables-569i | githubhack23, rowy, react, webdev | Hello Everyone! 👋

Recently I came across the **[GitHub + DEV 2023 Hackathon](https://dev.to/devteam/announcing-the-github-dev-2023-hackathon-4ocn)** on the Dev Platform and started planning to participate in the hackathon.😂

Dev community and especially Dev hackathons have played a major role in my learning journey by helping me learning about new tools and frameworks. 😇

While going through the hackathons post I came across the comment made by @harinilabs and looked into [Rowy](https://www.rowy.io/). After looking and learning about Rowy I was amazed by the idea of implementation behind Rowy and low code backend support it provides to build our applications faster.

I started digging up some ideas and came across the idea of implementing **RowySync** which helps to _update the content, images and styles of the text quickly of a React application._

## What I built 💡

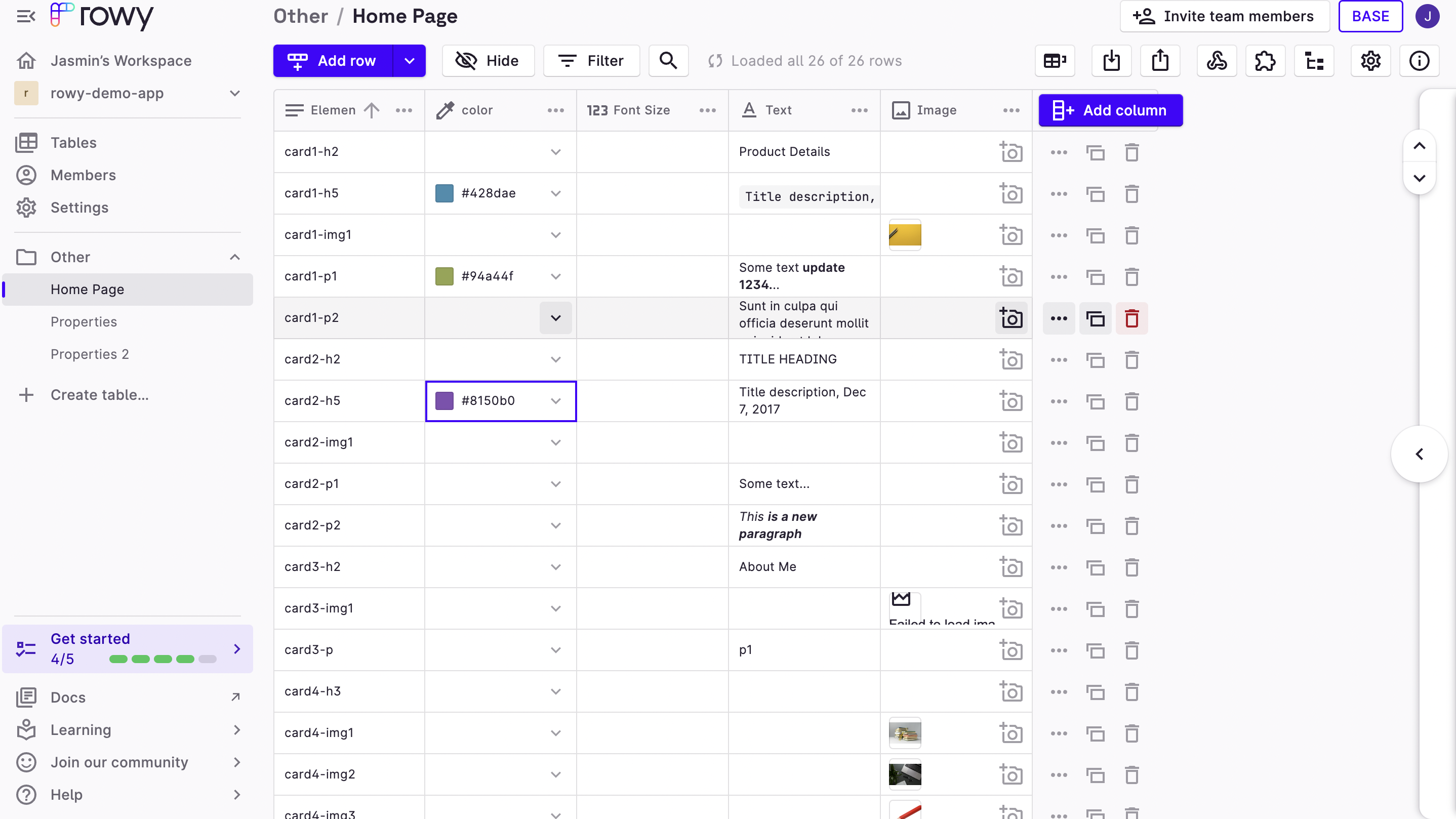

I created a React Application that can be styled/customized using Rowy. Anyone who has editing access in Rowy project can easily update the text, font styles and edit images from Rowy Table.

The simple spreadsheet UI by Rowy provides an easy way to edit and manage the elements on the website.

### Category Submission:

I am submitting this project under **Wacky wildcards** as this app is built and deployed using **Github Codepsaces** and **Github Actions** to make development and collaboration easier and helps us to understand the **integration between Rowy and React Application**.

### App Link

Link to the deployed [App](https://jasmin2895.github.io/rowy-pages-editor/)

### Screenshots

1. Screenshot of the Rowy Table containing website details.

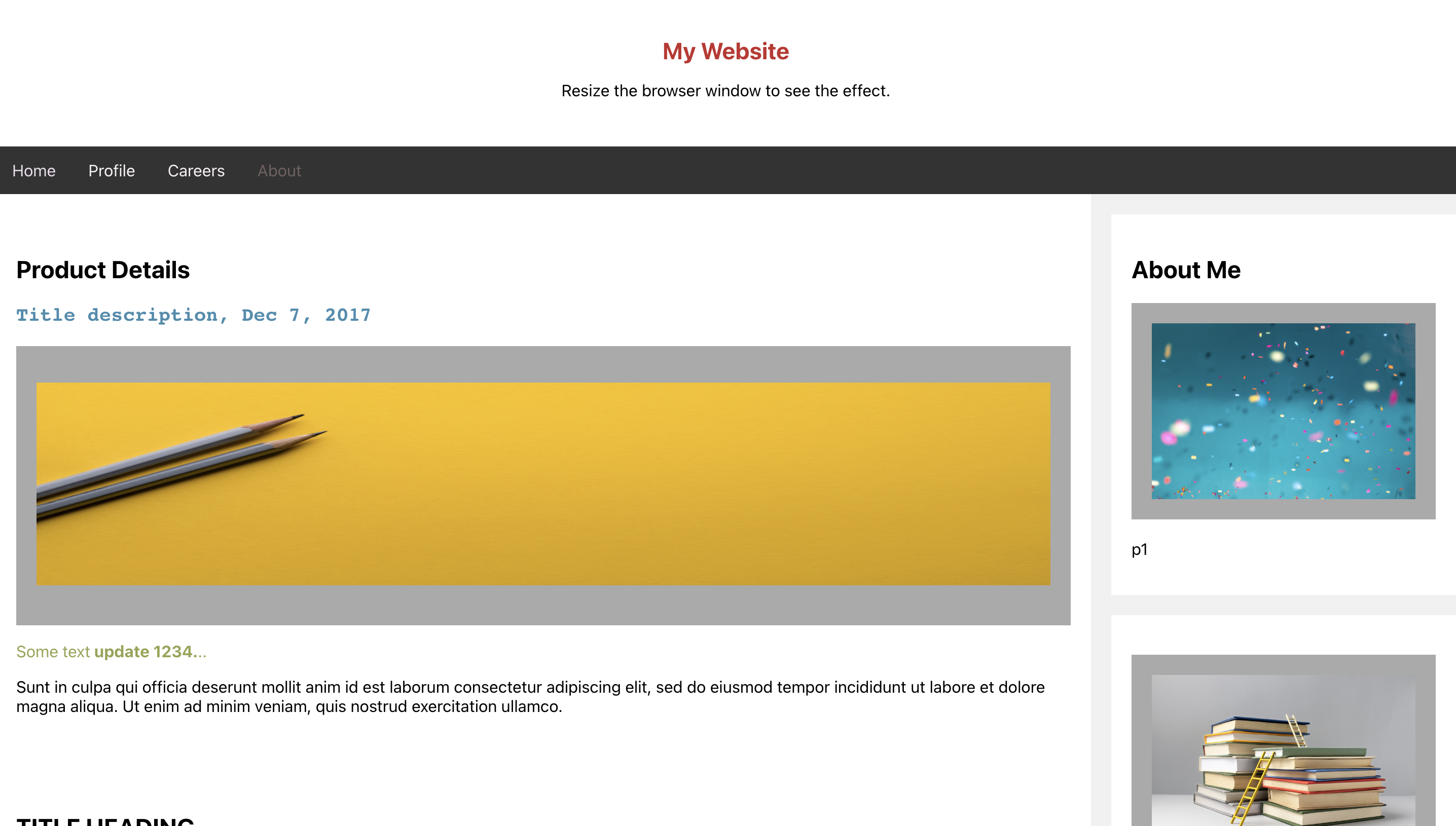

2.Screenshot of the React Application.

A small video demonstrating the working of this app.

{% embed https://youtu.be/lZnxuvTZwHg %}

### Description

Rowy Sync aka rowy web page editor built using **Github Codespaces** and using **Github Actions** provides a simple and intuitive interface for users to modify the content of the website easily. It helps to edit text, apply different font styles, such as bold, italic, and underline, etc and upload images to enhance the visual appeal of their website.

I have used GitHub Actions to build and deploy the app to Github pages.

### Link to Source Code

Github Repo [link](https://github.com/Jasmin2895/rowy-pages-editor)

### Permissive License

RowySync/ Rowy Pages editor is distributed under the MIT License — you can find the license [here](https://github.com/Jasmin2895/rowy-pages-editor/blob/main/LICENSE).

## Background

Well!😅

While working on frontend applications we might have had multiple requests from the stakeholders to update the font and formatting on the website.

This often leads to increase in development and review cycles and more waiting time for the stakeholders and less flexibility to provide more visual customisations.