id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,589,677 | Navigating the shift from customer service to coding | In the rapidly evolving landscape of software development, professionals with diverse backgrounds... | 0 | 2023-09-11T01:49:30 | https://dev.to/wdp/navigating-the-shift-from-customer-service-to-coding-oe6 | webdevpathsuccess, techcareerjourney, opensourcecollaboration |

In the rapidly evolving landscape of software development, professionals with diverse backgrounds bring unique perspectives and valuable skills to the table. Meet [Thorrell Turner](https://www.linkedin.com/in/thorrellt/), a Mobile Software Developer currently dedicated to a healthcare platform that aims to improve accessibility to medical care. Balancing work and a family of five, Thorrell successfully transitioned from a career in Customer Service to the tech industry, leveraging his Bachelor's degree in Computer Science. With his solid foundation and a [portfolio](https://thorrellt.com/) showcasing his expertise in mobile software development, Thorrell is driven to make a meaningful impact in the field.

Explore our interview with Thorrell as we delve into his inspiring story, his experiences in web development, and his significant contributions to the [Web Dev Path project](https://www.webdevpath.co/)—an open-source initiative that empowers aspiring tech professionals, such as designers, developers, and project managers to gain practical, real-life experience. Through his narrative, we aim to inspire others considering a similar path and emphasize the boundless opportunities that come with embracing change and pursuing a fulfilling career in the ever-evolving world of mobile software development.

---

**Can you share your experience, your background in web development, and how it led you to contribute to the Web Dev Path project?**

Absolutely! My journey in web development began when I decided to make a career transition to software development. At the time, I had approximately one year of web development experience under my belt. Most recently, I completed a project utilizing React and Node.

While I was focusing on securing more job interviews to gain industry-worthy experience, a close friend introduced me to the Web Dev Path project. Intrigued by its details, I dove in and immediately knew that I wanted to be part of this initiative. I quickly familiarized myself with Next.js and React and reached out the very next day to offer my contribution.

**As a software developer with full-stack experience, how do you see the Web Dev Path project benefiting aspiring web developers in their learning journey?**

The Web Dev Path project offers aspiring web developers several valuable benefits. Firstly, it provides an opportunity to interact with an existing codebase, which is crucial since professionals will often encounter code written by others in their careers. This project allows individuals to enhance their ability to understand and work with unfamiliar code—an essential skill in the industry.

The project also offers access to more experienced developers, creating an invaluable learning environment. Being part of the Web Dev Path community saved me time and alleviated stress through discussions about solutions, strategies, and providing insights into the logic behind specific decisions.

**Can you describe some of the key features and functionalities of the Web Dev Path project that make it a valuable resource for individuals looking to enter or progress in the field of web development?**

For those lacking professional experience in the tech industry, like myself when I first contributed to the project, Web Dev Path plays a significant role in introducing core aspects of the development lifecycle. Participants have the opportunity to assign themselves tasks with requirements, test code, document changes, and more—replicating real-world scenarios.

From my perspective as a developer starting out, the most impactful aspect of the Web Dev Path project was the experience gained with Git. This ranged from cloning a repository, running it on my local machine, to completing pull requests and merging changes. In fact, during an interview that led to my first job offer, I successfully answered a Git question based on knowledge gained through my involvement with the project.

**How do you believe the Web Dev Path project promotes a sense of community and knowledge sharing among aspiring web developers, considering your personal values of collaboration and learning?**

The Web Dev Path community emanates warmth and a genuine eagerness to assist others. It creates a safe space where individuals feel empowered to ask questions, even if they consider them "dumb." This environment fosters learning and personal growth.

Having mostly pursued my journey in isolation, it was a refreshing change to engage in brainstorming dialogues within the community and discover insightful articles or videos recommended by fellow members. I quickly realized how much I had limited myself by not embracing collaboration earlier.

**What advice would you give to aspiring web developers who are interested in leveraging the Web Dev Path project to enhance their skills and knowledge? How can they make the most out of this open-source resource?**

To those interested in maximizing their benefit from the Web Dev Path project, I encourage you to dive in even before you feel completely ready. Embrace the challenge of stepping out of your comfort zone, as that's where lasting knowledge is truly built.

Additionally, make sure to document and leverage the experience gained from contributing to an open-source project like this. Take notes of your contributions with the intention of showcasing them on your resume or discussing them during interviews.

For those with limited tech experience on their resume, consider using headings like "Technical Experience" and "Non-technical Experience" instead of the traditional "Work Experience." Under the "Technical Experience" section, you can list any technical work experience you may have, as well as your open-source contributions and personal projects. This approach can provide an edge and catch the attention of recruiters, especially if your "Technical Experience" section spans half a page or more.

---

## Conclusion

Thorrell's journey from Customer Service to Tech exemplifies the possibilities that arise from transitioning careers. Through his interview, Thorrell invites aspiring web developers to embrace challenges and leverage resources like open-source projects to enhance their skills and knowledge in the dynamic field of web development.

As we conclude our conversation with Thorrell, we encourage you to explore the transformative impact of the Web Dev Path project. Join the vibrant community of like-minded individuals, embark on your own journey of growth, and unlock new opportunities. Visit the [Web Dev Path website](https://www.webdevpath.co/) to discover how you can contribute and propel your web development career forward.

| marianacaldas |

1,592,016 | Laravel 10 Image Upload Tutorial | A Laravel 10 image upload tutorial is a step-by-step guide that teaches you how to implement image... | 0 | 2023-09-07T06:06:18 | https://dev.to/tutsmake/laravel-10-image-upload-tutorial-10cj | A Laravel 10 image upload tutorial is a step-by-step guide that teaches you how to implement image uploading functionality in a web application using the Laravel PHP framework. Laravel is a popular web application framework that simplifies the development of web applications, and it provides various tools and features to make tasks like image uploading relatively straightforward.

## Laravel 10 Image Upload Tutorial

To upload an image in Laravel 10, you can follow these steps:

- Setting Up Laravel: The tutorial will typically start by guiding you through the process of setting up a Laravel project on your development environment. You'll need PHP, a web server (like Apache or Nginx), Composer, and Laravel installed.

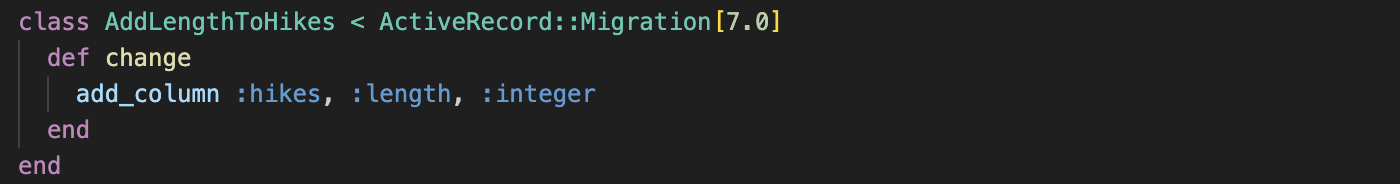

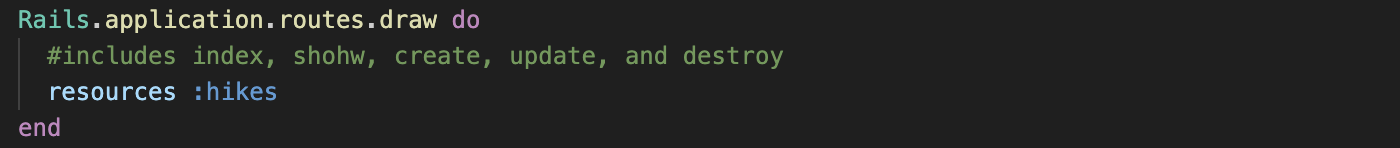

- Creating a Database: If your application requires storing information about the uploaded images, you may need to set up a database table for this purpose. The tutorial might include instructions on creating a migration and running it to create the necessary table.

- Creating a Form: You'll learn how to create an HTML form that allows users to select and upload images. Laravel's Blade templating engine is often used to generate the form.

- Handling Form Submission: The tutorial will show you how to handle the form submission in a Laravel controller. You'll learn how to validate the uploaded file and store it on the server.

- Uploading and Storing Images: This section will cover how to upload the image to a specific folder on the server. Laravel's file handling functions like store() or move() will be used.

- Displaying Uploaded Images: You'll learn how to display the uploaded images on your website, either by serving them directly from the server or by using Laravel's asset management system.

- Validating Images: To ensure that the uploaded files are valid images, the tutorial might include information on image validation, checking file types, and preventing malicious uploads.

- Thumbnail Generation: If needed, the tutorial might cover how to generate thumbnails or resize uploaded images using packages like Intervention Image.

- Security Considerations: You'll learn about security best practices, such as validating and sanitizing user input, protecting against image-based attacks, and securing your image upload routes.

- Authentication and Authorization: Depending on the application, you might learn how to implement authentication and authorization to control who can upload images.

- Error Handling: The tutorial may cover handling errors gracefully, such as displaying error messages to users when image uploads fail.

- Testing: You might be introduced to writing tests to ensure that your image upload functionality works as expected.

- Deployment: The tutorial might include guidance on deploying your Laravel application to a production server, including configuring file storage for uploaded images.

- Best Practices: Throughout the tutorial, best practices for organizing code, structuring your Laravel application, and optimizing image uploads may be emphasized.

- Conclusion: The tutorial will usually conclude with a summary, pointers to additional resources, and suggestions for further improvements or customization.

Read More [Laravel 10 Image Upload Tutorial](https://www.tutsmake.com/laravel-10-image-upload-tutorial/) | tutsmake | |

1,595,365 | Relationship Based Access Control (ReBAC): Using Graphs to Power your Authorization System | Setting the scene: AuthZ vs AuthN Have you ever chatted with a fellow developer about an... | 0 | 2024-01-10T16:20:37 | https://authzed.com/blog/exploring-rebac | ---

title: Relationship Based Access Control (ReBAC): Using Graphs to Power your Authorization System

published: true

date: 2021-03-03 00:00:00 UTC

tags:

canonical_url: https://authzed.com/blog/exploring-rebac

---

## Setting the scene: AuthZ vs AuthN

Have you ever chatted with a fellow developer about an application's permission system and quickly realized you're also talking about its login system? It's rather unfortunate, but these two entirely distinct systems often get merged together simply because their formal names start with the same four letters: **AUTH**.

> Authentication ("authN" or "identity") is who you are

>

> Authorization ("authZ" or "permissions" or "access control") is what you're allowed to do

This is no amateur mistake. Even [major web frameworks](https://docs.djangoproject.com/en/3.1/ref/contrib/auth/) bundle these concepts together out of convenience.

Because so many applications need to support users from inception, identity becomes vital for developers to understand on day one. However, building a robust permission system can usually be deferred until users start demanding it. When requests for [fine grained access control](https://authzed.com/blog/blog/fine-grained-access-control) inevitably start pouring in, they often come alongside feature requests for integrations with various [Identity Providers](https://en.wikipedia.org/wiki/Identity_provider). This makes it seem natural to assume that the permission systems should be direct integrations with the primitives that the Identity Providers expose. However, the _authorization_ functionality that is often found in most _authentication_ systems is generally overly simplistic and the resulting permissions systems that are built on top are usually fragile and error prone.

This is the last thing you want to hear when discussing software that determines whether or not a user has access to sensitive content. If you're thinking "that's only if you work in a domain, like healthcare or government, where you know sophisticated access control is required", you should consider that even in simple use cases you'll likely be iterating on your design, which gives you ample opportunities to introduce bugs that manifest themselves as security vulnerabilities.

## LDAP flips Conway's Law on its head

> Any organization that designs a system (defined broadly) will produce a design whose structure is a copy of the organization's communication structure.

>

> — Melvin E. Conway

Conway's Law describes how the architecture of software is a reflection of the organization of the people who built it. For example, software components that are decoupled, but belong to the same application are often separate only because they were built by separate teams.

When permission systems are based on Identity Providers, this law is entirely reversed.

If an organization of people cannot use any software because everything, for example, only supports a structure that can be modeled by [LDAP Groups](https://ldapwiki.com/wiki/Groups%20Are%20Bad), it forces the people to reorganize into something that can be modeled by LDAP Groups.

This may be viable for organizations like businesses (the reorgs will continue until morale improves!), but obviously not all software can demand that their users reorganize just to use their product.

## Groups, Scopes, Claims don't answer the question

LDAP Groups, OAuth Scopes, SAML Claims, [JWT Claims](https://authzed.com/blog/pitfalls-of-jwt-authorization): all a rose by any other name. These concepts all represent the same kind of data: an _[attribute](https://docs.authzed.com/authz/abac)_ that is stored on a user, indicating something about that user. Attributes are useful: they can provide context about a user (such as an object they can access, or a role that the user has), which makes such a system, in theory, a reasonable solution to determining what a user can access. However, in practice, developers realize that this isn't quite so obvious once they have started working with this data.

Software that relies on these concepts for permissions all struggle in the same core principle: how they choose to interpret and apply significance to the presence of an attribute:

- If a user has both the "admin" and the "banned" attribute, what is the correct action?

- Should admins be able to ignore bans or was the employee just fired from the company?

- What about attributes that imply other attributes?

- Does being "admin" also imply "write" access to this resource?

You can see how this quickly gets out of hand. And once it's been decided how attributes should be properly interpreted, it's time to audit every other application and make sure they interpret the attribute the exact same way or else you might have a security problem!

Now, there is nothing fundamentally wrong with attribute-based permission systems. In fact, mature permission systems are almost always a fusion of ideas from [various models] based on the requirements at hand. In this case, how the attributes from _authentication_ systems manifest themselves when they become the foundation of a permission system is the problem. This is because attributes can only state facts about an identity. But what we really need is the answer to the question "can this subject take this action on this resource?".

## Intro to Relationship-Based Access Control (ReBAC)

While identity is required to ask the question "Does this `subject` have permission to do this `action` to this `object`?", it is not the _only_ variable. Identity sits alongside the _action_ and the _object_. That's all well and good, but how _should_ one arrive at the answer to one of these questions?

A great place to start is to crack open a social network like Facebook. Go review (and probably update) your privacy settings; you'll find a variety of configurations for sharing your content like friends-only or friends-of-friends. When you change that setting, you'll find that it applies instantaneously; there is no migration happening in their backend where thousands of users are granted the attribute to view your content. This is because Facebook is designed to store and query _relationships_ between their users. Facebook is powered by a social _graph_.

If we lean on the idea of modeling relationships, like Facebook does, you can change the question from "Does X have permission to do Y to Z?" into "Does X have the relationship Y with Z?". For example, "Does User #123 have the Write relationship with Document #456?". Recall the example from reading attributes where the application has to decide if the "admin" attribute also implies the "write" attribute. In a relationship-based access control model, this problem disappears because these are just more relationships:

The beauty of a relationship-based access control is that the application doesn't care _how_ the user got to the Write relationship. Maybe they're the user that created the document. Maybe the user had some kind of admin relationship that gives them the ability to do everything. It doesn't matter as long as there is some path through our relationship graph from the user to the Write relationship on the document.

This means that relationships can change (like changing your privacy settings) and applications will not need to have their code rewritten because there was no longer anything left open to interpretation.

## So how does Relationship-Based Access Control (ReBAC) work?

ReBAC, or Relationship-Based Access Control, is a modern approach to access control that focuses on the relationships between users or services, aka subjects, and resources. Instead of relying solely on traditional role-based access control (RBAC) or attribute-based access control (ABAC), ReBAC takes into account the dynamic nature of relationships within an organization. In ReBAC, access control is not assigned based on predefined roles or attributes. Instead, they are determined by the relationships between subjects and resources. This means that access to certain resources can be granted or revoked based on the specific relationships a user has with those resources.

For example, let's say you have a project management application. In a traditional RBAC system, you might assign defined roles such as "project manager" or "team member" to users, and those roles would determine their access to various project resources. However, in a ReBAC system, permissions would be determined by the specific relationships between users and projects, and not the explicit roles assigned to them. That is one the main differences between RBAC and ReBAC.

This relationship-based approach offers several advantages:

It allows you to build fine-grained access control systems. Instead of relying on broad roles, you can define specific relationships and their associated permissions. This leads to a more flexible and scalable permission system that can adapt to changes in the organization.

ReBAC enables a more intuitive and natural way of managing access control. Instead of trying to fit users into predefined roles or attributes, you can simply define the relationships they have with resources. This makes it easier to understand and manage permissions, especially in complex organizational structures. ReBAC promotes a more collaborative and dynamic approach to access control. As relationships between users and resources evolve, access permissions can be easily adjusted to reflect those changes. This ensures that the right people have the right level of access at any given time.

[SpiceDB](https://authzed.com/products/spicedb), our open-source Google Zanzibar-inspired authorization authorization service, provides a solid foundation for implementing ReBAC. It offers a flexible authorization model and powerful query capabilities, allowing you to efficiently manage and enforce access control policies based on relationships. With SpiceDB, you can easily define fine-grained permissions and dynamically update them as relationships evolve. Following its muse, Google Zanzibar, SpiceDB is meant to be distributed which ensures high performance and fault tolerance, making it suitable for large-scale applications.

## Restoring Conway's Law

By realizing that permissions systems are fundamentally coupled to the _relationships_ between people and objects in our software, we can build systems that mimic the way people naturally organize their world to be most effective. This not only empowers the people consuming the software but leads developers to arrive at more robust permission systems that can withstand changes to the organization.

If you're left wondering what a permission system based on relationships looks like in practice, [Authzed](https://authzed.com) is exactly that! We're currently working hands-on with customers to help them understand and migrate to a better permission system. If adding or refactoring permissions to support new functionality in your app is next on your roadmap, [reach out to us](https://authzed.com/contact).

## Additional Reading

If you’re interested in learning more about Authorization and Google Zanzibar, we recommend reading the following posts:

- [Understanding Google Zanzibar: A Comprehensive Overview](https://authzed.com/blog/what-is-google-zanzibar)

- [A Primer on Modern Enterprise Authorization (AuthZ) Systems](https://authzed.com/blog/blog/authz-primer)

- [Fine-Grained Access Control: Can You Go Too Fine?](https://authzed.com/blog/blog/fine-grained-access-control)

- [Pitfalls of JWT Authorization](https://authzed.com/blog/pitfalls-of-jwt-authorization) | authzd | |

1,595,519 | Exploring the basics of Hono, a fast and lightweight alternative to Express. | Express Express has historically been a popular choice for constructing RESTful APIs and... | 0 | 2023-09-10T12:59:06 | https://dev.to/ntstarling/exploring-the-basics-of-hono-a-fast-and-lightweight-alternative-to-express-3me4 | webdev, javascript, hono, programming |

### Express

Express has historically been a popular choice for constructing RESTful APIs and application backends. However, with the emergence of newer tools and technologies, Express's dominant position in these areas is facing competition.

### Hono

Hono is such an alternative to express which is an ultrafast web framework which is capable of creating RESTful APIs and backends for your applications. According to a benchmark, It is about 12 times faster than express.

### What is Hono?

According to the developers,

Hono - [炎] means flame🔥 in Japanese - is a small, simple, and ultrafast web framework for the Edges. It works on any JavaScript runtime: Cloudflare Workers, Fastly Compute@Edge, Deno, Bun, Vercel, Netlify, Lagon, AWS Lambda, Lambda@Edge, and Node.js.

### How to install Hono

To create a new Hono project you can use the following command in the terminal

`npm create hono@latest <project-name>`

A new folder will be created based on the name you provided.

### Install dependencies

Now to install the dependencies, navigate to the folder of your project and run `npm i` in the terminal.

Congratulations, now you have setup a Hono project that could be used as a backend for your frontend frameworks such as React,Solid,Svelte or Vue.

### Understanding the project structure

The Hono project structure is simple and easy to understand. Most of the time, we will be working with the **Src** folder most of the time as it contains our code. We can also configure our typescript optionns in the `tsconfig.json` file.

### Basic Hello world application using Hono

In the `index.ts` file, we will delete all of the code and start from scratch.

At first, we import the Hono package which we have installed.

`import { Hono } from 'hono'`

Initialize the library

`const app = new Hono()`

Now with using this 'app' variable, we can create various routes for our application.

We will now create our index route '/' using Hono

`app.get('/', (c) => c.text('Congrats!'))`

We can also send data back in JSON format like a traditional api.

`

`app.get('/users', (c) => c.json({user_1:"Bob",user_2:"Mary"}))`

Now we will export the app so that Hono can run it

`export default app`

The final code should look something like this

````typescript

import { Hono } from 'hono'

const app = new Hono()

app.get('/', (c) => c.text('Congrats!'))

app.get('/users', (c) => c.json({user_1:"Bob",user_2:"Mary"}))

export default app

````

### Creating dynamic routes using Hono

We might need dynamic routes for our app. Dynamic routes allow us to build routes that take a dynamic value. For example when you see a video of youtube, instead of creating a new route for every video, they use a dynamic route. To create a dynamic route in Hono, we can just add a slug like

`/user/:id`

Here we can use this example

````javascript

app.get('/user/:id',(c) => c.text("user id is " + c.req.param('id')))

````

The final code should look like this

````javascript

import { Hono } from 'hono'

const app = new Hono()

app.get('/', (c) => c.text('Congrats!'))

app.get('/users', (c) => c.json({user_1:"Bob",user_2:"Mary"}))

app.get('/user/:id',(c) => c.text(`user id is ${c.req.param('id')}`))

export default app

### Conclusion

Hono makes it easy and simple to build RESTful APIis for our frontend frameworks such as solid,svelte,vue and react. The performance is also great. Overall it is giving tough competition to Express

````

| ntstarling |

1,597,030 | JavaScript Functions(Part 3): Your Magic Spells | In our last magical seminar, we delved deep into the intricate world of JavaScript functions,... | 0 | 2023-09-11T17:57:18 | https://dev.to/init_chandan/javascript-functionspart-3-your-magic-spells-2i7c | javascript, webdev, programming, beginners | In our last magical seminar, we delved deep into the intricate world of JavaScript functions, learning the spells and chants that shape our world. The spell book was opened, and secrets were revealed. But like every great wizard knows, the depth of magic is vast, and our journey has just begun.

Today, we venture further into this enchanted forest of functions, unearthing more potent spells and understanding the legacy of ancient code mages.

### **🪄 Closures: The Enchanted Lockets**

In the magical world of JavaScript, a closure is like an enchanted locket, preserving memories (variables) from disappearing even after the spell (function) has been cast.

Imagine casting a spell to create a magical barrier. This barrier remembers the strength at which you cast it and can adjust itself accordingly in the future.

```jsx

function magicalBarrier(strength) {

let barrierStrength = strength;

return function() {

barrierStrength++;

console.log("Barrier's current strength: " + barrierStrength);

}

}

let enhanceBarrier = magicalBarrier(5);

enhanceBarrier(); // Outputs: "Barrier's current strength: 6"

```

The inner function, even when invoked outside its containing function, still has access to **`barrierStrength`**.

### **🌌 Higher-Order Functions: Conjuring Magic with Magic**

In our realm, some spells can manipulate other spells to create new enchantments. These are known as higher-order functions.

For instance, let's take the ancient spell **`map`**, which can transform every item in an array.

```jsx

const numbers = [1, 2, 3, 4];

const doubledNumbers = numbers.map(function(number) {

return number * 2;

});

console.log(doubledNumbers); // Outputs: [2, 4, 6, 8]

```

The **`map`** spell takes another spell (function) and applies it to each item, giving us a new potion (array).

### **🧙♂️ Recursive Functions: The Magic that Calls Upon Itself**

Some spells are so mighty that they call upon themselves for added power. These are our recursive functions.

Imagine a mirror that reflects another mirror, which then reflects another, creating an infinite loop of reflections.

```jsx

function magicalMirror(reflections) {

if(reflections === 0) return;

console.log("Another reflection appears!");

return magicalMirror(reflections - 1);

}

magicalMirror(3); // Outputs the message three times

```

Use recursive spells with caution, young mage. For if not controlled, they can spiral out of hand!

### **🌠 Conclusion: The Ever-Expanding Grimoire**

The world of JavaScript functions is as expansive as the universe of magic itself. With every page turned in our spell book, we uncover more wonders, more nuances, and greater power.

As we close this chapter of our grimoire, remember: The true magic isn't just in knowing the spells, but in understanding their essence and wielding them with wisdom.

Till our next magical meet, practice these spells, and always remember the first rule of magic - respect the craft!

### **Sorcerer’s Assignments: Test Your Magic**

🔮 **1. The Enchanted Forest of Closures:**

**Objective:** Venture deep into the world of closures and craft a spell that creates magical creatures. Each creature remembers the number of times it has been summoned.

```jsx

// Your task: Define the spell createCreature

// Hint: Use closures to make the creature remember its summon count.

let summonDragon = createCreature('Dragon');

summonDragon(); // Should say: "A Dragon has been summoned! Total times summoned: 1"

summonDragon(); // Should say: "A Dragon has been summoned! Total times summoned: 2"

```

🌌 **2. Mystic Transformations with Higher-Order Spells:**

**Objective:** Use the ancient spell **`filter`** to find all the magical artifacts that are older than a thousand years from an array.

```jsx

const artifacts = [

{ name: 'Enchanted Staff', age: 500 },

{ name: 'Mystic Crystal Ball', age: 1500 },

{ name: 'Ageless Tome', age: 3000 },

{ name: 'Newbie Wand', age: 50 }

];

// Your task: Use the 'filter' spell to find artifacts older than a thousand years.

```

🧙♂️ **3. Mirrors of Recursion:**

**Objective:** Craft a recursive spell that counts down from a number and at the end shouts "Blast off!"

```jsx

javascriptCopy code

// Your task: Define the spell countdown

// Hint: Don't forget to include the base case to stop the recursion.

countdown(5);

// Should say:

// "5..."

// "4..."

// "3..."

// "2..."

// "1..."

// "Blast off!"

```

---

**Remember, young mage:** Crafting spells is not just about getting them right, but understanding the essence and rhythm within them. Once you've attempted these tasks, share your incantations in the comments. Let's see who's ready for the next level in the world of magic! | init_chandan |

1,597,627 | How IVF Treatment in Indore Can Fulfill Your Parenthood Dreams | Introduction Parenthood is a cherished dream for many couples, but infertility can pose significant... | 0 | 2023-09-12T10:21:31 | https://dev.to/sipu/how-ivf-treatment-in-indore-can-fulfill-your-parenthood-dreams-n0h | ivfindore, fertilityindore, ivfclinicindore, indoreivfspecialists | **Introduction**

Parenthood is a cherished dream for many couples, but infertility can pose significant challenges on the path to achieving it. In recent years, advancements in medical science have offered hope and solutions to couples struggling with infertility. In this article, we will explore how seeking treatment at the [best IVF clinic in Indore](https://www.motherhoodivf.com/best-fertility-ivf-center-in-indore/) can help fulfill your parenthood dreams.

**Understanding IVF**

In vitro fertilization (IVF) is a widely recognized assisted reproductive technology that has enabled countless couples to overcome fertility issues and achieve their dream of having a child. IVF involves the fertilization of an egg with sperm outside the body, creating an embryo that is then implanted into the uterus.

**Why Choose Indore?**

Indore, a vibrant city in Madhya Pradesh, has emerged as a hub for fertility treatments, with several renowned IVF clinics providing world-class care. Couples from all over the country are choosing Indore as their destination for IVF treatment, and for good reasons.

**Expertise:** The best IVF clinics in Indore boast highly skilled and experienced specialists who are well-versed in the latest advancements in reproductive medicine. They understand that every case is unique and offer personalized treatment plans tailored to individual needs.

**Cutting-edge Technology:** These clinics are equipped with state-of-the-art technology and modern laboratories, ensuring the highest standards of care and success rates in IVF treatments.

**Affordability:** IVF treatments in Indore are not only of high quality but also affordable when compared to many other major cities in India. This makes top-notch fertility care accessible to a wider range of couples.

**Emotional Support:** Going through fertility treatments can be emotionally challenging. The best IVF clinics in Indore prioritize patient support and counseling, helping couples navigate the emotional roller coaster that often accompanies infertility treatments.

**Success Stories:** Many couples have achieved their dreams of parenthood in Indore, thanks to the exceptional services provided by the city's IVF clinics. These success stories stand as a testament to the quality of care available in the region.

**The Process of IVF Treatment**

**IVF treatment involves several key steps:**

**Ovulation Stimulation:** The woman is given medications to stimulate the ovaries to produce multiple eggs.

**Egg Retrieval:** Mature eggs are retrieved from the ovaries through a minor surgical procedure.

**Fertilization:** The eggs are fertilized with sperm in a laboratory setting to create embryos.

**Embryo Transfer:** One or more healthy embryos are transferred into the woman's uterus.

**Monitoring and Support:** Throughout the process, patients are closely monitored, and support is provided to enhance the chances of a successful pregnancy.

**Conclusion**

Parenthood is a dream that should not be hindered by fertility challenges. Indore, with its best IVF clinics, offers hope to couples by providing world-class fertility treatments at affordable prices. If you are facing infertility issues and dreaming of parenthood, consider exploring the options available at the best IVF clinics in Indore. With their expertise, advanced technology, and compassionate care, they can help turn your parenthood dreams into a beautiful reality. | sipu |

1,598,791 | Paranoiac nature of School Investment in the UAE | The current fee framework for schools in the UAE has made it difficult to attract investors to open... | 0 | 2023-09-13T08:58:16 | https://dev.to/marthagodsay/paranoiac-nature-of-school-investment-in-the-uae-alo | The current fee framework for schools in the UAE has made it difficult to attract investors to open new educational institutions. While it has been a challenge to attract private funding, the government has introduced a new system. However, the new fees structure will help schools increase their incomes. This is particularly important if the schools are high quality. The government wants to promote UAE as a desirable place to live and work. But the UAE has not always been an easy place to invest.

In order to establish a school in UAE, prospective investors must first get land or an existing building. Then, they should acquire permits. The Knowledge and Human Development Authority, which regulates educational institutions in the country, can help investors with legal advice. Aside from the government, the KHDA also assists investors with the process of obtaining the necessary permits. It is also important to consider the legal and financial aspects of school investment.

Aside from education, there are other benefits associated with [School investment in UAE](https://www.d3consultants.net/services-investors/). It can be a low-risk investment option that will help investors build a strong portfolio. Allied Investment Partners (AIP), a wealth management firm, offers investment solutions for school owners. The firm's partners include Shell and AECOM. The company also has a successful track record of helping investors find the best investment opportunities. In addition to the UAE, Allied Investment Partners helps its members find interesting investments in the region.

Allied Investment Partners (AIP) has been an education investment company in the region. The fund's Atlas Real Estate platform, which is accredited by the CAA, offers educational properties with long-term lease agreements with operators. These long-term agreements offer stability and a predictable income stream for investors. In addition to investing in schools, AIP has helped its clients find investment opportunities in the region, such as acquiring an existing school or two.

A successful investment in a school in the UAE will be a great way to create a valuable asset. The process will start by identifying an existing school or acquiring land. Once the property is identified, it is important to obtain all the necessary permits. The Knowledge and Human Development Authority will provide legal advice for the investor in the UAE. It is important to note that this is a complex region, so a thorough understanding of the laws and regulations will help it to find the best opportunities.

There are several reasons why an education investment is essential for the economy in the UAE. It builds human capital and community stakeholders in the country. It creates the next generation of leaders. The country also has a growing population, which needs educated employees. A strong education system will help the community grow. This will be the cornerstone of the nation. There are many benefits to a school, and the investment in UAE can be beneficial to the community.

The UAE has an innovative education policy that is committed to human capital development. The government is committing to investing in the education sector, and it has done so through a series of transactions. These transactions are concentrated in the higher education and K-12 sector. The goal is to create a successful educational enterprise. In Dubai, the Education Policy has been implemented over the last few years. The government has a clear vision for the future of the UAE. The country is committed to fostering a well-educated workforce.

Investment in education is important to the country's future. In the UAE, the federal budget has allocated Dh9 billion to education. According to Van Hout, the investment in education creates human capital and community stakeholders. It also helps in creating the next generation of leaders. If you are looking for a school in the UAE, there are many opportunities available. The market for school education in the region is huge. The government is investing in schools in the UAE to ensure the success of educational facilities.

The government is committed to enhancing the quality of education in the UAE. Currently, there are several [school investment in KSA](https://www.d3consultants.net/services-investors/) opportunities in the region. The Government has also committed to increasing the number of schools in the region. This means that the investment is good for the country's economic development. It is a good time to buy educational assets. This is an opportunity to improve the standard of education. The United Arab Emirates has a high quality of education.

| marthagodsay | |

1,600,286 | Announcement!!! | We have just released our "generative" software delivery engine source on GitHub... | 0 | 2023-09-14T13:28:44 | https://dev.to/trustacks/announcement-3e5m | devops, opensource, cicd, github | We have just released our "generative" software delivery engine source on GitHub -https://lnkd.in/guGVRJ3F.

More docs and features are coming in the near future. Progress on the project can be tracked here -> https://lnkd.in/g2pk26mP

We are also rolling out early access to the hosted version of the engine where action plans can be centralized and executed from any #cicd tool such as #gitlab ci or #github actions. Join the waitlist at https://www.trustacks.com/.

If you are interested in contributing in any way join us on the catalyst channel on our Discord(https://lnkd.in/giMQh2HX) to get involved in the next iteration.

Let us know if you have any questions and stay on the lookout for new updates.

| trustacks |

1,600,314 | Amazon API Gateway HTTP Errors | Amazon API Gateway is a fully managed service that helps developers to create and deploy scalable... | 0 | 2023-09-14T14:15:06 | https://awsmag.com/amazon-api-gateway-http-errors/ | serverless, aws, cloudcomputing, awsapigateway | ---

title: Amazon API Gateway HTTP Errors

published: true

date: 2022-01-24 06:07:00 UTC

tags: serverless,aws,cloudcomputing,awsapigateway

canonical_url: https://awsmag.com/amazon-api-gateway-http-errors/

---

[Amazon API Gateway](https://awsmag.com/introduction-to-api-gateway/) is a fully managed service that helps developers to create and deploy scalable APIs on AWS. These APIs act as an entry point for the applications to connect and get access to data, perform business logic or access any other AWS service.

Amazon API Gateway also returns some HTTP Errors and we will be discussing some of the errors in this blog post and what they mean when returned from the Amazon API Gateway. Usually, the errors returned are in the range of 4xx or 5xx and examples for the same are 400 or 500. As a rule of thumb errors in the range of 400–499 are usually returned if there are problems with the client or you are breaking some of the rules defined by the Amazon API Gateway.

The errors in the range of 500–599 mean the server is not working problem or you have an issue with the network or the issues in the infrastructure which runs your server.

### 400 Error: Bad Request

The HTTP Status 400: Bad Request is the broadest error and depending on what AWS service API Gateway is integrating with, this error means many things. Some of the reasons for this error can be an invalid JSON, wrong data types and required fields etc.

### 403 Error: Access Denied

The HTTP Status 403: Forbidden means there are some permission issues. In AWS, this can be an issue with a wrong IAM role configuration. If your service uses an auth mechanism like AWS Cognito or a custom authorizer, this can be a permission issue because of this, then this error code will be returned.

### 404 Error: Not Found

The HTTP Status 404: This means the resource is not available or the URL does not exist. You can check the URL if it is right or not or have been implemented right to make sure that you are not making any mistake.

### 409 Error: Conflict

The HTTP Status 409: indicates that your request is trying to do something that conflicts with the current state of the target resource. It is most likely to occur in response to a PUT request.

### 429 Error: Too Many Requests

There are two cases when you can receive 429 errors from API Gateway.

The first one for HTTP Status 429: “Too Many Request”. This usually happens when the downstream resource is not able to handle the number of requests coming in.

For example, if you have a Lambda which gets triggered via an API Gateway and there is a reserved concurrency assigned to it let’s say 20 then 21 requests will same time probably give you this error.

This can also happen if your API keys are not allowing more than x number of requests concurrently. If the number of requests exceeds the number even if the downstream resource can handle it, the API Gateway will give this error.

### 429 Error: Limit Exceeded

The second one for HTTP Status 429 is “Limit Exceeded Exception,” which means that you have exceeded the allowed number of requests. This happens when the request is metered using an API key in API Gateway. The usage plan is associated with the key and the plan decides how many requests are allowed in a month by that particular resource.

### 500 Error: Internal Server Error

HTTP Status 500: It is the most generic HTTP error you will see. If the downstream service is Lambda, this error can mean an issue or a bug in the code of the function.

This can also happen if the status code mapping in the API is wrong. The default mapping if the error mapping is not configured properly, then the status code returned to the client is HTTP Status code 500.

### 502 Error: Bad Gateway

HTTP Status 502: this usually happens when the downstream service is not able to provide a response that can be mapped easily with the API Gateway. Sometimes the downstream service is not ready and cannot return a response.

Amazon API Gateway has a hard limit of 30 seconds timeouts. If the downstream service is not able to respond in this time frame, the API Gateway returns the HTTP Status 503.

### Conclusion:

The above-mentioned codes are some of the common errors which you may encounter while working with the Amazon API Gateway. If you would like to be familiar with the other things related to Amazon API Gateway, we have a collection that lists all the articles related to it. You can find it [here](https://awsmag.com/tag/amazon-api-gateway/).

_Originally published at_ [_https://awsmag.com_](https://awsmag.com/amazon-api-gateway-http-errors/) _on January 24, 2022._ | singhs020 |

1,600,758 | Curso de No Code, Algoritmos, IA e Mais Opções Gratuitas | No cenário atual de capacitação, o programa QualiFacti se destaca ao lançar uma série de cursos... | 0 | 2023-09-15T00:16:10 | https://guiadeti.com.br/curso-no-code-algoritmos-ia-gratis/ | cursogratuito, algoritmos, chatgpt, cursosgratuitos | ---

title: Curso de No Code, Algoritmos, IA e Mais Opções Gratuitas

published: true

date: 2023-09-15 00:07:46 UTC

tags: CursoGratuito,algoritmos,chatgpt,cursosgratuitos

canonical_url: https://guiadeti.com.br/curso-no-code-algoritmos-ia-gratis/

---

No cenário atual de capacitação, o programa QualiFacti se destaca ao lançar uma série de cursos online gratuitos. Estes cursos, centrados no desenvolvimento de competências técnicas e comportamentais, abordam tópicos de vanguarda, como No Code, Inteligência Artificial e [Algoritmos](https://guiadeti.com.br/guia-tags/cursos-de-algoritmos/).

O objetivo principal é preparar os profissionais para enfrentar as demandas dinâmicas do mercado de trabalho.

E, embora o foco possa ser predominantemente em Tecnologias da Informação e Comunicação (TICs), o conteúdo é aplicável a uma variedade de áreas, demonstrando a interdisciplinaridade do conhecimento atual.

A segunda fase do QualiFacti, iniciada em junho de 2023, promete ser mais enriquecedora, se estendendo até março de 2024.

Ao longo deste período, os participantes terão acesso a uma seleção criteriosa de cursos, todos meticulosamente projetados para atender às tendências emergentes. Neste mundo em constante mudança, esta é uma chance imperdível de se atualizar e se destacar!

## Conteúdo

<nav><ul>

<li>

<a href="#cursos-quali-facti">Cursos QualiFacti</a><ul>

<li><a href="#uma-nova-fase-de-oportunidades">Uma Nova Fase de Oportunidades </a></li>

<li><a href="#capacitacao-gratuita-e-de-qualidade">Capacitação Gratuita e de Qualidade </a></li>

<li><a href="#sobre-o-quali-facti">Sobre o QualiFacti </a></li>

<li><a href="#uma-metodologia-que-faz-a-diferenca">Uma Metodologia que Faz a Diferença </a></li>

<li>

<a href="#cursos-com-inscricoes-abertas">Cursos com Inscrições Abertas</a><ul>

<li><a href="#ia-algoritmos-de-otimizacao-em-python">IA: Algoritmos de Otimização em Python </a></li>

<li><a href="#no-code-e-low-code-na-robotica">No Code e Low Code na robótica</a></li>

<li><a href="#resolucao-de-problemas">Resolução de Problemas</a></li>

<li><a href="#automacao-de-atendimentos-com-chat-gpt">Automação de Atendimentos com ChatGPT </a></li>

<li><a href="#aplicacao-mobile-para-automacao-residencial">Aplicação Mobile para Automação Residencial</a></li>

<li><a href="#io-t-e-lo-ra">IoT e LoRa</a></li>

<li><a href="#transicao-de-carreira-como-migrar-para-a-area-da-tecnologia">Transição de Carreira: Como Migrar para a Área da Tecnologia</a></li>

</ul>

</li>

<li><a href="#certifique-se-e-destaque-se">Certifique-se e Destaque-se </a></li>

</ul>

</li>

<li>

<a href="#no-code">No Code</a><ul>

<li><a href="#por-que-o-no-code-esta-ganhando-tracao">Por que o No Code está ganhando tração? </a></li>

<li><a href="#ferramentas-populares-no-universo-no-code">Ferramentas Populares no Universo No Code </a></li>

<li><a href="#o-impacto-no-mercado-de-trabalho">O Impacto no Mercado de Trabalho </a></li>

<li><a href="#desafios-e-consideracoes">Desafios e Considerações </a></li>

<li><a href="#o-futuro-da-construcao-digital">O Futuro da Construção Digital </a></li>

</ul>

</li>

<li>

<a href="#quali-facti">QualiFacti</a><ul>

<li><a href="#pilares-tematicos-da-plataforma">Pilares Temáticos da Plataforma </a></li>

<li><a href="#fases-e-cronograma">Fases e Cronograma </a></li>

<li><a href="#metodologia-e-abordagem">Metodologia e Abordagem </a></li>

<li><a href="#certificacao-e-destaque-no-mercado">Certificação e Destaque no Mercado </a></li>

<li><a href="#a-missao-da-quali-facti">A Missão da QualiFacti </a></li>

</ul>

</li>

<li><a href="#inscreva-se-no-quali-facti-e-transforme-sua-carreira">Inscreva-se no QualiFacti e transforme sua carreira!</a></li>

<li><a href="#compartilhe-o-quali-facti-e-ajude-alguem-a-evoluir-profissionalmente">Compartilhe o QualiFacti e ajude alguém a evoluir profissionalmente!</a></li>

</ul></nav>

## Cursos QualiFacti

Os cursos do QualiFacti trazem à tona tópicos cruciais da atualidade, como No Code, Inteligência Artificial e Algoritmos.

_Página da QualiFacti_

Ao se imergir nesses conteúdos, os participantes se preparam para atender às demandas contemporâneas e vindouras do mercado, não apenas no setor de Tecnologias da Informação e Comunicação (TICs), mas em múltiplas áreas.

### Uma Nova Fase de Oportunidades

Atualmente, o QualiFacti adentra sua segunda fase, iniciada em junho de 2023 e com previsão de encerramento em março de 2024. Nesse interstício, a iniciativa se destaca por seu portfólio de cursos gratuitos, meticulosamente arquitetados para destacar aspectos essenciais no desenvolvimento de habilidades técnicas e comportamentais.

### Capacitação Gratuita e de Qualidade

Não deixe escapar esta chance única de alavancar sua carreira. A primeira fase do QualiFacti foi um estrondoso sucesso, validado pelo alto grau de satisfação de inúmeros certificados.

Agora, na sequência, o programa se expande, disponibilizando novos cursos acessíveis a todos no Brasil. Gratuitos e online, estes cursos incorporam modalidades síncronas e assíncronas, sob a orientação de instrutores renomados no setor e no meio acadêmico.

### Sobre o QualiFacti

Originário da Facti, uma Instituição Científica e Tecnológica (ICT) de vanguarda, o QualiFacti nasce da expertise em fomentar soluções tecnológicas e capacitação em [Tecnologia da Informação](https://guiadeti.com.br/guia-tags/cursos-de-tecnologia-da-informacao/) e Comunicação.

A iniciativa é fruto do Programa MCTI Futuro, um projeto do Ministério da Ciência, Tecnologia e Inovação, com fundos provenientes do PPI da Lei de Informática, e coordenação da Softex.

### Uma Metodologia que Faz a Diferença

O diferencial do QualiFacti reside em sua abordagem pedagógica. A metodologia empregada garante que os alunos não só assimilem, mas apliquem de forma ágil e prática o conhecimento adquirido, favorecendo uma experiência de aprendizado imersiva e colaborativa.

### Cursos com Inscrições Abertas

#### IA: Algoritmos de Otimização em Python

Ementa:

- Introdução à Inteligência Artificial e à importância dos processos de otimização;

- Pesquisa randômica;

- Método Hill Climb;

- _Simulated annealing_;

- Algoritmos Genéticos;

- Estudo de caso: Representação do problema da viagem em grupo.

#### No Code e Low Code na robótica

Ementa:

- O que é No Code e Low Code;

- Autômatos e o surgimento da robótica;

- Automação de processos industriais com robôs;

- [O que é robótica](https://guiadeti.com.br/guia-tags/cursos-de-robotica/) humanoide;

- Cases de robótica humanoide;

- Desenvolvimento de uma aplicação com no code na robótica industrial e outra com Low Code na robótica humanoide.

#### Resolução de Problemas

Ementa:

- Metodologias para a resolução de problemas;

- Problematização;

- Pensamento Sistêmico;

- Decomposição de Problemas;

- Estratégias e Métodos;

- Ferramentas;

- Resolução de problemas na prática.

#### Automação de Atendimentos com ChatGPT

Ementa:

- Introdução a chatbots;

- Tipos de chatbots disponíveis no mercado;

- Introdução ao Chat GPT como Inteligência Artificial;

- Construção de chatbots com estrutura de árvore de navegação com a ferramenta da Take Blip;

- Conexão com canais de atendimento como Facebook Messenger e Telegram;

- Estudo de caso: automatizar o atendimento com respostas fornecidas pela Inteligência Artificial do Chat GPT.

#### Aplicação Mobile para Automação Residencial

Ementa:

- [Introdução à Internet das Coisas.](https://guiadeti.com.br/guia-tags/cursos-de-internet-das-coisas/)

- Noções básicas da Plataforma de Prototipagem Arduino.

- Prototipagem com Tinkercad.

- Introdução do App Inventor.

- Estudo de Caso: Aplicação Mobile para Automação Residencial.

#### IoT e LoRa

Ementa:

- IoT; LoRa: O que é? Por que usar?

- Definição e vantagens do uso de LoRa;

- Aplicação de LoRa em projetos de Internet das Coisas;

- Diferenças entre LoRa e LoRaWan;

- Definição da LoRa Alliance;

- Estudo de caso.

#### Transição de Carreira: Como Migrar para a Área da Tecnologia

Ementa:

- Introdução à área de tecnologia;

- Adaptação a novas culturas;

- Profissões na área de tecnologia;

- Empreendedorismo digital;

- Marketing pessoal: Criação de uma marca pessoal forte e aprimoramento da presença online e offline;

- Networking: Desenvolvimento de uma rede de contatos e estratégias para cultivar relacionamentos profissionais.

### Certifique-se e Destaque-se

Ao concluir sua trajetória no QualiFacti e atender aos critérios estabelecidos, você se tornará merecedor de um certificado, um aval de sua dedicação e competência, abrindo portas para novos horizontes profissionais.

## No Code

No Code” refere-se a uma abordagem de desenvolvimento de software que permite a criação de aplicativos, sites e automações sem a necessidade de escrever código. Usando ferramentas visuais, os usuários montam funcionalidades como se estivessem montando blocos em um jogo de construção.

### Por que o No Code está ganhando tração?

A crescente demanda por soluções digitais em um mundo cada vez mais conectado supera a oferta de desenvolvedores disponíveis. O No Code permite que pessoas sem conhecimento técnico criem soluções rápidas e eficientes, democratizando o [desenvolvimento de software](https://guiadeti.com.br/guia-tags/cursos-de-software-livre/) e acelerando a inovação.

### Ferramentas Populares no Universo No Code

Existem várias ferramentas que possibilitam essa revolução, incluindo Webflow, Bubble, Zapier e muitas outras. Elas cobrem desde a criação de sites e aplicativos até a automação de tarefas e processos de negócios.

### O Impacto no Mercado de Trabalho

Com a popularização do No Code, novos papéis surgem no mercado. Designers, gestores de projeto e outros profissionais agora têm a chance de se tornar “construtores”, criando soluções sem depender totalmente de equipes de desenvolvimento.

### Desafios e Considerações

Enquanto o No Code traz inúmeras vantagens, ele não substitui todas as necessidades de desenvolvimento tradicional. Há questões de escalabilidade, personalização e complexidade que ainda podem exigir abordagens baseadas em código. Além disso, é essencial escolher a ferramenta certa para cada projeto.

### O Futuro da Construção Digital

O movimento No Code representa um passo significativo em direção a um mundo onde mais pessoas podem criar, inovar e resolver problemas por meio da tecnologia. Ele não só acelera o desenvolvimento de soluções, mas também fomenta uma era de inclusão digital mais ampla.

<iframe title="No Code x Low Code x Programação: A verdade que ninguém te contou ainda" width="1200" height="675" src="https://www.youtube.com/embed/YNfvv0VEk9Q?feature=oembed" frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share" allowfullscreen></iframe>

_Créditos: Canal Renato Asse – Sem Codar_

## QualiFacti

A QualiFacti surge como uma iniciativa focada na oferta de cursos voltados ao desenvolvimento de competências técnicas e comportamentais essenciais no cenário tecnológico atual e futuro.

### Pilares Temáticos da Plataforma

Os cursos da QualiFacti abordam tópicos emergentes e de alta relevância, como No Code, Inteligência Artificial e Algoritmos. Essas áreas são fundamentais para atender às crescentes demandas do mercado, não apenas dentro do setor de Tecnologias da Informação e Comunicação, mas também em campos interdisciplinares.

### Fases e Cronograma

Atualmente, a QualiFacti encontra-se em sua segunda fase, que iniciou em junho de 2023 e se estenderá até março de 2024. Neste período, os inscritos terão acesso a uma série de cursos gratuitos, meticulosamente desenhados para atender às exigências do mercado.

### Metodologia e Abordagem

O diferencial da QualiFacti reside na sua abordagem de ensino. Os cursos são desenvolvidos para permitir uma aplicação prática imediata dos conhecimentos adquiridos. Combinando aulas ao vivo com atividades assíncronas, os alunos têm oportunidades ímpares de interação e aprendizado.

### Certificação e Destaque no Mercado

Ao concluir os cursos, os participantes recebem um certificado de capacitação. Esse reconhecimento não apenas valida o aprendizado, mas também serve como um distintivo no perfil profissional dos alunos, destacando-os em um mercado competitivo.

### A Missão da QualiFacti

A QualiFacti não é apenas uma plataforma de cursos. Ela é um compromisso com o futuro da [educação tecnológica](https://guiadeti.com.br/guia-tags/cursos-de-tecnologia/), buscando preparar profissionais para os desafios do amanhã e emponderando-os para criar soluções inovadoras em suas respectivas áreas.

## Inscreva-se no QualiFacti e transforme sua carreira!

As [inscrições para os cursos da QualiFacti](https://qualifacti.facti.com.br/home) devem ser realizadas no site da QualiFacti.

## Compartilhe o QualiFacti e ajude alguém a evoluir profissionalmente!

Gostou do conteúdo sobre os cursos gratuitos? Então compartilhe com a galera!

O post [Curso de No Code, Algoritmos, IA e Mais Opções Gratuitas](https://guiadeti.com.br/curso-no-code-algoritmos-ia-gratis/) apareceu primeiro em [Guia de TI](https://guiadeti.com.br). | guiadeti |

1,601,407 | Dependency Injection in Flutter: implementing the Dependency Inversion principle | Photo by Vardan Papikyan on Unsplash If you are looking to get your code to the next level, you have... | 0 | 2023-09-15T14:30:40 | https://dev.to/iriber/dependency-injection-in-flutter-implementing-the-dependency-inversion-principle-1e0p | solidprinciples, dependencyinversion, flutter, cleancoding | Photo by <a href="https://unsplash.com/es/@varpap?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Vardan Papikyan</a> on <a href="https://unsplash.com/es/fotos/DnXqvmS0eXM?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a>

If you are looking to get your code to the next level, you have to know about dependency injection.

## Introduction

When we write code we follow the SOLID guidelines. One of those principles (“D”) take care of Dependency Inversion. Let's see what it means.

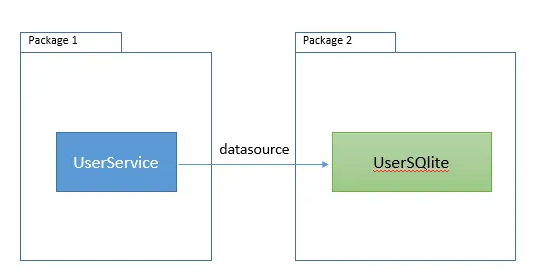

Suppose you have a service class called “UserService” that uses a datasource called UserSqlite to get users from a SQLite database:

So Package 1 depends on Package 2. If you have this relation on your code, Mr. SOLID will go to your desktop and say: “Ey, do NOT couple your code with other libraries, what are you thinking about?”.

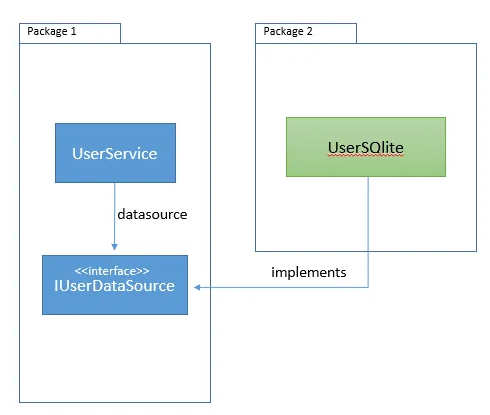

What about this:

What we have already done was change the dependency direction. Now, Package 2 depends on Package 1. The right direction of dependency makes our code reusable, and eliminates cyclic dependencies.

### Dependency Injection

The dependency injection concept will help us to drive the right implementation of the interface IUserDataSource to our UserService.

UserService wouldn’t be concerned about the IUserDataSource implementation, it just uses it.

## How can you implement Dependency Injection in Flutter?

I use a package called get_it, you can find it here:

[GetIt](https://pub.dev/packages/get_it?source=post_page-----41094bd6b38c--------------------------------)

I like to wrap the third party packages, because maybe tomorrow I want to change it to another that I like more than this. It is a premise that I have, you can skip that step, but it is one more thing that helps me to have my code the most descouple than I can.

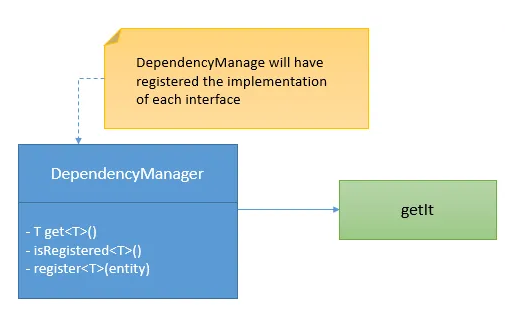

So, I created a class called DependencyManager which wraps the get_it library:

### Inject dependencies

In your app config class or your app initializer you have to inject the dependencies you want to have. In the example of the second picture, we have to inject the implementation of IUserDataSource:

```

void injectDependencies() {

try {

/// Get dependency manager

DependencyManager manager = DependencyManager();

/// Register UserSQLite as IUserDataSource

manager.registerFactory<IUserDataSource>(() => UserSQLite());

} catch (e2) {

debugPrint("Manage depency injections error");

}

}

```

And then we must use this manager everywhere we need to use the user data source implementation. Let’s see:

```

class UserService {

/// User datasource.

final IUserDataSource datasource= DependencyManager().get<IUserDataSource>();

....

}

```

DependencyManager manages the implementations of our interface. It registers and returns concrete classes.

### DependencyManager

To have the example simple I just showed you how to register a factory for our user datasource, but the dependency manager also could call constructors that requires some parameters, could be a little more complex.

My DependencyManager looks like this:

```

import 'package:get_it/get_it.dart';

class DependencyManager {

/// GetIt instance.

final getIt = GetIt.instance;

/// I use singleton to create this manager.

DependencyManager._internal();

static final DependencyManager _singleton = DependencyManager._internal();

factory DependencyManager() {

return _singleton;

}

T get<T extends Object>(){

return getIt.get<T>();

}

T getWithParam<T extends Object, P1>(dynamic p){

return getIt.get<T>(param1: p);

}

T getWith2Param<T extends Object, P1, P2>(dynamic p1, dynamic p2){

return getIt.get<T>(param1: p1, param2: p2);

}

bool isRegistered<T extends Object>(){

return getIt.isRegistered<T>();

}

void registerLazySingleton<T extends Object>(FactoryFunc<T> factoryFunc){

getIt.registerLazySingleton<T>(factoryFunc );

}

void registerFactory<T extends Object>(FactoryFunc<T> factoryFunc){

getIt.registerFactory<T>(factoryFunc );

}

void registerFactoryParam<T extends Object, P1, P2>(

FactoryFuncParam<T, P1, P2> factoryFunc, {

String? instanceName,

}){

getIt.registerFactoryParam<T,P1,P2>(factoryFunc);

}

}

```

## Conclusion

We talked about a **SOLID** principle, the **dependency inversion**, we introduced to **dependency injection** and saw how to get implemented it in Flutter.

Now you have the concept, and you know how to apply it. You will drive your code to the next level:

1. Descoupled

2. Testeable

3. Easy to mantain

Thanks for reading, Clap if you like it!

Let me know your comments below.

| iriber |

1,601,982 | Power of Javascript Array Methods 🔍🛠️ | 🎲 "Roll the Dice: Unveiling the Power of Array Methods in JavaScript" It's a... | 24,642 | 2023-09-29T11:12:35 | https://dev.to/shivams1007/power-of-javascript-array-methods-3fbp | javascript, webdev, typescript, react | ### 🎲 "Roll the Dice: Unveiling the Power of Array Methods in JavaScript"

#### It's a Array's World, We're Just Coding in It!

Hey, you array-spiring JavaScripters! 👋 Tired of `for` loops that go on and on like your grandma’s stories? Ready to tap into the untapped magic of array methods? Then welcome to the ultimate guide to mastering JavaScript array methods, where you'll find more sugar than in a candy store. 🍬

---

##### 1️⃣ "The Map is not the Territory: The `map()` Method"

Remember Dora the Explorer? Map, map, map! 🗺️ Just like Dora's magical map, JavaScript's `map()` method helps you transform your array without changing the original one.

Example:

```javascript

const numbers = [1, 2, 3];

const doubled = numbers.map(x => x * 2);

// Output: doubled = [2, 4, 6]

```

---

##### 2️⃣ "Filter It Out, Keep it Clean: The `filter()` Method"

No, this isn't about your Instagram filter. The `filter()` method helps you keep only what you want in an array.

Example:

```javascript

const arr = [1, 2, 3, 4, 5];

const even = arr.filter(x => x % 2 === 0);

// Output: even = [2, 4]

```

---

##### 3️⃣ "Finding Nemo with `find()`: Where’s Waldo? Nah, Where’s My Element?"

Want to find something quickly? Use `find()` and stop playing hide-and-seek with your elements!

Example:

```javascript

const animals = ['cat', 'dog', 'fish'];

const findFish = animals.find(x => x === 'fish');

// Output: findFish = 'fish'

```

---

##### 4️⃣ "Slice and Dice: The `slice()` Method"

Who needs a knife when you've got `slice()`? Cut your array into pieces, just like your favorite birthday cake! 🍰

Example:

```javascript

const fruits = ['apple', 'banana', 'cherry'];

const myFruits = fruits.slice(0, 2);

// Output: myFruits = ['apple', 'banana']

```

---

##### 5️⃣ "Reduce, Reuse, `reduce()`: The Marie Kondo of Arrays"

Just like Marie Kondo, `reduce()` helps you tidy up an array into a single value that sparks joy! 🌟

Example:

```javascript

const values = [1, 2, 3, 4];

const sum = values.reduce((acc, val) => acc + val, 0);

// Output: sum = 10

```

---

##### 6️⃣ "It’s Not Stalking, It’s `indexOf()`: Find Your Crush in an Array"

Looking for that special something or someone? `indexOf()` helps you find the position of your 'crush element' in an array.

Example:

```javascript

const crushes = ['apple', 'banana', 'cherry'];

const position = crushes.indexOf('banana');

// Output: position = 1

```

---

##### 7️⃣ "Going Backwards with `reverse()`: Benjamin Button Your Array"

Who said time travel isn't possible? With `reverse()`, you can turn back time on your arrays!

Example:

```javascript

const time = [1, 2, 3];

const reversedTime = time.reverse();

// Output: reversedTime = [3, 2, 1]

```

---

##### 8️⃣ "Shuffle the Deck with `sort()`: Your Array, Your Rules"

Last but not least, let's talk about `sort()`. Put some order into your chaotic array life!

Example:

```javascript

const chaos = [3, 1, 2];

const order = chaos.sort();

// Output: order = [1, 2, 3]

```

#### Conclusion: Arrays Are Fun, After All!

There you have it! You're now an array whisperer. 🤠 You've learned how to manipulate, transform, and basically have a party with arrays. If you have any favorite array methods or tricks up your sleeve, drop a comment below and share the wealth!

Go forth, and array like you've never arrayed before! 🎉

---

Feel free to comment, share, and let me know what you think! See you next time! ✌️

| shivams1007 |

1,602,513 | Writing Clean Code: Best Practices and Principles | Introduction Writing clean code is a fundamental skill for every software developer. Clean... | 0 | 2023-09-16T21:12:29 | https://dev.to/favourmark05/writing-clean-code-best-practices-and-principles-3amh | webdev, javascript, programming, productivity | ## Introduction

Writing clean code is a fundamental skill for every software developer. Clean code not only makes your codebase more maintainable and easier to understand but also fosters collaboration among team members. In this comprehensive article, we will explore what clean code is, why it's important, and provide you with a set of best practices and principles to help you write clean and maintainable code.

## What is Clean Code?

Clean code is code that is easy to read, easy to understand, and easy to modify. It is code that is devoid of unnecessary complexity, redundancy, and confusion. Clean code follows a set of conventions and best practices that make it more consistent, making it easier for multiple developers to work on the same project seamlessly.

## Why is Clean Code Important?

1. **Readability**: Clean code is easy to read, which means that anyone - including your future self - can understand it quickly. This reduces the time required to grasp the code's functionality, leading to faster development and debugging.

2. **Maintainability**: Code is read more often than it is written. When you write clean code, it becomes easier to maintain and extend the application over time. This is crucial in the software development lifecycle, where projects often evolve and grow.

3. **Collaboration**: Clean code encourages collaboration. When your code is clean and well-organized, other team members can work on it effectively. This makes it easier to divide tasks and work on different parts of the codebase simultaneously.

4. **Bug Reduction**: Clean code reduces the likelihood of introducing bugs. Code that is difficult to understand is more prone to errors during modifications or enhancements.

5. **Efficiency**: Clean code is efficient code. It typically runs faster and uses fewer resources because it avoids unnecessary operations and complexity.

Now that we understand why clean code is important, let's delve into some best practices and principles to help you write clean code.

## Best Practices and Principles for Writing Clean Code

1 . **Meaningful Variable and Function Names**

Use descriptive names for variables, functions, classes, and other identifiers. A well-chosen name can convey the purpose of the entity, making the code more understandable. Avoid single-letter variable names or cryptic abbreviations.

```

# Bad variable name

x = 5

# Good variable name

total_score = 5

```

2 . **Keep Functions and Methods Short**

Functions and methods should be concise and focused on a single task. The Single Responsibility Principle (SRP) states that a function should do one thing and do it well. Shorter functions are easier to understand, test, and maintain. If a function becomes too long or complex, consider breaking it down into smaller, more manageable functions.

```

// Long and complex function

function processUserData(user) {

// Many lines of code...

}

// Refactored into smaller functions

function validateUserInput(userInput) {

// Validation logic...

}

function saveUserToDatabase(user) {

// Database operation...

}

```

3 . **Comments and Documentation**

Use comments sparingly, and when you do, make them meaningful. Code should be self-explanatory whenever possible. Documentation, such as inline comments and README files, helps other developers understand your code's purpose and usage. Document complex algorithms, non-trivial decisions, and public APIs.

```

# Bad comment

x = x + 1 # Increment x

# Good comment

# Calculate the total score by incrementing x

total_score = x + 1

```

4 . **Consistent Formatting and Indentation**

Adhere to a consistent coding style and indentation. This makes the codebase look clean and organized. Most programming languages have community-accepted coding standards (e.g., PEP 8 for Python, eslint for JavaScript) that you should follow. Consistency also applies to naming conventions, spacing, and code structure.

```

// Inconsistent formatting

if(condition){

doSomething();

} else {

doSomethingElse();

}

// Consistent formatting

if (condition) {

doSomething();

} else {

doSomethingElse();

}

```

5 . **DRY (Don't Repeat Yourself) Principle**

Avoid duplicating code. Repeated code is harder to maintain and increases the risk of inconsistencies. Extract common functionality into functions, methods, or classes to promote code reusability. When you need to make a change, you'll only need to do it in one place.

Suppose you're working on a JavaScript application that calculates the total price of items in a shopping cart. Initially, you have two separate functions for calculating the price of each item type: one for calculating the price of a book and another for calculating the price of a laptop. Here's the initial code:

```

function calculateBookPrice(quantity, price) {

return quantity * price;

}

function calculateLaptopPrice(quantity, price) {

return quantity * price;

}

```

While these functions work, they violate the DRY principle because the logic for calculating the total price is repeated for different item types. If you have more item types to calculate, you'll end up duplicating this logic. To follow the DRY principle and improve code maintainability, you can refactor the code as follows:

```

function calculateItemPrice(quantity, price) {

return quantity * price;

}

const bookQuantity = 3;

const bookPrice = 25;

const laptopQuantity = 2;

const laptopPrice = 800;

const bookTotalPrice = calculateItemPrice(bookQuantity, bookPrice);

const laptopTotalPrice = calculateItemPrice(laptopQuantity, laptopPrice);

```

In this refactored code, we have a single calculateItemPrice function that calculates the total price for any item type based on the quantity and price provided as arguments. This adheres to the DRY principle because the calculation logic is no longer duplicated.

Now, you can easily calculate the total price for books, laptops, or any other item type by calling calculateItemPrice with the appropriate quantity and price values. This approach promotes code reusability, readability, and maintainability while reducing the risk of errors caused by duplicated code.

6 . **Use Meaningful Whitespace**

Properly format your code with spaces and line breaks. This enhances readability. Use whitespace to separate logical sections of your code. Well-formatted code is easier to scan, reducing the cognitive load on readers.

```

// Poor use of whitespace

const sum=function(a,b){return a+b;}

// Improved use of whitespace

const sum = function (a, b) {

return a + b;

}

```

7 . **Error Handling**

Handle errors gracefully. Use appropriate try-catch blocks or error-handling mechanisms in your code. This prevents unexpected crashes and provides valuable information for debugging. Don't suppress errors or simply log them without a proper response.

```

// Inadequate error handling

try {

result = divide(x, y);

} catch (error) {

console.error("An error occurred");

}

// Proper error handling

try {

result = divide(x, y);

} catch (error) {

if (error instanceof ZeroDivisionError) {

console.error("Division by zero error:", error.message);

} else if (error instanceof ValueError) {

console.error("Invalid input:", error.message);

} else {

console.error("An unexpected error occurred:", error.message);

}

}

```

8 . **Testing**