id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,651,072 | Will Google's $2 Billion Investment In Anthropic Be A Game-changer? | Read the full news article here. Like what you read? Head to the TechDogs homepage to find the... | 0 | 2023-10-30T16:03:52 | https://dev.to/techdogs_inc/unpublished-video-562i-3cd3 | Read the full news article [here](https://www.techdogs.com/tech-news/td-newsdesk/will-googles-2-billion-investment-in-anthropic-be-a-game-changer).

Like what you read? Head to the [TechDogs ](https://www.techdogs.com/)homepage to find the latest tech content infused with drama and entertainment. We've got [Articles](https://www.techdogs.com/resource/td-articles), White Papers, Case Studies, Reports, [Videos](https://www.youtube.com/channel/UCZ0lgIRSVraU14O9bp3bMCQ), [News ](https://www.techdogs.com/resource/tech-news)and [Events & Webinars](https://www.techdogs.com/resource/events) - the complete lot to help you Know Your World of Technology. | td_inc | |

1,651,115 | Carefully choosing sounds | Carefully choosing sounds for my games, only during moments like these do I feel like myself... | 0 | 2023-10-30T17:02:42 | https://dev.to/tonicatfealidae/carefully-choosing-sounds-508a | Carefully choosing sounds for my games, only during moments like these do I feel like myself ^^

Although they are just small projects.

I love them with my heart.

They are literally my children. | tonicatfealidae | |

1,651,120 | Day 1: 100 Days Of Code Challenge | Today I finished the Small Music Player Android App that I was working on. It has 5 buttons to play... | 0 | 2023-10-30T17:09:51 | https://dev.to/harshaart/day-1-100-days-of-code-challenge-5458 | Today I finished the Small Music Player Android App that I was working on. It has 5 buttons to play up to 5 songs, pause, reset and stop buttons too.

> I want to make a Spotify clone in the future.

| harshaart | |

1,651,124 | Orientation media query | Check out this Pen I made! | 0 | 2023-10-30T17:15:40 | https://dev.to/glagol/orientation-media-query-5cpk | codepen | Check out this Pen I made!

{% codepen https://codepen.io/web-dot-dev/pen/qBXVowV %} | glagol |

1,652,407 | Day 78: Web Storage | 🌐 What is the Web Storage API? The Web Storage API comprises two mechanisms: localStorage... | 23,670 | 2023-10-31T17:01:27 | https://dev.to/dhrn/day-78-web-storage-d6b | webdev, frontend, 100daysofcode, html |

### 🌐 What is the Web Storage API?

The Web Storage API comprises two mechanisms: `localStorage` and `sessionStorage`. These are ways to store key-value pairs in a web browser.

- `localStorage`: Offers persistent storage that persists even after the browser is closed and reopened. Data stored here remains until explicitly removed by the application or the user.

- `sessionStorage`: Provides session-based storage where data persists for the duration of the page session. Once the user closes the tab or browser, the data is cleared.

### Basic Usage:

```javascript

// Storing data in localStorage

localStorage.setItem('key', 'value');

// Retrieving data from localStorage

const value = localStorage.getItem('key');

// Removing data from localStorage

localStorage.removeItem('key');

```

### Advantages of Web Storage API:

1. **Simple Interface**: Offers an easy-to-use key-value pair storage system.

2. **Large Storage Capacity**: Allows for more significant data storage compared to cookies.

3. **Better Security**: Data stored in the Web Storage API is not transmitted to the server with every HTTP request, enhancing security.

### Tips

1. **Data Serialization**: Objects can be stored by serializing them using `JSON.stringify` and deserialized using `JSON.parse`.

2. **Error Handling**: Always handle exceptions when working with Web Storage to prevent the application from crashing due to storage limitations or security issues.

3. **Clear Outdated Data**: Regularly clear unused or outdated data to optimize storage and maintain efficiency.

### Best Practices:

1. **Encrypt Sensitive Data**: If storing sensitive information, consider encrypting it before saving it in the Web Storage.

2. **Graceful Degradation**: Always ensure your application gracefully handles scenarios where Web Storage may not be available or accessible.

3. **Consistent Data Structure**: Maintain a consistent structure for stored data to ease retrieval and manipulation.

### Comparing Web Storage Options:

Here's a comparison between `localStorage` and `sessionStorage`:

| Feature | `localStorage` | `sessionStorage` |

|-----------------|----------------------------------|------------------------------|

| **Scope** | Origin-specific | Tab or window-specific |

| **Persistence** | Survives browser close | Cleared at the end of session |

| **Storage** | Larger storage capacity | Limited storage capacity |

| **Accessibility**| Accessible from any window/tab | Accessible only within the tab |

| **Security** | Relatively secure | More secure |

| **Expiration** | Does not expire unless cleared | Cleared when the session ends |

| **Usage** | Long-term data storage | Short-term data storage |

| dhrn |

1,651,263 | A beginner's guide to building a Retrieval Augmented Generation (RAG) application from scratch | Retrieval Augmented Generation, or RAG, is all the rage these days because it introduces some serious... | 0 | 2023-10-31T22:25:42 | https://learnbybuilding.ai/tutorials/rag-from-scratch | ai, rag, beginners, nlp | Retrieval Augmented Generation, or RAG, is all the rage these days because it introduces some serious capabilities to large language models like OpenAI's GPT-4 - and that's the ability to use and leverage their own data.

This post will teach you the fundamental intuition behind RAG while providing a simple tutorial to help you get started.

## The problem with learning in a fast moving space

There's so much noise in the AI space and in particular about RAG. Vendors are trying to overcomplicate it. They're trying to inject their tools, their ecosystems, their vision.

It's making RAG way more complicated than it needs to be.

This tutorial is designed to help beginners learn how to build RAG applications from scratch.

No fluff, no (ok, minimal) jargon, no libraries, just a simple step by step RAG application.

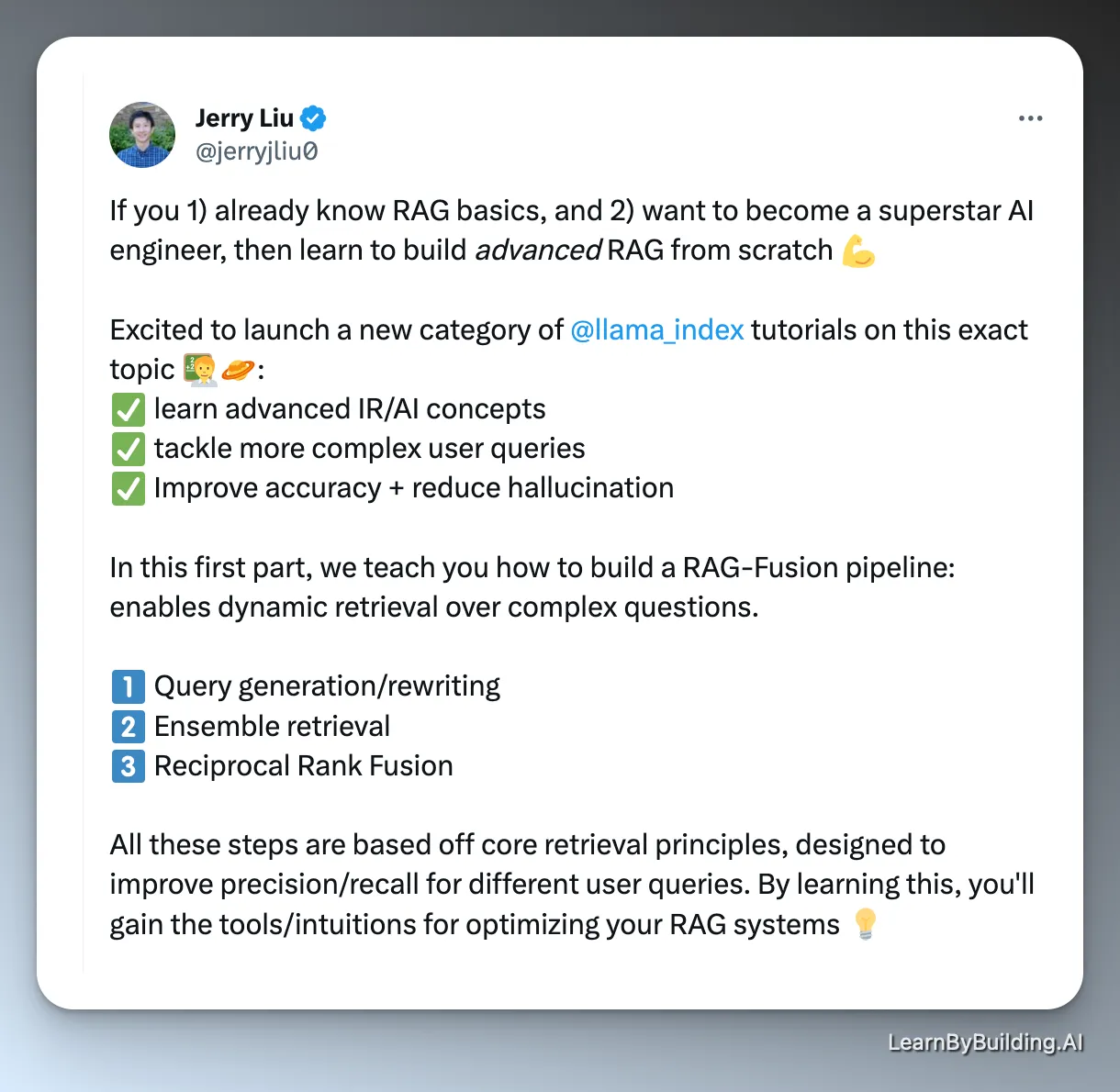

[Jerry from LlamaIndex advocates for building things from scratch to really understand the pieces](https://twitter.com/jerryjliu0/status/1716122650836439478). Once you do, using a library like LlamaIndex makes more sense.

Build from scratch to learn, then build with libraries to scale.

Let's get started!

## Introducing our concept: Retrieval Augmented Generation

You may or may not have heard of Retrieval Augmented Generation or RAG.

Here's the definition from [the blog post introducing the concept from Facebook](https://ai.meta.com/blog/retrieval-augmented-generation-streamlining-the-creation-of-intelligent-natural-language-processing-models/):

> Building a model that researches and contextualizes is more challenging, but it's essential for future advancements. We recently made substantial progress in this realm with our Retrieval Augmented Generation (RAG) architecture, an end-to-end differentiable model that combines an information retrieval component (Facebook AI’s dense-passage retrieval system) with a seq2seq generator (our Bidirectional and Auto-Regressive Transformers [BART] model). RAG can be fine-tuned on knowledge-intensive downstream tasks to achieve state-of-the-art results compared with even the largest pretrained seq2seq language models. And unlike these pretrained models, RAG’s internal knowledge can be easily altered or even supplemented on the fly, enabling researchers and engineers to control what RAG knows and doesn’t know without wasting time or compute power retraining the entire model.

Wow, that's a mouthful.

In simplifying the technique for beginners, we can state that the essence of RAG involves adding your own data (via a retrieval tool) to the prompt that you pass into a large language model. As a result, you get an output.

That gives you several benefits:

1. You can include facts in the prompt to help the LLM avoid hallucinations

2. You can (manually) refer to sources of truth when responding to a user query, helping to double check any potential issues.

3. You can leverage data that the LLM might not have been trained on.

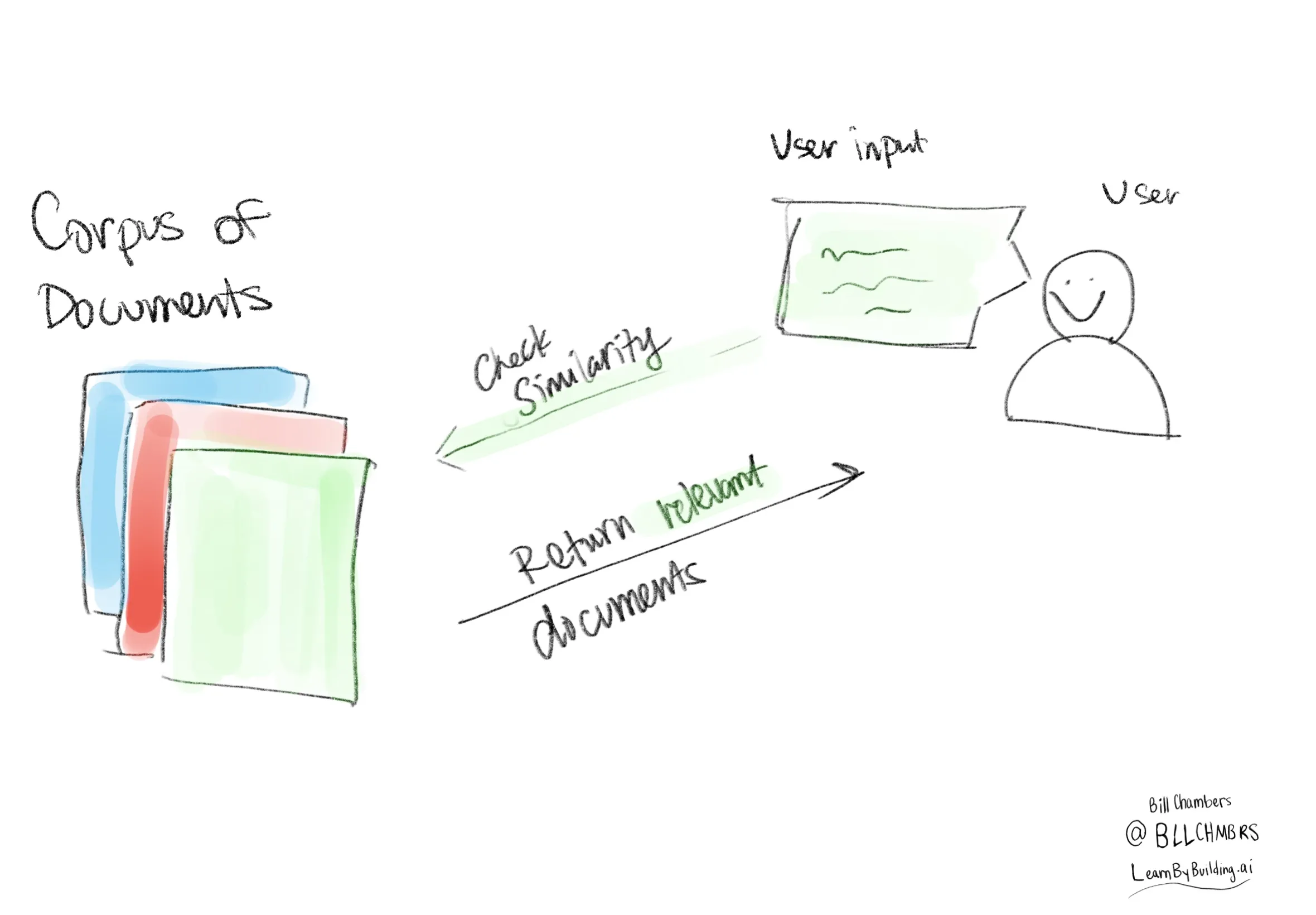

## The High Level Components of our RAG System

1. a collection of documents (formally called a corpus)

2. An input from the user

3. a similarity measure between the collection of documents and the user input

Yes, it's that simple.

To start learning and understanding RAG based systems, you don't need a vector store, you don't even *need* an LLM (at least to learn and understand conceptually).

While it is often portrayed as complicated, it doesn't have to be.

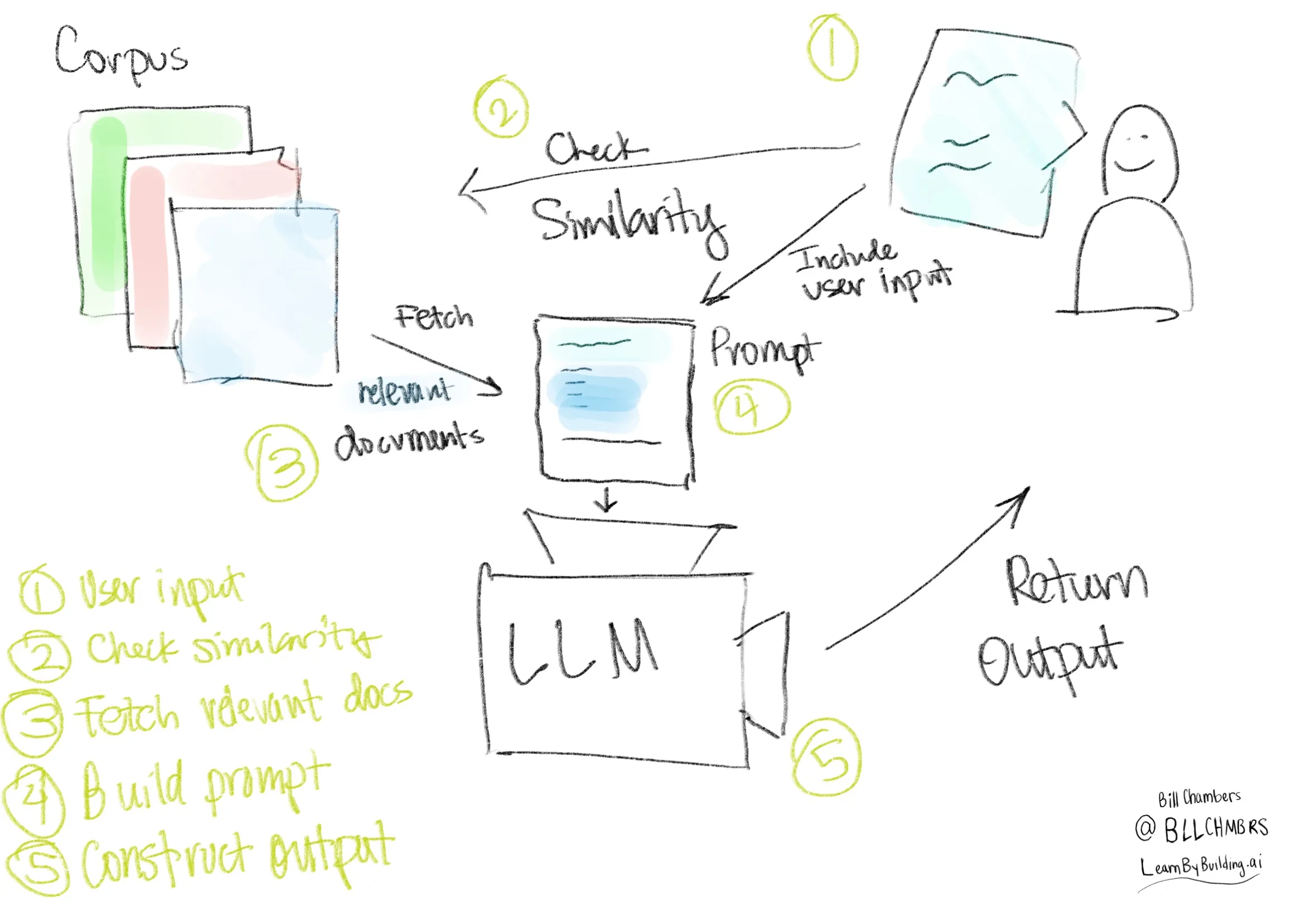

## The ordered steps of a querying RAG system

We'll perform the following steps in sequence.

1. Receive a user input

2. Perform our similarity measure

3. Post-process the user input and the fetched document(s).

The post-processing is done with an LLM.

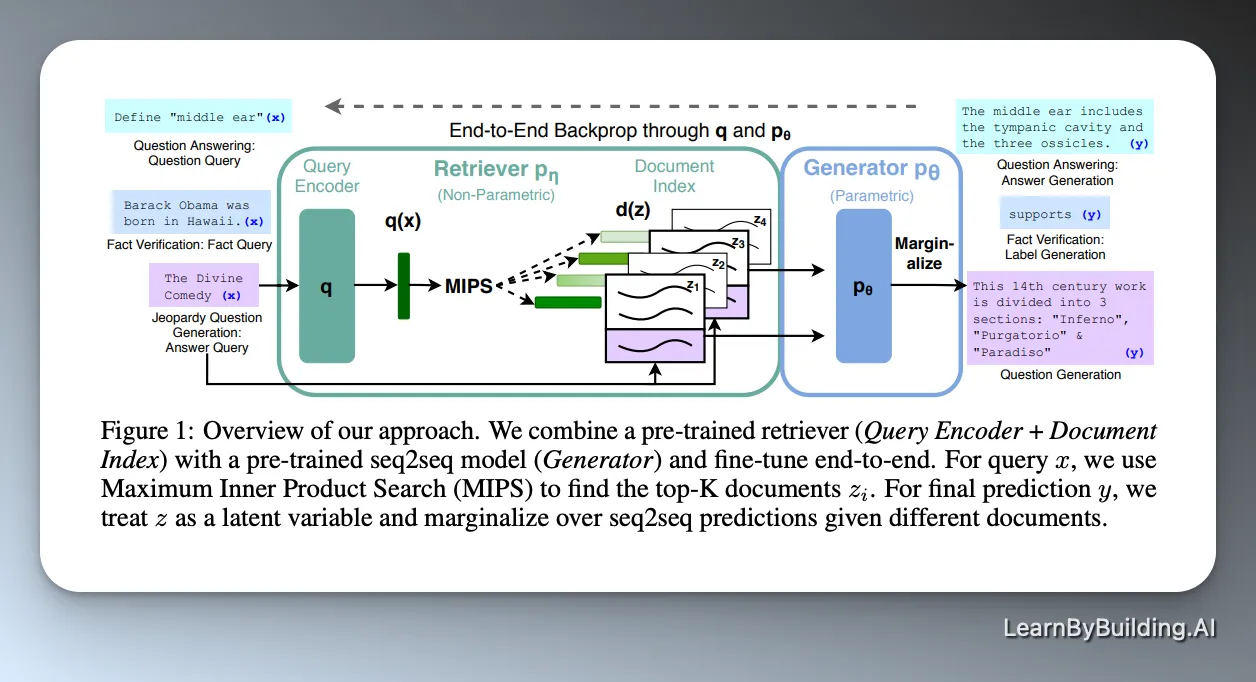

## A note from the paper itself

[The actual RAG paper](https://arxiv.org/abs/2005.11401) is obviously *the* resource. The problem is that it assumes a LOT of context. It's more complicated than we need it to be.

For instance, here's the overview of the RAG system as proposed in the paper.

That's dense.

It's great for researchers but for the rest of us, it's going to be a lot easier to learn step by step by building the system ourselves.

## Working through an example - the simplest RAG system

Let's get back to building RAG from scratch, step by step. Here's the simplified steps that we'll be working through.

### Getting a collection of documents

Below you can see that we've got a simple corpus of 'documents' (please be generous 😉).

```python

corpus_of_documents = [

"Take a leisurely walk in the park and enjoy the fresh air.",

"Visit a local museum and discover something new.",

"Attend a live music concert and feel the rhythm.",

"Go for a hike and admire the natural scenery.",

"Have a picnic with friends and share some laughs.",

"Explore a new cuisine by dining at an ethnic restaurant.",

"Take a yoga class and stretch your body and mind.",

"Join a local sports league and enjoy some friendly competition.",

"Attend a workshop or lecture on a topic you're interested in.",

"Visit an amusement park and ride the roller coasters."

]

```

### Defining and performing the similarity measure

Now we need a way of measuring the similarity between the **user input** we're going to receive and the **collection** of documents that we organized. Arguably the simplest similarity measure is [jaccard similarity](https://en.wikipedia.org/wiki/Jaccard_index). I've written about that in the past (see [this post](https://billchambers.me/posts/tf-idf-explained-in-python) but the short answer is that the **jaccard similarity** is the intersection divided by the union of the "sets" of words.

This allows us to compare our user input with the source documents.

#### Side note: preprocessing

A challenge is that if we have a plain string like `"Take a leisurely walk in the park and enjoy the fresh air.",`, we're going to have to pre-process that into a set, so that we can perform these comparisons. We're going to do this in the simplest way possible, lower case and split by `" "`.

```python

def jaccard_similarity(query, document):

query = query.lower().split(" ")

document = document.lower().split(" ")

intersection = set(query).intersection(set(document))

union = set(query).union(set(document))

return len(intersection)/len(union)

```

Now we need to define a function that takes in the exact query and our corpus and selects the 'best' document to return to the user.

```python

def return_response(query, corpus):

similarities = []

for doc in corpus:

similarity = jaccard_similarity(user_input, doc)

similarities.append(similarity)

return corpus_of_documents[similarities.index(max(similarities))]

```

Now we can run it, we'll start with a simple prompt.

```python

user_prompt = "What is a leisure activity that you like?"

```

And a simple user input...

```python

user_input = "I like to hike"

```

Now we can return our response.

```python

return_response(user_input, corpus_of_documents)

```

'Go for a hike and admire the natural scenery.'

Congratulations, you've built a basic RAG application.

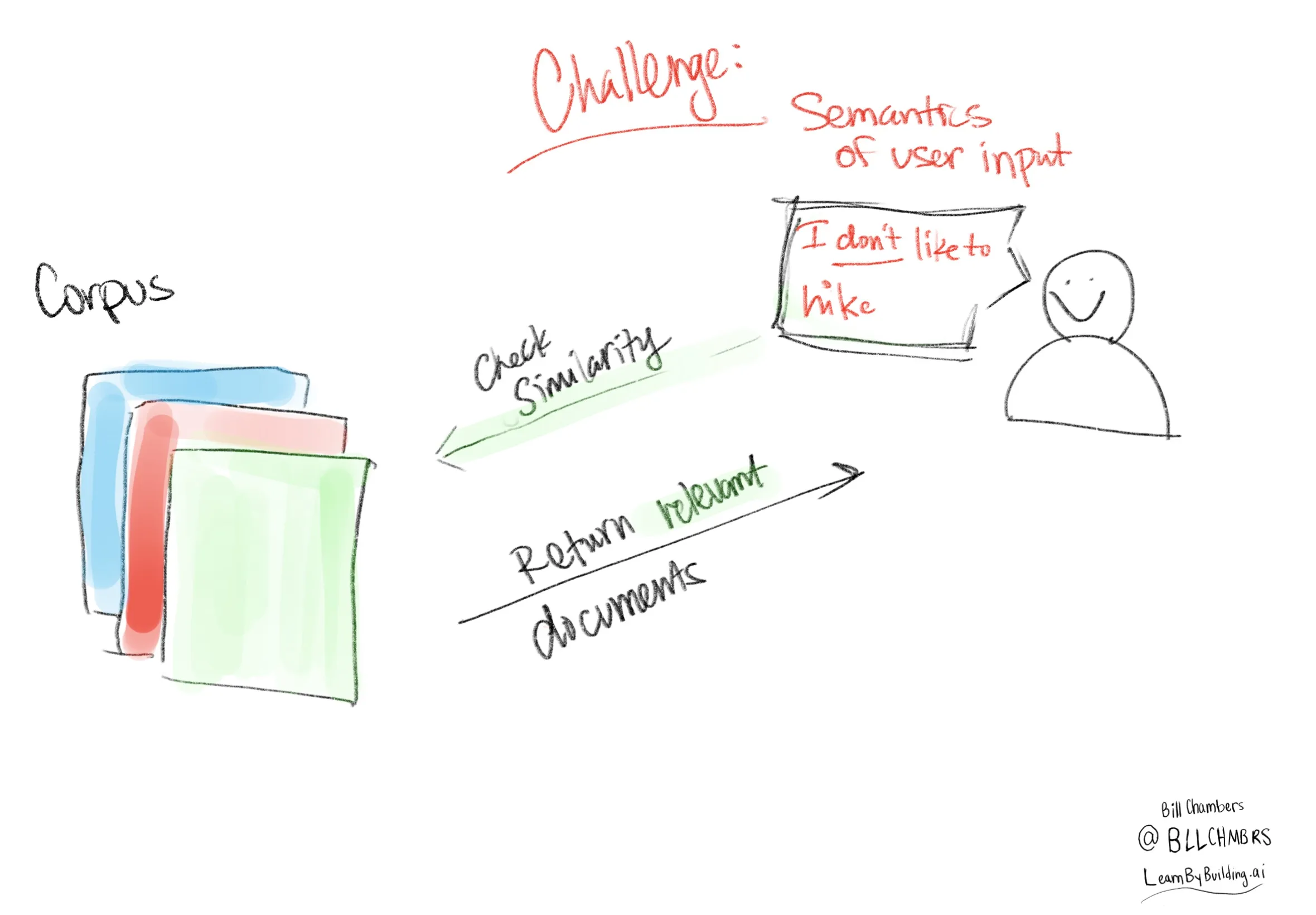

#### I got 99 problems and bad similarity is one

Now we've opted for a simple similarity measure for learning. But this is going to be problematic because it's so simple. It has no notion of **semantics**. It's just looks at what words are in both documents. That means that if we provide a negative example, we're going to get the same "result" because that's the closest document.

```python

user_input = "I don't like to hike"

```

```python

return_response(user_input, corpus_of_documents)

```

'Go for a hike and admire the natural scenery.'

This is a topic that's going to come up a lot with "RAG", but for now, rest assured that we'll address this problem later.

At this point, we have not done any post-processing of the "document" to which we are responding. So far, we've implemented only the "retrieval" part of "Retrieval-Augmented Generation". The next step is to augment generation by incorporating a large language model (LLM).

## Adding in a LLM

To do this, we're going to use [ollama](https://ollama.ai/) to get up and running with an open source LLM on our local machine. We could just as easily use OpenAI's gpt-4 or Anthropic's Claude but for now, we'll start with the open source llama2 from [Meta AI](https://ai.meta.com/llama/).

- [ollama installation instructions are here](https://ollama.ai/)

This post is going to assume some basic knowledge of large language models, so let's get right to querying this model.

```python

import requests

import json

```

First we're going to define the inputs. To work with this model, we're going to take

1. user input,

2. fetch the most similar document (as measured by our similarity measure),

3. pass that into a prompt to the language model,

4. *then* return the result to the user

That introduces a new term, the **prompt**. In short, it's the instructions that you provide to the LLM.

When you run this code, you'll see the streaming result. Streaming is important for user experience.

```python

user_input = "I like to hike"

relevant_document = return_response(user_input, corpus_of_documents)

full_response = []

# https://github.com/jmorganca/ollama/blob/main/docs/api.md

prompt = """

You are a bot that makes recommendations for activities. You answer in very short sentences and do not include extra information.

This is the recommended activity: {relevant_document}

The user input is: {user_input}

Compile a recommendation to the user based on the recommended activity and the user input.

"""

```

Having defined that, let's now make the API call to ollama (and llama2).

an important step is to make sure that ollama's running already on your local machine by running `ollama serve`.

> Note: this might be slow on your machine, it's certainly slow on mine. Be patient, young grasshopper.

```python

url = 'http://localhost:11434/api/generate'

data = {

"model": "llama2",

"prompt": prompt.format(user_input=user_input, relevant_document=relevant_document)

}

headers = {'Content-Type': 'application/json'}

response = requests.post(url, data=json.dumps(data), headers=headers, stream=True)

try:

count = 0

for line in response.iter_lines():

# filter out keep-alive new lines

# count += 1

# if count % 5== 0:

# print(decoded_line['response']) # print every fifth token

if line:

decoded_line = json.loads(line.decode('utf-8'))

full_response.append(decoded_line['response'])

finally:

response.close()

print(''.join(full_response))

```

Great! Based on your interest in hiking, I recommend trying out the nearby trails for a challenging and rewarding experience with breathtaking views Great! Based on your interest in hiking, I recommend checking out the nearby trails for a fun and challenging adventure.

This gives us a complete RAG Application, from scratch, no providers, no services. You know all of the components in a Retrieval-Augmented Generation application. Visually, here's what we've built.

The LLM (if you're lucky) will handle the user input that goes against the recommended document. We can see that below.

```python

user_input = "I don't like to hike"

relevant_document = return_response(user_input, corpus_of_documents)

# https://github.com/jmorganca/ollama/blob/main/docs/api.md

full_response = []

prompt = """

You are a bot that makes recommendations for activities. You answer in very short sentences and do not include extra information.

This is the recommended activity: {relevant_document}

The user input is: {user_input}

Compile a recommendation to the user based on the recommended activity and the user input.

"""

url = 'http://localhost:11434/api/generate'

data = {

"model": "llama2",

"prompt": prompt.format(user_input=user_input, relevant_document=relevant_document)

}

headers = {'Content-Type': 'application/json'}

response = requests.post(url, data=json.dumps(data), headers=headers, stream=True)

try:

for line in response.iter_lines():

# filter out keep-alive new lines

if line:

decoded_line = json.loads(line.decode('utf-8'))

# print(decoded_line['response']) # uncomment to results, token by token

full_response.append(decoded_line['response'])

finally:

response.close()

print(''.join(full_response))

```

Sure, here is my response:

Try kayaking instead! It's a great way to enjoy nature without having to hike.

## Areas for improvement

If we go back to our diagream of the RAG application and think about what we've just built, we'll see various opportunities for improvement. These opportunities are where tools like vector stores, embeddings, and prompt 'engineering' gets involved.

Here are ten potential areas where we could improve the current setup:

1. **The number of documents** 👉 more documents might mean more recommendations.

2. **The depth/size of documents** 👉 higher quality content and longer documents with more information might be better.

3. **The number of documents we give to the LLM** 👉 Right now, we're only giving the LLM one document. We could feed in several as 'context' and allow the model to provide a more personalized recommendation based on the user input.

4. **The parts of documents that we give to the LLM** 👉 If we have bigger or more thorough documents, we might just want to add in parts of those documents, parts of various documents, or some variation there of. In the lexicon, this is called chunking.

5. **Our document storage tool** 👉 We might store our documents in a different way or different database. In particular, if we have a lot of documents, we might explore storing them in a data lake or a vector store.

6. **The similarity measure** 👉 How we measure similarity is of consequence, we might need to trade off performance and thoroughness (e.g., looking at every individual document).

7. **The pre-processing of the documents & user input** 👉 We might perform some extra preprocessing or augmentation of the user input before we pass it into the similarity measure. For instance, we might use an embedding to convert that input to a vector.

8. **The similarity measure** 👉 We can change the similarity measure to fetch better or more relevant documents.

9. **The model** 👉 We can change the final model that we use. We're using llama2 above, but we could just as easily use an Anthropic or Claude Model.

10. **The prompt** 👉 We could use a different prompt into the LLM/Model and tune it according to the output we want to get the output we want.

11. **If you're worried about harmful or toxic output** 👉 We could implement a "circuit breaker" of sorts that runs the user input to see if there's toxic, harmful, or dangerous discussions. For instance, in a healthcare context you could see if the information contained unsafe languages and respond accordingly - outside of the typical flow.

The scope for improvements isn't limited to these points; the possibilities are vast, and we'll delve into them in future tutorials. Until then, don't hesitate to [reach out on Twitter](https://twitter.com/bllchmbrs) if you have any questions. Happy RAGING :).

## References

- [Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks](https://arxiv.org/abs/2005.11401)

- [Jerry Liu on Twitter advocating for users to build RAG from scratch](https://twitter.com/jerryjliu0/status/1716122650836439478)

If you like this post, you'll love what we're doing at [learn By Building AI](https://learnbybuilding.ai/).

| bllchmbrs |

1,651,357 | Embracing Key Communication Trends? | What communication trends do you believe are crucial in today's digital age? Follow the DEVteam for... | 0 | 2023-11-01T21:20:00 | https://dev.to/devteam/embracing-key-communication-trends-249p | discuss |

What communication trends do you believe are crucial in today's digital age?

Follow the DEVteam for more discussions and online camaraderie!

{% embed [https://dev.to/devteam](https://dev.to/devteam) %} | thepracticaldev |

1,651,413 | The Illusion of Data Ownership | Table of Contents Introduction Why I'm reflecting on this The Illusion of Ownership The... | 24,870 | 2023-10-30T23:01:29 | https://dev.to/tbdevs/the-illusion-of-data-ownership-3nbi | web5, javascript, webdev, decentralization | ## Table of Contents

- [Introduction](#introduction)

- [Why I'm reflecting on this](#why-im-reflecting-on-this)

- [The Illusion of Ownership](#the-illusion-of-ownership)

- [The Tyranny of Social Media](#the-tyranny-of-social-media)

- [Exploitation of Disenfranchised People](#exploitation-of-disenfranchised-people)

- [AI Thrives On Our Data](#ai-thrives-on-our-data)

- [ChatGPT](#chatgpt)

- [The Art Community](#the-art-community)

- [The Original Intent of the Internet](#the-original-intent-of-the-internet)

- [Web5](#web5)

- [How Web5 Enables Data Sovereignty](#how-web5-enables-data-sovereignty)

- [Curious about Web5?](#curious-about-web5)

## Introduction

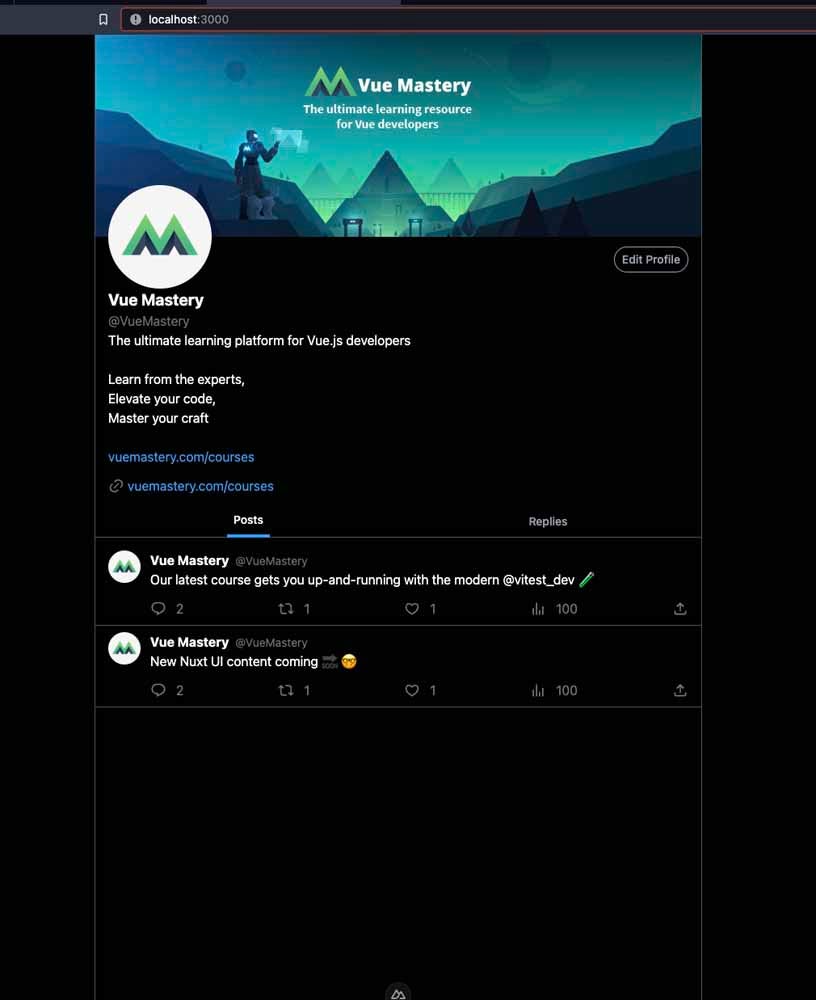

The rise of Mastodon and BlueSky as decentralized Twitter alternatives highlights the need for data ownership. But the slow adoption of a decentralized web reveals a gap in our collective comprehension. For as long as the internet has existed, internet users rarely owned their data, so it's hard to envision a web where data sovereignty is the norm.

I'm a quintessential baby millennial – born in '95. I was born on the cusp of GenZ, but I don't identify with GenZ because I'm not hip to their lingo, dances, or fashion sense. I started surfing the web during the early 2000s. I grew up using PBS Kids, Everything Girl, The Doll Palace, Club Penguin, MySpace, and Tumblr. Today, I use platforms like Twitter, GitHub, and Instagram. Each login, each acceptance of terms and conditions, was an implicit agreement to share fragments of my identity. As a result, corporations capitalize on trading and harnessing my data for profit. I mindlessly accepted it because I didn't know any other way of using the internet.

The idea that users can have full data sovereignty seemed like a utopian fantasy. Listening to company leaders share the organization's vision during a company offsite transformed my interpretation of data ownership.

## Why I'm reflecting on this

I am someone who likes to reflect on the philosophy behind a technology, so I can confidently endorse it. While I believe all technology can be used for good and evil, I like to determine its current impact. If it leans towards the negative, I'm driven to influence people to use it in a way that positively impacts society. Take generative AI, for instance. While it poses the risk of job losses for some creatives, I dedicated two years to championing its use as an educational tool—one that offers a sense of psychological safety to learners. Similarly, I want to think holistically about decentralized web technologies such as Web5.

In this blog post, we will discuss the meaning of data ownership on the web and how it impacts society.

## The Illusion of Ownership

Many internet users operate under the assumption that our online data is ours.The reality is we merely possess our data. There’s a huge difference between holding our data (possession) and owning our data (property).

* **Possession**: This is about having control or physical custody of data. Here’s an example of what that looks like on social media platforms:

* **Access and View**: You can log in, view your posts, and interact with content.

* **Modify**: Edit captions, comments, or profile details.

* **Interact**: Engage with content through likes, comments, and shares.

* **Upload**: Add new content.

* **Delete (with limitations)**: Remove posts or deactivate accounts, but the platform might still retain or use your data.

* **Property**: This is about having inherent rights to own, control, and manage data. Truly owning your data on a social media platform looks like:

* **Complete Deletion**: You'd have the right to permanently erase all traces of your data from the platform's servers, with no backups or archives retained.

* **Data Portability**: You could seamlessly transfer all your data, including posts, comments, and likes, to another platform without any loss or format change.

* **Monetization Control**: You'd have the authority to decide if and how your data is used for advertising or other revenue-generating purposes.

* **Data Access Control**: You could dictate who, including the platform itself, can access or view your data.

* **No Unilateral Changes**: The platform couldn't change terms of service or data policies without your explicit consent.

We are more possessors than owners.

## The Tyranny of Social Media

Recent changes on platforms like Twitter/X underscore my point.

Here are examples:

* **Usernames taken** - When X rebranded from Twitter, they claimed the username '@X', despite it already being in use by another individual.

* **Vanishing features** - Recently, X announced they are removing Twitter Circles. Twitter Circles allows users to select a subgroup of followers to receive particular posts. Many people use this for private sharing, but now that option will not exist. And while X promises to leave the Circle posts private, there have been instances in the past where bugs made Circle posts publicly viewable.

* **Lost content** - Integrated newsletters like Revue were suddenly removed, leading to loss of content and subscribers.

Twitter/X is not the only culprit. Google has a history of discontinuing products including Google Podcasts. See: [Killed by Google](https://killedbygoogle.com/).

### Exploitation of Disenfranchised People

> “If you know whence you came, there are absolutely no limitations to where you can go.” - James Baldwin

I don’t know my ancestral history, but I want to. All I know is I was born in Antigua and my parents and grandparents were born in Guyana. I want to take an ancestry test, but there are data privacy risks. The powers that be have exploited disenfranchised people enough. I want to shield our history from potential data breaches and commercial interests. I don’t want to offer more of our narrative to those who might exploit it.

## AI Thrives On Our Data

I am a huge fan of generative AI because it’s so powerful. However, I recognize that it’s only that powerful because it was trained on our data.

### ChatGPT

ChatGPT is an integral part of my daily routine. It helps me brainstorm ideas and refactor code. I'm not sure how I could survive or how I ever survived without it. But there's a catch -- ChatGPT is super helpful because it was trained on public data, including data from users like us. This means that any confidential information we share could become part of its training data. There's a risk that if you tell ChatGPT sensitive information about you or your company, someone else can potentially prompt ChatGPT for that data, and get ahold of it. One of many examples is the [case with Samsung](https://www.forbes.com/sites/siladityaray/2023/05/02/samsung-bans-chatgpt-and-other-chatbots-for-employees-after-sensitive-code-leak/?sh=4813eefe6078) where employees inadvertently shared proprietary code and internal business strategies with ChatGPT.

### The Art Community

Many artists are upset with the rise of generative AI art. They suspect the tools were trained on their work because they recognize their own styles in generated AI pieces.

Whether these are actual problems or ethical gray areas, one thing is clear: _wouldn’t it be better if we had a say in how our data is used and who uses it?_

### The Original Intent of the Internet

These are some of the reasons why data ownership is important to me. Even Tim Berners-Lee, the inventor of the World Wide Web, is disappointed in how we leveraged data on the Internet.

> “I think the public has been concerned about privacy--the fact that these platforms have a huge amount of data, and they abuse it. But I think what they're missing sometimes is the lack of empowerment. You need to get back to a situation where you have autonomy, you have control of all your data.” - Tim Berners-Lee

## Web5

[Web5](https://developer.tbd.website/blog/what-is-web5) is a platform (currently under development) that puts users in control of their data and identity. It doesn’t aim to replace current technologies, but enhance.

### How Web5 Enables Data Sovereignty

Here’s how Web5 puts users in control of their data:

#### Decentralized Identifiers

Identity on traditional systems often looks like username and password pairings.

In the Web5 ecosystem, every person has a Decentralized Identifier (DID), represented as an alphanumeric string. DIDs are:

- a W3C open standard

- based on cryptographic principles.

- not tied to one web application or system

Because of these factors, DIDs enable users to securely authenticate to any web app within the Web5 ecosystem.

#### Decentralized Web Nodes

Your DID gives you access to a Decentralized Web Node (DWN) or a personal data store. You can think of a DWN like your personal Dropbox. However, centralized platforms like Dropbox can change terms of service, access your data, or even shut down services, leaving you without access. Instead, a DWN provides a personal space where your data is stored and you decide who gets access.

#### Protocols

Protocols are responsible for structuring your data and establishing rules for data access and interaction within a DWN.

In other words, you can control who has access to your DWN and who interacts with it via a protocol. Here's an abridge example of a protocol you can write for a user's interactions on social media applications:

```javascript

const socialMediaProtocolDefinition = {

protocol: "https://sovereignsocialmedia.org/protocol",

published: true,

types: {

personalInfo: {

schema: "https://schema.org/Person",

dataFormats: ["application/json"],

},

preferences: {

schema: "https://schema.org/UserPreferences",

dataFormats: ["application/json"],

},

posts: {

schema: "https://schema.org/BlogPosting",

dataFormats: ["application/json"],

},

comments: {

schema: "https://schema.org/Comment",

dataFormats: ["application/json"],

},

photos: {

schema: "https://schema.org/ImageObject",

dataFormats: ["image/jpeg", "image/png"],

},

videos: {

schema: "https://schema.org/VideoObject",

dataFormats: ["video/mp4"],

},

},

structure: {

personalInfo: {

$actions: [

{ who: "author", can: "write" },

{ who: "author", can: "read" },

],

},

preferences: {

$actions: [

{ who: "author", can: "write" },

{ who: "author", can: "read" },

],

},

posts: {

$actions: [

{ who: "author", can: "write" },

{ who: "anyone", can: "read" },

],

},

comments: {

$actions: [

{ who: "author", can: "write" },

{ who: "anyone", can: "read" },

],

},

photos: {

$actions: [

{ who: "author", can: "write" },

{ who: "anyone", can: "read" },

],

},

videos: {

$actions: [

{ who: "author", can: "write" },

{ who: "anyone", can: "read" },

],

},

}

};

```

Here's a breakdown of the permissions (who has access to this data) in this protocol:

- **Personal Info Permissions**:

- **Write**: Only the user (author) can write or update their personal information.

- **Read**: Only the user (author) can view their personal information.

- **Preferences Permissions**:

- **Write**: Only the user (author) can set or change their preferences.

- **Read**: Only the user (author) can view their preferences.

- **Posts Permissions**:

- **Write**: Only the user (author) can create or update their posts.

- **Read**: Both the user (author) and the public can view the posts.

- **Comments Permissions**:

- **Write**: Only the user (author) can create or update their comments on posts.

- **Read**: Both the user (author) and the public can view the comments.

With this protocol, content creation is open to all, but users have control over their personal data and preferences.

Data ownership isn't just a technical decision or a fun concept for developers. It is about creating a more equitable online ecosystem.

_What are your thoughts on data ownership and Web5?_

## Curious about Web5?

* Read: [What is Web5?](https://developer.tbd.website/blog/what-is-web5)

* Read: [Why Companies Would Embrace Web5](https://developer.tbd.website/blog/why-would-companies-embrace-web5)

* Read: [How to Query Records by Protocol Path](https://dev.to/tbdevs/web5-how-to-query-records-by-protocol-path-1g6h)

* Read: [What’s the point of Web5?](https://dev.to/tbdevs/whats-the-point-of-web5-2kjb)

* Build: [How to build a Web5 shared to do list with Nuxt](https://developer.tbd.website/docs/web5/build/apps/shared-todo-app)

* Build: [How to build a Web5 chat app with Next](https://developer.tbd.website/docs/web5/build/apps/dinger-tutorial)

* Join: [An Intro to TBD's Web 5 SDK and Decentralized Web Nodes ](https://www.eventbrite.com/e/an-intro-to-tbds-web-5-sdk-and-decentralized-web-nodes-tickets-729176565737?aff=tbd)

* Join: [On Wednesdays We Use Web5: A 'Mean Girls' Guide to Data Sovereignty](https://cfe.dev/events/on-wednesdays-we-use-web5/)

* Join: [TBD's Discord](https://discord.com/invite/tbd)

| blackgirlbytes |

1,651,424 | Applying the Power of Machine Learning for Data and Analytics | 1. Introduction Data analytics is the process of examining data to uncover insights and make... | 0 | 2023-10-30T23:40:01 | https://dev.to/danielwambo/applying-the-power-of-machine-learning-for-data-and-analytics-o59 | **1. Introduction**

Data analytics is the process of examining data to uncover insights and make informed decisions. Machine learning is a subset of artificial intelligence that empowers data analytics by automating predictions based on data patterns.

**2. What is Machine Learning?**

Machine learning is a technology that enables computers to learn and make predictions or decisions without being explicitly programmed. It's divided into three main types: supervised learning, unsupervised learning, and reinforcement learning.

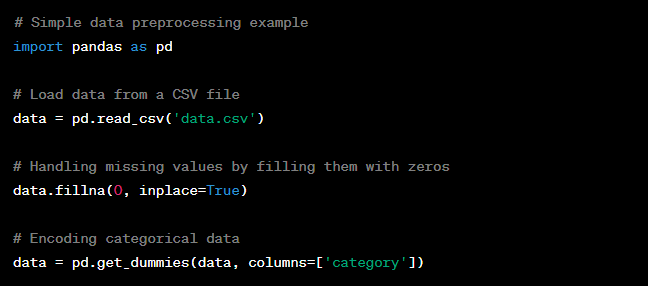

**3. Data Collection and Preprocessing**

Quality data is essential for machine learning. Data preprocessing includes cleaning and preparing the data for analysis.

**4. Supervised Learning**

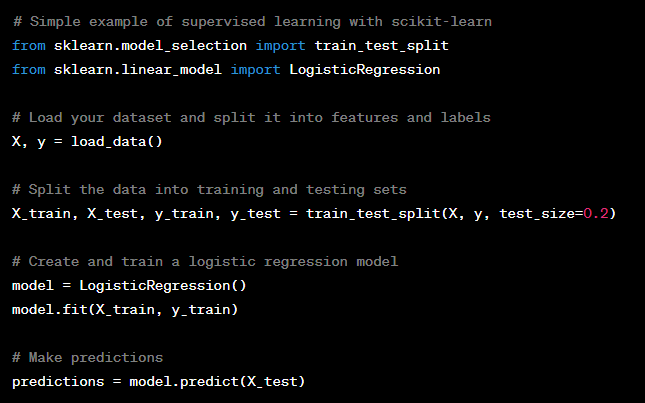

Supervised learning is used for tasks like classification and regression. It involves using labeled data to train a model.

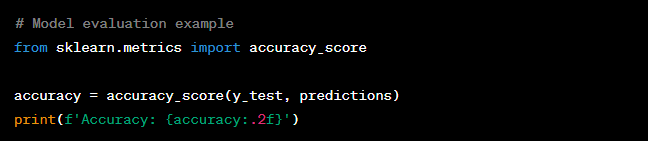

**5. Model Evaluation**

To understand how well your model performs, you can use evaluation metrics like accuracy, which measures how often the model is correct.

**Conclusion**

Machine learning is a powerful tool that can enhance your data analytics efforts. By understanding its basics and applying it to your data, you can make more accurate predictions and gain valuable insights.

| danielwambo | |

1,652,536 | Understanding the basics of Smart Pointers in Rust | In today's post we'll delve into the basics of smart pointers in Rust, while we build from scratch a... | 0 | 2023-11-01T04:15:27 | https://dev.to/leandronsp/understanding-the-basics-of-smart-pointers-in-rust-3dff | rust, datastructures | In today's post we'll delve into the basics of smart pointers in Rust, while we build from scratch a simple linked list - starting from a singly linked list and then evolving to a doubly one.

---

## Prelude, intro to Rust

It's not intended to be an introduction about Rust. For that, you can follow along [this blogpost series](https://dev.to/mfcastellani/series/23318) by [@mfcastellani](https://dev.to/mfcastellani).

Also, you can read [his book](https://www.casadocodigo.com.br/products/livro-rust) (pt-BR). Moreover, I have a [live coding video](https://www.youtube.com/watch?v=6VSgMbFNUuQ) where I explored the Rust fundamentals by covering an introduction to Rust, data types, functions, ownership, references, structs/enums and error handling.

Another content about Rust I higly recommend is presented on [this Youtube channel](https://www.youtube.com/watch?v=zWXloY0sslE) by Bruno Rocha, which creates great videos about Rust as well (pt-BR).

> Please note that this post you are currently reading was written during a [live coding session (pt-BR)](https://www.youtube.com/watch?v=bdZe0LjDUyk) where you can follow the process I use to write blogposts in general and how I created this particular one. It's a novel format I'm experimenting with to share content.

However, if you are looking for introdutory content in english only, the Youtube channel [Let's get Rusty](https://www.youtube.com/@letsgetrusty) provides great content on Rust from basics to advanced.

---

No more introduction, let's embark on this journey of **Smart Pointers in Rust**.

---

## Table of Contents

- [First things first](#first-things-first)

- [A linked list using Rust](#a-linked-list-using-rust)

- [Meet the Box smart pointer](#box)

- [Shared ownership using Rc](#rc)

- [Interior mutability with RefCell](#refcell)

- [Weak references on a circular linked list](#thinking-about-a-circular-linked-list)

---

## 👉 First things first

Rust employs a mechanism for dealing with memory management where it prevents dangling references, double free error and other problems related to memory management.

This mechanism is called "ownership" and through [RAII](https://en.wikipedia.org/wiki/Resource_acquisition_is_initialization) (Resource Acquisition Is Initialization), it follows three basic rules:

* Each value in Rust has a single owner

* There are only *one* owner at a time

* When the owner's scope is finished, its associated value is dropped and invalidated

When we need to transfer ownership, in case the value is in the stack (fixed-sized types), Rust performs a *Copy*:

> I'm assuming that all code snippets within this post are being executed inside a `fn main() {}` function

```rust

let age = 20;

let copied_age = age;

println!("copied_age: {}", copied_age);

println!("age: {}", age); // age is still valid because Rust performs a "Copy" for data in the stack

```

As for _dynamically-sized_ types, which live in the heap, Rust performs a *Move*:

```rust

let name = String::from("John");

let other_name = name;

println!("other_name: {}", other_name);

println!("name: {}", name); // name is no loger valid because Rust performs a "Move"

// Error:

// error[E0382]: borrow of moved value: `name`

```

*Copy* literally copies the data in the stack, while the *Move* operation transfers ownership, which means that the former owner is no longer the owner and its reference is completely dropped.

---

## 👉 A Linked List using Rust

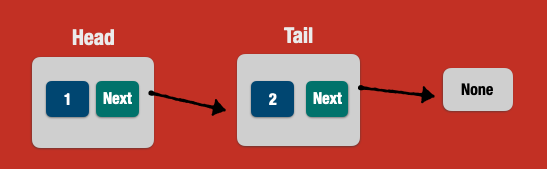

A linked list is a data structure which represents a collection of nodes where each node points to the next node. This is basically a **singly linked list**.

Also, we can build a linked list where each node points to the previous node as well. In this case, such a list is called **doubly linked list**.

### 🔵 A Singly Linked List

The first version of our linked list will be a singly one. As we evolve to a doubly linked list, we'll bring Rust concepts about ownership, references and smart pointers.

We start by modeling the Node:

```rust

struct Node {

value: i32,

next: Node

}

```

We are bound to situations where the **next** pointer points to "nothing", or simply a `null` pointer when the list reaches the end, commonly seen in a variety of programming languages.

But Rust has no `null` pointers. That said, we can represent the `next` pointer by using the enum **Option**, which in Rust gives us two possibilities of types:

* None (the end of the list)

* Some(node)

```rust

struct Node {

value: i32,

next: Option<Node>

}

let head = Node { value: 1, next: None };

assert_eq!(1, head.value);

assert_eq!(None, head.next);

```

The above code is not yet compiling:

```

error[E0072]: recursive type `Node` has infinite size

--> src/main.rs:2:5

|

2 | struct Node {

| ^^^^^^^^^^^

3 | value: i32,

4 | next: Option<Node>

| ---- recursive without indirection

|

help: insert some indirection (e.g., a `Box`, `Rc`, or `&`) to break the cycle

|

4 | next: Option<Box<Node>>

| ++++ +

```

The Rust compiler is saying that _Node_ has unknown size at compile-time and as such it can't be determined, because the "next" pointer points to another Node which points to another Node and so on, infinitely.

This is a **recursive type**.

In order to solve this problem, we have to help the Rust compiler to use some abstraction which can allocate data on the heap and determine the size of the Node at compile-time, resolving the recursive type.

Such abstraction is called **Box**, which is a smart pointer in Rust.

---

## 👉 Box

By using Box, we want to allocate the data on the heap.

Also, Box has a known size at compile-time. Being a pointer, the _size of the Box is the pointer size_, which makes it a good fit for recursive types.

The following code compiles sucessfully:

```rust

#[derive(Debug, PartialEq)]

struct Node {

value: i32,

next: Option<Box<Node>>

}

let head = Node { value: 1, next: None };

assert_eq!(1, head.value);

assert_eq!(None, head.next);

```

What if we add one more node, called "tail"?

```rust

let tail = Node { value: 2 next: None };

let head = Node { value: 1, next: Some(tail) };

```

As always (the Rust compilers always wins), it won't compile:

```

---- ^^^^ expected `Box<Node>`, found `Node`

```

We have to wrap the tail in a _Box_:

```rust

struct Node {

value: i32,

next: Option<Box<Node>>

}

let tail = Box::new(Node { value: 2, next: None });

let head = Node { value: 1, next: Some(tail) };

assert_eq!(1, head.value);

assert_eq!(2, head.next.unwrap().value);

```

* We wrap the tail box in an Option (Some)

* The _head.next_ points to an **Option**. Because it's the enum Option, we have to call `unwrap` to fetch the underlying value

Let's go further in the example and implement a **doubly linked list**, by specifying the _prev_ attribute on the Node struct.

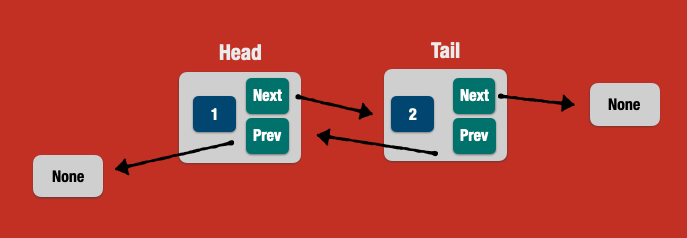

### 🔵 A Doubly Linked List

```rust

struct Node {

value: i32,

next: Option<Box<Node>>,

prev: Option<Box<Node>>,

}

let tail = Box::new(Node { value: 2, prev: None, next: None });

let head = Node { value: 1, prev: None, next: Some(tail) };

```

* the `head.prev` points to `None`

* the `tail.prev` points to `None` (at this moment...)

In order to change the `tail.prev`, we have to mutate its underlying value, from `None` to `Some(head)`. May we change the source code:

```rust

let mut tail = Box::new(Node { value: 2, prev: None, next: None });

let head = Box::new(Node { value: 1, prev: None, next: Some(tail) });

tail.prev = Some(head); // mutating the tail.prev

```

And...

```

error[E0382]: use of moved value: `head.next`

--> src/main.rs:14:15

|

9 | let head = Box::new(Node { value: 1, prev: None, next: Some(tail) });

| ---- move occurs because `head` has type `Box<Node>`, which does not implement the `Copy` trait

10 |

11 | tail.prev = Some(head);

| ---- value moved here

...

14 | assert_eq!(2, head.next.unwrap().value);

| ^^^^^^^^^ value used here after move

```

_Welcome to the ownership saga in Rust!_

Let's clarify some points here:

First, a Box has **single ownership**, meaning that each value holds one owner at a time. Here, in this line:

```rust

let head = Box::new(Node { value: 1, prev: None, next: Some(tail) }); // value was moved here

```

`Tail` has been *moved*, that's why we cannot use it later, due to ownership rules.

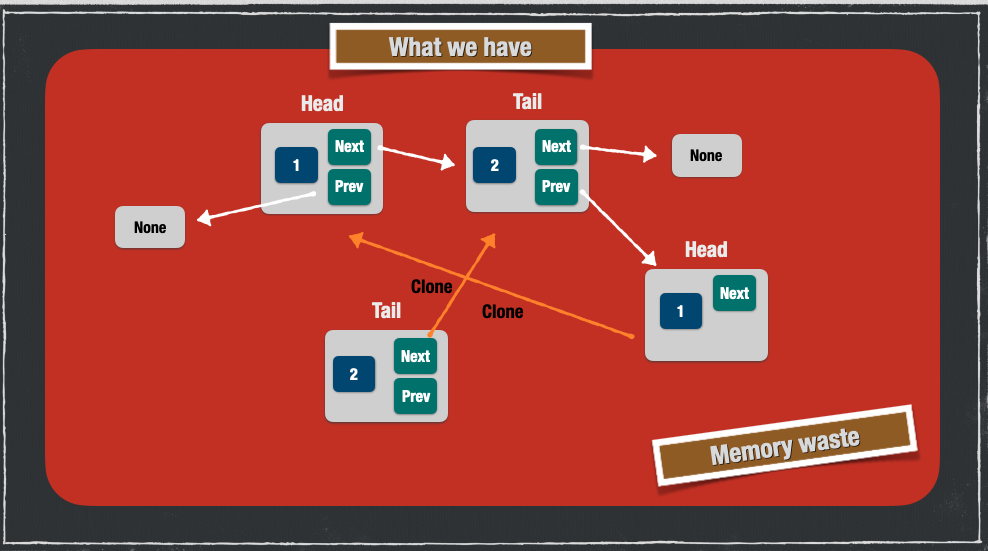

To fix that, we can make use of the method `clone` implemented in the Box, which will perform a deep copy (clone) of the value in the heap:

```rust

let head = Box::new(Node { value: 1, prev: None, next: Some(tail.clone()) });

tail.prev = Some(head);

```

Additionally, in the following line, `tail.prev` takes ownership of the value of `head`, so the value was moved to the new owner:

```rust

tail.prev = Some(head); // value as moved here

```

Now the solution is calling `clone` as we did in the `tail`:

```rust

tail.prev = Some(head.clone());

```

Here's the current solution for a doubly linked list using Box:

```rust

#[derive(Clone)]

struct Node {

value: i32,

next: Option<Box<Node>>,

prev: Option<Box<Node>>,

}

let mut tail = Box::new(Node { value: 2, prev: None, next: None });

let head = Box::new(Node { value: 1, prev: None, next: Some(tail.clone()) });

tail.prev = Some(head.clone());

assert_eq!(1, head.value);

assert_eq!(2, tail.value);

assert_eq!(2, head.next.unwrap().value);

assert_eq!(1, tail.prev.unwrap().value);

```

By using Box, we've solved the problem but we may end up wasting memory, as demonstrated in the following picture:

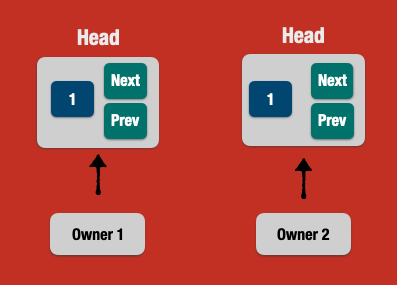

At this point in time, we have the following abstraction model about ownership, which is single and shares no value in the heap (Box):

We have to find a way to overcome the single ownership problem. What about _not taking ownership at all_, by using **References** instead?

### 🔵 References & Lifetimes

References in Rust do not take ownership, as they allow to work with the reference of the data which is allocated in the heap.

This way, references can be "borrowed" without taking ownership, and as such they are bound to a mechanism called **borrow checker**.

```rust

let name = String::from("John"); // value in the heap. name is the owner

let other_name = &name; // not a move. other_name has a reference to the value in the heap. name is still the owner

println!("other_name: {}", other_name);

println!("name: {}", name);

```

The above code compiles successfully. The borrow checker ensures that the reference is pointing to some valid value in the heap, thus not "moving" the ownership.

Let's change the code to use References instead of Box:

```rust

struct Node {

value: i32,

next: Option<&Node>,

}

let tail = Node { value: 2, next: None };

let head = Node { value: 1, next: Some(&tail) };

```

* The `next` is an enum Option which wraps a *reference to another Node*

* The `head.next` is now using `Some(&tail)` which is a reference to the tail (other node), instead of a Box which takes ownership

But this code won't compile yet:

```

error[E0106]: missing lifetime specifier

--> src/main.rs:4:18

|

4 | next: Option<&Node>,

| ^ expected named lifetime parameter

|

help: consider introducing a named lifetime parameter

|

2 ~ struct Node<'a> {

3 | value: i32,

4 ~ next: Option<&'a Node>,

```

Each reference has an implicit lifetime in the Rust compiler. In our example of a linked list, the compiler can't determine the lifetime of the `next` pointer because it points to another Node which could have a different lifetime.

Because the borrow checker prevents dangling references by using lifetimes, we have to help the compiler by annotating lifetimes in the struct definition:

```rust

struct Node<'a> {

value: i32,

next: Option<&'a Node<'a>>,

}

// or, using generics

struct Node<'a, T> {

value: T,

next: Option<&'a Node<'a, T>>,

}

```

_It's quite verbose, I know._ 😬

Now the version of a singly linked list using references:

```rust

#[derive(Debug, PartialEq)]

struct Node<'a, T> {

value: T,

next: Option<&'a Node<'a, T>>,

}

let tail = Node { value: 2, next: None };

let head = Node { value: 1, next: Some(&tail) };

assert_eq!(1, head.value);

assert_eq!(2, head.next.unwrap().value);

assert_eq!(None, tail.next);

```

* The `Node` and its `next` (reference) node has a lifetime `'a`

* we can use tail/head even after they been applied to the repective nodes, because we took no ownership

But a singly linked list is not enough. We want a doubly one:

```rust

#[derive(Debug, PartialEq)]

struct Node<'a, T> {

value: T,

next: Option<&'a Node<'a, T>>,

prev: Option<&'a Node<'a, T>>,

}

let mut tail = Node { value: 2, prev: None, next: None };

let head = Node { value: 1, prev: None, next: Some(&tail) };

tail.prev = Some(&head);

assert_eq!(1, head.value);

assert_eq!(2, head.next.unwrap().value);

assert_eq!(None, tail.next);

```

We run the code and...

```

error[E0506]: cannot assign to `tail.prev` because it is borrowed

--> src/main.rs:12:1

|

10 | let head = Node { value: 1, prev: None, next: Some(&tail) };

| ----- `tail.prev` is borrowed here

11 |

12 | tail.prev = Some(&head);

| ^^^^^^^^^^^^^^^^^^^^^^^ `tail.prev` is assigned to here but it was already borrowed

13 |

14 | assert_eq!(1, head.value);

| ------------------------- borrow later used here

```

_What happened here?_

The **borrow checker** checks at compile-time that we can have only *one mutable reference* at a time in the same scope.

Our example has a scenario where the `tail.prev` is **mutable** and is already borrowed to the `head`.

That's why we simply *can't implement a doubly linked list* in Rust using references (AFAIK).

Then we should go back to ownership. But what about having a "shared ownership" instead of a "single ownership" like in the Box example?

Enter _Rc_.

---

## 👉 Rc

Rc stands for **reference counting**, which performs heap allocation, like a Box.

But unlike Box, it enables _shared ownership_, where one or more owners point to the same value in the heap. Each time an owner _comes to the party_, it increments the counter. When the owner goes out of scope, it decrements the counter.

Only when all owners are dropped, then the Rc is entirely dropped as well freeing the underlying data from the heap.

Rc brings one caveat: **the reference must be immutable**. Otherwise, it would lead to double-free errors.

```rust

use std::rc::Rc;

let name = Rc::new(String::from("John"));

assert_eq!(1, Rc::strong_count(&name));

let cloned_name = Rc::clone(&name);

assert_eq!(2, Rc::strong_count(&name));

assert_eq!("John", *cloned_name); // Dereference

assert_eq!("John", *name); // Dereference

```

Each time an `Rc` is called `data.clone()` or by using `Rc::clone(&data)`, the data is not being copied on the heap (deep copy). Only the reference is copied and the strong count of references is incremented.

The original owner is still valid after _cloning_ multiple Rc references.

Let's implement the singly linked list using Rc instead of references or Box:

```rust

use std::rc::Rc;

struct Node<T> {

value: T,

next: Option<Rc<Node<T>>>

}

```

Cool, now let's add some data to our linked list:

```rust

let tail = Rc::new(Node { value: 2, next: None });

let head = Rc::new(Node { value: 1, next: Some(tail) });

assert_eq!(1, head.value);

assert_eq!(2, head.next.clone().unwrap().value);

```

It simply works! _How cool is that?_

Time to evolve to a doubly linked list using Rc:

```rust

use std::rc::Rc;

struct Node<T> {

value: T,

next: Option<Rc<Node<T>>>,

prev: Option<Rc<Node<T>>>,

}

let tail = Rc::new(Node { value: 2, prev: None, next: None });

let head = Rc::new(Node { value: 1, prev: None, next: Some(Rc::clone(&tail)) });

tail.prev = Some(Rc::clone(&head));

assert_eq!(1, head.value);

assert_eq!(2, head.next.clone().unwrap().value);

```

Instead of deep copy like in Box, the Rc smart pointer only increments the reference counter. Check `Rc::clone(&head)` and `Rc::clone(&tail)`.

But it won't compile:

```

error[E0594]: cannot assign to data in an `Rc`

--> src/main.rs:24:5

|

24 | tail.prev = Some(Rc::clone(&head));

| ^^^^^^^^^ cannot assign

```

_Cannot assign data in an Rc!_

Even if we used `let mut tail = ...`, Rc is now allowed to mutate because **all references in Rc are immutable**.

How about _mutating the underlying data_ even if the reference is immutable? We could achieve that by using "unsafe Rust", where **some checks could be done at runtime instead of compile-time.**

Even better, what about Rust providing an abstraction which uses unsafe capabilities under the hood but wrapping in a safe API?

Yes, _we are talking about RefCell_.

---

## 👉 RefCell

**RefCell** is an smart pointer which provides a safe API to mutate underlying data (on the heap) but through immutable references.

This approach is called **interior mutability**.

The borrow checker won't perform checks, but Rust will check them at runtime. In case we cause a problem regarding mutable data, the program will crash and stop (`panic!`).

```rust

use std::cell::RefCell;

let name = RefCell::new(String::from("John"));

name.borrow_mut().push_str(" Doe");

assert_eq!("John Doe", *name.borrow());

```

* RefCell wraps a String in the heap

* The reference is immutable

* Through `borrow_mut`, we get `RefMut<T>` to mutate the underlying data

* Through `borrow`, we get a `Ref<T>` to read the underlying data

In a RefCell, we can have multiple borrows for reading or **only one borrow mutable** for writing.

With that in place, time to implement our doubly linked list using Rc + RefCell:

```rust

use std::rc::Rc;

use std::cell::RefCell;

struct Node<T> {

value: T,

next: Option<Rc<RefCell<Node<T>>>>,

prev: Option<Rc<RefCell<Node<T>>>>,

}

let tail = Rc::new(RefCell::new(Node { value: 2, prev: None, next: None }));

let head = Rc::new(RefCell::new(Node { value: 1, prev: None, next: Some(Rc::clone(&tail)) }));

tail.borrow_mut().prev = Some(Rc::clone(&head));

assert_eq!(1, head.borrow().value);

assert_eq!(2, head.borrow().next.clone().unwrap().borrow().value);

assert_eq!(1, tail.borrow().prev.clone().unwrap().borrow().value);

```

Our Node model now is composed of a value and a `next` pointer which basically is:

* an enum Option

* which wraps an Rc (shared ownership)

* which wraps an RefCell (for interior mutability)

* which points to other Node

* and so on and on and on...

With RefCell, every time we have to write, we use `borrow_mut`, and every time we have to read, we use `borrow`.

_How wonderful is that?_

---

## 👉 Thinking about a circular linked list

In order to make our linked list to be circular, we have to make `tail.next` point to the `head`:

```rust

use std::rc::Rc;

use std::cell::RefCell;

struct Node<T> {

value: T,

next: Option<Rc<RefCell<Node<T>>>>,

prev: Option<Rc<RefCell<Node<T>>>>,

}

let tail = Rc::new(RefCell::new(Node { value: 2, prev: None, next: None }));

let head = Rc::new(RefCell::new(Node { value: 1, prev: None, next: Some(Rc::clone(&tail)) }));

tail.borrow_mut().prev = Some(Rc::clone(&head));

tail.borrow_mut().next = Some(Rc::clone(&head));

....

assert_eq!(1, tail.borrow().next.clone().unwrap().borrow().value);

```

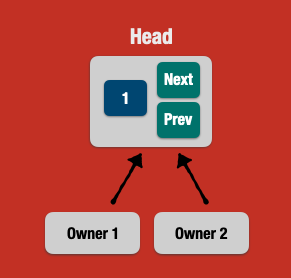

What's the challenges of a circular linked list using Rc?

### 🔵 Strong references may never reach zero

Remember that the Rc underlying data is dropped and invalidated when the `Rc::strong_count` reaches zero.

But in a circular linked list, for instance, we may have a **cyclic reference**, which in turn will never make the `strong_count` to reach zero, **leading to memory leaks**.

In such a scenario, the `tail.next` is a "weak" reference. Rust provides a way for `Rc` to have a different counter, called `weak_count`.

Thus, the weak counter will not be used for deciding when Rust should drop the value from the heap.

For solving this problem, Rc brings a method called `downgrade`, which **does not involve ownership at all** and transforms a strong reference into a weak one.

This smart pointer is called **Weak** and it's a weak reference in an _Rc_.

Let's see a basic usage of downgrading or upgrading references in an Rc (see below in the comments):

```rust

use std::rc::Rc;

// Just a strong reference

let name = Rc::new(String::from("John"));

assert_eq!(1, Rc::strong_count(&name));

// Cloning Rc is a strong reference

let _other_name = Rc::clone(&name);

assert_eq!(2, Rc::strong_count(&name));

assert_eq!(0, Rc::weak_count(&name));

// Downgrade makes it a weak reference

let weak_name = Rc::downgrade(&name);

assert_eq!(2, Rc::strong_count(&name));

assert_eq!(1, Rc::weak_count(&name));

// Upgrade makes it a strong reference again

let upgraded_name = weak_name.upgrade().unwrap();

assert_eq!(3, Rc::strong_count(&name));

assert_eq!(1, Rc::weak_count(&name));

assert_eq!("John", *upgraded_name);

```

In a linked list, the `prev` should be the "weak" reference because starting from the head, the Rc has already strong references that make the entire linked list through the `next` pointers.

Now, let's explore the final solution of this entire blogpost, using `Rc` for **shared ownership**, `RefCell` for **interior mutability** and `Rc::Weak` for preventing cyclic references in a linked list:

```rust

use std::rc::Rc;

use std::cell::RefCell;

use std::rc::Weak;

struct Node<T> {

value: T,

next: Option<Rc<RefCell<Node<T>>>>,

prev: Option<Weak<RefCell<Node<T>>>>,

}

let tail = Rc::new(RefCell::new(Node { value: 2, prev: None, next: None }));

let head = Rc::new(RefCell::new(Node { value: 1, prev: None, next: Some(Rc::clone(&tail)) }));

// Weak reference (no ownership)

tail.borrow_mut().prev = Some(Rc::downgrade(&head));

// Strong reference (shared ownership)

tail.borrow_mut().next = Some(Rc::clone(&head));

assert_eq!(1, head.borrow().value);

assert_eq!(2, head.borrow().next.clone().unwrap().borrow().value);

assert_eq!(1, tail.borrow().prev.clone().unwrap().upgrade().unwrap().borrow().value);

assert_eq!(1, tail.borrow().next.clone().unwrap().borrow().value);

```

---

## Wrapping Up

In this very post we demonstrated the fundamentals smart pointers in Rust and the problems they solve about memory management.

This post was written during a [live coding](https://www.youtube.com/watch?v=bdZe0LjDUyk) while building a doubly linked list by explaining fundamental concepts of ownership, references, borrowing and smart pointers.

I hope you had fun while learning a bit more about the _Rust ownership mental model_ as I did.

**Cheers!**

---

## References

https://doc.rust-lang.org/book/

https://en.wikipedia.org/wiki/Smart_pointer

https://ricardomartins.cc/2016/06/08/interior-mutability

https://www.youtube.com/watch?v=6VSgMbFNUuQ

| leandronsp |

1,651,518 | Hướng Dẫn Đăng Ký V99 Siêu dễ dàng | Hướng Dẫn Đăng Ký V99 Siêu dễ dàng Đăng ký #V99co đơn giản và nhanh chóng Đăng ký #v99.com hưởng... | 0 | 2023-10-31T03:11:08 | https://dev.to/v99comco/huong-dan-dang-ky-v99-sieu-de-dang-4cog | Hướng Dẫn Đăng Ký V99 Siêu dễ dàng

Đăng ký #V99co đơn giản và nhanh chóng

Đăng ký #v99.com hưởng chương trình khuyến mãi đặc biệt dành cho thành viên mới

Ưu đãi lên đến 200% giá trị số tiền bạn đã nạp ban đầu

Nhanh tay đăng ký #v99 trải nhiệm chơi game nhận ngay ưu đãi

Chi Tiết Đăng Ký CMT

Web: https://v99.com.co/dang-ky-v99/

Fanpage: https://www.facebook.com/v99comco

Mail: v99comco@gmail.com

Địa chỉ: 157 Ng. 86 P. Hào Nam, Chợ Dừa, Đống Đa, Hà Nội, Việt Nam

Sđt: 09453077445

**#v99 #v99comco #codeV99**

| v99comco | |

1,651,794 | TypeScript Type Utilities: Extracting Array Element Types | TypeScript provides the ability to create custom type utilities that can simplify complex type... | 25,236 | 2023-10-31T08:58:44 | https://dev.to/kuncheriakuruvilla/typescript-type-utilities-extracting-array-element-types-2mg | typescript, programming, webdev | TypeScript provides the ability to create custom type utilities that can simplify complex type operations. In this blog post, we'll explore a custom type utility called `ArrayElement`, which is designed to extract the element type of an array. We'll also demonstrate how it can be utilized in practical scenarios.

**The `ArrayElement` Type Utility**

Let's begin by taking a closer look at the `ArrayElement` type utility:

```typescript

type ArrayElement<ArrayType extends readonly unknown[]> =

ArrayType extends readonly (infer ElementType)[] ? ElementType : never;

```

At first glance, this code might appear a bit cryptic, but we'll break it down step by step.

The `ArrayElement` type utility is a generic type that expects an array type, denoted as `ArrayType`, as its parameter. Its primary purpose is to determine the data type of the elements within the array.

**Conditional Typing**

One of the powerful features of TypeScript is conditional typing, and this is where it comes into play in the `ArrayElement` type. The conditional expression `ArrayType extends readonly (infer ElementType)[]` checks if the `ArrayType` meets the criteria of an array represented as `readonly (infer ElementType)[]`. The `readonly` keyword ensures that the array is read-only, making it compatible with most TypeScript arrays.([Read more](https://www.typescriptlang.org/docs/handbook/2/conditional-types.html))

**Extracting the Element Type**

If the `ArrayType` satisfies the condition, TypeScript proceeds to infer the element type of the array and assigns it to the variable `ElementType`. In essence, this step extracts the type of the elements contained within the array.

**Using the `never` Type**

On the other hand, if the `ArrayType` does not match the pattern of a read-only array, TypeScript uses the `never` type. The `never` type is a TypeScript construct indicating that a type does not exist or is not valid in the given context. In this case, it signifies that the `ArrayType` is not an array as per the defined criteria.

**Practical Usage**

Now that we've examined the `ArrayElement` type utility, let's see how it can be applied in practical scenarios.

Consider the following example:

```typescript

type NumbersArray = Array<number>;

const numbers: NumbersArray = [10, 20, 30];

const number: ArrayElement<NumbersArray> = 12;

```

In this example, we define a type called `NumbersArray`, which represents an array containing numbers. We then initialize an array called `numbers` with values 10, 20, and 30, and TypeScript ensures that it adheres to the defined `NumbersArray` type.

Next, we declare a constant variable named `number` and assign it the value 12. TypeScript's type inference capabilities come into play here. By using the `ArrayElement` type utility with `NumbersArray` as the generic type parameter, TypeScript correctly deduces that `number` should have a type of `number`. This showcases how the `ArrayElement` utility can be used to ensure that a variable is of the expected element type.

**Conclusion**

Custom type utilities, like the `ArrayElement` type we've explored here, can greatly enhance the type safety and expressiveness of your TypeScript code. By utilizing conditional typing and type inference, you can create more robust and maintainable TypeScript applications. Whether you're building web applications, libraries, or APIs, TypeScript's type system offers valuable tools to keep your code reliable and error-free. | kuncheriakuruvilla |

1,651,905 | Orkes Monthly Highlights - October 2023 | Welcome to the October edition of Orkes Monthly Highlights. Let's delve into the captivating events... | 0 | 2023-10-31T10:35:44 | https://orkes.io/blog/orkes-monthly-highlights-oct-2023/ | newsletter, orchestration, microservices | Welcome to the October edition of Orkes Monthly Highlights.

Let's delve into the captivating events that occurred in October 2023 and an exclusive glimpse of what's in store for the upcoming months.

## Product Updates

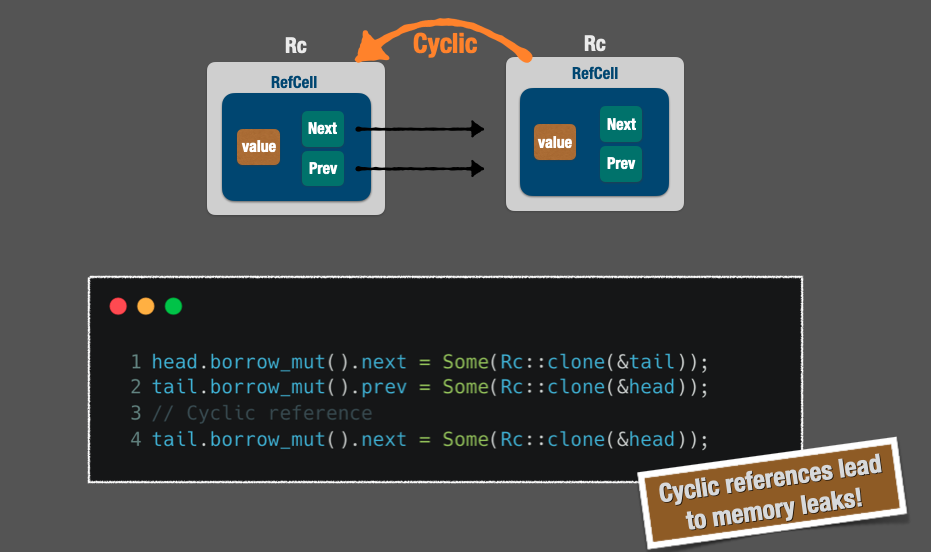

### System Task - Update Secret

Orkes Conductor has built-in support for handling [secrets](https://orkes.io/content/developer-guides/secrets-in-conductor), allowing you to securely store crucial sensitive keys and values that should not be openly exposed in your workflow definitions. However, there may be specific scenarios where you must dynamically provide these secret keys to your workflows. In such cases, you can modify the existing secret values to align with your business processes by utilizing the new “Update Secret” system task. [Learn more](https://orkes.io/content/reference-docs/system-tasks/update-secret).

### Upgrade Workflow API

Previously, you couldn’t update the workflow version of a running execution. To tackle this, we have added a new API that upgrades a running workflow to a newer version. [Learn more](https://orkes.io/content/reference-docs/api/workflow/upgrade-workflow).

### Get Workflow Definition API

We have added support for the following new APIs:

- [Get All Workflow Definitions](https://orkes.io/content/reference-docs/api/metadata/get-all-workflow-definitions)

- [Get Workflow Definition](https://orkes.io/content/reference-docs/api/metadata/get-workflow-definition)

## Community Updates

### 12K Stars on Netflix Conductor GitHub Repository

We celebrated a remarkable milestone in September when the [Netflix Conductor GitHub repository](https://github.com/Netflix/conductor) reached 10k stars. It was a momentous achievement for our DevRel team. Just a month later, we're thrilled to announce that we've surpassed 12k stars! ⭐🎉

None of this would have been possible without the incredible support of our Conductor community. On behalf of Conductor’s maintainer team, we extend our heartfelt gratitude for your contributions, and together, let's maintain this incredible momentum.

## Recap of October 2023 Events

### QCon San Francisco

**Oct 6, 2023: San Francisco, US**

We were at [QCon San Francisco](https://qconsf.com/) on Oct 6, 2023, where our Head of DevRel [Olivier Poupeney](https://www.linkedin.com/in/olivierpoupeney/) delivered an informative workshop about orchestration patterns and Conductor and how it helps develop applications that span multiple languages, teams, and personas ranging from developers to SREs and product managers.

### GDG DevFest Ranchi

**Oct 8, 2023: Ranchi, India**

We kick-started the DevFest series of the year with [GDG DevFest Ranchi](https://gdg.community.dev/events/details/google-gdg-ranchi-presents-devfest-ranchi-2023/) on Oct 8, 2023, at Birla Institute of Technology, Ranchi, India. [Sangeeta Gupta](https://www.linkedin.com/in/sangee-gupta/), Community Programs Manager & [Nitta Mathew](https://www.linkedin.com/in/nittamathew/), DevOps Engineer at Orkes, delivered an insightful session on unleashing the power of microservices in modernizing applications.

We hopped on to the fundamentals of microservices, their benefits, and how they can be utilized to build robust and scalable applications.

### GITEX - Expand North Star

**Oct 15-18, 2023: Dubai, UAE**

We were delighted to be part of the 43rd edition of [Gitex Global 2023](https://www.gitex.com/) from Oct 15-18, 2023, in Dubai, UAE. It was our debut event in the Middle East region, and we were part of the [Expand North Star](https://www.expandnorthstar.com/), renowned as the world’s largest gathering for startups and investors.

It was a fantastic opportunity to engage with prospects, learn more about their needs, and forge new partnerships. We would like to thank everyone who visited us at Gitex, and look forward to continuing the conversations!

### New York Weaviate Meetup

**Oct 27, 2023: New York, US**

We were part of the [Weaviate Meetup](https://www.meetup.com/weaviate-nyc/events/296401093/) in New York on Oct 27, 2023, from 06:00 PM - 09:00 PM EDT at 2 Orchard St, 2 Floor, NY 10002. Our Head of DevRel, [Olivier Poupeney](https://www.linkedin.com/in/olivierpoupeney/), delivered a session on “Orchestration in Retrieval-Augmented Generation”, where he tapped into AI Orchestration with Orkes Conductor.

### Cloud Native Day Pune

**Oct 28, 2023: Pune, India**

We wrapped up October with [Cloud Native Day Pune](https://www.cloudnativepune.com/), a community-organized event dedicated to helping, growing, and sustaining the cloud-native community.

We were thrilled to partner with Cloud Native Day, an event focused on emerging technologies such as GitOps, WASM, Docker, Kubernetes, Cloud Sustainability, GenAI, GPT, DevOps, and more.

## Join our Upcoming Events

### Open Source Finance Forum New York

**Nov 1, 2023: New York, US**

Bringing together experts across financial services, technology, and the open source community, [Open Source in Finance Forum](https://dev.events/conferences/open-source-in-finance-forum-new-york-new-york-10-2023) is the conference dedicated to innovation and collaboration in financial services through open source software and standards.

Our Head of DevRel, [Olivier Poupeney](https://www.linkedin.com/in/olivierpoupeney/), will deliver a session on “_[Implementing Resilient Financial Workflows using Netflix Conductor](https://osff2023.sched.com/event/1PzGU/implementing-resilient-financial-workflows-using-netflix-conductor-olivier-poupeney-orkes?iframe=no)_” on **Nov 1, 2023**, from **12:00 PM to 12:30 PM**.

[Register now](https://dev.events/conferences/open-source-in-finance-forum-new-york-new-york-10-2023)

### Microservices & Distributed Applications Meetup Australia

**Nov 2, 2023: Sydney, Australia**

We are excited to announce our next in-person Microservices & Distributed Applications meetup, which will be held on **Nov 2, 2023**, from **04:30 PM - 07:30 PM AEST** at _Amazon Web Services Australia Pty Ltd Level 5 – Conf Room 202, 2 Park Street Sydney, NSW 2000_.

Join us to discover exclusive strategies and insights for real-world application development, harness the power of Conductor, the open-source orchestration engine from Netflix, and connect with fellow tech enthusiasts to stay at the forefront of industry trends.

Let’s buckle up for the Sydney meetup to unlock the secrets to building scalable distributed applications with Microservices.

[Register now](https://orkes.io/meetups/microservices-and-orkes-conductor-meetup-australia)

### HackCBS

**Nov 4-5, 2023: Delhi, India**

We are excited to partner with [HackCBS](https://hackcbs.tech/), India’s largest student-run Hackathon, from **Nov 4-5, 2023**, at _Shaheed Sukhdev College of Business Studies in Delhi, India_. With a wide variety of themes spanning from FinTech to Web3, this hackathon provides you with an opportunity to learn new skills and network with industry experts & leaders.

Orkes team will be there to help you kickstart and build exciting projects with Conductor. Exclusive prizes await the winners. Block your seats now and be part of this revolutionary project.

[Register now](https://hackcbs.tech/)

### DevFest Durgapur

**Nov 5, 2023: Durgapur, India**

With the DevFest season being continued, we are hereby proudly announcing our collaboration with DevFest Durgapur, the biggest event in the region for developers and techies in India. [DevFest Durgapur](https://gdg.community.dev/events/details/google-gdg-durgapur-presents-devfest-durgapur-2023/) is set to take place on November 5, 2023, in Durgapur, India. Don’t miss this exciting opportunity to collaborate and network with regional developers.

[Register now](https://gdg.community.dev/events/details/google-gdg-durgapur-presents-devfest-durgapur-2023/)

### Microservices and Orkes Conductor Meetup Singapore

**Nov 8, 2023: Singapore**

We’re back in Singapore for another exciting edition of Microservices and Orkes Conductor Meetup on **Nov 8, 2023**, from **05:00 PM - 08:00 PM SGT** at _AWS Singapore, 23 Church St, #10-01, Singapore_.

Join us for a gathering of enthusiastic developers dedicated to microservice-based applications. In addition to highlighting the strategies and insights on real-world scalable applications development, this edition dives into AI orchestration and how to incorporate Conductor for intelligent, automated decision-making in daily business operations. Secure your spot today!

[Block your seats now](https://orkes.io/meetups/microservices-and-orkes-conductor-meetup-singapore)

## Recent Blog Posts

- [4 Microservice Patterns Crucial in Microservices Architecture](https://orkes.io/blog/4-microservice-patterns-crucial-in-microservices-architecture/)

| rizafarheen |

1,652,107 | JSON is Slower. Here Are Its 4 Faster Alternatives | Edit 2: Lots of insightful comments at the bottom, do give them a read, too, before going with any... | 0 | 2023-10-31T13:14:13 | https://dev.to/nikl/json-is-slower-here-are-its-4-faster-alternatives-2g30 | go, programming, javascript, json | Edit 2: Lots of insightful comments at the bottom, do give them a read, too, before going with any alternatives!

Edit 1: Added a new take on 'Optimizing JSON Performance' from comments

---

Your users want instant access to information, swift interactions, and seamless experiences. JSON, short for JavaScript Object Notation, has been a loyal companion for data interchange in web development, but could it be slowing down your applications? Let's dive deep into the world of JSON, explore its potential bottlenecks, and discover faster alternatives and optimization techniques to make your apps sprint like cheetahs.

---

You might want to check this tutorial too: [Using Golang to Build a Real-Time Notification System - A Step-by-Step Notification System Design Guide](https://dev.to/nikl/using-golang-to-build-a-real-time-notification-system-a-step-by-step-notification-system-design-guide-50l7)

---

### What is JSON and Why Should You Care?

Before we embark on our journey to JSON optimization, let's understand what JSON is and why it matters.

JSON is the glue that holds together the data in your applications. It’s the language in which data is communicated between servers and clients, and it’s the format in which data is stored in databases and configuration files. In essence, JSON plays a pivotal role in modern web development.