id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,675,702 | Image Upload in React Native | The ImagePicker is an Expo plugin designed to simplify the process of uploading images or capturing... | 0 | 2023-11-22T20:55:59 | https://dev.to/joey_clapton/implementing-image-upload-in-react-native-5ddf | The ImagePicker is an Expo plugin designed to simplify the process of uploading images or capturing photos using the camera without the need to directly handle complex camera or gallery APIs.

### Compatibility

The ImagePicker is compatible with the following platforms:

- Android Device

- Android Emulator

- iOS Device

- iOS Simulator

- Web Platform

### Installation

```bash

npx expo install expo-image-picker

```

### Configuring the plugin

To configure the plugin, we will go to the app.json file and add the following configuration:

```json

{

"expo": {

"plugins": [

[

"expo-image-picker",

{

"photosPermission": "The app need accesses your photos."

}

]

]

}

}

```

In the photosPermission property, we will set the message displayed to the user, requesting approval to access the camera feature.

### Implementing Image Upload Using the Mobile Gallery

```tsx

import react, { useState } from "react";

import { Button, Image, View } from "react-native";

// Let's import the library.

import * as ImagePicker from "expo-image-picker";

export default function App() {

const [image, setImage] = useState<string>();

const pickImage = async () => {

// No permission is required to open the gallery.

const result = await ImagePicker.launchImageLibraryAsync({

// The mediaTypes property defines which type of file is allowed.

mediaTypes: ImagePicker.MediaTypeOptions.All,

// Quality sets the image quality and accepts values from 0 to 1.

// 0 represents lower quality/smaller size, and 1 represents higher quality/larger size.

quality: 1,

});

console.log(result);

// Let's receive the image if the user doesn't close the photo gallery.

if (!result.canceled) {

const { uri } = result.assets[0];

setImage(uri);

}

};

return (

<View style={{ flex: 1, alignItems: "center", justifyContent: "center" }}>

<Button title="Pick an image from camera roll" onPress={pickImage} />

{image ? (

<Image

source={{ uri: image as string }}

style={{ width: 200, height: 200 }}

/>

) : null}

</View>

);

}

```

GitHub with the complete project code: https://github.com/joeyclapton/image-picker-example

#### References

- https://docs.expo.dev/versions/latest/sdk/imagepicker/

- https://docs.expo.dev/tutorial/image-picker/ | joey_clapton | |

1,676,001 | Typescript postman install in ubuntu/linux | postman-linux-x64.tar.gz Great! If you have a new version of the Postman application in the form of... | 0 | 2023-11-23T06:54:35 | https://dev.to/karakib2k18/postman-install-in-ubuntulinux-5086 | postman, webdev, express, typescript | postman-linux-x64.tar.gz

Great! If you have a new version of the Postman application in the form of a `postman-linux-x64.tar.gz` file, you can follow these steps to install and run it:

1. **Extract the Tarball:**

Navigate to the directory where the tarball is located and extract its contents:

```bash

tar -xzf postman-linux-x64.tar.gz

```

This command will extract the contents of the tarball into a new directory, likely named something like `Postman`.

2. **Navigate to the Postman Directory:**

Change into the newly created directory:

```bash

cd Postman

```

3. **Run Postman:**

Inside the `Postman` directory, you should find the executable file. Run it:

```bash

./Postman

```

If you encounter any permission issues, you might need to add execute permissions to the Postman executable:

```bash

chmod +x Postman

```

Then try running it again.

4. **Optional: Create a Desktop Shortcut:**

To make it easier to access Postman, you can create a desktop shortcut. You can do this manually or check if the installation provides an option to create a shortcut during the installation process.

```bash

cp Postman.desktop ~/.local/share/applications/

```

This command copies a desktop file to the appropriate location for desktop shortcuts.

Now, you should have the Postman application running on your system. If you encounter any issues during the installation or have specific instructions provided with the new version, make sure to follow those instructions for the best results.

If you have any further questions or run into any issues, feel free to let me know!

| karakib2k18 |

1,676,082 | Tô màu hoa hướng dương và tạo nên không gian sống nghệ thuật | Mỗi buổi sáng, khi tia nắng đầu tiên của ngày mới ban mai chiếu rọi, tôi thường nhìn thấy những bức... | 0 | 2023-11-23T08:41:26 | https://dev.to/tomaubonghoa/to-mau-hoa-huong-duong-va-tao-nen-khong-gian-song-nghe-thuat-574i | Mỗi buổi sáng, khi tia nắng đầu tiên của ngày mới ban mai chiếu rọi, tôi thường nhìn thấy những bức tranh sống động và tràn ngập sức sống của những đóa hoa hướng dương trong khu vườn nhỏ của mình. Đó là một khoảnh khắc tuyệt vời, nơi mà tôi cảm nhận được vẻ đẹp của tự nhiên và cảm giác hòa mình vào không gian nghệ thuật tinh tế.

Xem Chi Tiết Tại: [](https://tomautructuyen.vn/to-mau-hoa-huong-duong/)

Những đóa hoa hướng dương không chỉ là những bức tranh sống động, mà còn là nguồn cảm hứng lớn để tô điểm cho không gian sống của tôi. Tôi quyết định tô màu những bức tranh này bằng cách sử dụng những gam màu ấm áp và tươi sáng, tạo nên một bức tranh nghệ thuật tự nhiên độc đáo. Việc tô màu không chỉ là một hình thức sáng tạo mà còn là cách để tôi thể hiện tình yêu và đam mê của mình đối với nghệ thuật.

Khi tôi bắt đầu tô màu, từng đường nét trở nên sống động dưới đầu cọ của tôi. Gam màu vàng óng ánh của những bông hoa hướng dương mang lại không gian ấm cúng và tươi mới cho khu vườn. Tôi chọn những gam màu xanh mát để tô điều các lá cây xung quanh, tạo nên sự cân bằng và hài hòa tự nhiên. Bức tranh ngày càng trở nên phong cách và sáng tạo, giống như một tác phẩm nghệ thuật đang mở ra trước mắt tôi.

Không chỉ giới hạn việc tô màu trong giấy và tranh vẽ, tôi quyết định mở rộng không gian nghệ thuật của mình vào không gian sống. Bức tranh hoa hướng dương không chỉ là một tác phẩm nghệ thuật độc lập mà còn là một phần của trang trí nội thất, tô điểm cho căn phòng với vẻ đẹp ấn tượng và tinh tế.

Việc tô màu hoa hướng dương và tạo nên không gian sống nghệ thuật không chỉ là sở thích cá nhân của tôi mà còn là cách tôi tìm thấy niềm vui và ý nghĩa trong cuộc sống hàng ngày. Mỗi bức tranh là một câu chuyện riêng, làm cho không gian sống của tôi trở nên độc đáo và phản ánh tâm hồn của người sáng tạo. Đó không chỉ là nghệ thuật trên giấy mà còn là nghệ thuật sống, nơi mà mỗi chi tiết nhỏ đều được chăm sóc và yêu thương, tạo nên một không gian sống tràn ngập sức sống và nghệ thuật.

Name: Tô Màu Trực Tuyến

Website: [](https://tomautructuyen.vn/)

Phone: 0961 090 061

Tags: #tomau , #tranhtomau, #tomautructuyen, #tranhtomauchobe # tomauhoahuongduong

Email: tomautructuyen@gmail.com

| tomaubonghoa | |

1,676,315 | Python Online Training: Master Python Programming and Earn Certification | Introduction Python Online Training: Master Python Programming and Earn Certification Introduction:... | 0 | 2023-11-23T12:10:57 | https://dev.to/leoanthony/python-online-training-master-python-programming-and-earn-certification-2on9 | python, programming, beginners | **Introduction**

Python Online Training: Master Python Programming and Earn Certification Introduction: Do you want to become a Python pro? Our online training program offers a comprehensive curriculum to help you master Python programming from scratch. Whether you are a beginner or an experienced developer, this course is designed to give you the skills and knowledge needed to excel in the world of Python. Join us now and unlock the doors to a bright future filled with endless possibilities in the world of [Python Online Training](https://www.h2kinfosys.com/courses/python-online-training/).

**Why Learn Python?**

Python, the language of choice for those who love simplicity and flexibility. But wait, there's more! Python holds the key to a wide range of opportunities in the tech industry. From web development to data science, and even automation, Python has got your back. So, hop on the Python bandwagon and open doors to a world of endless possibilities. Not to mention, Python’s clean syntax and readability make it a joy to work with (unlike some other programming languages). Plus, Python has an ever-growing community that provides support and resources at your fingertips.

Don't miss out - join the Python revolution today! Okay, so maybe Python won't turn you into a superhero overnight, but it will definitely give you some superpowers in the coding world. And who knows, maybe one day you'll be able to code your way out of any situation, just like Tony Stark. So, what are you waiting for? Enroll in [Python online certification training](https://www.h2kinfosys.com), master the language, and earn your certification. Trust us, it's worth it. Python is everywhere, and you'll be equipped with the skills to conquer any coding challenge that comes your way. Embrace the power of Python and watch your career soar to new heights. PS: With Python, you can finally say goodbye to those frustrating syntax errors and endless debugging sessions. Debugging? Ain't nobody got time for that!

**Python Basics**

Are you tired of spending hours trying to figure out a programming language? Well, look no further because Python is here to save the day! With Python Basics, you'll learn all the essential elements that make Python so lovable – and trust me, there's a lot to love! First, let's talk about variables and data types. Picture this: you have a box, and you want to put something in it. Well, in Python, that box is a variable, and it can hold anything from numbers to text or even entire collections of data. It's like having a real-life Mary Poppins bag! Now, onto control structures.

Just like a traffic light, Python can make decisions. With if statements, you can tell your program to do something only if a certain condition is met. Imagine having that power in real-life situations – no more long queues at the grocery store! Lastly, we have functions. Think of them as mini superheroes that can perform specific tasks. They take inputs, do their thing, and give you an output. It's like having a personal assistant who can calculate your taxes, write emails, and even walk your dog if you want! So, with Python Basics, you'll conquer variables and data types, control structures, and functions like a boss. Whether you want to analyze data, build web applications, or automate mundane tasks, Python has got you covered. Trust me, Python is your new best friend in the world of programming!

**Python Libraries and Frameworks**

Python Libraries and Frameworks: Python is not just limited to being a simple programming language. It offers a vast number of libraries and frameworks that make your life as a developer a whole lot easier. Let's take a closer look at some of the key Python libraries and frameworks you should definitely get your hands on!

**1. NumPy:** NumPy, short for "Numerical Python," is a fundamental library for scientific computing in Python. It provides support for large, multi-dimensional arrays and matrices, along with a collection of mathematical functions to operate on these arrays efficiently. NumPy is a go-to choice for tasks such as data manipulation, linear algebra, random number generation, and much more. With NumPy, crunching numbers becomes a piece of cake!

**2. Pandas:** Pandas is another essential Python library that specializes in data manipulation and analysis. It offers data structures like DataFrames, which allow you to organize, filter, and dissect data effortlessly. Pandas also provides a plethora of functions and methods to handle missing data, merge datasets, perform statistical operations, and so on. With Pandas, mastering data analysis becomes a walk in the park!

**3. Django:** If you have dreams of becoming a web developer, Django is a framework you must acquaint yourself with. Django is a high-level web framework that simplifies the process of building web applications. It follows the model-view-controller (MVC) architectural pattern and comes packed with a range of features like URL routing, database connectivity, templating engine, and much more. Django empowers you to create robust, scalable, and secure web applications with ease.

Python libraries and frameworks like NumPy, Pandas, and Django are just the tip of the iceberg. As you delve deeper into Python, you'll discover a treasure trove of valuable tools that can make your programming journey smooth and enjoyable. So, buckle up, embrace Python, and unlock a world of endless possibilities! Now, go ahead and dive into the enchanting world of [Python libraries and frameworks](https://docs.google.com/document/d/e/2PACX-1vTnDVOcaMZZ87Ly3f0Ls3_ZGS0hf9OG-ZiyPLvCNled1_pt9zqZ_Ife_7KtUyvlBddA8RFr-dJXWjI5/pub). Trust me, it's a fascinating adventure you won't regret!

**Conclusion**

Python is a versatile programming language that offers numerous opportunities in various fields such as data science, web development, and automation. With Python, you can master the art of coding while enjoying the flexibility it provides. Whether you want to analyze data, build websites, or automate repetitive tasks, Python has got your back. So why wait? Sign up for Python online training and embark on a journey to becoming a Python pro.

| leoanthony |

1,676,351 | Free Mobile Game Development Procedure For Beginners | Recent years have increased hobbies within the creative and attractive sport improvement discipline.... | 0 | 2023-11-23T13:09:32 | https://dev.to/nostra/free-mobile-game-development-procedure-for-beginners-la | nostra, nostragames, gamedev, mobilegames | Recent years have increased hobbies within the creative and attractive sport improvement discipline. A developing range of humans are interested in creating their video games due to the buzz surrounding cellular gaming and the video game enterprise's explosive growth. However, it might not be easy to start game production, specifically for individuals who are not experienced in the area. We made the Ultimate Guide to [**Free Mobile Games Development**](https://nostra.gg/) to assist you in holding close to the guidelines and strategies they had to make their video games.

This article will cover all of the information required to create video games. It will guide you on the various platforms available for growing video games and how to select one. Furthermore, it publishes recreation layout, overlaying topics including degree layout and recreation mechanics. We will go through the many programming languages and software equipment available for game development and the importance of trying out and debugging your Free Mobile Games. Last but not least, it offers recommendations on the way to market and launch your games to the biggest target audience viable.

#### **Procedure for Game Development**

The technique of making Free Mobile Games are examined in detail below:

**1. Pitching Ideas**

The theory of the sport concept takes place at this preliminary level of the development procedure. Game designers expand the overall concept, gameplay mechanics, plot, and supposed target audience.

**2. Pre-Production**

In this segment, a complete method is created via the development crew. A design file that describes the Free Mobile Games mechanics, tale, situation, characters, and consumer interface is needed. They additionally create a challenge plan, finances, and manufacturing agenda.

**3. Post-Production**

The most labor-intensive and time-consuming phase of sports improvement is production. It involves designing the consumer interface, coding the gaming mechanics, and producing the sport's artwork, sound, and music. The development crew can also produce an alpha version of the sport or prototype to check the personal interface and gameplay mechanics.

**4. Testing**

The development group drastically tests the game after the finishing touch to ensure it's dependable and error-free. Internal trying out with the aid of the development team is blended with external testing by way of beta testers or awareness companies.

**5. Release**

After all, bugs are constant and trying out is over; the sport is prepared for release. This entails developing promotional content, including screenshots and trailers, and submitting the game to online shops like Steam or the App Store.

**6. Post-Launch**

The development crew continues to maintain the game after it's been launched using presenting patches and updates to restore any problems or problems that would arise. They may launch extra cloth and new characters or tiers as downloadable DLC.

**7. Game Design**

It impacts the gameplay mechanics, narrative, characters, and usual revel in; the game layout is crucial to the game introduction. It's a complex and disturbing method that requires technical understanding, creativity, and a deep comprehension of the target marketplace.

**8. Explain The Game's Mechanisms**

Game mechanics are the tactics and recommendations that govern **[Free Mobile Games](https://youtu.be/N1jpTSVlXFg)**. This consists of gameplay elements, including movement, combating, solving riddles, and more. Since those mechanisms will impact the gameplay, they must be detailed early in the sport design process.

**9. Create The Narrative**

The story that propels the game is called the plot. It must be fascinating and have a clear starting, center, and end. Additionally, the storyline wishes to match the gameplay.

**10. Design The Personas**

The non-player characters (NPCs) and avatars the player interacts with in the game are considered. They ought to have distinct personalities and objectives, be well-designed, and be remembered. The man or woman designs should also mesh nicely with the plot and gaming mechanics.

**11. Create the Levels**

The settings wherein gameplay takes vicinity are referred to as degrees. They must be artistically appealing, thoughtfully built, and difficult enough to keep players interested. The plot and gaming mechanics ought to move nicely with the level design.

**12. Make The UI Development**

The interactive and visual layout that the participant interacts with whilst playing the sport is known as the user interface. It ought to be easy to apply and offer unambiguous feedback to the person.

**Conclusion**

The procedure of making a Free Mobile Games is complex and fascinating, requiring a mixture of technical skill, creativity, and perseverance. Whether you are a beginner or an expert developer, there are usually new hints and techniques to sharpen your recreation development capabilities. With the assistance of the hints and strategies in this complete guide, novice builders may also begin developing their video games, even as pro creators can increase their abilities and create even more engaging video games. | nostra |

1,676,715 | Benchmark TypeScript Parsers: Demystify Rust Tooling Performance | TL;DR: Native parsers used in JavaScript are not always faster due to extra work across languages.... | 0 | 2023-11-23T20:05:31 | https://dev.to/herrington_darkholme/benchmark-typescript-parsers-demystify-rust-tooling-performance-2go8 | webdev, javascript, rust, node | > TL;DR: Native parsers used in JavaScript are not always faster due to extra work across languages. Avoiding these overhead and using multi-core are crucial for performance.

**Rust** is rapidly becoming a language of choice within the JavaScript ecosystem for its performance and safety features. However, integrating Rust into JavaScript tooling presents unique challenges, particularly when it comes to designing an efficient and portable plugin system.

> "Rewriting JavaScript tooling in Rust is advantageous for speed-focused projects that do not require extensive external contributions." - [Nicholas C. Zakas, creator of ESLint](https://twitter.com/slicknet/status/1726663311541100626)

Learning Rust can be daunting due to its steep learning curve, and distributing compiled binaries across different platforms is not straightforward.

A Rust based plugins necessitates either static compilation of all plugins or a carefully designed application binary interface for dynamic loading.

These considerations, however, are beyond the scope of this article. Instead, we'll concentrate on how to provide robust tooling for writing plugins in JavaScript.

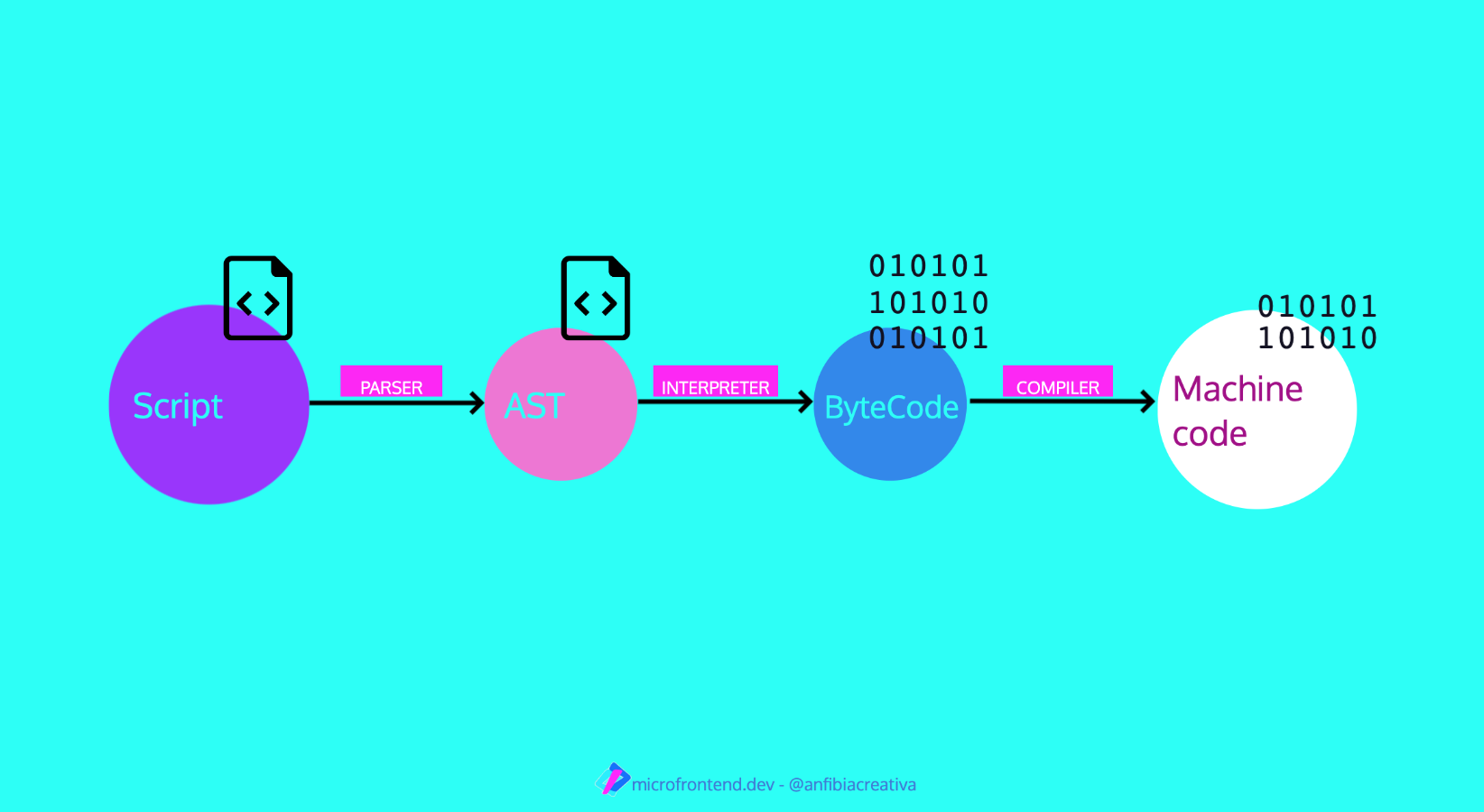

A critical component of JavaScript tooling is the parsing of source code into an Abstract Syntax Tree (AST). Plugins commonly inspect and manipulate the AST to transform the source code. Therefore, it's not sufficient to parse in Rust alone; we must also make the AST accessible to JavaScript.

This post will benchmark several popular TypeScript parsers implemented in JavaScript, Rust, and C.

## Parser Choices

While there are numerous JavaScript parsers available, we focus on TypeScript parsers for this benchmark. Modern bundlers must support TypeScript out-of-the-box, and TypeScript is a superset of JavaScript. Benchmarking TypeScript is a sensible choice to emulate the real-world bundler workload.

The parsers we're evaluating include:

- **[Babel](https://babeljs.io/)**: The Babel parser (previously Babylon) is a JavaScript parser used in Babel compiler.

- **[TypeScript](https://www.typescriptlang.org/)**: The official parser implementation from the TypeScript team.

- **[Tree-sitter](https://tree-sitter.github.io)**: An incremental parsing library that can build and update concrete syntax trees for source files, aiming to parse any programming language quickly enough for *text editor use*.

- **[ast-grep](https://ast-grep.github.io/)**: A CLI tool for code structural search, lint, and rewriting based on abstract syntax trees. We are using its [napi binding](https://github.com/ast-grep/ast-grep/tree/main/crates/napi) here.

- **[swc](https://swc.rs/)**: A super-fast TypeScript/JavaScript compiler written in Rust, with a focus on performance and being a library for both Rust and JavaScript users.

- **[oxc](https://oxc-project.github.io/)**: The Oxidation Compiler is a suite of high-performance tools for JS/TS, claiming to have the fastest and most conformant parser written in Rust.

## Native Addon Performance Characteristics

Before diving into the benchmarks, let's first review the performance characteristics of Node-API based solutions.

**Node-API Pros:**

- **Better Compiler Optimization:** Code in native languages have compact data layouts, leading to fewer CPU instructions.

- **No Garbage Collector Runtime Overhead:** This allows for more predictable performance.

However, Node-API is not a silver bullet.

**Node-API Cons:**

- **FFI Overhead:** The cost of interfacing between different programming languages.

- **Serde Overhead:** Serialization and deserialization of Rust data structures can be costly.

- **Encoding Overhead:** Converting JS string in utf-16 to Rust's utf-8 string can introduce significant delays.

We need to understand the pros and cons of using native node addons in order to design an insightful benchmark.

## Benchmark Design

We consider two main factors:

1. **File Size:** Different file sizes reveal distinct performance characteristics. The parsing time of an N-API based parser consists of actual parsing and cross-language overhead. While parsing time is proportional to file size, the growth of cross-language overhead depends on the parser's implementation.

2. **Concurrency Level:** Parallel parsing is not possible in JavaScript's single main thread. However, N-API based parsers can run in separate threads, either using libuv's thread pool or their own threading model. That said, thread spawning also incurs overhead.

We are not considering these factors in this post.

- **Warmup and JIT:** No significant difference observed between warmup and non-warmup runs.

- **GC, Memory Usage:** Not evaluated in this benchmark.

- **Node.js CLI arguments:** To make the benchmark representative, default Node.js arguments were used, although tuning could potentially improve performance.

## Benchmark Setup

### Testing Environment

The benchmarks were executed on a system equipped with the following specifications:

- **Operating System:** macOS 12.6

- **Processor:** arm64 Apple M1

- **Memory:** 16.00 GB

- **Benchmarking Tool:** [Benny](https://caderek.github.io/benny/)

### File Size Categories

To assess parser performance across a variety of codebases, we categorized file sizes as follows:

- **Single Line:** A minimal TypeScript snippet, `let a = 123;`, to measure baseline overhead.

- **Small File:** A concise 24-line TypeScript module, representing a common utility file.

- **Medium File:** A typical 400-line TypeScript file, reflecting average development workloads.

- **Large File:** The extensive 2.79MB `checker.ts` from the TypeScript repository, challenging parsers with a complex and sizable codebase.

### Concurrency Level

For this benchmark, we simulate a realistic workload by parsing five files concurrently. This number is an arbitrary but reasonable proxy to the actual JavaScript tooling.

It's worth noting, to seasoned Node.js developers, that this setup may influence asynchronous parsing performance. However it does not disproportionately favor Rust-based parsers. The rationale behind this is left as an exercise for the reader. :)

----

This post aims to provide a general overview of the benchmarking for TypeScript parsers, focusing on the performance characteristics of N-API based solutions and the trade-offs involved. Feel free to adjust the benchmark setup to better fit your workload.

Now, let's delve into the results of TypeScript parser benchmarking!

## Results

### Synchronous Parsing

The performance of each parser is quantified in operations per second—a metric provided by the Benny benchmarking framework. For ease of comparison, we've normalized the results:

* The fastest parser is designated as the benchmark, set at 100% efficiency.

* Other parsers are evaluated relative to this benchmark, with their performance expressed as a percentage of the benchmark’s speed.

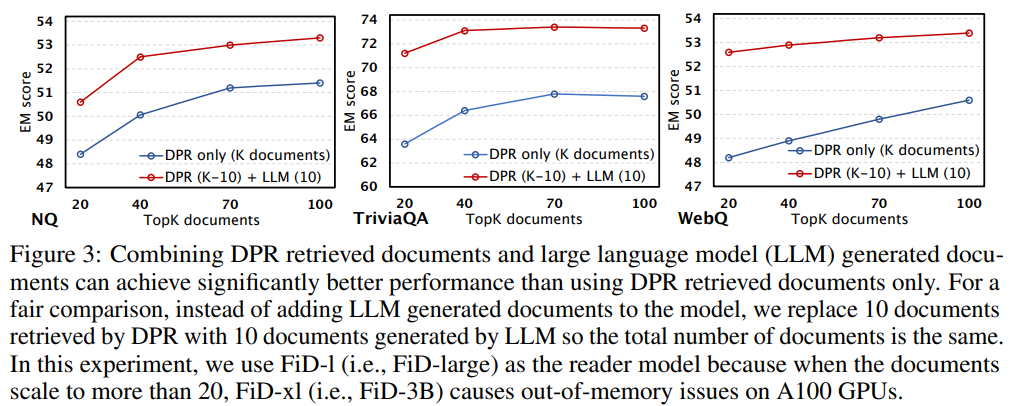

TypeScript consistently outperforms the competition across all file sizes, being twice as fast as Babel.

Native language parsers show improved performance for larger files due to the reduced relative impact of FFI overhead.

Nevertheless, the performance gains are not as pronounced due to serialization and deserialization (serde) overhead, which is proportional to the input file size.

### Asynchronous Parsing

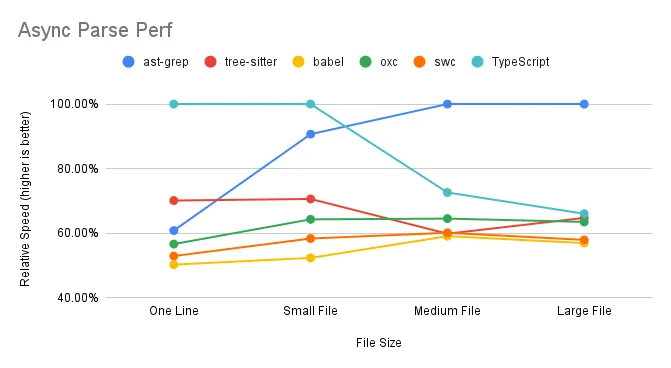

In the asynchronous parsing scenario, we observe the following:

ast-grep excels when handling multiple medium to large files simultaneously, effectively utilizing multi-core capabilities. TypeScript and Tree-sitter, however, experience a decline in performance with larger files. SWC and Oxc maintain consistent performance, indicating efficient use of multi-core processing.

### Parse Time Breakdown

When benchmarking a Node-API based program, it's crucial to understand the time spent not only executing Rust code but also the Node.js glue code that binds everything together. The parsing time can be dissected into three main components:

```

time = ffi_time + parse_time + serde_time

```

Here's a closer look at each term:

- **`ffi_time` (Foreign Function Interface Time):** This represents the overhead associated with invoking functions across different programming languages. Typically, `ffi_time` is a fixed cost and remains constant regardless of the input file size.

- **`parse_time` (Parse Time):** The core duration required for the parser to analyze the source code and generate an Abstract Syntax Tree (AST). `parse_time` scales with the size of the input, making it a variable cost in the parsing process.

- **`serde_time` (Serialization/Deserialization Time):** The time needed to serialize Rust data structures into a format compatible with JavaScript, and vice versa. As with `parse_time`, `serde_time` increases as the input file size grows.

In essence, benchmarking a parser involves measuring the time for the actual parsing (`parse_time`) and accounting for the extra overhead from cross-language function calls (`ffi_time`) and data format conversion (`serde_time`). Understanding these elements helps us evaluate the efficiency and scalability of the parser in question.

### Result Interpretation

This section offers a detailed and technical analysis of the benchmark results based on the parse time framework above. Readers seeking a high-level overview may prefer to skip ahead to the summary.

**FFI Overhead**

In both sync parsing and async parsing scenario, the "one line" test case, which is predominant FFI overhead with minimal parsing or serialization, shows TypeScript's superior performance. Surprisingly, Babel, expected to excel in this one-line scenario, demonstrates its own peculiar overhead.

As file size increases, FFI overhead becomes less significant, as it's largely size-independent. For instance, ast-grep's relative speed is 78% for a large file compared to 72% for a single line, suggesting an approximate 6% FFI overhead in synchronous parsing.

FFI overhead is more pronounced in asynchronous parsing. ast-grep’s performance drops from 72% to 60% when comparing synchronous to asynchronous parsing of a single line. The absence of a notable difference in performance for swc/oxc may be due to their [unique implementation details](https://github.com/oxc-project/oxc/blob/2d5e0d5d0775300463f36b925e2f1ce71f119b90/napi/parser/src/lib.rs#L96).

**Serde Overhead**

Unfortunately, we failed to replicate swc/oxc's blazing performance we witnessed in other applications.

Despite minimal FFI impact in “Large file” test cases, swc and oxc underperform compared to the TypeScript compiler. This can be attributed to their reliance on calling [`JSON.parse` on strings](https://github.com/swc-project/swc/blob/5d944185187402691292fdb73ea767bd580e2a52/node-swc/src/index.ts#L108) returned from Rust, which is, to our disappointment, still more efficient than direct data structure returns.

Tree-sitter and ast-grep avoid serde overhead by [returning a tree object](https://github.com/ast-grep/ast-grep/blob/1c3accfd7dccef293c480951759b86c418cde977/crates/napi/src/sg_node.rs#L297) rather than a full AST structure. Accessing tree nodes requires [invoking Rust methods](https://github.com/ast-grep/ast-grep/blob/1c3accfd7dccef293c480951759b86c418cde977/crates/napi/src/sg_node.rs#L78) from JavaScript, which distributes the cost over the reading process.

**Parallel**

Except tree-sitter, all native TS parsers have parallel support. Contrary to JS parsers, native parsers performance will not degrade when concurrently parsing larger files. This is thanks to the power of multiple cores. JS parsers suffer from CPU bound because they have to parse file one by one.

### Perf summary for parsers

The performance of each parser is summarized in the table below, which outlines the time complexity for different operations.

In the table, `constant` denotes a constant time cost that does not change with input size, while `proportional` indicates a variable cost that grows proportionally with the input size. An `N/A` signifies that the cost is not applicable.

JS-based parsers operate entirely within the JavaScript environment, thus avoiding any FFI or serde overhead. Their performance is solely dependent on the parsing time, which scales with the size of the input file.

The performance of Rust-based parsers is influenced by a fixed FFI overhead and a parsing time that grows with input size. However, their serde overhead varies depending on the implementation:

For ast-grep and tree-sitter, they have a fixed serialization cost of one tree object, regardless of the input size.

For swc and oxc, the serialization and deserialization costs increase linearly with the input size, impacting overall performance.

## Discussion

### Transform vs. Parse

While Rust-based tools are renowned for their speed in transpiling code, our benchmarks reveal a different narrative when it comes to converting code into an AST that’s usable in JavaScript.

This discrepancy highlights a critical consideration for Rust tooling authors: the process of passing Rust data structures to JavaScript is a complex task that can significantly affect performance.

It's essential to optimize this data exchange to maintain the high efficiency expected from Rust tooling.

### Criteria for Parser Inclusion

In our benchmark, we focused on parsers that offer a JavaScript API, which influenced our selection:

* **Sucrase:** Excluded due to its lack of a parsing API and [inability to produce a complete AST](https://github.com/alangpierce/sucrase#motivation), which are crucial for our evaluation criteria.

* **Esbuild/Biome:** Not included because esbuild functions primarily as a bundler, not a standalone parser. It offers transformation and build capabilities but [does not expose an AST](https://esbuild.github.io/api/#js-details) to JavaScript. Similarly, biome is a CLI application without a JavaScript API.

* **Esprima:** Not considered for this benchmark as it lacks TypeScript support, which is a key requirement for the modern JavaScript development ecosystem.

### JS Parser Review

**Babel:**

Babel is divided into two main packages: `@babel/core` and `@babel/parser`. It's noteworthy that `@babel/core` exhibits lower performance compared to `@babel/parser`. This is because the additional entry and hook code that surrounds the parser in the core package. Furthermore, the `parseAsync` function in Babel core is not genuinely asynchronous; it's essentially a synchronous parser method wrapped in an asynchronous function. This wrapper provides extra hooks but does not enhance performance for CPU-intensive tasks due to JavaScript's single-threaded nature. In fact, the overhead of managing asynchronous tasks can further burden the performance of `@babel/core`.

**TypeScript:**

The parsing capabilities of TypeScript defy the common perception of the TypeScript compiler (TSC) being slow. The benchmark results suggest that the primary bottleneck for TSC is not in parsing but in the subsequent type checking phase.

### Native Parser Review

**SWC:**

As the first Rust parser to make its mark, SWC adopts a direct approach by serializing the entire AST for use in JavaScript. It stands out for offering a broad range of APIs, making it a top choice for those seeking Rust-based tooling solutions. Despite some inherent overhead, SWC's robustness and pioneering status continue to make it a preferred option.

**Oxc:**:

Oxc is a contender for the title of the fastest parser available, but its performance is tempered by serialization and deserialization (serde) overhead. The inclusion of JSON parsing in our benchmarks reflects real-world usage, although omitting this step could significantly boost Oxc's speed.

**Tree-sitter**

Tree-sitter serves as a versatile parser suitable for a variety of languages, not specifically optimized for TypeScript. Consequently, its performance aligns closely with that of Babel, a JavaScript-focused parser implemented in JavaScript. Alas, a Rust parser is not inherently faster by default, even without any N-API overhead.

A general purpose parser in Rust may not beat a carefully hand-crafted parser in JavaScript.

**ast-grep**

ast-grep is powered by tree-sitter. Its performance is marginally faster than tree-sitter, indicating napi.rs is a faster binding than manual using C++ nan.h.

I cannot tell whether the performance gain is from napi or napi.rs but

Leveraging the capabilities of tree-sitter, ast-grep achieves slightly better performance, suggesting that napi.rs offers a more efficient binding than traditional C++ [nan.h](https://github.com/tree-sitter/node-tree-sitter/blob/master/src/parser.h) methods. While the exact source of this performance gain—whether from napi or napi.rs—is unclear, the results speak to the effectiveness of the implementation. Or put it in another way, [Broooooklyn](https://twitter.com/Brooooook_lyn) is 🐐.

### Native Parser Performance Tricks

**tree-sitter & ast-grep' Edge**

These parsers manage to bypass serde costs post-parsing by returning a Rust object wrapper to Node.js. This strategy, while efficient, can lead to slower AST access in JavaScript as the cost is amortized over the reading phase.

**ast-grep's async advantage:**

ast-grep's performance in concurrent parsing scenarios is largely due to its utilization of multiple [libuv threads](http://docs.libuv.org/en/v1.x/threadpool.html). By default, the libuv thread pool size is set to four, but there's potential to enhance performance further by [expanding the thread pool size](https://dev.to/bleedingcode/increase-node-js-performance-with-libuv-thread-pool-5h10), thus fully leveraging the available CPU cores.

## Future Outlook

As we look to the future, several promising avenues could further refine TypeScript parser performance:

- **Minimizing Serde Overhead:** By optimizing serialization and deserialization processes, such as employing Rust object wrappers, we can reduce the performance toll these operations take.

- **Harnessing Multi-core Capabilities:** Effective utilization of multi-core architectures can lead to substantial gains in parsing speeds, transforming the efficiency of our tooling.

- **Promoting AST Reusability:** Facilitating the reuse of Abstract Syntax Trees within JavaScript can diminish the frequency of costly parsing operations.

- **Shifting Workloads to Rust:** The creation of a domain-specific language (DSL) tailored for AST node querying could shift a greater portion of computational work to the Rust side, enhancing overall efficiency.

These potential improvements represent exciting opportunities to push the boundaries of Rust tooling in parsing performance.

Hope this article helps you! We can continue to innovate and deliver even more powerful tools to the developer community!

| herrington_darkholme |

1,676,733 | Step 3 - final result | Step 1 - planning Step 2 - progress Step 3 - release Technical feature of the work Issue... | 0 | 2023-12-02T00:17:57 | https://dev.to/avelynhc/step-3-final-result-1hdk | Step 1 - [planning](https://dev.to/avelynhc/step-1-let-me-plan-first-3fp0)

Step 2 - [progress](https://dev.to/avelynhc/step-2-progress-report-4ja4)

Step 3 - [release]()

-------------------------------------------------------------

**Technical feature of the work**

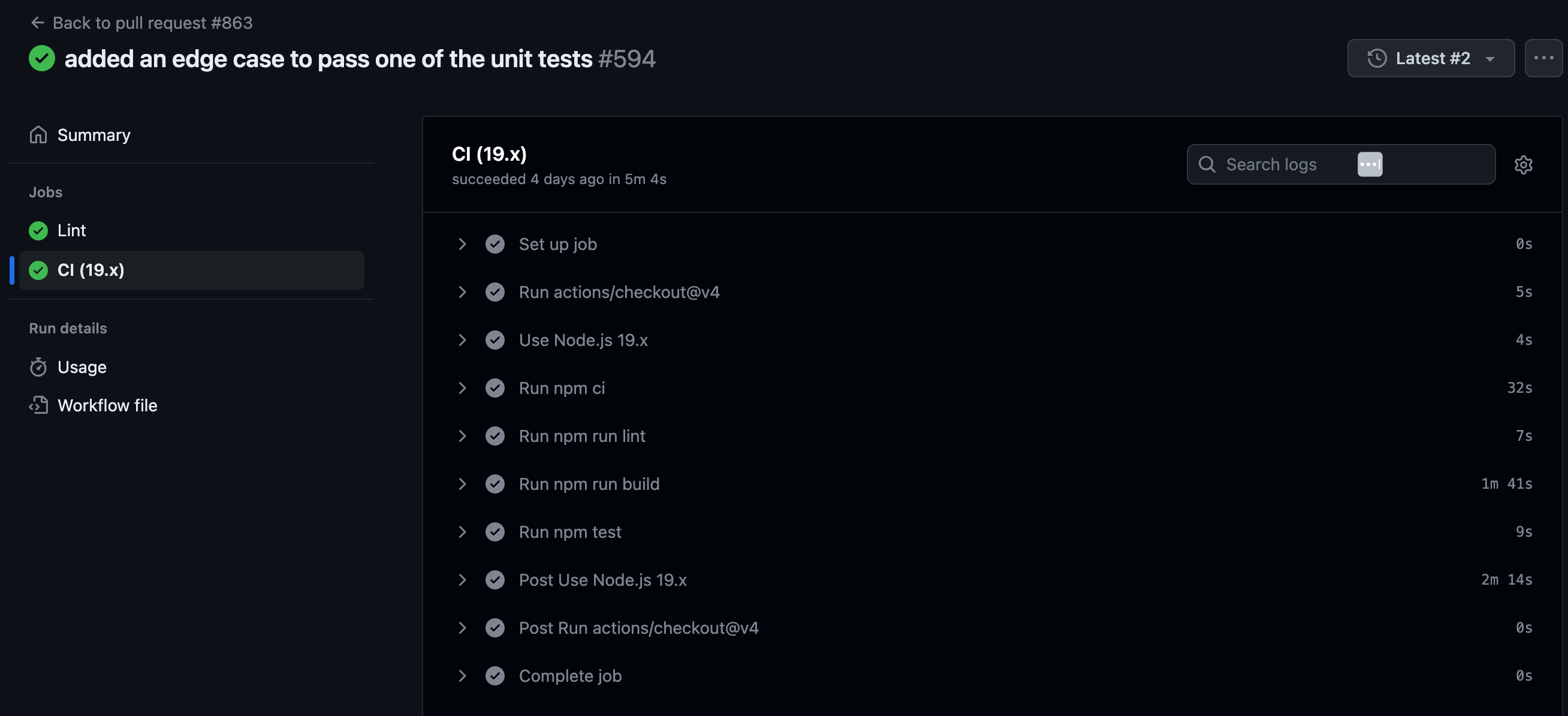

[Issue 1](https://github.com/PolicyEngine/policyengine-app/issues/852)

- added an edge case to the existing if/else statement to fix the CI failure issue.

The problem occurred as previous solution does not catch the edge case when certain variables are passed. Because of missing the edge case, else block is executed returning false, which caused CI to fail.

In the new solution, new edge case was added to continue the current `if` code flow when certain variables are passed. This edge case will be executed when array of excludedVariables includes a variable. If it does, it will delete it.

```typescript

...

let excludedVariables = ["fips", "sep"];

...

else if (excludedVariables.includes(variable)) {

delete editedHousehold[entityPlural][entity][variable];

}

...

```

_**Result**_

You can find more details in here: [PR](https://github.com/PolicyEngine/policyengine-app/pull/863)

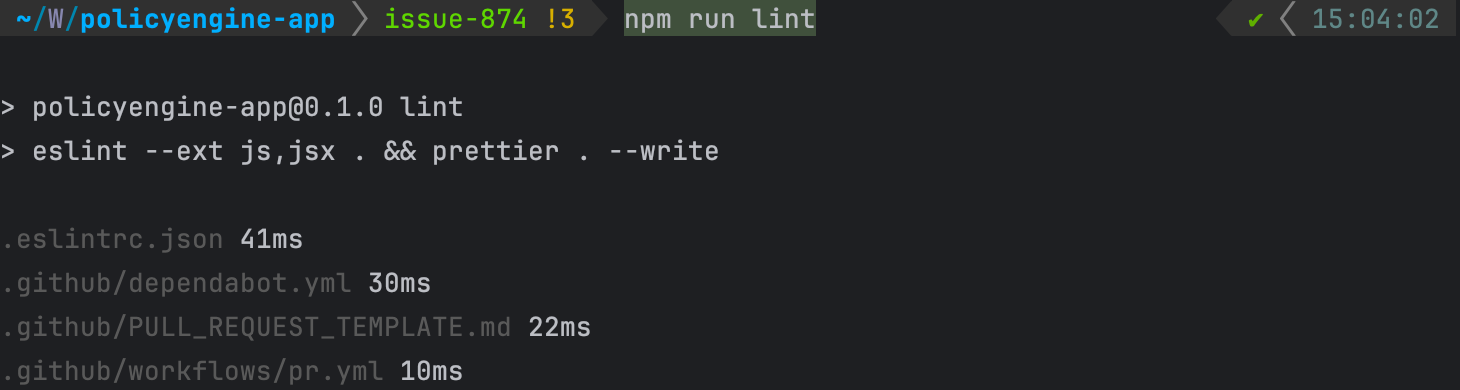

[Issue 2](https://github.com/PolicyEngine/policyengine-app/issues/865)

- `prettier . --write` was added to the existing lint script to be able to handle both linting and formatting without the need for an additional Prettier command.

**_Before_**

```shell

"lint": "eslint --ext js,jsx . && prettier -c ."

```

**_After_**

```shell

"lint": "eslint --ext js,jsx . && prettier . --write"

```

**_Result_**

You can find more details in here: [PR](https://github.com/PolicyEngine/policyengine-app/pull/871)

[Issue 3](https://github.com/PolicyEngine/policyengine-app/issues/874)

- `make format` rule was changed to not use Black. Instead of using Black, `npm run lint` was used to lint the project as below.

```shell

install:

npm ci

build:

npm run build

debug-no-lint:

ESLINT_NO_DEV_ERRORS=true npm start

debug:

npm start

test:

npm run test

deploy-setup:

cp gcp/.gcloudignore ./.gcloudignore

cp gcp/app.yaml ./app.yaml

cp gcp/main.py ./main.py

cp gcp/social_card_tags.py ./social_card_tags.py

cp gcp/requirements.txt ./requirements.txt

cp -r social_cards/ build/static/media/social_cards

deploy: build deploy-setup

gcloud config set app/cloud_build_timeout 1000

y | gcloud app deploy --project policyengine-app

rm app.yaml

rm .gcloudignore

rm main.py

rm requirements.txt

lint:

npm run lint

```

You can find more details in here: [PR](https://github.com/PolicyEngine/policyengine-app/pull/902)

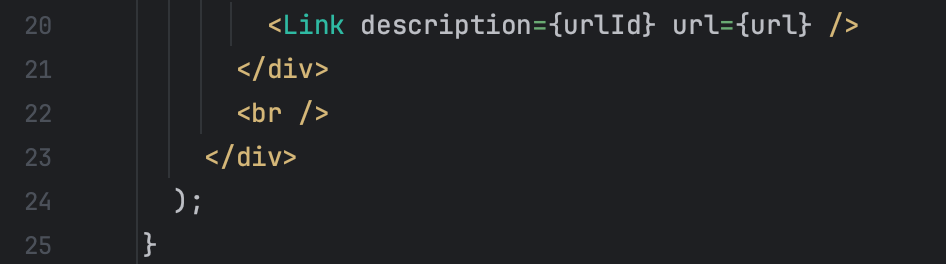

[Issue 4](https://github.com/atb-brown/austin/issues/39)

- `"sort-keys-fix/sort-keys-fix"` rule was added to `eslintrc.yml` file as below for ascending sorting.

```shell

"rules": {

...

"sort-keys-fix/sort-keys-fix":

["error", "asc", { "caseSensitive": false }],

},

```

- `fix` command was added to scripts in `package.json` file to be able to fix the lint problem with single command, `npm run fix`.

```shell

"scripts": {

"fix": "eslint src --fix --ext .ts,.tsx,.js",

...

},

```

- This changes applies to all of the keys in Object, not limited to React component property only.

**_Result_**

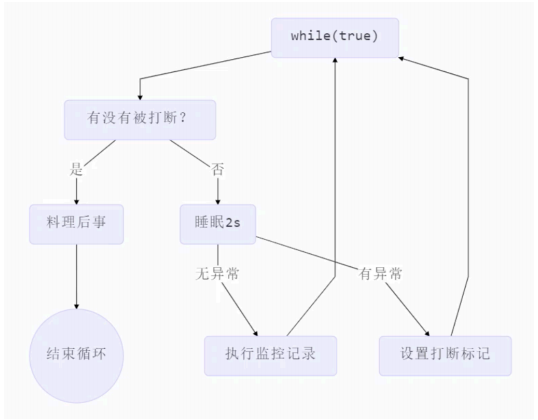

Examples of unsorted prop

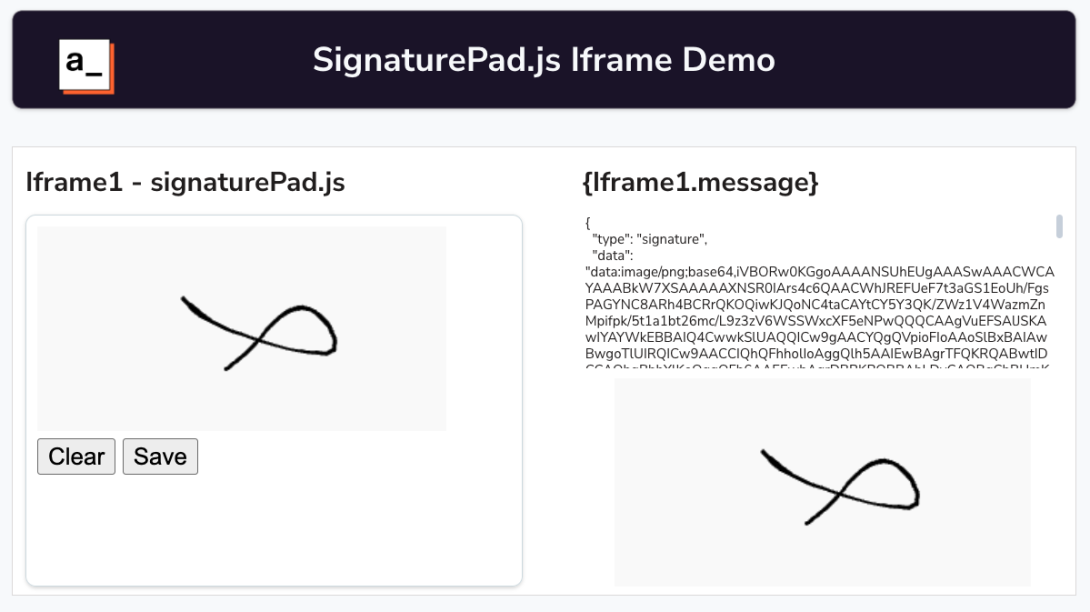

<img width="484" alt="Screenshot 2023-12-01 at 6 51 06 PM" src="https://github.com/atb-brown/austin/assets/75185537/5ff839ab-7f72-41e9-8964-8156e3cdba1f">

Unsorted props will give you an error.

<img width="760" alt="Screenshot 2023-12-01 at 6 51 12 PM" src="https://github.com/atb-brown/austin/assets/75185537/e43fefb6-7319-4f30-80e5-1d145e50175d">

With the help of command, `npm run fix`, it will automatically sort everything as expected.

<img width="439" alt="Screenshot 2023-12-01 at 7 18 49 PM" src="https://github.com/atb-brown/austin/assets/75185537/6ff10370-e3f8-496c-b3b5-b1ebb8508d91">

Fix result (props are ordered alphabetically now!)

After the fix, there is no error.

<img width="432" alt="Screenshot 2023-12-01 at 7 19 06 PM" src="https://github.com/atb-brown/austin/assets/75185537/7d837d3e-ec2b-4c82-ae74-08ec0c42906b">

You can find more details in here: [PR](https://github.com/atb-brown/austin/pull/55)

**What did you learn from this process?**

_**Makefile**_

'Make' is used to compile the program automatically. To be able to use 'make', it needs 'Makefile' to define multiple tasks/commands to be executed. 'Make' is commonly used in many open source projects for final compilation, and it can be installed using `make install` command. When cleaning is needed for this process, use `make clean` command.

There are lots of tasks you can add on top of the current 'Makefile'. For example, you can add a task to back up the project or initialize the project as below.

```shell

# Backup the project

backup:

cp -r project backup

# Initialize the project

init:

npm init

```

**How well did you do achieving your goals?**

As indicated in [Step 1 - planning](), I had two main goals for the release 0.4: to learn new skills and push myself out of my comfort zone. This project was the largest one I have contributed to so far. Numerous people were involved in each issue or pull request (PR). The projects I had contributed to before were comparatively smaller, so I would usually receive comments on the PR I submitted right away in a fast-paced environment. However, this time was different. Whenever I pushed a PR, it took days and days to be reviewed. For instance, one reviewer pinged another person to join the open discussion on the PR. I would describe it as a slow-paced environment. Nevertheless, I learned a lot through this experience.

I typically look at specific pieces of code, fix the issue, and move on. But it was not that simple in a larger project like this. Reviewers reminded me that the bigger picture cannot be ignored simultaneously. Additionally, I learned a new method to automate processes using `make` during this project. I can confidently say that I achieved both goals I set during the planning step.

**Reasoning**

Some of PRs are not merged yet. For examples, I am still waiting for teams to review the [PR](https://github.com/PolicyEngine/policyengine-app/pull/863) I pushed 2 weeks ago. For another [PR](https://github.com/PolicyEngine/policyengine-app/pull/871), there has been a conversation between maintainers what to do next with this pr, but no progress so far.

In addition, there was a comment about properly separating content within PRs. For example, I created several PRs, some of which had a nested structure. In one case, the second PR I made included the content of both the PR 1 and the PR 2. The third PR was even more complex, encompassing the contents of PR 1, PR 2, and PR 3. I believe that all these unorganized PRs makes it challenging for maintainers to review them efficiently. Going forward, I think I need to separate each PR I create in the same repository for better readability and overall efficiency. | avelynhc | |

1,677,068 | Exclusive Cyber Monday & Black Friday Deal | Hey, there looking for a Black Friday & Cyber Monday Deal? If yes we have the wildest deal... | 0 | 2023-11-24T07:25:53 | https://dev.to/ritirathod0/exclusive-cyber-monday-black-friday-deal-239 | webdev, programming, productivity |

Hey, there looking for a Black Friday & Cyber Monday Deal? If yes we have the wildest deal ever. Yes…Yes… **Save 50%** on the purchase of any **Premium Admin Template**.

If you are a developer and looking for the developer-friendly, responsive, highly customized fully coded admin templates whether it react admin template, angular admin template, bootstrap admin template, vue admin template, django admin template, or any other then **[CodedThemes](https://codedthemes.com/)** offers a 🤩 fantastic deal on every template.

However, don’t miss this amazing deal because it comes once in year. So what are you waiting for? This is the perfect time to invest in a highly-selling admin template. **The sale is Live Now! Hurry up!**🏃♂️🏃♂️

## **Detail about deal**

> **Offer** - 50% off on any Premium Admin Template💸

> **Promo Code** - CMBF2023🎁

> **Deal Validity** – 24th November to 27th November⏳ | ritirathod0 |

1,677,181 | CyberArk Interview Questions | CyberArk is an information security firm that provides privileged account security to multiple... | 0 | 2023-11-24T09:54:39 | https://dev.to/shivamchamoli18/cyberark-interview-questions-228h | cyberark, interviewquestions, cybersecurity, infosectrain | CyberArk is an information security firm that provides privileged account security to multiple industries, including banking, information technology, government organizations, healthcare, financial, and many more sectors.

This article is compiled with some important CyberArk interview questions and answers to help you ace your interview and achieve your ideal job in various CyberArk roles, such as CyberArk Analyst, CyberArk Specialist, Senior CyberArk Engineer, and more.

## **CyberArk interview questions:**

**1. Explain Application Identity Manager.**

CyberArk Application Identity Manager (AIM) enables enterprises to protect data stored in business systems by removing hard-coded passwords from configuration files, applications, scripts, and software code. AIM secures privileged and application credentials using patented Digital Vault technology, which is created to adhere to the strictest security standards.

**2. What is an SSH Key Manager?**

Secure Socket Shell (SSH) Key Manager aids enterprises in preventing unauthorized access to private SSH keys, which are widely used by privileged Unix/Linux users and apps to verify privileged accounts.

**3. Which CyberArk module is in charge of recording sessions?**

Privileged Session Manager (PSM) is in charge of recording sessions. It is designed to securely manage and monitor privileged sessions, including recording and auditing activities performed during these sessions.

**4. Which CyberArk module is in charge of updating passwords?**

The Central Policy Manager (CPM) is in charge of updating passwords. It automates the process of updating passwords for privileged accounts across various systems and applications.

**5. What are PSM's capabilities for SSH?**

The capabilities of PSM for SSH include:

• Video recording

• Centralized access control

• Secure remote access

**6. What is CyberArk ENE?**

The CyberArk Event Notification Engine (ENE) automatically sends email notifications about Privileged Access Security Solution events to predefined users.

**7. Describe the password vault.**

A password vault, often known as a password manager, is a software program that keeps various privileged account passwords in a Privileged Account Management system. It allows users to access multiple passwords for many websites or services using a single master password by encrypting the password storage.

**8. What are the authentication schemes that CyberArk Vault supports?**

The CyberArk Vault supports the following authentication protocols:

• Radius

• Public Key Infrastructure (PKI)

• Lightweight Directory Access Protocol (LDAP)

**9. How can you ensure that every character in a string is a number?**

We can ensure that every character in a string is a number using the ‘Python isnumeric() function.’ It checks every character of the string, and if a string only contains numeric characters, it returns true, otherwise false.

**10.What distinguishes a Lambda from a Def?**

Difference between Def and Lambda.

**Def function**

It can have a return statement.

It can hold multiple expressions.

Its computational time is slower.

It returns an integer value.

**Lambda function**

It can not have return statements.

It is a single-expression function.

Its computational time is faster.

It returns the function object value.

## **CyberArk with InfosecTrain**

Enroll in InfosecTrain's [CyberArk training](https://www.infosectrain.com/courses/cyberark-training/) course to learn more about CyberArk from our certified and highly experienced instructors who have in-depth knowledge of the subject.

You can also check out "[CyberArk Interview Questions and Answers](https://infosec-train.blogspot.com/2023/11/top-10-cyberark-interview-questions.html) " for more CyberArk interview questions.

| shivamchamoli18 |

1,677,194 | Buy Best Room Heater in Affordable Price. | As winter arrives, bringing with it a chill in the air and frosty mornings, there's one modern... | 0 | 2023-11-24T10:09:34 | https://dev.to/honey5135/buy-best-room-heater-in-affordable-price-5262 | As winter arrives, bringing with it a chill in the air and frosty mornings, there's one modern comfort that becomes a cherished necessity – the warmth that room heaters and water heaters provide. In the realm of seasonal companions, these appliances are the unsung heroes of our winter days. Let's explore why room heaters and water heaters are essential during the winter season and how they can transform your cold days and nights into warm, comforting experiences.

[Room Heaters](https://summerkingonline.com/collections/room-heaters): A Warm Retreat from the Cold

When the winter cold seeps into your home, there's nothing more inviting than the warmth emanating from a reliable room heater. Stepping into a toasty room on a frosty morning or cozying up in a well-heated space in the evening becomes a source of immediate comfort. Room heaters ensure that you can create your warm haven, setting a positive and comforting tone for the rest of the day and night.

[Water Heaters](https://summerkingonline.com/collections/water-heater): Instant Comfort in Every Drop

The prospect of facing a cold shower on a chilly morning is not just daunting; it can be a shock to the system. Enter the trusty water heater – the unsung hero of winter mornings. It provides instant relief from the cold, allowing you to start your day with a warm and comforting shower. This transforms your morning routine, making it a soothing and invigorating experience that sets a positive tone for the day ahead.

Energy Efficiency for Cost-Effective Warmth

Both room heaters and water heaters have evolved to prioritize energy efficiency. Modern appliances in these categories are designed with advanced features that not only provide warmth but also do so without unnecessary energy consumption. This ensures that you can enjoy the luxury of a warm room or a hot shower without worrying about skyrocketing energy bills.

Tailored to Your Winter Needs

Room heaters and water heaters come in various types and sizes to suit your specific winter requirements. Whether you prefer a radiant heater for quick room heating or a tankless water heater for on-demand hot water, there's an option to match your needs and preferences.

Reliability, Durability, and Safety

Well-maintained room heaters and water heaters are long-term investments. These appliances are built to last and withstand the test of time. High-quality heaters are your companions not only for this winter but for many more to come. Moreover, both types of heaters come with safety features to protect you and your family from accidents, ensuring worry-free use.

Choosing the Right Heaters

When selecting room heaters and water heaters, consider factors like the size of your room or household, your heating or hot water usage patterns, and your budget. With various options available, from ceramic space heaters to tank water heaters, you have the freedom to choose the ones that best fit your winter needs.

In Conclusion: Embrace Winter with Cozy Comfort

As the winter season approaches, room heaters and water heaters can make all the difference in your daily comfort. They are not just functional appliances; they are sources of warmth and relaxation in a season that often challenges us with its cold embrace.

So, if you want to enjoy a cozy and comforting winter season, make sure your home is equipped with reliable and efficient room heaters and water heaters. They are the secrets to keeping the chill at bay and savoring the warmth of winter on your terms.

| honey5135 | |

1,677,393 | DEVWorld BLACK FRIDAY : Code, Conquer, and Score Big! | 🚀 Brace yourselves, tech enthusiasts! It's that magical time of the year when discounts rain down... | 0 | 2023-11-24T12:56:00 | https://dev.to/devworld_conf/devworld-black-friday-code-conquer-and-score-big-2bh8 | blackfriday, javascript, programming, news | 🚀 Brace yourselves, tech enthusiasts! It's that magical time of the year when discounts rain down like confetti at a coding carnival. And guess what? We've got the scoop of the century – it's **BLACK FRIDAY**, and we're diving into the discount pool headfirst with the enthusiasm of a programmer finding the last bug in their code!

🎉 Drumroll, please! We're thrilled to announce that DEVWorld is not just joining the Black Friday party; we're hosting the bash with the **BIGGEST** discount extravaganza of the season, mind-blowing **650 Euro discoun**t on your DEVWorld tickets – that's a jaw-dropping **75% off!** But here's the catch: It's a 24-hour whirlwind of savings, and you better believe it's the talk of the coding town.

**ONLY 249 EUROS**

🔊 Spread the word like! This deal is so rare, gather your family, notify your colleagues, even inform your long-lost coding buddies – everyone needs to know about this once-in-a-lifetime opportunity to score a discount that's practically coding history!

🚀✨ But wait, there's more! Imagine being part of the #1 developer conference on the entire planet, nestled in the tech capital of Europe. DEVWorld is not just a conference; it's a technological fiesta where innovation meets celebration, and this **Black Friday deal is your golden ticket to the festivities.**

**✈️ Pack your bags – we're expecting 7,500 attendees ready to geek out.**

**💼 Get ready to network with 3,500 companies that could be your next coding soulmates.**

**🌎 Represent your flag among the 94+ countries making DEVWorld a global tech sensation.**

**🏢 And did we mention the expo? Brace yourself for 23,000m2 of pure coding ecstasy!**

**🗓️ Save the date: February 29 to March 1, 2024 – because this is not just any conference; it's the DEVWorld experience.**

**📍 And where is this tech utopia located? Rai Amsterdam – the LARGEST venue in The Netherlands, because we don't do things small; we think big and code bigger.**

Don't let this opportunity! Mark your calendar, set an alarm, tie a string around your finger – do whatever it takes to remind yourself because DEVWorld with a **75% Black Friday discount** is a tech odyssey you can't afford to miss. See you there! 🚀🎈 | devworld_conf |

1,677,426 | JavaScript's Grouping Methods: Object.groupBy and Map.groupBy 🤯 | JavaScript, the language that powers the dynamic and interactive web, is constantly evolving to make... | 0 | 2023-11-24T13:53:49 | https://dev.to/shameel/javascripts-grouping-methods-objectgroupby-and-mapgroupby-aba | webdev, javascript, programming, beginners | JavaScript, the language that powers the dynamic and interactive web, is constantly evolving to make developers' lives easier. **This blog is the introduction of two powerful methods for grouping data:** `Object.groupBy` and `Map.groupBy`. 🤯

These methods promise to simplify grouping operations, eliminating the need for external dependencies and enhancing the overall development experience.

## What's the Buzz About?

`groupBy` methods aim to streamline process of grouping data, offering a native and efficient solution for grouping objects and maps.

### Object.groupBy

Let's start by exploring `Object.groupBy`. This method is designed to work with arrays of objects, making it a valuable addition for handling complex datasets.

```javascript

const employees = [

{ name: 'Shameel', department: 'HR' },

{ name: 'Uddin', department: 'Engineering' },

{ name: 'Syed', department: 'HR' },

];

// Grouping employees by department using Object.groupBy

const groupedByDepartment = Object.groupBy(employees,({department})=>department)

console.log(groupedByDepartment);

```

In this example, `employees` are grouped by their respective departments, resulting in a clear and concise structure.

**Result:**

```js

{

"HR": [

{

"name": "Shameel",

"department": "HR"

},

{

"name": "Syed",

"department": "HR"

}

],

"Engineering": [

{

"name": "Uddin",

"department": "Engineering"

}

]

}

```

### Map.groupBy

The `Map.groupBy` method extends the grouping capabilities to Map objects, providing a versatile solution for scenarios where a Map structure is preferred.

```javascript

const inventory = [

{ name: '🥦 broccoli', type: 'vegetables', quantity: 9 },

{ name: '🍌 bananas', type: 'fruit', quantity: 5 },

{ name: '🐐 goat', type: 'meat', quantity: 23 },

{ name: '🍒 cherries', type: 'fruit', quantity: 12 },

{ name: '🐟 fish', type: 'meat', quantity: 22 },

];

const restock = { restock: true };

const sufficient = { restock: false };

// Using Map.groupBy to categorize items based on quantity

const result = Map.groupBy(inventory, ({ quantity }) =>

quantity < 6 ? restock : sufficient

);

// Displaying items that need restocking

console.log(result.get(restock));

```

Here, `orders` are grouped by the product they contain, showcasing the flexibility and expressiveness of the `Map.groupBy` method.

## Conclusion

The beauty of these new methods lies in their simplicity. They abstract away the complexity of manual grouping, providing a clean and expressive syntax. No more lengthy code or external dependencies – just pure JavaScript magic!

Happy coding! 🎉💻✨

Follow me for more such content:

LinkedIn: https://www.linkedin.com/in/shameeluddin/

Github: https://github.com/Shameel123 | shameel |

670,676 | Java 8 Streams | https://grokonez.com/java/java-8/java-8-streams Java 8 Streams Java 8 comes with some prominent fea... | 0 | 2021-04-18T17:41:00 | https://dev.to/loizenai/java-8-streams-ji | java, java8, streams | ERROR: type should be string, got "https://grokonez.com/java/java-8/java-8-streams\n\nJava 8 Streams\n\nJava 8 comes with some prominent features like <a href=\"https://grokonez.com/java/java-8-lambda-expressions\">Lambda Expressions</a>, <a href=\"https://grokonez.com/java/java-8-method-references\">Method References</a>. And <strong>Streams</strong> are also an important concept that we should comprehend.\n\nThis tutorial will help you have a deep view of Java 8 Streams: what they are, ways to create them, how they work with intermediate operations, terminal operation...\n\n<!--more-->\n<div id=\"tuttoc\" class=\"jsa-toc\"></div>\n\n<div id=\"tutcontents\">\n<h2>I. Overview</h2>\n<h3>1. What is Java 8 Stream?</h3>\nA stream is an abstract concept that represents a sequence of objects created by a source, it’s neither a data structure nor a collection object where we can store items. So we can't point to any location in the stream, we just interact with items by specifying the functions.\n\nThis is an example of a Stream:\n<pre><code class=\"language-java\">\nList<Integer> numbers = Arrays.asList(1, 2, 3, 4, 5, 6, 7, 8, 9);\n// get List from Stream Operation\nList<String> result = numbers.stream()\n\t\t.filter(i -> (i % 2) == 0)\n\t\t.map(i -> \"[\" + i + \"]\")\n\t\t.collect(Collectors.toList());\n\nSystem.out.println(result);\n</code></pre>\nRun the code above, the console shows:\n<pre><code class=\"language-java\">\n[[2], [4], [6], [8]]\n</code></pre>\nNow, we have concept of using a Stream is to enable functional-style operations on streams of elements. Those operations are composed into a stream pipeline which consists of:\n<code>Source</code> > <code>Intermediate Operations</code> > <code>Terminal Operation</code>\n- a <strong><em>source</em></strong> (in the example, it is a collection - List, but it is also an array, a generator function, an I/O channel...)\n- <em><strong>intermediate operations</strong></em> (which transform current stream into another stream at the current chain, in the example, <strong>filter</strong> is the first operation and <strong>map</strong> is the second one)\n- a <em><strong>terminal operation</strong></em> (which produces a result or side-effect, in the example, it is <strong>collect</strong>) \n\nMore at:\n\nhttps://grokonez.com/java/java-8/java-8-streams\n\nJava 8 Streams" | loizenai |

1,677,931 | Solving the Puzzle: How to Pass Environment Variables in AWS AppSync Resolvers using Serverless | Are you just diving into the world of AWS AppSync and puzzled about passing environment variables to... | 0 | 2023-11-25T05:29:04 | https://dev.to/am_i_dev/solving-the-puzzle-how-to-pass-environment-variables-in-aws-appsync-resolvers-using-serverless-kl0 | appsync, serverless, javascript, resolverfunction | Are you just diving into the world of AWS AppSync and puzzled about passing environment variables to the resolver using the Serverless Framework? If you've scoured countless blogs without finding a solution, you've landed on the right page!

## Scenario

Imagine you have an AppSync endpoint triggering a mutation. In this scenario, you need to validate user information and perhaps trigger an SQS with a unique accountID for each environment or use the tableName in the resolver function to fetch data from DynamoDB. Whatever your use case, you can follow these steps to seamlessly pass environment variables to your resolver function.

## Solution

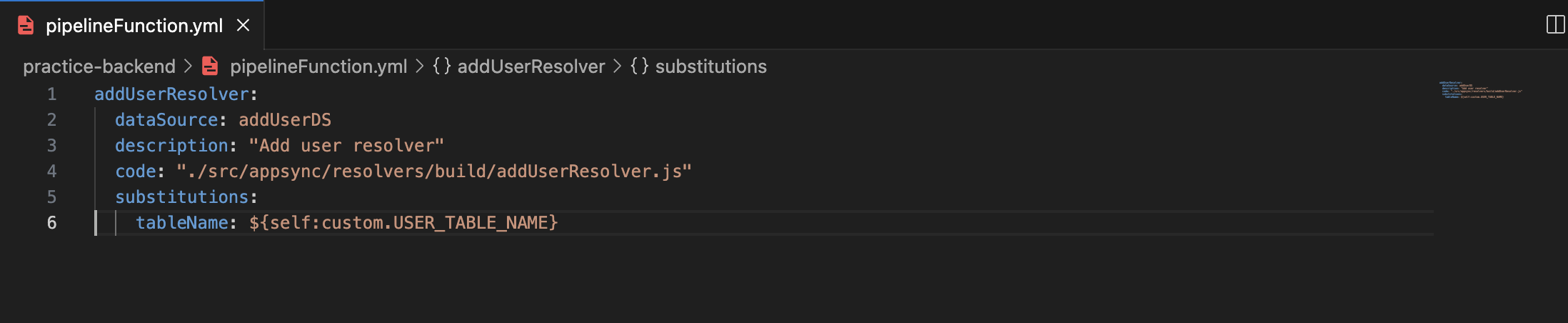

**Step1:-** Add a property called substitutions to the resolver you've created. This is where you'll pass your environment variables as key-value pairs. In the example below, I'm using tableName as an environmental variable.

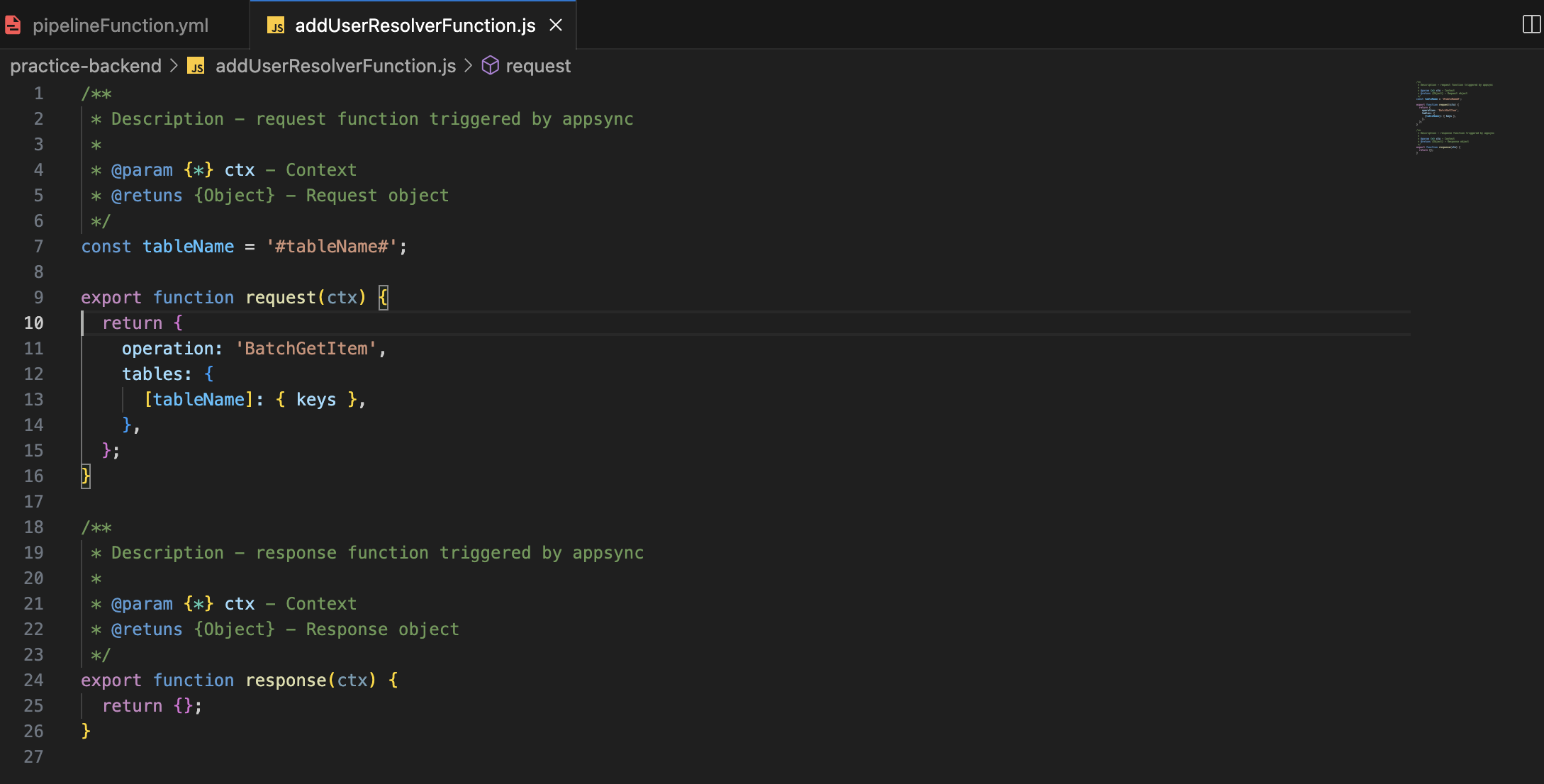

**Step2:-** Now that you've successfully passed your environment variables to the resolver, you'll need to access them within the resolver function. While in a Lambda function, you might use **'process.env.tableName'**, in the resolver function, you'll need to add **"#"** at the start and end of the name you passed in Step 1. Here's an example:

```

const tableName = '#tableName#';

const accountId = '#accountId#';

```

Ensure you add this before the request and response functions in your resolver.

**Step3:-** With the setup complete, you now have the knowledge to pass environment variables seamlessly to your AppSync resolver function.

This blog aims to simplify the process, ensuring you can efficiently manage and utilize environment variables in your AppSync projects. Feel free to explore, experiment, and elevate your serverless development experience!"

| am_i_dev |

1,677,958 | LLMs are not AI | Do large language models qualify as artificial intelligence? No. pause for gasps Intelligence is... | 0 | 2023-11-25T06:50:07 | https://dev.to/jjcx/llms-are-not-ai-1f4c | ai, llm | Do large language models qualify as artificial intelligence? No.

**pause for gasps**

Intelligence is multifaceted. It understands, it reasons, it learns, and it innovates. An LLM, on the other hand, mimics the facade of comprehension without the substance. Saying an LLM understands the text is like saying a calculator understands mathematics. Both tools process inputs through predefined operations but don’t ‘grasp’ the concepts involved.

Imagine a library as vast as the horizon, filled with every book ever written. An LLM is like a librarian who’s never read a single book but can find you quotes on any topic by following the Dewey Decimal System. This librarian is efficient but has no understanding of literature, history, or science beyond the labels on the book spines.

Understanding is an active, conscious process, but the responses from an LLM are the result of passive, statistical modeling. They are echoes of human thought, not the thought itself. For example, an LLM can produce an essay on quantum mechanics, but it cannot comprehend the subject. It cannot engage with the content beyond what its algorithms predict to be the most likely next word or sentence based on past data.

The bedrock of intelligence is the ability to learn from a few examples and then apply that learning broadly. Human children do this remarkably well. They can learn a concept from a few instances and then recognize it in a variety of contexts. LLMs, in contrast, need to be fed with enormous datasets to ‘learn,’ and even then, they are only replaying patterns contained within that data. They don’t learn in the active sense; they don’t have the ‘aha’ moments that lead to understanding beyond their initial programming.

Reasoning is another pillar of true intelligence. It’s the ability to connect disparate bits of knowledge in a meaningful way. When we reason, we do more than follow a script; we create new narratives and solutions that never existed before. LLMs are not capable of this type of innovation. They generate responses based on what’s been seen before, not on a reasoned process that can create new insights from old information.

These systems don’t possess the spark of curiosity that ignites the human quest for knowledge. They don’t wonder, they don’t hypothesize, they don’t contemplate. They are to genuine curiosity what a wax fruit is to fresh produce —- convincing at a glance, but upon closer inspection, clearly inanimate.

So, while LLMs can produce work that feels human-created, their operational principles are closer to complex reflexes than to conscious thought. They are reflections of intelligence, not its embodiment.

This is not to say that these are not the precursors to AI, or protoAI if you will, but let’s not mistake the smoke for the fire. LLMs are sophisticated mirrors reflecting the brilliance of the data they’ve been fed, but the light of understanding and the warmth of consciousness originate from the human mind.

Except Q*, that might be something scary. | jjcx |

1,678,021 | Exploring the Traditional Markets of Bhutan: A Shopper's Paradise | Exploring the traditional markets of Bhutan can indeed be a fascinating experience, as they offer a... | 0 | 2023-11-25T09:28:38 | https://dev.to/medheyapp/exploring-the-traditional-markets-of-bhutan-a-shoppers-paradise-1fff | Exploring the traditional markets of Bhutan can indeed be a fascinating experience, as they offer a glimpse into the rich cultural tapestry of this Himalayan kingdom. Bhutan is known for its unique blend of tradition and modernity, and its markets reflect this duality. Here's a virtual tour of what you might find in the traditional markets of Bhutan:

Thimphu Weekend Market:

Location: Thimphu, the capital city.

Highlights: The Thimphu Weekend Market is a vibrant and colorful affair, with farmers, traders, and artisans coming from all over the country to sell their goods. You can find fresh produce, local textiles, handicrafts, and traditional Bhutanese cuisine. Don't miss the red rice, traditional Bhutanese cheese, and handmade crafts.

Paro Market:

Location: Paro, one of the most scenic valleys in Bhutan.

Highlights: Paro Market offers a more relaxed shopping experience. You can find traditional Bhutanese clothing, handmade jewelry, and souvenirs. The market is surrounded by the backdrop of lush green hills and the iconic Paro Dzong.

Centenary Farmers' Market (CFM):

Location: Thimphu.

Highlights: CFM is one of the largest markets in Bhutan, offering a variety of goods from different regions. It's a great place to explore Bhutanese agriculture, with organic fruits, vegetables, and traditional grains. The market also has sections dedicated to handmade crafts and textiles.

Gagyel Lhundrup Weaving Center:

Location: Thimphu.

Highlights: For those interested in Bhutanese textiles, this weaving center is a must-visit. Bhutanese weavers showcase their skills, creating intricate patterns and designs. You can purchase beautifully woven traditional Bhutanese garments like kiras and ghos.

Zorig Chusum School of Traditional Arts:

Location: Thimphu.

Highlights: This school trains students in 13 traditional arts and crafts of Bhutan, including painting, wood carving, and sculpture. The school has a gift shop where you can buy authentic Bhutanese art pieces and crafts.

Bhutanese Handicraft Emporium:

Location: Various locations across Bhutan.

Highlights: Run by the National Women’s Association of Bhutan, these emporiums are dedicated to preserving and promoting traditional Bhutanese handicrafts. You can find intricately designed items like thangkas (religious paintings), wooden bowls, and traditional masks.

Changlimithang Archery Ground:

Location: Thimphu.

Highlights: Archery is the national sport of Bhutan, and visiting an archery ground can be a unique experience. While not a traditional market, you might find local artisans selling handmade bows and arrows.

When exploring these markets, it's important to engage with the locals, learn about the stories behind the crafts, and savor the unique flavors of Bhutanese cuisine. Bhutan's commitment to preserving its cultural heritage is evident in its markets, making them a true shopper's paradise for those seeking authentic experiences.

See more:-

https://play.google.com/store/apps/details?id=com.medhey.app

https://apps.apple.com/app/medhey/id1579240703

https://medheyapp.com/2023/02/18/why-medhey-app-is-best-for-booking-international-flight-tickets-from-bhutan/

https://medheyapp.com/2023/02/28/medhey-best-digital-marketplace-in-bhutan/

https://medheyapp.com/2023/03/02/why-to-choose-medhey-for-travelling-to-bhutan-the-happiest-country-of-the-world/

https://medheyapp.com/2021/10/19/which-is-a-better-e-commerce-solution-build-an-app-or-choose-a-listing-e-service-app/

https://medheyapp.com/2021/09/25/bhutanese-community-medhey-logo-online-shopping-in-bhutan/

https://medheyapp.com/2023/06/18/exploring-job-vacancy-in-bhutan-opportunities-for-growth-and-fulfillment/

https://medheyapp.com/2023/06/18/unveiling-hyundai-in-bhutan-elevating-driving-experiences-with-style-and-reliability/

https://medheyapp.com/2023/06/18/exploring-toyota-in-bhutan-a-perfect-blend-of-performance-and-cultural-harmony/ | medheyapp | |

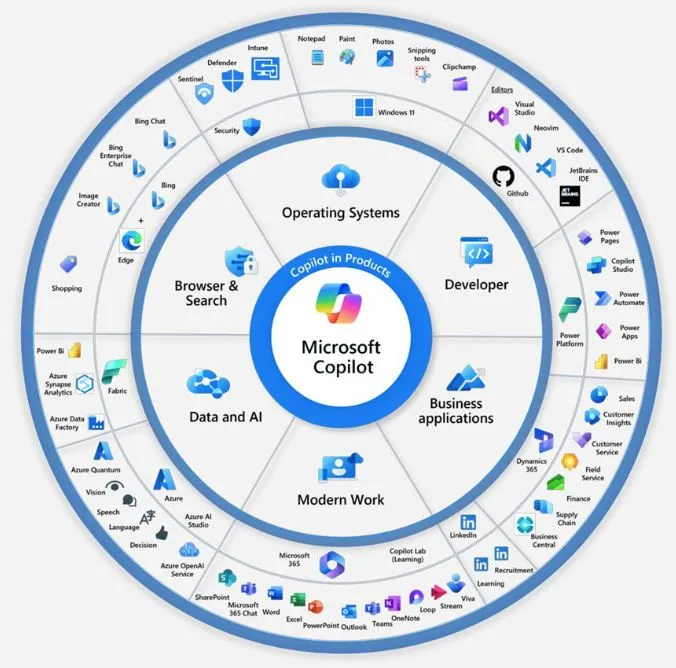

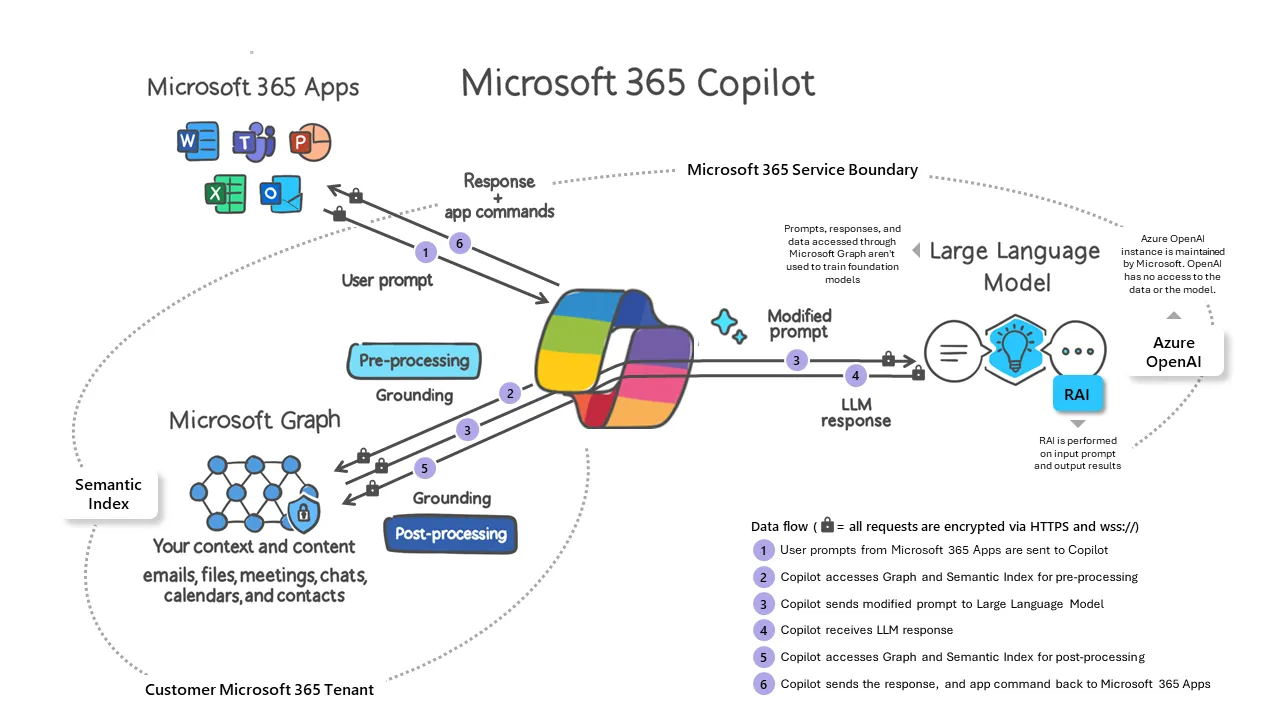

1,678,235 | Am I Alone in Wondering How Many Faces Microsoft Copilot has? | In the ever-evolving landscape of Microsoft's digital realm, the concept of Microsoft Copilot has... | 21,622 | 2023-11-28T07:30:00 | https://intranetfromthetrenches.substack.com/p/wondering-how-many-faces-copilot-has | githubcopilot, microsoft365 | In the ever-evolving landscape of Microsoft's digital realm, the concept of **Microsoft Copilot** has undergone a transformation, paving the way for a new perspective on efficiency. Rather than a distinct tool continuously at hand, **Microsoft** seems to be shifting towards a paradigm where **Copilot** seamlessly integrates into main services and applications, becoming an option as ordinary as any other feature.

What sets this approach apart is the integration of **Copilot** into the very fabric of **Microsoft Graph**, establishing a common context for every user. This foundation draws from the wealth of activities within the **Microsoft 365** tenant, creating a unified backdrop against which **Copilot** operates. This shift implies that **Copilot**, once perceived as *service-specific* variants, may soon be regarded as a *behind-the-scenes* force ever-ready to assist, triggered at the user's discretion.

> *The notion of having different **Copilots** based on the specific service or application is gradually giving way to a mindset where **Copilot** is omnipresent, quietly awaiting activation when summoned.*

At its core, the **Microsoft Copilot** experience is becoming synonymous with the idea that help is always within reach, ready to augment productivity when needed. This shift from a *service-centric* to a *user-centric* approach signals a more personalized and user-friendly experience, where **Copilot** functions more as a reliable companion than a standalone tool.

This integration into the **Microsoft Graph** framework not only streamlines the user experience but also marks a strategic move by **Microsoft** to provide a seamless and interconnected environment for its users. The prospect of **Copilot** always lurking in the background, backed by the contextual richness of **Microsoft Graph**, signifies a departure from the segmented nature of productivity tools toward a more holistic and integrated approach.

As users navigate this evolving landscape, the integration of **Copilot** into the very fabric of Microsoft's suite prompts a reevaluation of its role. It transforms **Copilot** from a specific tool to a dynamic, always-on assistant, ready to contribute to efficiency whenever the user deems fit. In essence, Microsoft's approach seems to be sculpting a future where **Copilot**, unified by **Microsoft Graph**, seamlessly intertwines with our daily digital activities, enriching the user experience in a subtle yet impactful manner.

## References

- *Microsoft 365 Copilot overview: [https://learn.microsoft.com/en-us/microsoft-365-copilot/microsoft-365-copilot-overview](https://learn.microsoft.com/en-us/microsoft-365-copilot/microsoft-365-copilot-overview)*

- *Copilot for Microsoft 365: [https://adoption.microsoft.com/en-us/copilot/](https://adoption.microsoft.com/en-us/copilot/)*

- *Copilot breakdown by Mason Whitaker in LinkedIn: [https://www.linkedin.com/posts/masonwhitaker_msignite-microsoftignite-microsoftignite2023-activity-7131033698476462080-mdLL/](https://www.linkedin.com/posts/masonwhitaker_msignite-microsoftignite-microsoftignite2023-activity-7131033698476462080-mdLL/)*

- *What are Microsoft's different Copilots? Here's what they are and how you can use them: [https://www.zdnet.com/article/what-is-microsoft-copilot-heres-everything-you-need-to-know/](https://www.zdnet.com/article/what-is-microsoft-copilot-heres-everything-you-need-to-know/)* | jaloplo |