id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

195,719 | Extending Python with Rust | Introduction: Python is a great programming language but sometimes it can be a bit of sl... | 0 | 2019-10-26T17:00:21 | https://dev.to/p_chhetri/extending-python-with-rust-4pna | performance, python3, rust, python | ---

title: Extending Python with Rust

published: true

date: 2019-05-01 17:37:44 UTC

tags: performance,python3,rust,python

canonical_url:

---

### Introduction:

Python is a great programming language but sometimes it can be a bit of slowcoach when it comes to performing certain tasks. That’s why developers have been [building C/C++ extensions](https://docs.python.org/3/extending/building.html) and integrating them with Python to speed up the performance. However, writing these extensions is a bit difficult because these low-level languages are not type-safe, so doesn’t guarantee a defined behavior. This tends to introduce bugs with respect to memory management. Rust ensures memory safety and hence can easily prevent these kinds of bugs.

### Slow Python Scenario:

One of the many cases when Python is slow is building out large strings. In Python, the string object is immutable. Each time a string is assigned to a variable, a new object is created in memory to represent the new value. This contrasts with languages like Perl where a string variable can be modified in place. That’s why the common operation of constructing a long string out of several short segments is not very efficient in Python. Each time you append to the end of a string, the Python interpreter must allocate a new string object and copy the contents of both the existing string and the appended string into it. As the string under manipulation become large, this process can become increasingly slow.

Problem: Write a function which accepts a positive integer as argument and returns a string concatenating a series of integers from zero to that integer.

So let’s try solving the above problem in python and see if we can improve the performance by extending it via Rust.

### Python Implementations:

#### Method I: Naive appending

{% gist https://gist.github.com/chhetripradeep/fb1c2af57c7d3a65d9e2b0924defdcee %}

This is the most obvious approach. Using the concatenate operator (+=) to append each segment to the string.

#### Method II: Build a list of strings and then join them

{% gist https://gist.github.com/chhetripradeep/c2452b87b9df809a0c0ef5eec3222eb4 %}

This approach is commonly suggested as a very pythonic way to do string concatenation. First a list is built containing each of the component strings, then in a single join operation a string is constructed containing all of the list elements appended together.

#### Method III: List comprehensions

{% gist https://gist.github.com/chhetripradeep/4e1a604d225a75993802153485af8275 %}

This version is extremely compact and is also pretty understandable. Create a list of numbers using a list comprehension and then join them all together. This is just an abbreviated version of last approach and it consumes pretty much the same amount of memory.

Let’s measure the performance of each of these three approaches and see which one wins. We are going to do this using [pytest-benchmark](https://pypi.org/project/pytest-benchmark/) module.

{% gist https://gist.github.com/chhetripradeep/3b0cecfa5c7ead2a41bbf1d77e3d3e49 %}

Here is the result of the above benchmarks. Lower the value, better is the approach.

{% gist https://gist.github.com/chhetripradeep/e601bf2f4e06f625a8273d68d1124780 %}

Just by looking at the **Mean** column, one can easily justify that the list comprehension approach is definitely the winner among three approaches.

### Rust Implementations:

After trying out basic implementation of the above problem in Rust, and doing some rough benchmarking using [cargo-bench](https://github.com/rust-lang/cargo/blob/master/src/doc/man/cargo-bench.adoc), the result definitely looked promising. Hence, I decided to port the rust implementation as shared library using [rust-cpython](https://github.com/dgrunwald/rust-cpython) project and call it from python program.

To achieve this, I had create a rust crate with the following src/lib.rs.

{% gist https://gist.github.com/chhetripradeep/3c64187f83f598551b0bb05649553b91 %}

Building the above crate created a . **dylib** file which needs to be rename **.so**.

{% gist https://gist.github.com/chhetripradeep/6a0c2d1749f5ddf39de889bea664a12e %}

Then, we ran the same benchmark including the rust one as before.

{% gist https://gist.github.com/chhetripradeep/0243d40048dfd3b27a96470981cb1e33 %}

This time the result is more interesting.

{% gist https://gist.github.com/chhetripradeep/08cad5cbd4ff501011b002d33165b954 %}

The rust extension is definitely the winner. As you increase the number of iterations to even more, the result is even more promising.

Eg. for iterations = 1000, following are the benchmark results

{% gist https://gist.github.com/chhetripradeep/b2962ea087ce39fc06896d61307b0345 %}

### Code:

You can find the code used in the post:

- [https://github.com/chhetripradeep/rust-python-example](https://github.com/chhetripradeep/rust-python-example)

- [https://github.com/chhetripradeep/cargo-bench-example](https://github.com/chhetripradeep/cargo-bench-example)

### Conclusion:

I am very new to Rust but these results definitely inspires me to learn Rust more. If you know better implementation of above problem in Rust, do let me know.

[PyO3](https://github.com/PyO3/pyo3) started a fork of [rust-cpython](https://github.com/dgrunwald/rust-cpython) but definitely has lot more active development and hence on my todo-list of experimentation.

Distributing of your python module will demand the rust extension to be compiled on the target system because of the variation of architecture. [Milksnake](https://github.com/getsentry/milksnake) is a extension of [python-setuptools](https://pypi.org/project/setuptools/) that allows you to distribute dynamic linked libraries in Python wheels in the most portable way imaginable. | p_chhetri |

195,721 | A look at itertools chain method | The python itertools module is a collection of tools for handling iterators. I want to make an honest... | 0 | 2019-10-26T20:06:03 | https://dev.to/teaglebuilt/a-look-at-itertools-chain-method-4c13 | python |

The python itertools module is a collection of tools for handling iterators. I want to make an honest effort to break the habit of repetitive standard patterns of basic python functionality. For example...

We have a list of tuples.

```

a = [(1, 2, 3), (4, 5, 6)]

```

In order to iterate over the list and the tuples, we have a nested for loop.

```

for x in a:

for y in x:

print(y)

# output

1

2

3

4

5

6

```

I have decided to challenge myself to start using all that itertools has to offer in my every day solutions. Today lets take a look at the chain method!

This first approach will only return the two tuples as as index 0 and 1. Instead of nesting another loop, lets apply the chain method.

```]

from itertools import chain

a = [(1, 2, 3), (4, 5, 6)]

for _ in chain(a, b):

print(_)

# output

(1, 2, 3)

(4, 5, 6)

```

now we have access to iterate over the tuples without nesting another iteration with a for loop.

```

for _ in chain.from_iterable(a):

print(_)

# output

1

2

3

4

5

6

```

I think the chain method gives the flexibility to use this as a default choice for list iteration. | teaglebuilt |

195,733 | Wish you everyone a happy Diwali! | video demo of fireworks on html5 canvas | 0 | 2019-10-26T17:51:57 | https://dev.to/svijaykoushik/wish-you-everyone-a-happy-diwali-4mm6 | html, javascript, css, canvas | ---

title: Wish you everyone a happy Diwali!

published: true

description: video demo of fireworks on html5 canvas

tags: html, Javascript, CSS, canvas

---

Hello world,

I'm sorry, I couldn't publish a post this week. I wanted to write about particle physics with JavaScript and html5 canvas. But the demo took too long to make and I was busy with Diwali shopping. So I present to you the video of the demo this week and the actual post next week.

> Diwali, in the true sense, means ending all evils, cruelty and hatred > towards one another. Get together to celebrate the spirit of the festival.

Happy Diwali! ✨🎆🙌🏽 | svijaykoushik |

196,014 | Soldier to Software Engineer | As my 4 years in the U.S. Army came to an end, my Expiration - Term of Service date was approaching e... | 0 | 2019-10-27T04:53:08 | https://dev.to/arielb506/soldier-to-software-engineer-3dhd | career, advice, motivation, discuss | As my 4 years in the U.S. Army came to an end, my Expiration - Term of Service date was approaching extremely fast. As most veterans know, when you're approaching this, most people in your chain of command tell you that if you don't continue in the military, your life will fall apart and you will either end up being homeless or as a bus driver (no offense to bus drivers out there!)

## Backstory

When this date approached I was fortunate enough to be part of a great transitioning team, they were just rebranding to the "Soldier for Life" motto. But thanks to them, my eyes were opened about all the different routes I could take to become what I always wanted to be but always seemed far out of reach because of my lack of resources: a Software Engineer.

The doors that the G.I. Bill can open for you are endless, and it is sad to know that most of the transitioning veterans are not aware of them. Not only is the V.A. going to pay for your school, but also your housing, your medical insurance, and your books. They recommended me to start in a community college and then transition to a university from there.

In January 2016 I started my A.A. degree, the requirements to transition to the University of Florida were mostly math and physics requirements but thankfully I was able to learn in the environment that the Veteran's Success Center had in Santa Fe College, they provide tutors and they also recommended me to switch to Chapter 31, since I am a 50% disabled veteran. Here is my obtaining my A.A. with my lovely girlfriend:

##Outcome

Long story short, I was accepted into the University of Florida, where I will graduate with a degree in Computer Science. Besides all the coding, engineering, logical and analytical skills I have acquired in school, what I am most grateful for, is that my skills as a former soldier opened the doors to a lot of opportunities that I see my classmates miss out on for their lack of experience or discipline. When they mostly pass incredibly hard coding interviews in top companies around the country, they miss out on the opportunities because they fail the ability to be able to build rapport in the behavioral side of the interviews and leave a lasting impression.

I am happy and proud to announce, that even though I am already 28 years old, I have decided from several offers, to officially become a Software Engineer, where I'll be in this company's Engineering Leadership Development Program, where I will be able to obtain my Master's Degree absolutely free.

I wish I could motivate existing soldiers, veterans and all kinds of people, to never give up on your dreams, there are always paths to get there, no matter how difficult your path may seem, even if it's **O( n^2 ) time complexity.**

Never stop learning, go beyond school and in your free time, read books and take tutorials or extra classes and certification where you will be more valuable. When facing interviewers, never forget where you come from, be yourself, and remind yourself why you **want and need** that job, the combination of those things will give you the confidence needed to get that job you dreamed of! (Don't forget to answer any question in the STAR format!!)

Stay active, eat healthy foods, and you'll be a better developer, I promise. | arielb506 |

196,040 | [vim問題] iOSで利用できるVimは? | この記事では、iOS環境(iPhone/iPad)で利用できる「Vim」について、ご紹介します。 [解答] iVim オープンソースとして公開されている、iOS版Vimこと「iVim」です。2019年現... | 0 | 2019-10-27T05:30:37 | https://dev.to/vimtry/vim-ios-vim-egf | この記事では、iOS環境(iPhone/iPad)で利用できる「Vim」について、ご紹介します。 [解答] iVim オープンソースとして公開されている、iOS版Vimこと「iVim」です。2019年現在、唯一定期的に更

つづきは[こちら](https://vim.blue/vim-ios-ivim/)。

[https://vim.blue/vim-ios-ivim/](https://vim.blue/vim-ios-ivim/) | vimtry | |

196,072 | [Vim問題] ウインドウを水平分割する方法は何種類? | この記事では、Vimでプラグインを使わずに「ウインドウを水平分割する」ための方法について、ご紹介します。 [解答] コマンドもしくはキーバインドの2種類 Vimコマンド. 水平分割(上下の分割) :vs... | 0 | 2019-10-27T05:39:30 | https://dev.to/vimtry/vim-17ka | この記事では、Vimでプラグインを使わずに「ウインドウを水平分割する」ための方法について、ご紹介します。 [解答] コマンドもしくはキーバインドの2種類 Vimコマンド. 水平分割(上下の分割) :vsplit virt

つづきは[こちら](https://vim.blue/vim-window-vsplit/)。

[https://vim.blue/vim-window-vsplit/](https://vim.blue/vim-window-vsplit/) | vimtry | |

196,129 | Save forms temporary data | By now you should have come to a point were you already coded some forms. Used HTML to structure your... | 0 | 2019-10-27T10:29:05 | https://dev.to/jdsaraiva/save-forms-temporary-data-5e98 | javascript, php, html | By now you should have come to a point were you already coded some forms.

Used HTML to structure your form, JavaScript to get the data and PHP to save it, right ?

Maybe you have come to a point where you have a consistent form, with several inputs and options, however if this data isn’t validated on the server side the page will refresh and all the users inputs will be lost… obviously causing some frustration.

With this simple snippets my goal is to show you how to implement an almost generic form functions that will fetch the inputs values, save them on a temporary session variable and if something goes wrong the values are loaded again on the form.

This way you can have a safer server side validation and you visitors won’t have to refill the values if something goes wrong.

Let’s start with a really basic form (form.php);

```

<?php }

// check if data is valid

if (!filter_var($_POST["email"], FILTER_VALIDATE_EMAIL)) {

echo("e-mail is not valid");

// Something went wrong, load the user's data

loadSessionVariables();

} else {

echo "Welcome " . $_POST["name"] . ", you e-mail is: " . $_POST["email"];

}

}

?>

<form action="form.php" method="post">

First name:<br>

<input required type="text" name="name"><br>

E-mail:<br>

<input required type="text" name="email"><br><br>

<input onClick="saveData()" type="submit" value="Submit">

</form>

```

As you can see we have a basic form that gets the data and displays it.

Normally this data would be saved on a database of have further processing, although for the sake of simplicity we are just displaying it.

We will start by looping the form and save it’s input data, with this function:

```

function saveData(){

const forms = document.querySelectorAll('form');

const form = forms[0];

let array = [];

[...form.elements].forEach((input) => {

if ( input.type === "text" ) array.push(input.value);

});

sessionStorage.setItem("form-data", JSON.stringify(array ));

}

```

And if an error is detected we can load the data again with this one:

```

function loadSessionVariables(){ ?>

document.addEventListener("DOMContentLoaded",function(){

if (sessionStorage.getItem("form-data")) {

const forms = document.querySelectorAll('form');

const form = forms[0];

let retrievedData = sessionStorage.getItem("form-data");

retrievedData = JSON.parse(retrievedData);

let index = 0;

[...form.elements].forEach((input) => {

if ( input.type === "text") {

input.value = retrievedData[index];

index++;

}

});

sessionStorage.clear();

}

});

```

Overall you can find all the code here: https://github.com/jdsaraiva/Save-forms-temporary-data

Hope this simple code can help you to tackle this issue and make your website even more user friendly.

Let me know if it helped you out.

Best regards, João Dessain Saraiva. | jdsaraiva |

196,254 | What’s the biggest c*ck up you’ve made in an interview? | A few years ago now, I was interviewing for an internship as a Computational Biologist in my second y... | 0 | 2019-10-27T13:46:51 | https://dev.to/lukegarrigan/what-s-the-biggest-c-ck-up-you-ve-made-in-an-interview-4lnk | discuss, watercooler | A few years ago now, I was interviewing for an internship as a Computational Biologist in my second year of university. I had a pretty shoddy CV so I had a google and copied/pasted a Software Engineers template and put in my skills.

In the interview, everything was going perfect — I was interviewing well — I think — met the team, met the boss, text-book stuff.

Then LateX happened. One of the interviewers asked me about LateX — at the time I knew nothing about LateX so I was honest and said "I don’t really know much about it".

**”But you have it down as one of your skills”**

“Is it?” Was my reply.

SHIT, I’d copied and pasted this CV without removing all the skills they’d put. I panicked, I started to make up some nonsense about when I’d used it but didn’t feel very confident with it.

The whole interview was downhill from that point, I was shaking, embarrassed and just wanted to crawl in a hole.

So yeah, that’s my biggest cock up in an interview, I’d love to hear yours!

Oh yeah, I got the internship in the end, a bloody miracle if you ask me.

Thank you, if you like my rambling check out my personal blogging site at https://codeheir.com/

| lukegarrigan |

196,276 | angularJS + undefined SSR = SEO ⚡️ | [Story] How I made an angularJS SPA SEO friendly Introduction By reading the ti... | 0 | 2019-11-18T17:33:33 | https://dev.to/steeve/angularjs-undefined-ssr-seo-46lm | angular, php, writing | # [Story] How I made an angularJS SPA SEO friendly

## Introduction

By reading the title, you may have asked yourself:

`Sacrebleu! Are you still using angularJS? um.. 🤔`

This article is going against the flow by sharing to you outdated content through dying Framework _#AngularJS_. Some years ago, I used a lot of AngularJs and now I want to share the development of my first web project and solution I discovered when making an angularJS application SEO friendly.

AngularJS was created to make your website better by giving a better user experience. You wanted to make your app faster and you thought SPA was going to be awesome. You were right, but not totally...

## Table Of Contents

1. [Some context](#some-context)

2. [The problems](#the-problems)

3. [Solutions available](#solutions-available)

4. [My SSR solution](#my-ssr-solution)

## Some context

Back in time, I was a junior developer discovering web programming and I wanted to help a pom-pom girls organization managed by friends of mine. My goals were to:

- Create a (stunning) website from scratch

- Promote them on the internet

- Learn, learn, learn and learn

I heard about 3 emerging client-side frameworks to create SPA (Single page application): ember, Backbone.js, and AngularJS. As a beginner, I did not pay attention to SPA as I told myself "`A framework created by Google should be SEO friendly`" 😑 I chose AngularJS as front end, PHP as back end API with a MySQL database and handmade CSS design 👨🏻🎨. After some months of reading documentations, testing, designing, and programming during my spare time, the website was done: a fancy home page with a presentation, a team page, a blog page with articles and a contact page. An admin panel was available for the Pom Pom manager to edit the public pages (it's working like a CMS). After some months, I published the website. After following an SEO basis tutorial and creating a robots.txt and sitemap.xml, the website has been referenced on Google. 🎉

I was so proud to discover my first website on the google search list... <b>but</b> only one over 4 URL were referenced. At this moment begun a new adventure: How to make an angularJS SPA website optimized for search engine?

## The problems

Single-page applications are dynamically rewriting the current page rather than loading entire new pages from a server that makes the app faster and instantaneous for the user. However, when the crawlers are reading the template of your page, it sees:

```html

<h1>{{websiteName}}</h1>

```

instead of:

```html

<h1>Title of my website</h1>

```

Also, the head HTML tag was not filled with the right metadata on different pages. It was impossible to index the pages correctly,

resulting in bad organic search.

Before 2009, Google used to index only pure HTML without including dynamic Javascript content. The websites may receive an SEO penalty because Google considered the javascript content render as "Cloaking" and it was against the Webmaster Guidelines. However, since 2015, [Google announced](https://webmasters.googleblog.com/2015/10/deprecating-our-ajax-crawling-scheme.html) the ability to understand dynamic pages:

`Today, as long as you’re not blocking Googlebot from crawling your JavaScript or CSS files, we are generally able to render and understand your web pages like modern browsers.`

The other players like Bing were not able to render javascript. To be clear, you still needed to make your main content available through the server response to guarantee that search engines index it.

## Solutions available

First, enabled [html5mode API on your angular app](https://docs.angularjs.org/guide/$location#hashbang-and-html5-modes) but this requires server-side configuration to rewrite the URL (on the .htaccess in our case). It was not enough to make an SEO friendly website. Below is a list of available solutions at that time:

- Rewrite the codebase to the latest version of Angular (2) because it supports server-side rendering with the Universal module. I already passed to much time on this project.

- One alternative to avoid duplication was to parse the site with a browser executing javascript like PhantomJS to pre-render and save the result into a server and finally serve it to the crawlers. That's mean every change, I had to pre-render the page and push it to the production server? Mhh... it was not really exciting 🤔

- Use a pre-rendering platform or service to crawl the website, execute the javascript, create a cache from it and send the latter to the web crawlers. Sound like a good idea but it's not free.

## My SSR solution

First, enabling angularJS html5mode API is useful to remove the hashbang and get a regular URL.

Second, Here's my trick:

### Turn the main angularJS HTML template into PHP file.

I was able to:

- Edit the PHP template and fill the metadata tags according to the URL requested (title, description, etc...).

- Add some [structured data](https://developers.google.com/search/docs/guides/intro-structured-data) used by google crawlers to display rich results.

- Being SEO friendly through server-side rendering, here is an example of the index.php

```php

<?php

$path = $_SERVER['REQUEST_URI'];

$chunks = explode('/', $path);

$page = "";

$title = "";

$description = "";

$img = 'logo.jpg';

$alt = "";

?>

```

Then depending on the path, I was setting the metadata:

```php

if (in_array("contact", $chunks) == true) {

$title = "Contact";

$description = "Contact us to have more informations !";

$img = "/contact.jpg";

$alt = "contact picture";

} else if (in_array("team", $chunks) == true) {

$title = "Team";

$description = "Some description...";

$img = "/team.jpg";

$alt = "Some alt...";

}

// More else if

```

Next, I inserted the PHP variables into the HTML:

```php

<head>

<meta charset="utf-8" />

<title>

<?php echo $title; ?> - Cheery Dolls

</title>

<base href="/">

<meta name="description" content="<?php echo $description; ?>" />

<meta property="og:url" content="https://cheerydolls.fr<?php echo $path; ?>" />

<meta property="og:title" content="<?php echo $title; ?>" />

<meta property="og:description" content="<?php echo $desc; ?>" />

<!-- etc... -->

<head>

```

Every time a crawler was requesting a page, the server responded by the corresponding metadata that was making the page unique and indexable on the search page.

Also, I was able to print structured data on the index.php, for example, a blog breadcrumb:

```php

<?php

function display_breadcrumb ($article_title) {

$front = '<!-- breadcrumb -->

<script type="application/ld+json">

{

"@context": "https://schema.org",

"@type": "BreadcrumbList",

"itemListElement": [{

"@type": "ListItem",

"position": 1,

"name": "Blog",

"item": "https://cheerydolls.fr/blog"

}';

if (isset($article_title) && !empty($article_title)) {

$front .= ',{

"@type": "ListItem",

"position": 2,

"name": "Article",

"item": "https://cheerydolls.fr/blog/' . $article_title . '"

}';

}

$front .= ']}</script>';

return $front;

}

echo display_breadcrumb($article_name);

?>

```

It was not the best solution (also no the prettiest) to appear on the search page but it was doing the job. 🤷♂️ It leads to a hell of maintenance when I had to make important changes to the content, I had to change the rendering content every time...

## Conclusion

Build a website from scratch on your own is highly instructive in many aspects: server-side, API, client-side, SEO, security, design and more. I will advise you to do the same 😉 I spent so much energy on this project and I did think to give up sometimes because I was struggling so much in the beginning. It was also a project I was doing during my spare time and I was not paid for. By telling you this story, it makes me step back and revive the experience, choices I made and I learned a lot from it.

Nowadays a lot of wonderful frameworks are making our life easier like Nuxt, Next, Hugo and more! [AngularJS entered in long term support period](https://docs.angularjs.org/misc/version-support-status) and I advised you to migrate to the latest version of Angular or other frameworks.

I migrated the original Pom Pom girl's website to a modern stack: Nuxt + Vuetify + Express API. Check this out: [cheerydolls.fr](https://cheerydolls.fr).

If you want to learn more about Search Engine Optimization, I read recently a complete and interesting [cheat sheet](https://dev.to/pagely/seo-cheat-sheet-for-devs-5h1g) on dev.to.

Leave a like/comment to support my article or follow me to be notified of my next articles. 🔥

Thanks for reading!

### References in 2016

https://geoffkenyon.com/angularjs-seo-crawling/

https://stackoverflow.com/questions/41957366/angularjs-seo-once-and-for-all

https://www.verticalmeasures.com/blog/search-optimization/overcoming-angular-seo-issues-associated-with-angularjs-framework/

https://www.yearofmoo.com/2012/11/angularjs-and-seo.html | steeve |

196,312 | Do Design Systems prevent creativity? Let's Discuss about a question | What is a Design System? Design System is a comprehensive guide for project design – a col... | 0 | 2019-10-27T14:56:42 | https://justaashir.com/blog/design-systems-are-preventing-creativity/ | design, startup, discuss, codequality | ### What is a Design System?

Design System is a comprehensive guide for project design – a collection of rules, principles, constraints, and best practices. The core element of the Design System is often a Library of UI Components. These UI elements have also their representation implemented in code.

Design Systems are often called the single source of truth for the team involved in product creation. It allows the team to design, develop and maintain the quality of the product.

___

So I read this on Twitter :

{% twitter 1187438643763220480 %}

I was like how to answer this question? So Design Systems do prevent creativity because it's needed to generate more products than just making a perfect button. Every time you try to create a new button from scratch, your mind will generate a new type of button and you will find your previous work waste. It's much about solving the problems by designing not just designing for good looks.

this concept applies to the Frameworks too, Design Frameworks created by companies so their website looks the same to provide a better user experience but if you try to read the pros and cons of the Bootstrap and other Frameworks, You will find this point in cons that websites look same. Google Material Design is the hard work of many years by the creative team.

They simplify the design for us and what we are doing! We are marketing the Google Material Design by using it in our Web Apps.

The design System really provides consistency and unified design which attracts the user. I will follow-up more in upcoming posts.

{% twitter 1173988027795832832 %}

##### Don't Trust Blindly on the provided design systems by well-known companies

Almost Every Big company has a design system nowadays, I read about their design systems and they were like well-polished pixel-perfect. But Do the companies really follow their own design systems or this is just a myth or they are just doing it for marketing. I don't know! Design System is not everything, implementation of it matters most. I am not an expert at all, I am just writing my point of view.

`Design has always been largely about systems, and how to create products in a scalable and repeatable way… These systems enable us to manage the chaos and create better products… A unified design system is essential to building better and faster; better because a cohesive experience is more easily understood by our users, and faster because it gives us a common language to work with.`

___Airbnb

I'm [Aashir Aamir Khan](https://twitter.com/justaashir), Teenage Junior Web Developer, Looking for some Chocolates in the Chocolate Factory. Blogging to tell the community that I exist in this world.

___

- **Most Popular** : [Bulma - The Most Underrated Framework of the CSS Framework Era

](https://dev.to/justaashir/bulma-the-most-underrated-framework-of-the-css-framework-era-2gj8)

- **Most Popular** [Web Development Projects That can help You Get a Job in 2019-2020](https://dev.to/justaashir/web-development-projects-that-can-definitely-get-you-a-job-in-2019-2020-4c36)

- **My Study About Static Site Generators**

- [Static Site Generators are not for me! My Experience with Jekyll, Hugo, and NetlifyCMS

](https://dev.to/justaashir/static-site-generators-are-not-for-me-my-experience-with-jekyll-hugo-and-netlifycms-4mo5)

- [What is Going on With Static Site Generators? Why the numbers are increasing?

](https://dev.to/justaashir/what-is-going-on-with-static-site-generators-why-the-numbers-are-increasing-47fj)

| justaashir |

196,389 | Firefox (and other browsers) will be making better use of height and width attributes for modern sites | This video goes into it all... I'm excited about this because we deal with this problem on DEV.... | 0 | 2019-10-27T16:07:05 | https://dev.to/ben/firefox-and-other-browsers-will-be-making-better-use-of-height-and-width-attributes-for-modern-sites-4kpm | news, webdev, firefox, css | This video goes into it all...

{% youtube 4-d_SoCHeWE %}

I'm excited about this because we deal with this problem on DEV. Generating the proper height and width attributes on user generated content is not table stakes, but this change makes ultimately getting it right _way_ less hacky.

I've been really annoyed by this browser behavior and considered some unconventional ways to deal with this. I'm glad we didn't try to do something overly hacky.

This is a total aside to this feature in general, but in case you're curious, I made an issue about how we can implement this on DEV's user-generated content if anyone wants to take a hack at it...

{% github https://github.com/thepracticaldev/dev.to/issues/4616 %}

If anybody has more info about this in Chrome than is mentioned in the video, please drop a comment.

Happy coding ❤️ | ben |

196,442 | Overlay com cor sobre imagem no CSS: pseudo-element ou element? Analisando os métodos | Como fazer um overlay sobre uma imagem, mas por baixo de um texto, e que altere a opacidade no hover?... | 0 | 2019-10-27T21:07:56 | https://dev.to/quila/overlay-com-cor-sobre-imagem-no-css-pseudo-element-ou-element-analisando-os-metodos-41o3 | css, html | Como fazer um ***overlay sobre uma imagem, mas por baixo de um texto, e que altere a opacidade no hover***? Talvez com [pseudo-elements](https://developer.mozilla.org/pt-BR/docs/Web/CSS/Pseudo-elementos), se o HTML já estiver montado, sendo necessário apenas alterar o CSS ou usando elementos básicos.

A primeira vista, usar :pseudo-elements me parece mais prático, já que preciso mexer apenas no CSS do projeto, evitando qualquer modificação no HTML. Mas sabemos que é possível obter o mesmo resultado usando [elementos básicos](https://developer.mozilla.org/pt-BR/docs/Web/HTML/Element), e com o mesmo (quase o mesmo) CSS obter visualmente o mesmo resultado.

Mira:

Vale ressaltar que esse post não faz um comparativo de performance *(mas poderia)*, tão pouco irá apontar a melhor maneira ou a correta de resolver esse problema. Esse comparativo é apenas para fins didáticos.

---

## Ponto de partida

A partir de dois _cards_ idênticos, onde o _card_ recebe uma imagem de fundo e comporta apenas duas _[div](https://developer.mozilla.org/pt-BR/docs/Web/HTML/Element/div)_, sendo respectivamente título e subtitulo.

*O texto no rodapé do card com a cor branca é apenas para ficar elegante* :)

{% codepen https://codepen.io/quilamcz/pen/ExxXGXx ?default-tab=html,result %}

Veremos então como fazer um ***overlay sobre uma imagem, mas por baixo de um texto, e que altere a opacidade no hover...***

---

## Pseudo-element

O pseudo-element [:before](https://developer.mozilla.org/pt-BR/docs/Web/CSS/::before) e [:after](https://developer.mozilla.org/pt-BR/docs/Web/CSS/::after) para ser visível é necessário especificar um conteúdo, sendo essa a dificuldade mais corriqueira, já que é bem fácil esquece-lo.

Nesse caso, como não precisamos de conteúdo, adicionamos uma _string_ vazia apenas para o mesmo ser inserido no DOM:

```css

content: '';

```

Para estilizar e posicionar afim de obter o overlay, é necessário aplicar o pseudo-element no próprio card, além de alterar a posição do mesmo para *relative*, dessa maneira:

Acrescentei uma classe modificadora no primeiro card chamada **card--pseudo-elements**, para diferencia-lo do padrão.

```css

.card--pseudo-elements {

position: relative;

}

.card--pseudo-elements:before {

/* requerido */

content: '';

/* estilo de preto quase transparente */

background-color: black;

opacity: 0.5;

/* posiciona nos limites do elemento pai */

position: absolute;

top: 0;

right: 0;

bottom: 0;

left: 0;

/* suavização básica */

transition: 200ms all;

}

```

Feito isso, para fazer o efeito de escurecer o overlay, é necessário apenas aplicar o hover no card, e manipular o pseudo-element, assim:

```css

.card--pseudo-elements:hover:before {

opacity: 1;

}

```

Estaria pronto se não fosse por um problema: o overlay cobre todo o conteúdo do card. Isso ocorre por quê *(de modo resumido)* o elemento com posição absoluta fica numa camada superior [(z-index)](https://www.devmedia.com.br/css-z-index-entendendo-sobre-o-eixo-z-na-web/28698).

Contornamos isso apenas definindo o z-index dos elementos, dentro do card:

```css

.card--pseudo-elements .card__title,

.card--pseudo-elements .card__subtitle {

z-index: 1;

}

```

*Nesse estágio você pode ter se questionado ou tentado aplicar um z-index negativo para o seu :pseudo-element, afim de empurra-lo para trás do texto, mas isso não funciona. Veja mais sobre [z-index](https://www.google.com/search?q=z-index) para entender melhor.*

O resultado final com :pseudo-elements você confere abaixo:

{% codepen https://codepen.io/quilamcz/pen/MWWoxeE %}

---

## Elemento

Neste método, precisamos acrescentar um **elemento** no HTML. Além da classe módificadora *card--element* para diferenciar do outro método. O código fica assim:

```html

<div class="card">

<div class="card__title">

Maceió

</div>

<div class="card__subtitle">

Alagoas

</div>

<span class="card__overlay">

<!-- div overlay vazia -->

</span>

</div>

```

_Você pode inserir no início ou no final do card, **pessoalmente** prefiro inserir no final e como span visando reduzir o risco de interferência no layout atual._

No CSS o código é quase idêntico, mudando apenas os seletores, e a não obrigatoriedade do content. Veja:

```css

.card--element .card__overlay {

background-color: black;

opacity: 0.5;

position: absolute;

top: 0;

right: 0;

bottom: 0;

left: 0;

transition: 200ms all;

}

/* aplicando o hover */

.card--element:hover .card__overlay {

opacity: 0.9;

}

```

Aqui encontramos o mesmo problema do :pseudo-element, o overlay sobrepõe todo o conteúdo do card, sendo assim a solução é a mesma:

```css

.card--element .card__title,

.card--element .card__subtitle {

z-index: 1;

}

```

Abaixo você confere o resultado final com elemento básico:

{% codepen https://codepen.io/quilamcz/pen/YzzQgOP %}

## Conclusão

*Nota-se que a diferença entre os métodos é mínima, como o seletor diferente para o CSS e, de modo geral, uma linha a mais de código para ambos.*

Se com o :pseudo-element é necessário adicionar o content à sua regra de estilo e dispensa a marcação HTML, com elemento básico não precisamos do content, mas é necessário adicionar o elemento ao HTML. **O estilo e posicionamento do overlay é feito exatamente da mesma maneira.**

Abaixo deixo o resultado final com os dois métodos:

{% codepen https://codepen.io/quilamcz/pen/ExxXOme %}

---

**Não esqueça de comentar com suas dúvidas e/ou sugestões. Obrigado.**

*Talvez continue com a parte 2, abordando acessibilidade e performance.* | quila |

196,488 | Make Your Python Tests Run 10x Faster By using Heroku's CI | In this post, I'll show you how to deploy a very simple Python web app with very long tests. I'll also show you how to speed up those tests significantly using parallelism. | 0 | 2019-10-27T20:06:07 | https://www.daolf.com/posts/heroku-python-tests/ | python, devops, heroku | ---

title: Make Your Python Tests Run 10x Faster By using Heroku's CI

published: true

description: In this post, I'll show you how to deploy a very simple Python web app with very long tests. I'll also show you how to speed up those tests significantly using parallelism.

tags: ["python", "devops", "heroku"]

canonical_url: https://www.daolf.com/posts/heroku-python-tests/

cover_image: "https://thepracticaldev.s3.amazonaws.com/i/70gwdq9s9qt4aieg6k79.jpeg"

---

In this post, I'll show you how to deploy a very simple Python web app with very long tests. I'll also show you how to speed up those tests significantly using parallelism.

If you are already familiar with Heroku and just want to go straight to the point, go directly to [part 3](#parallel).

# It's testing time.

For my last project, ([a web scraping API](https://www.scrapingbee.com)) we decided to have part of our infrastructure on Heroku. The reason was simple: neither my co-founder nor I were very good at the ops side of dev, so we have chosen the simplest, most time-efficient way to deploy our app: Heroku. Prior to this we'd had middling experience with AWS, in particular [EBS](https://aws.amazon.com/elasticbeanstalk/).

Make no mistake – this simplicity comes at a price, and Heroku is crazy expensive. But their free plan is very good for side projects such as a [Twitch SMS notificator](https://www.freecodecamp.org/news/20-lines-of-python-code-get-notified-by-sms-when-your-favorite-team-scores-a-goal/) 😎.

So as I said, I've been using Heroku for quite a bit of time. And since the beginning we used the lightweight but simple CI integration that would automatically deploy our application every time we push, if and only if all our tests pass.

Nothing new under the sun here.

In this post you will see how to easily deploy a Heroku application and set up the continuous integration. But more importantly, you will see how to parallelize tests. Again, if you are already familiar with how to deploy a Heroku application and the continuous application, go directly here to learn about parallelising the test.

# First, deploy an app on Heroku:

If you don't already, you need to create a [Heroku account ](www.heroku.com). You also need to download and install the Heroku client.

I've provided a [test project](https://github.com/daolf/parallel-testing-heroku) on Github, so do not hesitate to check it out it you need help bootstrapping this tutorial.

You can pull this repo, `cd` into it and just do a `heroku create --app <app name>`. Then if you go onto your [app dashboard](https://dashboard.heroku.com/apps), you'll see your new app.

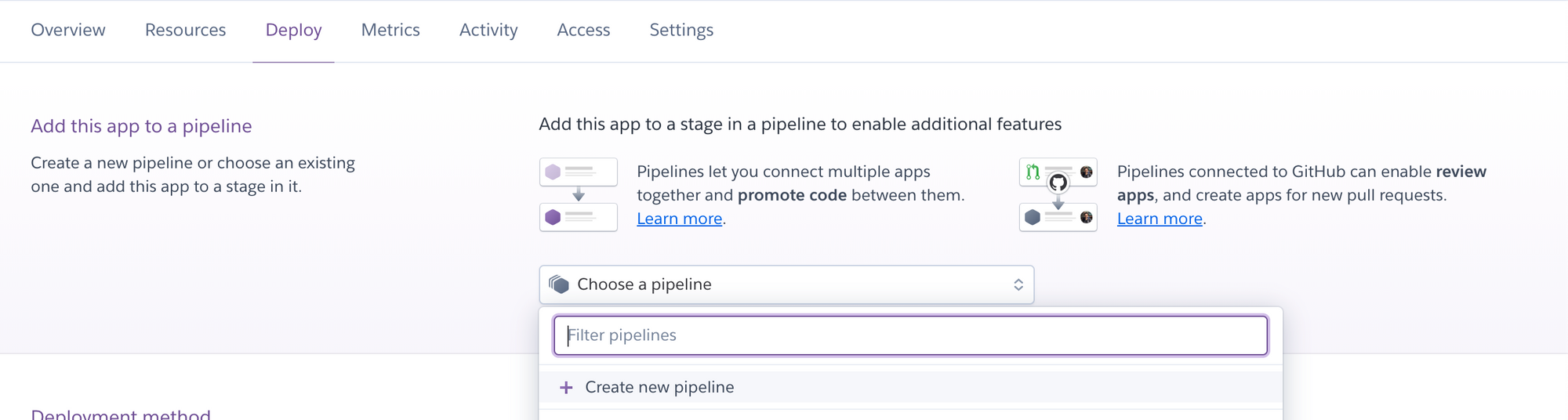

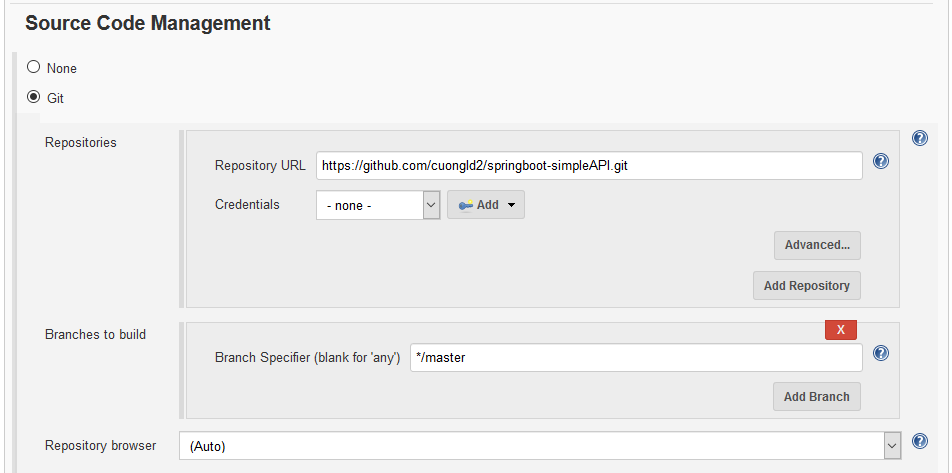

OK, now comes the interesting part – just go onto your dashboard and click on the name of your newly created app, then go to the "deploy" panel.

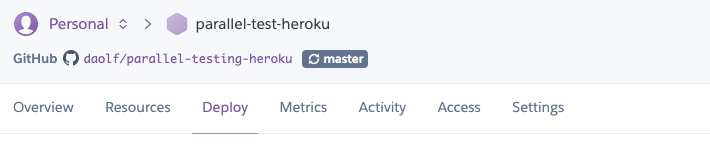

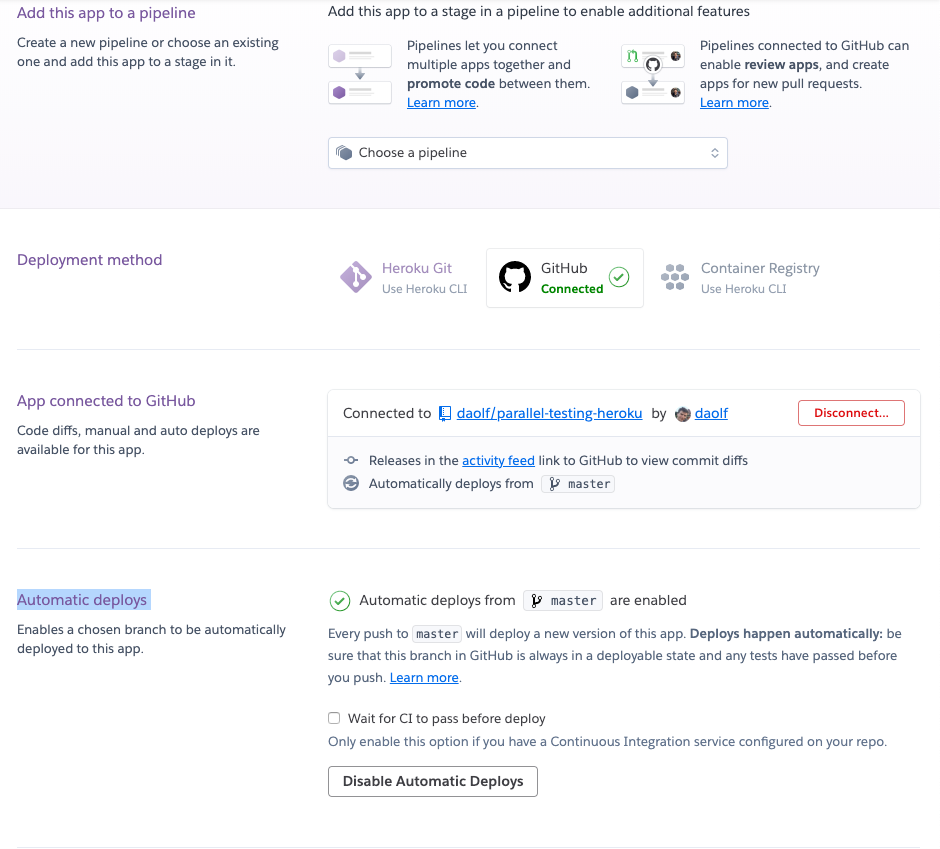

We will now link this Heroku app with your Github repo. This is rather easy: simply click on "Github" in the "Deployment method" section, add your repo in the "App connected to Github" section, and don't forget to click "Enable automatic deploys" in the "Automatic deploys" section.

Once everything is setup it should look a little bit like this:

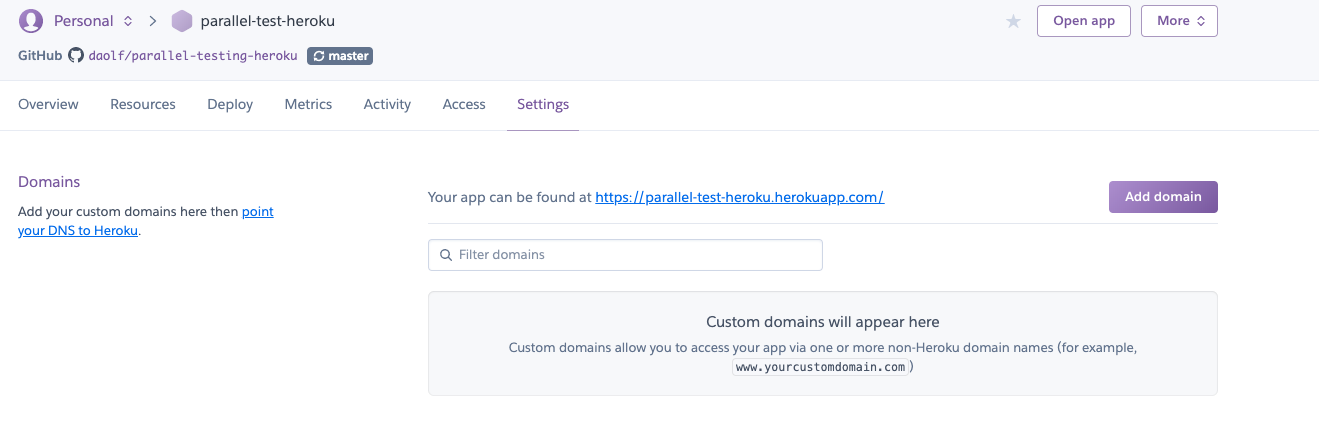

If you go over "Settings -> Domains" you should see the domain where your app is live.

So now your app is live, and every-time you'll push to Github a new deploy will take place.

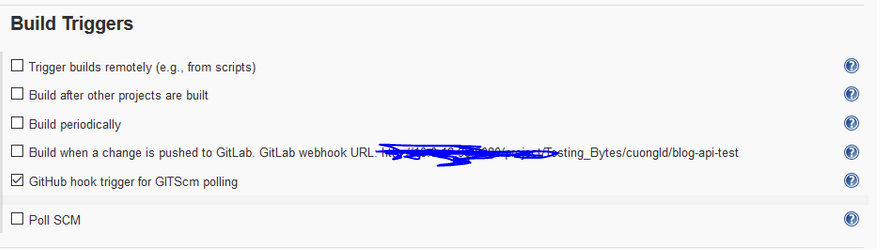

# Then add tests and CI:

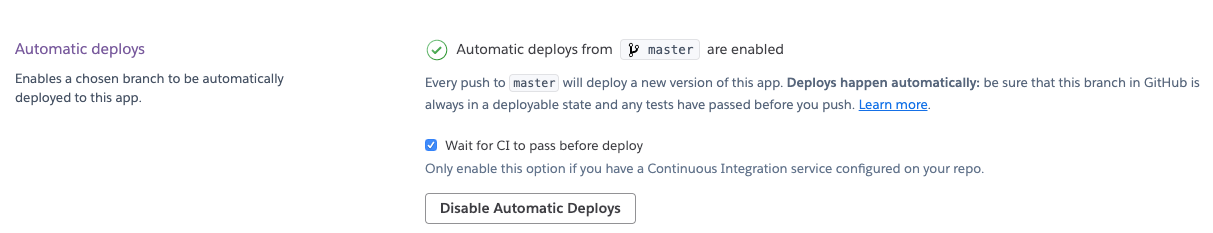

In order to run tests on Heroku you have to do to is click on "Wait for CI to deploy" in the deploy section of your app.

You also need to add your application to a Heroku pipeline.

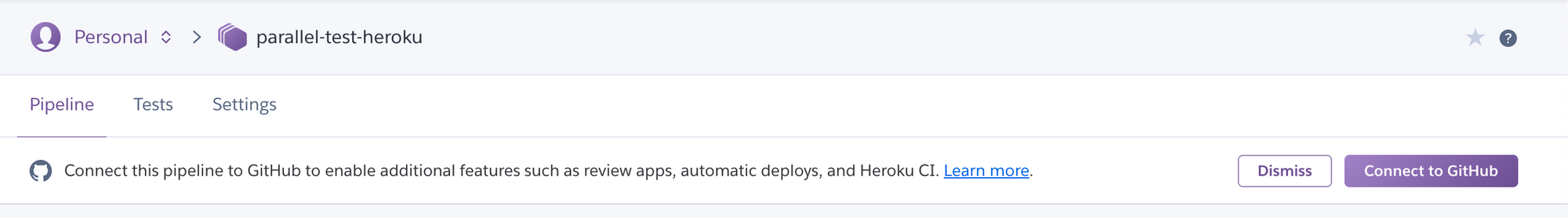

Doing this is really easy: just go on the Deploy tab of your application and create a new Pipeline with the name of your choice.

You have now access to the Pipeline view where you can click on your previously deployed app.

Go over to the Tests tab, link your Github repo, and click on "Enable Heroku CI". Be aware, this option costs **$10 a month**.

Let's go back to our code. The test file is already written, and now, all you have to do to trigger the magic is simply to push to master.

`git commit --allow-empty -m "Trigger heroku" && git push origin master`

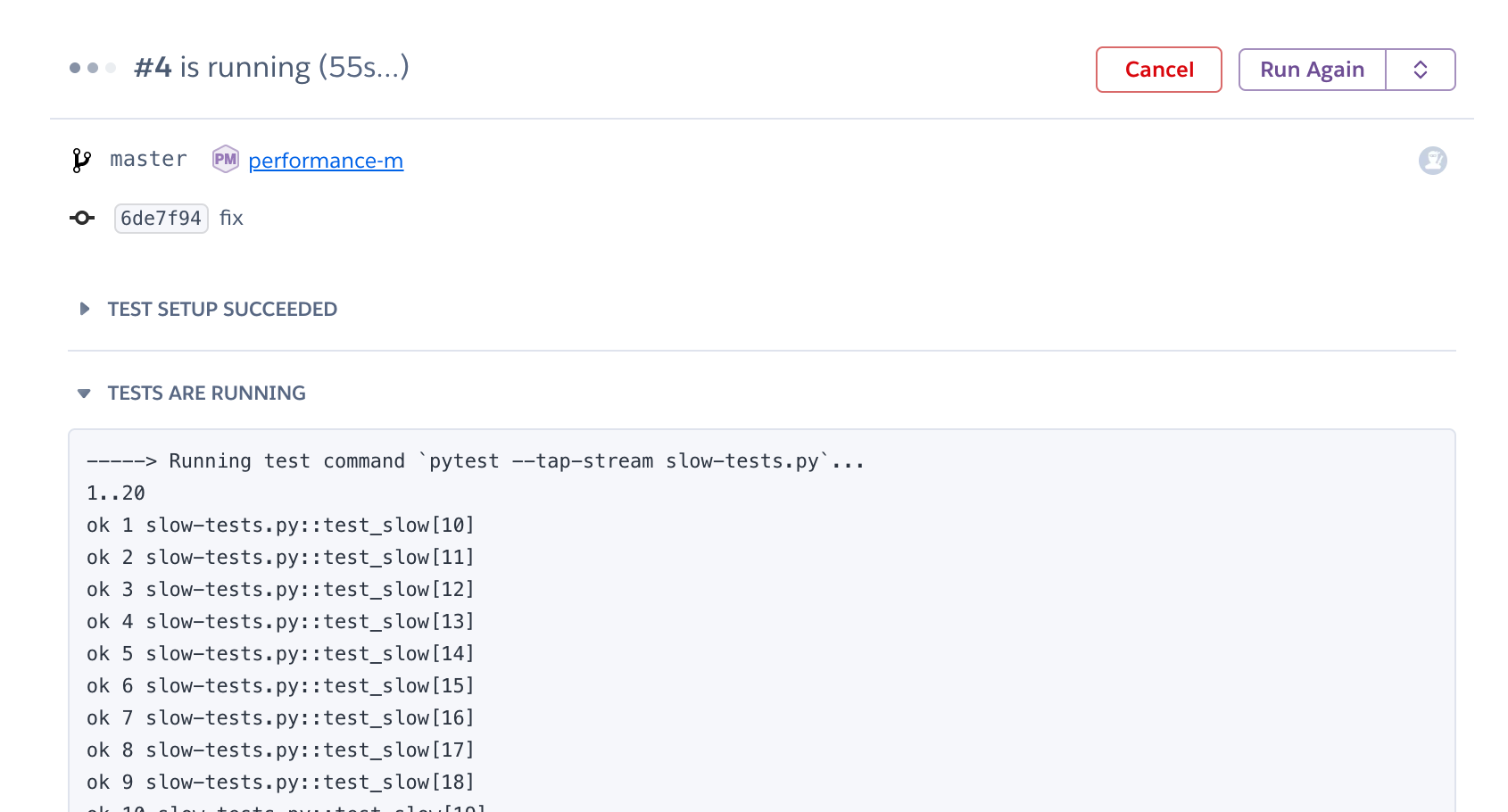

And now, the app won't deploy right away – Heroku will wait for tests to pass before deploying. You can check what's going on behind the curtain on the Test tab.

The command that is run during the test is defined in the `app.json` file.

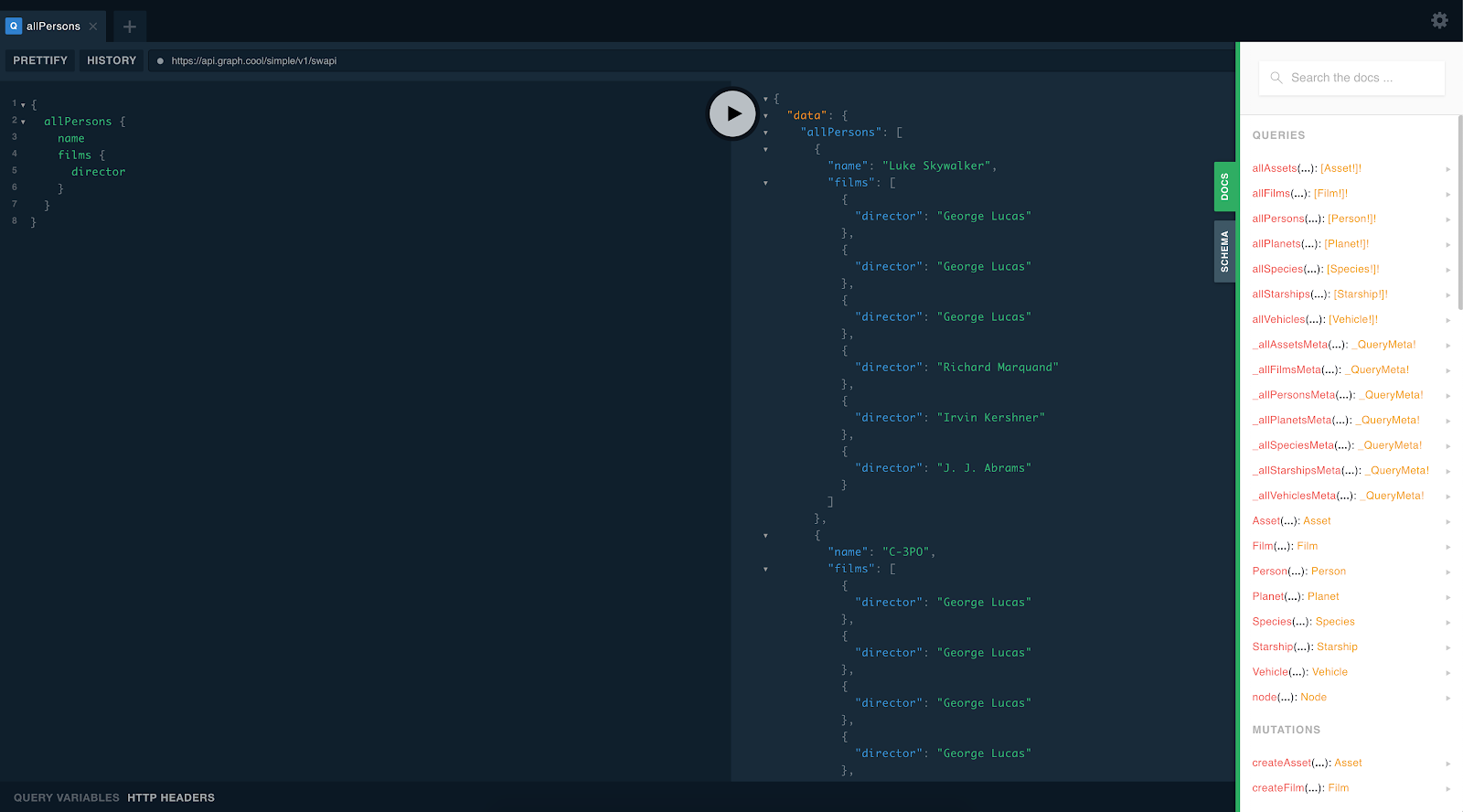

As you can see, tests are now being run sequentially on Heroku. If you look at the `slow-tests.py` file, you will see that I defined my tests using `pytest.mark.parametrize` that allows me to trigger multiple tests in one line:

```python

pytest.mark.parametrize("wait_time", [5] * 20)

def test_slow(wait_time):

time.sleep(wait_time)

assert True

```

This decorator means that the test will be run 20 times with `wait_time=5`.

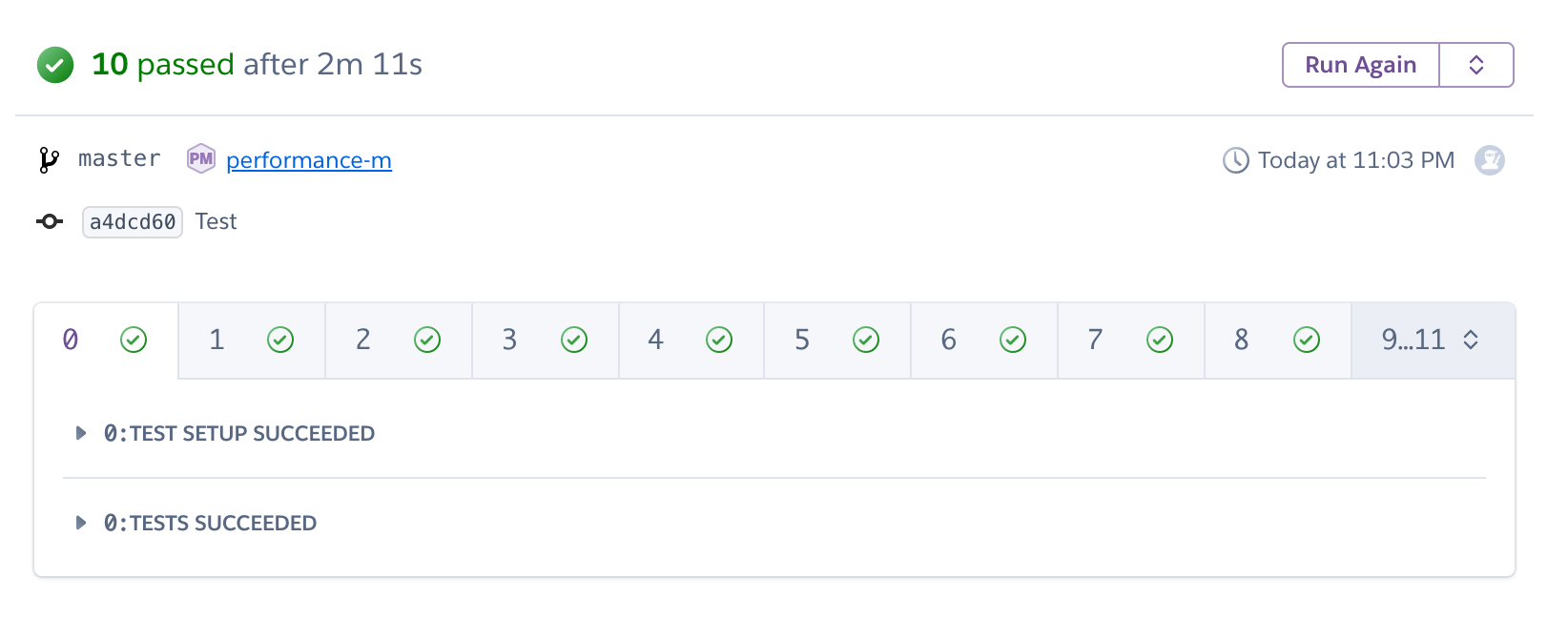

As you can see in Heroku, this test suite is (artificially) rather slow:

<h1 id="parallel"> Parallelising test on Heroku </h1>

As stated [here](https://devcenter.heroku.com/articles/heroku-ci-parallel-test-runs) in the doc, Heroku easily offers the ability to parallelise tests. In order to launch your tests on multiple dynos at the same time, you just have to tweak your `app.json` file a little bit.

```json

{

"environments": {

"test": {

"scripts": {

"test-setup": "pip install -r requirements.txt",

"test": "pytest --tap-stream slow-tests.py"

},

"formation": {

"test": {

"quantity": 12

}

}

}

},

"buildpacks": [{ "url": "heroku/python" }]

}

```

The `quantity` key will tell Heroku on how many dynos you want to run your test. From now on, pushing on master will launch the test on 12 dynos. But stopping here won't make your tests faster because the entire test suite will be run on 12 dynos. What we want is to run 1/12 of all tests on each of the 12 dynos.

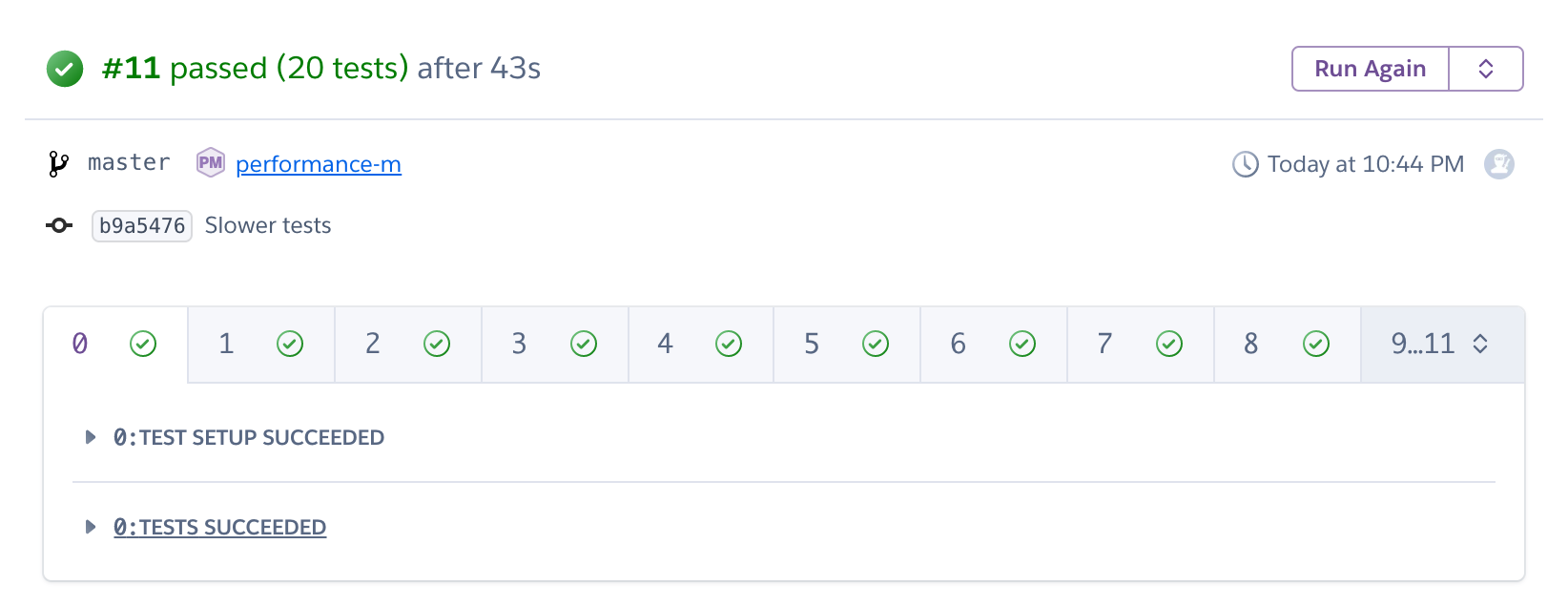

It is actually easy to check:

Tests were run on 12 dynos, but were not that much faster. So now comes the tricky and unfortunately not very well documented part: how do we tell Heroku to run 1/12 of the test suite on each of the 12 dynos?

# Splitting up tests

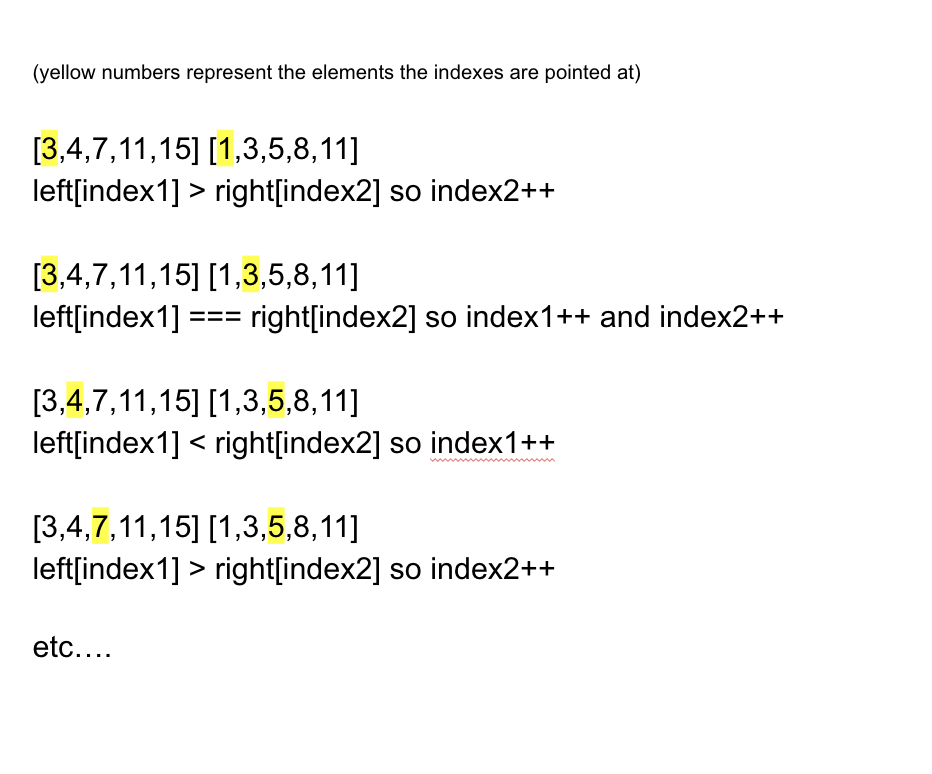

To do this we will use 2 environment variables set by Heroku and accessible on each dyno, `CI_NODE_TOTAL` and `CI_NODE_INDEX`. The first one indicates the total number of the dynos on which the tests are run, and the second one indicates the current dyno are you.

Let's see how to use them. pytest offers you the ability to overwrite the test items that are going to be executed during the test phase. To overwrite this function, just declare this snippet of code in `conftest.py` file:

```python

import os

def pytest_collection_modifyitems(items, config):

ci_node_total = int(os.getenv("CI_NODE_TOTAL", 1))

ci_node_index = int(os.getenv("CI_NODE_INDEX", 0))

items[:] = [

item

for index, item in enumerate(items)

if index % ci_node_total == ci_node_index

]

```

This method is used to modify test items that are going to be tested in place. This method does not return anything, which is why you have to update the array in place. This usually an example of what not to do, but that is not the subject of this post.

You have to keep in mind that this snippet is run on every test node. On every test node, `CI_NODE_TOTAL` is the same and `CI_NODE_INDEX` is different, so by only keeping tests whose index in items modulo `CI_NODE_TOTAL` equals `CI_NODE_INDEX` we ensure 2 things:

- every node runs 1 / `CI_NODE_TOTAL` number of tests

- every test originally in items ended up being run`

If it is not clear, imagine that I have 24 tests in items: `[t1, t2, ...., t24]`. This snippet of code, executed on the number 1, will update the items variable such that, at the end of `pytest_collection_modifyitems`, we have `items = [t1, t13]`. Then in dyno number 2 we have `items = [t2, t14]`, and so on.

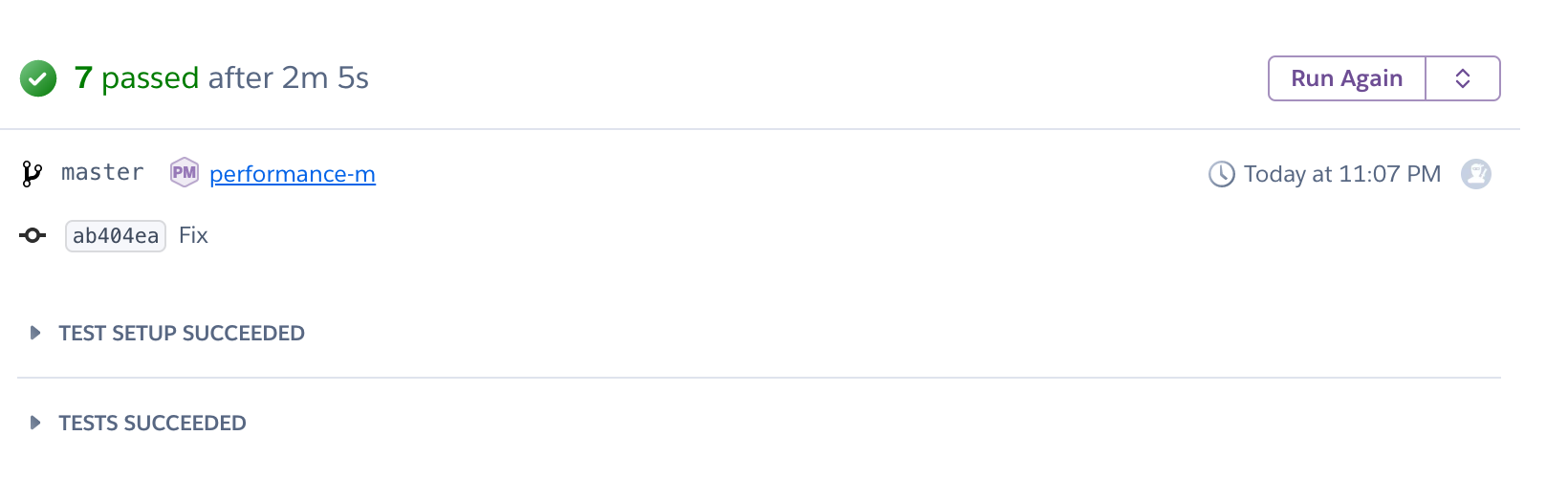

And here is what happens on Heroku once we push:

As you can see, we did not manage to divide the time by 12. The reason is simple: each dyno takes about 30 seconds to boot, and this time is incompressible. But we managed to divide time by 2, and more importantly, we can parallelize our tests to up to 32 dynos, so there is plenty of room for time improvement.

#Thank you for reading

I had trouble finding documentation about parallelising tests on Heroku in Python, and I really hope you liked this post and that it will speed up your deployment time on Heroku. All source code is freely available here on [Github](https://github.com/daolf/parallel-testing-heroku).

I frequently blog about Python and web scraping. Actually, I recently wrote a [Python web-scraping guide](https://www.scrapingbee.com/blog/web-scraping-without-getting-blocked) that got some nice attention from Reddit 😎, so don't hesitate to check it out.

You can follow me [here](https://twitter.com/PierreDeWulf) on Twitter so you don't miss any of my future blog posts.

| daolf |

196,521 | binary: what? why? how? | A quick guide to learn how to convert from decimal to binary and viceversa | 0 | 2019-10-28T14:32:39 | https://dev.to/wendisha/binary-what-why-how-3di7 | binary, computerscience, hardware, numericsystem | ---

title: binary: what? why? how?

published: true

description: A quick guide to learn how to convert from decimal to binary and viceversa

tags: binary,computerscience,hardware,numericsystem

---

In simple words, **binary** refers to something that is composed of or relates to two things; something that can be broken into two groups or categories. In the context of computing and mathematics, **binary** is a numeric system consisting of just 0's and 1's.

Everything needs to be translated into these 0's and 1's, so our computers get the appropiate signals to respond accordingly to the instructions and/or tasks we want them to perform. This translation is possible thanks to, among other components, the thousands, even billions of tiny transistors that when receiving voltage turn ON, representing 1's, and in the absence of said voltage turn OFF, representing 0's.

So now that we know what **binary** is, and why it is relevant to the computing world, let's look at how we can convert the numbers we are used to working with, to the Binary System.

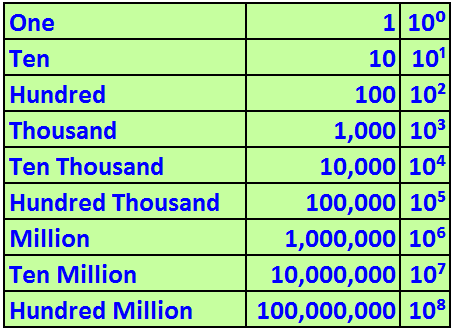

These numbers that we use on a daily basis, are part of the Decimal System, based on powers of 10.

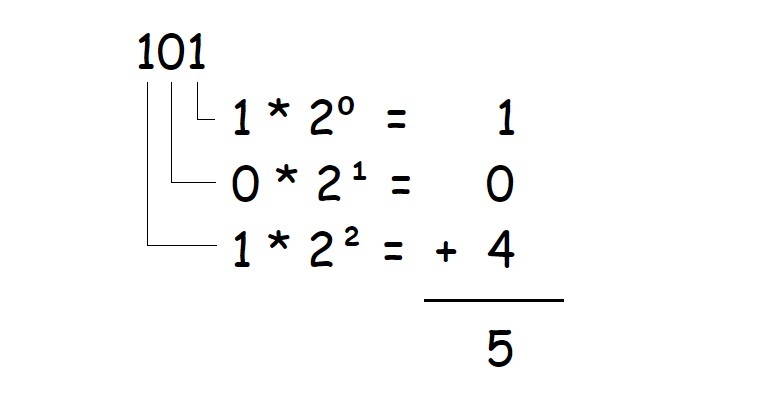

As per the previous image, we can see that if we have a number such as **532**, it is the result of the following operation:

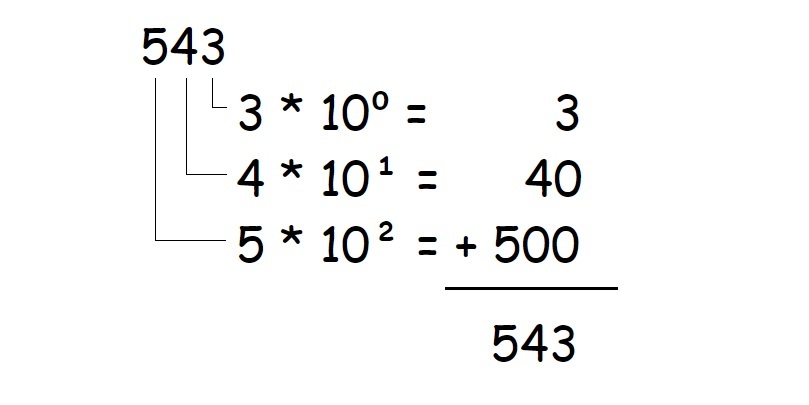

Now let's say we have a sequence of digits representing a binary number, such as **101**. How can we turn this number into our known "base ten" numbers? While the Decimal System uses powers of ten, the Binary System uses powers of two:

So knowing that the Binary System is "base two", we could convert numbers to "base ten" by considering their position from left to right, and summing up the values where a 1 is present.

Each of these binary digits are considered bits, and 8 bits conform a byte. Do these terms sound familiar? More on this on another post :)

| wendisha |

196,547 | Intro To HTML | Week three of coding school. Have you ever been internet surfing and never even looked at the website... | 0 | 2019-10-28T00:10:48 | https://dev.to/speerkrystal/intro-to-html-2i6h | codeschool, html |

Week three of coding school. Have you ever been internet surfing and never even looked at the websites you were going to? Or even noticed them? Well I haven’t until just recently. We are starting our first big project this week in class. Building our personal websites. I was like this is going to be easy I will just click here and copy paste there and done. Um…. Boy was I wrong. Till this week I never knew was HTML was or CSS. SO I have decided to share a few things about the first days of website development.

We are using HTML. HTML is a markup language which is used by the browser to manipulate text, images and other content to display it in required format. At first I was a little intimidated and then realized I had been using it all along. So below are the 5 points of our element.

1. Opening tag

2. Closing tag

3. The content

4. The element

By telling our compnter how to do this we are creating the format of how people will view your website. Now CSS ( Cascading style sheets)is what makes your website look awesome and also where you can add all your personal touches. I am looking forward to seeing my finished product. | speerkrystal |

196,618 | My first Java FX App | Hello fellow Devs, Have a look at my first Java FX Desktop Application with MySQL. Your feedback w... | 0 | 2019-10-28T03:48:36 | https://dev.to/ankitbeniwal/my-first-java-fx-app-3nb1 | javafx, java | Hello fellow Devs,

Have a look at my first Java FX Desktop Application with MySQL.

**Your feedback will be much appreciated.**

{% youtube ZVZwsPTVis4 %}

Thanks in anticipation! | ankitbeniwal |

196,743 | Benefits of Educational Management Software from Warals Technology? | The management system boosts the productivity of the institute. The reason of the increase in produc... | 0 | 2019-10-28T11:53:27 | http://warals.com/wims/ | javascript | The management system boosts the productivity of the institute. The reason of the increase in

productivity is decreased time to maintain the track records and increased accuracy in organizing the

data. We have developed all the required functions which can help to achieve the desired result with minimal effort and fewer resources and not to mention with easy to use interface.

WIMS is like a mini ERP for all kind of institute and training organization. Manage your operations like batch schedule, Student/Employee Management, Attendance and Course Tracker. We genuinely believe in customer success using our Products and Services.

Our Product WIMS is designed to reduce operational complexity and help to achieve the core goal of revenue generation of any Institutes/Training organizations. WIMS, as a specialized product is a result of collaborative efforts by our architects and end-users by getting the business requirements and aligning the flow according to them.

Our reporting Tool helps in informed decision making, and Informative Dashboard provides a quick snapshot of overall business for managers and owners. The IMS Business report is the annual leading industry study that provides vital journalistic reports.

Get a free online demo....

| waralst |

196,769 | 5 reasons to choose Open Source Parse Server and SashiDo for your Backend | We in SashiDo, strongly believe in the “no vendor lock-in” policy. We want our customers to stay with... | 0 | 2019-10-28T12:50:07 | https://blog.sashido.io/five-reasons-to-choose-open-source-parse-server-and-sashido-for-your-backend/ | backend, opensource, parseserver, mbaas | We in SashiDo, strongly believe in the “no vendor lock-in” policy. We want our customers to stay with us because they like the service we are providing, not because they are hostages of proprietary software. We are providing managed Mobile Backend as a Service using Parse Server (open-source backend engine) and our mission is to give to the mobile developers the easiest and cost-effective way to create functional and beautiful apps. We take care of apps’ infrastructure, scaling, security, database maintenance, backups, and Parse Server deployment to give the developers the time and energy to focus on their business idea.

We are really passionate about Parse Server, and we are here to give you 5 reasons why you should consider using Parse Server and SashiDo for your backend.

## 1. MBaaS providers retire or discontinue products, open-source Parse Server is here to stay.

Companies come and go. Just recently Telerik (a Progress company) decided to discontinue yet another MBaaS, forcing their clients to completely rewrite their code. And there’s absolutely no guarantee that this will not happen again. It’s not hard to imagine just how much additional expenses will occur to all Telerik Platform clients. Of course, they have their official alternative provided by Progress - Kinvey, but the new platform is so different that they will have to spend months in order to get their apps up and running in the new platform causing a huge delay in their current roadmap. And who will compensate them? No one.

Open Source Parse Server is a completely different story. It gives you vendor independence or how we like to call it “no vendor lock-in”. There are already several companies that offer Managed Parse Server hosting - SashiDo, Buddy, Back4App and more. Even if one of them decides to pivot or discontinue its offerings, the others will stay. And that’s not all - if you are unsatisfied with one company, you can always migrate to another (often free of charge) without rewriting your code. Or you can choose to host your app in your own Parse Server setup in Heroku or Digital Ocean. Basically, you have plenty of options none of which will drain your development budget which definitely makes the Open Source Parse Server more attractive than any proprietary MBaaS on the market.

##2. Using Open Source Parse Server will reduce your business risk.

Open Source Parse Server is the better choice if you planning long-term projects. Migration from one MBaaS to another will always come with additional expenses for development. And sometimes these expenses can go as much as the original development cost for your app.

Choosing Parse Server will guarantee you that you’ll never be forced to migrate to another backend solution. Even if one Parse Server hosting provider disappears you will always be able to move your app to another provider or get the public source code and host it on your own servers. Your app can live as long as you want without the need for unanticipated budget allocation for development.

##3. Parse Server community is independent of any financial decision.

Parse Server is maintained by developers with the common goal to make it better and feature-rich for other fellow app developers to use. Everybody can contribute to the open-source project and the decisions about what features to develop next are based solely on what the developers need.

On the other hand, companies that offer a proprietary backend solution often prioritize new features developed based on how much money they will be able to make out of them. And sometimes if a certain feature is not considered as profitable enough to make sane to be developed - it’s never started.

##4. Open Source projects have fewer prerequisites for hacker attacks and data leaks.

Cybersecurity is no joke these days. We witness major data breaches even from big companies too often to think there’s no way this can happen to us.

Companies that provide proprietary software often rely on [security through obscurity](https://en.wikipedia.org/wiki/Security_through_obscurity) principle, meaning that if no one outside the company know the exact implementation then the code is secure.

Almost every data breach is caused by human mistakes. Unfortunately, humans do make mistakes and security through obscurity principles may seems to cover up these mistakes nicely. The truth, however, is that nowadays hackers are capable of finding exploits even when the source code is not available for them to use.

No project is protected from human mistakes, not even the open-source one. But what makes open source more secure, is the way how open source communities work.

First, the project’s code is out there for everybody to see. Everybody who is interested can evaluate the code and if they see a vulnerability or just a small bug in the code they can suggest a fix. Instead of being automatically applied, this fix is to send as a suggestion for the people involved with the project. They review it and accept it only when they are sure the quality of the solution meets the project requirements. Finally, when a solution is accepted, it is merged with the master branch of the open-source project and the fix is available for everybody to use immediately. This makes the time between identifying a possible security breach and distributing a fix way shorter in the open-source projects than in the proprietary software.

Although we haven’t witnessed major data leak caused by an MBaaS provider yet, it’s not a guarantee that will not happen in the future. And if you are afraid that your data may go in the wrong hands, it’s better to trust what you can see for yourself, than what others claim, but no one can see for sure.

##5. Many hosting options for Parse Server create pricing competition.

If you are using a closed source MBaaS, provided by just one company, and you know that you’ll spend thousands of dollars for development to migrate to another MBaaS, you’ll accept some percentage of price increases as long as it’s not too drastic. It’s not exactly a monopoly, but the result is almost the same.

On the other hand, if you’re using Open Source Parse Server, you can switch providers at no development cost. When your provider increases its pricing, you have the power to decide whether this increase is reasonable or not and to take action to migrate elsewhere if necessary.

Open Source Parse Server is cool and the list with the benefits you will have with it does not end here. The truth is that it has something to offer to everyone. And we can all agree here, there’s nothing better than having provider alternatives because competition makes the world go round. | veselinastaneva |

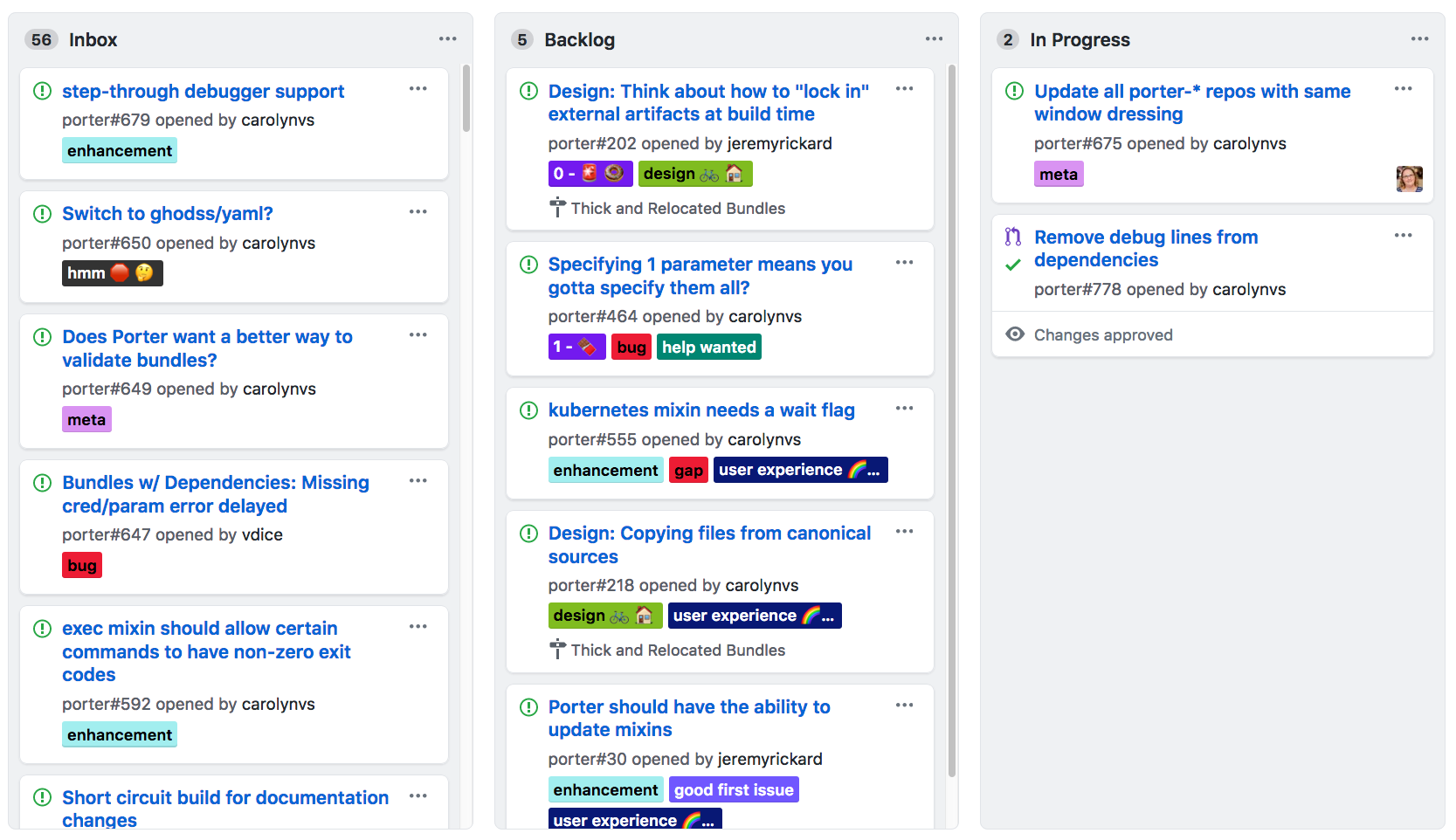

196,783 | Bug tracking & new features development by mastering GitHub Issues | Content List Introduction Milestones, Labels & Assignees Notifications, @mentions, an... | 0 | 2019-10-28T13:15:03 | https://blog.sashido.io/bug-tracking-and-features-development-with-sashido-and-github-issues/ | github, debugging, tutorial, beginners | ## Content List ##

* [**Introduction**](#introduction)

* [**Milestones, Labels & Assignees**](#milestoneslabelsassignees)

* [**Notifications, @mentions, and References**](#notificationsmentionsandreferences)

* [**Search**](#search)

* [**Other Uses**](#otherusesforissues)

## Introduction ##

Each application you create on SashiDo has its own **Free Private** repository on GitHub.

Like any great project, yours will probably need to have a way to track its bugs, tasks, and improvements. Great news, as GitHub has just the right feature for the job. Its called **Issues** and it’s GitHub’s way of tracking and dealing with these sorts of things.

It’s really simple, as they function almost like an email, though the great part is that they can be shared and discussed with your team. Every repository comes with its own Issues section. So let's get straight to the point.

We can start by looking at [**Parse Server’s Issues section**](https://github.com/parse-community/parse-server/issues) for example:

GitHub's tracker is also quite special. It offers excellent formatting and focuses on collaboration and references. You can check out how a typical issue on GitHub looks in the image below:

* You can see that there is a **title** and a **description** which informs us what the issue is about.

* There are some neat color-coded **labels** which help you filter and organize your issue by category.

* **Milestones** are very useful for linking issues with specific feature or project phase (e.g Weekly Sprint 9/5-9/16 or Shipping 1.0). They are like a container for issues.

* Every issue can have an **assignee** which is responsible for moving the issue forward.

* And of course **comments** let anyone with access to the repository provide feedback and suggestions.

## Milestones, Labels & Assignees ##

At some point, you're going to have many issues and you may find it hard to find a specific one. Don't worry, because **labels**, **Milestones** & **assignees** are awesome features to help you filter and sort issues.

You can change and edit all of them by just clicking on their corresponding gears in the sidebar which is located on the right.

In case you do not see the edit button, that means that you do not have permission to edit the issue and you can ask the repository's owner to make you a collaborator, so you can get access.

### Milestones ###

Milestones are a great feature to group up your issues, for example by project, a feature or even a time period. They can be used in many different ways in software development. Here are some examples:

* **Beta Launch** - You can include any bugs that need to be fixed before you release the beta. By doing so you make sure that you don't miss anything along the way.

* **June Issues** - If you have many things to get done, you can specify a Milestone with the given issues you would like to work on during a specific period of time.

* **Redesign** - A great way to handle issues regarding the design of your project as well as collecting new ideas along the way.

### Labels ###

Labels are an exceptionally good way to organize your issues. There is no limit to how many labels you can have and you can even filter your issues by one or more labels at the same time.

### Assignees ###

Each issue may have an assignee which is responsible for pushing it forward. That's a great way to assign a particular person to track the issue and is familiar with it. That way he can easily track and push it forward.

## Notifications, @mentions, and References ##

Communication is the key to resolving any issue. By using GitHub's **@mention** system and references, you can link issues to the right people or teams so that the issues are resolved effectively. These features are really easy to learn and use and work in every text field as they're part of GitHub's text formatting syntax called [GitHub Flavored Markdown](https://help.github.com/articles/writing-on-github#name-and-team-mentions-autocomplete).

If you want to learn more about the syntax, you can check GitHub's official guide on [Mastering Markdown](http://guides.github.com/features/mastering-markdown/)

### Notifications ###

GitHub's way to keep you posted about your Issues is [Notifications](https://github.com/notifications). They can be utilized so you are up-to-date with new issues or to simply know if someone's waiting for your input so they can continue with their work.

You can receive notifications by two ways - either by email or via the web. To configure these settings navigate to your [notification settings](https://github.com/settings/notifications).

If you plan to receive many notifications, it's suggested that you configure to receive **Web** & **Email** notifications for **Participating** and **Web** for **Watching**.

Given that you're using this configuration, you'll receive emails when someone specifically mentions you and you can visit the web-based interface to keep in touch with repositories you have interest in.

You can easily view your notifications in the [Notifications Screen](https://github.com/notifications). It's located on the left of your avatar in the top-right corner of the page. There you can easily navigate through many notifications at the same time. You can mark them as **read** or you can **mute** a specific thread. You can speed up this process by using keyboard shortcuts. To check a list of available shortcuts press the `?` on the page.

There is a little difference between muting a thread and marking it as read. When you've marked a certain thread as read, you will not be notified until there has been a new comment, whereas if you mute it, you will not receive notifications until you are specifically @mentioned in the thread. That gives you the power to choose which threads are important to you and which you are not interested in.

GitHub comes with an awesome feature which syncs your notification statuses. Basically, if you read notification in your email, it will be marked as read in the web-based interface. Awesome, right. However, you should enable your email client to show images if you want this to work.

### @mentions ###

In order to reference other GitHub users in Issues, we use the @mention system. We can do so in both the description and the comment section of an issue by including the `@username` of the person. When we mention someone like that they'll receive a notification about it.

If you want to include people to a given issue you can use the `/cc` syntax. Here's an example:

> It looks like the images on our initial page are not loading.

>

> /cc @alexSmith

That's great, but only if you know who exactly to include. In most cases, we tend to work in teams and that way we may not know who exactly could be of help. Don't worry, because @mentions work with teams within organizations on GitHub. When you @mention a team it will send notifications to everyone who's part of it. Let's say for example you create an organization named @MySashiDoApp and under it, a team @javascrip-devs. An example @mention, in this case, would look like this:

> /cc @MySashiDoApp/javascript-devs

### References ###

Quite often different issues are intertwined or maybe you just want to reference one into the other. This can be done by using the hashtag `#` followed by the issue number.

> Hey @alexR, I think this issue may have something in common with #62

After you've done it, an event is created in issue #62 which looks something like this:

If you want to refer to an issue in an entirely different repo, just include the repository name like this `alex/example_project#62`

You can also reference issues directly in commits by including the issue number in the commit message.

If you preface the commit message with “Fixes”, “Fixed”, “Fix”, “Closes”, “Closed”, or “Close” and afterwards merge to master, the issue will automatically be closed.

All in all, references are great for adding visibility to the history of your project, as they profoundly link the work being done with the bug being tracked.

## Search ##

You can find the search box at the top of the page.

You can apply different filters to your search, for example:

* Keyword - e.g. [all issues mentioning the sidebar](https://github.com/twbs/bootstrap/issues?q=sidebar)

* State - [all issues mentioning the sidebar that are **closed**](https://github.com/twbs/bootstrap/issues?q=sidebar+is%3Aclosed)

* Assignee - [all issues mentioning the sidebar that were assigned to @mdo](https://github.com/twbs/bootstrap/issues?q=sidebar+is%3Aclosed+assignee%3Amdo)

If you'd like to read more about the search, you can do so in the official GitHub Article about [Using search to filter issues and pull requests](https://help.github.com/articles/using-search-to-filter-issues-and-pull-requests/)

## Other uses for Issues ##

Issues are not tied only for development purposes. In fact, they are quite useful to collaborate with your team on whatever issues you have. Here are some examples:

* [Bug tracker for your house](https://github.com/frabcus/house/issues?labels=building&state=open) including such gems as [the door being hung incorrectly](https://github.com/frabcus/house/issues/58)

* [Bug tracker for your open source projects](https://github.com/joyent/node/issues)

* [Request for recipes](https://github.com/newmerator/recipes/issues), maybe you have a good [gluten-free pizza dough recipe](https://github.com/newmerator/recipes/issues/3)?

* MostlyAdequate's [E-books](https://github.com/MostlyAdequate/mostly-adequate-guide/issues)

* [The French Civil Code](https://github.com/steeve/france.code-civil/issues), as unlikely as it may sound.

## Final ##

Whew! That was a long article, but you've learned how to manage and keep track of your issues. | veselinastaneva |

196,804 | It's the end of Python 2. Are we prepared? | In just a few short months, Python 2 will officially reach the end of its supported life. 💀 This mean... | 0 | 2019-10-28T13:45:35 | https://blog.tidelift.com/its-the-end-of-python-2.-are-we-prepared | opensource, python | In just a few short months, Python 2 will officially reach the end of its supported life. 💀 This means that anyone building applications in Python will need to have moved to Python 3 if they want to keep getting updates including, importantly, fixes for any security vulnerabilities in the core of Python or in the standard library. How did we get here?

Python 3 was initially released on December 3, 2008 and included a variety of major compatibility-breaking changes. Overall, these changes are welcomed by Pythonistas and remove a lot of hacks and workarounds that had evolved over time. One of my favorites is that things like *dict.items()* no longer return a list, so you don’t have to use *dict.iteritems()* to get a lower memory and more performant way to iterate over dictionary items.

Others, while still welcome, are more challenging from a compatibility perspective as they bring along syntax changes to the core language. This meant that many of the Python libraries that we use for building applications weren’t ready for Python 3. Django, Flask, urllib3, etc... none were ready for the initial release of Python 3. But they now are and have been for quite a while. The efforts to support multiple Python versions have been great but can’t continue forever.

This isn’t the first time this kind of event has happened in the Python world, though. Way back in October 2000, Python 2 came out. This major release of Python had a number of incompatible changes that impacted developers, especially surrounding how one worked with strings and Unicode.

At that time I was working for Red Hat and maintaining Anaconda, the installer for Red Hat Linux. We had decided that migrating all of the Python usage within Red Hat to Python 2 was a priority. There were many less Python modules back then and a small group of us (employed by Red Hat!) were able to do the work to update the modules we shipped to support Python 2. We sent patches upstream, in some cases taking over upstream maintenance of the module, and were able to help move the world forward to Python 2.

But today is different. There are now over 200,000 Python libraries. It’s not practical for one company to help drive all of the changes in the ecosystem to support this new and incompatible release. And the vast majority of the Python packages out there are maintained by volunteers—people who are doing this in their spare time and as a labor of love.

This challenge of how to migrate successfully from Python 2 to Python 3 is exactly the sort of situation where having an incentive for maintainers to support a new version and work with the incompatibilities would be so much better. It’s a perfect example of why we need to pay the maintainers of the open source libraries that all of our applications depend upon. With strong financial incentives in place, the speed and comprehensiveness of our preparation for Python 3 could have been accelerated.

For users, major incompatible changes like those involved in the migration to Python 3 are an important part of keeping software vibrant, alive, and performant. But without being a psychic, it is simply impossible to understand how the world will change and evolve and require modifications to our software.

| katzj |

196,831 | Need feedback for my personal portfolio | Hello, I'm Maksum Rifai from Indonesia. Please take time to see/test and feedback for my personal por... | 0 | 2019-10-28T14:21:53 | https://dev.to/maksumrifai/need-feedback-for-my-personal-portfolio-4o3h | webdev, portfolio, frontend, bootstrap | Hello, I'm Maksum Rifai from Indonesia.

Please take time to see/test and feedback for my personal portfolio. Thanks.

https://maksumrifai.github.com

https://maksumrifai.netlify.com | maksumrifai |

206,142 | What should a developer CV / resume look like? | There's no doubt in my mind, my CV (it's a resume in other countries) from school is nothing like my... | 0 | 2019-11-16T00:06:58 | https://dev.to/adam_cyclones/what-should-a-developer-cv-look-like-2f1g | discuss | There's no doubt in my mind, my CV (it's a resume in other countries) from school is nothing like my CV today, it's got a layout which is a little bit unusual for a CV but not mad as a box of frogs either. I like my CV like I like my tattoos, black and white. But never mind what I think, should a developer open InDesign and get to work building something that looks more like a magazine layout.

I am especially interested in hearing your thoughts! | adam_cyclones |

206,179 | Hover scroll portfolio, reCAPTCHA form | Module Monday 64 | Module Monday 64 | 0 | 2019-11-18T23:00:36 | https://guide.anymod.com/module-monday/64.html | showdev, githunt, webdev, javascript | ---

title: Hover scroll portfolio, reCAPTCHA form | Module Monday 64

published: true

description: Module Monday 64

tags: showdev, githunt, webdev, javascript

cover_image: https://res.cloudinary.com/component/image/upload/b_rgb:ffffff,c_lpad,h_350,w_800/v1573698448/screenshots/slide-cards.gif

canonical_url: https://guide.anymod.com/module-monday/64.html

---

## Open source modules

These modules can be used on any website, web app, or anywhere else. There are hundreds more like these built & shared on [AnyMod](https://anymod.com).

Click a mod to see it along with its source code.

## Hover scroll portfolio

Cards to display your work.

<a class="btn btn-sm" href="https://anymod.com/mod/portfolio-cards-with-auto-scroll-preview-balmna">View mod</a>

<a href="https://anymod.com/mod/portfolio-cards-with-auto-scroll-preview-balmna">

<img src="https://res.cloudinary.com/component/image/upload/v1573698448/screenshots/slide-cards.gif"/>

</a>

## Transitive intro

Simple hero unit that fades in on page load.

<a class="btn btn-sm" href="https://anymod.com/mod/hero-raodab?preview=true">View mod</a>

<a href="https://anymod.com/mod/hero-raodab?preview=true">

<img src="https://res.cloudinary.com/component/image/upload/v1573698447/screenshots/transitive-intro.gif"/>

</a>

## reCAPTCHA form

Contact form that prevents spam with a reCAPTCHA.

<a class="btn btn-sm" href="https://anymod.com/mod/contact-form-with-recaptcha-edlbn">View mod</a>

<a href="https://anymod.com/mod/contact-form-with-recaptcha-edlbn">

<img src="https://res.cloudinary.com/component/image/upload/v1573698441/screenshots/reCaptcha.gif"/>

</a>

## Image display

Show off your visuals with this responsive mod.