id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

213,551 | What is an engineer's worth? | An engineer's worth can be seen from the number of different perspectives they can look a problem fro... | 0 | 2019-12-01T13:45:04 | https://dev.to/anshbansal/what-is-an-engineer-s-worth-n4m | career | An engineer's worth can be seen from the number of different perspectives they can look a problem from. As a software engineer I may think about getting things across the finish line. But I have found that if I start thinking it from someone else's perspective it makes my work better. Different people in different professions may look at things differently. e.g.

- Someone who is doing software testing might think "How do I break this", "What do developers usually not test"?

- Someone who manages Infrastructure might think "In what sequence would this get deployed" or "How many servers would this change require"?

- Someone who does ETL might think where is data coming from, where is it going?

- Someone who does BI might be more interested in what each column in a table means and how does it relate to business?

- Someone in customer success might be more interested in how will this be rolled out to customers? If something happens would there be workarounds for the customer? How would the team find out before the customer has to report the problem?

- Someone who has to increase sales for a product might be more interested in reducing the number of steps customer has to take to buy something to increase the likelihood of sales.

- Someone who has been working more as a SQL dev might tend to solve problems one tabular operation at a time, someone who has been working in general purpose programming languages might tend to solve problems one row at a time.

I recently started thinking much more about how to grow more as an engineer. This is the answer that I came up with. Try and put more hats when looking at a problem to make the solutions better.

What's your take on this?

---

If you want to know when I write more articles join telegram channel for [Data posts](https://t.me/aseem_data), [software posts](https://t.me/aseem_software) or [other posts](https://t.me/aseem_life). I don't spam, promise. | anshbansal |

213,560 | Self introduction | Hello guys, I am an 11 years old programmer, engineer, photographer, composer, saxophonist and a desi... | 0 | 2019-12-01T14:35:21 | https://dev.to/saxode/self-introduction-hhg | introducing | Hello guys, I am an 11 years old programmer, engineer, photographer, composer, saxophonist and a designer from Taiwan, I code in Arduino, C, HTML, CSS, JS, Python, VBA, I am now learning C++, happy to meet you guys! | saxode |

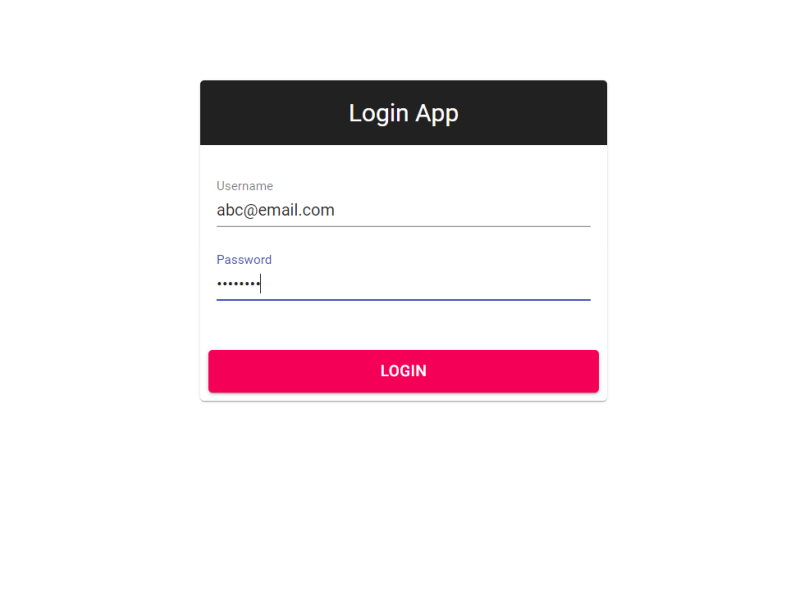

213,613 | A Login Form built using React Hooks | Created a simple login form using React, Typescript and React Material UI featuring useReducer and us... | 0 | 2019-12-01T17:43:13 | https://dev.to/creativesuraj/a-login-form-built-using-react-hooks-2ko2 | react, typescript, javascript, opensource | Created a simple login form using **React, Typescript and React Material UI** featuring `useReducer` and `useEffect` hooks.<br/>

A detailed article on [How to build a React Login Form with Typescript and React hooks](https://surajsharma.net/blog/react-login-form-typescript)

| creativesuraj |

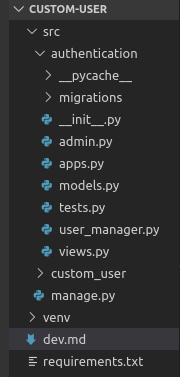

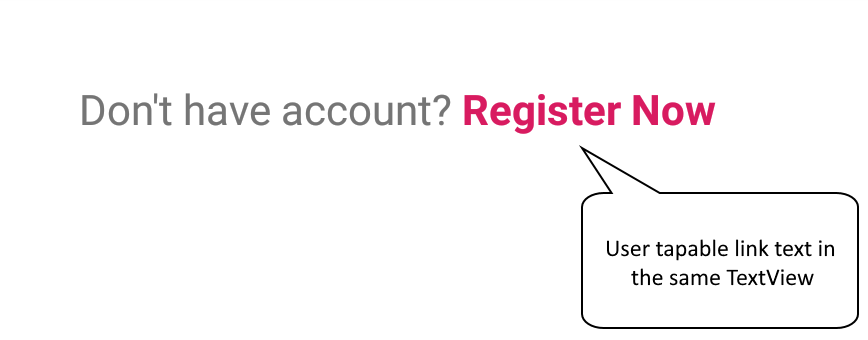

213,631 | Webview native authentication in React Native | Original published on my blog TL;DR: React Native App: https://github.com/smakosh/article-auth-a... | 0 | 2019-12-01T22:06:08 | https://smakosh.com/webview-native-authentication | reactnative, react, mobile, dev | ---

title: Webview native authentication in React Native

published: true

date: 2019-12-01 07:50:01 UTC

tags: React native, React, mobile, dev

canonical_url: https://smakosh.com/webview-native-authentication

cover_image: https://smakosh.com/static/a06a144f8099f4242540e8b94df0ac67/7a72d/webview-react-native.webp

---

> Original published on [my blog](https://smakosh.com/webview-native-authentication)

TL;DR:

- React Native App: [https://github.com/smakosh/article-auth-app](https://github.com/smakosh/article-auth-app)

- React web app: [https://github.com/smakosh/article-auth-web](https://github.com/smakosh/article-auth-web)

- REST API: [https://github.com/smakosh/article-auth-api](https://github.com/smakosh/article-auth-api)

## Theory

Before you start reading and getting into this article, you must be aware that only the minority of mobile developers get into this use case and due to that, I decided to write this article to guide you through on how to implement authentication within a native app that has a webview part included.

You may be wondering why going through this while you could have just converted the web app into a fully native app or just go fully with the webview.

Well to answer the first question, sometimes your client wants a quick & cheap MVP to deploy to TestFlight or the Beta track on the Play Store for their customers to test and share feedback.

The reason we want to have at least the authentication part being fully native is because your submitted app on the App Store unlike Google gets tested by humans, and they reject the app if it uses the webview only.

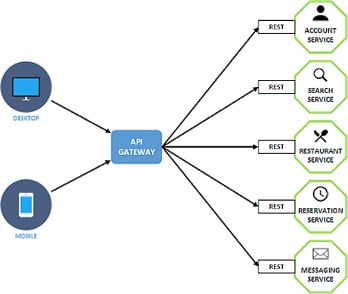

Before we move into to the practical part in this guide, let me explain how we will deal with authentication first:

1. User has to register or sign in

2. A request is sent to our REST or GraphQL API returning a JWT token

3. Token gets stored within the device storage

4. User gets redirected to the webview screen being authenticated as we pass the token to the web app using a great library called `react-native-webview-invoke`, that lets us pass values and functions to be executed within the web app.

5.

When the user signs out within the webview screen, a function will be invoked from the web app that logs out the user on the native app as well

> This way, when the user opens up the app once again, they will start from the authentication process

6. We will be getting the stored token and verifying that it is still valid, if it is, the API will return user’s data, else user has to login once again.

## Practice

So let us begin by initializing a new React Native project using `npx react-native init authApp`

> ⚠️ I’ll be using React Native `0.61.5`

Let us install all the libraries we will be using in this example:

- Navigation: react-native-navigation

- HTTP requests: axios

- Webview: react-native-webview

- Storage: @react-native-community/async-storage

- Forms & validation: formik + yup

- Styling: styled-components

## Configuring RNN

As I’m using React Native 0.61.5, it’s way easier to configure react-native-navigation now, you can follow these steps to get it configured:

### for iOS

1. `cd ios`

2. open the `Podfile`

3. add this line to your Podfile

```ruby

pod 'ReactNativeNavigation', :podspec => '../node_modules/react-native-navigation/ReactNativeNavigation.podspec'

```

1. open your xcworkspace project in Xcode

2. In Xcode, you will need to edit this file: `AppDelegate.m`

3. Its content should look like this

```objective-c

#import "AppDelegate.h"

#import <React/RCTBundleURLProvider.h>

#import <React/RCTRootView.h>

#import <ReactNativeNavigation/ReactNativeNavigation.h>

@implementation AppDelegate

- (BOOL)application:(UIApplication *)application didFinishLaunchingWithOptions:(NSDictionary *)launchOptions

{

NSURL *jsCodeLocation = [[RCTBundleURLProvider sharedSettings] jsBundleURLForBundleRoot:@"index" fallbackResource:nil];

[ReactNativeNavigation bootstrap:jsCodeLocation launchOptions:launchOptions];

return YES;

}

@end

```

1. Open `AppDelegate.h` and make sure its content looks like below

```objective-c

#import <UIKit/UIKit.h>

@interface AppDelegate : UIResponder <UIApplicationDelegate>

@property (nonatomic, strong) UIWindow *window;

@end

```

### for Android

You might want to check [the official guide](https://wix.github.io/react-native-navigation/#/docs/Installing?id=android)

> ⚠️ Make sure to create `react-native.config.js` file on the root of your app and put this content:

```js

module.exports = {

dependencies: {

'@react-native-community/async-storage': {

platforms: {

android: null,

},

},

'react-native-webview': {

platforms: {

android: null,

},

},

},

}

```

We basically want to avoid auto linking those two libraries on Android.

## Registering our screens

Let’s start by opening up our `index.js` file and removing its content, then we will import `Navigation` from react-native-navigation, along with our registered screens under `src/config/index.js` and initialize our app using the `registerAppLaunchedListener` callback.

> `index.js`

```jsx

import { Navigation } from 'react-native-navigation'

import { registerScreens } from './src/config'

registerScreens()

Navigation.events().registerAppLaunchedListener(() => {

Navigation.setRoot({

root: {

component: {

name: 'Initializing',

},

},

})

})

```

We set `Initializing` as the first screen to render.

Let’s now register the rest of the screens

We have:

- **Initializing** screen, which has been explained above ☝️

- **Home** screen which will contain the webview of our web app

- **Login/Register** screens are self descriptive

> `src/config/index.js`

```jsx

import { Navigation } from 'react-native-navigation'

import Initializing from 'src/screens/Initializing'

import Home from 'src/screens/Home'

import Login from 'src/screens/Login'

import Register from 'src/screens/Register'

export const BASE_URL = 'http://localhost:5000/api'

export const REACT_APP = 'http://localhost:3000'

export const registerScreens = () => {

Navigation.registerComponent('Home', () => Home)

Navigation.registerComponent('Initializing', () => Initializing)

Navigation.registerComponent('Login', () => Login)

Navigation.registerComponent('Register', () => Register)

}

```

> `BASE_URL` is our REST API and `REACT_APP` is our React web app.

Now let’s move on creating our screens

> `src/screens/Initializing.js`

This screen is the one that will appear to users first while fetching and validating their tokens

```jsx

import React from 'react'

import Layout from 'src/components/Layout'

import Initializiation from 'src/modules/Initializiation'

export default () => (

<Layout>

<Initializiation />

</Layout>

)

```

Initialization is where the logic exists which lives under `src/modules/Initializing`

```jsx

import React, { useContext } from 'react'

import { View, Text } from 'react-native'

import { Context } from 'src/providers/UserProvider'

import useGetUser from 'src/hooks/useGetUser'

import Container from 'src/components/Container'

import CustomButton from 'src/components/CustomButton'

export default () => {

const { user, dispatch } = useContext(Context)

const { loading, isLoggedIn } = useGetUser(user, dispatch)

return (

<Container>

{loading ? (

<Text>Loading</Text>

) : isLoggedIn ? (

<View>

<Text>Welcome back {user.data.user.username}!</Text>

<CustomButton goHome={() => goHome(user.data.token)}>

Go Home

</CustomButton>

</View>

) : (

<View>

<Text>Welcome!</Text>

<CustomButton onPress={() => goToRegister()}>Register</CustomButton>

<CustomButton onPress={() => goToAuth()}>Sign In</CustomButton>

</View>

)}

</Container>

)

}

```

> Notice that I’m using a custom hook `useGetUser` that contains all that logic and I’m passing the `user` object and `dispatch` function from the User Context.

Layout is a wrapper component that wrapps the passed children with the User Provider as shown below

> You can add the header and more components that are meant to appear on all your screens

Layout lives under `src/components/Layout`

```jsx

import React from 'react'

import UserProvider from 'src/providers/UserProvider'

export default ({ children }) => <UserProvider>{children}</UserProvider>

```

And I’m using React Context API to manage my global state, here’s the User Provider component and reducer

It lives under `src/providers/UserProvider`

```jsx

import React, { useReducer, createContext } from 'react'

import UserReducer from 'src/reducers/UserReducer'

export const Context = createContext()

export default ({ children }) => {

const [user, dispatch] = useReducer(UserReducer, [])

return (

<Context.Provider

value={{

user,

dispatch,

}}

>

{children}

</Context.Provider>

)

}

```

the user reducer lives under `src/reducer/UserReducer`

```js

export default (user, action) => {

switch (action.type) {

case 'SAVE_USER':

return {

...user,

isLoggedIn: true,

data: action.payload,

}

case 'LOGOUT':

return {

...user,

isLoggedIn: false,

data: {},

}

default:

return user

}

}

```

And here’s the `useGetUser` hook which lives under `src/hooks/`

```jsx

import { useState, useEffect, useCallback } from 'react'

import { verifyToken } from 'src/modules/auth/actions'

export default (user, dispatch) => {

const [loading, setLoading] = useState(true)

const [error, _setError] = useState(null)

const fetchUser = useCallback(() => verifyToken(dispatch, setLoading), [

dispatch,

])

useEffect(() => {

if (!user.isLoggedIn) {

fetchUser()

}

}, [user.isLoggedIn, fetchUser])

return {

error,

loading,

isLoggedIn: user.isLoggedIn,

}

}

```

I’m importing `verifyToken` from the auth actions, the action simply verifies that the token hasn’t expired yet, [see Step 6 above on the Theory section](/webview-native-authentication#theory)

> It lives under `src/modules/auth/actions.js`

```jsx

import axios from 'axios'

import AsyncStorage from '@react-native-community/async-storage'

import setAuthToken from 'src/helpers/setAuthToken'

import { BASE_URL } from 'src/config'

export const verifyToken = async (dispatch, setLoading) => {

try {

const token = await AsyncStorage.getItem('token')

if (token) {

const { data } = await axios({

method: 'GET',

url: `${BASE_URL}/user/verify`,

headers: {

'Content-Type': 'application/json',

'x-auth': token,

},

})

setAuthToken(data.token)

await dispatch({ type: 'SAVE_USER', payload: data })

AsyncStorage.setItem('token', data.token)

}

} catch (err) {

setError(err)

} finally {

setLoading(false)

}

}

```

More actions will be added as we move on through this guide through.

Next, let’s prepare both the `SignIn` and `Register` screens:

Login lives under `src/screens/Login`

```jsx

import React from 'react'

import Login from 'src/modules/auth/Login'

import Layout from 'src/components/Layout'

export default () => (

<Layout>

<Login />

</Layout>

)

```

And Login module lives under `src/modules/auth/Login`

```jsx

import React, { useContext } from 'react'

import { View } from 'react-native'

import { Formik } from 'formik'

import * as Yup from 'yup'

import { Context } from 'src/providers/UserProvider'

import { login } from 'src/modules/auth/actions'

import Container from 'src/components/Container'

import InputField from 'src/components/InputField'

import ErrorField from 'src/components/ErrorField'

import CustomButton from 'src/components/CustomButton'

import DismissibleKeyboardView from 'src/components/DismissibleKeyboardView'

import { Label } from '../styles'

export default () => {

const { dispatch } = useContext(Context)

return (

<Formik

initialValues={{

email: '',

password: '',

}}

validationSchema={Yup.object().shape({

email: Yup.string()

.email()

.required(),

password: Yup.string().required(),

})}

onSubmit={async (values, { setSubmitting, setErrors }) => {

try {

login({ dispatch, setErrors, setSubmitting, values })

} catch (err) {

setSubmitting(false)

}

}}

>

{({

isSubmitting,

handleSubmit,

errors,

touched,

values,

handleChange,

handleBlur,

}) => (

<Container>

<DismissibleKeyboardView keyboardShouldPersistTaps="handled">

<View>

<Label>Email</Label>

<InputField

value={values.email}

onChangeText={handleChange('email')}

onBlur={handleBlur('email')}

selectTextOnFocus

/>

{touched.email && errors.email && (

<ErrorField>{errors.email}</ErrorField>

)}

</View>

<View>

<Label>Password</Label>

<InputField

value={values.password}

onChangeText={handleChange('password')}

onBlur={handleBlur('password')}

selectTextOnFocus

secureTextEntry

/>

{touched.password && errors.password && (

<ErrorField>{errors.password}</ErrorField>

)}

</View>

<CustomButton onPress={handleSubmit} disabled={isSubmitting}>

Login

</CustomButton>

</DismissibleKeyboardView>

</Container>

)}

</Formik>

)

}

```

I’m using the newest version of Formik with yup for validation, there is one action called `login` being dispatched there when the form is submitted.

login action lives under `src/modules/auth/actions`, the same file where `verifyToken` lives

```jsx

import axios from 'axios'

import AsyncStorage from '@react-native-community/async-storage'

import setAuthToken from 'src/helpers/setAuthToken'

import { BASE_URL } from 'src/config'

import { goHome } from 'src/config/navigation'

export const login = async ({ dispatch, setErrors, setSubmitting, values }) => {

try {

const { data } = await axios.post(`${BASE_URL}/user/login`, values)

setAuthToken(data.token)

await dispatch({ type: 'SAVE_USER', payload: data })

await AsyncStorage.setItem('token', data.token)

setSubmitting(false)

goHome(data.token)

} catch (err) {

setSubmitting(false)

setErrors({ email: err.response.data.error })

}

}

export const verifyToken = async (dispatch, setLoading) => {

try {

const token = await AsyncStorage.getItem('token')

if (token) {

const { data } = await axios({

method: 'GET',

url: `${BASE_URL}/user/verify`,

headers: {

'Content-Type': 'application/json',

'x-auth': token,

},

})

setAuthToken(data.token)

await dispatch({ type: 'SAVE_USER', payload: data })

AsyncStorage.setItem('token', data.token)

}

} catch (err) {

setError(err)

} finally {

setLoading(false)

}

}

```

We will add three more actions later on as we move on.

The `setAuthToken` function simply adds a `x-auth` header to all upcoming requests

It lives under `src/helpers/setAuthToken`

```jsx

import axios from 'axios'

export default token => {

if (token) {

axios.defaults.headers.common['x-auth'] = token

} else {

delete axios.defaults.headers.common['x-auth']

}

}

```

Register is following the same logic, you’ll be able to find the source code on the repositories as everything will be open sourced, so let’s move on to the important screen which is the **Home** screen

It lives under `src/screens/Home`

```jsx

import React from 'react'

import Home from 'src/modules/dashboard/Home'

import Layout from 'src/components/Layout'

export default ({ token }) => (

<Layout>

<Home token={token} />

</Layout>

)

```

the actual logic exists within `src/module/dashboard/Home`

let’s start by creating an invoke from the native side and add the webview of our React app

```jsx

import React, { Component } from 'react'

import { SafeAreaView } from 'react-native'

import { WebView } from 'react-native-webview'

import createInvoke from 'react-native-webview-invoke/native'

import { REACT_APP } from 'src/config/'

class Home extends Component {

webview

invoke = createInvoke(() => this.webview)

render() {

return (

<SafeAreaView style={{ flex: 1, backgroundColor: '#fff' }}>

<WebView

useWebKit

ref={webview => (this.webview = webview)}

onMessage={this.invoke.listener}

source={{

uri: `${REACT_APP}`,

}}

bounces={false}

/>

</SafeAreaView>

)

}

}

Home.options = {

topBar: {

title: {

text: 'Home',

},

visible: false,

},

}

export default Home

```

We want to pass one function and value from React Native to the React web app:

1. Passing the token as url param, not sure if it’s a good approach to follow, feel free to enlighten me if you know any better approach to achieve this.

2. A function that will log the user out from the React Native app, remove the token from the device storage and redirect them back to the `Login` screen, triggered/invoked from the React web app.

So let’s add that to the **Home** module

```jsx

import React, { Component } from 'react'

import { SafeAreaView, Alert } from 'react-native'

import { WebView } from 'react-native-webview'

import AsyncStorage from '@react-native-community/async-storage'

import createInvoke from 'react-native-webview-invoke/native'

import { goToAuth } from 'src/config/navigation'

import { REACT_APP } from 'src/config/'

class Home extends Component {

webview

invoke = createInvoke(() => this.webview)

componentDidMount() {

this.invoke.define('onLogout', this.onLogout)

}

onLogout = async () => {

try {

AsyncStorage.clear()

goToAuth()

} catch (err) {

Alert.alert('Something went wrong')

}

}

render() {

const { token } = this.props

return (

<SafeAreaView style={{ flex: 1, backgroundColor: '#fff' }}>

<WebView

useWebKit

ref={webview => (this.webview = webview)}

onMessage={this.invoke.listener}

source={{

uri: `${REACT_APP}/?token=${token}`,

}}

bounces={false}

/>

</SafeAreaView>

)

}

}

export default Home

```

Let’s now see how can we handle that from the React web app.

> I’ll skip right into the part where we handle the passed function to invoke it, the source code will be able available for you.

First of all, let’s import `invoke` from `react-native-webview-invoke/browser`

```js

import invoke from 'react-native-webview-invoke/browser'

```

All we have to do to access the function and invoke it is binding, checking if it exists and invoking it.

```js

const onLogout = invoke.bind('onLogout')

if (onLogout) {

onLogout().then(() => {})

}

```

That’s basically the guide through to implement authentication within a native app that has a webview section.

If you managed to make it until the end, make sure to subscribe to the news letter down below in order to get the latest articles delivered right to your inbox!

- React Native App: [https://github.com/smakosh/article-auth-app](https://github.com/smakosh/article-auth-app)

- React web app: [https://github.com/smakosh/article-auth-web](https://github.com/smakosh/article-auth-web)

- REST API: [https://github.com/smakosh/article-auth-api](https://github.com/smakosh/article-auth-api) | smakosh |

213,651 | Advent of Code 2019 Day 1 | Day one of Advent of Code 2019 Challenge | 0 | 2019-12-01T21:28:01 | https://dev.to/yamakasy/advent-of-code-2019-day-1-9ba | ---

title: Advent of Code 2019 Day 1

published: true

description: Day one of Advent of Code 2019 Challenge

tags:

---

# The Challenge

Advent of Code is an Advent calendar of small programming puzzles for a variety of skill sets and skill levels that can be solved in any programming language you like.

Each day two puzzles will be provided.

# Why this year?

The last 2-3 month I have been improving my Functional programming skills. First I readed about FP on Javascript and now I am focusing on Haskell. I though this would be a good oportunity to use what I am learning.

# First puzzle of the day

`Fuel required to launch a given module is based on its mass. Specifically, to find the fuel required for a module, take its mass, divide by three, round down, and subtract 2.`

Apart from this description some examples are given:

1. `For a mass of 12, divide by 3 and round down to get 4, then subtract 2 to get 2`

2. `For a mass of 14, dividing by 3 and rounding down still yields 4, so the fuel required is also 2.`

3. `For a mass of 1969, the fuel required is 654.`

4. `For a mass of 100756, the fuel required is 33583.`

`the Fuel Counter-Upper needs to know the total fuel requirement. To find it, individually calculate the fuel needed for the mass of each module (your puzzle input), then add together all the fuel values.`

# Implementation process an solution

As I am really new to Haskell I decided to also implement the puzzle in Javascript.

As some examples were given, I decide to transform them intro a test cases.

```javascript

const { calculateFuelRequired } = require('./FuelRequerimentCalculator')

describe('calculateFuelRequired', () => {

it('returns 0 when the mass is 0', () => {

const fuelRequired = calculateFuelRequired(0);

expect(fuelRequired).toBe(0);

});

it('returns 0 when the mass is less than 0', () => {

const fuelRequired = calculateFuelRequired(-1);

expect(fuelRequired).toBe(0);

});

it('returns 2 when the mass is 12', () => {

const fuelRequired = calculateFuelRequired(12);

expect(fuelRequired).toBe(2);

});

it('returns 2 when the mass is 14', () => {

const fuelRequired = calculateFuelRequired(14);

expect(fuelRequired).toBe(2);

});

it('returns 654 when the mass is 1969', () => {

const fuelRequired = calculateFuelRequired(1969);

expect(fuelRequired).toBe(654);

});

it('returns 33583 when the mass is 100756', () => {

const fuelRequired = calculateFuelRequired(100756);

expect(fuelRequired).toBe(33583);

});

})

```

The resulting code is simple:

```javascript

function calculateFuelRequired(mass) {

if (mass <= 0) return 0;

return Math.floor((mass / 3)) - 2;

}

```

To execute the code against the inputs given by the challenge and calculating the answer:

```javascript

function main() {

const values = getValues(); //function that returns an array with all the values given.

const total = values.reduce((prev, curr) => {

return prev + calculateFuelRequired(curr);

}, 0);

console.log(total);

}

```

Ones solved the challenge in Javascript I tried my best to translate it to something that the Haskell compiler wouldn't claim about:

```haskell

module FuelRequerimentCalculator where

calculateFuelRequired :: Double -> Integer

calculateFuelRequired mass | mass <= 0 = 0

| otherwise = floor(mass/3) - 2

main :: Integer

main = sum (map calculateFuelRequired getValues)

```

#Second puzzle of the day

`Fuel itself requires fuel just like a module - take its mass, divide by three, round down, and subtract 2. However, that fuel also requires fuel, and that fuel requires fuel, and so on. Any mass that would require negative fuel should instead be treated as if it requires zero fuel; the remaining mass, if any, is instead handled by wishing really hard, which has no mass and is outside the scope of this calculation.`

1. `A module of mass 14 requires 2 fuel. This fuel requires no further fuel (2 divided by 3 and rounded down is 0, which would call for a negative fuel), so the total fuel required is still just 2.`

2. `At first, a module of mass 1969 requires 654 fuel. Then, this fuel requires 216 more fuel (654 / 3 - 2). 216 then requires 70 more fuel, which requires 21 fuel, which requires 5 fuel, which requires no further fuel. So, the total fuel required for a module of mass 1969 is 654 + 216 + 70 + 21 + 5 = 966.`

3. `The fuel required by a module of mass 100756 and its fuel is: 33583 + 11192 + 3728 + 1240 + 411 + 135 + 43 + 12 + 2 = 50346.`

`What is the sum of the fuel requirements?`

# Implementation process an solution

The same examples with the updated result are given, so I create again some tests.

```javascript

describe('calculateFuelRequiredCountingWithFuel', () => {

it('returns 0 when the mass is 0', () => {

const fuelRequired = calculateFuelRequiredCountingWithFuel(0);

expect(fuelRequired).toBe(0);

});

it('returns 0 when the mass is less than 0', () => {

const fuelRequired = calculateFuelRequiredCountingWithFuel(-1);

expect(fuelRequired).toBe(0);

});

it('returns 2 when the mass is 12', () => {

const fuelRequired = calculateFuelRequiredCountingWithFuel(12);

expect(fuelRequired).toBe(2);

});

it('returns 2 when the mass is 14', () => {

const fuelRequired = calculateFuelRequiredCountingWithFuel(14);

expect(fuelRequired).toBe(2);

});

it('returns 966 when the mass is 1969', () => {

const fuelRequired = calculateFuelRequiredCountingWithFuel(1969);

expect(fuelRequired).toBe(966);

});

it('returns 50346 when the mass is 100756', () => {

const fuelRequired = calculateFuelRequiredCountingWithFuel(100756);

expect(fuelRequired).toBe(50346);

});

})

```

The solution is a bit more complicated and it includes recursion.

```javascript

function calculateFuelRequiredCountingWithFuel(mass) {

const calc = Math.floor((mass / 3)) - 2;

if (calc <= 0) return 0;

const result = calc + calculateFuelRequiredCountingWithFuel(calc);

return result;

}

```

And this is the updated main method to show both the solutions.

```javascript

function main() {

const values = getValues();

const noFueltotal = values.reduce((prev, curr) => {

return prev + calculateFuelRequired(curr);

}, 0);

const withFuelTotal = values.reduce((prev, curr) => {

return prev + calculateFuelRequiredCountingWithFuel(curr);

}, 0);

console.log('The total without adding the fuel is ' + noFueltotal);

console.log('The total adding the fuel is ' + withFuelTotal);

}

```

And finally the Haskell version:

```haskell

calculateFuelRequiredCountingWithFuel:: Double -> Integer

calculateFuelRequiredCountingWithFuel mass | calculateFuelRequired mass <= 0 = 0

| otherwise = (calculateFuelRequired mass) + (calculateFuelRequiredCountingWithFuel ( fromIntegral (calculateFuelRequired mass)))

main2 :: Integer

main2 = sum (map calculateFuelRequiredCountingWithFuel getValues)

```

#Conclusion

This was the first day, I will continue updating the repository and trying to create one post peer day.

{% github IObregon/adventofcode2019 no-readme%}

If you want to participate this is the web page of the challenge [Advent of code](https://adventofcode.com/2019/day/1) | yamakasy | |

213,662 | Refactor a function to be more functional | Functional paradigm is kind of mystical knowledge for me, as it involves a lot of hard words and conc... | 0 | 2019-12-01T21:34:01 | https://dev.to/pavelloz/refactor-a-function-to-be-more-functional-1bdj | javascript, functional, beginners | Functional paradigm is kind of mystical knowledge for me, as it involves a lot of hard words and concepts from math. But once in a while I read or watch materials about it hoping that I will understand more. This has been going for years now.

Some concepts are easy, but without a good, iterative example it is still hard to incorporate into everyday developer's life. Last night, I think I found a good example, that would help me a lot with understanding some of the basics of composition if someone showed me something like I'm about to show you. I hope you find it hopeful in your journey to writing good and easy to maintain code.

---

Function that will serve me as an example will take a string and return a number of unique letters in that string.

## Prepare the test case

I always do that first, because I prototype in [RunJS](https://runjs.dev). I find it the easiest and quickest that way. There is also Scratchpad in Firefox, but RunJS has live code evaluation.

```js

const input = 'Hi, my name is Pawel!';

const expected = 11;

const count = (string) => '';

console.log(expected === count(input));

```

## Make it work

Now let's implement the first version that will return correct result.

```js

const count = string => {

const array = Array.from(string);

const onlyLetters = array.filter(char => char.match(/[a-zA-Z]/));

const lowercase = onlyLetters.map(char => char.toLowerCase());

const unique = new Set(lowercase);

const output = unique.size;

return output;

}

```

It is pretty verbose, line by line it is pretty easy to understand what is going on. Probably the biggest downside is that it uses a lot of assignments.

Note: Im using `Set` to make array values unique.

## Make it better

Let me walk you through some of the variants I came up with when trying to find the optimal solution.

### A little bit of chaining

```js

const count = string => {

const array = Array.from(string)

.filter(char => char.match(/[a-zA-Z]/))

.map(char => char.toLowerCase());

return new Set(array).size;

}

```

Now we used less constants and used the fact that `Array` can chain methods like `filter`, and `map`. This is a first step to what is coming next.

## "The Oneliner"

```js

const count = string => {

return new Set(

Array.from(string)

.filter(char => char.match(/[a-zA-Z]/))

.map(char => char.toLowerCase())

).size;

}

```

In general I consider chaining a very nice way of making things prettier. But when your goal is only to make code shorter, usually readability hurts, like in this case. I wouldn't consider this a improvement compared to the previous version.

But its fun to know it could be done, and shows how important indentation is in those cases where you decide to go with it.

## One big chain

```js

const count = string => {

return [string]

.map(string => Array.from(string))

.map(array => array.filter(char => char.match(/[a-zA-Z]/)))

.map(array => array.map(char => char.toLowerCase()))

.map(array => new Set(array))

.map(set => set.size)[0]

}

```

This stage takes advantage of the same chaining property of `Array` from second version, but this time it takes things to the next level, literally. It puts input immediately into an array and uses `map` for composition to do the necessary operations.

## More composition

```js

const onlySmallLetters = string => {

return Array.from(string)

.filter(char => char.match(/[a-zA-Z]/))

.map(char => char.toLowerCase())

}

const count = string => {

return [string]

.map(onlySmallLetters)

.map(array => new Set(array))

.map(set => set.size)[0]

}

```

Lastly, not the most condensed version, but this implementation adds another dimension.

You might want to reuse `onlySmallLetters` function somewhere else - this would be called composition - compose functions from smaller functions. Those smaller functions are easier to test, understand and debug.

And this is where I landed at the end of my journey with this challenge that I found when learning basics of python.

---

Mixing types, accepting a string and returning an array might not be predictable, thats why, as I understand, functional programming has specific constructs to make it easier and more predictable for everybody knowing the paradigm.

Dive deeper into those mystical parts of functional programming in JS by watching "[Professor Frisby Introduces Composable Functional JavaScript](https://egghead.io/courses/professor-frisby-introduces-composable-functional-javascript)" by Brian Lonsdorf. | pavelloz |

213,711 | New rails 6 app with postgresql database and heroku deployment | I have been working with rails professionally for over a year now, and I want to share the guide I us... | 0 | 2019-12-01T23:40:06 | https://dev.to/chair/quick-guide-new-rails-6-app-with-postgresql-database-and-heroku-deployment-7ji | ruby, rails, postgres, todayilearned | I have been working with rails professionally for over a year now, and I want to share the guide I use personally to quickly spin up a new rails app with a postgresql database, and deploy to heroku.

My aim is to make it quick and easy for you to get going, and take the fuzziness out of the setup process.

Find my gist here!

https://gist.github.com/chair28980/acaa7c8f8a71dc663194ce79c44d00b9

I'd love to hear your feedback. Drop a comment here or find me on twitter: @vrycmfy | chair |

213,724 | How To Create Custom State Hook - Custom Hooks ReactJS Mini Course Part 1 | Welcome to ReactJS Custom Hooks Mini Course. In this course we'll learn how to create custom hooks in React, how to test them using React Testing Library and how to publish them as a standalone npm package. | 3,557 | 2019-12-02T01:28:06 | https://dev.to/satansdeer/how-to-create-custom-state-hook-custom-hooks-reactjs-mini-course-part-1-5mh | react | ---

title: How To Create Custom State Hook - Custom Hooks ReactJS Mini Course Part 1

published: true

description: Welcome to ReactJS Custom Hooks Mini Course. In this course we'll learn how to create custom hooks in React, how to test them using React Testing Library and how to publish them as a standalone npm package.

tags: react

series: Custom Hooks ReactJS Mini Course

---

{% youtube hTmWfTniyIk %}

Welcome to ReactJS Custom Hooks Mini Course. In this course we'll learn how to create custom hooks in React, how to test them using React Testing Library and how to publish them as a standalone npm package.

Course playlist: http://bit.ly/35FHALa

Subscribe to the channel: http://bit.ly/2QZ2UY3 | satansdeer |

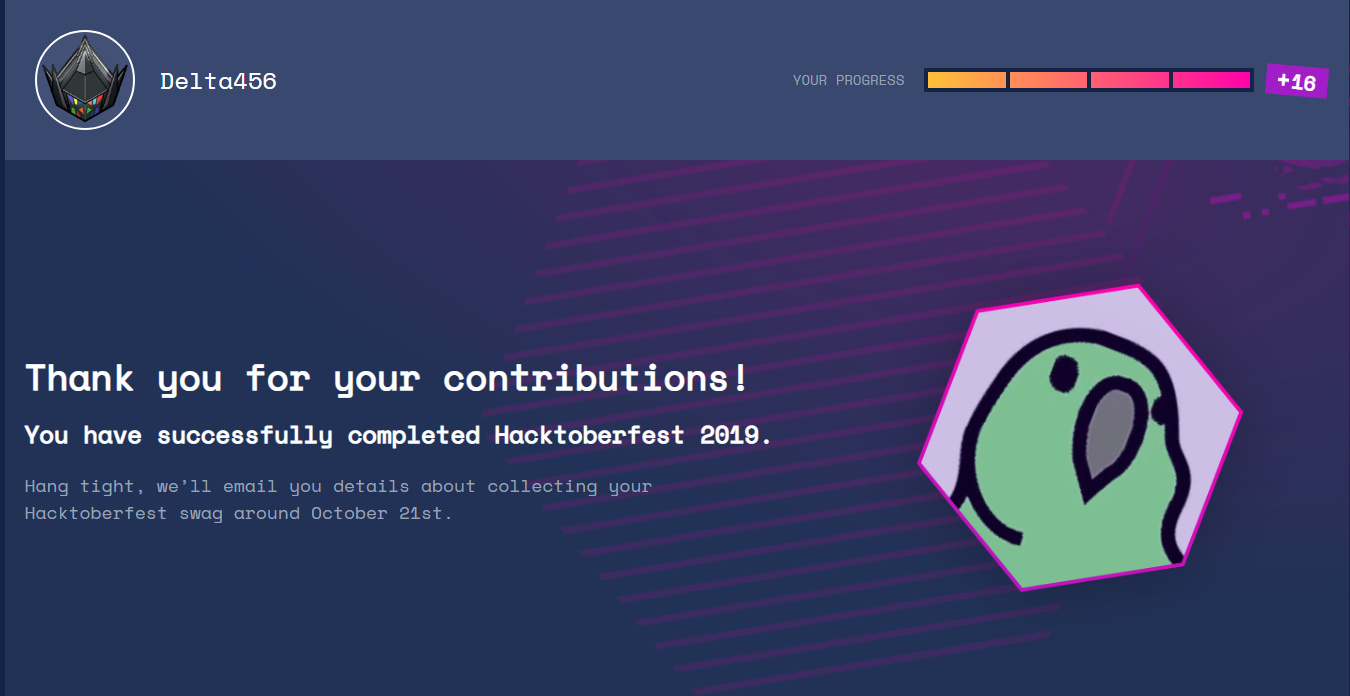

213,735 | Minor Follow-up on Hacktoberfest 2019 | In a previous essay I wrote about the process behind my Hacktoberfest 2019 contri... | 0 | 2019-12-02T08:49:27 | http://hugomartins.io/essays/minor-followup-on-hacktoberfest/ | opensource, hacktoberfest | ---

title: Minor Follow-up on Hacktoberfest 2019

published: true

date: 2019-12-01 00:00:00 UTC

tags: open-source, hacktoberfest

canonical_url: http://hugomartins.io/essays/minor-followup-on-hacktoberfest/

---

In a previous essay I wrote about the process behind my Hacktoberfest 2019 contributions. It is now worthwhile to make a quick follow-up, at this point, at the status of those contributions.

It is relevant to start by mentioning I made a bit of a mess of my contributions, right after I wrote up the article. I tried to correct an issue with the email and user of the contributions and re-wrote the history of my git repositories…bummer! That forced me to close my initial PRs and having to open up new ones - and re-writing all the changes I had made.

I had opened [PR #4476](https://github.com/pyinstaller/pyinstaller/pull/4476), [PR #4499](https://github.com/pyinstaller/pyinstaller/pull/4499) and [PR #4500](https://github.com/pyinstaller/pyinstaller/pull/4500). PR #4499 had to be closed and re-opened as [PR #4516](https://github.com/pyinstaller/pyinstaller/pull/4516). PR #4500 had to be closed and re-opened as [PR #4517](https://github.com/pyinstaller/pyinstaller/pull/4517). That makes a total of 5 PRs, of which only 3 were actually valid for review by PyInstaller’s maintainers.

PR #4476 was accepted, within a two-week period. This seems to be a fairly usual time frame, by looking at previous PR reviews in the project. There was a minor wrapping issues, which the maintainers of PyInstaller promptly resolved and pushed to my branch. PR #4516 was merged within approximately the same two-week period, without any need for further modification. PR #4517 took a bit longer to merge because I had made an error when creating the changelog entry - plus there was a need for a small lint correction.

I was very happy with the end result, getting all 3 PRs approved and merged. I was surprised with how friendly the maintainers of PyInstaller were, even with the small errors. I was also thankful they completely ignored my shenanigans of duplicating the PRs.

They should be an example for the entire community, how they deal with new contributors and their mistakes. That is one of the reasons why they have 280+ contributors on Github.

Now, looking towards the future, I hope I can be of more use to them by adding more hooks. Adding hooks seems to be something that I can do, without creating a lot of work for the maintainers in terms of reviews. But , it will also allow me to start understanding more of the codebase and contribute in different areas. | caramelomartins |

213,746 | Created iPadOS/iOS browser to operate without touching | I bought iPad Pro and found that I need to touch screen to move pages. I know there is browser extens... | 0 | 2019-12-02T03:11:23 | https://dev.to/shohei/created-ipados-ios-browser-to-operate-without-touching-4fg2 | reactnative, ios, ipados, javascript |

I bought iPad Pro and found that I need to touch screen to move pages. I know there is browser extension, like [Vimium](https://github.com/philc/vimium) to operate with only keyboard. To move page, use "hit-a-hint". I like this idea and wanted to have the app. I looked for App store but I unabled to find it. Ok, it's time to develop it by myself. I spent 5 months to build this. I used React Native as I had experience on it.

I named this app as Wazari Brower and this can be downloaded from [here](https://www.wazaterm.com/wazaribrowser). I wrote documentation [here]()

This is an open source project and able to see the code inside from [the github repository](https://github.com/shohey1226/wazari). I’m sure there are tons of bugs there, so any bug report is welcome (and also PR :) )

I’ve also found that iOS doesn’t support keyboard key configuration. So I added this function to replace capslock and ctrl. The final feature is folloing. I hope you like this.

## Feature

* Customizable shortcuts to operate browser. e.g. Change tabs without touching screen.

* Panes to split views vertiacally or horizontally.

* Hit-A-Hint - without touching, click links to move pages.

* Customizable modifiers. e.g. swap capslock with ctrl key.

* Customizable default search engine - DuckDuckGo or Google

* Exclude web sites not to use keymapping. Some dynamic web site doesn't use Input type=text or textarea, which Wazari keymapping doesn't work. But you can exclude these website so you can still type on it.

* Histories to go back easily

* Integrated to Wazaterm so you can terminal - I had a separate project for this. I wanted to integrate with it too.

| shohei |

213,831 | Copying and extending Objects in javaScript | We are going to review few of the most popular techniques to copy and extend javaScript Objects | 0 | 2019-12-06T19:43:49 | https://dev.to/leolanese/copying-and-extending-objects-in-javascript-3o0o | javascrpt, deepcopy, shallowcopy, es6 | ---

title: Copying and extending Objects in javaScript

published: true

description: We are going to review few of the most popular techniques to copy and extend javaScript Objects

tags: javascrpt, deepCopy, shallowCopy, ES6

---

1) Copying Objects

--[1.1] Copying plain Objects

--[1.2] Copying deeply nested Objects

2) Extending Objects

--[2.1] Extending plain Objects

--[2.2] Extending deeply nested Objects

---

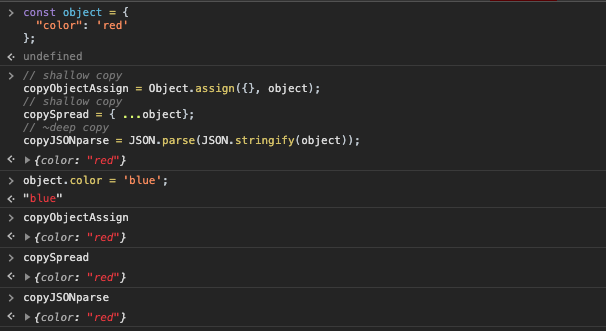

### 1) Copying plain Objects:

#### [1.1] Copying plain Objects

Simple Array of Object

```javascript

const object = {

'color': 'red'

};

// shallow copy

copyObjectAssign = Object.assign({}, object);

// shallow copy

copySpread = { ...object};

// ~deep copy

copyJSONparse = JSON.parse(JSON.stringify(object));

object.color = 'blue'; // changing original object

object === copyJSONparse; // FALSE

object === copyObjectAssign; // FALSE

object === copySpread ; // FALSE

```

{% runkit

// hidden setup JavaScript code goes in this preamble area

%}

const object = {

'color': 'red'

};

// shallow copy

copyObjectAssign = Object.assign({}, object);

// shallow copy

copySpread = { ...object};

// ~deep copy

copyJSONparse = JSON.parse(JSON.stringify(object));

object.color = 'blue'; // changing original object

console.log(object === copyJSONparse); // FALSE

console.log(object === copyObjectAssign); // FALSE

console.log(object === copySpread); // FALSE

{% endrunkit %}

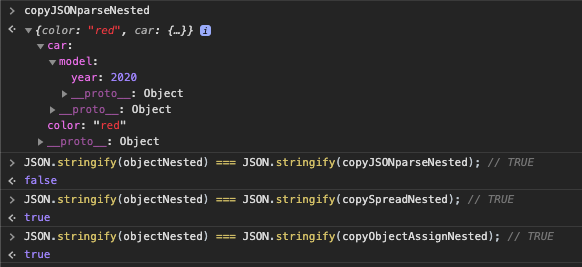

#### [1.2] Copying deeply nested Objects

These are Objects that have more than one level deep

```javascript

const objectNested = {

"color": "red",

"car": {

"model": {

year: 2020

}

}

};

// shallow copy

copyObjectAssignNested = Object.assign({}, objectNested );

// shallow copy

copySpreadNested = { ...objectNested };

// ~deep copy

copyJSONparseNested = JSON.parse(JSON.stringify(objectNested));

// changing the original objectNested

objectNested.car.model.year = 1975; // change here

// original object IS changed!

objectNested // {"color":"red", "car":{"model": { year: 1975 }}

// shallow-copy IS changed!

copyObjectAssignNested // {"color":"red", "car":{"model": { year: 1975 }}

copySpreadNested // {"color":"red", "car": {"model": { year: 1975 }}

// deep-copy NOT changed: deepClone Object won't have any effect if the main source object obj is modified and vice-versa

copyJSONparseNested // {"color":"red", "car": {"model": { year: 2020 }}

// let see what changes then?

JSON.stringify(objectNested) === JSON.stringify(copyObjectAssignNested); // TRUE

JSON.stringify(objectNested) === JSON.stringify(copySpreadNested); // TRUE

JSON.stringify(objectNested) === JSON.stringify(copyJSONparseNested); // FALSE (changes don't affect each other after deep-copy)

```

{% runkit

// hidden setup JavaScript code goes in this preamble area

const hiddenVar = 42

%}

const objectNested = {

"color": "red",

"car": {

"model": {

year: 2020

}

}

};

// shallow copy

copyObjectAssignNested = Object.assign({}, objectNested );

// shallow copy

copySpreadNested = { ...objectNested };

// ~deep copy

copyJSONparseNested = JSON.parse(JSON.stringify(objectNested));

// changing the original objectNested

objectNested.car.model.year = 1975; // change here

// original object IS changed!

objectNested // {"color":"red", "car":{"model": { year: 1975 }}

// shallow-copy IS changed!

copyObjectAssignNested // {"color":"red", "car":{"model": { year: 1975 }}

copySpreadNested // {"color":"red", "car": {"model": { year: 1975 }}

// deep-copy NOT changed: deepClone Object won't have any effect if the main source object obj is modified and vice-versa

copyJSONparseNested // {"color":"red", "car": {"model": { year: 2020 }}

// let see what changes then?

console.log(JSON.stringify(objectNested) === JSON.stringify(copyObjectAssignNested)); // TRUE

console.log(JSON.stringify(objectNested) === JSON.stringify(copySpreadNested)); // TRUE

console.log(JSON.stringify(objectNested) === JSON.stringify(copyJSONparseNested)); // FALSE (changes don't affect each other after deep-copy)

{% endrunkit %}

### Why:

- Object.assign({})

Can only make shallow copies of objects so it will only work in a single level (first level) of the object reference.

- Object spread:

Object spread does a 'shallow copy' of the object. Only the object itself is cloned, while "nested instances are not cloned".

- JSON.parse(JSON.stringify()):

This is a questionable solution. Why? Because this is going to work fine as long as your Objects and the nested Objects "only contains primitives", but if you have objects containing functions or 'Date' this won't work.

Changing a property value from the original object or property value from the shallow copy object it will affect each other.

The reason is how the javascript engine works internally: JS passes the primitive values as value-copy and the compound values as reference-copy to the value of the primitives on that Object. So, when copied the Object containing the nested Object, that will create a shallow-copy of that Object:

Primitive found on the first level of the original object it will be copied as value-copy: changing is reciprocal: changing the value of one will affect the other one. So they will be depending on each other

Deeply nested properties will be copied as reference-copy: changing one it will not affect the reference of the other one

> first-level properties: value-copy

> deeply nested properties: reference-copy

### <u>Solution:</u>

We can create our own or we can use the third-party libraries to achieve a future-proof deep copy and deep merge.

#### Third party solutions:

lodash's cloneDeep()

```javascript

import * as cloneDeep from 'lodash/cloneDeep';

...

clonedObject = cloneDeep(originalObject);

```

```javascript

const objectNested = {

"name":"John",

"age":30,

"cars": {

"car1":"Ford",

"car2":"BMW",

"model": {

year: 2020

}

}

};

// making a copy of the reference, a new object is created that has an exact copy of the values in the original object.

const deep = _.cloneDeep(objectNested);

console.log(JSON.stringify(deep) === JSON.stringify(objectNested)); // TRUE

console.log("deep reference", deep.cars.model === objectNested.cars.model); // FALSE

// assinging one Object to other reference

const deep2 = objectNested;

console.log('share reference', deep2.cars.model === objectNested.cars.model); // TRUE

console.log('share references', deep2 === objectNested); // TRUE

```

### Lodash cloneDeep()

```javascript

var objects = [{ 'a': 1 }, { 'b': 2 }];

var deepCopy = _.cloneDeep(objects);

console.log(deepCopy[0] === objects[0]); // => false

objects[0].a === deepCopy[0].a // true

deep[0].a = 123; // original object changes

objects[0].a === deepCopy[0].a // false = changes no affecting deepCopy

```

### Further Information:

Lodash

https://lodash.com/docs/4.17.15#cloneDeep

Lodash npm package:

https://www.npmjs.com/package/lodash.clonedeep

Immutability-helper:

A light and easy to use helper which allows us to mutate a copy of an object without changing the original source:

https://github.com/kolodny/immutability-helper

---

### 2) Extending Objects

Few options we are going to evaluate:

```html

JS | JS ES6+ | jQuey | Lodash | AngularJS

Object.assign() Spread operator $.extend() .merge() .extend()

mix() .merge()

```

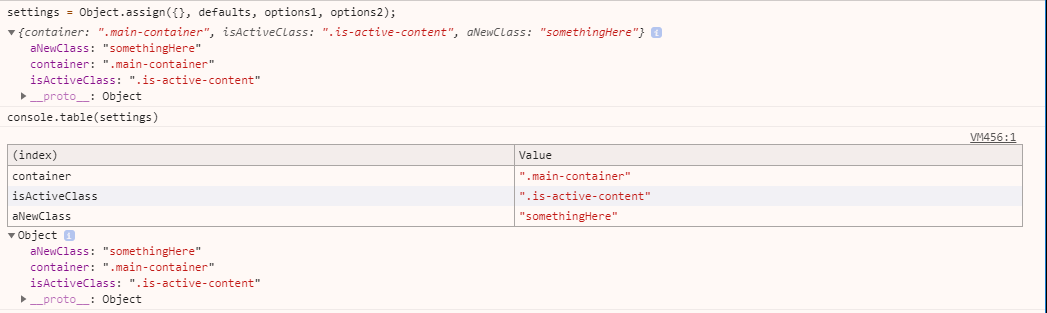

#### [2.1] Extending plain Objects

Extend Objects is a simple process but required to know what we want to do with:

- Objects that have the same name attributes

- Mutation of the Object

### Object.assign({}):

```javascript

let defaults = {

container: ".main",

isActiveClass: ".is-active"

};

let options1 = {

container: ".main-container",

isActiveClass: ".is-active-element"

};

let options2 = {

aNewClass: "somethingHere",

isActiveClass: ".is-active-content"

};

settings = Object.assign({}, defaults, options1, options2); // using {}

// { container: ".main-container", isActiveClass: ".is-active-content", aNewClass: "somethingHere"}

```

{% runkit

// hidden setup JavaScript code goes in this preamble area

const hiddenVar = 42

%}

// visible, reader-editable JavaScript code goes here

let defaults = {

container: ".main",

isActiveClass: ".is-active"

};

let options1 = {

container: ".main-container",

isActiveClass: ".is-active-element"

};

let options2 = {

aNewClass: "somethingHere",

isActiveClass: ".is-active-content"

};

console.log(settings = Object.assign({}, defaults, options1, options2)); // using {}

// { container: ".main-container", isActiveClass: ".is-active-content", aNewClass: "somethingHere"}

{% endrunkit %}

Further information:

https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Object/assign

---

### Custom .mix() method for ES5 and earlier:

#### Flat Object

Add an Object2 to another Object1:

$.extend() like, but DO NOT replace similar keys = the FIRST OBJECT WILL PREVAIL

We are navigating thought the flat object and '=' the values.

```javascript

// source

options = {

underscored: true,

"name": 1

}

// target

products = {

foo: false,

"name": "leo"

}

function mix(source, target) {

for(var key in source) {

if (source.hasOwnProperty(key)) {

target[key] = source[key];

}

}

console.log(target)

}

mix(options, products); // { foo: false, name: 1, underscored: true }

```

{% runkit

// hidden setup JavaScript code goes in this preamble area

const hiddenVar = 42

%}

// visible, reader-editable JavaScript code goes here

options = {

underscored: true,

"name": 1

}

// target

products = {

foo: false,

"name": "leo"

}

function mix(source, target) {

for(var key in source) {

if (source.hasOwnProperty(key)) {

target[key] = source[key];

}

}

console.log(target)

}

console.log(mix(options, products)); // { foo: false, name: 1, underscored: true }

{% endrunkit %}

---

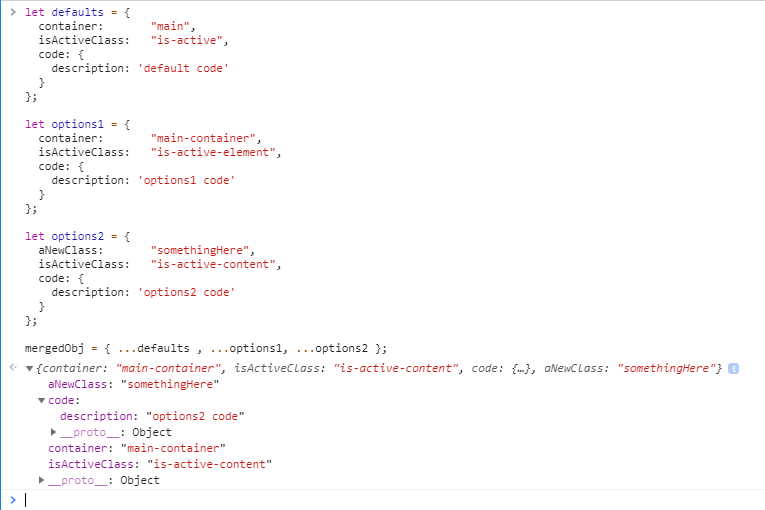

### ES6 Spread operator

```javascript

let defaults = {

container: "main",

isActiveClass: "is-active",

code: {

description: 'default code'

}

};

let options1 = {

container: "main-container",

isActiveClass: "is-active-element",

code: {

description: 'options1 code'

}

};

let options2 = {

aNewClass: "somethingHere",

isActiveClass: "is-active-content",

code: {

description: 'options2 code'

}

};

mergedObj = { ...defaults , ...options1, ...options2 };

// { aNewClass: "somethingHere"

code: {

description: "options2 code"

},

container: "main-container"

isActiveClass: "is-active-content"

}

```

{% runkit

// hidden setup JavaScript code goes in this preamble area

const hiddenVar = 42

%}

// visible, reader-editable JavaScript code goes here

let defaults = {

container: "main",

isActiveClass: "is-active",

code: {

description: 'default code'

}

};

let options1 = {

container: "main-container",

isActiveClass: "is-active-element",

code: {

description: 'options1 code'

}

};

let options2 = {

aNewClass: "somethingHere",

isActiveClass: "is-active-content",

code: {

description: 'options2 code'

}

};

console.log(mergedObj = { ...defaults , ...options1, ...options2 });

// { aNewClass: "somethingHere", code: {description: "options2 code"},container: "main-container",isActiveClass: "is-active-content"}

{% endrunkit %}

If some objects have a property with the same name, then the second object property overwrites the first. If we don't want this behaviour we need to perform a 'deep merge' or object and array recursive merge.

Further information:

https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Operators/Spread_syntax

---

### $.extend()

It is a jQuery function that will extend and replace similar keys.

#### $.extend() replace similar keys:

```javascript

const defaults = { d1: false, d2: 5, d3: "foo" };

const options = { d4: true, d6: "bar" };

// jQuery Merge object2 into object1 (modifying there results)

$.extend( defaults, options );

// Object {d1: false, d2: 5, d3: "foo", d4: true, d6: "bar"}

```

#### $.extend() without replace similar keys:

Remember that Javascript objects are mutable and store by reference.

```javascript

const defaults = { validate: false, limit: 5, name: "foo" };

const options = { validate: true, name: "bar" };

// Merge defaults and options, without modifying defaults

settings = $.extend({}, defaults, options);

Object {validate: true, limit: 5, name: "bar"}

```

Further information:

jquery.extend()

https://api.jquery.com/jquery.extend

---

### Lodash .merge()

"This method is like _.assign except that it recursively merges own and inherited enumerable string keyed properties of source objects into the destination object. Source properties that resolve to undefined are skipped if a destination value exists. Array and plain object properties are merged recursively. Other objects and value types are overridden by assignment. Source objects are applied from left to right. Subsequent sources overwrite property assignments of previous sources."

<sub><sub>https://lodash.com/docs/4.17.15#merge</sub></sub>

> Note: This method mutates object.

Using Lodash .merge() with first level (flat) object

```javascript

let defaults = {

container: "main",

isActiveClass: "is-active"

};

let options1 = {

container: "main-container",

isActiveClass: "is-active-element"

};

let options2 = {

aNewClass: "somethingHere",

isActiveClass: "is-active-content"

};

_.merge(defaults, options1, options2);

_.merge(defaults, options1, options2);

// { aNewClass: "somethingHere", container: "main-container", isActiveClass: "is-active-content"}

```

Using Lodash .merge() with deeply nested object:

```javascript

let defaults = {

container: "main",

isActiveClass: "is-active",

code: {

description: 'default code'

}

};

let options1 = {

container: "main-container",

isActiveClass: "is-active-element",

code: {

description: 'options1 code'

}

};

let options2 = {

aNewClass: "somethingHere",

isActiveClass: "is-active-content",

code: {

description: 'options2 code'

}

};

_.merge(defaults, options1, options2);

// {

aNewClass: "somethingHere"

code: {

description: "options2 code"

},

container: "main-container"

isActiveClass: "is-active-content"

}

```

#### [2.2] Extending deeply nested Objects

#### AngularJS 'angular.extend()' and 'angular.merge()':

> angular.merge() it will be preserving properties in child objects.

> angular.extend() it will not preserve, it will replace similar properties

It does a deep copy of all properties from source to destination preserving properties in child objects. Note how we can also use multiple source objects that will be merged in order:

```javascript

const person1 = {

name: 'Leo',

address: {

description: 'Oxford Street'

}

}

const person2 = {

id: 1,

address : {

postcode: 'SW1'

}

}

const merged = angular.merge(person1, person2); // ALL the similar WILL PREVAIL

// merged object

// {id: 1, name:'Leo', address:{description:'Oxford Street',postcode: 'SW1'}}

const extended = angular.extend(person1, person2); // replace similar properties

// extended object

// {id: 1, name:'John', address:{postcode:'SW1'}}

```

---

<h5> { 'Leo Lanese',<br>

'Building Inspiring Responsive Reactive Solutions',<br>

'London, UK' }<br>

</h5>

<h5>Portfolio

<a href="http://www.leolanese.com" target="_blank">http://www.leolanese.com</a>

</h5>

<h5>Twitter:

<a href="http://twitter.com/LeoLaneseltd" target="_blank">twitter.com/LeoLaneseltd</a>

</h5>

<h5>Questions / Suggestion / Recommendation ?

<a href="mail:to">developer@leolanese.com</a>

</h5>

<h5>DEV.to:

<a href="http://www.dev.to/leolanese" target="_blank">www.dev.to/leolanese</a>

</h5>

<h5>Blog:

<a href="http://www.leolanese.com/blog" target="_blank">leolanese.com/blog</a>

</h5>

| leolanese |

213,851 | Steps For Integrating MD Bootstrap In Jhipster Project | Hi everyone I a gonna share one of my first posts I written on Medium, I hope you all will like it us... | 0 | 2019-12-02T09:04:33 | https://medium.com/@erbalvindersingh/steps-for-integrating-md-bootstrap-in-jhipster-project-84e5d0cc9aa1 | design, angular, css, jhipster | Hi everyone I a gonna share one of my first posts I written on Medium, I hope you all will like it useful. We were working with a Jhipster project and wanted to integrate MD Bootstrap that is a material design + Bootstrap framework.JHipster is a web application generator using Spring Boot for backend and Angular for front End with multiple technologies.

>Before starting you must have Sass support in your project

*Link to Jhipster* :[http://jhipster.tech/](http://jhipster.tech/)

MD Bootstrap provides various design components based on material design and layouts. You can check here :

*Link to MD Bootstrap* :

[https://mdbootstrap.com/getting-started/](https://mdbootstrap.com/getting-started/)

### So let’s start steps for integrating MD Bootstrap with JHipster

1. First create a directory in your system with name let myAPP or any you want.

2. CD using terminal or file explorer in your system into above created myApp folder.

3. Open terminal in myApp (If you have not JHipster setup follow link https://www.jhipster.tech/installation/).

4. Enter Command to generate JHipster project in your folder```yo jhipster```

5. After JHipster is installed. In the same terminal give the command for installing external libraries```npm install -–save chart.js@2.5.0 font-awesome hammerjs```

6. To app.module.ts add```typescript

import { NgModule,Injector,NO_ERRORS_SCHEMA } from ‘@angular/core’;

import { MDBBootstrapModule } from ‘angular-bootstrap-md’;

@NgModule({

imports: [

MDBBootstrapModule.forRoot(),

],

schemas: [ NO_ERRORS_SCHEMA ]

});

```

7. To Vendor.scss add```scss

/* after changing this file run ‘yarn run webpack:build’ */

$fa-font-path: ‘~font-awesome/fonts’;

// Images Path for angular-bootstrap-md

$image-path: ‘../../../../../node_modules/angular-bootstrap-md/img’ !default;

// Fonts Path for angular-bootstrap-md

$roboto-font-path: “../../../../../node_modules/angular-bootstrap-md/font/roboto/” !default;

/***************************

put Sass variables here:

eg $input-color: red;

****************************/

// Override Boostrap variables

@import “bootstrap-variables”;

// Import Bootstrap source files from node_modules

@import ‘node_modules/font-awesome/scss/font-awesome’;

@import ‘node_modules/angular-bootstrap-md/scss/bootstrap/bootstrap’;

@import ‘node_modules/angular-bootstrap-md/scss/mdb-free’;

```

8. In angular-cli.json add```

“styles”: [

“../node_modules/font-awesome/scss/font-awesome.scss”,

“../node_modules/angular-bootstrap-md/scss/mdb-free.scss”,

“./styles.scss”,

“content/scss/vendor.scss”,

“content/scss/global.scss”

],

“scripts”: [

“../node_modules/chart.js/dist/Chart.js”,

“../node_modules/hammerjs/hammer.min.js”

]

```

9. Add the following into tsconfig.json file located in root folder```“inlcude”: [ “node_modules/angular-bootstrap-md/**/*.ts ”,

“src/**/*.ts”

]```

10. Run in terminal to compile styles and scripts and inject to project```yarn webpack:build```

11. That are all steps for integrating MD Bootstrap with Jhipster

> Note : If you got any warnings in terminal after running yarn “webpack:build” then you can fix using tsLint manually

> Note 2: If you got compile failed errors then you may have styles conflict

You can fix by removing normal bootstrap styles or comment it if you do not want to remove

//@import ‘node_modules/bootstrap/scss/bootstrap’;

Here is the link to my Git Repository with sample project integrated with MD BootStrap :

https://github.com/balvinder294/MaterialThemeJhipster

Thanks for reading. Hope I helped you. if you find it useful then write below and share your views, also let me know if anything needs to be updated if something not works, I will look into it.

Looking forward to sharing more with the community.

> Originally published at [Tekraze.com](https://medium.com/@erbalvindersingh/steps-for-integrating-md-bootstrap-in-jhipster-project-84e5d0cc9aa1) | balvinder294 |

214,012 | Web layouts like it’s 2020 | Written by Facundo Corradini✏️ If you ever get the feeling that designers and developers are from... | 0 | 2019-12-03T14:22:25 | https://blog.logrocket.com/web-layouts-like-its-2020/ | featuredposts, css | ---

title: Web layouts like it’s 2020

published: true

date: 2019-12-02 14:00:59 UTC

tags: Featuredposts,css

canonical_url: https://blog.logrocket.com/web-layouts-like-its-2020/

cover_image: https://thepracticaldev.s3.amazonaws.com/i/q5kp2coygk1dgukfolbx.jpg

---

**Written by [Facundo Corradini](https://blog.logrocket.com/author/facundocorradini/)**✏️

If you ever get the feeling that designers and developers are from different worlds, you should have seen what it was like 10 or 20 years ago. In the early days of the internet, we were building websites _while_ trying to figure out what a website was and how it should work.

Coming from a print background, designers were used to the features (and limitations) of a known-dimension canvas and tried to replicate them in a medium that’s essentially designed as _exactly not that_. Developers were struggling mightily with the extremely limited features of early CSS, trying to implement those designs in browsers that were radically different from one another.

In the middle of all that, the users were getting a web experience that was quite inaccessible, hard to use, and simply unaesthetic.

Over time, we agreed on a core rule set for how a website should look and feel based on the concept of symmetrical columns such as [960.gs](https://960.gs/), which later on was implemented in many popular frameworks, including Bootstrap. This streamlined the process, providing a common _language_ that designers, developers, and users felt comfortable with.

But I’m sure I’m not alone when I get the feeling that web layouts have stagnated since. We all have seen those “all websites look the same” parodies, to the point that all those parodies are starting to look the same.

CSS has come a long way since those early days, with the development cycle greatly accelerating in the last couple years. Finally, web layouts are not a hack anymore (floats were originally meant to simply float text around an image).

We got multicol, flex, and grid to allow us a degree of freedom we’ve never seen before. We can finally break out of that symmetric columns paradigm and use all sorts of [effects](https://dev.to/bnevilleoneill/new-in-chrome-76-the-frosted-glass-effect-with-backdrop-filter-hgb) and features that we would’ve never dreamed of. We are not in the early 2000s anymore.

The newer specs allow us to build layouts that we would have discarded for being unusable or for lack of responsiveness just a couple of years ago. So I believe it’s time to start revisiting those concepts. Maybe we can bring back some of those print-like layouts in a way that adapts to the unknown canvas of the web.

[](https://logrocket.com/signup/)

Early this year, Jenn Simmons posted these magazine layouts as inspiration, wondering how they could work for the web. I went ahead and turned them to code, so we can explore the core concepts of building a web layout that’s different from what everyone seems to be doing. Here’s how to build web layouts like it’s 2020.

{% twitter 1084494889214193666 %}

## Thinking responsive, progressive layouts

Designing for the web is, by definition, [designing for an unknown canvas](https://www.youtube.com/watch?v=aHUtMbJw8iA). The web can be accessed from all sorts of devices with radically different dimensions and through all kinds of browsers — from a tiny mobile device or even a watch to a ginormous 4K smart TV, not to mention all sorts of alternate approaches that are not even based on graphic display.

So the first challenge in converting a magazine layout for web use is considering how it should adapt to whatever device is accessing it. Where are the boundaries where this approach doesn’t work anymore? How should the alternative look? What are some technical limitations that a browser can have trouble implementing?

Considering this layout, I identify the parts that can be challenging.

1. The multicolumn layout can work on the wider devices, but it’s certainly a no-go for smaller screen sizes.

2. The title itself with a “center float” is something most browsers won’t know how to deal with.

3. The intro paragraph between the columns can be somewhat tricky.

Luckily, the solution pretty much works itself out if we consider a progressive enhancement approach from the start. We can think of all the different layers as progressive enhancement: from the layout for different viewport sizes via the use of media queries, adding newer features in a safe way with feature queries, or even adding accessibility goodies such as [prefers-reduced-motion](https://blog.logrocket.com/new-in-chrome-74-prefers-reduced-motion-media-query-50cd89d3e769/) or [dark mode with prefers-color-scheme](https://blog.logrocket.com/whats-new-in-firefox-67-prefers-color-scheme-and-more-195be81df03f/). Every layer can work over the previous to create the best user experience for a given device.

Personally, I like to start from how the website should look if no CSS is loaded at all. This means using nothing but semantic markup in a reasonable order. This will ensure that the web is usable even if we strip it all the way down to the browser’s default styling.

```jsx

<article class="print-like">

<header class="intro">

<h1 class="title">Print-like Layouts on the web</h1>

<p class="summary">We've been stuck for decades in the simetrical columns paradign. Let's try to spice things up a little.</p>

<address class="author">By <a rel="author" href="https://twitter.com/fcorradini">Facundo Corradini</a><br/>

</address>

</header>

<img class= "main-image" src="https://placeimg.com/640/480/animals/sepia" alt="random image"/>

<section class="main-text">

<p>...</p>

<p>...</p>

<p>...</p>

</section>

</article>

```

<figcaption id="caption-attachment-10528">Even without any CSS, the document structure ensures the browser provides a somewhat usable default.</figcaption>

The semantic markup in a logical order means that we can get away with title on top, intro, paragraphs, then progressively enhance the layout for the wider screens and the different features.

Best thing about it is that mobile layouts are usually not that different from the browser defaults. Sure, we put a great deal of effort into our typography, spacing, and such, but the mobile content is rarely seen in any format other than the traditional blocky layout were elements flow one below the other.

<figcaption id="caption-attachment-10529">A little bit of styling provides a decent layout for small mobile devices.</figcaption>

We can then think of a slightly bigger screen size. What if the viewport is wide enough to fit two columns, but not quite wide enough for the full layout?

It’d make sense to keep the title and intro above everything else, but have the paragraphs as two columns with a variable width, growing as needed.

Simply turning the text container to multicolumn layout in a media query does the trick.

```jsx

@media screen and (min-width: 600px){

.print-like{

display: grid;

}

.main-image{

grid-row: 3/4;

}

.main-text{

column-count: 2;

}

.main-text :first-child{

margin-top:0;

}

.main-text :last-child{

margin-bottom: 0;

}

}

```

<figcaption id="caption-attachment-10531">Multicolumn + CSS grid to move the image to the bottom.</figcaption>

When the viewport becomes big enough to fit all columns (including the container in the middle), we can use the column-gap property to clear the space in the middle for the title and intro, then position the elements with a simple grid declaration in the container. Although the original design was based on thirds, I chose to keep the central column in fixed width and let the side ones adjust to the container, just as an experiment to see what happens when we break the symmetric columns paradigm.

```jsx

@media screen and (min-width: 900px){

.print-like{

grid-template-columns: 1fr 300px 1fr;

align-items: center;

}

.intro{

grid-row:1;

grid-column: 2/3;

max-width: 0px;

}

.main-text{

column-gap: 310px;

grid-row: 1;

grid-column: 1/4;

}

.main-image{

grid-column: 1 / -1;

margin: 0 auto;

}

}

```

<figcaption id="caption-attachment-10534">A CSS grid declaration allows us to position the title and intro in the space cleared by the grid-gap.</figcaption>

The final touch — and the one that makes the layout — is the text wrapping around the title. Unfortunately, we need to use CSS exclusions to create that effect, as there’s no such thing as `float: center`. This means that only IE11 and Edge will provide that experience, which is quite ironic. But other browsers still provide a perfectly usable layout, and the code will most likely work when they finally decide to implement that feature (perhaps with minor tweaking if the spec changes).

```jsx

@media screen and (min-width: 900px){

@supports (-ms-wrap-flow: both){

.title{

-ms-wrap-flow: both; /* CSS exclusions! */

position: absolute;

right: 25%;

padding: 10px 20px;

}

}

}

```

<figcaption id="caption-attachment-10535">Visualization in a browser that supports grid, multicol, and exclusions (MS Edge).</figcaption>

With all those layers, the final layout will work like this:

{% codepen https://codepen.io/facundocorradini/pen/abzozVe %}

## Conclusion

Let’s be clear: I’m not saying the current paradigm is _wrong_, and definitely not trying to say that websites should look like magazines — or even defending the use of multicol. I just think a little innovation might make our layouts stand out from the rest. We finally have the right tools, let’s make web layouts more diverse!

* * *

**Editor's note:** Seeing something wrong with this post? You can find the correct version [here](https://blog.logrocket.com/web-layouts-like-its-2020/).

## Plug: [LogRocket](https://logrocket.com/signup/), a DVR for web apps

[LogRocket](https://logrocket.com/signup/) is a frontend logging tool that lets you replay problems as if they happened in your own browser. Instead of guessing why errors happen, or asking users for screenshots and log dumps, LogRocket lets you replay the session to quickly understand what went wrong. It works perfectly with any app, regardless of framework, and has plugins to log additional context from Redux, Vuex, and @ngrx/store.