id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

230,175 | The First Two Weeks: A Compiler Writing Journey | Photo by Nick Fewings on Unsplash Welcome back to the first update in my compiler & interpreter... | 3,825 | 2020-01-04T06:04:32 | https://dev.to/bamartindev/the-first-two-weeks-a-compiler-writing-journey-2ko8 | compilers, interpreters, standardml, devjournal | *Photo by Nick Fewings on Unsplash*

Welcome back to the first update in my compiler & interpreter journey! I want to spend some time this post to share some things I have learned about so far, as well as what I plan to tackle in the next two weeks! The first few sections will focus on Compiler / Interpreter information that I have been gathering and starting to digest. The later section will focus on my experience so far with Standard ML, the language that I will be utilizing with the book ["Modern Compiler Implementation in ML"](https://www.cs.princeton.edu/~appel/modern/ml/)

Here is a look at the sections that I want to cover:

* [What Are Compilers And Interpreters?](#what-are-compilers-and-interpreters)

* [What Are The Stages of Compilation?](#what-are-the-stages-of-compilation)

* [Lets Check Out The "Super Tiny Compiler"!](#lets-check-out-the-super-tiny-compiler)

* [What I Have Learned About Standard ML](#what-i-have-learned-about-standard-ml)

* [Useful References And Links](#useful-references-and-links)

## What Are Compilers And Interpreters?

A compiler is a program that translates code written in one language (the source language) into another language (the target language). The target language is usually something like assembly or machine code when the compiler is creating an executable, but it can also be another high level programming language like JavaScript. The [Rust](https://www.rust-lang.org/) compiler is an example of the former, while [Babel](https://babeljs.io/) is an example of the latter.

A key characteristic is that a compiler does its work **ahead of time**. You can use the compiler to generate its output and then wait to execute it at a later time. A side effect of this, is that an executable generated by the compiler can exist even if the source code is lost.

An interpreter, on the other hand, directly executes the instructions written in the source language without converting it to some target language before. It requires the source code every time it is executed!

Some examples of compiled & interpreted languages:

Compiled | Interpreted

--- | ---

C, C++, Rust, Standard ML, and Java | Lua, Python, and JavaScript

Now, an interesting thing is that *any* language can be compiled or interpreted. In fact, a lot of programming languages provide both capabilities to developers to improve the development experience. For example, if I want to write some [Elixir](https://elixir-lang.org/) code it will be compiled to bytecode to be run on the Erlang VM, but if I write an Elixir script or use the interactive mode REPL (Read-Evaluate-Print-Loop) it will behave more like an interpreted language.

As I will show in the next section, compilation (and interpretation) have multiple stages to it. The "front end" stages of parsing and lexing, and creating an abstract representation of the source will be very similar for compilers and interpreters. However, the "back end" stages will start to diverge as an interpreter is more concerned with immediate execution while a compiler is concerned with creating an executable.

## What Are The Stages of Compilation?

Compilation is broken down into two major stages, and in those stages are smaller stages that will be of focus as I work through implementing a compiler. I hope to speak to each part in my own words as I go through them!

The two major stages are the front end, and the back end. I know, super descriptive. Similar to any other piece of software, a compiler should be designed with modularity in mind. The front end is the stage of the compiler that takes the source code written in the programming language and turns it into an intermediate representation (IR). This IR is some data representation of the program, that is independent of the source programming language itself. The back end takes the IR and does optimizations and also generates the output of the compiler into some other target language.

This is a very broad description, but it is the first division that can be seen in compiler architecture. A slightly more in depth look can be seen in a lovely picture of a mountain in Crafting Interpreters, in the ["A Map of the Territory" chapter](https://www.craftinginterpreters.com/a-map-of-the-territory.html#the-parts-of-a-language). This shows some sub-steps of the front end and back end, like scanning, parsing, analysis, and code generation. The book that I am following has twelve stages listed out! Those stages are:

1. Lex

2. Parse

3. Semantic Actions

4. Semantic Analysis

5. Frame Layout

6. Translate

7. Canonicalize

8. Instruction Selection

9. Control Flow Analysis

10. Dataflow Analysis

11. Register Allocation

12. Code Emission

If I am being honest I know what *maybe* two of those stages entail (lex and parse). The rest I feel like the profit gnomes in south park.

I am excited to be able to someday intelligently speak to all of these stages in the future, and I think that will be the focus of most future posts. This post is a little all over the place as I am getting my bearings and looking at various resources as I learn Standard ML. Speaking of all over the place...

## Lets Check Out The "Super Tiny Compiler"!

Now that we have a bit of understanding of what a compiler is, I think it would be fun to look at a very simple compiler, [The Super Tiny Compiler!](https://github.com/jamiebuilds/the-super-tiny-compiler/blob/master/the-super-tiny-compiler.js) This compiler is written in JavaScript and very well annotated, so I won't dive **too** deep into it because I encourage you to take a look at the authors great work!

Essentially this compiler takes some Lisp like function calls, and compiles them into C like function calls! Essentially taking something like `(add 2 2)` and converting it to `add(2, 2)`.

What I want to take a look at is the entry point for this compiler, this function that loosely follows the lex, parse, translate, and code emission steps above:

```javascript

function compiler(input) {

let tokens = tokenizer(input);

let ast = parser(tokens);

let newAst = transformer(ast);

let output = codeGenerator(newAst);

// and simply return the output!

return output;

}

```

This takes us through the major steps: tokenization, parsing, transforming, and code generation. The first step is what finds the individual parts of the input, taking something like `(add 2 2)` and building a list like

```javascript

const tokens = [{type: 'paren', value: '('}, {type: 'string', value: 'add'},

{type: 'number', value: '2'}, {type: 'number', value: '2'},

{type: 'paren', value: ')'}];

```

to represent the input program, with added metadata about what each token is. The next step takes care of making sure that those tokens **make sense** with the semantics of the language. Luckily in the case of this compiler, there are no keywords, but there are expectations of matching parens!

After creating a correct program as defined by its semantics, the transformation of the abstract syntax can take place to change its representation to a C like function call.

Finally, after the transformation is applied, the code is generated in the C like manner! Again, this is a highly simplified overview of what is in the compiler, but I encourage you to take a look at the source - pull it down and tinker with it. See if you can add something new to it. What about transforming from C -> Lisp instead?

> As an aside, I think that is an interesting property of compilers and languages - they can be as simple or complicated as needed. That means that I might detour here and there as I am learning to write small, simple languages that focus on implementing the new techniques! 🤓

## What I Have Learned About Standard ML

This is where I spent the majority of my last two week. Standard ML (Standard Meta Language aka SML) is a modular functional programming language that is supposedly very well suited to compiler implementation. I have been posting to the GitHub repository [standard-ml-learning](https://github.com/bamartindev/standard-ml-learning) all of the code that I have been writing as a result of learning - feel free to check it out!

I have a couple of directories in this project, one for following the text "Programming in Standard ML", and the other is a workspace for me to do practice problems from an online course I found [CS 312](https://www.cs.cornell.edu/courses/cs312/2008sp/schedule.html).

One thing I have noticed is that while the text has been useful, its easy to *think* that I am learning while following along with examples. Its another thing to **know** that I am learning by tackling small coding challenges and other more free form problems and getting the correct solution!

I am lucky to have some experience with functional programming - I try and use a functional style when it makes sense at work with JavaScript and I have dabbled in Haskell and Elixir as well. The idea of coding in a declarative is a little less harsh for me coming in with that background. Even though that is the case, I still love tripping up over little things as captured in my notes:

> Ok, I have typed var and let way too many times when trying to write sml - I have to remember its val!

And even that note to myself is misleading, because there **IS** a keyword `let` as well!

One thing that I liked was the concept of sharp notation, which is the following:

```sml

val person = ("Jim", "Bob", "Software Developer", #"A", 45)

val fullName = (#1 person) ^ " " ^ (#2 person)

val jobClass = #4 person

```

The sharp notation is a way of accessing values in an n-tuple. As you can see, it isn't the most clear what it is trying to do, but I can imagine that it could be useful in an anonymous function for mapping or something like that.

Another thing that I always love with languages like this is the ease of pattern matching.

```sml

val person = ("Jim", "Bob", "Software Developer", #"A", 45)

val (firstName, lastName, _, jobClass, age) = person

```

This will bind the individual values of the tuple to the variables firstName, lastName, jobClass, and age. Note the use of `_` in the pattern matching, this ignores the field "Software Developer" and doesn't bind it to anything!

Another powerful concept is the `cons` operator which is written as `::` and is used during list processing. Can you guess what this function does?

```sml

fun mystery [] = 1

| mystery (x::xs) = x * mystery xs

```

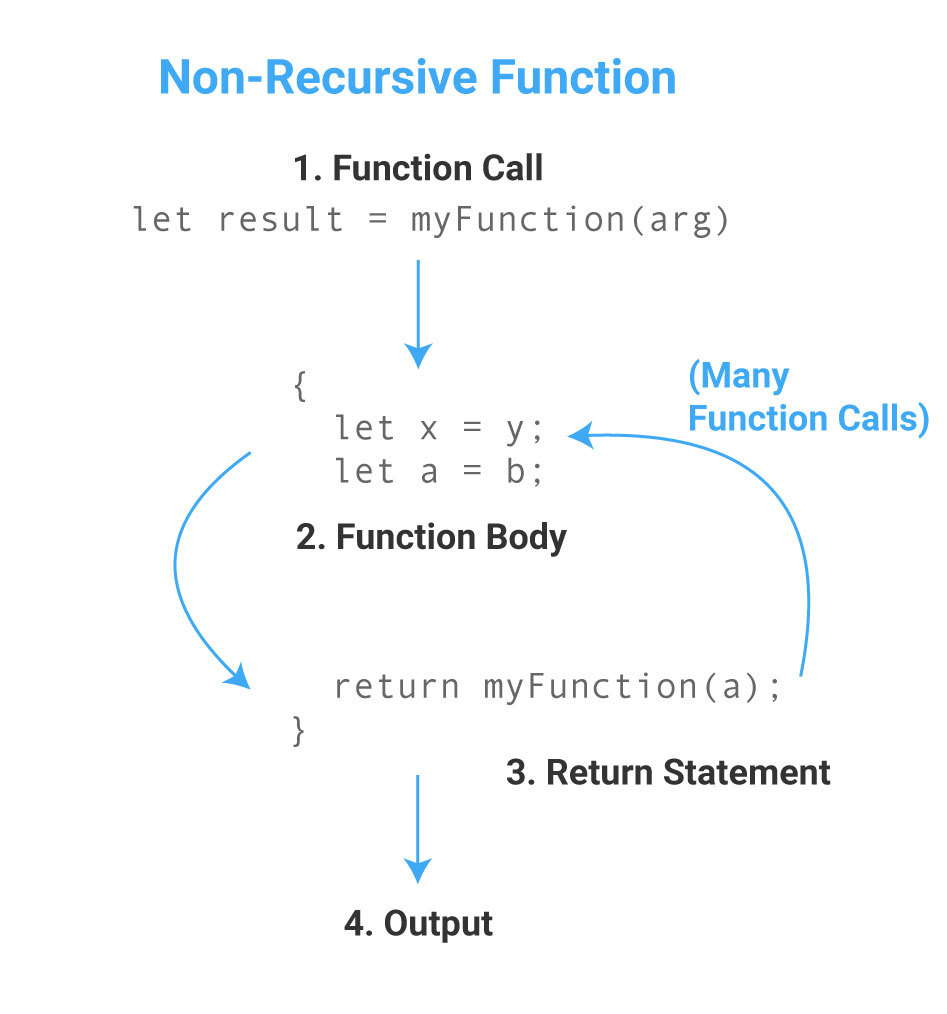

Yeah, I cheated a bit by throwing a bunch of new syntax at you, but it takes a list of integers, like `mystery [1,2,3,4,5]` and returns `120` - thats all of the elements multiplied together! So, the new keyword introduced is fun, which is a function declaration. Then pattern matching is employed in a new way! The first pattern checks for the value `[]` which is an empty list. If there is an empty list we return 1. So calling `mystery []` would result in 1. The next part of the pattern matching uses the cons operator to destructure the list provided. By doing this, we are grabbing the head and tail of the list - x is the head, xs is the tail. If the list is `[1,2,3,4,5]`, then `x = 1` and `xs = [2,3,4,5]`! Now, given that information hopefully the function body of `x * mystery xs` makes sense. We are recursively calling the mystery function with a reduced version of the initial input: `1 * mystery [2,3,4,5]`. This will continue to happen until we reach the base case of `[]` and return 1, then the full evaluation will occur of `1 * 2 * 3 * 4 * 5 * 1`, which returns `120`. Awesome!

There have been a lot more topics that I have learned as well from recursion, to higher order functions, to exception handling. I don't think I am the right person to teach these things, as I have linked to the primary source of my learning, so instead I will conclude by sharing some of the code that I wrote that *wasn't* guided by the book - some challenges that I tackled on this site as well as some problem set code I wrote for the CS 312 course:

[Daily Challenge #148 - Disemvowel Trolls](https://dev.to/thepracticaldev/daily-challenge-148-disemvowel-trolls-h76)

```sml

val vowels = [#"a", #"e", #"i", #"o", #"u"]

fun member_of (item, list) = List.exists (fn x => x = item) list

fun disemvowel s = implode (List.filter (fn x => not(member_of(Char.toLower x, vowels))) (explode s))

```

[Daily Challenge #149 - Fun with Lamps](https://dev.to/thepracticaldev/daily-challenge-149-fun-with-lamps-11nk)

```sml

fun gen_alt (starting, next, len) = List.tabulate(len, fn x => if x mod 2 = 0 then starting else next)

fun diff ([], []) = 0

| diff (x::xs, y::ys) = (if x = y then 0 else 1) + diff(xs, ys)

| diff (_, _) = ~1 (* List lengths don't match for some reason *)

fun lamps [] = 0

| lamps i = Int.min(diff(i, gen_alt(0, 1, length i)), diff(i, gen_alt(1, 0, length i)))

```

Answers to some parts of [Problem Set 1](https://www.cs.cornell.edu/courses/cs312/2008sp/hw/ps1/ps1.html)

```sml

exception NumberFormatException

fun parseInt (s: string) : int =

let

val SOME x = Int.fromString s

in

x

end

handle Bind => raise NumberFormatException

datatype tree = Node of tree list

val tt = Node([Node([Node([Node([])])]), Node([Node([]), Node([Node([])])]), Node([Node([])])])

fun treeSize (Node([])) = 1

| treeSize (Node(x::[])) = 1 + treeSize x

| treeSize (Node(x::xs)) = (treeSize x) + (treeSize (Node(xs)))

val correctSize = treeSize tt = 10

fun rev [] = []

| rev (hd::tl) = rev tl @ [hd]

fun isWhitespace c = c = #" "

fun reverseWords words =

String.concatWith " " (rev (String.tokens isWhitespace words))

val reversed = (reverseWords "A MAN A PLAN A CANAL PANAMA") = "PANAMA CANAL A PLAN A MAN A"

```

Some answers to [Problem Set 2 - Part 2](https://www.cs.cornell.edu/courses/cs312/2008sp/hw/ps2/ps2.html)

```sml

(* Part 2 *)

(* a *)

val product = List.foldl Int.* 1

(* b *)

fun even_odd_idx (a: 'a, (b1: 'a list, b2: 'a list)) : ('a list * 'a list) =

if (length b1 = length b2) then

(b1 @ [a], b2)

else

(b1, b2 @ [a])

(* Any way I can make this point free? *)

fun partition (l: 'a list) : ('a list * 'a list) = List.foldl even_odd_idx ([], []) l

(* c *)

fun apply_twice_positive (i: int) = fn (f: int -> int, count: int) => if f(f i) > 0 then count + 1 else count

val count_positive_funcs = foldl (apply_twice_positive(~1)) 0

(* This returns 2! *)

val positive_count = count_positive_funcs [fn x => x + 1, fn x => x - 1, fn x => x * ~1, fn x => x*x]

```

> One final thought on Standard ML learning - there are a lot less resources than a popular modern language! I know this is to be expected, but I was very surprised to see that only around 1800 questions had been asked on stack overflow, as opposed to the 1.9 million you see for JavaScript. I am very happy with my progress given that fact, and I am getting close to the goal of being able to fully understand a large "real world" program implemented in SML.

## Useful References And Links

One resource I really want to give a shout out to is [Crafting Interpreters](https://www.craftinginterpreters.com/) - this is a really well written and free resource on writing an interpreter for a programming language called Lox. The first part is implemented in Java, the second part is implemented in C.

The only reason I am not using this as my primary learning text is that I wanted a more rigorous text to get started - something that dives a little more into the theory. I wouldn't be surprised to see myself reference this resource throughout my journey though!

Another place that I have been looking at is the programming languages subreddit, [/r/programminglanguages](https://www.reddit.com/r/ProgrammingLanguages/) I am using it as a gauge to see how much I am learning - at the moment a lot of the topics being discussed are way over my head, but I hope to start understanding the common problems discusses in programming language creation as I learn.

The subreddit also has an associated [discord server](https://discordapp.com/invite/yqWzmkV) for a little bit more live discussions.

## Until Next Time

If you made it this far, thanks for reading! I think that moving forward I am going to move my "publish" date to a Monday so that way I can spend a bit more time organizing my thoughts. This post felt like it was a bit more of a brain dump than I wanted it to be this time around.

By next time I hope to have finished my initial learning of Standard ML so that way I can talk a bit more about implementing a specific stage of a compiler next time. I am super excited to start!

I will post the next update on January 20th, and all future updates will be on the Monday 2 week after.

*If you have any corrections or clarifications to statements I have made, please drop a comment. The last thing I want is to be misleading anyone, even though this is about my journey to learn and not a tutorial.* | bamartindev |

230,190 | welcome me git hub community | I really appreciate your effort here, how can we easily communicate and build a genuine community her... | 0 | 2020-01-02T05:48:18 | https://dev.to/rashi07hub/welcome-me-git-hub-community-n3j | I really appreciate your effort here, how can we easily communicate and build a genuine community here. I am a programmer and hope I'll learn more skills here at Dev community.

Thanks,

Dev.to | rashi07hub | |

230,210 | Gitting gud | Git is now an essential part of my work flow. It's invaluable. I like knowing that even though I may... | 0 | 2020-01-02T07:04:46 | http://jamessessford.com/blog/gitting-gud | git, ubuntu | ---

title: Gitting gud

published: true

date: 2019-12-26 00:00:00 UTC

tags: git, ubuntu

canonical_url: http://jamessessford.com/blog/gitting-gud

---

Git is now an essential part of my work flow. It's invaluable. I like knowing that even though I may have to dig to find it, I have the history of an entire project at my fingertips.

Since moving over to Ubuntu, I've made a few optimisations to my environment to help use Git.

## Keys

I modified this from a Stack Overflow answer, it'll add the desired keys to SSH agent when you load a terminal/login through SSH and destroy the agent when the last thing using it is closed.

I have this at the bottom of my ~/.zshrc config file

```

# Start ssh-agent to keep you logged in with keys, use `ssh-add` to log in

agent=`pgrep ssh-agent -u $USER` # get only your agents

if [["$agent" == "" || ! -e ~/.ssh/.agent_env]]; then

# if no agents or environment file is missing create a new one

# remove old agents / environment variable files

if [["$agent" != ""]]; then

kill $agent;

fi

rm -f ~/.ssh/.agent_env

# restart

eval `ssh-agent`

/usr/bin/ssh-add

echo 'export SSH_AUTH_SOCK'=$SSH_AUTH_SOCK >> ~/.ssh/.agent_env

echo 'export SSH_AGENT_PID'=$SSH_AGENT_PID >> ~/.ssh/.agent_env

fi

# create our own hardlink to the socket (with random name)

source ~/.ssh/.agent_env

MYSOCK=/tmp/ssh_agent.${RANDOM}.sock

ln -T $SSH_AUTH_SOCK $MYSOCK

export SSH_AUTH_SOCK=$MYSOCK

end_agent()

{

# if we are the last holder of a hardlink, then kill the agent

nhard=`ls -l $SSH_AUTH_SOCK | awk '{print $2}'`

if [["$nhard" -eq 2]]; then

rm ~/.ssh/.agent_env

ssh-agent -k

fi

rm $SSH_AUTH_SOCK

}

trap end_agent EXIT

set +x

```

## Clone via SSH

After setting up injection of my SSH keys, I went around the projects I had on my machine and changed the remote from HTTPS to SSH. Now I can interact with remote repositories without having to enter my username and password every time!

## WIP & NAH

Earlier this year Dave Hemphill tweeted about the power of WIP. Essentially that's the only commit message you need. That gives us our first alias

```

alias wip="git add . && git commit -m 'WIP'"

```

I wouldn't advocate this behavoiur for team work or a situation where commit messages are necessary but for me to quickly save state and gaurantee that I'm storing project history, it more than works for me.

The second alias is nah. Nah is a git reset and clean to get your working tree back to the state of the last commit.

```

alias nah="git reset --hard && git clean -df"

```

## GitLens

GitLens gives VSCode super powers. I probably haven't scratched the surface of what this package can do but I can now instantly get the full commit/edit history for any project file from within the editor. | jamessessford |

230,242 | The fight between Try-Catch and If-Else | How do you decide where to use if-else and try-except? I have started this thread because many new p... | 0 | 2020-02-03T04:58:43 | https://dev.to/mrsaeeddev/the-fight-between-try-catch-and-if-else-47fa | discuss, codenewbie, help, refactorit | How do you decide where to use if-else and try-except?

I have started this thread because many new people overuse try-catch or try-except over if-else or vice versa.

## Share your thoughts!!! | mrsaeeddev |

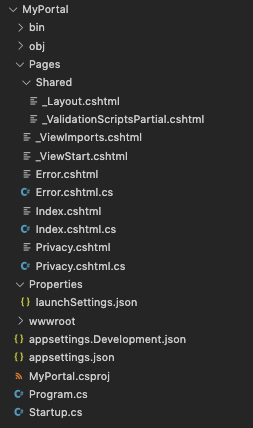

230,278 | Creating a C# Repository in .NET Core using RepoDb | This is an article about creating a dynamic C# Repository using RepoDb | 0 | 2020-01-02T16:08:33 | https://dev.to/mikependon/creating-a-c-repository-in-net-core-using-repodb-57gn | csharp, dotnet, sql, beginners | ---

title: Creating a C# Repository in .NET Core using RepoDb

published: true

description: This is an article about creating a dynamic C# Repository using RepoDb

tags: csharp, dotnet, sql, beginner

---

In this article, we will show you how to create an entity-based C# *Repository* using *RepoDb* ORM.

## Benefits

This will give you a knowledge on how to implement a standard entity-based *Repository* in *fast* and *clean* manner.

## Before we begin

We expect that you have the following software install in your machine.

- Microsoft SQL Server (at least 2016).

- Microsoft SQL Server Management Studio (at least 2016)

- Microsoft Visual Studio (at least 2017).

To proceed, follow the steps below.

## Create a Database

In your SQL Server, execute the script below.

```

CREATE DATABASE [Inventory];

GO

```

## Create a Table

In your SQL Server, execute the script below.

```

USE [Inventory];

GO

CREATE TABLE [dbo].[Customer]

(

[Id] BIGINT IDENTITY(1,1)

, [Name] NVARCHAR(128) NOT NULL

, [Address] NVARCHAR(MAX)

, CONSTRAINT [PK_Customer] PRIMARY KEY CLUSTERED ([Id] ASC )

)

ON [PRIMARY];

GO

```

## Create a C# Project

The project information must be below:

- Name: *InventoryAPI*

- Type: *ASP.NET Core API*

- Test: *No*

- Location: *Any Location*

Leave this project an empty one as we will create our own objects moving forward.

## Create a Model Class

In your C# project, do the following.

- Add a folder named *Models*.

- Inside the *Models* folder, add a new class named *Customer.cs*.

Replace the content of the *Customer.cs* with the code snippets below.

```csharp

public class Customer

{

public long Id { get; set; }

public string Name { get; set; }

public string Address { get; set; }

}

```

## Creating a Repository Class

In your C# project, do the following.

- Add a folder named *Repositories*.

- Inside the *Repositories* folder, add a new class named *CustomerRepository.cs*.

The content of your class would be below.

```csharp

public class CustomerRepository

{

}

```

## Inherit from BaseRepository

At this point in time, you already have the following.

- A database named *Inventory*.

- A table named *Customer*.

- A C# ASP.NET Core API project.

- A class model named *Customer*.

- A repository class named *CustomerRepository*.

Before we proceed, you need to install *RepoDb*. Type the command below in your *Package Manager Console*.

```

Install-Package RepoDb

```

Now, replace the content of your class *CustomerRepository* with the one below.

```csharp

public class CustomerRepository : BaseRepository<Customer, SqlConnection>

{

public CustomerRepository() : base(@"Server=.;Database=Inventory;Integrated Security=SSPI;")

{ }

}

```

> As recommended, not covered by this tutorial, the repository must accept a *connectionString* (or *settings* object) in the constructor. Then pass the value of *connectionString* on the constructor of the base class *BaseRepository*.

The class *BaseRepository* is an embedded base repository object within *RepoDb* library. It is usually used to create an entity-based repository.

> Actually, at this point in time, you already have inherited all the operations of the *BaseRepository*. Without doing anything more, you can now call the *CustomerRepository*.

See sample codes below.

```csharp

using (var repository = new CustomerRepository())

{

var customer = new Customer

{

Name = "John Doe",

Address = "New York"

};

var id = repository.Insert<long>(customer);

...

}

```

The code above will insert a new record in your table *Customer*.

To query, you can use the code below.

```csharp

using (var repository = new CustomerRepository())

{

var customer = repository.QueryAll().FirstOrDefault();

...

}

```

Even though you already have a working *CustomerRepository*, that is still not enough as per the C# standard. By standard, we usually use an interface for dependency-injection.

**Note:** You can also inject a class, not just an interface!

## Implement a Repository Interface

In your C# project, do the following.

- Add a folder named *Interfaces*.

- Inside the *Interfaces* folder, add a new class named *ICustomerRepository.cs*.

Replace the content of the file with the code snippets below.

```csharp

public interface ICustomerRepository

{

Customer GetById(long id);

Customer GetByName(string name);

IEnumerable<Customer> GetAll();

long Insert(Customer customer);

int Update(Customer customer);

int Delete(long id);

}

```

We have created *6 methods* which would enable you to do a basic *CRUD* operations on the *Customer* entity.

## Implement your Custom Methods

First, implement the interface within the *CustomerRepository* class.

See the codes below.

```csharp

public class CustomerRepository : BaseRepository<Customer, SqlConnection>, ICustomerRepository

{

public CustomerRepository ()

: base(@"Server=.;Database=Inventory;Integrated Security=SSPI;")

{ }

}

```

Then, implement the method *one-by-one*. Simply copy and paste the code snippets provided below inside your *CustomerRepository* class.

For *GetById*:

```csharp

public Customer GetById(long id)

{

return Query(id).FirstOrDefault();

}

```

For *GetByName*:

```csharp

public Customer GetByName(string name)

{

return Query(e => e.Name == name).FirstOrDefault();

}

```

For *GetAll*:

```csharp

public IEnumerable<Customer> GetAll()

{

return QueryAll();

}

```

For *Insert*:

```csharp

public long Insert(Customer customer)

{

return Insert<long>(customer);

}

```

For *Update*:

```csharp

public int Update(Customer customer)

{

return Update(customer);

}

```

For *Delete*:

```csharp

public int Delete(long id)

{

return Delete(id);

}

```

By this time, you can now call your own customized methods inside *CustomerRepository* class.

See sample codes below to insert.

```csharp

using (var repository = new CustomerRepository())

{

var customer = new Customer

{

Name = "John Doe",

Address = "New York"

};

var id = repository.Insert(customer);

...

}

```

And the code below is for *GetByName*.

```csharp

using (var repository = new CustomerRepository())

{

var customer = repository.GetByName("John Doe");

...

}

```

## Register your Repository as Service Component

Now, in order for you to be able to use the *Repository* in your project as an injectable object within your controllers, you have to register it as a service component.

To register as a service component, follow the steps below.

- In your *Solution Explorer*, double-click the *Startup.cs* file.

- Navigate inside `ConfigureServices()` method and paste the code below before the method end.

```csharp

services.AddTransient<ICustomerRepository, CustomerRepository>();

```

- Resolve the missing namespaces by placing the mouse inside the *CustomerRepository* and press *Ctrl+Space* > *Enter*.

- Press *Ctrl+S* keys to save the changes.

The engine will register the *CustomerRepository* object (as implemented by *ICustomerRepository* interface) into the services collection. Once the registration is complete, it signifies that our *CustomerRepository* class is now ready to be used for injection.

**Note**: We can as well add it as *Singleton* via `AddSingleton()` method of *IServiceCollection* if we wish to have our `Repository` in a singleton mood.

## Create a Controller

Follow the steps below to create a *Controller* for your *Customer* entity.

- Inside the *Controllers* folder, add a new class named *CustomerController.cs*.

- The new file named *CustomerController.cs* will be created. Replace the class implementation with the script below.

```csharp

[Route("api/[controller]")]

[ApiController]

public class CustomerController : ControllerBase

{

private ICustomerRepository m_customerRepository;

public CustomerController(ICustomerRepository repository)

{

m_customerRepository = repository;

}

}

```

- Press *Ctrl+S* keys to save the changes.

The *Controller* class above accepts an injected *ICustomerRepository* service component. We hold an instance of *Interface*, not *Class*.

> The engine will do the magic for injecting the *ICustomerRepository* after your service component registration.

## Create a Get Method from your Controller

We are almost done! :)

The provided methods below will call the *CustomerRepository* operation. Please copy the provided code snippets below and paste it inside *CustomerController* class just right after the *Constructor*.

```csharp

[HttpGet()]

public ActionResult<IEnumerable<Customer>> Get()

{

return m_customerRepository.GetAll().AsList();

}

[HttpGet("{id}")]

public ActionResult<Customer> Get(long id)

{

return m_customerRepository.GetById(id);

}

```

## Testing the Controller

At this point, our solution is now ready for testing. Build the solution by simply pressing the *Alt + B + R* keys.

Once the build is complete, press the *F5* key to start.

In the browser, type the URL below.

```

http://localhost:44341/customer

```

You can also query the specific records with the link below.

```

http://localhost:44341/customer/1

```

Where the value *1* is equals to the *Customer* id you have in the database.

You will notice that the result is being displayed in the browser as *JSON* file.

## How to find a port?

- Right-click on the project *InventoryAPI* from the *Solution Explorer* and click the *Properties*.

- Click the *Debug* tab.

- Under *Web Server Settings*, you will see the *App URL* field that contains the port.

## Links

You can see and download the actual project [here](https://github.com/mikependon/Tutorials/tree/master/Blogs/DEV.to/BaseRepository/InventoryAPI).

--------

Voila! You have completed this tutorial! Thank you for reading this article. Your support is valuable to us, please do not forget to star our [GitHub](https://github.com/mikependon/RepoDb) page. | mikependon |

230,301 | Super basic: How Hashmap works in Java | Hi there! It is 2020 already and what's a better way to start off the new year than to recap what we... | 0 | 2020-01-02T11:01:04 | https://dev.to/ham8821/super-basic-of-hashmap-in-java-53be | java, devops, beginners, kotlin | Hi there!

It is 2020 already and what's a better way to start off the new year than to recap what we've already know such as how to walk and breathe and get drunk? LOL

Today I might bring up some real basic concepts of hashmap in Java. I am pretty sure a lot of you already know better than anyone on this one. If that's the case, feel free to click the go back button but if you are a little unsure or have no idea what I am talking about, it is up to you spend 2 minute reading this article!

Well, the most well known definition of has a hash map would be a data structure that implements an associative array abstract data type, a structure that can map keys to values.

To make it even easier,

you know when we think of an array, we would probably think of a number index to access to a certain value. ex) arrayName[index]= value

Same goes to hash map except that we can use key instead of number index values.

As shown in the image below, the hashmap is an array of nodes that has Key and Value which makes look ups to be much easier and more efficient by using key values.

If we look into the hashmap slightly more in detail, we can see it looks like a table that has nodes so called buckets which can represent a class having following objects:

<ol>

<li>K key : <i>key string value that we use for look ups</i></li>

<li>int hash: <i>integer hashcode from the string key value</i></li>

<li>V value: <i>the actual value that we want to access</i></li>

<li>Node next: <i>to point out the next entry or node</i></li>

</ol>

Alright, now we kinda understand what the hashmap is, then it is time for us to have a try to work with it to understand how it actually works.

To explain the steps in order to access to a certain value using key, first of all, I would like to insert some values into the map first for better understanding.

To insert the values, we can use put(k,v) method and to to that, let's quickly create a simple hashmap called scroes where we will be storing the names and scores accordingly.

```

HashMap<String, integer>scores = new HashMap<String, Integer>();

```

Once the hashmap is created, the size of the map will be automatically initialised by 16 which makes the index of the map starts from 0 and finishes to 15.

Now let's say we want to put these three records into the scores map.

<code>

scores.put("Smith","100");

scores.put("Blake","87");

scores.put("King","100");

</code>

To start with the first record, we will be going through this put() method as shown below.

```

put(K k, V v)

hash(k)

index = hash & (n-1)

```

Let's go ahead and start inserting the data.

```

put(K k, V v) // k = "Smith" and v = "100"

hash(k) // This will get the hash of the string key. in this case hash("SMITH")= 79019862

index = hash & (n-1)// n= 16 and index will be 6

```

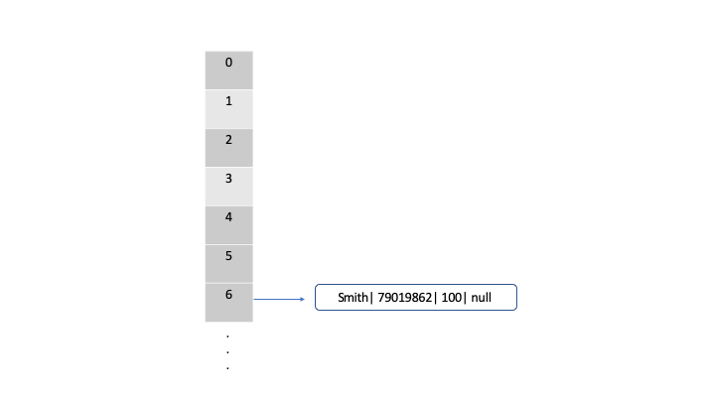

After this process, the map will look like this

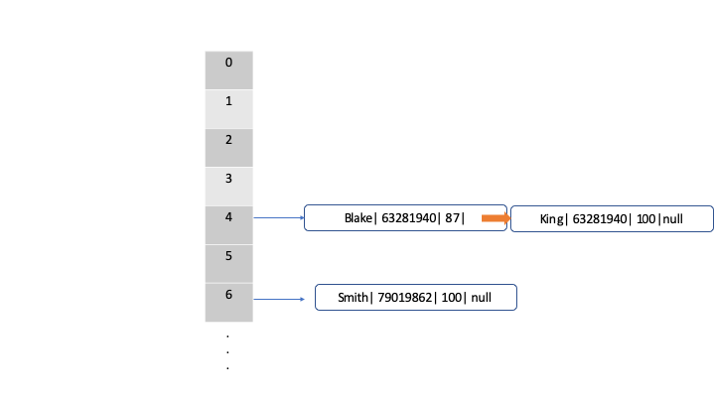

After following the same steps for all the records, the map will end up looking like below

*notice: I forgot to change the hash value for the second record for Kings in the picture. just note that the hash value for king is different from the one for Blake*

Wait, hold on a second, some of you might have noticed that we have 2 records in index 4 node. How did this happen?

If we scroll back a bit up to where we get the index by hash & (n-1), we can check those two records end up having same index which is 4 in this case. Let's say we tried to put Blake's record first and there shouldn't have been any problem, like we understood the data "Blake | hash| score| null" must have been inserted.

But as we insert Kings record after, we will figure out that they have same index number, the map will automatically put the record next to the Blake's record by changing the null value to point out to the next node which is King's in this case. That is how the outcome looks like the map above.

This will also lead us to this question.

"If they have same index number, how do we access to them?"

In order to access to the nodes, we can use get(k) method.

This method looks like this.

```

V get(Object key)

hash(key)

Index = hash & (n-1)

```

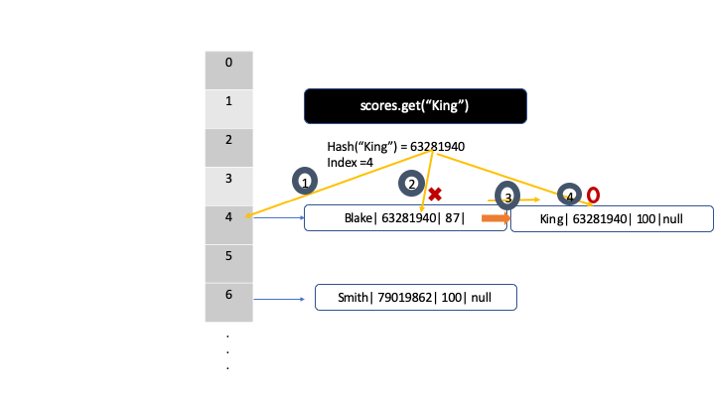

Now, let's say we want to find King's score in this hashmap using

```

scores.get("King")

```

then it will get the hash which is 2306996 and will get the index number which is 4 in this case. In the first node which has index 4, it will compare hashcode between the hascode that we are looking for and the hashcode that this node has. For example, the hascode we are looking for is 2306996 and the node has 63281940 as a hash value. They don't match, so it will be pointed out to the next node which and will do the comparison again. This time the hash value do match since it has 2306996 which we are looking for.

*notice: I forgot to change the hash value for the second record for Kings. just note that the hash value for king is different from the one for Blake*

Alright!

Today, we have talked about some of the basic concepts of hashmap. Actually, the reason I brought this topic up today was that I realised that hashmap come across very often we we code and it is very easy to just overlook, thinking that we understand how it works 100%. However, when I faced some complicated issues, I realised that I wasn't really understanding how it works and how to use it properly. I hope it helped for some of you guys to understand little bit better about hashmap and not get confused later when we really need to go through some concepts along the way of programming.

Thanks a lot for your time to read this article and Happy new year!!!

| ham8821 |

230,317 | Build a Rest API with Python - The easiest way | How you can build a Rest api with Python | 0 | 2020-01-02T10:30:29 | https://dev.to/xarala221/build-a-rest-api-with-python-the-easiest-way-16f0 | python, django, djangorest, api | ---

title: "Build a Rest API with Python - The easiest way"

published: true

description: "How you can build a Rest api with Python"

tags: python, django, django-rest, api

cover_image: https://images.unsplash.com/photo-1496262967815-132206202600?ixlib=rb-1.2.1&ixid=eyJhcHBfaWQiOjEyMDd9&auto=format&fit=crop&w=795&q=80

---

In this tutorial we will transform our Django phonebook Application to a Rest API

> A REST API defines a set of functions which developers can perform requests and receive responses via HTTP protocol such as GET, POST, PUT and DELETE

Think REST API as a web service that provide you the data you want to use in your application(mobile or front-end client).

The key component for a REST API request are:

**GET** — The most common option, returns some data from the API based on the given endpoint .

**POST** — Creates a new record and add it to the database.

**PUT** — Update an existing record.

**DELETE** — Deletes the record on the given endpoint.

## Getting started

```sh

$ git clone https://github.com/xarala221/django-phonebook.git

$ cd django-phonebook

```

## Application setup

```sh

$ pipenv install

Creating a virtualenv for this project…

Using /usr/bin/python3.7m (3.7.5) to create virtualenv…

$ pipenv shell

Spawning environment shell (/usr/bin/zsh). Use 'exit' to leave.

. /home/username/.local/share/virtualenvs/phonebook_rest_api-9zIZds3o/bin/activate

```

## Run the application

```sh

(my-env) $ python manage.py runserver

```

Notice i use **pipenv** instead of pip but you can use pip if you want it's up to you.

The application is running at http://localhost:800/

```sh

.

├── accounts

│ ├── admin.py

│ ├── apps.py

│ ├── forms.py

│ ├── __init__.py

│ ├── migrations

│ │ └── __init__.py

│ ├── models.py

│ ├── tests.py

│ ├── urls.py

│ └── views.py

├── contact

│ ├── admin.py

│ ├── apps.py

│ ├── __init__.py

│ ├── migrations

│ │ ├── 0001_initial.py

│ │ └── __init__.py

│ ├── models.py

│ ├── tests.py

│ ├── urls.py

│ └── views.py

├── db.sqlite3

├── manage.py

├── phonebook

│ ├── asgi.py

│ ├── __init__.py

│ ├── settings.py

│ ├── urls.py

│ └── wsgi.py

├── Pipfile

├── Pipfile.lock

├── README.md

├── requirements.txt

└── templates

├── accounts

│ ├── login.html

│ └── register.html

├── base.html

├── contact

│ ├── contact_details.html

│ ├── contact_list.html

│ ├── delete_contact.html

│ ├── new_contact.html

│ └── update_contact.html

├── index.html

└── partials

└── _navbar.html

```

This is our folder structure.

## Setup Django REST Framework

Django REST framework is a powerful and flexible toolkit for building Web APIs.

```sh

(myenv) $ pipenv install djangorestframework

```

In phonebook/settings.py add

```sh

# Application definition

INSTALLED_APPS = [

'django.contrib.admin',

'django.contrib.auth',

'django.contrib.contenttypes',

'django.contrib.sessions',

'django.contrib.messages',

'django.contrib.staticfiles',

'rest_framework', # add this

'contact',

]

```

## Serialize the contact Table(Model)

Let's create a new file -- contact/serializers.py

```sh

# contact/serializers.py

from rest_framework import serializers

from .models import Contact

class ContactSerializer(serializers.ModelSerializer):

class Meta:

model = Contact

fields = '__all__'

```

## Preparing the data

Create a new file -- contact/api.py

```sh

# contact/api.py

from rest_framework import viewsets

from .serializers import ContactSerializer

from .models import Contact

class ContactViewSet(viewsets.ModelViewSet):

serializer_class = ContactSerializer

queryset = Contact.objects.all()

```

Update our contact/urls.py

```sh

from django.urls import path, include # add this

from rest_framework.routers import DefaultRouter # add this

from .views import (

index, contact_list,

new_contact, contact_details,

update_contact, delete_contact

)

from .api import ContactViewSet # add this

router = DefaultRouter() # add this

router.register(r'contacts', ContactViewSet,

basename='contact') # add this

urlpatterns = [

path("api/", include(router.urls)),

path("", index, name="home"),

path("contacts/", contact_list, name="contacts"),

path("contacts/new/", new_contact, name="new"),

path("contacts/<int:id>/details/", contact_details, name="details"),

path("contacts/<int:id>/update/", update_contact, name="update"),

path("contacts/<int:id>/delete/", delete_contact, name="delete"),

]

```

Open your browser and go to http://localhost:8000/api/

You will see something like this :

```sh

HTTP 200 OK

Allow: GET, HEAD, OPTIONS

Content-Type: application/json

Vary: Accept

{

"contacts": "http://localhost:8000/api/contacts/"

}

```

Click on the link what you see ?

- You should see a list of contact if they exist in your database.

- You can also create new data

Let's wrap it

In this tutorial you learned how to create a REST API with Django and Django Rest Framework.

In the next tutorial i will handle the more complex topic like :

- Authentication and authorization

- Serialize nested object

- Serialize relation field

- etc..

See you in the next tutrial

| xarala221 |

230,327 | Deploying Resources to Azure with Ansible | Ansible is a configuration management tool used to control and apply configuration changes to infrast... | 4,008 | 2020-01-06T11:57:42 | https://dev.to/cloudskills/deploying-resources-to-azure-with-ansible-1pon | azure, ansible, devops | <!-- Introduction, Prerequisites, and Goals -->

Ansible is a configuration management tool used to control and apply configuration changes to infrastructure. However, before you can apply changes to the infrastructure it first has to exist. Ansible has several Azure modules that allow you to deploy resources in Azure. This tutorial will walk you through deploying a Windows virtual machine to Azure using an Ansible playbook. By the end of the tutorial you'll understand how to use several of the Azure Ansible modules to deploy workloads to Azure.

### Prerequisites

In order to follow along in this tutorial you'll need the following:

* [Ansible installed](https://docs.microsoft.com/en-us/azure/virtual-machines/linux/ansible-install-configure?toc=%2Fazure%2Fansible%2Ftoc.json&bc=%2Fazure%2Fbread%2Ftoc.json#install-ansible-on-an-azure-linux-virtual-machine)

* `ansible[azure]` pip packaged installed

* [Connection to Azure from Ansible setup](https://dev.to/joshduffney/connecting-to-azure-with-ansible-22g2)

# Table Of Contents

* [Azure Ansible Modules](#Ansible-Module-Index-Azure)

* [Playbook Contents](#playbook-contents)

* [Create a Resource Group](#create-resource-group)

* [Create a Virtual Network](#create-virtual-network)

* [Create a Public Ip](#create-public-ip)

* [Create a Network Security Group](#create-network-security-group)

* [Create a Virtual Network Interface Card](#create-virtual-network-card)

* [Create a Virtual Machine](#create-virtual-machine)

* [Deploy an Azure Windows Virtual Machine](#deploy-azure-windows-virtual-machine)

* [Conclusion](#conclusion)

# [Azure Ansible Modules](https://docs.ansible.com/ansible/latest/modules/list_of_cloud_modules.html#azure) <a name="Ansible-Module-Index-Azure"></a>

Creating a virtual machine in Azure requires several different Azure resources; a resource group, virtual network, subnet, public ip address, network security group, network interface card, and the virtual machine itself. Each of these Azure resources can be managed and modified using an Ansible module. These Ansible modules allow you to codify your infrastructure in yaml files in the form of Ansible playbooks. Below is a list of the Ansible modules that are used throughout this tutorial. However, many more Ansible modules exist and can be found in [Ansible's module index.](https://docs.ansible.com/ansible/latest/modules/list_of_cloud_modules.html#azure)

_Ansible modules required to deploy an Azure virtual machine_

* [azure_rm_resourcegroup](https://docs.ansible.com/ansible/latest/modules/azure_rm_resourcegroup_module.html#azure-rm-resourcegroup-module)

* [azure_rm_virtualnetwork](https://docs.ansible.com/ansible/latest/modules/azure_rm_virtualnetwork_module.html#azure-rm-virtualnetwork-module)

* [azure_rm_subnet](https://docs.ansible.com/ansible/latest/modules/azure_rm_subnet_module.html#azure-rm-subnet-module)

* [azure_rm_publicipaddress](https://docs.ansible.com/ansible/latest/modules/azure_rm_publicipaddress_module.html#azure-rm-publicipaddress-module)

* [azure_rm_securitygroup](https://docs.ansible.com/ansible/latest/modules/azure_rm_securitygroup_module.html#azure-rm-securitygroup-module)

* [azure_rm_networkinterface](https://docs.ansible.com/ansible/latest/modules/azure_rm_networkinterface_module.html#azure-rm-networkinterface-module)

* [azure_rm_virtualmachine](https://docs.ansible.com/ansible/latest/modules/azure_rm_virtualmachine_module.html#azure-rm-virtualmachine-module)

# Playbook Contents <a name="playbook-contents"></a>

### Create a Resource Group <a name="create-resource-group"></a>

Azure resource groups are used to logically group related resources. Resource groups help organize your cloud environment and can also be used to grant access to specific workloads within the resource group. They are also required when creating other Azure resources. Such as virtual networks. Another benefit of resource groups is easy cleanup. When you delete a resource group everything inside of it is deleted with it.

In order to create an Azure resource group with Ansible use the `azure_rm_resourcegroup` module. It requires two parameters; `name` and `location`. The name parameter will become the name of the resource group and the location is the Azure region the resource group is placed in. Placing a resource group in one region does not mean you can only use that region for other Azure resources inside the resource group. It is simply where the metadata of the resource group is located.

```yaml

- name: Create resource group

azure_rm_resourcegroup:

name: ansible-rg

location: eastus

```

### Create a Virtual Network <a name="create-virtual-network"</a>

Virtual networks allow you to build out your private network within Azure. Virtual networks are used to connect resources running within an Azure data center. Even though they are virtual and exist out of reach to you. The same networking principals apply. The virtual network requires two main parts; address space and a subnet or subnets.

In order to deploy the virtual network in Azure with Ansible you'll need use two Ansible modules; `azure_rm_virtualnetwork` and `azure_rm_subnet`. The subnet module depends on the network module and for that reason the network module will be defined first. Ansible is procedural and the order of the tasks is very important.

The task `Create virtual network` requires three parameters; `resource_group`, `name`, and `address_prefixes`. The resource_group is the name given to the previous tasks which created the resource group. `vNet` is the name given to the virtual network resource and will be used when creating the subnet. Address prefixes of `10.0.0.0/16` defines the address space of the virtual network.

```yaml

- name: Create virtual network

azure_rm_virtualnetwork:

resource_group: ansible-rg

name: vNet

address_prefixes: "10.0.0.0/16"

```

`Add subnet` is the next tasks which is used to add a subnet range to the virtual network. A subnet is a logical subdivision of an IP network and in this example it is used to carve out a section of IP addresses for web machines within that network. The task uses the `azure_rm_subnet` Ansible module. The subnet is given the name of `webSubnet` followed by the address prefix of the subnet `10.0.1.0/24` and it specifies the virtual network where the subnet will be created which is `vNet`.

```yaml

- name: Add subnet

azure_rm_subnet:

resource_group: ansible-rg

name: webSubnet

address_prefix: "10.0.1.0/24"

virtual_network: vNet

```

### Create a Public Ip <a name="create-public-ip"</a>

While the virtual network created previously will assign a private Ip address to the virtual machine the Public Ip address assigns a public Ip address to the virtual machine. Without a Public Ip address you won't be able to communicate with the virtual machine without a VPN or other means. To create an Azure Public Ip address resource using Ansible, you'll use the `azure_rm_publicipaddress` module.

In order to create an Azure Public Ip address you'll need to define the following parameters; resource group, allocation method, and name. Specifying the resource group of ansible-rg places the public ip address in the same location as the rest of the resources already created. Allocation method determines when the Ip address is assigned to the resource and when the Ip address is released. There are two options for allocation method, static and dynamic and the resource SKU determines which of these you can choose. To learn more about these options check out [IP address types and allocation methods in Azure](https://docs.microsoft.com/en-us/azure/virtual-network/virtual-network-ip-addresses-overview-arm#sku). The name of the resource is `webPublicIP`.

Perhaps you want to return the public Ip address assigned right after the resource is created. You can accomplish this by using an Ansible command called [register](https://docs.ansible.com/ansible/latest/user_guide/playbooks_variables.html#registering-variables_). Register allows you to populate variables based on the output of Ansible tasks. In this example the output of the task Create public IP address is assigned to a variable named `output_ip_address_`. Registering the variable does not output the information. Which is what the debug command does within the `Output public IP` task. Because output from tasks are in JSON you can parse the results to display what you want. In this example the variable output_ip_address is parsed to display only the ip address and nothing else.

```yaml

- name: Create public IP address

azure_rm_publicipaddress:

resource_group: ansible-rg

allocation_method: Static

name: webPublicIP

register: output_ip_address

- name: Output public IP

debug:

msg: "The public IP is {{ output_ip_address.state.ip_address }}"

```

### Create Network Security Group <a name="create-network-security-group"</a>

Azure Network Security Groups are what filter network traffic based on a set of rules that are defined. You can think of them as being similar to a network firewall. Without a network security group allowing traffic in, you will not be able to connect to your virtual machine within Azure. For that reason it is necessary to use the `azure_rm_securitygroup` module to create one and also define some rules allowing traffic in.

In this example you'll want to connect to the virtual machine using three different protocols; RDP, HTTP, HTTPS, and WimRM. Each of these uses different inbound ports to communicate. RDP uses 3389, HTTP uses 80, HTTPS uses 443, and WinRM uses 5985 and 5986. Each of these protocols will require a set of rules associated with the network security group allowing inbound traffic in. To create an Azure network security group you only need two pieces of information. The resource group to associate it with and the name of the network security group. However, you'll need more information to create rules associated with it.

It is a common practice to create one rule per protocol. In this example, you'll create three rules. When creating the rules each one requires you specify a name, protocol, destination port range, access, priority, and direction.

```yaml

- name: Create Network Security Group

azure_rm_securitygroup:

resource_group: ansible-rg

name: networkSecurityGroup

rules:

- name: 'allow_rdp'

protocol: Tcp

destination_port_range: 3389

access: Allow

priority: 1001

direction: Inbound

- name: 'allow_web_traffic'

protocol: Tcp

destination_port_range:

- 80

- 443

access: Allow

priority: 1002

direction: Inbound

- name: 'allow_powershell_remoting'

protocol: Tcp

destination_port_range:

- 5985

- 5986

```

### Create a Virtual Network Interface Card <a name="create-virtual-network-card"</a>

Every computer virtual or not requires some form of network interface card to communicate with computers outside of itself. That is where the Azure virtual network interface card comes in. Previous to this you've created several virtual networking components; a virtual network, subnet, a public ip address, and a network security group. All of these resources will be assigned to a virtual network interface card giving the Azure virtual machine private and public network access.

The Ansible module to create an Azure virtual network interface is `azure_rm_networkinterface`. In order to properly configure the interface you must associate it with a virtual network, subnet, network security group, and to access the virtual machine from the internet a public Ip address.

```yaml

- name: Create a network interface

azure_rm_networkinterface:

name: webNic

resource_group: ansible-rg

virtual_network: vNet

subnet_name: webSubnet

security_group: networkSecurityGroup

ip_configurations:

- name: default

public_ip_address_name: webPublicIP

primary: True

```

### Create a Virtual Machine <a name="create-virtual-machine"</a>

Everything to this point as prepared the Azure environment for you to deploy the virtual machine. You have a lot of options when it comes to deploying a virtual machine in Azure. However, in this tutorial you'll be deploying a Standard DS1 v2 Windows Server 2019 virtual machine. The Ansible module used to deploy Azure virtual machines is `azure_rm_virtualmachine`.

As with every Azure resource you've created so far the virtual machine requires a resource group and a name. The name will be used for the Azure resource and the virtual machine's hostname. The `vm_size` uses Azure a Standard_DS1_v2 instance. You can learn more about Azure's instance sizes [here](https://docs.microsoft.com/en-us/azure/virtual-machines/windows/sizes). Windows virtual machines also require a user name and password be specified when you create the virtual machine. `admin_username` is set to azureuser and will be the local user account you'll login with. `admin_password` is set to `"{{ password }}"` which is an Ansible variable. To avoid having the password stored in clear text, you'll prompt for that input in the next section.

`webNic` is the only network interface you'll need to define at this time. It will connect the virtual machine to the private and public Ip addresses you defined when creating the network resources. `os_type` defines which Operating System will be used by the virtual machine. Setting this to `Windows` changes the behavior of the default parameters passed in to the task. `image` has several sub parameters that define the image used to build the virtual machine. When choosing an image you must specify; the offer (WindowsServer), the publisher (MicrosoftWindowsServer), sku (2019-Datacenter), and version (latest).

```yaml

- name: Create VM

azure_rm_virtualmachine:

resource_group: ansible-rg

name: winWeb01

vm_size: Standard_DS1_v2

admin_username: azureuser

admin_password: "{{ password }}"

network_interfaces: webNic

os_type: Windows

image:

offer: WindowsServer

publisher: MicrosoftWindowsServer

sku: 2019-Datacenter

version: latest

```

### Deploy an Azure Windows Virtual Machine <a name="deploy-azure-windows-virtual-machine"</a>

Throughout this tutorial you've seen snippets of Ansible tasks. When putting those tasks into a playbook you have to add a few additional sections. `hosts` is used to define which target the playbook will be executed against. Setting this to localhost will run the playbook on the Ansible server itself.

`var_prompts` is used to prompt for Ansible variables when the playbook is executed. It's a simple choice for populating variables that contain sensitive information that you do not want to store in the playbook file. You'll use it to prompt for and populate the password used by the task creating the virtual machine.

`tasks` define the sequential tasks that are executed to complete the playbook. The order of these tasks is very important. For example, the task creating the resource group must be at the top. Without the resource group all the tasks after it will fail because the resource group does not exist yet.

Because the tasks used in this playbook create Azure resources a connection from Ansible to Azure must be establish before you can execute the playbook. You have several options available to connect Ansible to Azure. You can define environment variables or create an Ansible credential file. Both options are explained in dept in [Connecting to Azure with Ansible](https://dev.to/joshduffney/connecting-to-azure-with-ansible-22g2)

{% link https://dev.to/joshduffney/connecting-to-azure-with-ansible-22g2 %}

```yaml

#deployWindowsAzureVirtualMachine.yaml

---

- hosts: localhost

connection: local

vars_prompt:

- name: password

prompt: "Enter local administrator password"

tasks:

- name: Create resource group

azure_rm_resourcegroup:

name: ansible-rg

location: eastus

- name: Create virtual network

azure_rm_virtualnetwork:

resource_group: ansible-rg

name: vNet

address_prefixes: "10.0.0.0/16"

- name: Add subnet

azure_rm_subnet:

resource_group: ansible-rg

name: webSubnet

address_prefix: "10.0.1.0/24"

virtual_network: vNet

- name: Create public IP address

azure_rm_publicipaddress:

resource_group: ansible-rg

allocation_method: Static

name: webPublicIP

register: output_ip_address

- name: Output public IP

debug:

msg: "The public IP is {{ output_ip_address.state.ip_address }}"

- name: Create Network Security Group

azure_rm_securitygroup:

resource_group: ansible-rg

name: networkSecurityGroup

rules:

- name: 'allow_rdp'

protocol: Tcp

destination_port_range: 3389

access: Allow

priority: 1001

direction: Inbound

- name: 'allow_web_traffic'

protocol: Tcp

destination_port_range:

- 80

- 443

access: Allow

priority: 1002

direction: Inbound

- name: 'allow_powershell_remoting'

protocol: Tcp

destination_port_range:

- 5985

- 5986

access: Allow

priority: 1003

direction: Inbound

- name: Create a network interface

azure_rm_networkinterface:

name: webNic

resource_group: ansible-rg

virtual_network: vNet

subnet_name: webSubnet

security_group: networkSecurityGroup

ip_configurations:

- name: default

public_ip_address_name: webPublicIP

primary: True

- name: Create VM

azure_rm_virtualmachine:

resource_group: ansible-rg

name: winWeb01

vm_size: Standard_DS1_v2

admin_username: azureuser

admin_password: "{{ password }}"

network_interfaces: webNic

os_type: Windows

image:

offer: WindowsServer

publisher: MicrosoftWindowsServer

sku: 2019-Datacenter

version: latest

```

`ansible-playbook` is the Ansible command used to execute playbooks. Because the playbook is targeting localhost, the Ansible server itself no inventory or host file is required. All that is required is the name of the playbook to be executed. Save the above playbook as `deployWindowsAzureVirtualMachine.yaml` and run the following command to deploy your Windows virtual machine to Azure!

```yaml

ansible-playbook deployWindowsAzureVirtualMachine.yaml

```

_Modified recording, actual run time 5 minutes_

### Conclusion <a name="conclusion"</a>

You've now deployed a Windows virtual machine to Azure! At this point you might be wondering what the benefit of using Ansible is over creating the resources in the Azure portal or by using a PowerShell script. The benefits of using Ansible are;

* codified infrastructure

* idempotent automation

* configuration management

If you had used the portal you'd have a difficult time recreating the environment exactly the way it was before. If you had scripted the creation of all these resources you wouldn't be able to run that same script over and over without a lot of modification and error handling. That is where the idempotent nature of Ansible is extremely valuable. | joshduffney |

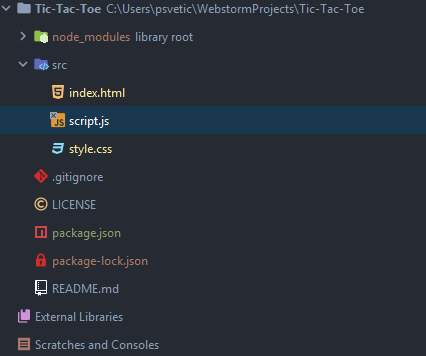

230,346 | Stock charts with fl_chart library | There is no better way to present data than a pie chart as we saw in this Dilbert comic strip. But s... | 4,011 | 2020-01-02T21:34:10 | https://dev.to/kamilpowalowski/stock-charts-with-flchart-library-1gd2 | flutter, dart, charts, stock | There is no better way to present data than a pie chart as we saw in this [Dilbert comic strip](http://dilbert.com/strip/2009-03-07).

But sometimes you want to display values that changed over time. More suitable for these tasks are the line and bar charts. It doesn’t matter which type of chart you want to use in your Flutter mobile app - all of them you can create using [fl_chart Package](https://pub.dev/packages/fl_chart) made by Iman Khoshabi - imaNNeoFighT.

## Challange

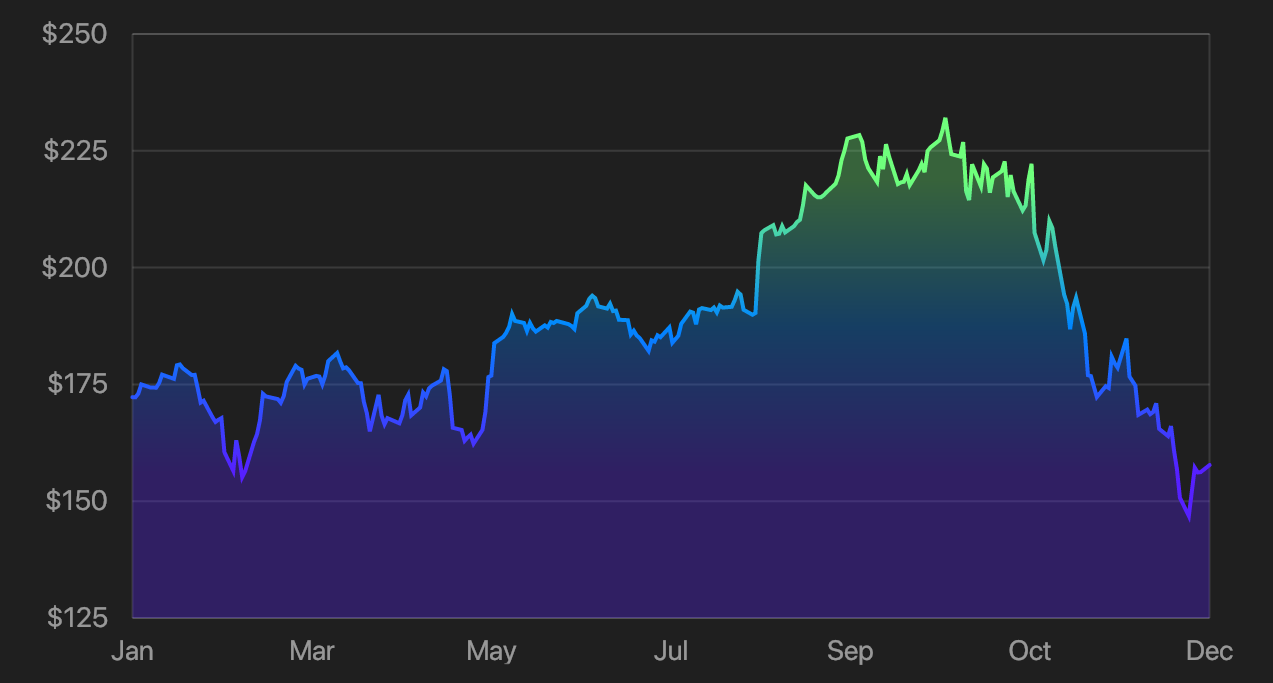

To get used to **fl_chart** library I created a challenge for myself - display stock data using Flutter. The chart should look pretty and I should be able to read some information by just looking at the chart. Here is an effect of my work:

## Solution

### Get data

The first problem that I was forced to solve was a way to get data for this challenge. Most of the stock charts' data APIs are paid and required registration. After some research, I found about [eodhistoricaldata.com](https://eodhistoricaldata.com/). They provide example endpoints with fixed user token for testing purposes. To my excitation, sample data was for AAPL (Apple) stock data on NASDAQ. Using `https://eodhistoricaldata.com/api/eod/AAPL.US?from=2018-01-01&to=2018-12-31&api_token=OeAFFmMliFG5orCUuwAKQ8l4WWFQ67YX&period=d&fmt=json` URL call I was able to get Apple stock prices for whole 2018 year. To make things easier for this challenge I saved the result of this call as `data.json` in `assets` directory and added it to project in `pubspec.yaml`:

```yaml

...

flutter:

assets:

- assets/data.json

...

```

### Load data

My `data.json` file contains an array/list of items that look like this:

```json

[

{

"date": "2018-01-02",

"open": 170.16,

"high": 172.3,

"low": 169.26,

"close": 172.26,

"adjusted_close": 167.1997,

"volume": 25555934

},

...

]

```

To use them I’ve created `datum.dart` model with content:

```dart

class Datum {

Datum({this.date, this.close});

final DateTime date;

final double close;

Datum.fromJson(Map<String, dynamic> json)

: date = DateTime.parse(json['date']),

close = json['close'].toDouble();

}

```

As you can see I took only two values that I’ll need for displaying the chart - date of entry and close price. Then I prepared the code to load this stock values to memory:

```dart

import 'dart:async';

import 'dart:convert' show jsonDecode;

import 'package:flutter/services.dart' show rootBundle;

import 'package:fluttersafari/datum.dart';

Future<List<Datum>> loadStockData() async {

final String fileContent = await rootBundle.loadString('assets/data.json');

final List<dynamic> data = jsonDecode(fileContent);

return data.map((json) => Datum.fromJson(json)).toList();

}

```

For this simple project `jsonDecode` function available in `dart:convert` package is sufficient but for bigger projects, I would recommend `json_serializable` as explained in [JSON and serialization](https://flutter.dev/docs/development/data-and-backend/json) article of Flutter documentation.

### Add library

To use **fl_chart** I added the library to the project. This required another edit of `pubspec.yaml` file:

```yaml

...

dependencies:

flutter:

sdk: flutter

fl_chart: ^0.6.0

...

```

And calling `flutter pub get` after it.

### Prepare data

Before I'll pass our data to **fl_chart** I have to transfer it to form understand by this library. Axis based charts are expecting a list of `FlSpot` objects that will represent points of interest on our chart. Each `FlSpot` object contains two `double` values (`x` and `y`). My X axis will display time and Y will show a close price.

```dart

final List<Datum> data = await loadStockData();

final List<FlSpot> values = data

.map((datum) => FlSpot(datum.date.millisecondsSinceEpoch.toDouble(), datum.close))

.toList();

setState(() {

_values = values;

});

```

I've used `millisecondsSinceEpoch` to get an integer number for each data point. Full solution (attached at the end of blogpost) requires to provide `minX`, `maxX`, `minY`, `maxY` values which present lowest and highest numbers (with additional offset if needed) limiting chart in both directions.

### Display chart

Now, when I have data ready to display, it's time to create a `LineChart` widget.

```dart

LineChart(_mainData());

```

Nothing interesting here, right? The real fun starts with `LineChartData` object.

```dart

LineChartData _mainData() {

return LineChartData(

gridData: _gridData(),

titlesData: FlTitlesData(

bottomTitles: _bottomTitles(),

leftTitles: _leftTitles(),

),

lineBarsData: [_lineBarData()],

);

}

```

We can control each aspect of `LineChart` widget. From the look of the grid, color and shape of chart border to labels on any side of the cart to most important - lines that visualize our data. All available parameters can be inspected on the [documentation](https://github.com/imaNNeoFighT/fl_chart/blob/master/repo_files/documentations/line_chart.md) page.

### Line and background

Now let's focus on some elements starting from crème de la crème - the `_lineBarData()` function that will provide stock data information for `LineChartData` widget.

```dart

LineChartBarData _lineBarData() {

return LineChartBarData(

spots: _values,

colors: _gradientColors,

colorStops: const [0.25, 0.5, 0.75],

gradientFrom: const Offset(0.5, 0),

gradientTo: const Offset(0.5, 1),

barWidth: 2,

isStrokeCapRound: true,

dotData: const FlDotData(show: false),

belowBarData: BarAreaData(

show: true,

colors: _gradientColors.map((color) => color.withOpacity(0.3)).toList(),

gradientColorStops: const [0.25, 0.5, 0.75],

gradientFrom: const Offset(0.5, 0),

gradientTo: const Offset(0.5, 1),

),

);

}

```

This may look scary to you at the begging but despite providing transformed values in `spots: _values` I'm setting just a few parameters that will give this chart these awesome gradient colors. Please take a look at `belowBarData` property. Using this field I can control how the area below chart line looks. I just set it to three gradient colors stored in the `_gradientColors` variable.

```dart

final List<Color> _gradientColors = [

const Color(0xFF6FFF7C),

const Color(0xFF0087FF),

const Color(0xFF5620FF),

];

```

### Labels

displaying left and bottom labels may be a bit tricky at first. Let's take a look at this code:

```dart

SideTitles _bottomTitles() {

return SideTitles(

showTitles: true,

textStyle: TextStyle(

color: Colors.white54,

fontSize: 14,

),

getTitles: (value) {

final DateTime date =

DateTime.fromMillisecondsSinceEpoch(value.toInt());

return DateFormat.MMM().format(date);

},

margin: 8,

interval: (_maxX - _minX) / 6,

);

}

```

For each data point, `LineChart` will call `getTitles` function (which in this case converts data back to `DateTime` object and returns short month name) and tries to display it below the chart. To not clutter chart with data, I used the interval parameter to limit how often `getTitles` should be called (I decided to display only 6 labels below the chart).

A similar solution is used for `_leftTitles()` but their interval calculations are more complicated so I leave them to check in full example available at the end of the blogpost.

```dart

SideTitles _leftTitles() {

return SideTitles(

showTitles: true,

textStyle: TextStyle(

color: Colors.white54,

fontSize: 14,

),

getTitles: (value) =>

NumberFormat.compactCurrency(symbol: '\$').format(value),

reservedSize: 28,

margin: 12,

interval: _leftTitlesInterval,

);

}

```

### Grid

Last, but not least - we can control the way the grid is displayed.

```dart

FlGridData _gridData() {

return FlGridData(

show: true,

drawVerticalLine: false,

getDrawingHorizontalLine: (value) {

return const FlLine(

color: Colors.white12,

strokeWidth: 1,

);

},

checkToShowHorizontalLine: (value) {

return (value - _minY) % _leftTitlesInterval == 0;

},

);

}

```

An optional `checkToShowHorizontalLine` function will tell which horizontal lines should be displayed. To fit grid lines to horizontal values, I've used logic related to a previously computed `_leftTitlesInterval` variable (to be inspected in the full code repository).

## Summary

Making eye-appealing charts usually required two very different steps. We have to prepare data first and then load it on the user interface. The first one is fully on you. But for UI, you can use a **fl_chart** or different library that can help you with this task. I hope that my challenge will motivate you for own experiments with this awesome library.

For full implementation of this project go to [Flutter Safari](https://github.com/kamilpowalowski/fluttersafari/tree/fl_chart) repository on Github. | kamilpowalowski |

230,381 | (Most) IT Recruiters suck. Here's how to fix it. | Ask techies: How's jobhunting, nowadays? The answer is: it's HORRIBLE. I am sorry but that's the mo... | 0 | 2020-01-02T12:25:05 | https://dev.to/luisnomad/most-it-recruiters-suck-here-s-how-to-fix-it-3e95 | recruiters, it, hr, jobhunting | Ask techies: How's jobhunting, nowadays?

The answer is: it's _HORRIBLE_.

I am sorry but that's the most accurate word to describe it. It's utterly terrible, disappointing, and frustrating. Considering we developers and technical people are such a juicy prize, we're treated like shit, both by recruiters and companies. It's not my opinion, ask any developer you know. They will agree in less than two seconds, no need to think about the answer.

But why?

I am going to tell you my opinion. The fact that dealing with interviews and recruiters is crap is not debatable. That is a fact. Now, the reasons for this situation are open to discussion and what I am saying here is my point of view, purely personal, based on my own experience.

## Where are the professional, trained recruiters?

It looks to me like nowadays anyone can call themselves "recruiters". Not so long ago, recruiters were people with specialized studies, even psychology degrees. People who were trained to understand people. What happened to that? There were proper formalities, and a candidate was informed of the status of the process. There was respect.

Now, we are contacted by random people offering some positions that might or might not fit our profile. We answer that message telling them we're interested, and we might or might not get a reply. If we get a reply, it's like it was us who started begging for that job, because we have to constantly ping the recruiter to know what's going on. That is, of course, if the conversation doesn't abruptly die (from their side).

## Secrecy about the company and job conditions

Maybe we're offered an interview with a ghost company because they don't want to give away the name or any detail. We probably don't know about the salary range either. Recruiters ask us, and we have to tell them about our expectations. That's no confirmation that we'll get anything close to that. I've been offered way less than expected AFTER all the interviews, because you know, "that's the average for the position in my city".

How can we prepare for interviews or do proper filtering of where we apply to, if we don't know all the details? If a recruiter wants to know everything about me, I have to tell them or I lose my chance. It should be reciprocal.

## No, really, read my profile

If you want to understand my profile, the best way to do it is to read it in the first place. I've been told things like "oh, you seem to be a bit unstable, there's a lot of jobs in your CV". No Mister, I have been freelancing and I've had a lot of customers. That, in my dictionary, is called a success, not instability.