id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

247,875 | Simple authentication and styling in beginner Rails 6 project | Ok so, here goes my first tech blog post. I've been trying to do this for a while, but just didn't ha... | 4,479 | 2020-01-24T13:47:42 | https://dev.to/bbborisk/simple-authetication-and-styling-in-beginner-rails-6-project-m6 | rails, bootstrap, beginners, books | Ok so, here goes my first tech blog post. I've been trying to do this for a while, but just didn't have the courage to get my self to do it. So, this is a bit about *how to do this thing* and a bit about *how to overcome imposter syndrome* for absolute beginners.

Let's just dive in. I have started a project driven by my wife's and my need to systematize hundreds of children's books we have for our toddlers. And I know, we could've made this in a few excel sheets, but my goal here is to make something of my own, customizable and editable.

And I love rails, for it is so beginner-friendly, yet so powerful. First,

I generated the project

```

rails new ChildrensBooks

```

Rails 6 takes a bit longer to generate, but it has tons of features, which I am still learning about. After making the index page, the next thing is to add gems for authentication and bootstrap templates. so, in gemfile, add gems `devise`, `devise-bootstrap-views` and `twitter-bootstrap-rails`, which is simple and easy to use. You also need `jquery-rails`.

```ruby

gem 'jquery-rails'

gem 'devise'

gem 'twitter-bootstrap-rails'

gem 'devise-bootstrap-views'

```

And, of course, install them with bundler - `bundle install`. Now, there are better ways to do this, but again, I'm doing this in the simplest way possible. Then, onto making model for the Users table.

In order to use `devise`, you must first install it with

```bash

rails generate devise:install

```

Then, generate Users model. Devise makes it so simple, also making all the routes in `routes.rb` file.

```bash

rails generate devise User

```

This is a perfect setup for me at this moment, no need for other configurations. so I just migrated the database.

```bash

rails db:migrate

```

The styling idea I got from some Udemy course I saw a while back, and it seems great now. It adds a twitter style outlook, and is both available for page layout and devise form aswell.

First, add the bootstrap requirements to the style resources file.

application.css:

```css

*= require twitter-bootstrap-static/bootstrap

```

and application.js

```javascript

//= require twitter/bootstrap

```

Also, include both files in manifest.js

```javascript

//= link_tree ../images

//= link_directory ../stylesheets .css

//= link application.js

```

Next, install the theme. I used static, but in the documentation of the gem you can find others as well.

```bash

rails generate bootstrap:install static

```

then, it is just the matter of adding `views` and updating the `application.html.erb` file.

```bash

rails g bootstrap:layout application

```

and then same for devise

```bash

rails g devise:views:locale en

```

```bash

rails g devise:views:bootsrap_templates

```

That should do it.

In my `application_controller.rb` I added the before_action for authentication

```ruby

before_action :authenticate_user!

```

so, when I run the server, it takes me straight to the login screen. From there, you can make a user and, I guess, that is it.

So, the next thing for me is making a model for books. I know this isn't the most brilliant text or groundbreaking tutorial. It is basically my experience in making the starter code following the documentation in gems. But I hope it helps someone, and it really helped me break the ice in the community.

Feel free to get in touch, share your journey into coding world, share knowledge and tips for this newbie.

Cheers! | bbborisk |

247,906 | Hello | https://www.romexsoft.com/ -check out this company is Ukrainian outsourcing provider of AWS cloud sol... | 0 | 2020-01-24T14:33:47 | https://dev.to/joanalauren/hello-35n3 | https://www.romexsoft.com/ -check out this company is Ukrainian outsourcing provider of AWS cloud solution and software engineering services. | joanalauren | |

247,912 | Pick. Squash. Drop. Rebase! (Comic) | It is a common practice to use `git rebase` to squash commits before creating or merging a pull request; nobody needs to see that you fixed 10 typos in 5 separate commits, and keeping that history is of no use. So how does a rebase look like? | 4,483 | 2020-01-24T16:06:24 | https://dev.to/erikaheidi/pick-squash-drop-rebase-comic-607 | git, beginners, illustrated, comics | ---

title: Pick. Squash. Drop. Rebase! (Comic)

published: true

description: It is a common practice to use `git rebase` to squash commits before creating or merging a pull request; nobody needs to see that you fixed 10 typos in 5 separate commits, and keeping that history is of no use. So how does a rebase look like?

series: Git Illustrated

tags: git, beginners, illustrated, comics

cover_image: https://thepracticaldev.s3.amazonaws.com/i/mipao0n3oqno93o22s7f.png

---

[Git Rebase](https://git-scm.com/docs/git-rebase) allows us to rewrite Git history. It is a common practice to use `git rebase` to squash commits before creating or merging a pull request; nobody needs to see that you fixed 10 typos in 5 separate commits, and keeping that history is of no use. So how does a rebase look like?

Let's imagine you have your deck of cards, they are ordered in a certain way that cannot be changed. Each card represents a commit in a project's branch.

When running an interactive rebase with `rebase -i`, there are mainly three actions we may want to perform in a commit (card):

- **p**ick: pick a commit.

- **s**quash: squash this commit into the previous one.

- **d**rop: drop this commit altogether.

In this game, you want to **s**quash cards together into doubles and triples. Some cards make sense on their own, so you will **p**ick them. Sometimes, a card should not even be there, so you might want to **d**rop it.

Although there are other ways of using `git rebase`, the interactive rebase used like this is a common practice observed in projects that rely on multiple contributors, both in open as well as in closed source. It enables you to commit earlier and with more frequency, because you are able to edit your history before submitting your pull request.

If you'd like a deeper introduction to Git Rebase, please check this great dev article from [@maxwell_dev](https://dev.to/maxwell_dev):

{% post https://dev.to/maxwell_dev/the-git-rebase-introduction-i-wish-id-had %} | erikaheidi |

247,923 | The Macintosh was unveiled 36 years ago today | A beautiful machine | 0 | 2020-01-24T15:18:15 | https://dev.to/ben/the-macintosh-was-unveiled-36-years-ago-today-k7f | computerhistory, news, apple, mac | A beautiful machine

{% youtube 2B-XwPjn9YY %} | ben |

247,941 | Is Ruby On Rails The Biggest Waste Of Time in 2020? | A post by codingphasedotcom | 0 | 2020-01-24T16:03:54 | https://dev.to/codingphasedotcom/is-ruby-on-rails-the-biggest-waste-of-time-in-2020-41pd | ruby, rails, webdev | {% youtube Wbh6PMUsgzk %}

| codingphasedotcom |

247,960 | JavaScript and Friends - Tech Launchers | Tech Launchers is a program to go the extra mile beyond the regular mentor and mentee program. JavaSc... | 0 | 2020-01-24T16:47:23 | https://dev.to/jsandfriends/javascript-and-friends-tech-launchers-47mo | career, mentors | Tech Launchers is a program to go the extra mile beyond the regular mentor and mentee program. JavaScript and Friends is excited to launch this program in 2020.

There is always a gap which regular coursework at college or bootcamp do not teach or self-taught programmers do not learn. Tech Launchers are those who will fill that gap.

### Why did we name the program as "Launchers"?

The most common words we get to imagine upon hearing the term Launcher is either a Rocket Launcher, Satellite Launcher or Launch Vehicle.

> Based on Wikipedia -

> A launch vehicle or carrier rocket is a rocket propelled vehicle used to carry a payload from Earth's surface to space, usually to Earth orbit or beyond.

> A rocket launcher is a device that launches an unguided, rocket-propelled projectile

Now that you have a basic understanding of the term "Launcher", Tech Launchers are those who will have both qualities in them.

### Who are Tech Launchers?

Tech Launchers are those who will guide and support college/bootcamp graduates and self-taught programmers to unleash their potential. Launchers will propel and boost college/bootcamp graduates and self-taught programmer's path to tech industry by offering support via knowledge, network, referrals and help them close the gaps making them job ready.

Tech Launchers will advise, coach and be there as support providing feedback to college/bootcamp graduates and self-taught programmer's. You can go the extra mile and be available to them until they reach their destination.

As a Tech Launcher, you can choose to launch as many as you want.

### How much time should I dedicate to be Tech Launcher?

The program is initially targeted for 14 days with a minimum overall time commitment of 10 hours. You can also go the extra mile by extending the program beyond 14 days.

On any given day, you may be tasked with helping the student understand some programming challenge / task. On another day, you may be giving career advice or reviewing a resume. You might help them understand current trends in industry and advise them on building their portfolio projects on Github.

### How do I connect and communicate with Students?

As moderator of the program, we will share with you details of students who are looking for Tech Launchers.

You can connect with them through JavaScript and Friends Slack channel "techlaunchers" initially and then choose either of the following preferred modes - email, chat, voice calls based on your preference.

You will define the rules on when you want to connect and the frequency.

### How do I participate?

Interested in being part of the program to help launch future aspiring students, leave your details in comments. Our team will reach out to you to discuss further logistics. The program is currently looking for Launchers in the USA. Once we validate the program, we will consider expanding the program to other locations.

| baskarmib |

248,055 | Daily Challenge #176 - Loopover | Create a function to solve Loopover puzzles. | 1,326 | 2020-01-30T15:36:21 | https://dev.to/thepracticaldev/daily-challenge-176-loopover-3b23 | challenge | ---

title: Daily Challenge #176 - Loopover

published: true

series: Daily Challenge

description: Create a function to solve Loopover puzzles.

tags: challenge

---

###Setup

Loopover puzzles are 2D sliding puzzles that work more like flat rubik's cubes. Instead of having one open slot for pieces to slide into, the entire grid is filled with pieces tha wrap back around when you slide a row or column.

You can try it out at this sketch here: https://www.openprocessing.org/sketch/576328

To complete this challenge, implement a function to return a list of moves that will transform an unsolved grid into a solved one.

Consider the grid:

<pre>

ABCDE

FGHIJ

KLMNO

PQRST

UVWXY

</pre>

If we make the move `R0` (move the 0th row right) then we get:

<pre>

EABCD

FGHIJ

KLMNO

PQRST

UVWXY

</pre>

Likewise, if we do `L0` (move the 0th row left), we get:

<pre>

ABCDE

FGHIJ

KLMNO

PQRST

UVWXY

Back to normal.

</pre>

Say we make the move `U2` (move the 2nd column up):

<pre>

ABHDE

FGMIJ

KLRNO

PQWST

UVCXY

</pre>

`D2` (2nd column down) would then return us to the original grid. With all of this in mind, our tests will give you the scrambled grid as input. Please return an array of the moves taken to unscramble the grid.

For example:

<pre>

SCRAMBLED GRID:

DEABC

FGHIJ

KLMNO

PQRST

UVWXY

SOLVED GRID:

ABCDE

FGHIJ

KLMNO

PQRST

UVWXY

</pre>

One possible solution would be `["L0", "L0"]` as moving the top row left twice would result in the original, solved grid. Another would be `["R0", "R0", "R0"]` etc. etc.

###Tests

`"ACDBE\nFGHIJ\nKLMNO\nPQRST\nUVWXY"`

`"ABCDE\nKGHIJ\nPLMNO\nFQRST\nUVWXY"`

Some of these can be kind of tricky. Good luck!

***

_This [challenge](https://www.codewars.com/kata/5c1d796370fee68b1e000611) comes from jaybruce1998 on CodeWars. Thank you to [CodeWars](<https://codewars.com/>), who has licensed redistribution of this challenge under the [2-Clause BSD License](<https://opensource.org/licenses/BSD-2-Clause>)!_

_Want to propose a challenge idea for a future post? Email **yo+challenge@dev.to** with your suggestions!_ | thepracticaldev |

248,060 | How this self taught developer made $70,000 whilst travelling | Guilherme Rizzo is the self-taught founder of CSS Scan which lets you easily inspect the CSS of a w... | 0 | 2020-04-01T11:29:58 | https://www.nocsdegree.com/how-this-self-taught-coder-made-70-000-whilst-travelling-the-world/ | css, beginners, webdev | ---

title: How this self taught developer made $70,000 whilst travelling

published: true

date: 2020-01-24 16:42:00 UTC

tags: CSS, Beginners, Webdev

canonical_url: https://www.nocsdegree.com/how-this-self-taught-coder-made-70-000-whilst-travelling-the-world/

---

Guilherme Rizzo is the self-taught founder of [CSS Scan](https://gumroad.com/a/997618803) which lets you easily inspect the CSS of a webpage and click and copy it for your own project. He's made $70,000 in the last year from [CSS Scan](https://gumroad.com/a/997618803) while travelling in Asia. [CSS Scan](https://gumroad.com/a/997618803) is a lot quicker than using Dev Tools and you can use it in other browsers besides Chrome. I use it a lot so it's great to be able to ask Guilherme some questions about how he learned to code, making [CSS Scan](https://gumroad.com/a/997618803) and his experience leaving Brazil with just a Mac mini to travel the world.

## Hey, so can you give us a short introduction for people who want to know more about you?

I’m [Guilherme Rizzo](https://twitter.com/gvrizzo) and I'm 21 years old (it’s my birthday today!!). I’m from Brazil but now I’ve been traveling around the world for over a year. I have made a browser extension called [CSS Scan](https://gumroad.com/a/997618803) and with it you can check or copy the CSS code of any element you see on the internet, by just moving your mouse over it. So far I’ve made over $70,000 in revenue with it and that’s my full-time job, along with CSS Scan Pro - its premium version).

## I really like CSS Scan! Why did you decide to create it?

I was always interested in what border-radius and box-shadow some elements had. So, inspired by WhatFont? I created this extension that wherever you hover your mouse over, you instantly get the CSS of the element and you can copy all of it with a single click.

So you don’t have to dig into multiple panels in the dev tools. Do you like this button? Just click and copy. Or, study how its made, and learn CSS on the way. It’s a great learning tool for beginners and a real time-saver for advanced users.

## What have you learned since coding CSS Scan?

Coding [CSS Scan](https://gumroad.com/a/997618803) was fun! I recorded all the development of the first version. It took me 50 hours, but I speed it up to 2 minutes so you can check it here:

<iframe width="480" height="270" src="https://www.youtube.com/embed/OtsNNXpXcYs?feature=oembed" frameborder="0" allow="accelerometer; autoplay; encrypted-media; gyroscope; picture-in-picture" allowfullscreen></iframe>

I learned how to develop Chrome extensions, firefox add-ons, and Swift because I needed to learn it to create the Safari App Extension. A lot of javascript and optimizations techniques. But I learned much more about business.

## If you don’t have a CS degree, how did you learn coding? Did you do any particular courses or bootcamps?

I learned programming by creating 2d games. When I was 9 years old, my friend gave me a DVD with a software called RPG Maker XP. It was a very cool tool to easily create 2d games (mostly RPGs). So I started developing those games. My first game was hugely criticized because it looked remarkably weird but I kept going and got better at that.

After one or two years, I needed to create a website for my game so that’s when I first heard about HTML and CSS. While I was creating my games just for fun and for my friends, I realized I could build things that could help my city, my country or even the whole world.

So I began to make friends on the internet and started to make websites/apps with them, and learning programming on the internet along the way. We tried a lot of things and most of them failed. We created more than 6 websites together - that was when I learned how to code.

In 2017 I joined a national competition for high school students in Brazil from Google, called “Maratona de Aplicativos”, where we had to develop an educational app. Me and my friends developed an app that helped parents that have autistic children to organize a routine with in-app interactive exercises for their children - it's name is Teacch.me, it was later discontinued but still exists on the Android's Play Store. After a lot of work, we happily won the competition, and by winning it, we got free tuition at a Brazilian university called FIAP, a notebook, printer, tablet, etc…

So inspired by the TV Show "Silicon Valley" we all moved to Sao Paulo to start a fashion company and some of us also enrolled in university. I was one of them. We met a lot of people in that city and learned a lot together. It's a game-changer experience to live in the same house, with friends that share your interests. I have great memories about that, and it turned out better than I thought. I started university by studying Information Systems but decided to change to Marketing 1 year later but I ended up dropping out of that 10 months later too. So, I learned coding by doing projects and businesses.

<!--kg-card-begin: markdown-->

[](https://triplebyte.com/a/Ww4mbM6/d)

<!--kg-card-end: markdown-->

### Do you want a developer job but don't have a Computer Science degree? Pass [Triplebyte's quiz](https://triplebyte.com/a/Ww4mbM6/d) and go straight to final onsite interviews at top tech companies

## What advice would you give to people wanting to get into coding but don’t have a CS degree?

The Codecademy courses helped me a lot. For real. Especially the Javascript one. I strongly recommend it. And also, make stuff. For you, for your friends, for your city, anything. The more you practice it, the more you’ll learn, everybody already says this.

Remember that you can do anything you want. But there are 3 levels of "want". I want, I will, and I commit myself. Commit yourself to learn and make it happen. Just like Pete [committed himself to get an IndieHackers podcast.](https://twitter.com/petecodes/status/1152986101642158080)

## You took off and went travelling armed just with a Mac Mini! Do you have any advice for developers that want to go nomad and work from anywhere?

If you feel and genuinely believe you can, I’d say: just do it. Because I felt the same feeling, even when my family and some friends were against it. I felt that nothing could stop me and that it would work because I’d make it work. I’m also young so it’s easier to do these kinds of things.

By the way, that’s the way I did. If you don’t think that would be good for you, you can wait for a safer environment by saving money, doing freelance, working on side-projects, etc, but don’t wait for the perfect moment - there isn’t.

<figcaption>Guilherme with no laptop worked from hotel TV screens in Asia</figcaption>

## Can you tell us what an average day looks like for you just now?

Monday: Take a bath with elephants. Tuesday: Sail around the British Virgin Islands. Jokes aside, I wake up, stretch, read my statements (I have A LOT), cook breakfast with my wife, wash the dishes, start working on my new business, go to explore the city I’m in, lunch, go to coffee work, post on twitter (every day), answer emails, read a book, imagine myself successful, take a shower, read my statements again, sleep.

<!--kg-card-begin: markdown-->

[](http://nocsok.com)

<!--kg-card-end: markdown-->

## Have you ever had imposter syndrome and if so, how have you dealt with it?

Yes, sometimes. I always look back to times that I was and felt successful, and I try to remember how I felt, what I did, why I was successful. I do that once a month. If you start doing that you’ll feel better with yourself too.

## What are the new features in the latest release of CSS Scan?

So, one-time payment for browser extensions is already proved to work, as I did with [CSS Scan](https://gumroad.com/a/997618803). Now I’m trying to play in a different game which is subscriptions. I build CSS Scan Pro because I felt CSS Scan already did it’s purpose very well, but I felt it was limited to the click-to-copy CSS functionality.

CSS Scan Pro is developed to be the definitive browser extension to work with web design, so with it, you can:

- Pick any color from any website (works on images too)

- Get the whole color palette of the website in an instant

- Precisely select any element with the DOM control (with arrow keys to go up and down - parents, children, siblings, etc)

- Understand any CSS animation you see on the web by reading CSS keyframes

- Measure distances and elements with a real-time advanced ruler

- Scan all pseudo-classes and pseudo-elements (while Basic only scans the pseudo-class :hover)

- Check all the changes made in the CSS and copy it or export it to a file

- Visualize guidelines to check alignments

- Scan inherited styles (it gets font-size, font-weight, and other properties that Basic can't catch)

## Awesome! Well, thanks a lot for interview. And Happy Birthday!

Thank you so much, Pete, for this opportunity and thank YOU for reading this interview, appreciate it! If you have any questions or want to chat, feel free to reach me through [Twitter](https://www.nocsdegree.com/p/58d3d113-6ae6-4808-9d5c-71c03ac566ee/twitter.com/gvrizzo) . | petecodes |

248,062 | Wireframing dev.to/videos | It’s not a secret some of us prefer to learn tech ‘X’ by watching videos, rather than reading blog... | 0 | 2020-01-24T19:45:04 | https://dev.to/madza/wireframing-dev-to-videos-1ebi | wireframing | ---

title: Wireframing dev.to/videos

published: true

description:

tags: wireframing

cover_image: https://thepracticaldev.s3.amazonaws.com/i/53g508jpytaka7e35h3c.png

---

It’s not a secret some of us prefer to learn tech ‘X’ by watching videos, rather than reading blog posts.

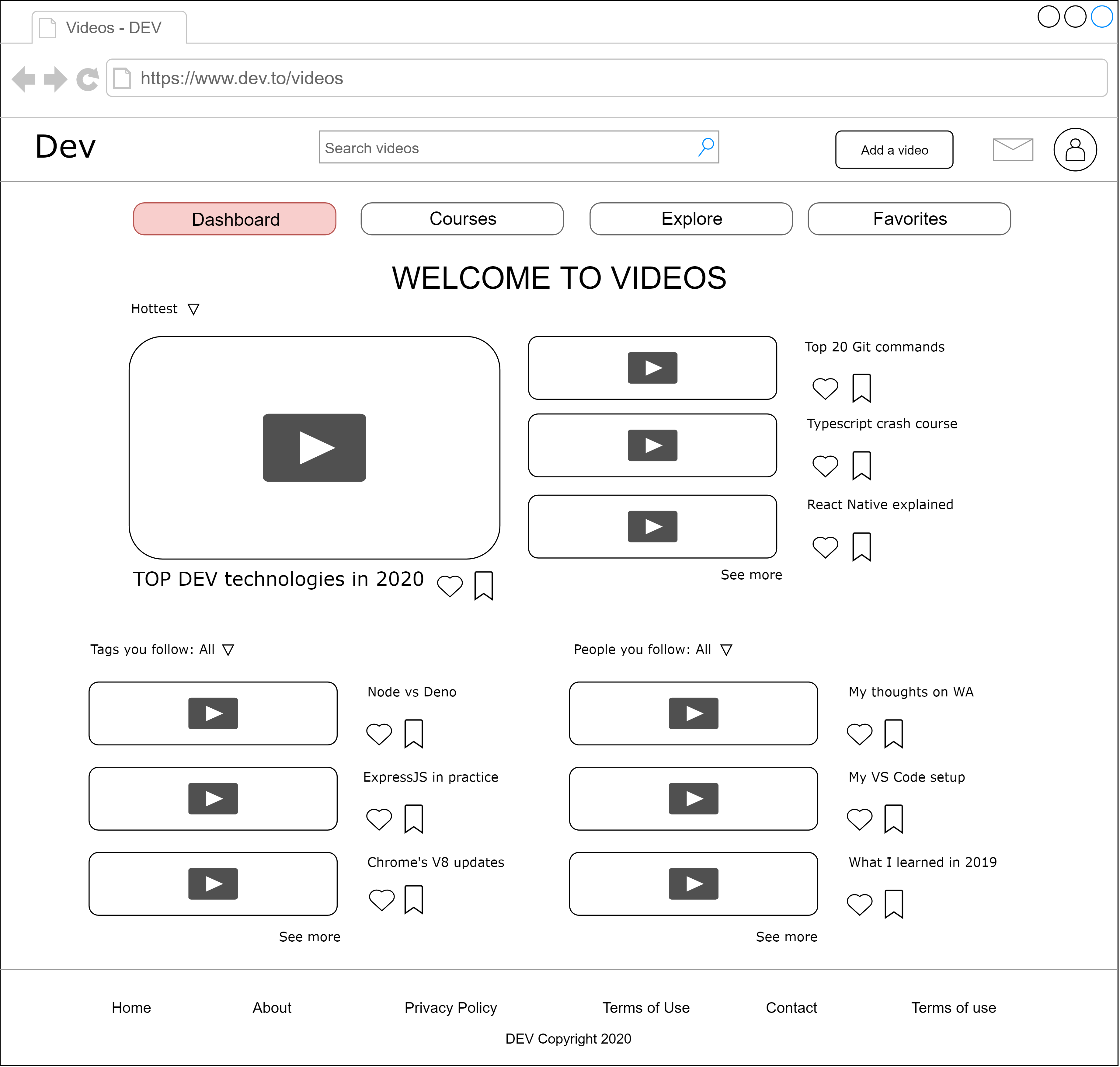

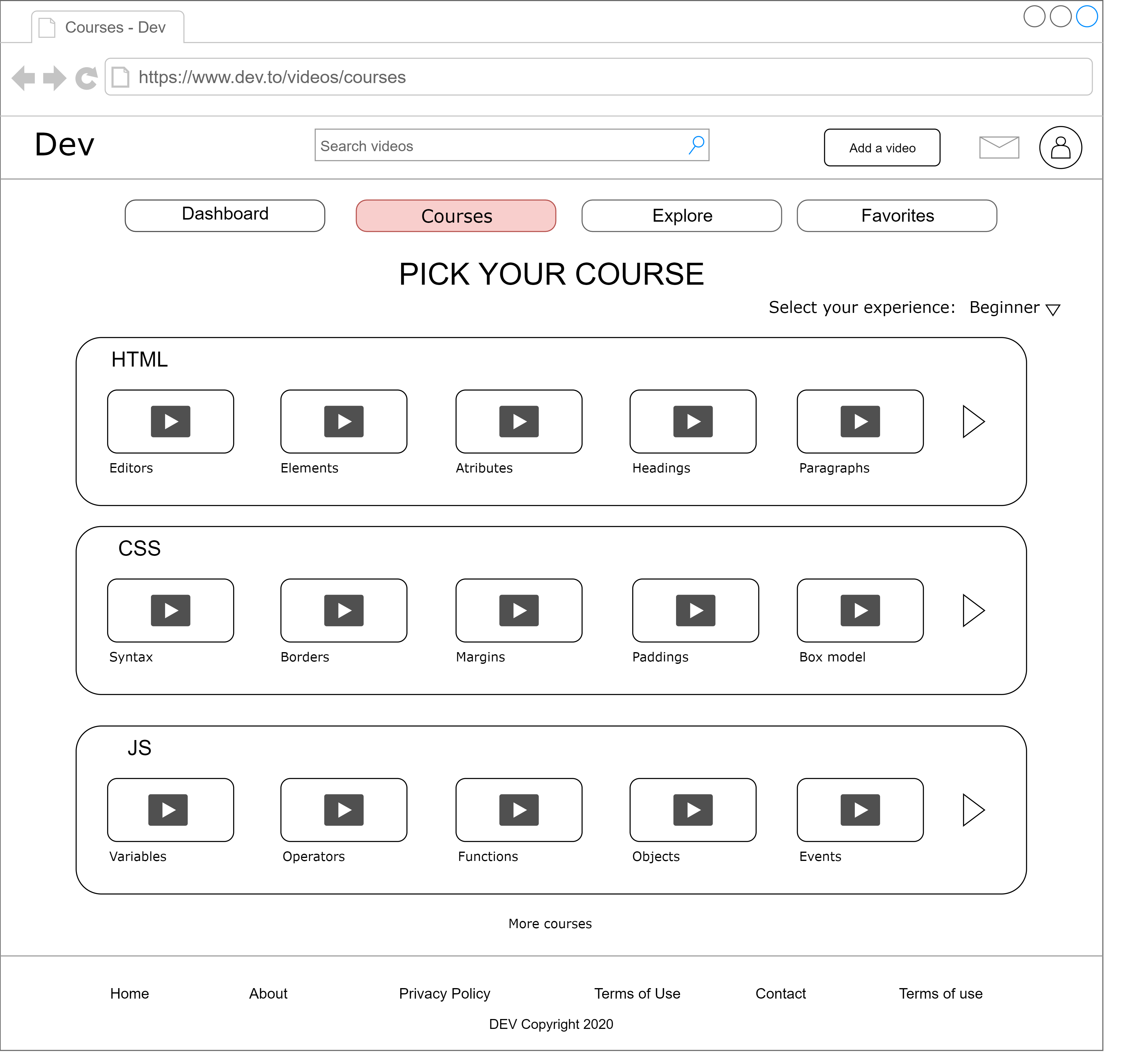

Dev.to has a separate video page dev.to/videos. It needs some more structure and layout, so I decided to do some wireframing on initial ideas to improve the particular section of site.

**1.Dashboard**

This would be the landing page of the video section. The main section would be dedicated for most curated videos. The content feed could be made more personalized by developing an algo for it. User would also be able to see the uploads on specific tags he/she has subscribed as well as from persons he/she follows. There would be an option to heart and save for later on each video.

**2.Courses**

Content in each course would be hand-picked manually. User would be able to select his/her experience level (beginner-intermediate-advanced) to select the complexity of course concepts. The content would be sorted accordingly to provide a proper learning path.

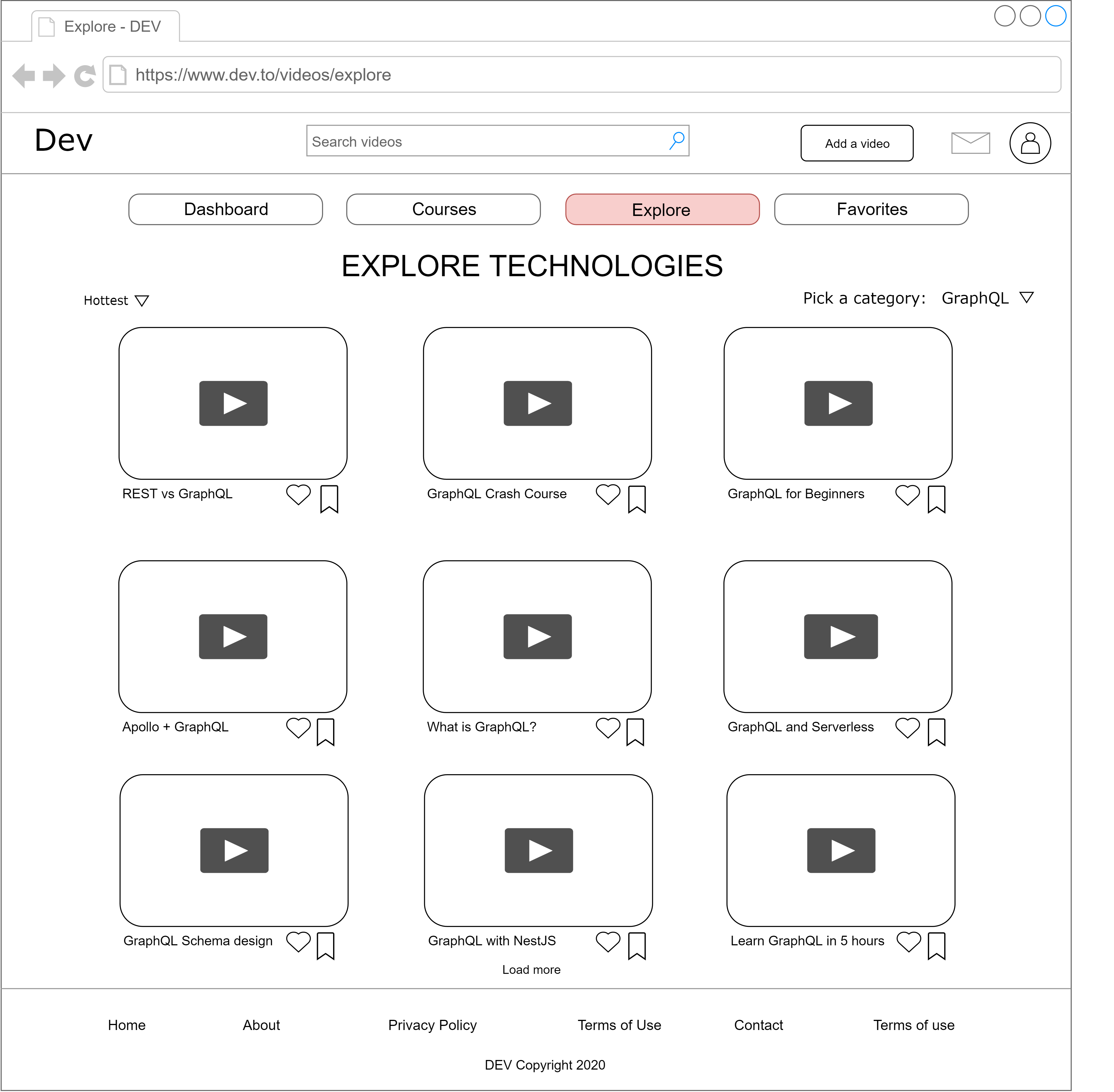

**3.Explore**

User would be able to explore the technologies of their interest (based on tags videos would get during the publish phase). There would be an option to sort displayed results based on the curates and most recent ones. There would be an option to heart and save for later as well.

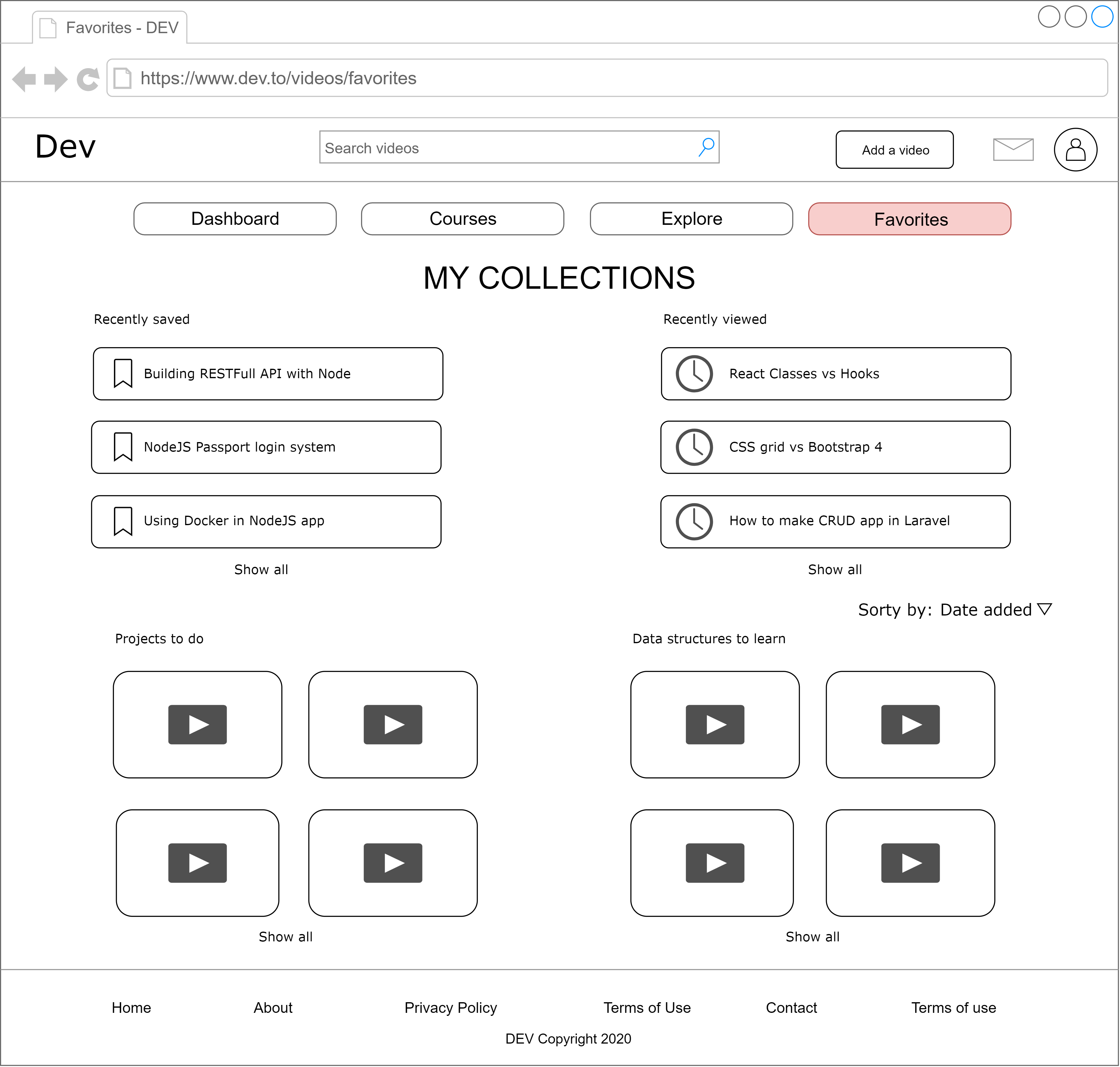

**4.Favorites**

This section would display users activities - recently watched videos and saved for later list. Furthermore user would also be able to make collections, by sorting the videos he/she has liked.

I’ve also put this in dev.to’s Github issues as a feature, where you can find better quality images.

In case if anyone wondering, I used draw.io (it's free).

| madza |

248,102 | Machine learning for logs (part 1 of 5) | In our last blog we discussed the need for Autonomous Monitoring solutions to help developers and ope... | 0 | 2020-01-24T20:48:35 | https://dev.to/gdcohen/machine-learning-for-logs-part-1-of-5-5473 | devops, machinelearning | In our [last blog](https://www.zebrium.com/blog/the-future-of-monitoring-is-autonomous) we discussed the need for Autonomous Monitoring solutions to help developers and operations users keep increasingly large and complex distributed applications up and running.

Although Autonomous Monitoring includes all three pillars of observability (metrics, traces and logs), at Zebrium we have started with logs (but stay tuned for more). This is because logs generally represent the most comprehensive source of truth during incidents, and are widely used to search for the root cause. Log management and log monitoring is also an area we feel hasn’t evolved much in the past 20 years. Most log solutions are still designed around “aggregate, index and search”. And they are mostly used reactively by skilled users who manually search for the root cause.

The main reason logging tools haven’t evolved much in the past two decades is because using Machine Learning (ML) with logs is hard. Logs are incredibly vast, noisy and mostly unstructured. To date ML work in the log space has been either purely academic, or limited to detecting basic anomalies that are both noisy and don’t easily roll up into real incidents that users need to know about.

This blog series will go into detail of how Zebrium has taken a unique approach to applying machine learning to logs, but to understand how the approach is superior, our story starts at what approaches have been tried previously.

**Machine learning for logs**

Machine Learning (ML) uses statistical models to make predictions. For monitoring logs, a useful prediction would be the ability to classify whether a particular log event, or set of events, are causing a real incident that requires action to resolve. Another useful prediction would be to correlate an incident to the root cause so users can easily rectify the issue.

In ML, usually the more data available, the more accurate the ML model will be at making predictions. This is why models usually become more accurate over time. However, this has two challenges – it leads to a long lead time to value, i.e. the system requires several days or weeks of data to serve accurate predictions and not raise false alerts (also referred to as “false positives”).

Worse, slow learning ML is actually not very useful when the behavior of the application itself keeps changing, for example because frequent updates are being deployed for each of its microservices. If the accuracy is poor, then we eventually will start ignoring the model as it will generate too many spammy alerts.

There are also two main approaches for training ML models on existing data: supervised and unsupervised. Supervised training requires a labelled data set, usually produced manually by humans, to help the model understand the cause and effect of the data. For example, we may label all log events that relate to a real incident so the model will recognize that incident again if it sees the same log events or pattern.

As you can imagine, this can take a lot of effort, especially considering the millions of potential failure modes complex software services can generate. Therefore, another approach used to train ML models, is Unsupervised training. In this approach, the model will try and figure out patterns and correlations in the data set by itself, which can then be used to serve predictions.

The challenge with using ML with logs, however, is every environment is different. Although there may be some common third-party services shared between environments (e.g. open source components like MySQL, NGinX, Kubernetes, etc.), there will likely also be custom applications that are unique to a particular environment and generating a unique stream of logs and patterns.

This means that any approach that needs to be trained on an environment’s specific data will not work unless the other environments run the same components. In addition, unless we want to invest a lot of resources and time for humans to accurately label the data, the models must be able to train unsupervised.

Another challenge, is any ML approach needs to figure out how to be accurate at predictions quickly and with limited data, to ensure the user isn’t waiting days or weeks for accurate alerts to be generated.

With these challenges in mind, we need an ML solution that can train quickly on a relatively small dataset and do this unsupervised, to ultimately generate accurate incident predictions across unique environments, and keep learning as an application continually evolves.

**Existing Approaches & Challenges**

While there have been a lot of academic papers on the subject, the approaches typically fall into two categories which are explained below:

**Generalized Algorithms**

This category refers to algorithms that have been designed to detect anomalous patterns in string-based data. Two popular models in this category are Linear Support Vector Machines (SVM) and Random Forrest.

Using SVM as an example, it classifies the probability that certain words in a log line are correlated with an incident. Some words such as “error” or “unsuccessful” may correlate with an incident and receive a higher probability score than other words such as “successful” or “connected”. The combined score of the message is used to detect an issue.

Both SVM and Random Forrest models use supervised learning for training and require a lot of data to serve accurate predictions. As we discussed earlier, unless we are only running common 3rd party software, where we can collect and label a lot of common log samples for training, this approach will not work well in new environments running bespoke custom software, as the models need to be trained on a large labelled data set from the new log samples generated by that specific environment.

These approaches also try to do anomaly detection using the raw log event messages. This may work well for individual log events but will be far too noisy to only detect real incidents. When incidents occur, we need to detect pattern changes across the entire log set, and not look for issues in individual log events.

**Deep Learning**

Deep learning is a very powerful form of ML, generally called Artificial Intelligence (AI). By training neural networks on large volumes of data, Deep Learning can find patterns in data, but generally is used with Supervised training using labeled datasets. AI has been used for hard problems such as image and speech recognition with great results.

One of the best academic papers for this approach is the Deeplog paper from the University of Utah, which uses deep learning to detect anomalies in logs. Interestingly, they have also applied ML to parse logs into event types, which is similar to Zebrium’s approach discussed later, as this significantly improves the accuracy of the anomaly detection.

The challenge with this approach again, is that it requires a large volume of data to become accurate. Which means new environments will take longer before they can serve accurate predictions, and smaller environments may never produce enough data for the model to be accurate enough.

However, unlike the statistical algorithms discussed previously, another issue with Deep Learning is it is very compute intensive to train. Many data scientists will run expensive GPU instances to train models quicker, but at significant cost. If we need to train the model on every unique environment individually, and continuously over time, this would be an extremely expensive way to detect incidents autonomously, and therefore this approach is not recommended for monitoring logs for environments running custom software.

Some vendors have trained deep learning algorithms on common 3rd party services (i.e. MySQL, NGinX etc.). This approach can work as they can take a large volume of publicly available datasets and error modes to train the model, and the trained model can be deployed to all their users. However, as no environment is only running these 3rd party services and has custom software that’s only running in that environment, this approach is limited to only discovering incidents in 3rd party services, and not the custom software running in the environment itself.

**Taking A Different Approach**

As we’ve discussed above, generalized algorithms and deep learning as has been applied up until now, have too many limitations to provide a truly autonomous, unsupervised, log monitoring solution.

Instead of applying a single approach, Zebrium has taken a multi-layer approach, first parsing the logs into normalized events, running anomaly detection across every single event, and then detecting changes in patterns that may indicate an incident is occurring.

The next blogs will go into detail on each step of this process and discuss how it helps to achieve accurate autonomous incident detection and root cause identification.

If you can’t wait for the blog series to finish, please download our white paper [here](https://www.zebrium.com/wp1?utm_campaign=Assets&utm_source=dev_to) or try our [free beta](https://www.zebrium.com/private-beta-sign-up?utm_campaign=Sign-up&utm_source=dev_to).

[Published with permission of the author David Gildeh @ Zebrium] | gdcohen |

248,122 | Introducing Pixz! Point. Shoot. Shared...Photo sharing for groups and events. | Hey everyone, I wanted to get your feedback on Pixz (pixzapp.com). It's a project I started after ret... | 0 | 2020-01-24T22:02:57 | https://dev.to/pixzapp/introducing-pixz-point-shoot-shared-photo-sharing-for-groups-and-events-5agh | showdev | Hey everyone,

I wanted to get your feedback on Pixz (pixzapp.com). It's a project I started after returning from a wedding a few months ago. I thought, wouldn't it be cool if the bride/groom could crowdsource photos from all their guests privately and instantly.

Here we are. We launch nearly a week ago. We built our app on flutter and firebase, and have gone through multiple iterations of the app.

I would love for you to check it out and would appreciate any feedback you have. If you want to join the Pixz Beta group. Here is the invite code: 1579209706055

Cheers! Michael | pixzapp |

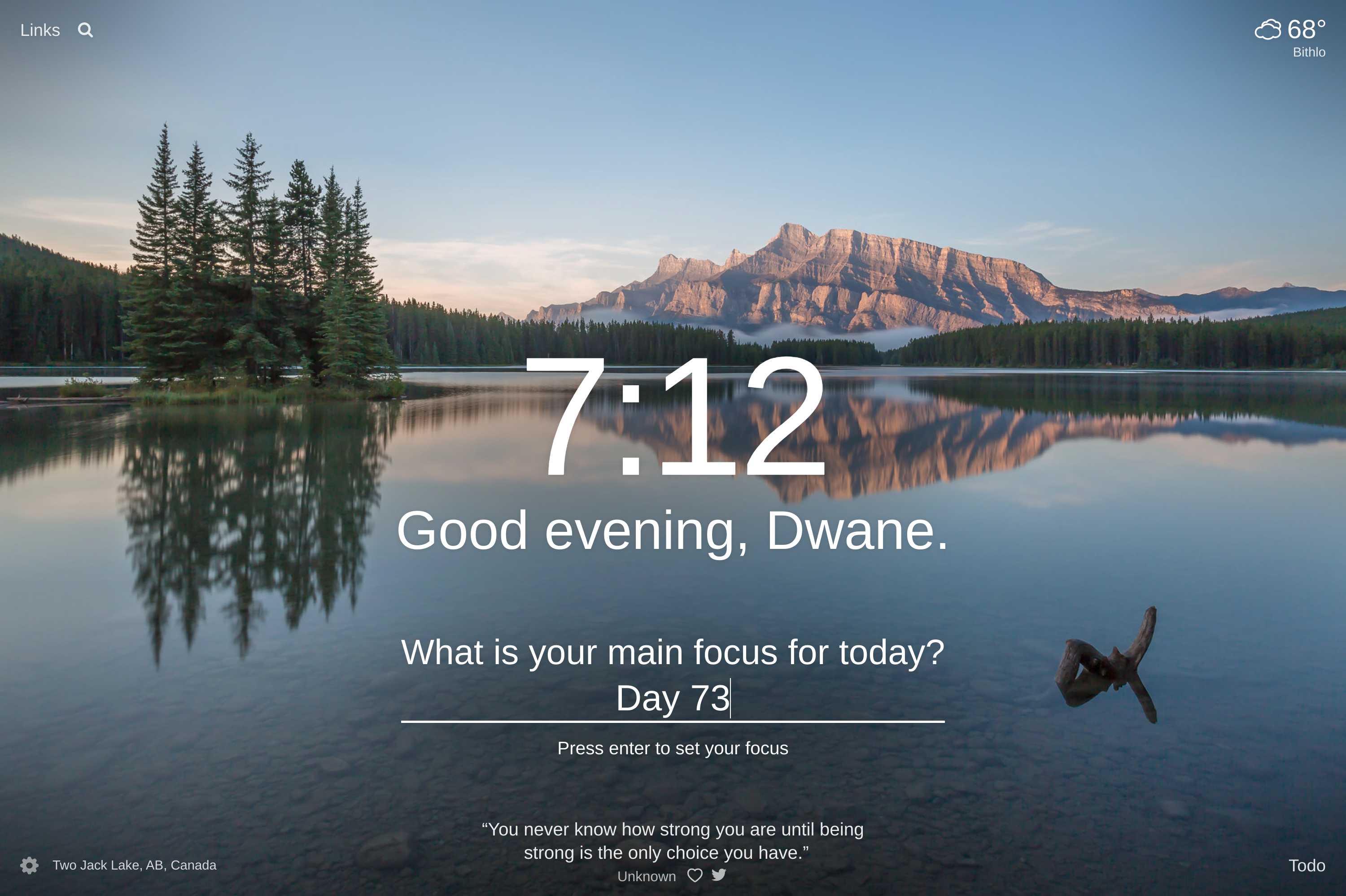

248,175 | Day 73 : Amazing | liner notes: Professional : So yeah, haven't really been keeping up with my daily blogs. haha I cou... | 0 | 2020-01-25T00:28:09 | https://dev.to/dwane/day-73-amazing-2884 | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : So yeah, haven't really been keeping up with my daily blogs. haha I could have sworn, I posted some blogs, but I guess not. To be fair, I have been on a work retreat thing for the last week and just got back today and have been sleeping. I did create a website to chronicle my adventures during that time and that can be found at https://dwane.in/PDXmiami2020

To sum it all up, it was a great time with AMAZING people! Really looking forward to the future.

Here's a pic:

(thanks to Beth for letting me use this pic)

- Personal : Got home from the work retreat, through my clothes into the washing machine and went to sleep. haha. Just got up and have been catching up on things on the internet.

For the rest of the night, I'll be working on the radio show for tomorrow and then some side projects if I have some time.

Have a great night and weekend!

peace piece

Dwane / conshus

https://dwane.io / https://HIPHOPandCODE.com

{% youtube sy8uXXreMi4 %} | dwane |

248,182 | Copy to clipboard button with Stimulus 2.0 (Beta) | Stimulus is a JavaScript framework developed by a team at Basecamp, and it aims to augment your exist... | 0 | 2020-01-28T00:46:41 | https://dev.to/david_ojeda/copy-to-clipboard-button-with-stimulus-2-0-beta-1nll | stimulus, javascript, webdev | [**Stimulus**](https://stimulusjs.org/handbook/introduction) is a JavaScript framework developed by a team at [Basecamp](https://basecamp.com/), and it aims to augment your existing HTML so things work without too much "connecting" code.

Contrary to other frameworks, Stimulus doesn't take over your front-end, so you can add it without too much hassle to your already running app.

**Its documentation is very clear and digestible**. Included in its handbook is an [example of building a clipboard functionality](https://stimulusjs.org/handbook/building-something-real), which I recommend you go through if you are trying Stimulus for the first time.

Right now we are **replicating** that functionality and adding a couple more things **using a development build** specified in this Pull Request (PR)

{% github https://github.com/stimulusjs/stimulus/pull/202 %}

It **includes new APIs that will be released with version 2.0** of the framework, so they are not yet available with the current stable production release.

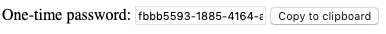

# What are we building?

A one-time password "copy to clipboard" button what wraps the DOM Clipboard API.

You can access the final working version on [Glitch](https://glitch.com/edit/#!/trapezoidal-seer):

{% glitch trapezoidal-seer %}

# Starting off

First, we are creating our base HTML where the one-time password will be and the actual button to copy it:

```html

<div>

<label>

One-time password:

<input type="text" value="fbbb5593-1885-4164-afbe-aba1b87ea748" readonly="readonly">

</label>

<button>

Copy to clipboard

</button>

</div>

```

This doesn't do anything by itself; we need to add our Stimulus controller.

# The controller definition

In Stimulus, **a controller is a JavaScript object that automatically connects to DOM elements that have certain identifiers**.

Let's define our clipboard controller. The main thing it needs to do? Grab the text on the input field and copy it to the clipboard:

```javascript

(() => {

const application = Stimulus.Application.start();

application.register("clipboard", class extends Stimulus.Controller {

// We'll get to this below

static get targets() {

return ['source']

}

copy() {

// Here goes the copy logic

}

});

})();

```

Now, this is a valid controller that doesn't do anything because it's not connected to any DOM element yet.

# Connecting the controller

Adding a `data-controller` attribute to our `div` will enable the connection:

```html

<div data-controller="clipboard">

[...]

```

Remember the `static get targets()` from above? That allows us to **access DOM elements as properties in the controller**.

Since there is already a `source` target, we can now access any DOM element with the attribute `data-clipboard-target="source"`:

```html

[...]

<input data-clipboard-target="source" type="text" value="fbbb5593-1885-4164-afbe-aba1b87ea748" readonly="readonly">

[...]

```

Also, we need the button to actually do something. We can link the "Copy to clipboard" button to the `copy` action in our controller with another identifier: `data-action="clipboard#copy"`. The HTML now looks like this:

```html

<div data-controller="clipboard">

<label>

One-time password:

<input data-clipboard-target="source" type="text" value="fbbb5593-1885-4164-afbe-aba1b87ea748" readonly="readonly">

</label>

<button data-action="clipboard#copy">

Copy to clipboard

</button>

</div>

```

Our controller is now automatically connected to the DOM, and clicking the copy button will invoke the `copy` function; let's proceed to write it.

# The copy function

This function is essentially a **wrapper of the DOM Clipboard API**. The logic goes like this:

```javascript

[...]

copy() {

this.sourceTarget.select();

document.execCommand('copy');

}

[...]

```

We take the `source` target we defined earlier, our text input that is, select its content, and use the Clipboard API to copy it to our clipboard.

At this point, **the functionality is practically done!** You can press the button and the one-time password is now available for you on your clipboard.

# Moving further

The copy button works now, but we can go further. **What if the browser doesn't support the Clipboard API or JavaScript is disabled?**

If that's the case, we are going to hide the copy button entirely.

# Checking API availability

We can check if the `copy` command is available to us by doing this:

```javascript

document.queryCommandSupported("copy")

```

One of the best places to check this is when the Stimulus controller connects to the DOM. Stimulus gives us some nice **lifecycle callbacks** so we can know when this happens.

We can create a `connect` function on our controller and it will be invoked whenever this controller connects to the DOM:

```javascript

[...]

connect() {

if (document.queryCommandSupported("copy"))

// Proceed normally

}

}

[...]

```

One way to hide/show the copy button depending on the API availability is to initially load the page with the button hidden, and then displaying it if the API is available.

To achieve this we can rely on CSS:

```css

.clipboard-button {

display: none;

}

/* Match all elements with .clipboard-button class inside the element with .clipboard--supported class */

.clipboard--supported .clipboard-button {

display: initial;

}

```

Our button is now hidden from the beginning, and will only be visible when we add the `.clipboard--supported` class to our `div`.

To do it, we modify the connect lifecycle callback.

Here is where we can start to see major differences from this latest development version. With the actual production version you would need to specify the CSS class in the controller, effectively doing this:

```javascript

[...]

connect() {

if (document.queryCommandSupported("copy"))

this.element.classList.add('clipboard--supported');

}

}

[...]

```

**There is a new, better way to achieve it.**

# Classes API

Now, **CSS classes can be actual properties of the controller**. To do so, we need to add some identifiers to our HTML and add a new array to our controller:

```html

<div data-controller="clipboard" data-clipboard-supported-class="clipboard--supported" class="clipboard">

[...]

```

```javascript

[...]

application.register("clipboard", class extends Stimulus.Controller {

[...]

static classes = ['supported']

connect() {

if (document.queryCommandSupported("copy"))

this.element.classList.add(this.supportedClass);

}

}

[...]

```

Great! Now we can access our supported class string from our controller with `this.supportedClass`. **This will help keep things loosely coupled.**

The clipboard real-life example from Stimulus' handbook ends here. Now, to show the other newest additions and use the *Classes API* once more, we're adding the following functionality:

- A new style to the "Copy to clipboard" button once it has been clicked

- A refresh interval for the one-time password. This will generate a new password every 2.5 seconds

- A data attribute to keep track of how many times the password has been generated

# Values API

This, along with the *Classes API*, is one of the new additions to Stimulus. Before this API you would need to add arbitrary values to your controller with the Data Map API, that is, adding `data-[identifier]-[variable-name]` to your DOM element, and then parsing that value in your controller.

This created boilerplate such as getters and setters with calls to `parseFloat()`, `parseInt()`, `JSON.stringify()`, etc. This is how it will work with the *Values API*:

```html

<div data-controller="clipboard" data-clipboard-supporte-class="clipboard--supported" data-clipboard-refresh-interval-value="2500" class="clipboard">

[...]

```

```javascript

[...]

application.register("clipboard", class extends Stimulus.Controller {

[...]

static values = {

refreshInterval: Number

}

connect() {

if (document.queryCommandSupported("copy"))

this.element.classList.add(this.supportedClass);

}

// Access refreshInterval value directly

this.refreshIntervalValue; // 2500

}

[...]

```

**Accessing your controller values is now cleaner since you don't need to write your getters and setters, nor do you need to parse from String to the type you need.**

Moving forward, let's write the one-time password refresh.

# Implementing password generation

We're going to define a new function to create a new random password. [I grabbed this random UUID generator snippet from the internet](https://www.arungudelli.com/tutorial/javascript/how-to-create-uuid-guid-in-javascript-with-examples/):

```javascript

([1e7]+-1e3+-4e3+-8e3+-1e11).replace(/[018]/g, c =>

(c ^ crypto.getRandomValues(new Uint8Array(1))[0] & 15 >> c / 4).toString(16));

```

Adding it to our Stimulus controller:

```javascript

connect() {

if (document.queryCommandSupported("copy"))

this.element.classList.add(this.supportedClass);

}

if(this.hasRefreshIntervalValue) {

setInterval(() => this.generateNewPassword(), this.refreshIntervalValue)

}

}

// copy function

generateNewPassword() {

this.sourceTarget.value = ([1e7]+-1e3+-4e3+-8e3+-1e11).replace(/[018]/g, c =>

(c ^ crypto.getRandomValues(new Uint8Array(1))[0] & 15 >> c / 4).toString(16));

}

[...]

```

We use `setInterval` to refresh our password text field each 2500ms since that's the value we defined in the DOM.

**Our refresh feature is now working!** Some things still missing:

- Add new style when copy button is clicked

- Keep track of how many times a password is generated

Giving all we have learned so far, this is what's need to be done:

- Add a new CSS class to the stylesheet, DOM element, and controller

- Add this new class when the button is clicked, and remove it when the password is refreshed

- Add to a counter when the password refreshes

This is how it will look at the end:

```css

/* CSS */

.clipboard-button {

display: none;

}

.clipboard--supported .clipboard-button {

display: initial;

}

.clipboard--success .clipboard-button {

background-color: palegreen;

}

```

```html

<!-- HTML -->

<div data-controller="clipboard"

data-clipboard-refresh-interval-value="2500"

data-clipboard-supported-class="clipboard--supported"

data-clipboard-success-class="clipboard--success"

data-clipboard-times-generated-value="1"

>

<label>

One-time password: <input data-clipboard-target="source" type="text" value="fbbb5593-1885-4164-afbe-aba1b87ea748" readonly="readonly">

</label>

<button data-action="clipboard#copy"

class="clipboard-button" >

Copy to Clipboard

</button>

</div>

```

```javascript

// JavaScript

(() => {

const application = Stimulus.Application.start()

application.register("clipboard", class extends Stimulus.Controller {

static get targets() {

return ['source']

}

static values = {

refreshInterval: Number,

timesGenerated: Number

}

static classes = ['supported', 'success'];

connect() {

if (document.queryCommandSupported("copy")) {

this.element.classList.add(this.supportedClass);

}

if(this.hasRefreshIntervalValue) {

setInterval(() => this.generateNewPassword(), this.refreshIntervalValue)

}

}

copy() {

this.sourceTarget.select();

document.execCommand('copy');

this.element.classList.add(this.successClass);

}

generateNewPassword() {

this.sourceTarget.value = ([1e7]+-1e3+-4e3+-8e3+-1e11).replace(/[018]/g, c =>

(c ^ crypto.getRandomValues(new Uint8Array(1))[0] & 15 >> c / 4).toString(16));

this.element.classList.remove(this.successClass);

this.timesGeneratedValue++;

}

// NEW! Read about it below

timesGeneratedValueChanged() {

if(this.timesGeneratedValue !== 0 && this.timesGeneratedValue % 3 === 0) {

console.info('You still there?');

}

}

});

})();

```

Apart from what we've already discussed about the *Values API*, there is also something new: **Value changed callbacks**.

These callbacks are called whenever a value changes, and also once when the controller is initialized. They are connected automatically given we follow the naming convention of `[valueName]ValueChanged()`.

We use it to log a message each time the password has been refreshed three times, but they can help with state management in a more complex use case.

# Wrapping up

I've created multiple Stimulus controllers for my daily job, and I must say that I always end up pleased with the results. Stimulus encourages you to keep related code together and, combined with the additional HTML markup required, ends up making your code much more readable.

If you haven't tried it yet, I highly recommend going for it! It offers a different perspective, one of magic 🧙🏻♂️.

Thanks for reading me 👋🏼.

| david_ojeda |

248,213 | Use any API in seconds with auth managed by Pipedream | Pipedream makes it easy to write Node.js and use any API in seconds! We securely manage auth for 100+... | 0 | 2020-01-25T03:25:52 | https://blog.pipedream.com/use-any-api-in-seconds-with-auth-managed-by-pipedream/ | node, javascript, github, tutorial | <p><a href="https://pipedream.com">Pipedream</a> makes it easy to write Node.js and use any API in seconds! We securely manage auth for 100+ apps (with <a href="https://github.com/PipedreamHQ/roadmap/issues?utf8=%E2%9C%93&q=is%3Aissue+is%3Aclosed+">more added daily</a>) – just connect your app accounts and use the <code>auths</code> object in code to reference tokens and keys.</p><h2 id="github-http-api">Github HTTP API</h2><p>For example, to use Github's HTTP API, just connect your account using a browser-based oauth flow and and pass <code>auths.github.access_token</code> as the value of the <code>Bearer</code> token (check out the <code>octokit</code> section below for an example of how to use <code>auths</code> with an npm package).</p><pre><code class="language-javascript">const axios = require('axios')

const response = await axios({

url: `https://api.github.com/user`,

headers: {

Authorization: `Bearer ${auths.github.oauth_access_token}`,

},

})

return response.data</code></pre><p>Here's a GIF that demonstrates how to use the Github API in 30-seconds:</p><figure class="kg-card kg-image-card"><img src="https://blog.pipedream.com/content/images/2020/01/githubdemo.gif" class="kg-image"></figure><p>In ~30-seconds, I add a code step with Github auth, paste in the code above, connect my account, query the API and inspect the results. <a href="https://pipedream.com/@pravin/github-demo-p_brCnan">Copy this template</a> to try it yourself .</p><h2 id="github-via-octokit">Github via Octokit </h2><p>You're not limited to using Pipedream managed auth with HTTP APIs – it works with npm packages too! Here's an example of the same API request as above, but using Github's <code>octokit</code> package:</p><pre><code class="language-javascript">const Octokit = require('@octokit/rest')

const octokit = new Octokit({

auth: auths.github.oauth_access_token

})

return (await octokit.users.getAuthenticated()).data</code></pre><p>Pipedream transparently installs any npm packages you require in your code when you deploy. To try it yourself, <a href="https://pipedream.com/@pravin/github-demo-p_brCnan">copy this template</a>, enable <code>steps.octokit</code>, connect your account and run the code.</p><figure class="kg-card kg-image-card"><img src="https://blog.pipedream.com/content/images/2020/01/octokitdemo.gif" class="kg-image"></figure><h2 id="scaffolding-api-auth-code">Scaffolding API + Auth Code</h2><p>Pipedream also supports actions, which are re-usable code steps. Actions provides scaffolding for popular APIs and functions. To scaffold a generic API for an app, just click the plus (+) button <strong>, </strong>select the app and choose <strong>Run Node.js with [App Name]</strong>:</p><figure class="kg-card kg-image-card"><img src="https://blog.pipedream.com/content/images/2020/01/basicscaffolding-1.gif" class="kg-image"></figure><p>To scaffold a specific API for an app, just click the plus (+) button , select the app and choose the action that you want to scaffold. Try editing the code and confirm that you want to customize it:</p><figure class="kg-card kg-image-card"><img src="https://blog.pipedream.com/content/images/2020/01/actionscaffold-1.gif" class="kg-image"></figure><h2 id="triggering-your-code">Triggering Your Code</h2><p>Trigger your code:</p><ul><li>Manually</li><li>On an interval or cron schedule</li><li>On HTTP request (Pipedream will generate a unique URL — any requests to this URL will trigger your code)</li><li>On an email (Pipedream will generate a unique email address — any emails sent to this address will trigger your code)</li><li>Via the Pipedream Node.js or Ruby SDKs</li></ul><h2 id="getting-started">Getting Started</h2><p>To get started, just sign in with your Google or Github account at <a href="https://pipedream.com">https://pipedream.com</a>. Check out the project templates at <a href="https://pipedream.com/explore">https://pipedream.com/explore</a>, and join our public Slack at <a href="https://pipedream.com/community">https://pipedream.com/community</a>!</p> | pipedream_staff |

257,264 | Importance of CEH Exam | With the emergence of security related issues across the world, the multinational organizations are l... | 4,719 | 2020-02-07T11:37:50 | https://dev.to/koenigsolutions/importance-of-ceh-exam-251n | ceh, cehexam, ethicalhacking, cybersecurity | With the emergence of security related issues across the world, the multinational organizations are looking for more number of professional and certified ethical hackers. Followed by this demand, CEH Exam is conducted to prepare and train certified ethical hackers, who can serve the MNCs for their security needs.

Importance of CEH Exam

In the digital world, breaching of security is not a much unknown phenomenon, and in order to get past these kinds of issues, one requires some special skills and training. Traditional textbooks cannot enable one with these kinds of skills, as these skills go beyond the traditional boundary of software engineering, network engineering, or mobile technologies.

How to Get Ethical hacking Certification?

The people, who can save the other from these kinds of security breaches, are called ethical hackers, and various multinational organizations recruit a large number of certified ethical hackers. These are the people, who have an in-depth knowledge of network engineering, mobile technology, database system, software engineering, and cyber law. However, anybody and everybody cannot be a specialized ethical hacker, as it requires focused training and guidance. For that reason, examinations, like CEH certification exam , are conducted for preparing an ethical hacker.

How to Prepare for the Exam?

There are several areas, which are taught and evaluated during the course of this exam. Following are those areas:

Tools and Programs

Under this category, you can learn the basic programming and scripting languages, basic and advanced network technologies, various features and background operations of operating systems, networking hardware design, antivirus technologies, security features of operating systems, and database modules.

Security Features

Under this category, you can learn the various security-related technologies required to protect a system or a network. It includes the technologies like cryptography, firewalls, validation techniques, wireless technologies, and other physical verification technologies.

Analysis

CEH certification exam aspirants should be ready to learn the basics of system analysis, the technical analysis and risk analysis of an existing system.

Regulatory Framework

For successful completion of the ethical hacking certification, it is required to know the regulatory framework regarding the existing cyber laws, cyber compliances, code of conduct, and the boundaries of a hacker.

These are the basic skills required for being an ethical hacker. Once these skills are evaluated successfully, one can earn the title of certified ethical hacker. | koenigsolutions |

257,431 | How to Fix a Typo After You've Already Pushed Your Commit | Or alternative title - How I learned to love Git rebase When I first started working with... | 0 | 2020-02-07T16:33:05 | http://bellawoo.com/blog/2020/02/02/rebase.html | git, tutorial | ### Or alternative title - How I learned to love Git rebase

When I first started working with Git, I was indoctrinated into the school of merge and was told to never rebase. Rebasing lets you re-write history. The whole point of Git is to track history. Therefore, rebasing is bad.

But there was one workflow that truly cemented my conversion and has become a regular part of my code writing process - rebasing on my active branch.

Even with linters and a spell checker extension installed in my code editor, from time to time, I'll catch a typo I've committed to git. Or I'll forget a change in a lingering file. And because the basic push workflow has become muscle memory at this point, I would push the commit before I noticed the mistake. I would fix it and do one of these...

```$ git commit -m "fix typo"```

Gross.

But we can fix this quickly with an interactive rebase!

## Fixup First

After fixing my mistakes, I'll stage the file like normal. Then instead of `-m` and the cringy message, I mark the commit with the `--fixup` option. The command expects a commit SHA-1 to attach the fix to.

```

$ git add .

$ git commit --fixup 710f0f8

```

Another neat trick is to refer to the previous commit as the parent of the current one. `HEAD~`, `HEAD^` will both work, as would `HEAD~2` to refer to the commit before last, or the grandparent of the current commit. Note that `~` and `^` [are not interchangable](https://git-scm.com/book/en/v2/Git-Tools-Revision-Selection#_ancestry_references). Git is even smart enought to be able to find the first words of a commit message.

```

# these will all fixup your commit to the previous one.

$ git commit HEAD~

$ git commit HEAD^

$ git commit :/update

```

When I run `git log`, the history will look like this:

```

f7f3f6d (HEAD) fixup! update variable name

310154e update variable name

a5f4a0d (master, origin/master, origin/HEAD) working code on master

```

## Let's rebase!

We add `-i` to run the rebase in interactive mode and supply an argument of the parent of the last commit you want to edit. I use this as a rule: add 1 to the number of commits I need to go back. Adding `--autosquash` will pick up any commits prefaced with `fixup!` and set your interactive rebase session with the commands filled in.

```

$ git rebase -i --autosquash HEAD~3

```

The result of that command will be a list of your commits in ascending order (my default opens in vim) along with the action git should apply when running the commit. Note that the last commit already has `fixup` command attached.

```

pick a5f4a0d working code on master

pick 310154e update variable name

fixup f7f3f6d fixup! update variable name

# Rebase a5f4a0d..f7f3f6d onto a5f4a0d (3 commands)

#

# Commands:

# p, pick <commit> = use commit

# r, reword <commit> = use commit, but edit the commit message

# e, edit <commit> = use commit, but stop for amending

# s, squash <commit> = use commit, but meld into previous commit

# f, fixup <commit> = like "squash", but discard this commit's log message

# x, exec <command> = run command (the rest of the line) using shell

# d, drop <commit> = remove commit

# l, label <label> = label current HEAD with a name

# t, reset <label> = reset HEAD to a label

# m, merge [-C <commit> | -c <commit>] <label> [# <oneline>]

# . create a merge commit using the original merge commit's

# . message (or the oneline, if no original merge commit was

# . specified). Use -c <commit> to reword the commit message.

#

# These lines can be re-ordered; they are executed from top to bottom.

#

# If you remove a line here THAT COMMIT WILL BE LOST.

#

# However, if you remove everything, the rebase will be aborted.

#

#

# Note that empty commits are commented out

```

At this point, if I'm doing a final clean up to push to a remote branch, I'll maybe `reword` a commit message or maybe even `squash` some extraneous commits together. It's worth having a read through the comments because this is a really powerful workflow, but `fixup` is the one I use the most.

Once you complete the rebase, your revised `git log` should have a new singular commit that has combined your fixup commit with the previous one. _*bows and accepts applause*_

```

2231360 update variable name

a5f4a0d (master, origin/master, origin/HEAD) working code on master

```

## Autosquash magic

`--autosquash` will also pick up commits with `--squash` option, but I tend to not want to keep that message, so fixup works just fine for me. Squash might be a good option if you have a significant amount of new code but want only one atomic commit.

You can also set the following git config setting to omit having to include the autosquash option every time you run an interactive rebase.

`$ git config --global rebase.autosquash true`

My setups always have this set to true, which helped make fixups and squashing commits feel like second nature since to enter a rebase session, I only have to type `git rebase -i HEAD~3` or however many commits I think I need to clean up.

## And that's how I converted to rebase!

Other helpful resources include this [git tutorial on revising history](https://git-scm.com/book/en/v2/Git-Tools-Rewriting-History). Once I digested that, I found it easier to understand the [full doc on rebase](https://git-scm.com/docs/git-rebase). | bellawoo |

257,479 | Supporting multiple configurations in Cypress | By default, Cypress will support a single configuration based on the optional file cypress.json as... | 0 | 2020-02-07T18:54:34 | http://yer.ac/blog/2020/02/07/supporting-multiple-configurations-in-cypress/ | testing, cypress, javascript, tutorial | ---

title: Supporting multiple configurations in Cypress

published: true

date: 2020-02-07 16:13:12 UTC

tags: Testing,Cypress,Javascript,tutorial

canonical_url: http://yer.ac/blog/2020/02/07/supporting-multiple-configurations-in-cypress/

---

By default, Cypress will support a single configuration based on the optional file `cypress.json` as described in their documentation [here](https://docs.cypress.io/guides/references/configuration.html).

Whilst this works fine for most, it would be great if we could have access to a `cypress.dev.json` for local development, or even better, a whole host of configuration files for use against a multi-tenant environment – for example `cypress.clientA.json`, `cypress.clientB.json` etc.

Whilst Cypress accepts a different config file during startup with the `--config-file` flag, it would be better if we could just pass the environment name through instead of the full file name and/or location, right?

### Uses for environmental variables

I personally use these environmental files to store things like:

- Base URL: Each client has its own SIT/UAT environments with different URLs

- Default username and password for test environments.

### Creating the different config files

We can create a root level folder named “Config”. Under here we can create as many files as we need to cover, for example I have `config.ClientA.json` which contains:

```

{

"baseUrl": "http://clientA.internalserver.co.uk/",

"env": {

"someVariable": "Foo"

}

}

```

And `config.ClientB.json` which contains:

```

{

"baseUrl": "http://clientB.internalserver.co.uk/",

"env": {

"someVariable": "Bar"

}

}

```

## Editing the plugin file

First we need to import “path” and “fs-extra” packages by adding the following at the top of the `index.js` file within the `/Plugins` folder (if it doesn’t already exist!). These will allow the file to be located and subsequently read.

```

const path = require("path");

const fs = require("fs-extra");

```

Next we need the method which will take in a client name/environmental variable, locate the appropriate config file (being /config/config. **name**.json), and then reading that file back to the calling method.

```

function getConfigurationFileByEnvName(env) {

const fileLocation = path.resolve("cypress/config", `config.${env}.json`);

return fs.readJson(fileLocation);

}

```

and finally we need the index.js file to export this file. This will also have a fallback in place if one is not defined.

```

module.exports = (on, config) => {

const envFile = config.env.configFile || "local";

return getConfigurationFileByEnvName(envFile);

};

```

The eagle eyed may realise that I am using `config.env.configFile` here which will mean passing an environmental flag in the command line rather than making direct use of the `--config` flag. This is personal preference, as I aim to expand on the `env` flags later so this will look cleaner.

### Consuming the configuration

Now, when running the usual open command, we can make use of the `--env` flag to pass it the environmental variable. We do so with:

`./node_modules/.bin/cypress open --env configFile=clientA`

It should now launch the test runner with your different files environmental variables available via `Cypress.env('key')`

The post [Supporting multiple configurations in Cypress](http://yer.ac/blog/2020/02/07/supporting-multiple-configurations-in-cypress/) appeared first on [yer.ac | Adventures of a developer, and other things.](http://yer.ac/blog). | yerac |

257,493 | WebScrapping articles to read offline | I don't have internet at home so I wanted to read some articles offline when I'm home.. Which approac... | 0 | 2020-02-07T17:39:26 | https://dev.to/rnrnshn/webscrapping-articles-to-read-offline-p7f | help, discuss, tutorial | I don't have internet at home so I wanted to read some articles offline when I'm home.. Which approach should I use to have those article offline?

Is there any web scrapping tutorial or tool that I should use?? | rnrnshn |

257,571 | An Intro to Elixir from a JavaScript Background | Elixir is a functional programming language that extends the Erlang language, which is an older langu... | 0 | 2020-02-08T21:00:34 | https://dev.to/ryanmoragas/an-intro-to-elixir-4k0d | beginners, elixir, productivity, javascript | Elixir is a functional programming language that extends the Erlang language, which is an older language that gained its popularity in the 80's, and is mixed with Ruby syntax. The language is fairly new, being created in 2011, and has excellent documentation. It is a functional programming language and has no classes, but instead modules with module functions. Elixir also has a mature web framework called Phoenix, which makes using it much easier when developing real world applications.

After you install Elixir you will have three new executables right out of the box, being `iex`, `elixir` and `elixirc`. If you compiled Elixir from source or are using a packaged version, you can find these inside the bin directory. For these exmaples I'll use `iex` (or `iex.bat` if you are on Windows) which stands for Interactive Elixir. In interactive mode, you can type any Elixir expression and get its result immediately in the terminal.

Before getting started it is probably best to cover the basics on Elixir, and that starts with its simple data types. There are several different types of simple data types in Elixir, being integers, floats, strings, atoms, booleans, lists, and tuples. I'll touch on each of these data types with a brief explanation of what they are.

Integers and floats are both number data types. Integers can be thought of as whole numbers, and floats are integers with decimal values. While these data types might seem similar, integers and floats are two completely different values and can never be strictly equal to each other. With that being said, you can still use integers and floats together in operations, and sometimes operations on integers will automatically produce floats.

``` ex

iex(1)> 2 + 2

4

iex(2)> 3 * 3

9

iex(3)> 3 / 3

1.0

iex(4)> 1 === 1.0

false

```

As seen from the code above, performing division on two integers will always produce a float. You can also see that even though mathematically 1 === 1.0 is true, since they are two different data types in Elixir they are not strictly equal values.

Next on the list of simple data types we have strings. All strings in Elixir must be declared using double quotes. Strings are essentially binaries converted to characters, so you can treat them the same. To append strings together, unlike using `+` in JavaScript, you use the `<>` operator. You can use simple concatenation to add strings together. You can also interpolate values but placing them into the `#{//value}` interpolation syntax.

```ex

iex(5)> intro = "hello"

"hello"

iex(6)> "#{intro} " <> "world!"

"hello world!"

```

Atoms are simple data types that will always equal themselves. The can be defined by putting a colon in front of the value. In other languages, they are sometimes called symbols. A close reference would be assigning a value to const in JavaScript, but atoms also act similar to booleans.

```ex

iex(7)> :atom === :atom

true

iex(8)> :true === true

true

iex(9)> :true === "true"

false

```

Lists are complex data types that store references to any value types in a specific order. They are defined with brackets, and very comparable to arrays in JavaScript. Any data type can be stored in a list and data is accessed in reference to the head and the tail. The head is the first value in a list and the tail is a list of all values after the head. The values in a list can be accessed with the `hd/1` and `tl/1` functions. To concatenate two lists you would use `++`, and to subtract you would use '--'.

```ex

iex(10)> list = [7, 16.6, :atom, "bird"]

[7, 16.6, :atom, "bird"]

iex(11)> list ++ ["cat", false]

[7, 16.6, :atom, "bird", "cat", false]

iex(12)> list

[7, 16.6, :atom, "bird"]

iex(13)> hd list

7

iex(14)> tl list

[16.6, :atom, "bird"]

```

Tuples are also lists of values, and defined with curly brackets. They still act more like a JavaScript array rather than an object, as the don't have key value pairs. A tuple may contain elements of different types, which are stored contiguously in memory. Accessing any element takes constant time, but modifying a tuple, which produces a shallow copy, takes linear time. Tuples are good for reading data while lists are better for traversals. Tuples are typically used either when a function has multiple return values or for error handling.

Hopefully this was a helpful first glance into Elixir. While they have some similarities, Elixir and JavaScript also have many differences. The documentation for Elixir is extremely helpful, and their website also has beginner tutorials if you'd like to learn more. | ryanmoragas |

257,579 | AES-GCM Encryption with C# | Here is example how can you use encryption with AES GCM with C#. Its currently supported in .NET Core... | 0 | 2020-02-07T21:32:52 | https://dev.to/maymeow/aes-gcm-encryption-with-c-23oi | csharp, dotnet |

Here is example how can you use encryption with AES GCM with C#. Its currently supported in .NET Core 3.0, 3.1 and .NET Standard 2.1. For .NET Framework you will need to use CBC. This code and more is awailable in my nuget package [MayMeow.Cryptography](https://www.nuget.org/packages/MayMeow.Cryptography/).

So How to encrypt data?

```csharp

public static byte[] Encrypt(byte[] toEncrypt, byte[] key, byte[] associatedData = null)

{

byte[] tag = new byte[KEY_BYTES];

byte[] nonce = new byte[NONCE_BYTES];

byte[] cipherText = new byte[toEncrypt.Length];

using (var cipher = new AesGcm(key))

{

cipher.Encrypt(nonce, toEncrypt, cipherText, tag, associatedData);

return Concat(tag, Concat(nonce, cipherText));

}

}

```

This will create byte array which looks like this

|Tag|Nonce|Encrypted Data|

|---|---|---|

|16 Bytes| 12 bytes | ...x bytes |

Everything you have to provide is key. Tag and NONCE is different for each data you vant to encrypt and its a part of array.

Function for decrypt data:

```csharp

public static byte[] Decrypt(byte[] cipherText, byte[] key, byte[] associatedData = null)

{

byte[] tag = SubArray(cipherText, 0, KEY_BYTES);

byte[] nonce = SubArray(cipherText, KEY_BYTES, NONCE_BYTES);

byte[] toDecrypt = SubArray(cipherText, KEY_BYTES + NONCE_BYTES, cipherText.Length - tag.Length - nonce.Length);

byte[] decryptedData = new byte[toDecrypt.Length];

using (var cipher = new AesGcm(key))

{

cipher.Decrypt(nonce, toDecrypt, tag, decryptedData, associatedData);

return decryptedData;

}

}

```

In those functions i using concat and subarray functions. For merging arrays together and splitting them. they are here:

```csharp

public static byte[] Concat(byte[] a, byte[] b)

{

byte[] output = new byte[a.Length + b.Length];

for (int i = 0; i < a.Length; i++)

{

output[i] = a[i];

}

for (int j = 0; j < b.Length; j ++)

{

output[a.Length + j] = b[j];

}

return output;

}

```

```csharp

public static byte[] SubArray(byte[] data, int start, int length)

{

byte[] result = new byte[length];

Array.Copy(data, start, result, 0, length);

return result;

}

```

Theese and more are part of **MayMeow.Cryptography** repository which is awailable on my [Github](https://github.com/MayMeow/MayMeow.Cryptography).

[](https://ko-fi.com/D1D5DMOTA) | maymeow |

257,747 | Peppermint OS - A Quick Review | Introduction This is a quick review of Peppermint OS 10, as I am not used to do running... | 3,426 | 2020-02-08T06:45:02 | https://dev.to/twitmyreview/peppermint-os-a-quick-review-3jg8 | productivity, ubuntu, opensource, linux |

### Introduction

This is a quick review of Peppermint OS 10, as I am not used to do running linux in a VM for my daily usage, the only thing I could do was to do a whirlwind check of Peppermint OS. My first impression from the initial flash screen and the desktop layout was that it looked right out of early 2000s desktop experience - retro by design. Running on a 4GB ram 64 bit VM, the response was snappy and reminded of early Linux OS desktops way back from 2009-10 when I mostly used as a desktop. But once you open the start menu and the applications, it was more modern and the dark theme really made me happy.

### Things I Liked

I liked the number of in-built apps and also links to web apps like Google Drive and Microsoft Word. While initially I thought it as a bloatware. After checking a tool called Ice created by Peppermint, made me like the whole concept of having Web Apps in start menu. This is one feature other light weight OS should have.

This is something I use extensively with chrome on Windows 10 and by organising websites like Gmail, Drive and Google Docs as shortcuts is very handy and I feel no need to install their desktop variants. While in Peppermint these links are opened in dedicated Firefox windows and the experience is not as clean as a Chrome dedicated window but still I feel in a real laptop or desktop this feature will be very handy.