id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

264,304 | A few clean-ups to relieve stress | [This story was originally posted on my personal blog] I've always had this itch of organizing the s... | 0 | 2020-02-19T00:14:24 | http://ismail.teamfluxion.com/diary/20200218/A_few_cleanups_to_relieve_stress | watercooler, productivity | *[This story was originally posted on [my personal blog](http://ismail.teamfluxion.com/diary/20200218/A_few_cleanups_to_relieve_stress)]*

I've always had this itch of organizing the stuff that I come across every day, sometimes to an extent that it's over-organized for me to access later. It's partly because my mind keeps on shifting its perspective of how I see (and want to see) things around me and otherwise it's something that comes straight out of me being an over-thinker for things that do not need that much of thinking. I might arrange objects in a particular way, only to completely remodel the entire space, arguably making my previous attempt to make things 'right' look like a complete waste of my productive time.

The following are a few items that I enjoy cleaning up and believe to be reducing my stress.

## To-Do list

My To-Do list keeps growing like crazy. It does help me track ideas and the things that I might need to be doing, but as it grows it tends to scare me with what's upcoming (especially the looking at the count of items in the list), often leaving my mind in a state where I find it very difficult to focus on the tasks that I currently have at hand.

To me, cleaning a To-Do list could either mean scheduling items that are still in the *unscheduled* state, better organize them by re-assign tags that make more sense to me (at that particular instant), or even deleting the items that I might have added a while back and do not seem to be worth doing or even making any sense at the time of the clean-up. And yes, it also helps me look past the mess I have in front of me.

I can discover tasks that were planned way back but got lost among other 'less important' ones. Finally, it also helps me discover the 'filler' tasks that I could potentially pick up when there's relatively nothing else to do, which I know is rarely the case, but who knows?

## Digital notes or lists

My digital list collection is another place to look for those buried lists and plans that never got converted into tasks and eventually scheduled and picked up to be worked upon. On the other hand, there's so much that does not need to be worried about or carried around anymore.

It mostly does not cost anything to keep this kind of useless data around, especially if you're on a free plan on the platform or service ([Dynalist](https://dynalist.io) in my case) that you're using to maintain these lists, but when taken out, it does help me to find what I might be looking for faster and easier.

## Web-browser bookmarks

No matter how much time I spend on my collection of web bookmarks in [Google Chrome](https://www.google.com/chrome), it doesn't take long for the tree to grow out of control and very overwhelming to look at.

Bookmarks can get accumulated quickly, especially with my pattern of web-browsing. It goes like: anything interesting that I come across and/or I feel needs more time and dedicated attention gets thrown into a subtree that I usually revisit regularly. As the second step, these deferred bookmarks get further categorized and sorted according to priority and subject. When the time finally comes to visit the web-resource it points to, it either gets deleted after sharing it with people who might find it interesting or stashed into the rest of the well-organized tree for almost forever.

My tree has been growing since I first started using Google Chrome, which is almost a decade now. Funny enough, I used to maintain an excel worksheet of internet URLs that I wanted to store as a future reference instead of saving them as 'favorites' on my [Internet Explorer](https://www.microsoft.com/en-us/download/internet-explorer.aspx) as they would otherwise be lost. As the bookmarks on my Google Chrome are associated with my account and the task of maintaining the collection for me is now taken care of by Google, it is the right place to maintain that kind of data. I recently conducted a moderately deep clean-up in my bookmarks collection and what I got from the result of the time-consuming (and arguably boring) activity is what I believe to be one of the cleanest bookmark collections I've seen to date, not that I have a habit of peeking into people's bookmarks.

## Projects kitchen

On all my computers, I have a [projects graveyard](https://dev.to/isaacandsuch/github-graveyards-ill-show-you-mine-49lh) where I perform coding experiments and most of the code residing there never makes its way out. Although some developers [share their collection](https://dev.to/peter/graveyard-groundskeepers-2886) on GitHub or other similar places, I keep mine private and call it 'kitchen' on every single workstation of mine. Most of the ideas developed and polished there get life in the form of some other public project but the mess in the kitchen tends to keep growing quickly.

Visiting these directories every once in a while and getting rid of waste helps me come across any unfinished experiments or at least helps me free some space on the tiny [SSD](https://en.wikipedia.org/wiki/Solid-state_drive) on my [MacBook Pro](https://www.apple.com/macbook-pro). Regardless, cleaning the kitchen feels great.

## Digital data on hard-drive or cloud

My digital data on the cloud tends to get out of hand as well. During my early years of using computers to store data, this kind of data used to be stored in smaller hard-drives and USB flash drives that were far easier to run out of storage capacity on. With my not-so-recent move to virtually infinite cloud-storage, space is not an issue anymore, and I expect it to be that way for at least another decade before I start running out of space again. However, re-arranging files and deleting unrequired data gives me a sense of satisfaction greater than what I achieve after refactoring a piece of code that I wrote a decade back.

## Apps, extensions, etc. from my setup

It could be unused software on my computers, apps on my phone(s) that I might have never used and forgotten about, plugins on my text-editor or even extensions on my web-browser, the list can go on. Apart from the most obvious advantage of speeding up my work environment by removing the redundant bloat that not only do I not need anymore but also do not remember the reason to exist, it makes space for new stuff to come in: think new apps, extensions or productivity tools, etc.

## More...

There's much more:

- **Email subscriptions** so that I do not need to delete multiple emails from the same sender every day as if it was a daily ritual

- **Games library** so that I don't feel bad for myself every time I come across it on discovering the huge list of games that I own but do not have time to play anymore

- **Browser autofill info** so that I have a shorter list of credentials, addresses, payment methods, etc. to choose from while filling a web form

- **Physical desk** so that I have to move lesser stuff around for being able to access other stuff

- **My car** not because it helps me in any way, but I just like doing it down to the smallest detail possible

As you might have guessed, the list does not end there.

## And beyond...

More than the amount of stress relief I tag these activities with, I also believe that they grant me some sort of a short-term productivity boost. I feel a little more focused and motivated towards doing what I'm currently doing, and that too with a fresh perspective until there's need for another round of clean-up. | myterminal |

264,328 | The most elegant debounce code you'll ever write featuring XState | Debouncing is the practice of delaying the execution of a resource or time-intensive task long enough... | 0 | 2020-02-19T02:25:00 | https://dev.to/codingdive/the-most-elegant-debounce-code-you-ll-ever-write-featuring-xstate-3hn0 | javascript, react |

Debouncing is the practice of delaying the execution of a resource or time-intensive task long enough to make the application feel very responsive. This is typically done by reducing the number of times the task is executed.

Whether you're filtering a giant list or simply want to wait a few seconds until the user has stopped typing before sending a request, chances are that you'll have to deal with debouncing one way or another especially if you happen to be a front end engineer.

I claim that handling this with the popular state management, state machine and statechart library XState is as good as it can get. Before you can convince yourself, let's quickly determine the best possible debounce user experience before translating it into code.

Good debounce logic should:

- give users **instant feedback** about what they're doing. We might want to wait a second before sending a request to the server but we do not ever want to delay the input of the user by a second as they'll either think our app is broken or their computer is lagging.

- have a way to **cancel** the resource-intensive action. Not only do we want to cancel it when the user makes another keystroke before our debounce timer has finished, but we also want the ability to cancel the queued action when changing state. For example, there is no point in filtering a giant list when we've already transitioned into a state that doesn't show the list anymore.

- allow us to set the **timed delay dynamically**. This could allow us to make the delay longer for mobile users as the average typing speed decreases.

With our optimal user experience out of the way, let's dive into the code.

Check out the [codesandbox](https://codesandbox.io/s/xstate-debounce-example-7i9mw) and read below for detailed explanations.

---

Let's write an app that displays tasty plants with the ability to filter them.

Since there are soo many tasty plants, we are expecting the server to take quite a long time. Therefore, we'll need to debounce the user input before the server starts filtering.

```javascript

const tastyPlants = [

"seeds 🌱",

"mushrooms 🍄",

"nuts 🥜",

"broccoli 🥦",

"leafy greens🥬"

];

// For the extended state of the machine, we want to store the user input and the plants to render.

const machineContext = {

input: "",

filteredTastyPlants: []

};

```

In other words, we don't want to send a server request on every keystroke; instead, we want to add a minor delay of 450 milliseconds. Also, instead of using an actual HTTP request, we are going to keep things local and just use a timeout.

The code that is responsible for performing the (fake) slow filter operation might look like this:

If you're new to asynchronous code in statecharts, you may want to check out this [blog post](https://dev.to/codingdive/state-machine-advent-asynchronous-code-in-xstate-102p) before understanding what's going on below.

```javascript

// inside our machine

apiClient: {

initial: "idle",

on: {

slowFilter: {

target: ".filtering"

}

},

states: {

idle: {},

filtering: {

invoke: {

id: "long-filter-operation",

src: (context, event) =>

new Promise(resolve =>

setTimeout(

() =>

resolve(

tastyPlants.filter(plant => plant.includes(context.input))

),

1500

)

),

onDone: {

target: "idle",

actions: assign({

filteredTastyPlants: (context, event) => event.data

})

}

}

}

}

},

```

We aren't doing anything special here just yet. We pretend that our server takes 1500 milliseconds until it completes the filtering and upon resolving, we can ultimately assign the filtered plants to our `filteredTastyPlants` context.

You might have noticed that within the `slowFilter` event, we haven't actually assigned the input to the state machine yet. As you'll see shortly, the trick to make debouncing work in XState is to use two events instead of one.

## Responsiveness

For instant feedback, which was our very first constraint, we'll define an extra event that assigns the input to the machine context. This event will also have the responsibility of sending the `slowFilter` event after a delay of 450ms. That's right. A machine can send events to itself. Let's see it in **action(s)**!

```javascript

// import { actions, assign } from 'xstate'

// const { send } = actions

// within our machine

on: {

filter: {

actions: [

assign({

input: (context, event) => event.input

}),

send("slowFilter", {

delay: 450,

});

];

}

}

```

The above code guarantees that the `slowFilter` event is called 450ms after every keystroke. Cool! In our component, we treat the `slowFilter` event like an internal event of the machine, meaning we'll only ever work with the `filter` event as seen in the example below.

```jsx

const [state, send] = useMachine(filterPlantsMachine).

return (

<input value={state.context.input} onChange={(e) => void send({type: 'filter', input: e.target.value})}>

// render state.context.filteredTastyPlants

)

```

## Cancellation

To work towards our second constraint, we now need a way to cancel the `slowFilter` event that is about to be sent. We can do so by giving the event an id, then canceling the event by the same id using the `cancel` action creator.

```javascript

// import { actions, assign } from 'xstate'

// const { send, cancel } = actions

// within our machine

on: {

filter: {

actions: [

assign({

input: (context, event) => event.input

}),

cancel('debounced-filter'),

send("slowFilter", {

delay: 450,

id: "debounced-filter"

});

];

}

}

```

Because the above code cancels and resends the `event` on every keystroke, it'll **only** be sent once the user has stopped typing for at least 450ms. Pretty elegant right? For even better readability, we can expressively name the actions.

```javascript

on: {

filter: {

actions: [

'assignInput',

'cancelSlowFilterEvent',

'sendSlowFilterEventAfterDelay'

];

}

}

// ...

// pass actions as config to the second argument of the Machine({}, {/* config goes here */}) function.

{

actions: {

assignInput: assign({

input: (context, event) => event.input,

}),

cancelSlowFilterEvent: cancel('debounced-filter'),

sendSlowFilterEventAfterDelay: send('slowFilter', {

delay: 450,

id: 'debounced-filter',

}),

},

}

```

## Dynamically set debounce delay

Last but not least, to provide the best possible user experience we may want to dynamically change the delay. To account for the typing speed decrease in words per minute when going from desktop to phone, let's only start the filtering 800ms after the last keystroke when the user is on their phone.

After adding an `isPhone` boolean to our context (we could also pass it via the event), we can use a [delay expression](https://xstate.js.org/docs/guides/delays.html#delay-expressions) to dynamically set the delay.

```javascript

sendSlowFilterEventAfterDelay: send('slowFilter', {

delay: (context, event) => context.isPhone ? 800 : 450,

id: 'debounced-filter',

}),

```

___

Let me know in the comments what you think and if you have any questions. Happy debouncing! ❤️ | codingdive |

264,332 | Cypress – code coverage reports for unit tests | One of most common test reports used is code coverage reports. And while Cypress does support them, s... | 0 | 2020-02-19T09:31:51 | https://dev.to/hi_iam_chris/cypress-code-coverage-reports-for-unit-tests-2hd2 | cypress, javascript, testing, frontend | One of most common test reports used is code coverage reports. And while Cypress does support them, setting them up can be a bit of pain. In this post, I will be explaining how to set up coverage reports for unit test. If you haven’t installed Cypress yet, you can use my [previous post](https://dev.to/chriss/cypress-initial-setup-l4) as a guide on that.

## Installation

As always, lets first start with required packages that you will need for this. Those packages are:

- @cypress/code-coverage

- babel-plugin-transform-class-properties

- instanbul-lib-coverage

- mocha@^5.2.0

- nyc

You can install all of these by executing next CLI command:

```

npm install --save-de @cypress/code-coverage babel-plugin-transform-class-properties instanbul-lib-coverage mocha@^5.2.0 nyc

```

## Babel

Because you will be importing your modules into unit test, which are probably written in ES6, you will need .babelrc file for this. While your can be different, depending on your project, following code is minimum that you will need in it.

```

// .babelrc

{

"presets": ["@babel/preset-react"],

"plugins": ["transform-class-properties", "istanbul"]

}

```

## Cypress commands

Now that you installed dependencies and set your babel configuration file, we can go into Cypress configuration. First, you will need to update cypress/support/index.js file. This change will be small, just adding one line.

```

// cypress/support/index.js

import '@cypress/code-coverage/support'

```

## Cypress plugins

Next step will be updating Cypress plugins file. Once again, very small change. You need to update cypress/plugins/index.js to contain following code.

```

// cypress/plugins/index.js

module.exports = (on, config) => {

on('task', require('@cypress/code-coverage/task'));

on('file:preprocessor', require('@cypress/code-coverage/use-babelrc'));

return config

};

```

## Adding tests

When it comes to set up, we are done. Now we can start adding tests. For this, under cypress/integration we can create new folder called unit. In this file we will keep all our unit tests. Usually, we would keep all tests along our code. If nothing, to reduce need for long dot slashes in imports. And Cypress does support keeping them in different location. However, this coverage plugin doesn’t work if tests are not inside of integration folder and just generates empty report.

## Running tests

Once our tests are written, we can run them. If we are running unit tests, it is good to run them separate from e2e tests. For that we can use also different command. That command can be following:

cypress run --spec cypress/integration/unit/*

## Generated reports

All coverage reports are generated in root of project in folder called coverage. I tried to change this location, but sadly, Cypress configuration does not work for it. Only option I was left with was either manually or creating different script that would move it to needed location.

## Wrap up

Cypress does support getting coverage reports for unit tests. But setting up, however small it is, is far from clear. There are many issues, like need for tests to be only in integration folder for it to work and lack of ability to change report output location. But I do hope this guide made it simpler and easier for you to set up.

All code example for this can be found in my [Cypress setup repository](https://github.com/kristijan-pajtasev/cypress-setup).

| hi_iam_chris |

264,348 | ¿En qué se diferencian width, max-width y min-width? | Muchas veces cuando se está aplicando CSS, te topas con estas 3 propiedades parecidas, width, max-wid... | 0 | 2020-02-19T03:26:35 | https://dev.to/thedavos/en-que-se-diferencian-width-max-width-y-min-width-497a | css, espanol, spanish | Muchas veces cuando se está aplicando CSS, te topas con estas 3 propiedades parecidas, `width`, `max-width` y `min-width`. ¿Alguna vez te confundiste en usar alguna de estas pero en verdad debisteno usar unel max-width o un min-width? Pues, a todos nos ha pasado, seguramente. En este post te explicaré qué hace cada propiedad y en qué se diferencian con las demás. Pues, comencemos.

## Sobre width

El más simple de los tres. Esta propiedad es la que define un ancho específico a un elemento. Sin esta, el elemento o bloque, si es que no tiene texto o algún contenido dentro, no podría ser visualizado por el usuario.

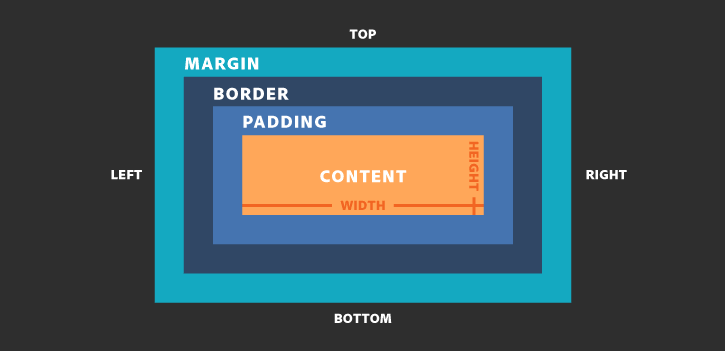

En términos del modelo de caja de CSS. El width vendría a definir al contenido del elemento. Lo que vendŕia a ser el **Content** en la imagen. Sin embargo, cuando se coloca a un elemento la propiedad `box-sizing` y se ponga como valor `border-box`, el width vendría a definir el **Content**, **Padding** y el **Border**.

Por el lado del Responsive Design, esta propiedad no puede adaptarse por sí mismo a los diferentes tamaños de otros dispositivos o si la pantalla hace un cambio de tamaño. Para esto, necesita respaldarse de otras propiedades.

Como se ve en el vídeo de abajo, un elemento no puede adaptarse al ancho de la pantalla con tan solo la propiedad width, para esto veremos las otras dos propiedades que si pueden manejar esto.

## Sobre max-width

Según en la página de [MDN](https://developer.mozilla.org/es/docs/Web/CSS/max-width), esta propiedad se define como:

> La propiedad de CSS `max-width` coloca el máximo ancho a un elemento. Además, impide que el valor de `width` sea más largo que el valor especificado en `max-width` .

Esto quiere decir que cuando el valor que nosotros especifiquemos en width sea mayor que max-width, este último sobrescribirá el ancho del elemento al valor que tiene max-width. Así max-width define el ancho máximo que un elemento puede tener.

Tal como se ve en el vídeo/gif de la parte inferior, max-width cambia el tamaño del elemento si el valor de width es mayor que el de max-width. Sin embargo, si el width es menor entonces max-width no tendría que actualizar el tamaño ya que no se alcanzado el tamaño máximo del elemento.

Por el lado del Responsive Design, max-width es aplicado en los media queries. Si no sabes qué son los media queries, te sugiero leer este [post](https://www.arsys.es/blog/programacion/diseno-web/media-queries-css3/). Entonces, max-width en un media query aplica estilos definidos en un determinado ancho de pantalla. Este ancho de pantalla parte desde 0 hasta lo que se haya colocado en max-width. Pasado este ancho máximo todos los estilos que se hayan puesto dejan de aplicarse en la pantalla.

```css

@media only screen and (max-width: 600px) {

body {

background-color: lightblue; /* Aplicará solo desde 0 hasta 600px */

}

}

/* Pasados los 600px, el background deja de ser lightblue */

```

## Sobre min-width

A diferencia de max-width que define el tamaño máximo, min-width define el mínimo tamaño que un elemento puede tener.

Por ejemplo, en el vídeo/gif inferior. Primero se intenta hacer un resize sin min-width. Esto disminuye el ancho del elemento hasta el mínimo posible, haciendo que el elemento se distorsione. Luego, se coloca un valor al min-width del elemento. Con min-width ya puesto se comienza el resize. Sin embargo, a diferencia de la anterior vez, el elemento no se distorsiono a su máximo valor ya que tiene un ancho mínimo especificado. Esta propiedad permite que el contenido que tenga un elemento, no se vea comprometido cuando se haga un resize en la pantalla o si este es de otros dispositivos con diferentes tamaños.

Por el lado del Responsive Design, si en un media query se especifica el min-width, entonces todos los estilos especificados serán aplicados a partir del min-width hacia adelante.

```css

@media only screen and (min-width: 800px) {

.box {

background-color: red; /* Aplicará a partir desde los 800px hacia adelante */

}

}

```

## Conclusiones

A pesar que algunos elementos del desarrollo web se parezcan, es importante no frustrarse de ello, calmarse y aprender. Aprender a diferenciar y ver la utilidad de tus herramientas puede ser clave al momento de aplicar CSS o implementar un diseño responsivo a un sitio web, inclusive puede ahorrarte líneas de código y abrirte las puertas a otros conocimientos, como el patrón de diseño Mobile First. Pero, sobre todo a ser una mejor desarrolladora o desarrollador. | thedavos |

264,351 | I published my first Ember Addon | Announcing an Ember addon for Fullcalendar 4 | 0 | 2020-02-19T03:53:33 | https://welchcanavan.com/ember-fullcalendar | javascript, ember, opensource | ---

title: I published my first Ember Addon

description: Announcing an Ember addon for Fullcalendar 4

published: true

tags: javascript, ember, opensource

canonical_url: https://welchcanavan.com/ember-fullcalendar

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/jyjz25xdurtml5xquutn.jpg

---

_Originally published at [welchcanavan.com](https://xiw.cx/2wqxO3b)_

**TL;DR**: _a new [ember-fullcalendar](https://github.com/Mariana-Tek/ember-fullcalendar) addon based on Fullcalendar v4 is released this week. Here is some of my process and experience._

## Backstory

My day job is working on a large [SaaS](https://en.wikipedia.org/wiki/Software_as_a_service) application at [Mariana Tek](https://marianatek.com/). We help boutique gyms with their business operations, which means our application serves a lot of purposes: point of sale, time clock, class scheduler, and a lot more. Having to write all this functionality from scratch would be difficult if not impossible, so I'm grateful for plugins in general and Ember's painless [addon](https://guides.emberjs.com/release/addons-and-dependencies/) system in particular.

While our application is on an earlier version Ember 3, we're in the process of a long walk to the exciting [Ember Octane](https://blog.emberjs.com/2019/12/20/octane-is-here.html). Part of this process has been gradually updating our addons. Our application, which is over four years old, had accrued some addons that are now abandoned. Most of these were easy to address, with one big exception - [Fullcalendar](https://fullcalendar.io/). Fullcalendar is a fantastic tool that allows the user to provide a fully-featured scheduling calendar (e.g. Google Calendar) with minimal code. The Ember Fullcalendar addon hadn't been updated in over a year and I really didn't want to write a calendar from the ground up. The writing was on the wall; I was going to have to write my first Ember addon.

## Well, not quite...

In actuality, my first inclination was to see if I could chip in with the _current_ Ember Fullcalendar plugin, but I found that Fullcalendar had done a major upgrade moving from [version 3 to version 4](https://fullcalendar.io/docs/upgrading-from-v3); having removed [jQuery](https://jquery.com/) and [Moment](https://momentjs.com/) as dependencies, and updated their API. If I wanted to fully get rid of jQuery as a dependency in our app it seemed I would have start from scratch.

## Inspiration

If I couldn't improve the current addon or use it as a guide for my new addon then what could I look at? For the Ember side of things I went to [Ember Observer](https://emberobserver.com/), a great resource for finding quality addons. I read through some of the popular addons that provide a similar service (bindings to a popular Javascript UI utility). This provided some hints and ideas for structure, but I still needed some ideas for the Fullcalendar side of things. I thought I'd take a peek at the [`fullcalendar-react`](https://github.com/fullcalendar/fullcalendar-react) package and was pleasantly surprised to find that it barely breaks fifty lines of code.

`fullcalendar-react` provided the idea to avoid explicit bindings for each property or attribute and simply batch updates and pass them along to Fullcalendar. `componentDidMount()` becomes `didInsertElement()`, while `componentDidUpdate()` becomes `didUpdateAttrs()`. This makes the addon easier to maintain as I can upgrade its Fullcalendar dependency version with minimal updates to the addon's code. The Ember best practice of [Data Down, Actions Up](https://dockyard.com/blog/2015/10/14/best-practices-data-down-actions-up) aligns well with React's philosophy and if you account for differences in each framework's API many patterns are easily transferable.

## Try it out

The addon can be installed using `ember install @mariana-tek/ember-fullcalendar`. You can find further instruction in the [documentation](https://github.com/Mariana-Tek/ember-fullcalendar#mariana-tekember-fullcalendar).

Please feel free to check out the [source code](https://github.com/Mariana-Tek/ember-fullcalendar/blob/master/addon/components/full-calendar.js) or [report an issue](https://github.com/Mariana-Tek/ember-fullcalendar/issues). Enjoy!

### Acknowledgement

Thanks to my employer for the support in making this! If you think you'd like to work on a kind and supportive team that talks Ember, React, and—above all—Javascript, please [check us out](https://marianatek.com/careers).

| xiwcx |

264,437 | A brief look into code quality | An intro to clean code | 0 | 2020-02-19T06:22:08 | https://dev.to/princessanjana1996/a-brief-look-into-code-quality-o7e | codequality, javascript, cleancode | ---

title: A brief look into code quality

published: true

description: An intro to clean code

tags: code-quality, javascript, clean-code

cover_image: https://anjanak.com/content/images/size/w2000/2020/02/coding-computer-data-depth-of-field-577585.jpg

---

When we are writing code, it should be human-readable and clean. This article is about some basics of writing readable and clean code.

###Syntax

Now let’s discuss these syntax and best practices in detail.

###Curly Braces

```

If (condition) {

// do this

// do this

}

```

In JavaScript language curly braces are written with the opening bracket on the same line not on a new line and there should be a space before the opening curly bracket as above example.

- Curly braces are not needed for a single-line construct.

```

if (i <= 0) {alert(`It should not be ${i}`);}

```

- If it is short, we can use it without curly braces.

```

if (i <= 0) return null;

```

- Don’t split to a separate line without braces.

```

if (i <= 0)

alert(`It should not be ${i}`);

```

- The best way. This is usually more readable.

```

if (i <= 0) {

alert(`It should not be ${i}`);

}

```

###Semicolon

The majority of developers put semicolons after each statement but it is optional and rarely used. In JavaScript there are problems where a line break is not interpreted as a semicolon, going the code vulnerable to errors.

```

let sum = a + b;

```

###Indents

- Horizontal indents

2 or 4 spaces( 4 spaces = key Tab). For instance, to align the arguments with the opening bracket.

```

function fruit(banana,

mango,

avocado

) {

}

```

- Vertical indents

Function can be divided into logical blocks. In the below example appears three vertically split. First one is initialization of variables, the second one main loop and the third one returning the result.

```

function sum(a) {

let total = 0;

while(a > 0) {

total += a;

}

return total;

}

```

If we want to make the code more readable we can add extra newline.

###Line Length

Don’t write long horizontal lines of code because no one prefers to read it. Back tick quotes(``) help to split the long string into multiple lines.

```

let pra = `

Lorem ipsum dolor sit amet, consectetur adipiscing elit.

Integer eu convallis sem.

Praesent in facilisis ligula.

Curabitur iaculis metus lacus, vitae dapibus odio iaculis vitae.

Morbi imperdiet ultricies tortor ac dignissim.

Quisque at mi a purus dignissim tincidunt eu eu ipsum.

Phasellus pharetra vitae neque id fermentum.

`;

```

Same as the if statement. For example,

```

if (

id === 10001 &&

name === 'Anjana Kumari' &&

address === 'Kandy'

) {

login();

}

```

The maximum line length is usually 80 or 120 characters but it will depend upon the team-level.

###Nesting Level

If we can use `continue` directive, we can easily avoid extra nesting. For example,

```

while (i < 10) {

if (!condition) continue;

// do that

}

```

###Style Guides

Style guides includes how to write code. If we work as a team we can use the same style guide. Then the code can see uniform. Also teams can use their own style guide too. The popular style guides are Google JavaScript Style Guide and StandardJS etc.

###Automated Linters

Automated Linter tools can automatically detect the style of our code and make suggestions. Also, the linter tools can detect typos in variable names and function names. Some of the popular linting tools are JSLint, JSHint, and ESHint.

###Summary

Carly Braces, Semicolons, Indents, Line Length and Nesting Levels rules described aim to increase the readability of our code.

We should always think about writing better code. There are two things to keep mind when we write codes. First one is what makes the code more readable and easier to understand? And the second one is what can help us avoid errors?

Finally, we should be up to date with reading the most popular style guides.

Original post in my [personal blog](https://anjanak.com/a-brief-look-into-code-quality/) | princessanjana1996 |

264,452 | PHPMyAdmin – count(): Parameter must be an array or an object that implements Countable | Edit file /usr/share/phpmyadmin/libraries/sql.lib.php: sudo nano /usr/share/phpmyadmin/libraries/sql... | 0 | 2020-02-19T07:09:13 | https://dev.to/sushmagangolu/phpmyadmin-count-parameter-must-be-an-array-or-an-object-that-implements-countable-ndb | ubuntu, php, phpmyadmin | Edit file <code>/usr/share/phpmyadmin/libraries/sql.lib.php:</code>

<code>sudo nano /usr/share/phpmyadmin/libraries/sql.lib.php</code>

On line 613 the count function always evaluates to true since there is no closing parenthesis after $analyzed_sql_results['select_expr']. Making the below replacements resolves this, then you will need to delete the last closing parenthesis on line 614, as it’s now an extra parenthesis.

Replace:

<code>

((empty($analyzed_sql_results['select_expr']))

|| (count($analyzed_sql_results['select_expr'] == 1)

&& ($analyzed_sql_results['select_expr'][0] == '*')))</code>

With:

<code>((empty($analyzed_sql_results['select_expr']))

|| (count($analyzed_sql_results['select_expr']) == 1)

&& ($analyzed_sql_results['select_expr'][0] == '*'))</code>

Restart the server apache:

<code>sudo service apache2 restart</code> | sushmagangolu |

264,479 | 4 Go-To Apps For Revision and Exam Prep | After so many weeks of writing essays, reports, term papers, and other academic assignments, it is hi... | 0 | 2020-02-19T08:27:23 | https://dev.to/jessywhite/4-go-to-apps-for-revision-and-exam-prep-5b05 | After so many weeks of writing essays, reports, term papers, and other academic assignments, it is high time to start revising for exams. This period of student life is really tough, especially for those who were not serious enough with the classroom activities. Students who didn’t take notes during lectures have much trouble preparing for the exams. As a rule, their memory isn’t sharp enough to keep in mind all the details their professor said. Learners struggle a lot before their exams. They have to spend long hours in libraries reading a pile of sources and doing thorough research. Don’t want to be among those students who have their hands full? Benefit from the most popular academic help apps that will make revision more effective and less stressful. Download them to your mobile phone and revise for college exams like a pro.

Study Blue

If you are a fan of flashcards, consider a study application Study Blue. It is a free app, so you can download it to your device and get quick help from the crowdsourced library of materials. This app can be used to make and share flashcards, to add audio and images to your study materials, to check the knowledge of the subject taking tests and keeping progress. After downloading this app, you’ll have access to an impressive library of materials for studying and helpful guides, created by students.

SpeedyPaper Adviser

One more useful application for all students is SpeedyPaper Adviser. This writing app will help you boost your grades and prepare for exams more effectively. Having SpeedyPaper Adviser on your phone, you will get unlimited access to the huge database of college and university papers. You will be able to have a look at samples of various academic assignments. Find necessary essays, reports, research papers, course works, and boost your grades. Students will know how to create, to structure, and to cite assignments. The customer support is available round-the-clock, so if you have trouble finding a required paper, you may contact managers and ask all your questions. Numerous positive reviews about this app prove that it is really great and worth giving a try. To know about all the features, download an app on your handy or visit their website.

Gojimo

This application was created to help students have a smooth revision. Undergraduates can use Gojimo both online and on mobile devices. An app contains helpful content for learners and some quizzes. You can select an exam you want to revise for, and it goes with a list of questions you may be asked. If you haven’t found a required topic, you can get in touch with the support via instant messaging.

Quizlet

It is one more helpful application for students who are going to prepare for exams. It is free, easy to use, and is a good choice for self-study. Having Quizlet on your device, you can learn with the help of flashcards, share them with your peers, learn foreign languages and get more info about coding, mathematics, science, history, and other college subjects. A lot of students benefit from Quizlet and leave their positive reviews about this study app.

https://speedypaper.app

| jessywhite | |

264,503 | How to Login 10.0.0.1 IP Address | How to connect your Comcast router login with Ip address 10.0.0.0.1, we describe it in very short. Fi... | 0 | 2020-02-19T09:11:11 | https://dev.to/routingkings/how-to-login-10-0-0-1-ip-address-4ida |

How to connect your Comcast router login with Ip address 10.0.0.0.1, we describe it in very short. First of all, write proper Ip address 10.0.0.0.1 in your address bar then enter the default username and password provided by default access gateway when you are purchasing your modem first time. Then you can maintain your admin panel, you can also change your password now you are getting connected with the internet. https://10-0-0-0-1.tech/

| routingkings | |

264,552 | Automatically detect secrets in your internal repos | At GitGuardian, we’ve been monitoring every single commit pushed to public GitHub since July... | 0 | 2020-02-19T10:44:19 | https://blog.gitguardian.com/product-launch-automated-secrets-detection-for-your-internal-repositories-now-widely-available/ | showdev, security, devops | #At GitGuardian, we’ve been monitoring every single commit pushed to public GitHub since July 2017. 2.5 years later, we’ve sent over 500k alerts to developers.

API keys, database connection strings, private keys, certificates, usernames and passwords, … As organizations embrace the power of cloud architectures, SaaS integrations and microservices, developers handle increasing amounts of sensitive information, more than ever before.

To add to that, companies are pushing for shorter release cycles to keep up with the competition, developers have many technologies to master, and the complexity of enforcing good security practices increases with the size of the organization, the number of repositories, the number of developer teams and their geographies…

As a result, secrets are spreading across organizations, particularly within the source code. This pain is so huge that it was even conceptualized under the name *“secret sprawl”.*

**After months of product iteration with security teams and developers, we’re now proud to officially introduce GitGuardian for internal repositories!**

##Credentials in private repositories: how much should you care?

Secrets stored in Version Control Systems is the current state of the world, yet VCSs are not a suitable place to store secrets for the following reasons:

* Everyone who has access to the source code has access to the secrets it contains. This often includes too many developers. It would just take a single compromised developer’s account to compromise all the secrets they have access to!

* You never know where your source code is going to end up. Because of the very nature of the git protocol, versioned code is made to be cloned in multiple places. It could end up on a compromised workstation, be inadvertently exposed on public GitHub, or released to customers.

Storing secrets in source code is a bit like storing unencrypted credit card numbers, or usernames and passwords in a Google Doc shared within the organization: good friends would not let you do this!

##As a developer or security professional, what should I do after a secret was pushed to a centralized version control?

*Every time I see a secret pushed to the git server, I consider it compromised...From one developer to another :)*

When a secret reaches centralized version control, it is always a good practice to revoke it. At this point, depending on the size of your organization, remediating is often a shared responsibility between Development, Operations and Application Security teams.

Indeed, you might need some special rights and approval to revoke the secret, some secrets might be harder to revoke than others, plus you must make sure that the secret is properly rotated and redistributed without impacting your running systems.

Apart from that, depending on your organization’s policies, you might want to clean your git history as well. This will require a ‘git push --force’, which comes with some risks as well, so there is definitely a tradeoff to consider, with no correct answer!

(Hint: if your secret is buried deep in your code, [BFG Repo-Cleaner](https://rtyley.github.io/bfg-repo-cleaner/) is a great Open Source project to help you get rid of it without having to use the intimidating ‘git-filter-branch’ command. Plus it is in Scala! We have [Roberto Tyley](https://github.com/rtyley) to thank for this.)

##When should I do secret detection?

With the nature of git comes a unique challenge: whereas most security vulnerabilities only have the potential to express themselves in the actual (and deployed) version of your source code, old commits can contain valid secrets, including deleted secrets that subsequently went unnoticed during code reviews.

First, you want to make sure that you start on a clean basis by scanning existing code repositories in depth.

Then, you want to continuously scan all incremental changes, ie every new commit in every branch of every repository.

When to do incremental scanning?

In his presentation about [“Improving your Security Posture with the Cloud”](https://speakerdeck.com/sebsto/automatisez-la-securite-de-vos-architectures-cloud-avec-le-devsecops-99e065c2-256d-45d4-8d7d-5552204622b2?slide=2), [Sébastien Stormacq](https://www.linkedin.com/in/sebastienstormacq/), Developer Evangelist @ AWS, advocates to implement security checks post-event in every case, and pre-event when possible.

We at GitGuardian share Sébastien's views. You should always implement automated secrets detection server side, in your CI/CD for example or via a native integration with GitHub / GitLab / Bitbucket repositories. Also, it is good to encourage your fellow developers to implement pre-commit hooks, but we often hear that this is hardly scalable across an entire organization.

##Try it out!

Our product will allow you to scan existing code as well as incremental changes, and benefit from secrets detection algorithms that were battle-tested at scale on the whole public GitHub activity for over two years! GitGuardian has a native integration with GitHub (GitLab and Bitbucket coming soon), and there is an on prem version available.

We offer a free version of our solution for individual developers and Open Source organizations, as well as a free trial for companies that you can access in SaaS here:

[https://dashboard.gitguardian.com/auth/signup](https://dashboard.gitguardian.com/auth/signup?utm_source=devto&utm_medium=referral&utm_campaign=prm_launch).

| cuireuncroco |

264,568 | 🎨 Micro Frontends, Chrome 80, JavaScript, DevPad and More — Weekly Picks #115 | List of most popular articles from Daily this week. | 0 | 2020-02-19T15:00:47 | https://dev.to/dailydotdev/micro-frontends-chrome-80-javascript-devpad-and-more-weekly-picks-115-76o | webdev, javascript, codenewbie, react | ---

title: 🎨 Micro Frontends, Chrome 80, JavaScript, DevPad and More — Weekly Picks #115

published: true

description: List of most popular articles from Daily this week.

tags: #webdev #javascript #codenewbie #react

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/aejk77j9kfymk3wldtxq.jpg

---

We are back again with the weekly picks of the last week. This time, the topics include micro frontends, exciting new updates in Chrome, JS libraries, and a new product — devpad.io. So, let's jump into the list. ⚡️

## 1️⃣ Introduction to micro frontends architecture

With time, new architectural solutions have been introduced for the frontend. We have moved from monolith to frontend/back and now microservices. This post describes micro frontend architecture in detail, including its perks, and why you should use it. 🌟

* [Introduction to micro frontends architecture](https://app.dailynow.co/r/83874844b5b6662a50e89ed4721f6b3e)

## 2️⃣ Chrome 80 Released

Chrome team at Google has recently launched Chrome 80. **It brings some exciting new features to life, including but not limited to Modules in Service Workers, Optional Chaining in JavaScript, and New Cookie Policy**. Learn about these features to utilize in your future projects. 💯

* [Chrome 80 Released](https://app.dailynow.co/r/a6d2eb5c0d289a66745b5c140080d2e5)

## 3️⃣ 5 Practices to Eliminate Bad Code

Writing a clean and modular with a good architecture is the key to its long term maintenance. But it takes time and experience to start writing such kind of code. This post at DZone describes a few ways to get avoid Bad Code. 🤞

* [5 Practices to Eliminate Bad Code](https://app.dailynow.co/r/27454ff1bf656d237cfdc651a1660c66)

## 4️⃣ 10 Top Javascript Libraries and Tools for an Awesome UI/UX

The best thing about JavaScript is that you can find a library to do almost everything. But the **problem is to stay up-to-date with all new libraries and pick the best one for your project**. This post list 10 such libraries that you can utilize to level up your UI/UX game. 💯

* [10 Top Javascript Libraries and Tools for an Awesome UI/UX](https://app.dailynow.co/r/d51a89160d16a02df723b21cc64bfdfe)

## 5️⃣ 36 most popular JavaScript repositories on GitHub in January 2020

⚛️ **The best way to stay updated in any technology is to find the community and see what others are doing**. Keeping an eye on the GitHub trends is one way to do it. This post list 36 most popular JS repositories from GitHub in Jan. 2020 that you can take a look at to see what other JS devs are up to.

* [36 most popular JavaScript repositories on GitHub in January 2020](https://app.dailynow.co/r/cf277be3f2331356f02723a4367d46b4)

## 6️⃣ 11 Useful Online Tools for Frontend Developers

🚀 From my experience, I can tell you this post list precisely the best tools that you must use as a frontend developer. They will not only help you save time but also become better at what you do. All the beginners out there, you should definitely check this post.

* [11 Useful Online Tools for Frontend Developers](https://app.dailynow.co/r/41ba1f305cf7afd2efc2f9d1ff6cfbe0)

## 7️⃣ Prettier is a Must-Have for Large-Scale Projects: Spent 20 Minutes Setting It Up and Forgot About Formatting for a Year

There's no doubt in the fact that you should use a code formatting tool like prettier to save time and keep the coding style consistent. **This post lists a real-world project example and describes how prettier helped them save time and improve their codebase.** 🎨

* [Prettier is a Must-Have for Large-Scale Projects: Spent 20 Minutes Setting It Up and Forgot About Formatting for a Year](https://app.dailynow.co/r/d156d403470b168e2648f84b4aacb37c)

## 8️⃣ 13 of the Best React JavaScript Frameworks

Instead of JavaScript frameworks, it lists the useful React related tools that help you build better React applications. I kind of find this title a little misleading. But the **post describes quite a useful list of tools that you should be aware of as a React developer**. 🌟

* [13 of the Best React JavaScript Frameworks](https://app.dailynow.co/r/8f9967980993cf86fac1549c704e74b7)

## 9️⃣ devpad.io — Devpad.io helps conduct the coding interview

🎯 Devpad.io is a tool that helps recruiters to improve the coding interview flow. According to their ProductHunt page, it is described as:

>Devpad.io helps conduct the coding interview, sending testing projects to the candidates and watch how they review the code. Using devpad.io in your recruiting process you save time and money. You can do more for less.

* [devpad.io — Devpad.io helps conduct the coding interview](https://app.dailynow.co/r/130588aa09ea62d5ee7aa6d62ece2b3a)

## 🔟 Learn p5.js by Making Fractals

The best way to learn a technology is to usually build something using it. p5.js is a JavaScript library that allows you to draw things on the canvas. In this post, Ben shows how he learned p5.js by making fractals using it. 🔥

* [Learn p5.js by Making Fractals](https://app.dailynow.co/r/6c5ed4165deb89caa3464683a75fc9b0)

## 🙌 Wrap Up!

This was all from the previous week. Let's know what you think of the trends this week in the comments below.

We will come back with another list of interesting development posts next week. Till then, peace! ✌️

<hr/>

<center> 👋 Follow us on [Twitter](https://r.dailynow.co/twitter) to stay up-to-date! </center>

_[Thanks to Daily](https://r.dailynow.co/web), developers can focus on code instead of searching for news. Get immediate access to all these posts and much more just by opening a new tab._

[](https://r.dailynow.co/web) | saqibameen |

264,575 | Angular - Custom Webpack Config to use SvgDocGen plugin | Hello everyone, in last time I've posted webpack plugin for generating demo and variables of your spr... | 0 | 2020-02-21T09:11:15 | https://dev.to/fasosnql/angular-custom-webpack-config-to-use-svgdocgen-plugin-3j34 | webpack, javascript, angular, tutorial | Hello everyone, in last time I've posted [webpack plugin for generating demo and variables of your sprites.svg](https://dev.to/fasosnql/svg-doc-generator-based-on-sprites-svg-1ao6) file. In this post I would like to show you how to add custom webpack configuration to your Angular project to use some plugins - in this article [SVG Doc Generator](https://github.com/Fasosnql/svg-doc-gen).

I will be working with angular project generated by `angular-cli` so to go through it with me you can just generate simple app `ng new app-name`.

When we have generated angular app we have to install some builders to build this app with additional webpack config. So let's install:

```bash

npm install @angular-builders/custom-webpack @angular-builders/dev-server --save-dev

```

Now we have to update `angular.json` to use installed builders.

```json

"architect": {

"build": {

"builder": "@angular-builders/custom-webpack:browser",

"options": {

"customWebpackConfig": {

"path": "./webpack.extends.js",

"replaceDuplicatePlugins": true

},

```

as you can see name of my webpack config is `webpack.extends.js` you can use whatever name you want.

Dump below we have to change builder for `ng serve`

```json

"serve": {

"builder": "@angular-builders/custom-webpack:dev-server",

```

In root directory according to `path` which we added to angular.json we have to create `webpack.extends.js` file. It is a file where we will be keeping our additional webpack configuration. For now let's just export empty object:

```js

module.exports = {};

```

Perfect, we've updated builders and added custom webpack config to Angular app. Everything is working so let's go to next step and let's add some custom plugin.

## Adding Custom Webpack Plugin

As I mentioned above I'm gonna add [SVG Doc Generator](https://github.com/Fasosnql/svg-doc-gen). plugin. According to instruction we have to install it via npm

```bash

npm install --save-dev svg-doc-gen

```

Great, now we can configure plugin in our `webpack.extends.js` file. But before it I will add my sprites.svg file to generate styles and demo html based on this. My SVG file looks like:

```xml

<svg version="1.1"

xmlns="http://www.w3.org/2000/svg"

xmlns:xlink="http://www.w3.org/1999/xlink"

viewBox="0 0 24 24">

<defs>

<style>

svg.sprites {

display: inline;

}

svg {

display: none;

}

svg:target {

display: inline;

}

svg[id^="primary-blue/"] use {

fill: #047BC1;

}

svg[id^="primary-white/"] use {

fill: #fefefe;

}

svg[id^="black/"] use {

fill: #000000;

}

svg[id^="gray/"] use {

fill: #AAAAAA;

}

svg[id^="green/"] use {

fill: #197F86;

}

svg[id^="orange/"] use {

fill: #C3561A;

}

svg[id^="red/"] use {

fill: #B21F24;

}

svg[id^="secondary-blue/"] {

fill: #002B49 !important;

}

svg[id^="white/"] {

fill: #FFFFFF;

}

</style>

<g id="def-icon1.svg">

<path d="M17 10.5V7c0-.55-.45-1-1-1H4c-.55 0-1 .45-1 1v10c0 .55.45 1 1 1h12c.55 0 1-.45 1-1v-3.5l4 4v-11l-4 4z"/>

<path d="M0 0h24v24H0z" fill="none"/>

</g>

<g id="def-icon2.svg">

<path d="M15.41 16.59L10.83 12l4.58-4.59L14 6l-6 6 6 6 1.41-1.41z"/><path fill="none" d="M0 0h24v24H0V0z"/>

</g>

<g id="def-icon3.svg">

<path d="M8.59 16.59L13.17 12 8.59 7.41 10 6l6 6-6 6-1.41-1.41z"/><path fill="none" d="M0 0h24v24H0V0z"/>

</g>

<g id="def-icon4.svg">

<path d="M0 0h24v24H0z" fill="none"/><path d="M12 2C6.48 2 2 6.48 2 12s4.48 10 10 10 10-4.48 10-10S17.52 2 12 2zm-2 15l-5-5 1.41-1.41L10 14.17l7.59-7.59L19 8l-9 9z"/>

</g>

</defs>

<!--

@SVGDoc

name: Icon1

variable: icon1

-->

<svg id="icon1.svg">

<use xlink:href="#def-icon1.svg"/>

</svg>

<!--

@SVGDoc

name: Icon2

variable: icon2

-->

<svg id="icon2.svg">

<use xlink:href="#def-icon2.svg"/>

</svg>

<!--

@SVGDoc

name: Icon3

variable: icon3

-->

<svg id="icon2.svg">

<use xlink:href="#def-icon2.svg"/>

</svg>

<!--

@SVGDoc

name: Icon4

variable: icon4

-->

<svg id="icon4.svg">

<use xlink:href="#def-icon4.svg"/>

</svg>

</svg>

```

As you see for every icon I've added `@SVGDocGen` comment according to plugin documentation. Now we can configure plugin,

Inside `webpack.extends.js` we have to add:

```js

const path = require('path');

const SVGDocGen = require('svg-doc-gen');

module.exports = {

plugins: [

new SVGDocGen({

svgDefsPath: path.resolve(__dirname, 'src/assets/sprites.svg'),

stylesConfig: {

outputPath: path.resolve(__dirname, 'src/assets/styles/svg-vars.scss'),

styleLang: 'scss',

svgPathInFile: 'assets/sprites.svg'

},

htmlConfig: {

outputPath: path.resolve(__dirname, 'src/assets/svg-demo/index.html')

}

})

]

};

```

This is configuration which is taking `sprites.svg` file from `assets` folder and generating variables and demo html with icons to `assets` folder.

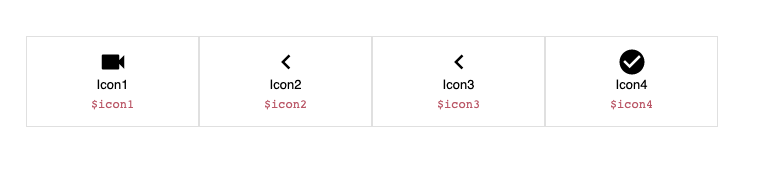

Now when you run `ng serve` or `ng build` according to path if you open `src/assets/svg-demo/index.html` you should see that output:

And `src/assets/styles/svg-vars.scss`:

```scss

$icon1: "assets/sprites.svg#icon1.svg";

$icon2: "assets/sprites.svg#icon2.svg";

$icon3: "assets/sprites.svg#icon2.svg";

$icon4: "assets/sprites.svg#icon4.svg";

```

Ok perfect, at the end we can just import our vars to `styles.scss` to get usage of generated variables. I.e:

```scss

@import "./assets/styles/svg-vars";

.icon1 {

background: url($icon1);

background-size: 100%;

width: 2rem;

height: 2rem;

}

```

That's all from me, If you have some questions feel free to add comments below this article. If you have some problems with configuring let's download this [zip](https://srv-file9.gofile.io/download/8APrqi/zip-svg.zip) package (this is working angular app generated for this article) and compare your code with code from this pack. | fasosnql |

264,606 | How to create own utility methods for your Rails application | Hello, As a developer, I need from time to time to quickly execute SQL, or check something in DB,... | 0 | 2020-02-19T15:33:43 | https://dev.to/igorkasyanchuk/how-to-create-own-utility-methods-for-your-rails-application-40lm | ruby, rails | ---

title: How to create own utility methods for your Rails application

published: true

description:

tags: ruby, rails

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/rlt9ue05pl4bspkxgetr.png

---

Hello,

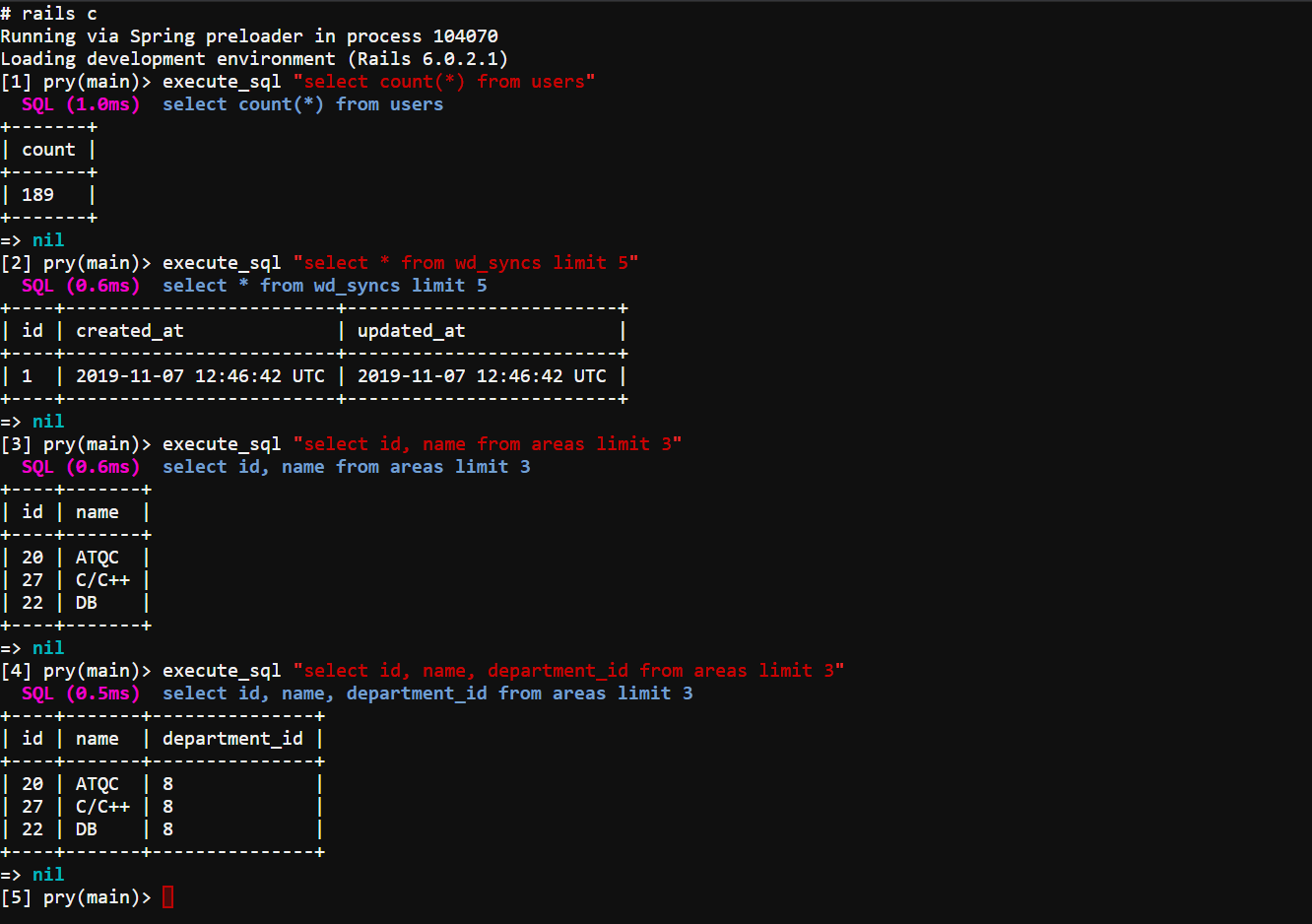

As a developer, I need from time to time to quickly execute SQL, or check something in DB, truncate a table, etc when I'm in the rails console.

You may already know about using `ActiveRecord::Base.connection.execute("SQL query")` solution, but I must admit I'm a lazy developer when it comes to doing routine tasks or things where I need to type long pieces of code. And actually a snippet of code above is not everything that you need to type, you may also need to add `.to_a` to see the result of the query.

I know the following rule:

## laziness is the engine of progress!

So, I tried to solve my issue as a developer and create an open-source solution to use it from project to project: https://github.com/igorkasyanchuk/execute_sql

This is a demo of how it works:

I'll explain how it works.

Step of creation gem I'll skip, but basically you need to create a new gem (`rails plugin new <name>`).

Now we need to understand how to add our own method just in the rails console.

```ruby

module ExecuteSql

class Railtie < ::Rails::Railtie

console do

TOPLEVEL_BINDING.eval('self').extend ExecuteSql::ConsoleMethods

end

end

end

```

This is a piece of code from my gem. You can see that Rails provides a helper method `console` where you can pass the code which will be executed when you starting `rails c`.

In our case this is `TOPLEVEL_BINDING.eval('self').extend ExecuteSql::ConsoleMethods`.

In case you have wondered, what this top-level constant TOPLEVEL_BINDING is all about better to check sample of usage below:

```ruby

a = 42

p binding.local_variable_defined?(:a) # => true

p TOPLEVEL_BINDING.local_variable_defined?(:a) # => true

def example_method

p binding.local_variable_defined?(:a) # => false

p TOPLEVEL_BINDING.local_variable_defined?(:a) # => true

end

example_method

```

More details about `TOPLEVEL_BINDING` you can find [here](https://idiosyncratic-ruby.com/44-top-level-binding.html).

```

[11] pry(main)> TOPLEVEL_BINDING.eval('self')

=> main

[12] pry(main)> TOPLEVEL_BINDING

=> #<Binding:0x0000000001350b00>

```

As you can see TOPLEVEL_BINDING.eval('self') is basically the context of our console app.

So the last thing for us to do is to extend the `main` app with our class methods from `ExecuteSql::ConsoleMethods` module, source code is [here](https://github.com/igorkasyanchuk/execute_sql/blob/master/lib/execute_sql.rb).

This module inside is responsible for queries DB, and basically I took most of the code from my other gem [rails_db](https://github.com/igorkasyanchuk/rails_db) and printing results using [terminal-table](https://github.com/tj/terminal-table) gem.

Now my life is easier :)

And I hope you can implement your own utility methods. | igorkasyanchuk |

272,294 | How to become Good back-end developer? | Here some point to be noted being a good back-end developer . 1.Read the official documentation care... | 0 | 2020-03-02T16:22:05 | https://dev.to/w3codeblog/how-to-become-good-back-end-developer-1620 | php, laravel, devops, career | Here some point to be noted being a good back-end developer .

1.Read the official documentation carefully of language .

2.Each topic are important for being a good back-end dev.

3.If any bug makes you unhappy take a short break than thin about problem it will help you to resolve bug .

4.Check your syntax twice and than run program .

5.Always find bug from initial to advance .

Thanks . | w3codeblog |

272,364 | Normalize your complex JS objects | The process of normalization its havily used in every software design because normalizing data has a big impact on reducing data redunancy. | 0 | 2020-03-02T18:29:34 | https://dev.to/danielpdev/normalize-your-complex-js-objects-21d9 | javascript, normalization | ---

title: Normalize your complex JS objects

published: true

description: The process of normalization its havily used in every software design because normalizing data has a big impact on reducing data redunancy.

tags: javascript, normalization

---

## Data normalization

The process of normalization its havily used in every software design because normalizing data has a big impact on reducing data redunancy.

## When to normalize your data?

Suppose we received the following data from an api:

```ts

const apiData = [

{

id: 1,

name: "Daniel Popa",

siteUrl: "danielpdev.io"

},

{

id: 2,

name: "Other Name",

siteUrl: "danielpdev.io"

}

];

```

Now, you get a task to find the item with id of 1.

### How will you solve it?

#### 1. Dummy solution with complexity O(n):

Iterate over the whole collection using a `find` and output the result.

```ts

const findId = (apiData, id) => apiData.find(el => el.id === id);

```

You finished quite fast and gone for a coffee, but over the next few months the data grows and now you don't have only two elements,

but 10000. Your time of searching for elements will increase considerabily.

#### 2. Normalized solution with complexity O(1):

Transform data from [objects] => { id: object}:

```ts

const apiData = [

{

id: 1,

name: "Daniel Popa",

siteUrl: "danielpdev.io"

},

{

id: 2,

name: "Other Name",

siteUrl: "danielpdev.io"

}

];

function assignBy(key) {

return (data, item) => {

data[item[key]] = item;

return data;

}

}

const optimizedData = apiData.reduce(assignBy("id"), {});

```

optimizedData variable looks like the following:

```ts

{

"1": {

"id": 1,

"name": "Daniel Popa",

"siteUrl": "danielpdev.io"

},

"2": {

"id": 2,

"name": "Other Name",

"siteUrl": "danielpdev.io"

}

}

```

Now, searching for an element becomes really easy. Just `optimizedData[id]` and your data is ready.

### Conclusion:

Normalize your data only when dealing with complex objects and searching for objects takes long.

Article first posted on [danielpdev.io](https://danielpdev.io/normalize-your-complex-js-data/)

[Follow me on twitter](https://twitter.com/danielpdev) | danielpdev |

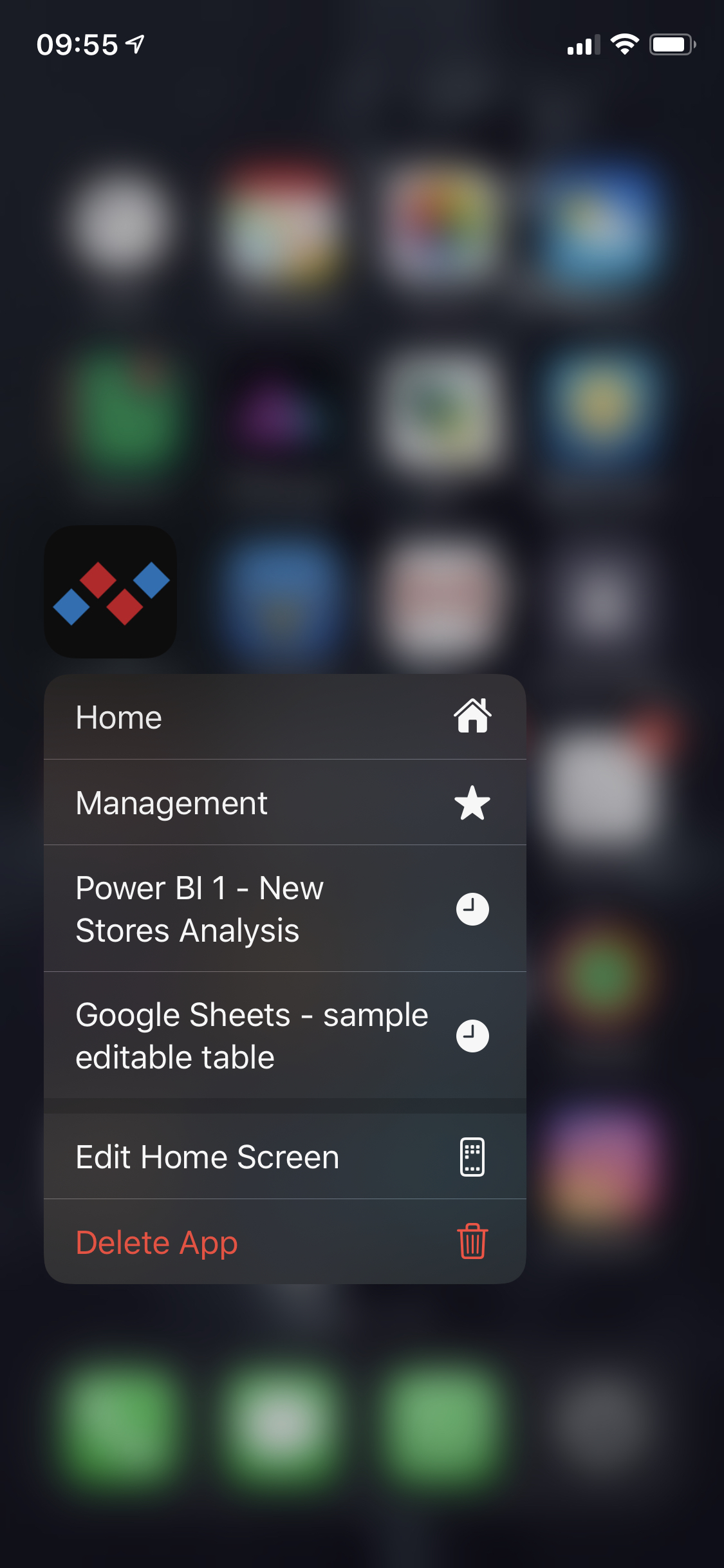

272,409 | iOS: How to add Quick Actions (shortcuts) to your app icon | iOS Quick Actions are meant as a shortcut for your users. They are triggered by long-pressing on the... | 0 | 2020-03-02T20:20:29 | https://dev.to/nemecek_f/ios-how-to-add-quick-actions-shortcuts-to-your-app-icon-ol5 | ios, swift | iOS Quick Actions are meant as a shortcut for your users. They are triggered by long-pressing on the app icon. Each app can present up to four of them and they can be nice touch you can add with not that much a code.

We can have static actions that are defined in `Info.plist` or dynamic actions which are much more versatile. Let’s focus on the dynamic bunch. 🙂

### Providing Quick Actions

We can use the class `UIApplicationShortcutItem` to define one shortcut item and then set all the items we want when app is moving to background.

For example this is one item from one of my recent apps to open management section:

```swift

UIApplicationShortcutItem(type: "OpenManagement", localizedTitle: "Management", localizedSubtitle: nil, icon: UIApplicationShortcutIcon(type: .favorite), userInfo: nil)

```

The `type` is used to differentiate the shortcut when user selects it. `localizedTitle` is the text on the shortcut and you can choose from variety of prepared icons. Here `.favorite` results in filled star. You can also provide your own.

There is also `userInfo` to provide dictionary with additional details.

### Activating Quick Actions

Let’s use `AppDelegate` to activate the shortcut item shown above in the `applicationWillResignActive` method:

```swift

func applicationWillResignActive(_ application: UIApplication) {

let managementShortcut = UIApplicationShortcutItem(type: "OpenManagement", localizedTitle: "Management", localizedSubtitle: nil, icon: UIApplicationShortcutIcon(type: .favorite), userInfo: nil)

application.shortcutItems = [managementShortcut]

}

```

The first part is done. Long-pressing on app icon will show this new Quick Action.

## Responding to Quick Actions

There are two places in `AppDelegate` where we have to respond to Quick Action selection. One is the startup method `didFinishLaunchingWithOptions` where we can check the `launchOptions` like so:

```swift

if let shortCut = launchOptions?[UIApplication.LaunchOptionsKey.shortcutItem] as? UIApplicationShortcutItem {

// handle shortCut here

}

```

And then there is also dedicated method called when the app is already running in the background:

```swift

func application(_ application: UIApplication, performActionFor shortcutItem: UIApplicationShortcutItem, completionHandler: @escaping (Bool) -> Void) {

print(shortcutItem.type)

// handle shortcutItem here

}

```

I would recommend creating helper method for handling shortcut items so you don’t have to duplicate logic in those two places.

Thanks for reading!

_Is anything not clear? Do you want more information? Ask in the comments and I will do my best to help you._

Here is Quick Actions example from the Dashboardy app:

| nemecek_f |

272,514 | Am I a “real” Software Engineer yet? | Am I a “real” Software Engineer yet? This question has haunted me for years. And it seems I’m not al... | 0 | 2020-03-04T18:31:46 | https://medium.com/free-code-camp/am-i-a-real-software-engineer-yet-a0f38a2248c0 | career, firstyearincode, motivation, inclusion | *Am I a “real” Software Engineer yet?*

This question has haunted me for years. And it seems I’m not alone. Many people experience this same insecurity. They desire to know if they’ve *made it. *Are they finally *good enough?*

While “Software Engineer” is the standard title handed out by employers, many in the software community have different standards. And to new programmers joining the field, especially those without CS degrees, it can feel like the title is safe-guarded. Only bestowed on the select that have proven themselves.

Many people refer to this sense of deficiency as **Impostor Syndrome**, though it goes by many names. Developers experience it in different ways and have differing reactions. To some, it can be crippling, but others might not notice it whatsoever.

In this article, I’m going to recount my own ordeals with Impostor Syndrome. While I don’t pretend to have a cure, I hope to shed some more light on the topic and help others who are dealing with it.

**Note:** All the comments I’ve shared are real comments I’ve found on the internet. Not all of them were directed towards me, but I’ve heard similar remarks. I’m sure you have too.

## When it all began

on [Unsplash](https://unsplash.com/search/photos/begin?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText)](https://cdn-images-1.medium.com/max/5356/1*jUoIve_Ff3QUjRsRsL_S2g.jpeg)*Photo by [Danielle MacInnes](https://unsplash.com/photos/IuLgi9PWETU?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText) on [Unsplash](https://unsplash.com/search/photos/begin?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText)*

For me, it started in July of 2016. I had started studying web development as a New Years Resolution. I wasn’t satisfied with my career at the time and was looking for a change. A common origin story that I’m sure many people can relate to.

I focused on front-end development as I had heard that HTML, CSS and JavaScript were easy to pick up. I spent my evenings after work parsing through Treehouse, Lynda, and Codecademy tutorials. The first three months of 2016 passed by like this. By April, my notebook was full of thorough notes and my GitHub contained a few static sites. But I wasn’t satisfied. I wanted to sink my teeth into a larger project.

That was when I decided to [create a portfolio site for my wife](https://dev.to/sunnyb/a-tale-of-two-websites-4b15), who was a product designer. For my skill level at the time, it wasn’t an easy undertaking. I struggled a lot and it took the better part of four months to complete.

It’s important to note that while working on my wife’s website, I did my best to surround myself with tech culture. I watched YouTube videos, listened to podcasts, and read blog posts from experienced engineers to keep myself motivated. I daydreamed what it would be like to stand in their shoes. To have a job at a tech company, work on the latest technology, and write “Software Engineer” as my **Occupation** on tax forms. [Silicon Valley](https://en.wikipedia.org/wiki/Silicon_Valley_(TV_series)) made it all look so glorious.

That’s why I couldn’t have been happier when my wife’s website went live in July of that year. I had done it. I had made my first real website that was going to receive real traffic. Could I finally start calling myself something of a *Software Engineer*?

## “Web development isn’t real programming”

Not according to the Internet:

*“being a fully competent and complete software is much more … ”*

](https://cdn-images-1.medium.com/max/4252/1*RwHFP6lwmeA6Ki7phIq-Kg.jpeg)*“Web development is not real programming” — Read more [here](http://joequery.me/code/the-self-hating-web-developer/)*

“Web development isn’t real programming”, “JavaScript isn’t a real programming language”, and “a front-end developer isn’t a Software Engineer” were all phrases I heard often. While I was determined to change careers, I was also self-conscious. I couldn’t shake the thought that the naysayers were right.

If I’d had a Plan B or a stable career to fall back on, I might’ve given up right then and there. Fortunately, I didn’t. For better or worse, I had no other career or skills to fall back on and I had already invested too much time. So I trudged on, with the [sunk cost](https://en.wikipedia.org/wiki/Sunk_cost) keeping me afloat.

I spent the next 18 months studying software development full time. I quit my job and moved in with my in-laws — which was a journey in-and-of itself. I put everything I had into my career move. I studied Ruby, Node, and some Go and built some small web apps.

By January of 2018, it had been two years since the start of my career switch and I had learned a great deal. I knew the fundamentals, I could program in multiple languages, and I had started contributing to open-source projects. I also had a [decent portfolio](https://dev.to/sunnyb/building-conclave-a-decentralized-real-time-collaborative-text-editor-1jl0) to show for it. I was excited to finally call myself a Software Engineer.

“Not so fast,” said the Internet.

*“software engineer” after 1–2 years? yeah no.”*

*“If you don’t have a software engineering degree you’re not a real software engineer. ... You only know how to do some code.”*

At this point, I had dealt with many doubters. While it still didn’t feel great to hear criticism, I had learned to ignore it for the most part. Furthermore, I had an Ace up my sleeve. There was one final step I could accomplish to put all the doubts to rest: Getting a job.

Yes, a software engineering job. The holy grail. I would have the title, the paycheck, and the acclaim. No more self-doubt. Only deserved validation from an employer.

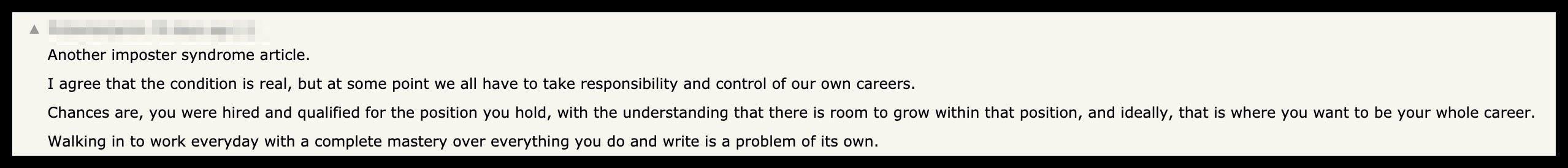

[And that’s what I did](https://hackernoon.com/what-it-took-to-land-my-dream-software-engineering-job-17c01240266b). I started interviewing in early 2018 and signed my first offer letter by March of 2018. My official role was “Software Engineer”. That was that, the hunt for acceptance was finally over.

## From bad to worse

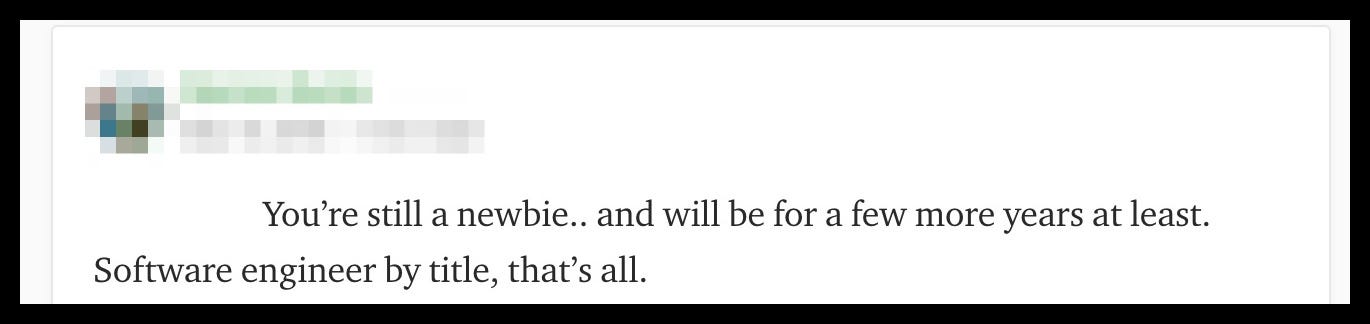

*“You’re still a newbie.. and will be for a few more years at least. Software engineer by title, that’s all.*

*“I’ve been in software engineering for 30 years. …You haven’t even begun to understand what you don’t know yet. Good luck to you!”*

At least that’s what I thought. It seemed the gate-keeping extended even into the realm of employment. But it was over. I was a Software Engineer — I had the W4 papers to prove it. The moment I stepped into the office for my first day on the job, all my worries would disappear.

But that wasn’t the truth. Who knew that adding obligations and deadlines would make one’s insecurities worse, not better?

My anxiety hit a fever pitch the moment I arrived for my first day of onboarding. Slack conversations I didn’t understand. GitHub repositories with thousands of lines of code I didn’t comprehend. Development tools I had never heard of or used. My first week on the job was a whirlwind of stress.

Don’t get me wrong, I was thrilled to be surrounded by such experienced engineers. My team was full of some of the smartest engineers I’d ever met and they were incredibly understanding. They mentored me and ramped me up to speed. But it was still intimidating as hell.

Many of my coworkers had advanced degrees, some had been programming since they were teens, and others were core maintainers of large open source projects. And who was I? Some guy who learned to code because it looked cool on TV. I felt out of place and the feeling didn’t disappear.

My thoughts began to resemble the negative comments that I had worked so hard to prove wrong:

*How the hell did I get here? Was it luck? Did someone make a mistake?*

I realized that I didn’t need Reddit to point out my inadequacies — I was quite capable of that myself. It’s true that, with time, I became more familiar with my environment. I began to understand the Slack conversations, the code became more familiar, and I learned how to use the various tools. And yet, I couldn’t shake the feeling that I was a stranger in a foreign land. Every mistake I made only served to prove that point further.

I anticipated the day that I made one mistake too many and someone finally asked, “How on Earth did you get hired?” That fear consumed me. I began reading whitepapers, frequenting Hacker News, and taking classes to get a Computer Science degree. All in the hopes that I would finally feel like I fit in. That I was a “real” software engineer. But none of it worked.

Luckily, I found something that did.

## Finding my solution

The solution that I found for myself was simple yet terrifying.

**Talking.**

I had to talk to someone. I couldn’t keep it bottled anymore. However, I was too nervous to ask any of coworkers a question like, “Have you ever felt like a fraud?” Instead, I started with my close friends. But I soon found myself discussing the topic to anyone I could, including coworkers and teammates. Because the more I talked and listened, the more I realized I wasn’t alone.

As it turns out, impostor syndrome is so common that it has become a cliché!

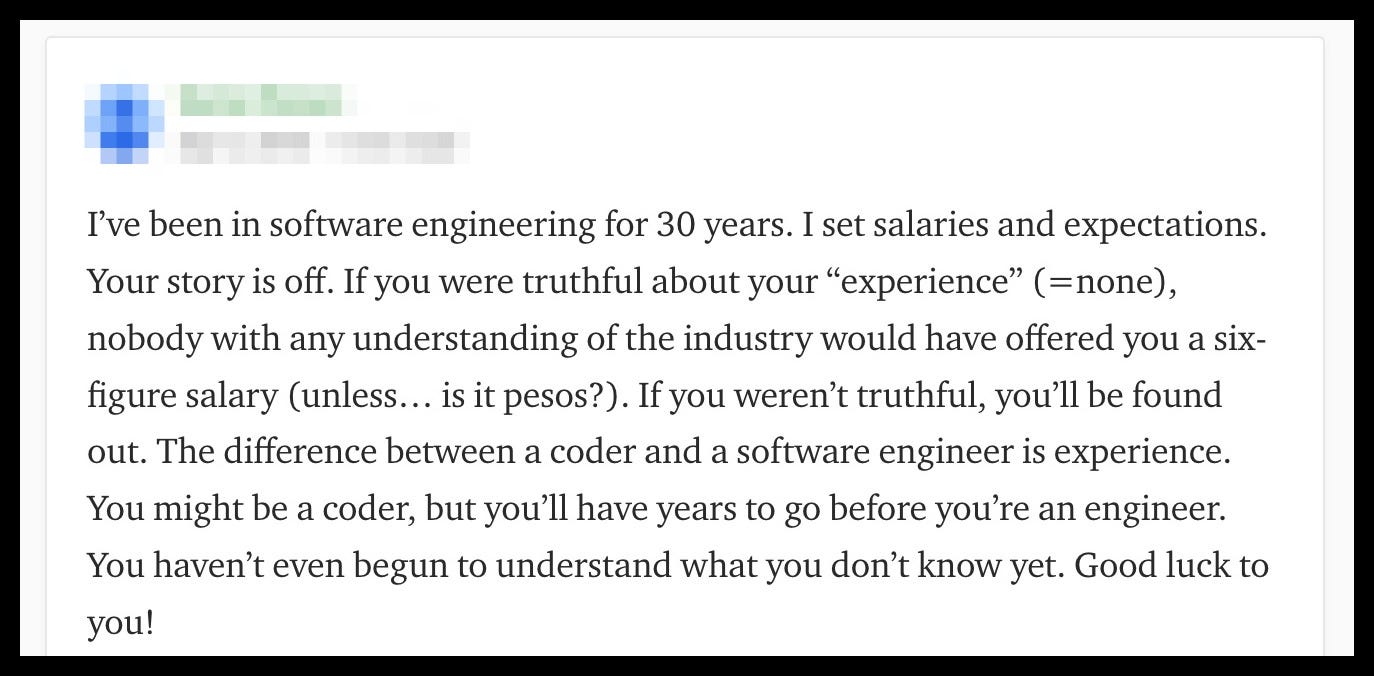

*“Another impostor syndrome article …. Walking in to work everyday with a complete mastery over everything you do and write is a problem of its own.”*

Many professionals at the top of their careers experience it. [Tina Fey](https://www.instyle.com/celebrity/stars-imposter-syndrome?slide=3163098#3163098), [John Steinbeck](https://www.grammarly.com/blog/notable-people-imposter-syndrome/), and even [Albert Einstein](https://www.therebegiants.com/overcoming-impostor-syndrome/). It was comforting to find out that I was in such good company. Furthermore, when talking with a close friend, he said something that struck a chord with me: