id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

915,289 | Publish your site assets with the Netlify CLI | Learn how the Netlify command line interface (CLI) can publish your web site assets to the Netlify hosting infrastructure for you with a single command. | 15,788 | 2021-12-02T10:26:07 | https://www.netlify.com/blog/2021/12/01/highlighting-a-different-netlify-feature-each-day-in-december/ | netlify, jamstack, cli, tools | ---

title: Publish your site assets with the Netlify CLI

published: true

description: Learn how the Netlify command line interface (CLI) can publish your web site assets to the Netlify hosting infrastructure for you with a single command.

tags: Netlify, Jamstack, CLI, Tools

cover_image: https://www.netlify.com/img/blog... | philhawksworth |

915,315 | Why you should make your tests fail | Let's face it, most of us developers don't necessarily love writing tests. We sometimes end up... | 0 | 2021-12-02T13:58:36 | https://cathalmacdonnacha.com/why-you-should-make-your-tests-fail | testing, javascript, webdev, beginners | Let's face it, most of us developers don't necessarily love writing tests. We sometimes end up rushing through them, and once we see that green tick next to a passing test, we're generally pretty happy to move on. However, an enemy is lurking amongst us.

## False positive test

The enemy I'm talking about here is othe... | cathalmacdonnacha |

915,347 | Free Bootstrap 5 Admin Dashboard Template - Dash UI | Dash-UI is a Bootstrap 5 Admin & Dashboard Theme. Dash UI Kit a free and open source components... | 0 | 2021-12-03T08:08:09 | https://dev.to/imjituchauhan/free-bootstrap-5-admin-template-dash-ui-19j2 | webdev, opensource, css, javascript | Dash-UI is a [Bootstrap 5 Admin & Dashboard](https://dashui.codescandy.com/) Theme. Dash UI Kit a free and open source components and templates kit fully coded with Bootstrap 5.

Demo - [Dash UI](https://codescandy.com/dashui/index.html)

Support - [Join the Community](https://github.com/codescandy/Dash-UI/discussions... | imjituchauhan |

915,600 | 6 Days Left until BotMeNot’s beta launch on the 7th of December, 2021! | In case you’ve missed it, we’ve recently announced BotMeNot’s beta launch! BotMeNot (Beta) will go... | 0 | 2021-12-02T15:58:06 | https://dev.to/botmenot/6-days-left-until-botmenots-beta-launch-on-the-7th-of-december-2021-48ik | discuss, security, testing | In case you’ve missed it, we’ve recently announced BotMeNot’s beta launch!

BotMeNot (Beta) will go live on the 7th of December 2021 and if you decide to register as a beta user you’ll receive 100 free credits!

Wha... | botmenot |

915,614 | Are you a video fan?🚀 | How many videos do you watch a week? I'm sure a lot. Some of the videos for pleasure, and some of... | 0 | 2021-12-02T16:14:31 | https://dev.to/liadshviro/are-you-a-video-fan-k44 | codereview, programming, productivity, video | How many videos do you watch a week? I'm sure a lot.

Some of the videos for pleasure, and some of them for educational reasons.

About two years ago (Covid 19) video usage increased dramatically.

We have learned th... | liadshviro |

915,620 | Getting started with scheduled pipelines | CircleCI’s scheduled pipelines let you run pipelines at regular intervals; hourly, daily, or weekly.... | 0 | 2021-12-14T23:24:02 | https://circleci.com/blog/using-scheduled-pipelines/ | circleci, pipelines, cicd | ---

title: Getting started with scheduled pipelines

published: true

date: 2021-12-14 14:00:00 UTC

tags: circleci, pipelines, cicd

cover_image: https://production-cci-com.imgix.net/blog/media/Tutorial-Beginner-RP.jpg?ixlib=rb-3.2.1&auto=format&fit=max&q=60&ch=DPR%2CWidth%2CViewport-Width%2CSave-Data&w=750

canonical_url:... | zmarkan |

915,624 | Implement JavaScript Array Methods From Scratch | Table of Contents Introduction prototype this Array Methods Resources ... | 0 | 2021-12-05T00:24:06 | https://dev.to/zagaris/implement-javascript-array-methods-from-scratch-2pl1 | javascript, webdev, beginners |

## Table of Contents

1. [Introduction](#introduction)

2. [prototype](#what-is-prototype)

3. [this](#what-is-this)

4. [Array Methods](#lets-implement-the-array-methods)

5. [Resources](#resources)

## Introduction

The **JavaScript Array class** is a global object that is used in the construction of arrays. Array is a ... | zagaris |
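As a rough illustration of the approach this article's outline points to (combining `prototype` and `this` to rebuild an array method), here is a minimal sketch of a hand-written `map`. The name `myMap` is hypothetical and does not come from the original post; it is chosen so that the built-in `Array.prototype.map` is left untouched.

```javascript
// Hypothetical method name `myMap` (an assumption, not from the article).
// Inside the function, `this` refers to the array the method is called on.
Array.prototype.myMap = function (callback, thisArg) {
  // The result has the same length as the source array.
  const result = new Array(this.length);
  for (let i = 0; i < this.length; i++) {
    // Skip holes in sparse arrays, as the built-in map does.
    if (i in this) {
      result[i] = callback.call(thisArg, this[i], i, this);
    }
  }
  return result;
};

const doubled = [1, 2, 3].myMap((x) => x * 2);
console.log(doubled); // [ 2, 4, 6 ]
```

Assigning directly to `Array.prototype` keeps the sketch short, but it creates an enumerable property; in real code `Object.defineProperty` (or a standalone function) is usually the safer choice.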

915,626 | Anatomy of a high-velocity CI/CD pipeline | If you’re going to optimize your development process for one thing, make it speed. Not the kind of... | 0 | 2021-12-02T16:35:17 | https://medium.com/developing-koan/anatomy-of-a-high-velocity-ci-cd-pipeline-43a1ae3b798b | ci, continuousdelivery, devops, tdd | If you’re going to optimize your development process for one thing, make it speed. Not the kind of speed that racks up technical debt on the team credit card or burns everyone out with breathless sprints, though. No, the kind of speed that treats time as your most precious resource, which it is.

_Speed is the startup’... | rjz |

915,641 | Typing Effect by using CSS | You may have already seen websites with some kind of typing animation. It looks cool... | 19,763 | 2021-12-03T12:35:11 | https://dev.to/j471n/typing-effect-by-using-css-50p | css, webdev, beginners, tutorial | You may have already seen websites with some kind of typing animation. It looks cool, right? What if I told you it is very easy to do, and you can do it with CSS alone, without using any JS?

First of all, let's visualize what I am talking about -

### Preview

Here are th... | riocantre |

915,722 | Understanding filters in Office 365 triggers | This article explains how to use filters provided for Office365 triggers. In Office 365 triggers you... | 0 | 2021-12-06T09:26:11 | https://tech.forums.softwareag.com/t/understanding-filters-in-office-365-triggers/253665 | webmethods, integration, connectors | ---

title: Understanding filters in Office 365 triggers

published: true

date: 2021-12-02 15:06:50 UTC

tags: #webmethods, #integration, #connectors

canonical_url: https://tech.forums.softwareag.com/t/understanding-filters-in-office-365-triggers/253665

---

This article explains how to use filters provided for Office365 ... | techcomm_sag |

915,861 | Image next to the text | How can I put that text next to the image? | 0 | 2021-12-02T19:18:48 | https://dev.to/angeles_kerwin/imagen-al-lado-del-texto-57k | How can I put that text next to the image? | angeles_kerwin | |

916,025 | 100 days of code: day 30, progress on The Odin Project. | Hey hey hey! Welcome to day 30 of the challenge. I haven't really made much progress because I practiced... | 0 | 2021-12-03T00:15:27 | https://dev.to/darito/100-dias-de-codigo-30-avance-en-the-odin-project-46cb | spanish, 100daysofcode, webdev | Hey hey hey!

Welcome to day 30 of the challenge. I haven't really made much progress because I practiced using prototypes in JavaScript.

I've felt really motivated since I chose my path in The Odin Project, which was Full Stack JavaScript.

### Yesterday:

- Practiced 30 minutes of touch typing.

- Started learning... | darito |

916,030 | Guide to model training: Part 4 — Ditching datetime | TLDR Apply feature engineering by converting time series data to numerical values for... | 0 | 2021-12-03T01:09:35 | https://dev.to/mage_ai/guide-to-model-training-part-4-ditching-datetime-2eg6 | programming, machinelearning, tutorial, python | ## TLDR

Apply feature engineering by converting time series data to numerical values for training machine learning models.

## Outline

- Recap

- Before we begin

- The datetime data type

- Converting to date

- What’s next?

## Recap

In our series so far, we’ve gone over scaling data to prepare for model training. We sta... | mage_ai |

916,050 | Elixir Circuits.I2C with Mox | This is written in Japanese. I might convert it to English later, maybe. ... | 0 | 2021-12-03T02:34:36 | https://dev.to/mnishiguchi/elixir-circuitsi2c-with-mox-3186 | elixir, i2c, mox, mock | [Mox.verify_on_exit!/1]: https://hexdocs.pm/mox/Mox.html#verify_on_exit!/1

[Mox.stub_with/2]: https://hexdocs.pm/mox/Mox.html#stub_with/2

[mox]: https://hexdocs.pm/mox/Mox.html

[José Valim]: https://twitter.com/josevalim

[mocks-and-explicit-contracts]: https://dashbit.co/blog/mocks-and-explicit-contracts

[Elixir]: http... | mnishiguchi |

916,057 | Code Smell 108 - Float Assertions | Asserting two float numbers are the same is a very difficult problem TL;DR: Don't compare... | 9,470 | 2021-12-03T03:11:21 | https://maximilianocontieri.com/code-smell-108-float-assertions | oop, tutorial, codenewbie, cleancode | *Asserting two float numbers are the same is a very difficult problem*

> TL;DR: Don't compare floats

# Problems

- Wrong test results

- Fragile tests

- Fail fast principle violation

# Solutions

1. Avoid floats unless you have REAL performance concerns

2. Use arbitrary precision numbers

3. If you need to compare... | mcsee |
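To make the "don't compare floats" advice above concrete, here is a minimal sketch of why exact equality fails and of one common workaround, comparing within a tolerance. The helper name `nearlyEqual` and the default tolerance are assumptions chosen for illustration; the original article's own recommendation is truncated in this record, so this may not match it.

```javascript
// Hypothetical helper (name and default tolerance are assumptions).
// Two numbers count as equal when their difference is within `epsilon`,
// scaled by the larger magnitude so big values are compared relatively.
function nearlyEqual(a, b, epsilon = 1e-9) {
  const scale = Math.max(1, Math.abs(a), Math.abs(b));
  return Math.abs(a - b) <= epsilon * scale;
}

const sum = 0.1 + 0.2;
console.log(sum === 0.3);           // false: binary floats cannot represent 0.1 exactly
console.log(nearlyEqual(sum, 0.3)); // true: the difference is far below the tolerance
```

The right tolerance depends on the domain; for money or other exact quantities, the article's suggestion of arbitrary precision numbers avoids the question altogether.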

916,162 | JavaScript Course for free 2022 From Zero to Expert | In this course section, we will share with you the JavaScript course for free in 2022: from zero to... | 0 | 2021-12-03T05:50:59 | https://dev.to/alimammiya/javascript-course-for-free-2022-from-zero-to-expert-5bj4 | javascript, tutorial, beginners, programming | In this course section, we will share with you the [JavaScript course for free](https://usemynotes.com/javascript/) in 2022: from zero to expert! The modern JavaScript course for everyone! This JavaScript course contains challenges, theory, and Interview questions. So let’s start.

## What you'll learn

- Become an adv... | alimammiya |

916,205 | Create QR codes in JavaScript | This is an example of how you could create QR codes in JavaScript (and Node.js) with the "qrcode" npm... | 0 | 2021-12-03T07:43:34 | https://dev.to/coder4_life/create-qr-codes-in-javascript-325 | javascript, node, webdev, programming | This is an example of how you could create QR codes in JavaScript (and Node.js) with the "qrcode" npm package. This video includes browser and Node.js examples where we create the QR codes as base64 image strings.

{% youtube U0pRmzCxbnk %} | coder4_life |

916,370 | Installing DDEV and deploying a Drupal 9 project on Ubuntu 20.04 | Installing the components needed to run DDEV and create local working environments for... | 0 | 2021-12-03T11:08:56 | https://dev.to/daniconil/instalacion-de-ddev-y-despliegue-de-proyecto-drupal-9-en-ubuntu-2004-236 | ddev, drupal, docker, ubuntu | Installing the components needed to run DDEV and create local PHP working environments. Docker and Docker Compose are essential requirements, and we will install them with the minimum configuration DDEV needs to work.

The outline of this tutorial is:

1. Installing Docker

2. Installing Docker Compos... | daniconil |

916,488 | Top IT Career Meetups in November, 2021 | Eager to see the finest IT Career meetup videos from this November? Say no more, check our... | 0 | 2021-12-03T14:38:05 | https://blog.meetupfeed.io/it-career-meetups-november-2021/ | career, programming, techtalks | Eager to see the finest IT Career [**meetup videos**](https://blog.meetupfeed.io/it-career-meetups-november-2021/) from this November? Say no more, check our hand-picked selection below.

[**Writing Code is Easy, Being a Great Developer is Hard | Helen Scott**](https://meetupfeed.io/talk/tech-talks-writing-code-is-easy... | meetupfeedio |

916,610 | Best Mobile Apps For Couples Who Want To Stay Connected | Being next to your soul mate every minute gives you joy and warmth. However, time can also be spent... | 0 | 2021-12-03T14:49:17 | https://dev.to/downeranthony/best-mobile-apps-for-couples-who-want-to-stay-connected-3lb7 | mobile, android, ios | Being next to your soul mate every minute gives you joy and warmth. However, time can also be spent with benefit. You can share secret desires, learn something new about your loved one, and just have fun together via mobile apps. Also, with their help, you can not miss important dates and always be aware of the upcomin... | downeranthony |

916,615 | Set up es-lint, prettier, husky, lint-staged in vs-code for vanilla JS | As a beginner developer, I ran into several problems while setting up ESLint, Prettier, husky and... | 0 | 2021-12-05T16:07:00 | https://dev.to/adarshmaharjan/set-up-es-lintprettierhuskylint-staged-in-vs-code-1oig | javascript, webdev, beginners, tutorial | As a beginner developer, I ran into several problems while setting up ESLint, Prettier, husky, and lint-staged. This is a beginner's guide to setting up ESLint with Prettier, using husky with lint-staged. I have compiled the knowledge from different docs, blogs, videos, and hints while writing this blog and the re... | adarshmaharjan |

916,638 | This is what I've experienced in Web/Software Development for more than 6 years. | I remember the first time I've got a job as a web developer. At that time I'm still studying at my... | 0 | 2021-12-03T16:21:41 | https://dev.to/msulaimanmisri/this-is-what-ive-experienced-in-websoftware-development-for-more-than-6-years-4468 | webdev, programming | I remember the first time I've got a job as a web developer. At that time I'm still studying at my University. I do part-time in one small media agency in Sarawak, Malaysia.

My reason to do part-time at that time is just to study how web developers do the work in real life. I don't want to know based on a textbook o... | msulaimanmisri |

916,664 | 👁️Accelerates your coding using Gaze | CLI tool that accelerates your quick coding | 0 | 2021-12-03T17:09:14 | https://dev.to/wtetsu/accelerates-your-coding-using-gaze-17p8 | programming | ---

title: 👁️Accelerates your coding using Gaze

published: true

description: CLI tool that accelerates your quick coding

tags: programming

#cover_image: https://direct_url_to_image.jpg

---

Software development often forces us to execute the same command again and again, by hand!

👁️[Gaze](https://github.com/wtetsu/... | wtetsu |

916,677 | My experience with a hands-on APIs workshop using Postman. | My hands-on experience of learning APIs 101 in a Postman workshop was amazing. Before this workshop, I... | 0 | 2021-12-03T17:35:51 | https://dev.to/minal11/my-experience-with-hand-on-apis-workshop-by-using-postman-1o4i | todayilearned, devops, testing, discuss | My hands-on experience of learning APIs 101 in a Postman workshop was amazing. Before this workshop, *I wasn't familiar with what an API is or how it works.* It was my very first workshop, and the place where I learned about APIs in practice. The way the speaker explained things was very thoughtful, and the session was intuit... | minal11 |

916,702 | AUTO INCREMENT On A MySQL Secondary Column | MySQL AUTO_INCREMENT MySQL provides us with AUTO_INCREMENT attribute that allows us to... | 0 | 2021-12-03T20:54:33 | https://dev.to/vinodsys/auto-increment-on-a-mysql-secondary-column-2jcn | mysql, autoincrement, tables, myisam | ## MySQL AUTO_INCREMENT

MySQL provides the AUTO_INCREMENT attribute, which lets us create a column that contains a sequence of numbers (1, 2, 3, and so on). AUTO_INCREMENT is generally used to generate a unique number that acts as a primary key in a table.

Let's see a simple example. Here we have a ... | vinodsys |

917,132 | WASM : The End of Javascript? | Microsoft has been working on WASM for a long time now. Their project named Blazor was doing well... | 17,014 | 2021-12-04T06:47:16 | https://dev.to/jwp/wasm-the-end-of-js-39oa | webassembly, blazor | Microsoft has been working on WASM for a long time now. Their project named Blazor was doing well back in 2019 with its precursor Razor going back to at least 2014.

I initially rejected Blazor thinking Javascript and JS packages are so dominant that the same type of Open Source collaboration will never catch up with ... | jwp |

917,145 | Advent of Code 2021 Python Solution: Day 4 | Again, I am using my helper function from day 1 to read data. This challenge was little bit harder... | 15,777 | 2021-12-04T06:32:29 | https://dev.to/qviper/advent-of-code-2021-python-solution-day-4-5fi1 | python, adventofcode, beginners, programming | Again, I am using my helper function from day 1 to read data.

This challenge was a little bit harder than the previous ones, but with the help of NumPy, I did it.

```python

import numpy as np

data,data1 = get_data(day=4)

def get_blocks(dt):

block = []

num = [int(i) for i in dt[0].split(",")]

row = []

tdata=[]

... | qviper |

917,234 | Day 55 of 100 Days of Code & Scrum: Eighth Weekly Retrospective (RIP my streak) | Happy weekend, everyone! This week may have been the least productive one during my entire... | 14,990 | 2021-12-04T10:06:36 | https://dev.to/rammina/day-55-of-100-days-of-code-scrum-eighth-weekly-retrospective-rip-my-streak-49e3 | 100daysofcode, beginners, javascript, productivity | Happy weekend, everyone!

This week may have been the least productive one during my entire [challenge](https://dev.to/rammina/100-days-of-code-and-scrum-a-new-challenge-24lp). My 51-day streak also ended this week, and I took a two-day break. I just try not to be too hard on myself, because I had personal issues getti... | rammina |

917,333 | Sustainability on AWS (re:Invent2021) | Werner Vogels is an unparalleled titan of cloud computing, and his Keynote is one of the most popular... | 0 | 2021-12-04T15:09:00 | https://ssennett.net/posts/aws-sustainability-pillar/ | aws | Werner Vogels is an unparalleled titan of cloud computing, and his Keynote is one of the most popular sessions of re:Invent. And when he [announced the new Sustainability Pillar](https://aws.amazon.com/blogs/aws/sustainability-pillar-well-architected-framework/) of the AWS Well-Architected Framework at re:Invent this w... | ssennettau |

917,376 | Thoughts on Legacy Code and How to Live With it | Let's start with one statement before we even define what legacy code is. You will never get rid... | 0 | 2021-12-04T14:54:32 | https://www.johnraptis.dev/thoughts-on-legacy-code/ | webdev, programming, productivity | Let's start with one statement before we even define what legacy code is.

> You will never get rid of legacy code, you can only learn how to deal and live with it.

>

So what is legacy code anyway? You might fall into the trap of thinking that is just old code. This kind of answer is over-simplistic and doesn't refl... | john2220 |

917,381 | 5 JavaScript Tips | Remove duplicate values from an Array Use the properties of Set. const array = [1,2,2,3,3,3,4,4,4,4,5]; const unique = [... new... | 15,780 | 2021-12-04T19:23:59 | https://dev.to/andylow/javascriptde-4ge-xiao-ji-qiao-4bjf | javascript | ## Remove duplicate values from an Array

Use the properties of `Set`.

```js

const array = [1,2,2,3,3,3,4,4,4,4,5];

const unique = [... new Set(array)];

```

## Template strings

Use backticks (`` ` ` ``) in place of the double and single quotes of ordinary strings.

```js

const str = `Hello ${expression}!`;

const num1 = "1";

const num2 = "2";

console.log(`${num1}${num2}`); // 12

```

[More information](https://developer.mozilla.org/zh... | andylow |

917,984 | How to Create GUI for remote server with i3 window manager | Hello there. This article is about providing management via a UI by installing a window manager or... | 0 | 2021-12-05T16:23:02 | https://dev.to/nuhyurdev/how-to-create-gui-for-remote-server-with-i3-window-manager-2mj9 | devops, linux, productivity, tutorial | Hello there. This article is about providing management via a UI by installing a window manager or desktop environment for servers and establishing a remote connection. A graphical server and desktop environment are not used on remote servers because server resources are usually limited. In this post I will be working ... | nuhyurdev |

918,327 | Job interview question and answer | Tricky techniques | Welcome to this page. I am really happy for your landing. Most probably, you have got your... | 0 | 2021-12-06T04:43:19 | https://remoteful.dev/blog/job-interview-question-answer | remotework, job, remotejobs | Welcome to this page.

I'm really glad you landed here. Most probably, you have received an interview call, and that is why you are here. Congratulations!

But still, you are stressed. What will be asked? How to answer? What will happen if you can’t answer?

I can feel this problem.

But great news! This article is th... | ryy |

923,770 | Odoo Developers | I am looking for perm and contract Odoo developers for a well-known retail company in the EU. Get in... | 0 | 2021-12-11T16:30:22 | https://dev.to/calvinjnr/odoo-developers-550h | python | I am looking for perm and contract Odoo developers for a well-known retail company in the EU. Get in touch for more details - calvinjnr@sky.com | calvinjnr |

923,868 | Github-content-generator | New content generator solution for Github.@allifam | 0 | 2021-12-11T18:09:13 | https://dev.to/ddelrio95/github-content-generator-4ljh | python, github, automation, programming | New content generator solution for Github.@allifam | ddelrio95 |

923,876 | How To Partially Update A Document in Cloud Firestore | There will be a scenario where you will want to partially update a document on the Cloud Firestore... | 0 | 2021-12-13T14:46:18 | https://dev.to/rajatamil/how-to-partially-update-a-document-in-cloud-firestore-4f1d | firebase, javascript, programming | There will be a scenario where you will want to partially update a document on the Cloud Firestore without compromising [security rules](https://softauthor.com/firebase-cloud-firestore-security-rules/).

Let’s say you have an orders collection and a signed-in user with a user role that can create a new order document i... | rajatamil |

923,936 | Build a data warehouse quickly with Amazon Redshift - Part 3 - The final layer cake | Building a cloud data warehouse and business intelligence modernization In this final... | 17,204 | 2021-12-14T21:01:43 | https://dev.to/aws-builders/build-a-data-warehouse-quickly-with-amazon-redshift-part-3-the-final-layer-cake-5798 | beginners, analytics, aws, tutorial | ## Building a cloud data warehouse and business intelligence modernization

In this final tutorial for AWS Redshift I will explain how we can bake our cake and eat it too by loading data into the Redshift cluster through to creating data visualizations with AWS QuickSight.

If you are reading this post for the first ti... | abc_wendsss |

924,089 | I asked GitHub Copilot if it will replace developers! | Please consider liking and subscribing <3 Github copilot tells an offensive joke: | 0 | 2021-12-12T04:54:09 | https://dev.to/virejdasani/i-asked-github-copilot-if-it-will-replace-developers-45ka | github, javascript, ai, webdev | {% youtube s-XGQOE8E1g %}

Please consider liking and subscribing <3

Github copilot tells an offensive joke:

{% youtube SFBSbAJBTOM %} | virejdasani |

924,119 | 5 Best Docker Courses for Java Developers to Learn in 2024 | Do you want to learn Docker in 2024? Here are the best online courses to learn Docker in 2024 from Udemy, Pluralsight, and other online platforms. | 0 | 2021-12-12T08:16:05 | https://dev.to/javinpaul/5-best-docker-courses-for-developers-to-learn-in-2022-o83 | docker, java, devops, programming | ---

title: 5 Best Docker Courses for Java Developers to Learn in 2024

published: true

description: Do you want to learn Docker in 2024? Here are the best online courses to learn Docker in 2024 from Udemy, Pluralsight, and other online platforms.

tags: Docker, Java, DevOps, Programming

//cover_image: https://direct_url_... | javinpaul |

924,199 | Striver's SDE Sheet Journey - #2 Pascal's Triangle | Hi👋,devs. I have started a Journey called Striver's SDE Sheet Journey and in this journey, I have... | 0 | 2021-12-15T08:59:38 | https://dev.to/sachin26/strivers-sde-sheet-journey-2-pascals-triangle-gp2 | beginners, dsa, programming, java | Hi👋,devs.

I have started a journey called **Striver's SDE Sheet Journey**, and in this journey I have successfully solved the first problem [#1](https://dev.to/sachin26/strivers-sde-sheet-journey-1-set-matrix-zeroes-4l68) from the SDE Sheet. Now I am going to tackle the second one.

## [#2 Pascal's Triangle](https://... | sachin26 |

924,214 | If-Else or Switch or Match - Laravel | We have an example of entering data of different type and here we use if if($request->type ==... | 0 | 2021-12-12T11:03:51 | https://dev.to/morcosgad/if-else-or-switch-or-match-laravel-3h45 | php, laravel, programming, beginners | We have an example of entering data of different type and here we use if

```php

if ($request->type == 'users') {

    $users = User::all();

    dd($users); // dump the users and stop execution

}

if ($request->type == 'projects') {

    $projects = Project::all();

    dd($projects); // dump the projects and stop execution

}

```

But we want to make the code simpler and shorter, so here I want to use switch

```ph... | morcosgad |

955,706 | What I learned about using and building public APIs | I've recently learned about the API Economy concept (A good primer and a contrarian view). After a... | 0 | 2022-01-15T03:28:11 | https://dev.to/gwenshap/what-i-learned-about-using-and-building-public-apis-2d00 | beginners | ---

title: What I learned about using and building public APIs

published: true

description:

tags: #beginners

---

I've recently learned about the API Economy concept ([A good primer](https://www.notboring.co/p/apis-all-the-way-down) and [a contrarian view](https://www.swyx.io/api-economy/)). After a bit of research, ... | gwenshap |

924,228 | An Overview of Cloud Computing. | What is Cloud Computing? Cloud computing is the on-demand delivery of IT resources over the Internet... | 0 | 2021-12-12T13:09:57 | https://dev.to/tioluwaniope/an-overview-of-cloud-computing-32eh | beginners, webdev, tutorial, cloudskills |

**What is Cloud Computing?**

Cloud computing is the on-demand delivery of IT resources over the Internet with pay-as-you-go pricing. Instead of buying, owning, and maintaining physical data centers and servers, you can access technology services, such as computing power, storage, and databases, on an as-needed basis ... | tioluwaniope |

924,245 | Beginner To Pro - Python Learning Path | Why Learn Python Python is one of the best programming languages of the 21st century. It's... | 0 | 2021-12-12T12:21:39 | https://dev.to/enirox/beginner-to-pro-python-learning-path-18k7 | python, codenewbie, webdev, beginners | ## Why Learn Python

Python is one of the best programming languages of the 21st century. It's not going anywhere anytime soon. Why? Well, because it's easy to learn, a clean and structured programming language with powerful capabilities.

So having seen how important a programming language Python is, How exactly should... | enirox |

924,358 | Starting journey toward building Blockchain App | Photo by Zoltan Tasi on Unsplash Hi all, in this article, we will discuss the path toward building... | 15,852 | 2021-12-12T15:44:43 | https://dev.to/nikhildhawan/starting-journey-toward-building-blockchain-app-2c33 | blockchain, technology | Photo by <a href="https://unsplash.com/@zoltantasi?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Zoltan Tasi</a> on <a href="https://unsplash.com/s/photos/ethereum?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a>

Hi all, in this article, we will discuss the path ... | nikhildhawan |

924,377 | Hologram shooter | A post by Jayant Goel | 0 | 2021-12-12T17:09:37 | https://dev.to/jayantgoel001/hologram-shooter-528 | codepen | {% codepen https://codepen.io/justjspr/pen/BajmpGY %} | jayantgoel001 |

924,477 | More Features in GitLab CI/CD | GitLab and AutoDevOps Auto DevOps offers a predefined CI/CD configuration that... | 15,662 | 2021-12-15T17:41:27 | https://dev.to/bernardo/features-no-gitlab-cicd-2n5i | gitlab, git, beginners, devops | ## GitLab and AutoDevOps

Auto DevOps offers a predefined CI/CD configuration that lets you automatically detect, build, test, deploy, and monitor your applications. This makes it easier to configure each project more consistently.

It is enabled by default for all your projects, but it can... | bernardo |

342,903 | A Short Borg Tutorial | Borg is the new generation of attic. borg init --encryption=keyfile /path/to/repo borg create -C lz... | 0 | 2020-05-24T15:36:16 | https://mmap.page/dive-into/borg/ | ---

title: A Short Borg Tutorial

published: true

date:

tags:

canonical_url: https://mmap.page/dive-into/borg/

---

[Borg](https://www.borgbackup.org/) is the new generation of [attic](https://attic-backup.org/).

```

borg init --encryption=keyfile /path/to/repo

borg create -C lz4 /path/to/repo::NAME-YOUR-BACKUP ~/Doc... | weakish | |

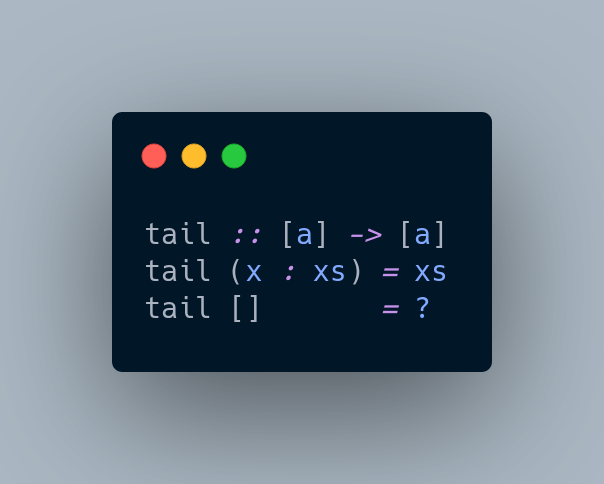

924,543 | Monads in a simple way | Monads were created by mathematicians in 1960 and rediscovered by computer scientists in 1990 as a... | 0 | 2021-12-13T21:17:15 | https://dev.to/kindsloth/monads-in-a-simple-way-7f9 | haskell, functional, programming | Monads were created by mathematicians in 1960 and rediscovered by computer scientists in 1990 as a new way to handle effects. Let's write some examples, imagine a function that gets the tail from a list like this:

The problem h... | kindsloth |

924,626 | Why You Need a Coding Scratch File | Sometimes the best place to write code for your project is outside it. This may sound like a lie.... | 0 | 2021-12-13T01:16:22 | https://kevinhicks.software/blog/88/why-you-need-a-coding-scratch-file | productivity, programming, beginners | Sometimes the best place to write code for your project is outside it.

This may sound like a lie. How could writing code outside of a project be the best place for the project's code? How can it even help the project at all?

Working within a project, especially a large and complex one, other code can get in the way o... | kevinhickssw |

924,682 | How WebSocket protocol designs bidirectional messaging and implements in Go | WebSocket WebSocket is a mechanism for low-cost, full-duplex communication on Web, which... | 0 | 2021-12-13T04:59:20 | https://dev.to/hgsgtk/how-websocket-protocol-designs-bidirectional-messaging-and-implements-in-go-260f | webdev, websocket, go | ## WebSocket

WebSocket is a mechanism for low-cost, [full-duplex](https://en.wikipedia.org/wiki/Duplex_(telecommunications)#FULL-DUPLEX) communication on Web, which protocol was standardized as [RFC 6455](https://datatracker.ietf.org/doc/html/rfc6455).

The following diagram, quoted by [Wikipedia](https://en.wikipedia... | hgsgtk |

924,754 | An introduction to React Hooks | Introduction Hooks are here to help if you don't like classes. Hooks are functions that let you... | 0 | 2021-12-13T07:13:56 | https://dev.to/ashikarose/an-introduction-to-react-hooks-3f58 | **Introduction**

[Hooks](https://reactjs.org/docs/hooks-intro.html) are here to help if you don't like classes. Hooks are functions that let you use React's state and lifecycle features without writing classes. They let you hook into React state and lifecycle features from function components, as... | ashikarose | |

924,767 | Go Channel Patterns - Wait For Task | To improve my Go Programming skills and become a better Go engineer, I have recently purchased an... | 15,904 | 2021-12-13T07:39:41 | https://dev.to/b0r/go-concurrency-patterns-wait-for-task-34de | go, programming | ---

series: [Go Channel Patterns]

---

To improve my Go Programming skills and become a better Go engineer, I have recently purchased an excellent on-demand education from [Ardan Labs](https://www.ardanlabs.com/education/). Materials are created by an expert Go engineer, [Bill Kennedy](https://twitter.com/goinggodotnet... | b0r |

955,846 | Pre-load Angular Modules🥳 | Preloading 1. In preloading, feature modules are loaded in background asynchronously. In... | 0 | 2022-01-15T09:03:29 | https://dev.to/krishna7852/pre-load-angular-modules-5hg | angular, typescript, javascript, performance | ## Preloading

**1.** With preloading, feature modules are loaded asynchronously in the background, starting just after the application starts.

**2.** When we open the application, **AppModule** and the modules it imports are loaded eagerly first. Just after that, modules configured for preloading i... | krishna7852 |

924,801 | What Do You Look For In A Crypto Marketing Company? | A crypto marketing company has the ability to promote any sort of token-based campaign that aims to... | 0 | 2021-12-13T09:02:04 | https://dev.to/cryptopromoters/what-do-you-look-for-in-a-crypto-marketing-company-3686 | cryptomarketingcompany, cryptocurrency, cryptomarketing | A **[crypto marketing company](https://cryptopromoters.org/)** has the ability to promote any sort of token-based campaign that aims to raise money. With such a service provider, it is possible for you to have perfect operations for your crowdfunding project. Also, you are to present your idea to a large number of inve... | cryptopromoters |

924,806 | 🧭How to create a content explorer view in Microsoft Lists | 🎬NEW VIDEO N. 154🎬 In this video tutorial, you’ll learn how to create a content explorer view in... | 0 | 2021-12-13T09:16:14 | https://dev.to/giulianodeluca/how-to-create-a-content-explorer-view-in-microsoft-lists-4418 | microsoftlists, lists, sharepoint, giulianodeluca | ---

title: 🧭How to create a content explorer view in Microsoft Lists

published: true

description:

tags: MicrosoftLists, Lists, SharePoint, GiulianoDeLuca

cover_image: https://raw.githubusercontent.com/giuleon/MicrosoftListsContentExplorer/master/Thumbnail.png

---

🎬NEW VIDEO N. 154🎬

In this video tutorial, you’ll ... | giulianodeluca |

924,834 | If you're uncomfortable with RxJS, this article is for you! | RxJS patterns are popular nowadays as data management and manipulation in a web app are crucial for... | 0 | 2021-12-13T10:10:14 | https://dev.to/famzil/if-youre-uncomfortable-with-rxjs-this-article-is-for-you-1c9m | programming, webdev, javascript, react | RxJS patterns are popular nowadays as data management and manipulation in a web app are crucial for every app.

Who hasn't retrieved data from an API, or some other source, in their app?

This article covers:

- The context and data

- RxJS and why it is used

- When to use it?

This article had successfully made my... | famzil |

924,952 | 2 in one windows in Java Swing | How can I connect the window with camera (Right window) in Main window (Left window) so that they are... | 0 | 2021-12-13T10:54:37 | https://dev.to/namisrn/2-in-one-windows-in-java-swing-2hn6 |

How can I embed the camera window (right window) in the main window (left window) so that they are in one window?

| namisrn | |

924,974 | Journey of a dyslexic programmer ? What is it like ? | Ever since I was a little kid I always knew that there was an issue with my reading, writing and... | 0 | 2021-12-14T13:13:26 | https://dev.to/aravindasiva/journey-of-a-dyslexic-programmer-what-is-it-like--2bgf | career, dyslexia, productivity, computerscience |

Ever since I was a little kid I always knew that there was an issue with my reading, writing and spelling, but never knew what it was. I always thought I was one of those different kids.

As I grew up in India, schools didn't really have anything special for dyslexic kids. Well, it wasn't really the school's mistake s... | aravindasiva |

925,132 | Launching the M.V.P. | Devshub Coming to the point. I am launching a MVP called Devshub. Basically, I am building... | 0 | 2021-12-13T14:14:55 | https://dev.to/tazim404/launching-the-mvp-797 | javascript, programming, webdev, showdev |

# [Devshub](https://devshub.netlify.app/)

Coming to the point: I am launching an MVP called [Devshub](https://devshub.netlify.app).

Basically, I am building a social network for developers to share their projects and view what other developers built.

Currently, it is a small MVP and running on a free tier. Slowly and ... | tazim404 |

925,333 | 3 Reason Why Javascript Should be your First Language | There are many languages to choose as your first language, i.e. Python, JavaScript, Go, and Ruby. All... | 0 | 2021-12-13T16:32:28 | https://dev.to/kinjiru09/3-reason-why-javascript-should-be-your-first-language-22jo | javascript, node, programming, beginners | There are many languages to choose from as your first language, e.g. Python, JavaScript, Go, and Ruby. All of these have been heralded as easy languages to learn, and there are good reasons to call them “easy”.

1. They are easy to set up and relatively easy to start creating projects.

2. They have easy syntax... | kinjiru09 |

925,344 | Part 2/2 - Game in ReactJS - Cuzzle | In these articles I would like to share with you the process of development of the latest game that I... | 15,995 | 2021-12-21T15:09:58 | https://dev.to/jorger/part-22-game-in-reactjs-cuzzle-1662 | react, javascript, css, pwa | In these articles I would like to share with you the development process of the latest game I built with ReactJS, called Cuzzle _**(cube + puzzle = Cuzzle)**_. The game was inspired by the original game called [cuzzle](https://apps.apple.com/us/app/cuzzle/id1185407542) developed by [Redline Games](https://www.re... | jorger |

925,589 | Making a better 2D asset pipeline | I hate image formats. Maybe it's an emotional reaction to hours upon hours of searching... | 0 | 2021-12-21T20:54:10 | https://dev.to/hamishmilne/making-a-better-2d-asset-pipeline-5d3e | unity3d, gamedev | ## I hate image formats.

Maybe it's an emotional reaction to hours upon hours of searching for obscure specifications, finding bizarre proprietary blobs in supposedly standardised files, wondering if the tool I'm running is even doing anything, and having my (fairly high end) PC completely run out of memory several ti... | hamishmilne |

925,604 | Use Symbolic Links to version control your config files | Coming from Windows based OS, I thought of symbolic links in Unix based OS as a way of creating... | 0 | 2021-12-14T01:26:00 | https://dev.to/rounakcodes/use-symbolic-links-to-version-control-your-config-files-2048 | dotfiles, shell, config, stow | Coming from a Windows-based OS, I thought of symbolic links in Unix-based OSes as a way of creating shortcuts, like "Create a Desktop shortcut for ...", and so I never paid much attention to them.

I have now (15 years late) discovered its value.

Usually, you have your configuration (*dotfiles*) files for shell, text editors... | rounakcodes |

1,180,830 | Automatically and intelligently processing SMS with attachments? | Hi there. Doesn't seem like it's asking too much, but I'm not sure. I'd like to be able to assign a... | 0 | 2022-08-31T18:41:48 | https://dev.to/redherring917b/automatically-and-intelligently-processing-sms-with-attachments-2039 | twilio, help | Hi there. Doesn't seem like it's asking too much, but I'm not sure.

I'd like to be able to assign a webhook to a recipient phone number that would automatically fire off whenever an SMS was received. I'd like that webhook to contain the details of the SMS, including the message itself, the sending mobile number, as ... | redherring917b |

925,658 | Building a waitlist for your product with Next.js | Building a waitlist allows your future users to express interest in you, before you've even started... | 0 | 2021-12-14T02:29:31 | https://blog.propelauth.com/nextjs-waitlist/ | javascript, nextjs, webdev, tutorial | Building a waitlist allows your future users to express interest in you, before you've even started your MVP. You can see if your messaging resonates with potential customers, and when you are ready to launch, the users from your waitlist will make excellent early product testers.

In this post, we'll build the followi... | propelauthblog |

925,707 | Getting Started with the React AutoComplete Component | Learn how easily you can create and configure the React AutoComplete component of Syncfusion using... | 0 | 2021-12-14T04:59:47 | https://dev.to/syncfusion/getting-started-with-the-react-autocomplete-component-30ok | react, reactnative, webdev, webcomponents | Learn how easily you can create and configure the [React AutoComplete component](https://www.syncfusion.com/react-ui-components/react-autocomplete) of Syncfusion using the create-react-app command. This video also explains how to configure a few of the control’s basic features like binding list and remote data and cust... | techguy |

925,725 | Tailwind CSS v3 Button Examples | In this section we will create tailwind css 3 button, tailwind v3 light button, button with icon,... | 0 | 2021-12-14T06:03:35 | https://larainfo.com/blogs/tailwind-css-v3-button-examples | tailwindcss, css, tutorial, webdev | In this section we will create buttons with Tailwind CSS version 3: a basic button, a light button, a button with an icon, beautiful button styles, and a glow button. Before we start, you need to install or set up a Tailwind v3 project; see the article below.

[How to install & setup ... | saim_ansari |

925,823 | Parallelizing Tasks Using MongoDB | Recently I published a post on our company's engineering blog. Check it out if you work with MongoDB... | 0 | 2021-12-14T06:50:58 | https://dev.to/eidellev/parallelizing-tasks-using-mongodb-24pj | mongodb, typescript, node, backend | Recently I published a [post](https://medium.com/zencity-engineering/parallelizing-tasks-using-mongodb-d788429b2b67) on our company's engineering blog.

Check it out if you work with MongoDB and want to find out how you can use it for parallel processing.

| eidellev |

925,835 | Database relation with laravel | Artisan Command: php artisan db:seed | 0 | 2021-12-14T07:23:03 | https://dev.to/vatheara/database-relation-with-laravel-24mm | laravel |

Artisan Command:

`php artisan db:seed` | vatheara |

925,850 | get user in AppServiceProvider Laravel | Laravel session is initialized in a middleware so you can't access the session from a Service... | 0 | 2021-12-14T07:49:50 | https://dev.to/vatheara/get-user-in-appserviceprovider-laravel-5cj9 | laravel | The Laravel session is initialized in middleware, so you can't access it from a service provider: service providers execute before the middleware in the request lifecycle.

If for some other reason you want to do it in a service provider, you could use a view composer with a callback, like this:

`view()->composer('*',... | vatheara |

925,900 | Top Data Recovery Tools For APFS Drives | Read this article about top tools to recover data lost from ApFS drives used on Mac computers or... | 0 | 2021-12-14T09:24:51 | https://dev.to/hetmansoftware/top-data-recovery-tools-for-apfs-drives-2obc | beginners, testing, tutorial, security | Read this article about the top tools for recovering data lost from APFS drives used on Mac computers or other Apple devices. We will explore thoroughly what each of the utilities can do!

## Introduction

Apple File System, or APFS, is the new file system by Apple, used with the latest Mac devices. However, this file system ... | hetmansoftware |

928,380 | Under the Lid: How AtomicJar is Reshaping Testcontainers | Let’s get nerdy with it. On this week’s episode of Dev Interrupted, Dan gets technical with Sergei... | 0 | 2021-12-16T17:32:39 | https://devinterrupted.com/podcast/under-the-lid-how-atomicjar-is-reshaping-testcontainers/ | testing, cloud, techtalks, podcast | Let’s get nerdy with it.

On this week’s episode of Dev Interrupted, Dan gets technical with Sergei Egorov, co-founder and CEO of [AtomicJar](https://www.atomicjar.com/).

With the mission to make integrated testing simpler and easier, AtomicJar created the Testcontainers Cloud which allows developers to test their cod... | conorbronsdon |

928,574 | How to Find Which Career is Right for Me: The Ultimate Guide | It is the age-old question: ‘What should I be when I grow up?’ This decision is made easier for... | 0 | 2021-12-16T20:45:29 | https://remoteful.dev/blog/how-find-career-right-for-me | remotework, remotejob, job, remotejobs |

It is the age-old question: ‘What should I be when I grow up?’ This decision is made easier for some of us by a family with the same career. Others might have an internship in their preferred field. But for many li... | ryy |

928,831 | Colon Broom Reviews – What is Colon Broom? | Is Colon Broom safe to take? | Colon Broom may assist address the issues with the gastrointestinal systems. Colon Broom is an... | 0 | 2021-12-17T05:43:18 | https://dev.to/colon_broom/colon-broom-reviews-what-is-colon-broom-is-colon-broom-safe-to-take-1601 | [Colon Broom](https://colonbroomus.wixsite.com/colonbroom) may help address issues with the gastrointestinal system. It is a supplement that might help manage digestive issues and maintain digestive health. Colon Broom is a dietary supplement formulated and sold by Max Nu... | colon_broom | |

929,096 | Magento Migration- Have You Given a Thought to Theme Migration | Assuming you weren't one of the individuals who migrated up to Magento 2 before June 2020, you likely... | 0 | 2021-12-17T13:25:45 | https://dev.to/sneharawat/magento-migration-have-you-given-a-thought-to-theme-migration-7cn | magento, design, devops, productivity | Assuming you weren't one of the individuals who migrated up to Magento 2 before June 2020, you likely definitely know why you ought to consider it shortly. Even though your Magento eCommerce store might, in any case, be unblemished and running, the absence of value fixes and security patches is reason enough to say goo... | sneharawat |

930,237 | Ensuring Santa's Success With Automated Tests (C# Advent 2021) | An exploration of TDD in the spirit of the season. | 0 | 2021-12-19T03:49:47 | https://seankilleen.com/2021/12/santa-sleigh/ | csharp, dotnet, nunit, xunit | ---

title: "Ensuring Santa's Success With Automated Tests (C# Advent 2021)"

published: true

description: "An exploration of TDD in the spirit of the season."

tags:

- csharp

- dotnet

- nunit

- xunit

canonical_url: https://seankilleen.com/2021/12/santa-sleigh/

cover_image: https://seankilleen.com/images/overlays... | seankilleen |

936,343 | Sort String by Flipping | Description You are given a string s consisting of the letters "x" and "y". In addition,... | 0 | 2021-12-25T12:52:27 | https://dev.to/jiangwenqi/sort-string-by-flipping-4ja3 | java, programming, algorithms, 100daysofcode | # [Description](https://binarysearch.com/problems/Sort-String-by-Flipping)

You are given a string `s` consisting of the letters `"x"` and `"y"`. In addition, you have an operation called `flip`, which changes a single `"x"` to `"y"` or vice versa.

Determine the smallest number of times you would need to apply this op... | jiangwenqi |

937,386 | 5 Reasons Why You Should Prefer PHP for Website Development | PHP has emerged as a leading technology for creating dynamic and static websites and web... | 0 | 2021-12-27T09:53:19 | https://dev.to/freita_browning/5-reasons-why-you-should-prefer-php-for-website-development-4c46 | php, webdev, programming |

PHP has emerged as a leading technology for creating dynamic and static websites and web applications, and many websites and web apps already use PHP today. PHP is a popular server-side scripting langu... | freita_browning |

937,409 | MySQL query timeouts on big database | Hi devs I have a question. I'm maintaining a laravel/MySQL application that has a lot of custom... | 0 | 2021-12-27T10:29:52 | https://dev.to/jobokoth/mysql-query-timeouts-on-big-database-g1l | mysql, laravel, aws, bigdata | Hi devs

I have a question. I'm maintaining a laravel/MySQL application that has a lot of custom reports that are generated using raw sql queries. As the usage grows, we are experiencing a lot of query timeouts because the data keeps growing, despite all our best efforts to optimize the queries, index the tables, etc. ... | jobokoth |

937,543 | "Kem kẹp sandwich là một trong những món ăn vặt đường phố được người dân Singapore rất yêu thích. | "Kem kẹp sandwich là một trong những món ăn vặt đường phố được người dân Singapore rất yêu thích. Vì... | 0 | 2021-12-27T14:01:48 | https://dev.to/kemkephawkerstar/kem-kep-sandwich-la-mot-trong-nhung-mon-an-vat-duong-pho-duoc-nguoi-dan-singapore-rat-yeu-thich-3k03 | webdev, beginners | "Ice cream sandwiches are one of the street snacks that Singaporeans love most.

That is why Hawker Star was created: to bring authentic Singapore-style ice cream sandwiches to Vietnam!

Website: https://kemkepsingapore.com

Hotline: 097 908 24 69

Email: hawkerstar.kinhdoanh@gmail.com

Address: 38 Nguyễn Huệ, Bến Ng... | kemkephawkerstar |

937,666 | Création de site avec Hugo | Installer un site sur Hugo peux poser des difficultés lors de l’installation. La partie sur... | 0 | 2021-12-27T15:27:39 | https://blog.moulard.org/creation-de-site/ | ---

title: Création de site avec Hugo

published: true

date: 2019-11-13 14:17:21 UTC

tags:

canonical_url: https://blog.moulard.org/creation-de-site/

---

Setting up a site with Hugo can be tricky during installation.

## The Hugo part

### Installing Hugo

On Ubuntu, a simple `snap install hu... | tommoulard | |

937,674 | ANSSI et automatisation de MOOC Secnumacademie avec Selenium | Pour mon école, j’ai eu a faire un MOOC de l’ANSSI. Or après avoir fait tous les tests du mooc à... | 0 | 2021-12-27T15:28:10 | https://blog.moulard.org/anssi-mooc-on-hacking/ | ---

title: ANSSI et automatisation de MOOC Secnumacademie avec Selenium

published: true

date: 2020-04-13 16:57:41 UTC

tags:

canonical_url: https://blog.moulard.org/anssi-mooc-on-hacking/

---

For my school, I had to take an ANSSI [MOOC](https://secnumacademie.gouv.fr/).

However, after completing all the tests of the m... | tommoulard | |

937,704 | Errors and suspicious code fragments in .NET 6 sources | The .NET 6 turned out to be much-awaited and major release. If you write for .NET, you could hardly... | 0 | 2021-12-27T15:32:37 | https://dev.to/_sergvasiliev_/errors-and-suspicious-code-fragments-in-net-6-sources-2md5 | dotnet, csharp, opensource, codequality | .NET 6 turned out to be a much-awaited, major release. If you write for .NET, you could hardly miss such an event. We also couldn't pass by the new version of this platform, so we decided to check what interesting things we could find in the sources of the .NET libraries.

| mbogner |

937,779 | Practicing Data Structures and Algorithms Daily! | Boring but worthy Data structures and algorithms as important as your communication skills... | 0 | 2021-12-27T19:10:33 | https://dev.to/seek4samurai/practicing-data-structures-and-algorithms-daily-n62 | programming, beginners, tutorial, algorithms | ## Boring but worthy

Data structures and algorithms are as important as your communication skills if you're into programming. If it's your dream to work as a full-time software engineer, this should be your playground.

For software engineers, understanding of different types of algorithms is important. So if you ... | seek4samurai |

937,817 | 10 habits of 10x developer | Over 16 years of educating, tutoring and mentoring developers I have recognized ten habits that... | 0 | 2021-12-27T19:26:38 | https://dev.to/tomaszs2/10-habits-of-10x-developer-96p | programming, webdev, beginners | Over 16 years of educating, tutoring and mentoring developers I have recognized ten habits that differentiate 10x developers from others. 10x developers:

1. Take an insane amount of notes

2. Start to code only when they gather all information and requirements needed to implement a feature

3. First reproduce the bug befo... | tomaszs2 |

937,887 | Table Driven Scanners | Introduction A scanner or tokenizer is a part of the compiler, it is also that potion of... | 0 | 2021-12-27T20:13:12 | https://dev.to/ezpzdevelopement/table-driven-scanners-40jd | ## Introduction

A scanner, or tokenizer, is the part of a compiler that takes a sequence of characters as input and produces a sequence of tokens, or words, as output.
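To make the characters-in, tokens-out idea concrete, here is a minimal sketch of a table-driven scanner. Everything in it is an assumption chosen for illustration rather than taken from the article: the two token kinds (`IDENT` and `NUMBER`), the three character classes, and the names `transitions`, `classify`, and `scan`. The key point is that the scanning loop is generic, and the language being recognized lives entirely in the table.

```go
package main

import "fmt"

// Character classes: the columns of the transition table (illustrative choice).
const (
	classLetter = iota
	classDigit
	classOther
)

// States: the rows of the transition table (illustrative choice).
const (
	stateStart = iota
	stateIdent
	stateNumber
	stateError // dead state: no valid transition exists
)

// transitions[state][class] gives the next state.
var transitions = [3][3]int{
	stateStart:  {stateIdent, stateNumber, stateError},
	stateIdent:  {stateIdent, stateIdent, stateError},
	stateNumber: {stateError, stateNumber, stateError},
}

// tokenNames maps accepting states to token kinds.
var tokenNames = map[int]string{stateIdent: "IDENT", stateNumber: "NUMBER"}

// Token is a (kind, text) pair produced by the scanner.
type Token struct {
	Kind, Text string
}

// classify maps a byte to its character class.
func classify(c byte) int {
	switch {
	case c >= 'a' && c <= 'z', c >= 'A' && c <= 'Z', c == '_':
		return classLetter
	case c >= '0' && c <= '9':
		return classDigit
	default:
		return classOther
	}
}

// scan walks the table over the input, emitting the longest match each time.
// Characters that cannot start a token (such as spaces) are skipped.
func scan(input string) []Token {
	var tokens []Token
	i := 0
	for i < len(input) {
		state, start := stateStart, i
		for i < len(input) {
			next := transitions[state][classify(input[i])]
			if next == stateError {
				break
			}
			state = next
			i++
		}
		if kind, ok := tokenNames[state]; ok {
			tokens = append(tokens, Token{kind, input[start:i]})
		} else {
			i++ // skip a character that cannot start a token
		}
	}
	return tokens
}

func main() {
	for _, tok := range scan("x1 42 y") {
		fmt.Println(tok.Kind, tok.Text)
	}
}
```

Supporting a new token kind in this design means adding rows and columns to the table rather than writing new control flow, which is the usual argument for the table-driven approach.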

There are three types of scanners: table-driven scanners, direct-coded scanners, and hand-coded scanners, each one of... | ezpzdevelopement | |

937,908 | How to Create Aliases on macOS with Terminal | Aliases are those badass secret identities and mysteries that developers type into their terminal to... | 0 | 2021-12-27T20:57:05 | https://blog.zahrakhadijha.com/how-to-create-aliases-on-macos-with-terminal | tutorial, beginners, webdev, programming | Aliases are those badass secret identities and mysteries that developers type into their terminal to perform a task faster and make their workflow simpler. For the longest time, I procrastinated creating aliases because the thought of learning how to do it *seemed* really hard. But actually, it's extremely easy. And it... | za-h-ra |

938,181 | A11y tips: add a skip link | A skip link is a link located at the beginning of the page and that allows the user to jump directly... | 15,912 | 2021-12-28T07:35:41 | https://dev.to/carlosespada/a11y-tips-add-a-skip-link-222m | a11y, tips, html | A skip link is **a link located at the beginning of the page that allows the user to jump directly to certain blocks** (main content, main search, important asides...) without having to go through all the preceding elements that are repeated throughout the entire site (logo, main navigation...).

It is **especial... | carlosespada |

938,207 | Things to Know Before You Start Tailwind CSS | With the rising popularity of Tailwind CSS, many of us might jump directly on Tailwind just to catch... | 16,052 | 2021-12-28T08:32:32 | https://clumsycoder.hashnode.dev/getting-started-with-tailwindcss | tailwindcss, beginners, css, webdev | With the rising popularity of Tailwind CSS, many of us might jump directly on Tailwind just to catch up with the hype. It won't take them much of their time to realize that it is not like any other traditional CSS framework. They might not even get a full picture of Tailwind and end up hating it thinking it's harder th... | clumsycoder |

938,296 | Topic: React Hook and Custom React Hook | When I was learning to React, some parts seemed to me difficult. Today, I have decided to... | 0 | 2021-12-28T10:32:10 | https://dev.to/zahidulislam144/topic-react-hook-and-custom-react-hook-5d6l | react | ## When I was learning React, some parts seemed difficult to me. Today, I have decided to write down some of my learnings and ideas about React hooks and custom React hooks.

## - **What is a hook, basically?**

In real life, a hook is a kind of ring that holds something. In React, a hook basically works based on this f... | zahidulislam144 |

938,411 | about_CRUD_operation | What are CRUD Operations: How CRUD Operations Work, Examples, Tutorials & More If you’ve ever... | 0 | 2021-12-28T11:19:28 | https://dev.to/cosmicraybd/aboutcrudoperation-40gc | What are CRUD Operations: How CRUD Operations Work, Examples, Tutorials & More

If you’ve ever worked with a database, you’ve likely worked with CRUD operations. CRUD operations are often used with SQL, a topic we’ve covered in-depth (see this article, this one, and this one for some of our recent SQL tips and tricks). ... | cosmicraybd | |

938,521 | Create a slug system with Strapi v4 | Steps to create a slug system with Strapi v4 | 0 | 2021-12-28T15:09:52 | https://dev.to/elchiconube/create-a-slug-system-with-strapi-v4-1abm | strapi, strapiv4, slug, javascript | ---

title: Create a slug system with Strapi v4

published: true

description: Steps to create a slug system with Strapi v4

tags: strapi, strapiv4, slug, javascript

cover_image: https://images.pexels.com/photos/574069/pexels-photo-574069.jpeg?auto=compress&cs=tinysrgb&dpr=2&h=750&w=1260

---

Let's create a slug system wi... | elchiconube |

938,556 | try to post something | hi everyone, this is long from google. love to hear from you guys | 0 | 2021-12-28T15:37:57 | https://dev.to/truonglong88/try-to-post-something-22if | new, trypro, create | hi everyone, this is long from google.

love to hear from you guys | truonglong88 |

938,765 | Help please | Hello, I'm new here in the community and I need help please, I have an application in Reactjs,... | 0 | 2021-12-28T15:56:08 | https://dev.to/juandavidlasso/help-please-47j3 | react, javascript | Hello, I'm new here in the community and I need help, please. I have an application in Reactjs. Yesterday I updated all my dependencies, and when I run my application with npm start I get these errors. Can you help me, please?

![Image description](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/jp0fxjj1xhdotzr5xl... | juandavidlasso |
