code stringlengths 2.5k 150k | kind stringclasses 1 value |

|---|---|

<h1><center>Report 12</center></h1>

<h3><center>Jiachen Tian</center><h3>

# Introduction

This week's task is primarily glint detection. Right now there are three potential ways: KCF, Hough Transform, and Ratio Comparison. Even though Ratio Comparison works well under certain conditions within a certain displacement of the glitn as illustrated in the last report, the noise sensitive nature guarantees its failure under uncertain inputs(thousands of different frams with different noise patterns). KCF tracker works a little better comparing to ratio comparison but it tends to fail with too many inputs. In comparion, Hough transform might still work the best with proper parameter settings. All the above algorithm will be illustrated.

# Setup

Setup path to include our files. import them. use `autoreload` to get changes in as they are made.

```

import os

import sys

import cv2

from matplotlib import pyplot as plt

import statistics

import numpy as np

# load our code

sys.path.insert(0, os.path.abspath('../'))

from plotting import auto_draw

# specific to jupyter notebook

from jupyter_help import cvplt, cvplt_sub

#Import image processing function from optimization

# load any changes as we make them

%load_ext autoreload

%autoreload 2

```

# Ratio comparison

The previous report illustrates how to map the rectangle back to the original place without taking into account two crucial parameters: ratio differences between each glint caused by noise(present even with noise filtering), and variation in terms of displacements. To diminish errors caused by ratio difference, one could find the stsndard deviation between ratio for each part(Top left, top right, bottom left, bottom right) and get the smallest std. However, using standard deviation would leave the program more vulnerable to errors caused by displacement changes.

```

#Read in the original image

image = cv2.imread("../input/chosen_pic.png")

keep1 = image.copy()

keep2 = image.copy()

#Run auto threshold on the original image

gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

#Don't blur it

blurred = cv2.blur(gray,(1, 1))

_,proc = cv2.threshold(blurred,153,153,cv2.THRESH_BINARY)

#Draw the rendered program

cvplt(proc)

#Set the color

color = (255, 0, 0)

#When calculating standard deviation, the individual ratio is based upon number of non-zero pixels.

sample1 = np.array(proc[158:170, 125:137]) #User chosen coordinates

show1 = cv2.rectangle(keep1, (125, 158), (137, 170), color, 2)

number1 = np.count_nonzero(sample1)

cvplt(show1)

print(number1)

#Move it right 10 unit(Standard displacement I set on the previous report)

sample2 = np.array(proc[158:170, 135:147])

show2 = cv2.rectangle(keep2, (135, 158), (147, 170), color, 2)

number2 = np.count_nonzero(sample2)

cvplt(show2)

print(number2)

#Even when it moves to the border, we could still get roughly 27 false pixel that interferes the results.

```

# Hogh Transform

Previously thought that this method is infeasible on glint due to the small size of glint. However, with the help of OTSU's method and results from the pupil detection, it might just work.

```

#Read in the original image

image = cv2.imread("../input/chosen_pic.png")

result = image.copy()

#BGR to grey to eliminate extra layers

image = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

#First parameter: blur(set to 10)

blur = (10, 10)

image = cv2.blur(image,blur)

#second parameter: canny(set to 40 to 50)

#Any reasonable parameter would suffice. OTSU would handle the neutralization.

#Third parameter: threshold(determined by OTSU)

#We want the threshold to be exclusively on glint. So first crop the frame

cropped = image[158:170, 125:137]

#Run OTSU

thre,proc = cv2.threshold(cropped,0,255,cv2.THRESH_BINARY+cv2.THRESH_OTSU)

#Threshold we need

print(thre)

#Affect the threshold on the big image

_, image =cv2.threshold(image,thre,thre,cv2.THRESH_BINARY)

#Canny the image

edges = cv2.Canny(image, 40, 50)

cvplt(edges)

#If we just run the hough transform like this

circles = cv2.HoughCircles(edges, cv2.HOUGH_GRADIENT, 1,150,\

param1 = 200, param2 = 20,\

minRadius = 0, maxRadius = 0)

circles = circles[0][0]

#Draw the circle

circle = cv2.circle(result, (circles[0], circles[2]), circles[2], color, 2)

#Obviously, the algorithm thinks the circle is on the top

cvplt(circle)

#Way out: search in the cropped area cropped out by user in the first place

small_edges = edges[157:171, 125:139]

cvplt(small_edges)

circles = cv2.HoughCircles(small_edges, cv2.HOUGH_GRADIENT, 1,150,\

param1 = 200, param2 = 10,\

minRadius = 0, maxRadius = 0)

color_true = (0, 255, 0)

circles = circles[0][0]

print(circles)

#Map the circle back to the big picture

y = 157 + int(circles[1])

x = 125 + int(circles[0])

#Plot the fixed circle

circle = cv2.circle(result, (x, y), circles[2], color_true, 2)

cvplt(circle)

#As shown in the green circle, it correctly maps to the correct position.

```

# Optimization

What if the glint moves beyong the cell?

- Make the original cell bigger

- Update the cell position based on pupil

# Analysis

As shown above, for glit detection, I will use hough transform as the main algorithm and KCF as well as ratio differences as complementary analysis to get a more precise result.

# Conclusion

Both Pupil tracking and glint tracking is about to finish. The next step would be to further improve precision and find the angle of staring based upon values from both glint and pupil.

| github_jupyter |

## Seminar and homework (10 points total)

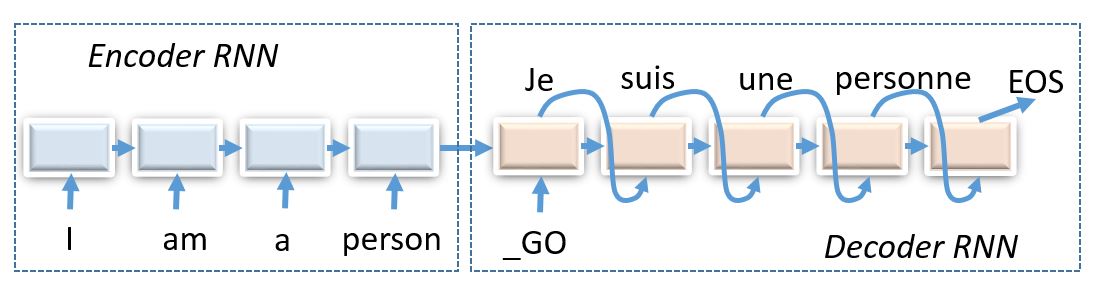

Today we shall compose encoder-decoder neural networks and apply them to the task of machine translation.

_(img: esciencegroup.files.wordpress.com)_

Encoder-decoder architectures are about converting anything to anything, including

* Machine translation and spoken dialogue systems

* [Image captioning](http://mscoco.org/dataset/#captions-challenge2015) and [image2latex](https://openai.com/requests-for-research/#im2latex) (convolutional encoder, recurrent decoder)

* Generating [images by captions](https://arxiv.org/abs/1511.02793) (recurrent encoder, convolutional decoder)

* Grapheme2phoneme - convert words to transcripts

## Our task: machine translation

We gonna try our encoder-decoder models on russian to english machine translation problem. More specifically, we'll translate hotel and hostel descriptions. This task shows the scale of machine translation while not requiring you to train your model for weeks if you don't use GPU.

Before we get to the architecture, there's some preprocessing to be done. ~~Go tokenize~~ Alright, this time we've done preprocessing for you. As usual, the data will be tokenized with WordPunctTokenizer.

However, there's one more thing to do. Our data lines contain unique rare words. If we operate on a word level, we will have to deal with large vocabulary size. If instead we use character-level models, it would take lots of iterations to process a sequence. This time we're gonna pick something inbetween.

One popular approach is called [Byte Pair Encoding](https://github.com/rsennrich/subword-nmt) aka __BPE__. The algorithm starts with a character-level tokenization and then iteratively merges most frequent pairs for N iterations. This results in frequent words being merged into a single token and rare words split into syllables or even characters.

```

# Uncomment selected lines if necessary

# !pip3 install tensorflow-gpu==2.0.0

# !pip3 install subword-nmt &> log

# !wget https://www.dropbox.com/s/yy2zqh34dyhv07i/data.txt?dl=1 -O data.txt

# !wget https://www.dropbox.com/s/fj9w01embfxvtw1/dummy_checkpoint.npz?dl=1 -O dummy_checkpoint.npz

# !wget https://raw.githubusercontent.com/yandexdataschool/nlp_course/2019/week04_seq2seq/utils.py -O utils.py

# # thanks to tilda and deephack teams for the data, Dmitry Emelyanenko for the code :)

from nltk.tokenize import WordPunctTokenizer

from subword_nmt.learn_bpe import learn_bpe

from subword_nmt.apply_bpe import BPE

tokenizer = WordPunctTokenizer()

def tokenize(x):

return ' '.join(tokenizer.tokenize(x.lower()))

# split and tokenize the data

with open('train.en', 'w') as f_src, open('train.ru', 'w') as f_dst:

for line in open('data.txt'):

src_line, dst_line = line.strip().split('\t')

f_src.write(tokenize(src_line) + '\n')

f_dst.write(tokenize(dst_line) + '\n')

# build and apply bpe vocs

bpe = {}

for lang in ['en', 'ru']:

learn_bpe(open('./train.' + lang), open('bpe_rules.' + lang, 'w'), num_symbols=8000)

bpe[lang] = BPE(open('./bpe_rules.' + lang))

with open('train.bpe.' + lang, 'w') as f_out:

for line in open('train.' + lang):

f_out.write(bpe[lang].process_line(line.strip()) + '\n')

```

### Building vocabularies

We now need to build vocabularies that map strings to token ids and vice versa. We're gonna need these fellas when we feed training data into model or convert output matrices into words.

```

import numpy as np

import matplotlib.pyplot as plt

%matplotlib inline

data_inp = np.array(open('./train.bpe.ru').read().split('\n'))

data_out = np.array(open('./train.bpe.en').read().split('\n'))

from sklearn.model_selection import train_test_split

train_inp, dev_inp, train_out, dev_out = train_test_split(data_inp, data_out, test_size=3000,

random_state=42)

for i in range(3):

print('inp:', train_inp[i])

print('out:', train_out[i], end='\n\n')

from utils import Vocab

inp_voc = Vocab.from_lines(train_inp)

out_voc = Vocab.from_lines(train_out)

# Here's how you cast lines into ids and backwards.

batch_lines = sorted(train_inp, key=len)[5:10]

batch_ids = inp_voc.to_matrix(batch_lines)

batch_lines_restored = [' '.join(x) for x in inp_voc.to_lines(batch_ids)]

print("lines")

print(batch_lines)

print("\nwords to ids (0 = bos, 1 = eos):")

print(batch_ids)

print("\nback to words")

print(batch_lines_restored)

```

Draw source and translation length distributions to estimate the scope of the task.

```

plt.figure(figsize=[8, 4])

plt.subplot(1, 2, 1)

plt.title("source length")

plt.hist(list(map(len, map(str.split, train_inp))), bins=20);

plt.subplot(1, 2, 2)

plt.title("translation length")

plt.hist(list(map(len, map(str.split, train_out))), bins=20);

```

### Encoder-decoder model

The code below contas a template for a simple encoder-decoder model: single GRU encoder/decoder, no attention or anything. This model is implemented for you as a reference and a baseline for your homework assignment.

```

import tensorflow as tf

assert tf.__version__.startswith('2'), "Current tf version: {}; required: 2.0.*".format(tf.__version__)

L = tf.keras.layers

keras = tf.keras

from utils import infer_length, infer_mask

class BasicModel(L.Layer):

def __init__(self, inp_voc, out_voc, emb_size=64, hid_size=128):

"""

A simple encoder-decoder model

"""

super().__init__() # initialize base class to track sub-layers, trainable variables, etc.

self.inp_voc, self.out_voc = inp_voc, out_voc

self.hid_size = hid_size

self.emb_inp = L.Embedding(len(inp_voc), emb_size)

self.emb_out = L.Embedding(len(out_voc), emb_size)

self.enc0 = L.GRUCell(hid_size)

self.dec_start = L.Dense(hid_size)

self.dec0 = L.GRUCell(hid_size)

self.logits = L.Dense(len(out_voc))

def call(self, inp, out):

initial_state = self.encode(inp)

return self.decode(initial_state, out)

def encode(self, inp, **flags):

"""

Takes symbolic input sequence, computes initial state

:param inp: matrix of input tokens [batch, time]

:returns: initial decoder state tensors, one or many

"""

inp_emb = self.emb_inp(inp)

batch_size = inp.shape[0]

mask = infer_mask(inp, self.inp_voc.eos_ix, dtype=tf.bool)

state = [tf.zeros((batch_size, self.hid_size), tf.float32)]

for i in tf.range(inp_emb.shape[1]):

output, next_state = self.enc0(inp_emb[:, i], state)

state = [

tf.where(

tf.tile(mask[:, i, None],[1,next_tensor.shape[1]]),

next_tensor, tensor

) for tensor, next_tensor in zip(state, next_state)

]

dec_start = self.dec_start(state[0])

return [dec_start]

def decode_step(self, prev_state, prev_tokens, **flags):

"""

Takes previous decoder state and tokens, returns new state and logits for next tokens

:param prev_state: a list of previous decoder state tensors

:param prev_tokens: previous output tokens, an int vector of [batch_size]

:return: a list of next decoder state tensors, a tensor of logits [batch, n_tokens]

"""

<YOUR CODE HERE>

return new_dec_state, output_logits

def decode(self, initial_state, out_tokens, **flags):

state = initial_state

batch_size = out_tokens.shape[0]

# initial logits: always predict BOS

first_logits = tf.math.log(

tf.one_hot(tf.fill([batch_size], self.out_voc.bos_ix), len(self.out_voc)) + 1e-30)

outputs = [first_logits]

for i in tf.range(out_tokens.shape[1] - 1):

#on each step update the state and store new logits into output

<YOUR CODE HERE>

return tf.stack(outputs, axis=1)

def decode_inference(self, initial_state, max_len=100, **flags):

state = initial_state

outputs = [tf.ones(initial_state[0].shape[0], tf.int32) * self.out_voc.bos_ix]

all_states = initial_state

for i in tf.range(max_len):

state, logits = self.decode_step(state, outputs[-1])

outputs.append(tf.argmax(logits, axis=-1, output_type=tf.int32))

all_states.append(state[0])

return tf.stack(outputs, axis=1), tf.stack(all_states, axis=1)

def translate_lines(self, inp_lines):

inp = tf.convert_to_tensor(inp_voc.to_matrix(inp_lines))

initial_state = self.encode(inp)

out_ids, states = self.decode_inference(initial_state)

return out_voc.to_lines(out_ids.numpy()), states

model = BasicModel(inp_voc, out_voc)

```

### Training loss (2 points)

Our training objetive is almost the same as it was for neural language models:

$$ L = {\frac1{|D|}} \sum_{X, Y \in D} \sum_{y_t \in Y} - \log p(y_t \mid y_1, \dots, y_{t-1}, X, \theta) $$

where $|D|$ is the __total length of all sequences__, including BOS and first EOS, but excluding PAD.

```

dummy_inp = tf.convert_to_tensor(inp_voc.to_matrix(train_inp[:3]))

dummy_out = tf.convert_to_tensor(out_voc.to_matrix(train_out[:3]))

dummy_logits = model(dummy_inp, dummy_out)

ref_shape = (dummy_out.shape[0], dummy_out.shape[1], len(out_voc))

assert dummy_logits.shape == ref_shape, "Your logits shape should be {} but got {}".format(dummy_logits.shape, ref_shape)

assert all(dummy_logits[:, 0].numpy().argmax(-1) == out_voc.bos_ix), "first step must always be BOS"

def compute_loss(model, inp, out, **flags):

"""

Compute loss (float32 scalar) as in the formula above

:param inp: input tokens matrix, int32[batch, time]

:param out: reference tokens matrix, int32[batch, time]

In order to pass the tests, your function should

* include loss at first EOS but not the subsequent ones

* divide sum of losses by a sum of input lengths (use infer_length or infer_mask)

"""

inp, out = map(tf.convert_to_tensor, [inp, out])

#outputs of the model

logits_seq = <YOUR CODE HERE>

#log-probabilities of all tokens at all steps

logprobs_seq = <YOUR CODE HERE>

#select the correct

logp_out = logprobs_seq * tf.one_hot(out, len(model.out_voc), dtype=tf.float32)

mask = infer_mask(out, out_voc.eos_ix)

#return cross-entropy

return <YOUR CODE HERE>

dummy_loss = compute_loss(model, dummy_inp, dummy_out)

print("Loss:", dummy_loss)

assert np.allclose(dummy_loss, 8.425, rtol=0.1, atol=0.1), "We're sorry for your loss"

```

### Evaluation: BLEU

Machine translation is commonly evaluated with [BLEU](https://en.wikipedia.org/wiki/BLEU) score. This metric simply computes which fraction of predicted n-grams is actually present in the reference translation. It does so for n=1,2,3 and 4 and computes the geometric average with penalty if translation is shorter than reference.

While BLEU [has many drawbacks](http://www.cs.jhu.edu/~ccb/publications/re-evaluating-the-role-of-bleu-in-mt-research.pdf), it still remains the most commonly used metric and one of the simplest to compute.

__Note:__ in this assignment we measure token-level bleu with bpe tokens. Most scientific papers report word-level bleu. You can measure it by undoing BPE encoding before computing BLEU. Please stay with the token-level bleu for this assignment, however.

```

from nltk.translate.bleu_score import corpus_bleu

def compute_bleu(model, inp_lines, out_lines, bpe_sep='@@ ', **flags):

""" Estimates corpora-level BLEU score of model's translations given inp and reference out """

translations, _ = model.translate_lines(inp_lines, **flags)#TODO batches!

# to compute token-level BLEU we need to split the original data into list of tokens

# translation is already transformed in that form

out_lines = [x.split(' ') for x in out_lines]

return corpus_bleu([[ref] for ref in out_lines], translations) * 100

```

__Note: do not forget splitting strings into list of tokens. Otherwise nltk will split the strings into chars and char-level BLEU will be computed.__

```

compute_bleu(model, dev_inp, dev_out)

```

### Training loop

Training encoder-decoder models isn't that different from any other models: sample batches, compute loss, backprop and update

```

from IPython.display import clear_output

from tqdm import tqdm, trange

metrics = {'train_loss': [], 'dev_bleu': [] }

opt = keras.optimizers.Adam(1e-3)

batch_size = 32

for _ in trange(25000):

step = len(metrics['train_loss']) + 1

batch_ix = np.random.randint(len(train_inp), size=batch_size)

batch_inp = inp_voc.to_matrix(train_inp[batch_ix])

batch_out = out_voc.to_matrix(train_out[batch_ix])

with tf.GradientTape() as tape:

loss_t = compute_loss(model, batch_inp, batch_out)

grads = tape.gradient(loss_t, model.trainable_variables)

opt.apply_gradients(zip(grads, model.trainable_variables))

metrics['train_loss'].append((step, loss_t.numpy()))

if step % 100 == 0:

metrics['dev_bleu'].append((step, compute_bleu(model, dev_inp, dev_out)))

clear_output(True)

plt.figure(figsize=(12,4))

for i, (name, history) in enumerate(sorted(metrics.items())):

plt.subplot(1, len(metrics), i + 1)

plt.title(name)

plt.plot(*zip(*history))

plt.grid()

plt.show()

print("Mean loss=%.3f" % np.mean(metrics['train_loss'][-10:], axis=0)[1], flush=True)

# Note: it's okay if bleu oscillates up and down as long as it gets better on average over long term (e.g. 5k batches)

assert np.mean(metrics['dev_bleu'][-10:], axis=0)[1] > 35, "We kind of need a higher bleu BLEU from you. Kind of right now."

for inp_line, trans_line in zip(dev_inp[::500], model.translate_lines(dev_inp[::500])[0]):

print(inp_line)

print(trans_line)

print()

```

# Homework code templates will appear here soon!

### Your Attention Required (4 points)

In this section we want you to improve over the basic model by implementing a simple attention mechanism.

This is gonna be a two-parter: building the __attention layer__ and using it for an __attentive seq2seq model__.

```

COMMING SOON!

```

### Attention layer

Here you will have to implement a layer that computes a simple additive attention:

Given encoder sequence $ h^e_0, h^e_1, h^e_2, ..., h^e_T$ and a single decoder state $h^d$,

* Compute logits with a 2-layer neural network

$$a_t = linear_{out}(tanh(linear_{e}(h^e_t) + linear_{d}(h_d)))$$

* Get probabilities from logits,

$$ p_t = {{e ^ {a_t}} \over { \sum_\tau e^{a_\tau} }} $$

* Add up encoder states with probabilities to get __attention response__

$$ attn = \sum_t p_t \cdot h^e_t $$

You can learn more about attention layers in the leture slides or [from this post](https://distill.pub/2016/augmented-rnns/).

### Seq2seq model with attention

You can now use the attention layer to build a network. The simplest way to implement attention is to use it in decoder phase:

_image from distill.pub [article](https://distill.pub/2016/augmented-rnns/)_

On every step, use __previous__ decoder state to obtain attention response. Then feed concat this response to the inputs of next attetion layer.

The key implementation detail here is __model state__. Put simply, you can add any tensor into the list of `encode` outputs. You will then have access to them at each `decode` step. This may include:

* Last RNN hidden states (as in basic model)

* The whole sequence of encoder outputs (to attend to) and mask

* Attention probabilities (to visualize)

_There are, of course, alternative ways to wire attention into your network and different kinds of attention. Take a look at [this](https://arxiv.org/abs/1609.08144), [this](https://arxiv.org/abs/1706.03762) and [this](https://arxiv.org/abs/1808.03867) for ideas. And for image captioning/im2latex there's [visual attention](https://arxiv.org/abs/1502.03044)_

```

COMMING SOON!

```

### Training attentive model

We'll reuse the infrastructure you've built for the regular model. I hope you didn't hard-code anything :)

```

COMMING SOON!

```

## Grand Finale (4+ points)

We want you to find the best model for the task. Use everything you know.

* different recurrent units: rnn/gru/lstm; deeper architectures

* bidirectional encoder, different attention methods for decoder

* word dropout, training schedules, anything you can imagine

For a full grade we want you to conduct at least __two__ experiments from two different bullet-points or your alternative ideas (2 points each). Extra work will be rewarded with bonus points :)

As usual, we want you to describe what you tried and what results you obtained.

`[your report/log here or anywhere you please]`

| github_jupyter |

```

from sklearn.base import BaseEstimator, ClassifierMixin, clone

from sklearn.utils import check_X_y, check_random_state, check_array

from sklearn.metrics import get_scorer

from sklearn.utils.validation import column_or_1d, check_is_fitted

from sklearn.multiclass import check_classification_targets

from sklearn.utils.metaestimators import if_delegate_has_method

from sklearn.neighbors import KNeighborsClassifier

from sklearn.preprocessing import MinMaxScaler

from sklearn.feature_selection import mutual_info_classif

import numpy as np

class Knn_Forest(BaseEstimator, ClassifierMixin):

"""

Random feature selection for ensemble of knn classifiers.

Each knn model will view the samples from different perspectives.

Aggregating their views will result in a good ensemble result.

Can also bootstrap the features, optimize the knn params per each

different model and also sample the features based on their initial importance.

"""

def __init__(self,

base_estimator=KNeighborsClassifier(),

n_estimators=10,

random_state=42,

optim=False,

parameters=None,

max_features = 'auto',

bootstrap_feats = False,

feat_importance = [],

metric='accuracy'):

self.base_estimator = base_estimator

self.n_estimators = n_estimators

self.max_features = max_features

self.random_state = check_random_state(random_state)

self.bootstrap_feats = bootstrap_feats

self.optim = optim

self.feat_importance = feat_importance

if self.optim:

self.parameters = parameters

else:

self.parameters = None

self.scoring = get_scorer(metric)

self.ensemble = []

self.selected_feat_indices= []

def fit(self, X, y):

return self._fit(X, y)

def _validate_y(self, y):

y = column_or_1d(y, warn=True)

check_classification_targets(y)

self.classes_, y = np.unique(y, return_inverse=True)

self.n_classes_ = len(self.classes_)

return y

def _fit(self,X,y):

X, y = check_X_y(

X, y, ['csr', 'csc'], dtype=None, force_all_finite=False,

multi_output=True)

y = self._validate_y(y)

n_samples, self.n_features_ = X.shape

if self.max_features == 'auto':

self.N_feats_per_knn = int(np.sqrt(self.n_features_))

elif self.max_features == 'log2':

self.N_feats_per_knn = int(np.log2(self.n_features_))

elif type(self.max_features) == float:

self.N_feats_per_knn = int(self.max_features*self.n_features_)

elif type(self.max_features) == int:

self.N_feats_per_knn = int(self.max_features)

if self.feat_importance == []:

self.feat_probas = [1/float(self.n_features_) for i in xrange(self.n_features_)]

else:

self.feat_probas = self.feat_importance#MinMaxScaler().fit_transform(mutual_info_classif(X, y).reshape(1, -1),y)

print(X.shape[1], self.n_features_, len(self.feat_importance), len(self.feat_probas))

print(len(self.feat_probas), self.n_features_, len(self.feat_importance))

for i_est in xrange(self.n_estimators):

self.selected_feat_indices.append(np.random.choice(np.arange(self.n_features_),

self.N_feats_per_knn,

replace=self.bootstrap_feats,

p=self.feat_probas))

cur_X, cur_y = X[:, self.selected_feat_indices[i_est]], y

cur_mod = clone(self.base_estimator)

if self.optim:

grid_search = GridSearchCV(cur_mod, self.parameters, n_jobs=-1, verbose=0, refit=True)

grid_search.fit(cur_X, cur_y)

cur_mod = grid_search.best_estimator_

else:

cur_mod.fit(cur_X, cur_y)

self.ensemble.append(cur_mod)

#print(cur_X.shape, cur_y.shape)

print("%d ESTIMATORS -- %0.3f" % (len(self.ensemble), 100*accuracy_score(y, self.predict(X), normalize=True)))

return self

def _validate_y(self, y):

y = column_or_1d(y, warn=True)

check_classification_targets(y)

self.classes_, y = np.unique(y, return_inverse=True)

self.n_classes_ = len(self.classes_)

return y

def predict(self, X):

"""Predict class for X.

The predicted class of an input sample is computed as the class with

the highest mean predicted probability. If base estimators do not

implement a ``predict_proba`` method, then it resorts to voting.

Parameters

----------

X : {array-like, sparse matrix} of shape = [n_samples, n_features]

The training input samples. Sparse matrices are accepted only if

they are supported by the base estimator.

Returns

-------

y : array of shape = [n_samples]

The predicted classes.

"""

if hasattr(self.base_estimator, "predict_proba"):

predicted_probability = self.predict_proba(X)

return self.classes_.take((np.argmax(predicted_probability, axis=1)),

axis=0)

else:

predicted_probability = np.zeros((X.shape[0],1), dtype=int)

for i, ens in enumerate(self.ensemble):

predicted_probability = np.hstack((predicted_probability,

ens.predict(X[:, self.selected_feat_indices[i]]).reshape(-1,1)))

predicted_probability = np.delete(predicted_probability,0,axis=1)

final_pred = []

for sample in xrange(X.shape[0]):

final_pred.append(most_common(predicted_probability[sample,:]))

return np.array(final_pred)

def predict_proba(self, X):

"""Predict class probabilities for X.

The predicted class probabilities of an input sample is computed as

the mean predicted class probabilities of the base estimators in the

ensemble. If base estimators do not implement a ``predict_proba``

method, then it resorts to voting and the predicted class probabilities

of an input sample represents the proportion of estimators predicting

each class.

Parameters

----------

X : {array-like, sparse matrix} of shape = [n_samples, n_features]

The training input samples. Sparse matrices are accepted only if

they are supported by the base estimator.

Returns

-------

p : array of shape = [n_samples, n_classes]

The class probabilities of the input samples. The order of the

classes corresponds to that in the attribute `classes_`.

"""

check_is_fitted(self, "classes_")

# Check data

X = check_array(

X, accept_sparse=['csr', 'csc'], dtype=None,

force_all_finite=False

)

if self.n_features_ != X.shape[1]:

raise ValueError("Number of features of the model must "

"match the input. Model n_features is {0} and "

"input n_features is {1}."

"".format(self.n_features_, X.shape[1]))

all_proba = np.zeros((X.shape[0], self.n_classes_))

for i, ens in enumerate(self.ensemble):

all_proba += ens.predict_proba(X[:, self.selected_feat_indices[i]])

all_proba /= self.n_estimators

return all_proba

@if_delegate_has_method(delegate='base_estimator')

def decision_function(self, X):

"""Average of the decision functions of the base classifiers.

Parameters

----------

X : {array-like, sparse matrix} of shape = [n_samples, n_features]

The training input samples. Sparse matrices are accepted only if

they are supported by the base estimator.

Returns

-------

score : array, shape = [n_samples, k]

The decision function of the input samples. The columns correspond

to the classes in sorted order, as they appear in the attribute

``classes_``. Regression and binary classification are special

cases with ``k == 1``, otherwise ``k==n_classes``.

"""

check_is_fitted(self, "classes_")

# Check data

X = check_array(

X, accept_sparse=['csr', 'csc'], dtype=None,

force_all_finite=False

)

if self.n_features_ != X.shape[1]:

raise ValueError("Number of features of the model must "

"match the input. Model n_features is {0} and "

"input n_features is {1} "

"".format(self.n_features_, X.shape[1]))

all_decisions = np.zeros((X.shape[0], self.n_classes_))

for i, ens in enumerate(self.ensemble):

all_decisions += ens.predict_proba(X)

decisions = sum(all_decisions) / self.n_estimators

return decisions

from __future__ import print_function

from pprint import pprint

from time import time

import logging

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.feature_extraction.text import TfidfTransformer

from sklearn.linear_model import SGDClassifier

from sklearn.model_selection import GridSearchCV

from sklearn.pipeline import Pipeline

from sklearn.metrics import classification_report, confusion_matrix

print(__doc__)

# Display progress logs on stdout

logging.basicConfig(level=logging.INFO,

format='%(asctime)s %(levelname)s %(message)s')

# #############################################################################

# Load some categories from the training set

categories = [

'alt.atheism',

'talk.religion.misc',

]

# Uncomment the following to do the analysis on all the categories

#categories = None

print("Loading 20 newsgroups dataset for categories:")

print(categories)

data = fetch_20newsgroups(subset='train', categories=categories)

print("%d documents" % len(data.filenames))

print("%d categories" % len(data.target_names))

print()

#############################################################################

# Define a pipeline combining a text feature extractor with a simple

# classifier

pipeline = Pipeline([

('vect', CountVectorizer()),

('tfidf', TfidfTransformer()),

('clf', SGDClassifier()),

])

# uncommenting more parameters will give better exploring power but will

# increase processing time in a combinatorial way

parameters = {

'clf__alpha': (0.00001, 0.000001),

'clf__penalty': ('l2', 'elasticnet'),

'clf__max_iter': (10, 50, 80, 150),

}

X = data.data

y = data.target

if __name__ == "__main__":

# multiprocessing requires the fork to happen in a __main__ protected

# block

# find the best parameters for both the feature extraction and the

# classifier

grid_search = GridSearchCV(pipeline, parameters, n_jobs=-1, verbose=1)

# print("Performing grid search...")

# print("pipeline:", [name for name, _ in pipeline.steps])

# print("parameters:")

# pprint(parameters)

# t0 = time()

# # grid_search.fit(data.data, data.target)

# grid_search.fit(X, y)

# print("done in %0.3fs" % (time() - t0))

# print()

# print("Best score: %0.3f" % grid_search.best_score_)

# print("Best parameters set:")

# best_parameters = grid_search.best_estimator_.get_params()

# for param_name in sorted(parameters.keys()):

# print("\t%s: %r" % (param_name, best_parameters[param_name]))

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

X_train, X_test, y_train, y_test= train_test_split(X, y)

grid_search.fit(X_train, y_train)

cur_mod = grid_search.best_estimator_

pred = cur_mod.predict(X_test)

print(accuracy_score(y_test, pred))

from sklearn.feature_selection import mutual_info_classif

from sklearn.feature_extraction.text import TfidfVectorizer

# pip = Pipeline([

# ('vect', CountVectorizer()),

# ('tfidf', TfidfTransformer())])

# tr = pip.fit_transform(X_train, y_train)

# mi = mutual_info_classif(tr, y_train)

# print(len(mi),tr.shape[1])

mi = mi/sum(mi)

# uncommenting more parameters will give better exploring power but will

# increase processing time in a combinatorial way

parameters = {

'n_neighbors': [2,4,6,8,10],

'metric':['euclidean', 'manhattan', 'cosine', 'l2']

}

parameters2 = {

'clf__max_features': [50, 0.2, 0.3, 0.4,0.8, 'auto', 'log2'],

'clf__bootstrap_feats': [True, False],

'clf__n_estimators': [100,250,500],

'clf__feat_importance':[mi, []]

}

# Define a pipeline combining a text feature extractor with a simple

# classifier

pipeline = Pipeline([

('vect', CountVectorizer()),

('tfidf', TfidfTransformer()),

('clf', Knn_Forest(n_estimators=500,

max_features=0.2,

bootstrap_feats=False,

optim=False,

parameters=parameters,

feat_importance=mi

)),

])

pipeline.fit(X_train, y_train)

pred = pipeline.predict(X_test)

# grid_search = GridSearchCV(pipeline, parameters2, n_jobs=1, verbose=2)

# grid_search.fit(X_train, y_train)

# cur_mod = grid_search.best_estimator_

# pred = cur_mod.predict(X_test)

print(accuracy_score(y_test, pred))

np.array(mi).shape

if mi == []:

feat_probas = [1/float(15285) for i in xrange(15285)]

else:

feat_probas = mi/float(sum(mi))

print(feat_probas)

from sklearn.feature_selection import mutual_info_classif

from sklearn.feature_extraction.text import TfidfVectorizer

tr = TfidfVectorizer().fit_transform(X_train, y_train)

mi = mutual_info_classif(tr, y_train)

```

| github_jupyter |

This demo provides examples of `ImageReader` class from `niftynet.io.image_reader` module.

What is `ImageReader`?

The main functionality of `ImageReader` is to search a set of folders, return a list of image files, and load the images into memory in an iterative manner.

A `tf.data.Dataset` instance can be initialised from an `ImageReader`, this makes the module readily usable as an input op to many tensorflow-based applications.

Why `ImageReader`?

- designed for medical imaging formats and applications

- works well with multi-modal input volumes

- works well with `tf.data.Dataset`

## Before the demo...

First make sure the source code is available, and import the module.

For NiftyNet installation, please checkout:

http://niftynet.readthedocs.io/en/dev/installation.html

```

import sys

niftynet_path = '/Users/bar/Documents/Niftynet/'

sys.path.append(niftynet_path)

from niftynet.io.image_reader import ImageReader

```

For demonstration purpose we download some demo data to `~/niftynet/data/`:

```

from niftynet.utilities.download import download

download('anisotropic_nets_brats_challenge_model_zoo_data')

```

## Use case: loading 3D volumes

```

from niftynet.io.image_reader import ImageReader

data_param = {'MR': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG'}}

reader = ImageReader().initialise(data_param)

reader.shapes, reader.tf_dtypes

# read data using the initialised reader

idx, image_data, interp_order = reader(idx=0)

image_data['MR'].shape, image_data['MR'].dtype

# randomly sample the list of images

for _ in range(3):

idx, image_data, _ = reader()

print('{} image: {}'.format(idx, image_data['MR'].shape))

```

The images are always read into a 5D-array, representing:

`[height, width, depth, time, channels]`

## User case: loading pairs of image and label by matching filenames

(In this case the loaded arrays are not concatenated.)

```

from niftynet.io.image_reader import ImageReader

data_param = {'image': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'T2'},

'label': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'Label'}}

reader = ImageReader().initialise(data_param)

# image file information (without loading the volumes)

reader.get_subject(0)

idx, image_data, interp_order = reader(idx=0)

image_data['image'].shape, image_data['label'].shape

```

## User case: loading multiple modalities of image and label by matching filenames

The following code initialises a reader with four modalities, and the `'image'` output is a concatenation of arrays loaded from these files. (The files are concatenated at the fifth dimension)

```

from niftynet.io.image_reader import ImageReader

data_param = {'T1': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'T1', 'filename_not_contains': 'T1c'},

'T1c': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'T1c'},

'T2': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'T2'},

'Flair': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'Flair'},

'label': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'Label'}}

grouping_param = {'image': ('T1', 'T1c', 'T2', 'Flair'), 'label':('label',)}

reader = ImageReader().initialise(data_param, grouping_param)

_, image_data, _ = reader(idx=0)

image_data['image'].shape, image_data['label'].shape

```

## More properties

The input specification supports additional properties include

```python

{'csv_file', 'path_to_search',

'filename_contains', 'filename_not_contains',

'interp_order', 'pixdim', 'axcodes', 'spatial_window_size',

'loader'}

```

see also: http://niftynet.readthedocs.io/en/dev/config_spec.html#input-data-source-section

## Using ImageReader with image-level data augmentation layers

```

from niftynet.io.image_reader import ImageReader

from niftynet.layer.rand_rotation import RandomRotationLayer as Rotate

data_param = {'MR': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG'}}

reader = ImageReader().initialise(data_param)

rotation_layer = Rotate()

rotation_layer.init_uniform_angle([-10.0, 10.0])

reader.add_preprocessing_layers([rotation_layer])

_, image_data, _ = reader(idx=0)

image_data['MR'].shape

# import matplotlib.pyplot as plt

# plt.imshow(image_data['MR'][:, :, 50, 0, 0])

# plt.show()

```

## Using ImageReader with `tf.data.Dataset`

```

import tensorflow as tf

from niftynet.io.image_reader import ImageReader

# initialise multi-modal image and label reader

data_param = {'T1': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'T1', 'filename_not_contains': 'T1c'},

'T1c': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'T1c'},

'T2': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'T2'},

'Flair': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'Flair'},

'label': {'path_to_search': '~/niftynet/data/BRATS_examples/HGG',

'filename_contains': 'Label'}}

grouping_param = {'image': ('T1', 'T1c', 'T2', 'Flair'), 'label':('label',)}

reader = ImageReader().initialise(data_param, grouping_param)

# reader as a generator

def image_label_pair_generator():

"""

A generator wrapper of an initialised reader.

:yield: a dictionary of images (numpy arrays).

"""

while True:

_, image_data, _ = reader()

yield image_data

# tensorflow dataset

dataset = tf.data.Dataset.from_generator(

image_label_pair_generator,

output_types=reader.tf_dtypes)

#output_shapes=reader.shapes)

dataset = dataset.batch(1)

iterator = dataset.make_initializable_iterator()

# run the tensorlfow graph

with tf.Session() as sess:

sess.run(iterator.initializer)

for _ in range(3):

data_dict = sess.run(iterator.get_next())

print(data_dict.keys())

print('image: {}, label: {}'.format(

data_dict['image'].shape,

data_dict['label'].shape))

```

| github_jupyter |

<h1>Table of Contents<span class="tocSkip"></span></h1>

<div class="toc" style="margin-top: 1em;"><ul class="toc-item"><li><span><a href="#MSVO-3,-70" data-toc-modified-id="MSVO-3,-70-1"><span class="toc-item-num">1 </span>MSVO 3, 70</a></span></li><li><span><a href="#Text-Fabric" data-toc-modified-id="Text-Fabric-2"><span class="toc-item-num">2 </span>Text-Fabric</a></span></li><li><span><a href="#Installing-Text-Fabric" data-toc-modified-id="Installing-Text-Fabric-3"><span class="toc-item-num">3 </span>Installing Text-Fabric</a></span><ul class="toc-item"><li><span><a href="#Prerequisites" data-toc-modified-id="Prerequisites-3.1"><span class="toc-item-num">3.1 </span>Prerequisites</a></span></li><li><span><a href="#TF-itself" data-toc-modified-id="TF-itself-3.2"><span class="toc-item-num">3.2 </span>TF itself</a></span></li></ul></li><li><span><a href="#Pulling-up-a-tablet-and-its-transliteration-using-a-p-number" data-toc-modified-id="Pulling-up-a-tablet-and-its-transliteration-using-a-p-number-4"><span class="toc-item-num">4 </span>Pulling up a tablet and its transliteration using a p-number</a></span></li><li><span><a href="#Non-numerical-quads" data-toc-modified-id="Non-numerical-quads-5"><span class="toc-item-num">5 </span>Non-numerical quads</a></span></li><li><span><a href="#Generating-a-list-of-sign-frequency-and-saving-it-as-a-separate-file" data-toc-modified-id="Generating-a-list-of-sign-frequency-and-saving-it-as-a-separate-file-6"><span class="toc-item-num">6 </span>Generating a list of sign frequency and saving it as a separate file</a></span></li></ul></div>

# Primer 1

This notebook is meant for those with little or no familiarity with

[Text-Fabric](https://github.com/annotation/text-fabric) and will focus on several basic tasks, including calling up an individual proto-cuneiform tablet using a p-number, the coding of complex proto-cuneiform signs using what we will call "quads" and the identification of one of the numeral systems, and a quick look at the frequency of a few sign clusters. Each primer, including this one, will focus on a single tablet and explore three or four analytical possibilities. In this primer we look at MSVO 3, 70, which has the p-number P005381 at CDLI.

## MSVO 3, 70

The proto-cuneiform tablet known as MSVO 3, 70, is held in the British Museum, where it has the museum number BM 140852. The tablet dates to the Uruk III period, ca. 3200-3000 BCE, and is slated for publication in the third volume of Materialien zu den frühen Schriftzeugnissen des Vorderen Orients (MSVO). Up to now it has only appeared as a photo in Frühe Schrift (Nissen, Damerow and Englund 1990), p. 38.

We'll show the lineart for this tablet and its ATF transcription in a moment, including a link to this tablet on CDLI.

## Text-Fabric

Text-Fabric (TF) is a model for textual data with annotations that is optimized for efficient data analysis. As we will begin to see at the end of this primer, when we check the totals on the reverse of our primer tablet, Text-Fabric also facilitates the creation of new, derived data, which can be added to the original data.

Working with TF is a bit like buying from IKEA. You get all the bits and pieces in a box, and then you assemble it yourself. TF decomposes any dataset into its components, nicely stacked, with every component uniquely labeled. And then we use short reusable bits of code to do specific things. TF is based on a model proposed by [Doedens](http://books.google.nl/books?id=9ggOBRz1dO4C) that focuses on the essential properties of texts such sequence and embedding. For a description of how Text-Fabric has been used for work on the Hebrew Bible, see Dirk Roorda's article [The Hebrew Bible as Data: Laboratory - Sharing - Experiences](https://doi.org/10.5334/bbi.18).

Once data is transformed into Text-Fabric, it can also be used to build rich online interfaces for specific groups of ancient texts. For the Hebrew Bible, have a look at [SHEBANQ](https://shebanq.ancient-data.org/hebrew/text).

The best environment for using Text-Fabric is in a [Jupyter Notebook](http://jupyter.readthedocs.io/en/latest/). This primer is in a Jupyter Notebook: the snippets of code can only be executed if you have installed Python 3, Jupyter Notebook, and Text-Fabric on your own computer.

## Installing Text-Fabric

### Prerequisites

You need to have Python on your system. Most systems have it out of the box,

but alas, that is python2 and we need at least python 3.6.

Install it from [python.org]() or from [Anaconda]().

If you got it from python.org, you also have to install [Jupyter]().

### TF itself

```

pip install text-fabric

```

if you have installed Python with the help of Anaconda, or

```

sudo -H pip3 install text-fabric

```

if you have installed Python from [python.org](https://www.python.org).

###### Execute: If all this is done, the following cells can be executed.

```

import os, sys, collections

from IPython.display import display

from tf.extra.cunei import Cunei

import sys, os

LOC = ("~/github", "Nino-cunei/uruk", "primer1")

A = Cunei(*LOC)

A.api.makeAvailableIn(globals())

```

## Pulling up a tablet and its transliteration using a p-number

Each cuneiform tablet has a unique "p-number" and we can use this p-number in Text-Fabric to bring up any images and the transliteration of a tablet, here P005381.

There is a "node" in Text-Fabric for this tablet. How do we find it and display the transliteration?

* We *search* for the tablet by means of a template;

* we use functions `A.lineart()` and `A.getSource()` to bring up the lineart and transliterations of tablets.

```

pNum = "P005381"

query = f"""

tablet catalogId={pNum}

"""

results = A.search(query)

```

The `results` is a list of "records".

Here we have only one result: `results[0]`.

Each result record is a tuple of nodes mentioned in the template.

Here we only mentioned a single thing: `tablet`.

So we find the node of the matched tablets as the firt member of the result records.

Hence the result tablet node is `results[0][0]`.

```

tablet = results[0][0]

A.lineart(tablet, width=300)

A.getSource(tablet)

```

Now we want to view the numerals on the tablet.

```

query = f"""

tablet catalogId={pNum}

sign type=numeral

"""

results = A.search(query)

```

It is easy to show them at a glance:

```

A.show(results)

```

Or we can show them in a table.

```

A.table(results)

```

There are a few different types of numerals here, but we are just going to look at the numbers belonging to the "shin prime prime" system, abbreviated here as "shinPP," which regularly adds two narrow horizatonal wedges to each number. N04, which is the basic unit in this system, is the fourth, fith and ninth of the preceding numerals: in the fourth occurrence repeated twice, in the fifth, three times and, unsurprisingly, in the ninth, which is the total on the reverse, five times. (N19, which is the next bundling unit in the same system, also occurs in the text.)

```

shinPP = dict(

N41=0.2,

N04=1,

N19=6,

N46=60,

N36=180,

N49=1800,

)

```

First, let's see if we can locate one of the occurrences of shinPP numerals, namely the set of 3(N04) in the first case of the second column on the obverse, using Text-Fabric.

```

query = f"""

tablet catalogId={pNum}

face type=obverse

column number=2

line number=1

=: sign

"""

results = A.search(query)

A.table(results)

```

Note the `:=` in `=: sign`. This is a device to require that the sign starts at the same position

as the `line` above it. Effectively, we ask for the first sign of the line.

Now the result records are tuples `(tablet, face, column, line, sign)`, so if we want

the sign part of the first result, we have to say `results[0][4]` (Python counts from 0).

```

num = results[0][4]

A.pretty(num, withNodes=True)

```

This number is the "node" in Text-Fabric that corresponds to the first sign in the first case of column 2. It is like a bar-code for that position in the entire corpus. Now let's make sure that this node, viz. 106602, is actually a numeral. To do this we check the feature "numeral" of the node 106602. And then we can use A.atfFromSign to extract the transliteration.

```

print(F.type.v(num) == "numeral")

print(A.atfFromSign(num))

```

Let's get the name of the numeral, viz. N04, and the number of times that it occurs. This amounts to splitting apart "3" and "(N04)" but since we are calling features in Text-Fabric rather than trying to pull elements out of the transliteration, we do not need to tweak the information.

```

grapheme = F.grapheme.v(num)

print(grapheme)

iteration = F.repeat.v(num)

print(iteration)

```

Now we can replace "N04" with its value, using the shinPP dictionary above, and then multiple this value by the number of iterations to arrive at the value of the numeral as a whole. Since each occurrence of the numeral N04 has a value of 1, three occurrences of it should have a value of 3.

```

valueFromDict = shinPP.get(grapheme)

value = iteration * valueFromDict

print(value)

```

Just to make sure that we are calculating these values correctly, let's try it again with a numeral whose value is not 1. There is a nice example in case 1b in column 1 on the obverse, where we have 3 occurrences of N19, each of which has a value of 6, so we expect the total value of 3(N19 to be 18.

```

query = f"""

tablet catalogId={pNum}

face type=obverse

column number=1

case number=1b

=: sign

"""

results = A.search(query)

A.table(results)

sign = results[0][4]

grapheme = F.grapheme.v(sign)

iteration = F.repeat.v(sign)

valueFromDict = shinPP.get(grapheme, 0)

value = iteration * valueFromDict

print(value)

```

The next step is to walk through the nodes on the obverse, add up the total of the shinPP system on the obverse, and then do the same for the reverse and see if the obverse and the total on the reverse add up. We expect the 3(N19) and 5(N04) on the obverse to add up to 23, viz. 18 + 5 = 23.

```

shinPPpat = "|".join(shinPP)

query = f"""

tablet catalogId={pNum}

face

sign grapheme={shinPPpat}

"""

results = A.search(query)

A.show(results)

sums = collections.Counter()

for (tablet, face, num) in results:

grapheme = F.grapheme.v(num)

iteration = F.repeat.v(num)

valueFromDict = shinPP[grapheme]

value = iteration * valueFromDict

sums[F.type.v(face)] += value

for faceType in sums:

print(f"{faceType}: {sums[faceType]}")

```

It adds up!

## Non-numerical quads

Now that we have identified the numeral system in the first case of column 2 on the obverse, let's also see what we can find out about the non-numeral signs in the same case.

We use the term "quad" to refer to all orthographic elements that occupy the space of a single proto-cuneiform sign on the surface of the tablet. This includes both an individual proto-cuneiform sign operating on its own as well as combinations of signs that occupy the same space. One of the most elaborate quads in the proto-cuneiform corpus is the following:

```

|SZU2.((HI+1(N57))+(HI+1(N57)))|

```

This quad has two sub-quads `SZU2`, `(HI+1(N57))+(HI+1(N57))`, and the second sub-quad also consists of two sub-quads `HI+1(N57)` and `HI+1(N57)`; both of these sub-quads are, in turn, composed of two further sub-quads `HI` and `1(N57)`.

First we need to pick this super-quad out of the rest of the line: this is how we get the transliteration of the entire line:

```

query = f"""

tablet catalogId={pNum}

face type=obverse

column number=2

line number=1

"""

results = A.search(query)

line = results[0][3]

A.pretty(line, withNodes=True)

```

We can just read off the node of the biggest quad.

```

bigQuad = 143015

```

Now that we have identified the "bigQuad," we can also ask Text-Fabric to show us what it looks like.

```

A.lineart(bigQuad)

```

This extremely complex quad, viz. |SZU2.((HI+1(N57))+(HI+1(N57)))|, is a hapax legomenon, meaning that it only occurs here, but there are three other non-numeral quads in this line besides |SZU2.((HI+1(N57))+(HI+1(N57)))|, namely |GISZ.TE|, GAR and GI4~a, so let's see how frequent these four non-numeral signs are in the proto-cuneiform corpus. We can do this sign by sign using the function "F.grapheme.s()".

```

GISZTEs = F.grapheme.s("|GISZ.TE|")

print(f"|GISZ.TE| {len(GISZTEs)} times")

GARs = F.grapheme.s("GAR")

print(f"GAR = {len(GARs)} times")

GI4s = F.grapheme.s("GI4")

print(f"GI4 = {len(GI4s)} times")

```

There are two problems here that we need to resolve in order to get good numbers: we have to get Text-Fabric to count |GISZ.TE| as a single unit, even though it is composed of two distinct graphemes, and we have to ask it to recognize and count the "a" variant of "GI4". In order to count the number of quads that consist of GISZ and TE, namely |GISZ.TE|, it is convenient to make a frequency index for all quads.

We walk through all the quads, pick up its ATF, and count the frequencies of ATF representations.

```

quadFreqs = collections.Counter()

for q in F.otype.s("quad"):

quadFreqs[A.atfFromQuad(q)] += 1

```

With this in hand, we can quickly count how many quads there are that have both signs `GISZ` and `TE` in them.

Added bonus: we shall also see whether there are quads with both of these signs but composed with other operators and signs as well.

```

for qAtf in quadFreqs:

if "GISZ" in qAtf and "TE" in qAtf:

print(f"{qAtf} ={quadFreqs[qAtf]:>4} times")

```

And we can also look at the set of quads in which GISZ co-occurs with another sign, and likewise, the set of quads in which TE co-occurs with another sign.

```

for qAtf in quadFreqs:

if "GISZ" in qAtf:

print(f"{quadFreqs[qAtf]:>4} x {qAtf}")

for qAtf in quadFreqs:

if "TE" in qAtf:

print(f"{quadFreqs[qAtf]:>4} x {qAtf}")

```

Most of the time, however, when we are interested in particular sign frequencies, we want to cast a wide net and get the frequency of any possibly related sign or quad. The best way to do this is to check the ATF of any sign or quad that might be relevant and add up the number of its occurrences in the corpus. This following script checks both signs and quads and casts the net widely. It looks for the frequency of our same three signs/quads, namely GAR, GI4~a and |GISZ.TE|.

```

quadSignFreqs = collections.Counter()

quadSignTypes = {"quad", "sign"}

for n in N():

nType = F.otype.v(n)

if nType not in quadSignTypes:

continue

atf = A.atfFromQuad(n) if nType == "quad" else A.atfFromSign(n)

quadSignFreqs[atf] += 1

```

We have now an frequency index for all signs and quads in their ATF representation.

Note that if a sign is part of a bigger quad, its occurrence there will be counted as an occurrence of the sign.

```

selectedAtfs = []

for qsAtf in quadSignFreqs:

if "GAR" in qsAtf or "GI4~a" in qsAtf or "|GISZ.TE|" in qsAtf:

selectedAtfs.append(qsAtf)

print(f"{quadSignFreqs[qsAtf]:>4} x {qsAtf}")

```

Let's draw all these quads.

```

for sAtf in selectedAtfs:

A.lineart(sAtf, width="5em", height="5em", withCaption="right")

```

Besides our three targets, 34 occurrences of GI4~a, 401 of GAR and 26 of |GISZ.TE|:

34 x GI4~a

401 x GAR

26 x |GISZ.TE|

it has also pulled in a number of quads that include either GAR or GI4~a, among others:

20 x |ZATU651xGAR|

3 x |NINDA2xGAR|

6 x |4(N57).GAR|

1 x |GI4~a&GI4~a|

1 x |GI4~axA|

There are also other signs tas well as signs that only resemble GAR in transliteration such as LAGAR or GARA2, but as long as we know what we are looking for this type of broader frequency count can be quite useful.

## Generating a list of sign frequency and saving it as a separate file

First, we are going to count the number of distinct signs in the corpus, look at the top hits in the list and finally save the full list to a separate file. Then we will do the same for the quads, and then lastly we are going to combine these two lists and save them as a single frequency list for both signs and quads.

```

fullGraphemes = collections.Counter()

for n in F.otype.s("sign"):

grapheme = F.grapheme.v(n)

if grapheme == "" or grapheme == "…":

continue

fullGrapheme = A.atfFromSign(n)

fullGraphemes[fullGrapheme] += 1

len(fullGraphemes)

```

So there are 1477 distinct proto-cuneiform signs in the corpus. The following snippet of code will show us the first 20 signs on that list.

```

for (value, frequency) in sorted(

fullGraphemes.items(),

key=lambda x: (-x[1], x[0]),

)[0:20]:

print(f"{frequency:>5} x {value}")

```

Now we are going to write the full set of sign frequency results to two files in your `_temp` directory, within this repo. The two files are called:

* `grapheme-alpha.txt`, an alphabetic list of graphemes, along with the frequency of each sign, and

* `grapheme-freq.txt`, which runs from the most frequent to the least.

```

def writeFreqs(fileName, data, dataName):

print(f"There are {len(data)} {dataName}s")

for (sortName, sortKey) in (

("alpha", lambda x: (x[0], -x[1])),

("freq", lambda x: (-x[1], x[0])),

):

with open(f"{A.tempDir}/{fileName}-{sortName}.txt", "w") as fh:

for (item, freq) in sorted(data, key=sortKey):

if item != "":

fh.write(f"{freq:>5} x {item}\n")

```

Now let's go through some of the same steps for quads rather than individual signs, and then export a single frequency list for both signs and quads.

```

quadFreqs = collections.Counter()

for q in F.otype.s("quad"):

quadFreqs[A.atfFromQuad(q)] += 1

print(len(quadFreqs))

```

So there are 740 quads in the corpus, and now we ask for the twenty most frequently attested quads.

```

for (value, frequency) in sorted(

quadFreqs.items(),

key=lambda x: (-x[1], x[0]),

)[0:20]:

print(f"{frequency:>5} x {value}")

```

And for the final task in this primer, we ask Text-Fabric to export a frequency list of both signs and quads in a separate file.

```

reportDir = "reports"

os.makedirs(reportDir, exist_ok=True)

def writeFreqs(fileName, data, dataName):

print(f"There are {len(data)} {dataName}s")

for (sortName, sortKey) in (

("alpha", lambda x: (x[0], -x[1])),

("freq", lambda x: (-x[1], x[0])),

):

with open(f"{reportDir}/{fileName}-{sortName}.txt", "w") as fh:

for (item, freq) in sorted(data.items(), key=sortKey):

if item != "":

fh.write(f"{freq:>5} x {item}\n")

```

This shows up as a pair of files named "quad-signs-alpha.txt" and "quad-signs-freq.txt" and if we copy a few pieces of the quad-signs-freq.txt file here, they look something like this:

29413 x ...

12983 x 1(N01)

6870 x X

3080 x 2(N01)

2584 x 1(N14)

1830 x EN~a

1598 x 3(N01)

1357 x 2(N14)

1294 x 5(N01)

1294 x SZE~a

1164 x GAL~a

Only much farther down the list do we see signs and quads interspersed; here are the signs/quads around 88 occurrences:

88 x NIMGIR

88 x NIM~a

88 x SUG5

86 x EN~b

86 x NAMESZDA

86 x |GI&GI|

85 x GU

85 x |GA~a.ZATU753|

84 x BAD~a

84 x NA2~a

84 x ZATU651

84 x |1(N58).BAD~a|

83 x ZATU759

| github_jupyter |

# Math Kernel Library (oneMKL) and SYCL Basic Parallel Kernel

In this next set of modules, we will explore how we can utilize oneAPI and SYCL to implement Matrix Multiplication using Intel® oneAPI Math Kernel Library (oneMKL) and also implement Matrix Multiplication in the most basic parallel form and then improve performance by tuning the kernel code while trying to maintain performance portability. All code improvements will be measured in terms of relative performance to oneMKL.

### Learning Objectives

- Gain familiarity with oneMKL and able to use it for a two dimensional GEMM.

- Use a basic GEMM application for basis of enhancements.

- Interpret roofline and VTune™ analyzer results as a method to measure the GEMM applications.

## Intel oneAPI Math Kernel Library (oneMKL)

One of the best ways to achieve performance portable code is to take advantage of a library. In this case oneMKL offers a compelling GEMM implementation that we will use as our baseline. If there is a library, it should always be ones first attempt at achieving performant portable code. All other implementations will be measured against oneMKL.

Intel oneMKL is included in the Intel oneAPI toolkits and there is extensive documentation at [Get Started with Intel oneAPI Math Kernel Library.](https://software.intel.com/content/www/us/en/develop/documentation/get-started-with-mkl-for-dpcpp/top.html)

The Intel® oneAPI Math Kernel Library (oneMKL) helps you achieve maximum performance with a math computing library of highly optimized, extensively parallelized routines for CPU and GPU. The library has C and Fortran interfaces for most routines on CPU, and SYCL interfaces for some routines on both CPU and GPU. You can find comprehensive support for several math operations in various interfaces including:

SYCL on CPU and GPU

(Refer to the Intel® oneAPI Math Kernel Library—Data Parallel C++ Developer Reference for more details.)

- Linear algebra

- BLAS

- Selected Sparse BLAS functionality

- Selected LAPACK functionality

- Fast Fourier Transforms (FFT)

- 1D r2c FFT

- 1D c2c FFT

- Random number generators

- Single precision Uniform, Gaussian, and Lognormal distributions

- Selected Vector Math functionality

The example below uses the GEMM function from the oneMKL BLAS routine, which computes a scalar-matrix-matrix product and add the result to a scalar-matrix product, with general matrices. The operation is defined as:

```cpp

void gemm(queue &exec_queue, transpose transa, transpose transb, std::int64_t m, std::int64_t n, std::int64_t k, T alpha, buffer<T, 1> &a, std::int64_t lda, buffer<T, 1> &b, std::int64_t ldb, T beta, buffer<T, 1> &c, std::int64_t ldc)

```

This one line of oneMKL function does all of the necessary optimizations for CPU/GPU offload compute and as you go through the exercises you will discover that it did indeed deliver the best results with the least amount of code across all of the platforms.

## Matrix Multiplication with Math Kernel Library (oneMKL)

The following SYCL code below uses a oneMKL kernel: Inspect code, there are no modifications necessary:

1. __Run__ ▶the cell following __Select offload device__, in Jupyter everything is linear, a subsequent run will need to choose a new target and then the following cell will need to be executed to get the updated results.

2. Inspect the following code cell and click __Run__ ▶ to save the code to a file.

3. Next run -- the cell in the __Build and Run__ section below the code to compile and execute the code.

#### Select Offload Device

```

run accelerator.py

%%writefile lab/mm_dpcpp_mkl.cpp

//==============================================================

// Matrix Multiplication: SYCL oneMKL

//==============================================================

// Copyright © 2021 Intel Corporation

//

// SPDX-License-Identifier: MIT

// =============================================================

#include <CL/sycl.hpp>

#include "oneapi/mkl/blas.hpp" //# oneMKL DPC++ interface for BLAS functions

using namespace sycl;

void mm_kernel(queue &q, std::vector<float> &matrix_a, std::vector<float> &matrix_b, std::vector<float> &matrix_c, size_t N, size_t M) {

std::cout << "Configuration : MATRIX_SIZE= " << N << "x" << N << "\n";

//# Create buffers for matrices

buffer a(matrix_a);

buffer b(matrix_b);

buffer c(matrix_c);

//# scalar multipliers for oneMKL

float alpha = 1.f, beta = 1.f;

//# transpose status of matrices for oneMKL

oneapi::mkl::transpose transA = oneapi::mkl::transpose::nontrans;

oneapi::mkl::transpose transB = oneapi::mkl::transpose::nontrans;

//# Submit MKL library call to execute on device

oneapi::mkl::blas::gemm(q, transA, transB, N, N, N, alpha, b, N, a, N, beta, c, N);

c.get_access<access::mode::read>();

}

```

#### Build and Run

Select the cell below and click __Run__ ▶ to compile and execute the code on selected device:

```

! chmod 755 q; chmod 755 run_mm_mkl.sh; if [ -x "$(command -v qsub)" ]; then ./q run_mm_mkl.sh "{device.value}"; else ./run_mm_mkl.sh; fi

```

### Roofline Report

Execute the following line to display the roofline results

```

run display_data/mm_mkl_roofline.py

```

### VTune™ Profiler Summary

Execute the following line to display the VTune results.

```

run display_data/mm_mkl_vtune.py

```

## Basic Parallel Kernel Implementation

In this section we will look at how matrix multiplication can be implemented using a SYCL basic parallel kernel. This is the most simplest implementation using SYCL without any optimizations. In the next few modules we will add optimization on top of this implementation to improve the performance.

<img src="Assets/naive.PNG">

We can define the kernel with `parallel_for` with a 2-dimentional range for the matrix and perform matrix multiplication as shown below:

```cpp

h.parallel_for(range<2>{N,N}, [=](item<2> item){

const int i = item.get_id(0);

const int j = item.get_id(1);

for (int k = 0; k < N; k++) {

C[i*N+j] += A[i*N+k] * B[k*N+j];

}

});

```

## Matrix Multiplication with basic parallel kernel

The following SYCL code shows the basic parallel kernel implementation of matrix multiplication. Inspect code; there are no modifications necessary:

1. Run the cell in the __Select Offload Device__ section to choose a target device to run the code on.

2. Inspect the following code cell and click __Run__ ▶ to save the code to a file.

3. Next, run the cell in the __Build and Run__ section to compile and execute the code.

#### Select Offload Device

```

run accelerator.py

%%writefile lab/mm_dpcpp_basic.cpp

//==============================================================

// Matrix Multiplication: SYCL Basic Parallel Kernel

//==============================================================

// Copyright © 2021 Intel Corporation

//

// SPDX-License-Identifier: MIT

// =============================================================

#include <CL/sycl.hpp>

using namespace sycl;

void mm_kernel(queue &q, std::vector<float> &matrix_a, std::vector<float> &matrix_b, std::vector<float> &matrix_c, size_t N, size_t M) {

std::cout << "Configuration : MATRIX_SIZE= " << N << "x" << N << "\n";

//# Create buffers for matrices

buffer a(matrix_a);

buffer b(matrix_b);

buffer c(matrix_c);

//# Submit command groups to execute on device

auto e = q.submit([&](handler &h){

//# Create accessors to copy buffers to the device

auto A = a.get_access<access::mode::read>(h);

auto B = b.get_access<access::mode::read>(h);

auto C = c.get_access<access::mode::write>(h);

//# Parallel Compute Matrix Multiplication

h.parallel_for(range<2>{N,N}, [=](item<2> item){

const int i = item.get_id(0);

const int j = item.get_id(1);

for (int k = 0; k < N; k++) {

C[i*N+j] += A[i*N+k] * B[k*N+j];

}

});

});

c.get_access<access::mode::read>();

//# print kernel compute duration from event profiling

auto kernel_duration = (e.get_profiling_info<info::event_profiling::command_end>() - e.get_profiling_info<info::event_profiling::command_start>());

std::cout << "Kernel Execution Time : " << kernel_duration / 1e+9 << " seconds\n";

}

```

#### Build and Run

Select the cell below and click __Run__ ▶ to compile and execute the code on selected device:

```

! chmod 755 q; chmod 755 run_mm_basic.sh;if [ -x "$(command -v qsub)" ]; then ./q run_mm_basic.sh "{device.value}"; else ./run_mm_basic.sh; fi

```

### Roofline Report

Execute the following line to display the roofline results

```

run display_data/mm_basic_roofline.py

```

### VTune™ Profiler Summary

Execute the following line to display the VTune results.

```

run display_data/mm_basic_vtune.py

```

### Analysis

Comparing the execution times for Basic SYCL implementation and Math Kernel Library implementation for various matrix sizes, we can see that for small matrix size of 1024x1024, Basic SYCL implementation performs better than MKL implementation. When matrix size is large, MKL implementation out performs Basic SYCL implementation significantly. The graph below shows execution times on various hardware for matrix sizes 1024x1024, 5120x5120 and 10240x10240.

<img src=Assets/ppp_basic_mkl_graph.PNG>

### Summary

In this module we looked at oneAPI Math Kernel Library (oneMKL) and implemented matrix multiplication using oneMKL function. We also implemented matrix multiplication using SYCL basic parallel kernel. We compared performance numbers for the two implementations and can see benefits of using a library link oneMKL to implement computation rather than basic implementation using SYCL.

## Resources

Check out these related resources

#### Intel® oneAPI Toolkit documentation

* [Intel Advisor Roofline](https://software.intel.com/content/www/us/en/develop/articles/intel-advisor-roofline.html)

* [Intel VTune](https://software.intel.com/content/www/us/en/develop/documentation/vtune-help/top/introduction.html)

* [Intel® oneAPI main page](https://software.intel.com/oneapi "oneAPI main page")

* [Intel® oneAPI programming guide](https://software.intel.com/sites/default/files/oneAPIProgrammingGuide_3.pdf "oneAPI programming guide")

* [Intel® DevCloud Signup](https://software.intel.com/en-us/devcloud/oneapi "Intel DevCloud") Sign up here if you do not have an account.

* [Get Started with oneAPI for Linux*](https://software.intel.com/en-us/get-started-with-intel-oneapi-linux)

* [Get Started with oneAPI for Windows*](https://software.intel.com/en-us/get-started-with-intel-oneapi-windows)

* [Intel® oneAPI Code Samples](https://software.intel.com/en-us/articles/code-samples-for-intel-oneapibeta-toolkits)

* [oneAPI Specification elements](https://www.oneapi.com/spec/)

#### SYCL

* [SYCL* 2020 Specification](https://www.khronos.org/registry/SYCL/specs/sycl-2020/pdf/sycl-2020.pdf)

#### Modern C++

* [CPPReference](https://en.cppreference.com/w/)

* [CPlusPlus](http://www.cplusplus.com/)

***

| github_jupyter |

```

from resources.workspace import *

%matplotlib inline

```

## Dynamical systems

are systems (sets of equations) whose variables evolve in time (the equations contains time derivatives). As a branch of mathematics, its theory is mainly concerned with understanding the behaviour of solutions (trajectories) of the systems.

## Chaos

is also known as the butterfly effect: "a buttefly that flaps its wings in Brazil can 'cause' a hurricane in Texas".

As opposed to the opinions of Descartes/Newton/Laplace, chaos effectively means that even in a deterministic (non-stochastic) universe, we can only predict "so far" into the future. This will be illustrated below using two toy-model dynamical systems made by Edward Lorenz.

---

## The Lorenz (1963) attractor

The [Lorenz-63 dynamical system](https://en.wikipedia.org/wiki/Lorenz_system) can be derived as an extreme simplification of *Rayleigh-Bénard convection*: fluid circulation in a shallow layer of fluid uniformly heated (cooled) from below (above).

This produces the following 3 *coupled* ordinary differential equations (ODE):

$$

\begin{aligned}

\dot{x} & = \sigma(y-x) \\

\dot{y} & = \rho x - y - xz \\

\dot{z} & = -\beta z + xy

\end{aligned}

$$

where the "dot" represents the time derivative, $\frac{d}{dt}$. The state vector is $\mathbf{x} = (x,y,z)$, and the parameters are typically set to

```

SIGMA = 10.0

BETA = 8/3

RHO = 28.0

```

The ODEs can be coded as follows

```

def dxdt(xyz, t0, sigma, beta, rho):

"""Compute the time-derivative of the Lorenz-63 system."""

x, y, z = xyz

return [

sigma * (y - x),

x * (rho - z) - y,

x * y - beta * z

]

```

#### Numerical integration to compute the trajectories

Below is a function to numerically **integrate** the ODEs and **plot** the solutions.

<!--

This function also takes arguments to control ($\sigma$, $\beta$, $\rho$) and of the numerical integration (`N`, `T`).

-->

```

from scipy.integrate import odeint

output_63 = [None]

@interact( sigma=(0.,50), beta=(0.,5), rho=(0.,50), N=(0,50), eps=(0.01,1), T=(0.,40))

def animate_lorenz(sigma=SIGMA, beta=BETA, rho=RHO , N=2, eps=0.01, T=1.0):

# Initial conditions: perturbations around some "proto" state

seed(1)

x0_proto = array([-6.1, 1.2, 32.5])

x0 = x0_proto + eps*randn((N, 3))

# Compute trajectories

tt = linspace(0, T, int(100*T)+1) # Time sequence for trajectory

dd = lambda x,t: dxdt(x,t, sigma,beta,rho) # Define dxdt(x,t) with fixed params.

xx = array([odeint(dd, xn, tt) for xn in x0]) # Integrate

output_63[0] = xx

# PLOTTING

ax = plt.figure(figsize=(10,5)).add_subplot(111, projection='3d')

ax.axis('off')

colors = plt.cm.jet(linspace(0,1,N))

for n in range(N):

ax.plot(*(xx[n,:,:].T),'-' ,c=colors[n])

ax.scatter3D(*xx[n,-1,:],s=40,c=colors[n])

```

**Exc 2**:

* Move `T` (use your arrow keys). What does it control?

* Set `T` to something small; move the sliders for `N` and `eps`. What do they control?